Simple Database Development with SQL Change Automation

SQL Change Automation makes automation simple enough that it can adapt to suit many different approaches to SQL Server database development. Phil Factor describes a project to update the Pubs database, using it in combination with a PowerShell function and to maintain in source control the build scripts, migration scripts and object-level scripts, for every version of the database.

SQL Change Automation (SCA) gives some people a first impression of being dauntingly complex. There is a reason for this: it is designed to support the whole range of database development methodologies, including shared, isolated, migration-oriented, state-oriented. Maybe, the feeling is the same as walking into a carpenter’s shop when all thought you wanted was a hammer and nails. It is sometimes best described as a DevOps Lego kit for database deployment.

At the ‘advanced’ end of the Lego kit is the NuGet package system that guarantees that the deployed database is, in fact, at the version it claims, and that it is exactly the version that is in source control. It guarantees this even where the target database has no connection with either the source or a reference database. This is done to ensure that the version of the database that is eventually released into production is identical to the one that was developed, tested, checked for security and functionality, passed as suitable by the users, and so on.

However, the Lego kit doesn’t force you to make only Spaceship Galactica models; you can start with something much simpler. If, for example, you can check the released production version of a database directly with version control, the NuGet package approach is no longer essential. I’ll be showing an approach with SCA to support a simpler approach database development.

Why use SCA?

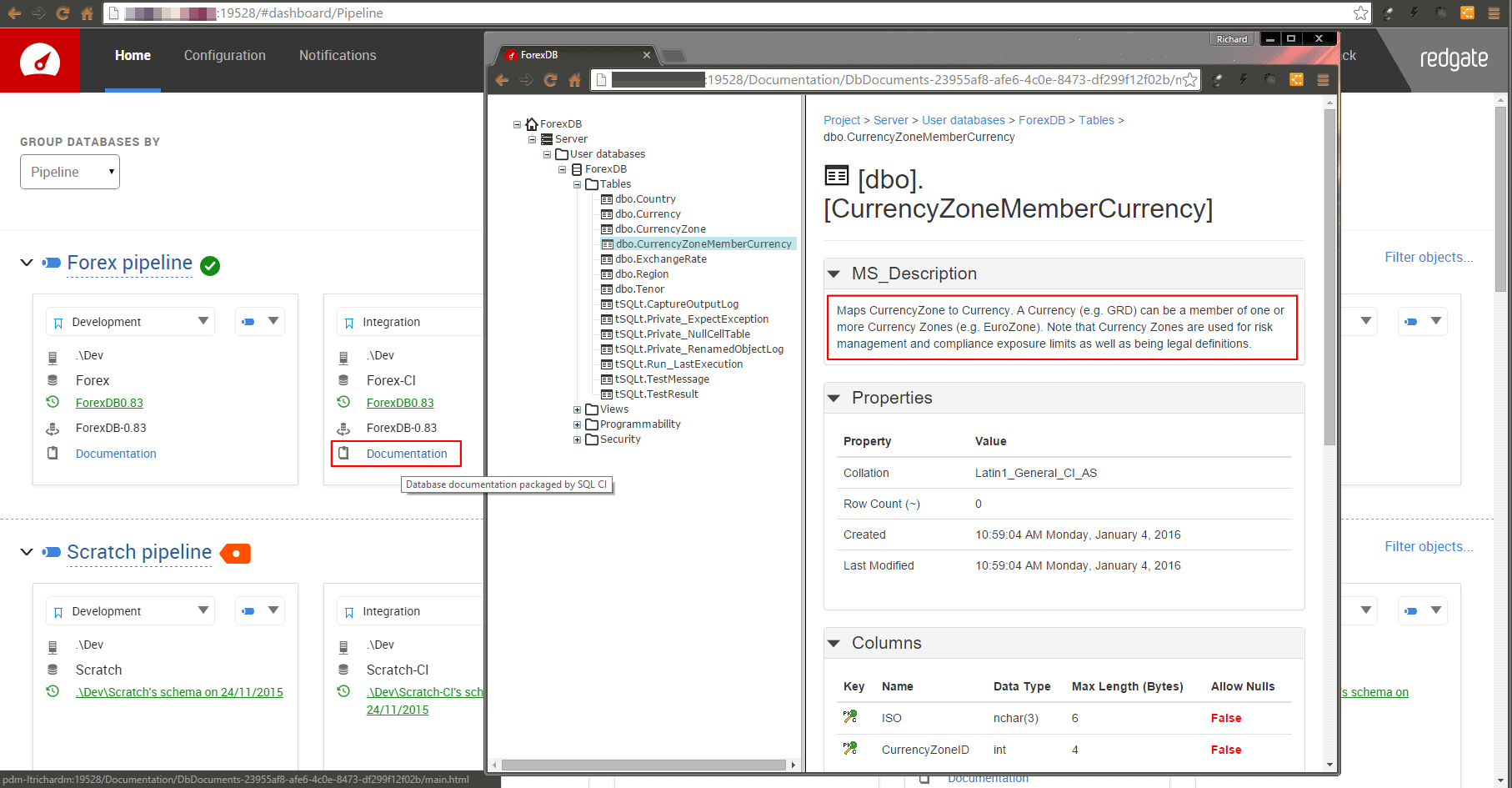

Redgate’s SCA doesn’t enforce one approach to database deployment. Every organization has its own development and deployment processes, which inevitably vary considerably according to the organization’s business. SCA allows teams to make changes to the deployment system easily because it is versatile, co-works with other tools, and provides excellent reporting.

This article is intended to demonstrate that, because it makes automation so easy, SCA is amenable other approaches to database development. Although the extremes of SCA functionality are well documented, it is less obvious how SCA assists those of us who require fewer deployment pyrotechnics. To illustrate this, we’ll imagine ourselves using the typical shared database development model, keeping in source control the build scripts, migration scripts and object-level scripts, for every version.

A practical example

The fictional publisher, and its’ very tired database system, are to be revamped by some developers. They are using a shared database to do ‘trunk’ development work and unit testing but have other copies of the database for doing experimental work. They keep a reference copy of the previous release version of the database.

Source control should remain the single source of truth, so incremental changes must be checked into version control. The developers need to have the object-level source scripts in version control to make it easier to track the changes to individual tables or routines. These will be updated on every change. They will use these to generate build scripts for development work, and eventually a build script for the new version. These build scripts don’t need to preserve existing data because it is inserted later.

The team also want migration scripts because they provide a good summary of what has changed and flag up those changes that require special scripting to preserve existing data. They want to draft migration scripts that preserve data, to convert databases from one release version to another. After a sprint, or whenever a release is required, a single migration script will be required that consolidates all the changes from the previous version. Each version migration script must test the target database to make sure that it is currently at a suitable version, and then successfully change the database from the previous release to the new release version. Just with a series of migration scripts, you can upgrade from any release to any later one. Normally, the various development and QA/Test databases will be at the previous release version so the current migration script will probably end up being a well-tested script by the time it gets to staging..

The team are using a three-number semantic versioning system of MajorVersion.Minor Version.Change. They want to execute a scripted procedure that saves every change into source control and aim to increment the minor version number only for release candidates.

Included with this article is a PowerShell function (SourceControl.ps1) that uses SCA to update the object-level source of a directory used for version control to the version of the database indicated by a connection string. It also generates both a migration script and a build script. It will also provide an HTML report of what has changed in the database and warn of any problems in the code and of any possible migration issues with the data that will require hand-cut code to patch the script.

We’ll walk through its use.

Setting up the reference version of the database (v1.1.1)

Our development team first set up a reference, read-only version of the Pubs database, representing the current release. they create this from the old instPubs.sql script, which provides us some sample data too. They patch the database to mark it with the version number

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 |

Use Pubs DECLARE @DatabaseInfo NVARCHAR(3750), @version NVARCHAR(20) SET @version=N'1.1.1' SELECT @DatabaseInfo = ( SELECT 'Pubs' AS "Name", @version AS "Version", 'The Pubs (publishing) Database supports a fictitious bookshop.' AS "Description", GetDate() AS "Modified", SUser_Name() AS "by" FOR JSON PATH ); IF not EXISTS (SELECT name, value FROM fn_listextendedproperty( N'Database_Info',default, default, default, default, default, default) ) EXEC sys.sp_addextendedproperty @name=N'Database_Info', @value=@DatabaseInfo ELSE EXEC sys.sp_Updateextendedproperty @name=N'Database_Info', @value=@DatabaseInfo |

Generating the initial scripts for version 1.1.1 in version control

Now that they have a reference database, they need to put this version into GitHub version control. They need to generate the object-level scripts from the reference database.

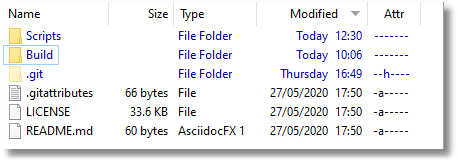

To start, they set up a source control directory with two empty subdirectories, called Scripts and Build. The one shown below is a local GitHub directory.

The Build directory will hold the object-level scripts and the Scripts directory will hold the build scripts and migration scripts. The version of the database, as represented by the contents of the script files that Github reads, will be placed in a JSON file in the root directory. You’ll see a copy of it here.

At this point, both Build and Scripts subdirectories are empty.

They will be using our PowerShell script containing the SCA cmdlets to create the various scripts. This PowerShell script will create these subdirectories if they don’t already exist. It will then generate and update the object-level scripts in the Build directory, and the build and migration scripts in Scripts. It will put the JSON version file goes in the root.

The following PowerShell code will check into Source control the reference Pubs database. For convenience I’ve saved the SourceControl function in a subdirectory called Posh.

|

1 2 3 4 5 6 7 8 9 10 11 |

$TheGithubDirectory = 'PathToTheGitDirectory' #the root version control directory cd $TheGithubDirectory . '.\Posh\SourceControl.ps1' <# do the initial save into source control #> SourceControl @{ 'source' = @{ #give the details of the source 'Server' = 'MyServer'; 'Database' = 'Pubs'; 'uid' = 'PhilFactor'; 'version' = '1.1.1' }; #The version of the database in the database 'Directory' = $TheGithubDirectory } |

This command means that we are telling the function that we have as our source the original reference Pubs database. Because there is nothing in the Build directory, SCA is comparing the reference database as ‘source’ to an empty ‘target’ database, as represented by the empty build subdirectory. The PowerShell script knows that you want to have that directory to be the same as the source, in terms of its database metadata. It will therefore generate CREATE scripts for every object in the source. SCA also provides a build script, and a report of everything it did.

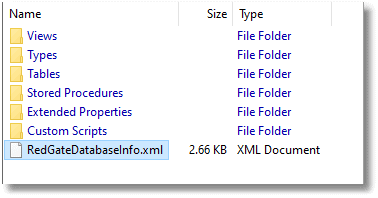

If all goes well, we find that the version of the database is now in the github directory. The build subdirectory of our version-control directory now has the following contents. All of the subdirectories have one or more files, each of which will build a database object

As an example, we can look in the Tables subdirectory, there are all the object scripts, one for each table:

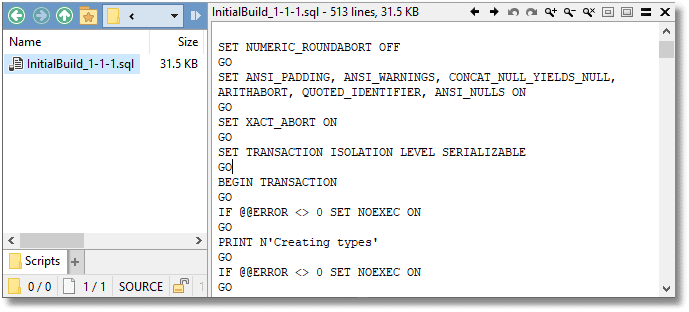

In the Scripts directory, an InitialBuild script has been generated for v1.1.1.

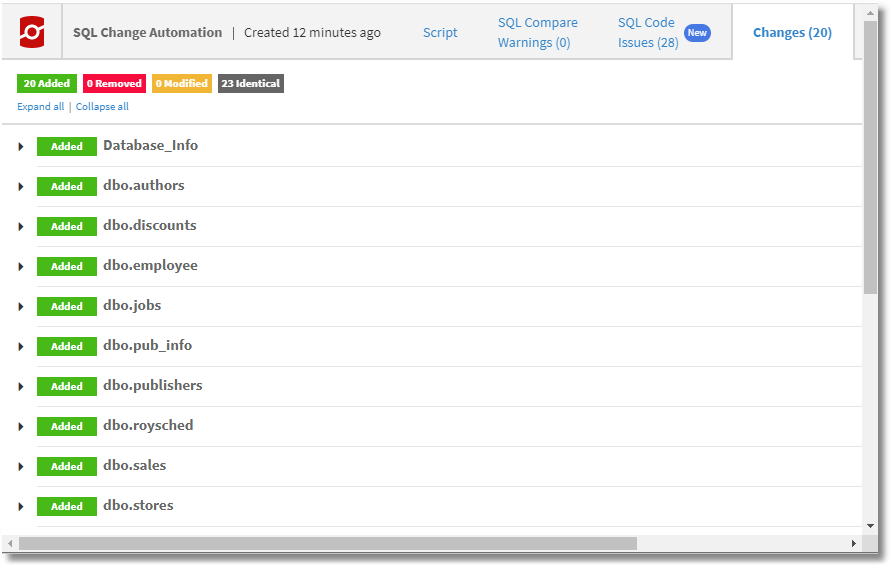

Also, we have an HTML report of what happened. This can be viewed in a browser, but the script can obligingly load it into the browser for you. Here is what is in the ‘Changes’ tab:

There are 28 issues in the SQL Code Issues tab, but no SQL Compare warnings, of course, because SCA knows that the target we specified has no data.

We put any SQL scripts we used, such as the stamping of the version number on Pubs in the Scripts directory and then commit the whole lot to master, using GitHub.

Moving to version 1.1.2 via a development version of the database.

We’ll create a development database called PubsDev using the same instPubs.sql script used to create the reference version, Pubs.

With this database, we can now imagine that the team diligently fix these various code issues and they also decide to change the table design, modifying some datatypes, adding a couple of tables and altering the Titles table. This also means fixing several reporting stored procedures, which referenced the old version of Titles (see my previous article, The Database Development Stage for the details).

Their starting point is the existing instPubs.sql build script, renamed to HandcutBuild_1-1-2.sql because this is the version number that will incorporate these changes. They start from this script because it does more than just build the database objects from an empty database. It also inserts the test data into it. One of them works on the SQL code issues and the other does the table split. They are each using their own ‘sandbox’ databases a lot of the time to test their work. This means they can undertake several rounds of rebuild and testing without needing to worry too much about the data because that is included in the build.

Once they are happy with the changes, they run the final HandcutBuild_1-1-2.sql (at end of day) on the PubsDev database. Then they use the SourceControl function script to save the changes to source control, incrementing the change number and specifying the PubsDev database:

|

1 2 3 4 5 6 7 |

SourceControl @{ 'source' = @{ #give the details of the source 'Server' = 'OurServer'; 'Database' = 'PubsDev'; 'uid' = 'PhilFactor'; 'version' = '1.1.2' }; #The version of the database in the database 'Directory' = $TheGithubDirectory } |

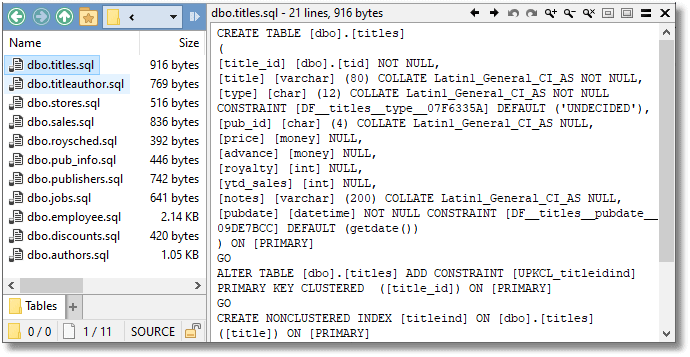

This time, there is code in the Build subdirectory so the script will compare this source to it and just update it to reflect the changes.

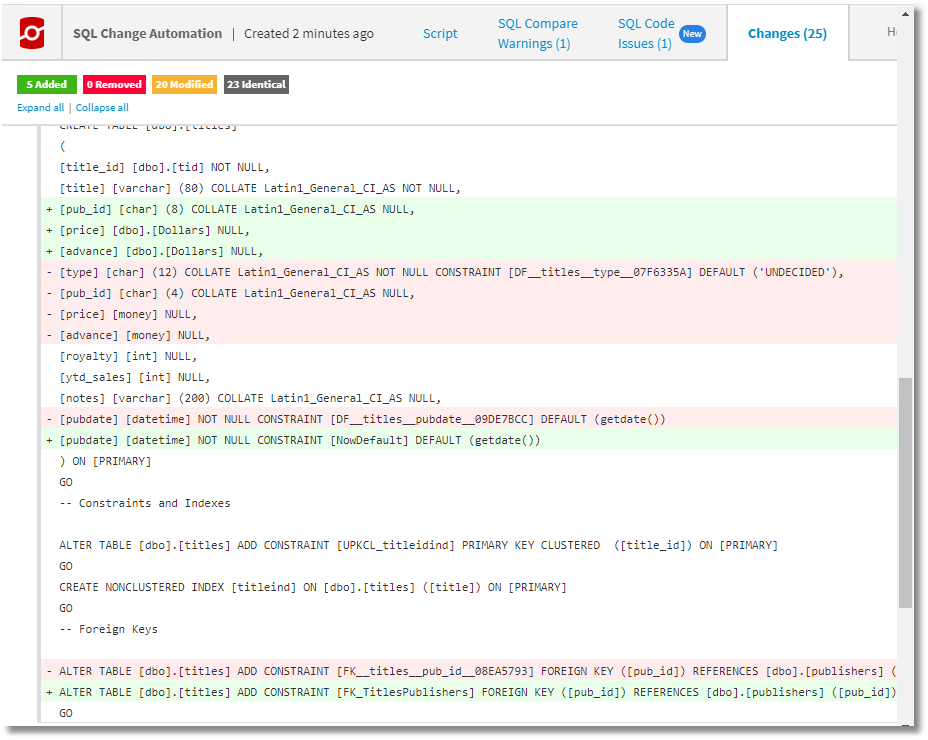

We check to see that the Scripts directory and the Build directory are both updated. The Scripts directory now has a migration script as well as a build script. We also check the HTML report. Here, SCA is showing you what has changed with the Titles table.

When the PowerShell script ran, SCA issued an onscreen warning, which is also in the HTML file, saying that it cannot preserve the data in the Type column of Titles (which we dropped from the new development version).

Creating the update script. WARNING: (High) This deployment drops the column(s) [type] from table [dbo].[titles]. Data in these column(s), will be lost unless additional steps are taken to preserve it. Press Enter to continue...:

Good. The developer who is doing the check-in would have been worried at this stage if they didn’t have this. Because the devs were working from a script (HandcutBuild_1-1-2.sql) that rebuilds the database entirely and re-stocks its data on every alteration that is compiled, they are already familiar with how to deal any potential data migration problems, on deployment.

Cutting a new change: v 1.2.1

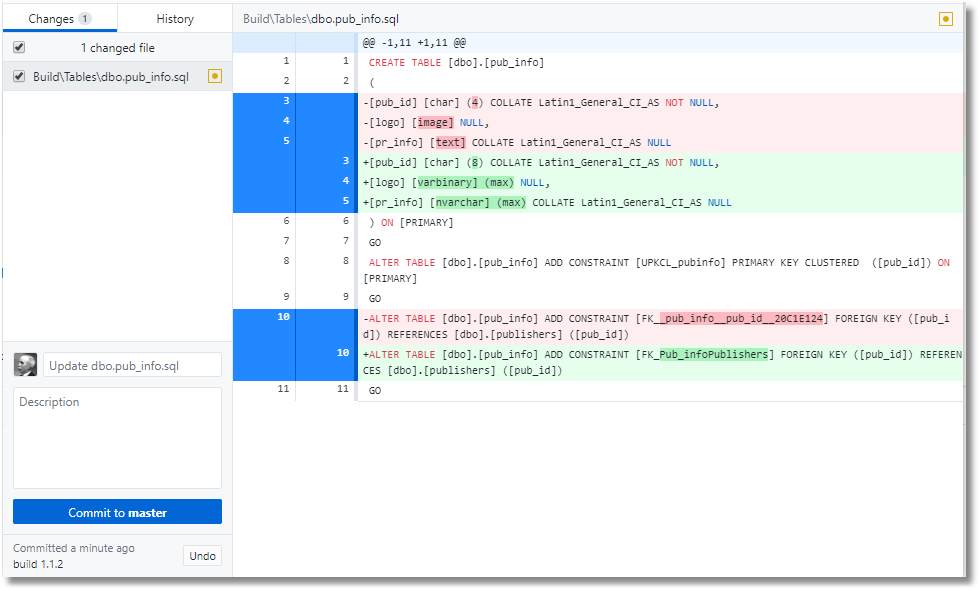

The team need to update the IMAGE and TEXT datatypes in the Pub_Info table, since one of these was flagged as a SQL Code issue.

|

1 2 3 4 5 6 7 |

CREATE TABLE [dbo].[pub_info] ( [pub_id] [char] (4) COLLATE Latin1_General_CI_AS NOT NULL, [logo] [image] NULL, [pr_info] [text] COLLATE Latin1_General_CI_AS NULL ) ON [PRIMARY] GO |

To do this, they execute this SQL code on PubsDev (currently at v1.1.2). This will be also be a good check that the system can detect such as minor change.

|

1 2 |

ALTER TABLE dbo.pub_info ALTER COLUMN Logo varbinary(MAX) NULL; ALTER TABLE dbo.pub_info ALTER COLUMN PR_Info nvarchar(MAX) NULL; |

This change is the final one they need to make during so sprint and will lead to a new minor version rather than just a change, so it becomes 1.2.1 (meaning the first change of version 1.2). They update the source code directory, and generate the scripts as before:

|

1 2 3 4 5 6 7 |

SourceControl @{ 'source' = @{ #give the detail;s of the source 'Server' = 'OurServer'; 'Database' = 'PubsDev'; 'uid' = 'PhilFactor'; 'version' = '1.2.1' }; #The version of the database in the database 'Directory' = $TheGithubDirectory } |

We want to make sure that both GitHub and SCA recognize this minor change correctly. To our relief, they both do.

We can also glow with pride that we’ve eliminated all the SQL Code issues

We can then commit v1.2.1 to master via GitHub.

Releasing version 1.2.1

The team now want to release this version (yes, it is a bit sudden, but this is for illustration!). But there is a nagging thought. There was that warning about removing that type column from the Titles table, when creating v1.1.2, meant that there was no way for SCA to know how to preserve the data in that column. The developers didn’t worry so much at time, but now they must fix that. Fortunately, they were working with build scripts, so they’d needed to deal with the necessary data migration already, to reserve the data in their new builds, and run their unit tests. (see ‘Handcut1.1.2’). When generating those build scripts, they had just loaded the data for Titles into a temporary table and used that to insert all the data in the right place. The code worked and passed unit tests.

To start this release process, the team now stamp the PubsDev database with the version number 1.2.1, in the extended property, because it now represents the release candidate. This release number will end up in the migration script that we are now going to create and hence into every database with which it is successfully used.

Generating the 1.1.1-to-1.2.1 migration script

For this release we need to create a single migration script that takes the Pubs database directly from version 1.1.1 to 1.2.1, rather than running two migration scripts. In real life, you will have maybe hundreds of changes between releases, so it is much better to avoid running that many migration scripts.

Therefore, we are now using our updated object-level source folder (v1.2.1) as the source and the Pubs reference database, which is still at the previous release (v1.1.1), as the target. SCA will always compare the source with a target to produce a script that can alter the target to be the same as the source. We modify the PowerShell script to make this clear.

|

1 2 3 4 5 6 7 |

SourceControl @{ 'target' = @{ 'Server' = 'OurServer'; 'Database' = 'Pubs'; 'uid' = 'PhilFactor'; 'version' = '1.2.1' }; #The version of the database in the database 'Directory' = $TheGithubDirectory } |

We can be relaxed about using our reference database as a target, because nothing in any database actually gets changed in this SCA system I’ve written, you just get scripts that you amend to actually do the work, and reports.

In this case, we get the HTML Report, but we don’t get the object-level scripts because they are the source. Instead we get a migration script that, when we’ve finished our work, will move Pubs from version 1.1.1 to version 1.2.1 whilst preserving the data. However, before we can use it, we need to add that code to populate the new tables properly.

Dealing with data migration

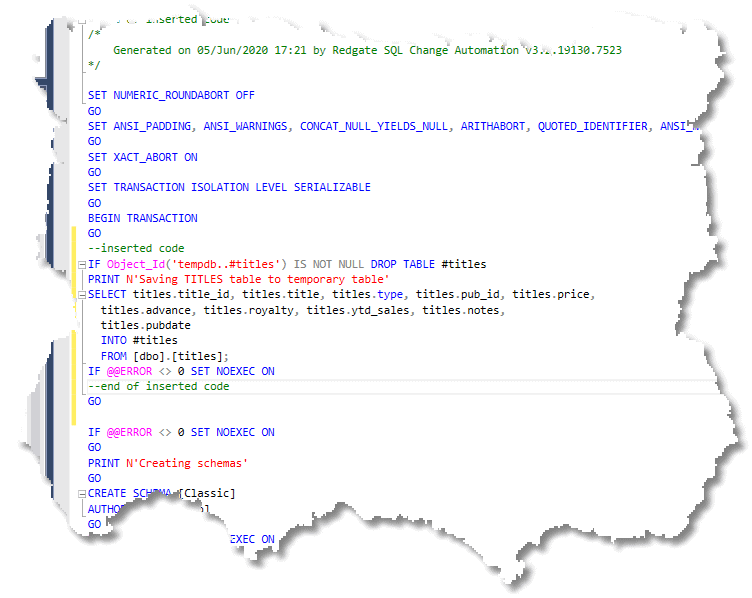

The team extract the routines they developed to preserve the data during their development work, already tested when creating the hand-cut build script for v1.1.2 and add them to the 1.1.1-to-1.2.1 migration script. I’ve described how to do this in more detail in The Database Development Stage. The first bit of data migration code goes after the start of the transaction and places the data in the Titles table in a temporary table.

|

1 2 3 4 5 6 7 8 9 |

IF Object_Id('tempdb..#titles') IS NOT NULL DROP TABLE #titles PRINT N'Saving TITLES table to temporary table' SELECT titles.title_id, titles.title, titles.type, titles.pub_id, titles.price, titles.advance, titles.royalty, titles.ytd_sales, titles.notes, titles.pubdate INTO #titles FROM [dbo].[titles]; IF @@ERROR <> 0 SET NOEXEC ON GO |

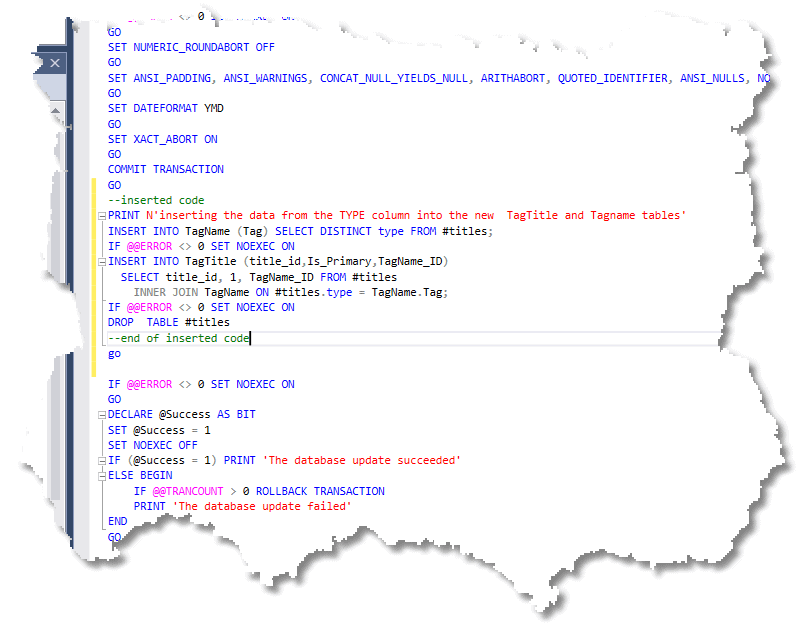

The other section of code reads this data from the temporary table and uses it to stock the two new tables with data. The code goes just before the committing of the transaction in the migration script.

|

1 2 3 4 5 6 7 8 9 10 |

--inserted code PRINT N'inserting the data from the TYPE column into the new TagTitle and Tagname tables' INSERT INTO TagName (Tag) SELECT DISTINCT type FROM #titles; IF @@ERROR <> 0 SET NOEXEC ON INSERT INTO TagTitle (title_id,Is_Primary,TagName_ID) SELECT title_id, 1, TagName_ID FROM #titles INNER JOIN TagName ON #titles.type = TagName.Tag; IF @@ERROR <> 0 SET NOEXEC ON DROP TABLE #titles GO |

The team test it out (see the details in Testing SQL Server Stored Procedures and Functions with SQL Data Compare) and all is well.

Conclusion

We’ve tried to show how to use SCA to do the task of generating all the necessary scripts for version control and for generating migration scripts. We’ve also shown how it is possible to patch these scripts to ensure that only the correct migration script is run for a database. Every database change will have an appropriate draft migration script and a build script for the database.

I’ve published the code on Github. I hope you feel inspired to play along and try SCA out. Why use SCA for such a simple task? For me it is the HTML reporting that has everything I need for checking the generation of code and puts the visibility into automated deployment scripts.