The Database Development Stage

Phil Factor distills the basic tasks of the database development stage and explains how SQL Compare can help tackle them.

Database development is never going to be an easy process, but a lot of one’s working life gets easier if databases are given version numbers, you have a simple way of verifying that a database can always be built, and the source of every version, along with the migration scripts to move to the next version from the previous one, are kept in source control. Development work is a team process, and everything must be visible documented and repeatable.

This article explains each of these tasks, and demonstrate what’s possible with SQL Compare. In a subsequent article, I’ll show the tasks slot into an automated SQL Change Automation process.

Database versions and release management

Whatever way that the development work of a database is achieved, a development team will, at some point, get the OK to release a version of it. This version will be represented by scripts in version control. The version of the database becomes, after the appropriate unit and integration tests, a release candidate. Why the term ‘candidate’? Because it can fail one or more of the subsequent tests or checks along the deployment pipeline through to production.

The moment that a version becomes a release candidate, it must be protected from any amendments, otherwise the subsequent tests are void. Any automated DevOps process needs to be able to check that it is using the correct unchanged version. The obvious place for the version in a database is to attach it to the database as an extended property. The check would best be done against the copy of the version in source control or a NuGet package.

So, what are these DevOps processes? Once a development database build becomes a release candidate, it enters a process often called a ‘deployment pipeline’. This is a misnomer because it implies that each check must be done serially. In fact, they are better done in parallel. The Release candidate must be tested in several ways, to make sure that it is robust enough, legal, does what the customer expects, and is what the customer wants. Checking all this involves quite a different range of tests, in addition to the unit and integration testing that is generally done for every build. After all these checks, the database is ready for staging. If it passes all the tests in staging, one of the few places where one can legally test with real data, then it can be released with some confidence to production. The key to speeding this process up is for all participants to know precisely what has changed, when and why.

You’ll notice from what I’ve said that this release process can become a ‘World of Pain’ without careful management. There are a lot of databases that need to be at the same version as the release candidate. The test cell could also include previous versions of the database. I’ve experienced dev sites where they are at several versions, and other where they are not there at all.

You’ve got User Acceptance Testing, where not only must the database and its associated applications be present and working, but everything coordinated and at the right version. Then there is Staging, where again you need the current staging database server to have the release candidate in place, along with the production data. If any of the databases involved in this process is at the wrong version, then the release process fails because one or more of the required checks couldn’t be done or, worse, were done for the wrong version. This latter mistake means the person responsible is buying all the drinks in the pub after work.

Whereas in development, you need to be cavalier with the data in order to work quickly, it is a different matter in the deployment. You can build a dev database and copy in whatever data you need, but a test or UAT database will need carefully curated data. To update or rollback such a database, you need a migration script. Each new release needs a migration script that will change the previously released version to the new release version. As it is only valid for a specific version of the target database, it needs to check the target’s version and only run if it at the correct version. This is the only migration script that is necessary for a release, though the worldly-wise will also add a rollback script that works in the opposite direction.

Using SQL Compare’s migration script as a starting point

Creating an effective script to migrate a database between versions should be a simple process but don’t count on it. The release candidate will be somewhat different to what is in production. If you’ve re-engineered the table structures, or renamed a lot of routines, columns or tables then your schema comparison tool may not always be able to work as you would wish; it will need help preserving the existing data. It will probably inform you if it can’t, but it may not.

What should you do then? The first instinct is to hand cut a migration script. I flinch, just thinking about it because the details are important, and the devil lurks there. It is so easy to forget all those small changes that have been made alongside the big ones, and either you will be overly optimistic and have a failed deployment, or you will be locked into an almost interminable cycle of amend-test-amend-test to ensure that the migration script works. My preference is to build on, and amend, the automatically generated migration script that is the output of the schema comparison, done by SQL Compare. This is because SQL Compare, as you’ll notice by inspecting the script it produces, is able to do many ingenious migrations that a simple hand cut ALTER script would fail.

Although migration scripts are what you use in the deployment pipeline, it is important not to become too distracted by migration issues during development. Database developers should be able to make plenty of stepwise improvements, even indulge in experiments, without cringing at the thought of the downstream hassle. You can delegate all the minor stuff to the build process. Even if SQL Compare sometimes can’t mind-read your intentions when you radically change tables, it can still sweat all the smaller stuff. All you need to do is to add the trickier migration code, which is likely to be already cut and tested by the developers, as specific migration scripts. After all, the developers would have had to do this anyway to modify their test data, in preparation for testing the build of each new version, during development.

Some SQL Compare conventions you need to know about

SQL Compare automatically generates a migration script that will make the schema of a target database match that of the source. It aims to ensure that either this script completes successfully or else rolls back, leaving no trace. To do this it checks the error level after every DDL statement (e.g. CREATE, ALTER or GRANT) and if an error has occurred that doesn’t cause an immediate rollback or termination of the script, it sets the state of the connection to execute nothing further until the end using IF @@ERROR <> 0 SET NOEXEC ON. For example:

|

1 2 3 4 5 6 7 8 9 |

PRINT N'Altering [dbo].[byroyalty]' GO ALTER PROCEDURE [dbo].[byroyalty] @percentage int AS select au_id from titleauthor where titleauthor.royaltyper = @percentage GO IF @@ERROR <> 0 SET NOEXEC ON GO |

At the end of the script there is the very important batch that catches any error.

|

1 2 3 4 5 6 7 8 |

DECLARE @Success AS BIT SET @Success = 1 SET NOEXEC OFF IF (@Success = 1) PRINT 'The database update succeeded' ELSE BEGIN IF @@TRANCOUNT > 0 ROLLBACK TRANSACTION PRINT 'The database update failed' END |

If SQL Server is still executing the code at this point, it will declare a variable called @success and set it to true. Then it sets the connection to execute the code, regardless of whether there has been an error. If the variable was successfully set to true, then the migration script is considered successful. Otherwise any transaction is rolled back, and the update is failed.

To be a considerate player, when adapting this script, you will need to check the error variable after every DDL SQL Statement and set NOEXEC ON if there has been an error that would affect the migration. Never set NOEXEC OFF in the code you use when altering a SQL Compare script!

Stamping the version on a database

The only sure way of ‘versioning’ a database is to stamp a version on it, by means of an extended property. There is no established standard for doing this. In preparation for our first example of customizing a SQL Compare script, let’s get a simple way of versioning a database in place. Later, this will allow us to use a migration script appropriately, without elaborate checks.

Here is some code that will allow you to set a version number in a database, in this case the Pubs database.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 |

SET NOEXEC off go USE pubs DECLARE @DatabaseInfo NVARCHAR(3750), @version NVARCHAR(20) SET @version=N'2.1.5' SELECT @DatabaseInfo = ( SELECT 'Pubs' AS "Name", @version AS "Version", 'The Pubs (publishing) Database supports a fictitious bookshop.' AS "Description", GetDate() AS "Modified", SUser_Name() AS "by" FOR JSON PATH ); IF not EXISTS (SELECT name, value FROM fn_listextendedproperty( N'Database_Info',default, default, default, default, default, default) ) EXEC sys.sp_addextendedproperty @name=N'Database_Info', @value=@DatabaseInfo ELSE EXEC sys.sp_Updateextendedproperty @name=N'Database_Info', @value=@DatabaseInfo |

Having created the Database_Info extended property, we can now stamp our original database with a version. As we develop a new version of a database, we can very simply put the same version stamp with updated version number in the same place. It will be automatically be copied to the target on a successful migration, if it is done within a transaction.

In GitHub, we can maintain a version number for the database in a version.json file that we set to the correct version number, before committing the source. This version number will not be in the object-level source because it is a property of the database itself. However, it will be in the migration scripts if you use a live database as the source. The code above is in Github as AddInitialVersion.sql.

Setting up a practice lab

For a simple demonstration, we’ll get the venerable pubs database out from the curiosity cabinet and install it as a ‘pretend’ production server. We will create a Github Project that has the aim of bringing the database slightly more up-to-date.

We’ll pretend that our ‘production’ copy of the Pubs database, the current release, is at version 2.1.5 so we’ll stamp it with that version. This is a read-only reference database, useful as a source of test data, and for checking the data hasn’t changed when running tests during development. The backup of pubs is here.

To stamp the version on it, you can use this, which assumes the Database_Info extended property doesn’t already exist.

|

1 2 3 |

EXEC sp_addextendedproperty N'Database_Info', N'[{"Name":"Pubs","Version":"2.1.5","Description":"The Pubs (publishing) Database supports a fictitious bookshop.","Modified":"2020-05-06T13:57:56.217","by":"PhilFactor"}]', NULL, NULL, NULL, NULL, NULL, NULL; |

To simulate the dev process, we’ll install another copy of the original Pubs database on the development server, with the Current Version in production. This represents the starting version i.e. the version you are then altering to make the new version. By having a reference to the current version (as well as to the current release), you know at any time what a migration script will need to achieve. For some teams, this might be a shared development database.

Putting the database in source control

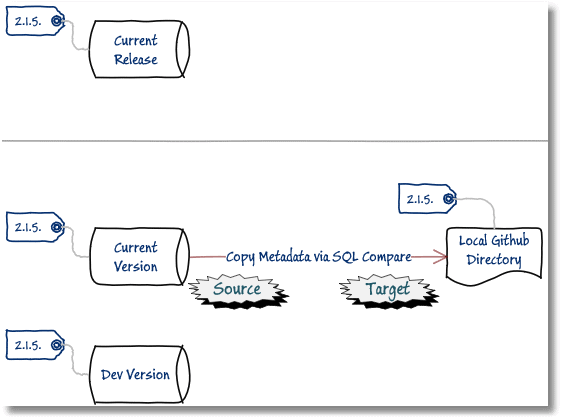

The next thing we do is to put the current version of Pubs in source control (GitHub). I’m just using SQL Compare to do it (see Retrospective Database Source Control with SQL Compare), but you may well prefer to use SQL Source Control.

Set the current version of the Pubs database as the source and a Build subdirectory of our newly created GitHub directory as the target.

I then add the same starting version number, “2.1.5”, in a version.json file. This is the overall version of the Pubs project (which is the same as the database build version), so we place the file in the base folder of the GitHub project (with the ReadMe).

|

1 2 3 |

{ "$schema": "https://raw.githubusercontent.com/dotnet/Nerdbank.GitVersioning/master/src/NerdBank.GitVersioning/version.schema.json", "version": "2.1.5" } |

Finally, set up a development database on our dev server. It starts by being identical to the current version. During development, you will be free to destroy and rebuild this copy freely, as often as required. You can create this copy by building the new version from source and then filling with test data from the current version, or if it is on the same server, you can populate the data repeatedly for tests using a “Kill and Fill” method. If you do this task often, you’ll want to take a look at SQL Clone because it makes it all very easy.

The first version

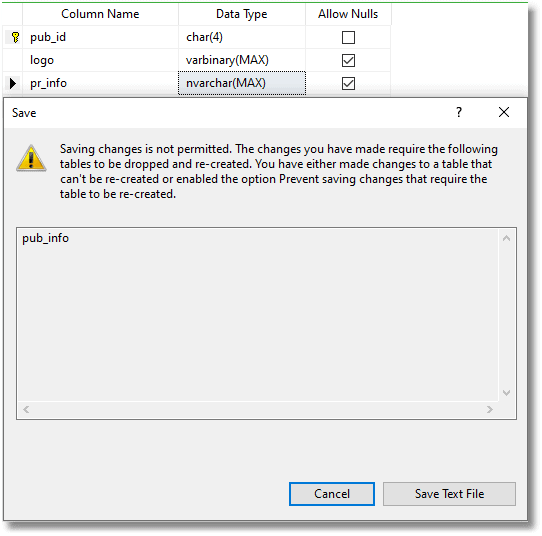

We decide to do a few hurried updates to our development database. We’ll just update the pub_info table that has some archaic datatypes, to deal properly with a binary logo and some Unicode information. First, we try to use SSMS’s Table designer, but it refuses to do it:

Sighing, we do it instead via SQL code, as follows:

|

1 2 |

ALTER TABLE dbo.pub_info ALTER COLUMN Logo varbinary(MAX) NULL; ALTER TABLE dbo.pub_info ALTER COLUMN PR_Info nvarchar(MAX) NULL; |

Normally, of course, we’d be doing a lot more changes for each version, but this just illustrates the stages. Bear with us.

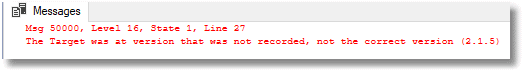

After extensive testing (ahem), and checks that the data hasn’t changed, we decide that this is a new version, and place the updated table in source control, as follows:

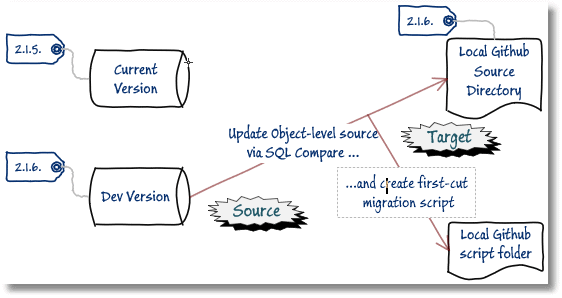

- Stamp the new development build version 2.1.6

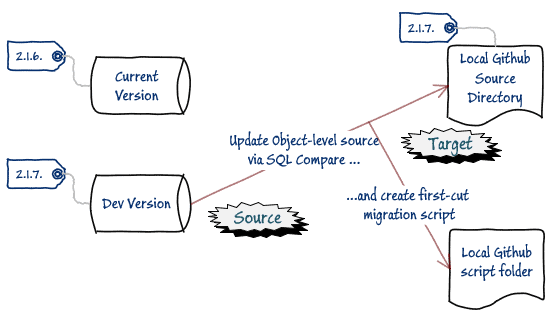

- Deploy the changes to GitHub using SQL Compare, with the build database (2.1.6) as the source and the GitHub Build directory as the target. Choose to “create a deployment script” and save it to a script folder in GitHub; this is our ‘first-cut’ migration script (in GitHub: migration_2-1-5_to_2-1-6.sql). Execute it to update the Build directory in the GitHub source.

This first-cut migration script that we’ve been presented with by SQL Compare is a lot more methodically done than we’d ever be able to do by tapping in the code. We find that the ALTER statements that SSMS wouldn’t allow us to make using the table Designer/editor can indeed be done without special migration code.

All we need to do on this occasion is to add to the auto-generated script a little trap that means that we don’t execute the code on the wrong version.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 61 62 63 64 65 66 67 68 69 70 71 72 73 |

SET NUMERIC_ROUNDABORT OFF GO SET ANSI_PADDING, ANSI_WARNINGS, CONCAT_NULL_YIELDS_NULL, ARITHABORT, QUOTED_IDENTIFIER, ANSI_NULLS ON GO SET XACT_ABORT ON GO SET TRANSACTION ISOLATION LEVEL Serializable GO BEGIN TRANSACTION; --inserted code Declare @version varchar(25); SELECT @version= Coalesce(Json_Value( ( SELECT Convert(NVARCHAR(3760), value) FROM sys.extended_properties AS EP WHERE major_id = 0 AND minor_id = 0 AND name = 'Database_Info'),'$[0].Version'),'that was not recorded'); IF @version <> '2.1.5' BEGIN RAISERROR ('The Target was at version %s, not the correct version (2.1.5)',16,1,@version) SET NOEXEC ON; END --end of inserted code GO IF @@ERROR <> 0 SET NOEXEC ON GO PRINT N'Altering [dbo].[pub_info]' GO IF @@ERROR <> 0 SET NOEXEC ON GO ALTER TABLE [dbo].[pub_info] ALTER COLUMN [logo] [varbinary] (max) NULL GO IF @@ERROR <> 0 SET NOEXEC ON GO ALTER TABLE [dbo].[pub_info] ALTER COLUMN [pr_info] [nvarchar] (max) COLLATE Latin1_General_CI_AS NULL GO IF @@ERROR <> 0 SET NOEXEC ON GO PRINT N'Creating extended properties' GO BEGIN TRY EXEC sp_addextendedproperty N'Database_Info', N'[{"Name":"Pubs","Version":"2.1.6","Description":"The Pubs (publishing) Database supports a fictitious bookshop.","Modified":"2020-05-15T13:30:11.697","by":"RED-GATE\\Tony.Davis"}]', NULL, NULL, NULL, NULL, NULL, NULL END TRY BEGIN CATCH DECLARE @msg nvarchar(max); DECLARE @severity int; DECLARE @state int; SELECT @msg = ERROR_MESSAGE(), @severity = ERROR_SEVERITY(), @state = ERROR_STATE(); RAISERROR(@msg, @severity, @state); SET NOEXEC ON END CATCH GO COMMIT TRANSACTION GO IF @@ERROR <> 0 SET NOEXEC ON GO -- This statement writes to the SQL Server Log so Redgate Monitor can show this deployment. IF HAS_PERMS_BY_NAME(N'sys.xp_logevent', N'OBJECT', N'EXECUTE') = 1 BEGIN DECLARE @databaseName AS nvarchar(2048), @eventMessage AS nvarchar(2048) SET @databaseName = REPLACE(REPLACE(DB_NAME(), N'\', N'\\'), N'"', N'\"') SET @eventMessage = N'Redgate SQL Compare: { "deployment": { "description": "Redgate SQL Compare deployed to ' + @databaseName + N'", "database": "' + @databaseName + N'" }}' EXECUTE sys.xp_logevent 55000, @eventMessage END GO DECLARE @Success AS BIT SET @Success = 1 SET NOEXEC OFF IF (@Success = 1) PRINT 'The database update succeeded' ELSE BEGIN IF @@TRANCOUNT > 0 ROLLBACK TRANSACTION PRINT 'The database update failed' END GO |

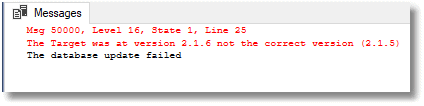

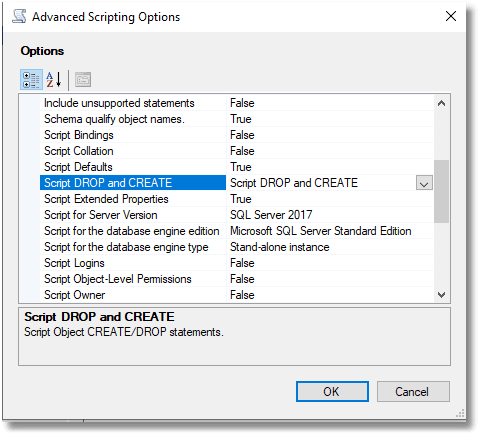

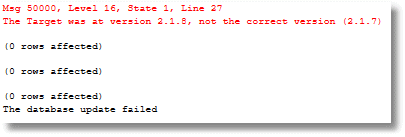

We test it out the edited migration script with the current version (2.1.5) as target. If all is well, it updates the version stamp of the target to the new version, because the source of the comparison already had it. If it all goes wrong, you’ll get this…

If you run it on a database without a version number, you’ll get this:

We compare the current version to the reference version, representing the current production version (using SQL Data Compare in our case), and find that the data is unchanged, but the reference database is now at the new version. We save the migration script and update the project version number on GitHub:

|

1 2 3 |

{ "$schema": "https://raw.githubusercontent.com/dotnet/Nerdbank.GitVersioning/master/src/NerdBank.GitVersioning/version.schema.json", "version": "2.1.6" } |

Finally, we push the local GitHub directory containing the build and script for the new version to source control.

This alteration to one table took some time and we didn’t make a great deal of progress. However, we now have an effective way of ensuring that the migration scripts are run in sequence if necessary!

The second version

I look at Pubs anew, and decide it needs a more radical haircut to bring it up to date. Basically, I want to do a wholesale change of VARCHAR columns into NVARCHAR columns, where appropriate, so that people or books with accents in their names will be rendered correctly!

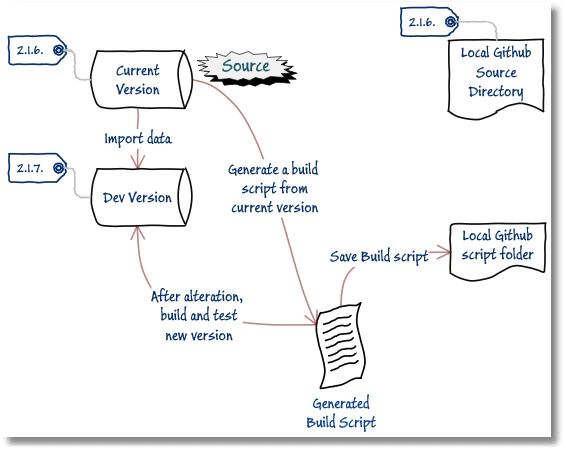

On my development copy, I methodically work on each table, with a full migration script, testing it all the time and saving each object independently into source cont…. (pause). Well, last time I did that, I was dressed in flares. What I actually did was to generate a build script from the current version (2.1.6), using Tasks > Generate Scripts in SSMS, and edited it to update and extend the datatypes. If you are using a script, you can rapidly make a lot of changes and be sure of catching all the references and repercussions. Objects such as triggers and rules just can’t hide when you are working with a script.

Obviously, these datatypes I’m changing need to be consistent across tables. If you are likely to make mistakes, it is worth adding code to delete the object if it exists. In SSMS, you do this by hitting the ‘Advanced’ key to fine-tune the script options.

I’m doing a broad revision that is beyond the scope of object-level work. This sort of work would include work with an ER Diagramming tool or any other design tool that makes me more productive.

I could, alternatively, have written a migration script to make all the changes to each table, but this simply isn’t necessary at this stage, because there isn’t any data to preserve. Why write a script before it becomes necessary when SQL Compare just takes care of all that? All we need here is a build script, and this is a thing SSMS does well. You can work on a build script that just includes whatever objects you need (everything in our case).

Once you’ve done all the necessary edits, you use the new build script to build a new development version of the database (2.1.7), from scratch, then import the data from the current version to test it out (see either the BCP approach or Kill/Fill). When I’m happy that everything is working, I update the build script to reflect any bug-fixes and save it to a scripts directory, which is a GitHub subdirectory of the directory for this project.

I now stamp the Dev version as 2.1.7 and then compare our newly built dev database with the source code directory containing 2.1.6, to update the object scripts and save the migration script (migration_2-1-6_to_2-1-7.sql), as before.

We now edit migration script to put in the trap for the version number, this time to ensure the target database is at version 2.1.6. As there were no problems with populating the data, we don’t need to add any migration code. We test it out on the current version, and once again verify that the current version is now 2.1.7, but the data is unchanged.

We save the migration script for 2.1.6 to 21.7, along with the build script for 21.7, to the Script directory in the project. We update the version.json file to ensure that everything is in sync.

|

1 2 3 |

{ "$schema": "https://raw.githubusercontent.com/dotnet/Nerdbank.GitVersioning/master/src/NerdBank.GitVersioning/version.schema.json", "version": "2.1.7" } |

Finally, we’ve ready to push all the change to GitHub and, of course, it magically recognizes that some of the object level scripts have changed and advises you on what needs to be committed to the local repository.

The third version

This time, we decide that we need to alter the publications so that instead of having just one topic, we allow several sub-topics to be applied as well. We’re doing this to illustrate a migration step that will need some additional migration code in the migration script.

Taking the v2.1.7 build script we just saved, we remove the type column from the titles table and create two new tables. One of these is a list of tags called TagName and a second one called TagTitle to associate one or more tags with a title, but which has just one primary tag for each title. Again, I’m using a build script to do this because there are implications for altering this table and creating two others.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 |

CREATE TABLE [dbo].[titles]( [title_id] [dbo].[tid] NOT NULL, [title] [nvarchar](120) NOT NULL, [pub_id] [char](10) NULL, [price] [money] NULL, [advance] [money] NULL, [royalty] [int] NULL, [ytd_sales] [int] NULL, [notes] [nvarchar](max) NULL, [pubdate] [datetime] NOT NULL, CONSTRAINT [UPKCL_titleidind] PRIMARY KEY CLUSTERED ( [title_id] ASC )WITH (PAD_INDEX = OFF, STATISTICS_NORECOMPUTE = OFF, IGNORE_DUP_KEY = OFF, ALLOW_ROW_LOCKS = ON, ALLOW_PAGE_LOCKS = ON) ON [PRIMARY] ) ON [PRIMARY] GO CREATE TABLE TagName (TagName_ID INT IDENTITY(1, 1) PRIMARY KEY, Tag VARCHAR(20) NOT NULL UNIQUE); go CREATE TABLE TagTitle ( TagTitle_ID INT IDENTITY(1, 1), title_id dbo.tid NOT NULL REFERENCES titles (title_id), Is_Primary BIT NOT NULL DEFAULT 0, TagName_ID INT NOT NULL REFERENCES TagName (TagName_ID), CONSTRAINT PK_TagNameTitle PRIMARY KEY CLUSTERED (title_id ASC, TagName_ID) ON [PRIMARY] ); |

When you run the full build script for the new version (2.1.8) you’ll see build errors, because there are a couple of dependent reporting stored procedures, reptq2 and reptq3, that use the old type column that need to be changed.

Msg 207, Level 16, State 1, Procedure reptq2, Line 4 [Batch Start Line 459] Invalid column name 'type'. Msg 207, Level 16, State 1, Procedure reptq3, Line 10 [Batch Start Line 475] Invalid column name 'type'.

You’ll have the refactored tables in place though, so you can work out the new code for these without too many problems (I won’t show it here, but you’ll see it in the migrations script shortly). You’ll also have to fix a DEFAULT constraint on the old type column, so it references the new Tag column instead.

Of course, to test out this new build, we now need to fill it with the data from the Current Version (2.1.7), but this time we’ll need to work out data migration scripts to move the data in the old type column into the new tables, and also to populate the other new columns.

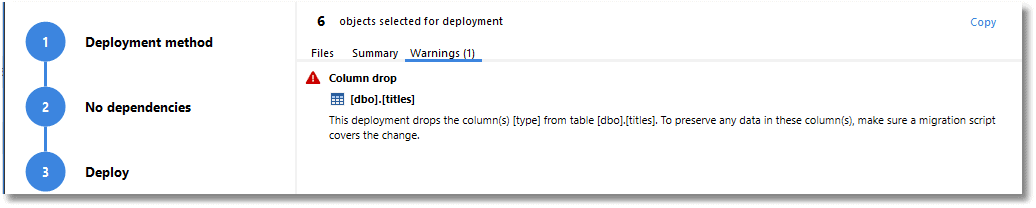

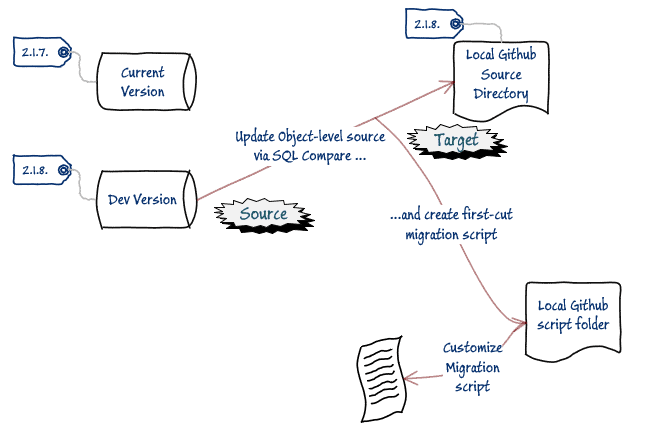

Once we’ve worked out all the issues with the data migration, and run all the tests, we stamp the new development build with v2.1.8, and use SQL Compare, with the build, as the source and the contents of our source code directory as the target, in order to update the object scripts and save the “2.1.7 to 2.1.8” migration script, which this time comes with a warning.

The next stage is to edit the migration script. This is more than the simple version trap we had before.

Fortunately, we know about the migration issues because we had to fill the v2.1.8 build to test those stored procedures.

We need to open the migration script we just saved and edit it. We create a temporary table, which is a version of titles. We use this to add the data to the two new tables. Conveniently, the altered stored procedure reptq2 acts as a convenient unit test (see migration_2-1-7_to_2-1-8.sql for the finished script). If the two procedures give the same result as the previous version, then we are likely to get home early. If you’re following along, you’ll need to use SQL Compare to generate the script then add to it the sections marked out by the “inserted code” comments.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 61 62 63 64 65 66 67 68 69 70 71 72 73 74 75 76 77 78 79 80 81 82 83 84 85 86 87 88 89 90 91 92 93 94 95 96 97 98 99 100 101 102 103 104 105 106 107 108 109 110 111 112 113 114 115 116 117 118 119 120 121 122 123 124 125 126 127 128 129 130 131 132 133 134 135 136 137 138 139 140 141 142 143 144 145 146 147 148 149 150 151 152 153 154 155 156 157 158 159 160 161 162 163 164 165 166 167 168 169 170 171 172 |

/* Run this script on : Script created by SQL Compare version 13.4.5.6953 from Red Gate Software Ltd at 12/05/2020 09:35:47 */ SET NUMERIC_ROUNDABORT OFF GO SET ANSI_PADDING, ANSI_WARNINGS, CONCAT_NULL_YIELDS_NULL, ARITHABORT, QUOTED_IDENTIFIER, ANSI_NULLS ON GO SET XACT_ABORT ON GO SET TRANSACTION ISOLATION LEVEL Serializable GO BEGIN TRANSACTION GO IF @@ERROR <> 0 SET NOEXEC ON GO --inserted code Declare @version varchar(25); SELECT @version= Coalesce(Json_Value( ( SELECT Convert(NVARCHAR(3760), value) FROM sys.extended_properties AS EP WHERE major_id = 0 AND minor_id = 0 AND name = 'Database_Info'),'$[0].Version'),'that was not recorded'); IF @version <> '2.1.7' BEGIN RAISERROR ('The Target was at version %s, not the correct version (2.1.7)',16,1,@version) SET NOEXEC ON END go PRINT N'Saving TITLES table to temporary table' SELECT titles.title_id, titles.title, titles.type, titles.pub_id, titles.price, titles.advance, titles.royalty, titles.ytd_sales, titles.notes, titles.pubdate INTO #titles FROM [dbo].[titles]; IF @@ERROR <> 0 SET NOEXEC ON GO --end of inserted code PRINT N'Dropping constraints from [dbo].[titles]' GO ALTER TABLE [dbo].[titles] DROP CONSTRAINT [DF__titles__type__07F6335A] GO IF @@ERROR <> 0 SET NOEXEC ON GO PRINT N'Altering [dbo].[titles]' GO IF @@ERROR <> 0 SET NOEXEC ON GO ALTER TABLE [dbo].[titles] DROP COLUMN [type] GO IF @@ERROR <> 0 SET NOEXEC ON GO PRINT N'Creating [dbo].[TagName]' GO CREATE TABLE [dbo].[TagName] ( [TagName_ID] [int] NOT NULL IDENTITY(1, 1), [Tag] [varchar] (20) COLLATE Latin1_General_CI_AS NOT NULL ) GO IF @@ERROR <> 0 SET NOEXEC ON GO PRINT N'Creating primary key [PK__TagName__3109E9F88C8DE0AD] on [dbo].[TagName]' GO ALTER TABLE [dbo].[TagName] ADD PRIMARY KEY CLUSTERED ([TagName_ID]) GO IF @@ERROR <> 0 SET NOEXEC ON GO PRINT N'Adding constraints to [dbo].[TagName]' GO ALTER TABLE [dbo].[TagName] ADD UNIQUE NONCLUSTERED ([Tag]) GO IF @@ERROR <> 0 SET NOEXEC ON GO PRINT N'Creating [dbo].[TagTitle]' GO CREATE TABLE [dbo].[TagTitle] ( [TagTitle_ID] [int] NOT NULL IDENTITY(1, 1), [title_id] [dbo].[tid] NOT NULL, [Is_Primary] [bit] NOT NULL DEFAULT ((0)), [TagName_ID] [int] NOT NULL ) GO IF @@ERROR <> 0 SET NOEXEC ON GO PRINT N'Creating primary key [PK_TagNameTitle] on [dbo].[TagTitle]' GO ALTER TABLE [dbo].[TagTitle] ADD CONSTRAINT [PK_TagNameTitle] PRIMARY KEY CLUSTERED ([title_id], [TagName_ID]) GO IF @@ERROR <> 0 SET NOEXEC ON GO PRINT N'Altering [dbo].[reptq2]' GO ALTER PROCEDURE [dbo].[reptq2] AS select case when grouping(TN.tag) = 1 then 'ALL' else TN.tag end as type, case when grouping(pub_id) = 1 then 'ALL' else pub_id end as pub_id, avg(ytd_sales) as avg_ytd_sales FROM titles INNER JOIN tagtitle ON TagTitle.title_id = titles.title_id INNER JOIN dbo.TagName AS TN ON TN.TagName_ID = TagTitle.TagName_ID where pub_id is NOT NULL AND is_primary=1 group by pub_id, TN.tag with rollup GO IF @@ERROR <> 0 SET NOEXEC ON GO PRINT N'Altering [dbo].[reptq3]' GO ALTER PROCEDURE [dbo].[reptq3] @lolimit money, @hilimit money, @type char(12) AS select case when grouping(pub_id) = 1 then 'ALL' else pub_id end as pub_id, case when grouping(TN.tag) = 1 then 'ALL' else TN.tag end as type, count(titles.title_id) as cnt from titles INNER JOIN tagtitle ON TagTitle.title_id = titles.title_id INNER JOIN dbo.TagName AS TN ON TN.TagName_ID = TagTitle.TagName_ID where price >@lolimit AND is_primary=1 AND price <@hilimit AND TN.tag = @type OR TN.tag LIKE '%cook%' group by pub_id, TN.tag with rollup GO IF @@ERROR <> 0 SET NOEXEC ON GO PRINT N'Adding foreign keys to [dbo].[TagTitle]' GO ALTER TABLE [dbo].[TagTitle] ADD FOREIGN KEY ([TagName_ID]) REFERENCES [dbo].[TagName] ([TagName_ID]) GO ALTER TABLE [dbo].[TagTitle] ADD FOREIGN KEY ([title_id]) REFERENCES [dbo].[titles] ([title_id]) GO IF @@ERROR <> 0 SET NOEXEC ON GO PRINT N'Altering extended properties' GO BEGIN TRY EXEC sp_updateextendedproperty N'Database_Info', N'[{"Name":"Pubs","Version":"2.1.8","Description":"The Pubs (publishing) Database supports a fictitious bookshop.","Modified":"2020-05-06T13:57:56.217","by":"PhilFactor"}]', NULL, NULL, NULL, NULL, NULL, NULL END TRY BEGIN CATCH DECLARE @msg nvarchar(max); DECLARE @severity int; DECLARE @state int; SELECT @msg = ERROR_MESSAGE(), @severity = ERROR_SEVERITY(), @state = ERROR_STATE(); RAISERROR(@msg, @severity, @state); SET NOEXEC ON END CATCH GO --inserted code INSERT INTO TagName (Tag) SELECT DISTINCT type FROM #titles; IF @@ERROR <> 0 SET NOEXEC ON INSERT INTO TagTitle (title_id,Is_Primary,TagName_ID) SELECT title_id, 1, TagName_ID FROM #titles INNER JOIN TagName ON #titles.type = TagName.Tag; IF @@ERROR <> 0 SET NOEXEC ON DROP TABLE #titles go --end of inserted code COMMIT TRANSACTION GO IF @@ERROR <> 0 SET NOEXEC ON GO DECLARE @Success AS BIT SET @Success = 1 SET NOEXEC OFF IF (@Success = 1) PRINT 'The database update succeeded' ELSE BEGIN IF @@TRANCOUNT > 0 ROLLBACK TRANSACTION PRINT 'The database update failed' END GO |

To test this migration script works, we are likely to need to revert the dev version back to 2.1.7 repeatedly, and fill it with data from the current version, and then repeat our unit tests. You can avoid this repeated build with simpler migrations like this by doing them within a transaction and rolling back, but this can make debugging the migration script more difficult. We repeat until our migration script works reliably.

Once it does, we run it on the current version, and updates the Pubs database fine, and all the data is as it should be in the new table structure. It refuses to do it twice, which is correct

It also refuses to do it with other databases, which is comforting.

Putting all the version scripts together

We’ve gone through three versions. The first was a minimal change just to demonstrate how to do a version check. The second one changed all those varchar datatypes to the more correct NVARCHAR datatypes. The final one made the categorization system for books more useful and updated the stored procedures to use the new system. This last one needed a data migration. We can now test it all out. We want to know if we can migrate a database of the raw version, as downloaded from the internet, and upgrade it to the same version as the one we have in our source directory.

As the original backup is long vanished from the internet, I’ve added a copy in the backup directory of the Github repository. We now just apply to it the four scripts in the correct order…

- AddInitialVersion.sql – Just adds the version to the original Pubs

- migration_2-1-5_to_2-1-6.sql – migrates from 2.1.5 to 2.1.6: merely updating the datatypes of the PubInfo table.

- migration_2-1-6_to_2-1-7.sql – migrates from 2.1.6 to 2.1.7: updating the datatypes of all the varchar types to NVarchar.

- migration_2-1-7_to_2-1-8.sql – migrates from 2.1.7 to 2.1.8: Splits the Title table to allow more than one book type to be assigned. Demonstrates a data migration.

Hopefully, all is well, and this has illustrated how there are two alternative routes to arrive at a version of a database. It can be done directly by running a build script on an empty database, followed by the addition of data, or it can be done from an existing version with a sequence of migration scripts, modified slightly from the scripts auto-generated from SQL Compare. Remember that the actual work was done using a data-less script without any care about the consequences for migration. That legwork was all done by SQL Compare with a little help where it became impossible for the tool to read my mind.

One problem that crop up is that of constraints declared without an explicit name. This is fine with temporary tables and table variables, but it isn’t good practice for base tables because it makes life more difficult for any comparison. It can also have a knock-on effect when you are using a chain of existing migration scripts to create a version with existing data intact. If you create your initial copy of Pubs from a build script that uses the lazy definition of constraints, then these migration scripts can sometimes fail when applied to the database because these constraints are given names internally in the metadata such as 'PK__TagName__3109E9F88C8DE0AD'. That random number won’t be repeated. That is why I’ve provided Pubs as a backup rather than a published build script.

Conclusions

During the database development process, a good developer should be free to use the best possible tool for the current task. You might need to use an ER Diagramming tool that exports the new table design. At some point in a project, you might want to use a traditional build script, a table-builder tool, or even a text editor, as well as SSMS. If you can produce the deliverables of the object level script and the migration script, then that’s OK.

For reliable deployment of database changes, we need in version control both the object-level source for each version, and the migration scripts to move between versions. Where a version becomes a release candidate, then the migration script will be created that takes the database from the previous release. SQL Compare can provide a ‘first-cut’ of this script which can be combined with custom migration code whenever SQL Compare cannot do all that is required. You can, of course, run individual version-to-version scripts in sequence, but there may be too many of these to manage, and you may have gone to-and-fro with a design idea which didn’t survive integration tests. One migration script per version is easier to manage.

Finally, automation is important. As more and more is expected in terms of speed and volume of unit testing, integration testing, automated build, and ‘office work’ (checking logs, team coordination, documentation, issue management, and reporting), so automation becomes the route to a more pleasant and productive work life. In a forthcoming article, I’ll show the various tasks in the development stage, as described in this article, slot into an automated SQL Change Automation process.