The Benefits and Challenges of Managing Hybrid Estates

Updated September 2023 – More and more organizations are migrating their data to the cloud, but the majority are likely to end up managing hybrid estates, with some data managed through a cloud provider and some managed locally.

This leads to some unique issues, especially around monitoring and services. If the objective of migrating to the cloud is simply to implement virtual machines (VMs) in a different location than you traditionally do, there will be fewer challenges. However, as your organization starts to adopt cloud-based database services you'll find that they have different monitoring requirements than traditional hardware or VMs, leading to a need for hybrid monitoring.

If your current monitoring solution focuses only on local installations of SQL Server or PostgreSQL, chances are high you’re soon going to need more. You may need to cover a growing and increasingly complex mixed data environment, comprising both local databases and those hosted on cloud platforms provided by Microsoft (Azure), Amazon (AWS RDS) or Google (GCP), and including database services such as Azure SQL Database, Aurora PostgreSQL and others.

Most of all, you’re going to want a way to monitor your whole database estate in one place, from local big iron and VMs to cloud instances and services. That single-pane-of-glass view of a hybrid environment is important, and you either have to work through a significant set of tasks to build it yourself or get it from a third-party monitoring tool.

The benefits of hosting data in the cloud

Even though the year is 2023 and cloud technologies have been around for a good 18 years at this point, there are still a lot of questions about why any organization would move to the cloud. Startups typically adopt cloud technologies because they provide a way to move rapidly without incurring the overhead of building a data center. However, the cloud makes just as much sense for other types of organization.

Flexibility across departments

While established organizations may have a significant investment in their existing data centers, they can also benefit from the cloud. Some departments may be moving at a faster pace, with different objectives leading to a requirement for a hybrid data management model. This is why you’ll see them adopting the latest cloud services for data management and introducing hybrid environments.

Lower costs and less expertise required

Many organizations are seeing additional benefits in using the cloud, in whole or in part, as a fundamental aspect of their data management. By using cloud technologies for data management, the organization can focus on its core needs. Building a global data center requires a lot of technical expertise and massive infrastructure. By contrast, implementing databases across multiple data centers through providers like Azure, AWS or Google Cloud requires less technical expertise and no infrastructure expenditure.

Option to scale up or down

Organizations are also seeing the need to expand and contract their systems on demand. A retail management system will likely need a lot more resources around Black Friday than a random Friday in August. The cloud, in general, offers that ability to expand and contract, as a fundamental aspect of the design.

Built-in security management

You’re also seeing that lots of organizations have a hard time securing their systems. Getting an appropriate upgrade and patching service to ensure the most fundamental protections on the system is a lot of work and requires technical knowledge beyond what many organizations possess. The major cloud services provide patching, upgrades and other maintenance such as database backups as a fundamental part of the service.

Speed

Cloud service offerings continue to expand. It’s not just a question of where your data lives, it’s also a question of how your data is consumed. As cloud-based analytics, data mining, machine learning and AI expand, we’re increasingly seeing the advantages of storing the data close to where it is analyzed. Having the data and the analysis services co-located will, of course, increase the speed of the analysis and make it easier to carry out. This means many more people are moving data into the cloud.

All of these things taken together are why the majority of organizations are working with cloud services.

The benefits of hosting data on-premises

If the cloud offers so many positive aspects, why then do organizations retain, or even expand, their on-premises data management? There are two fundamental answers to this question. The first is simple: sunk investment. If you’re already storing your data successfully on a local server or in a data center, moving to the cloud doesn’t have much attraction.

The second is also simple. Organizations often need more than the cloud offers and have special functional needs to keep the business online or beat the competition.

Very small organizations may only need a single database or instance to get the job done. If they’ve purchased that and are happily maintaining it, moving to the cloud doesn’t make sense from a business standpoint. The same thing applies to an organization that has built out a large-scale data center and may have already built out off-site secondary systems and mechanisms to support global scale. While organizations in either of these scenarios might see some benefit from a move to the cloud, it simply doesn’t make financial sense for them.

I’ve worked with organizations that are doing highly specialized types of data processing. Either they have a transaction volume that would swamp a cloud-based system, or they have a need for processing power that would be difficult to replicate in the cloud. What’s most interesting about these types of systems is the competitive edge that the company derives from them. So, unlike a lot of organizations, they’re not simply investing in servers and disks. These organizations invest in their people to build superior systems so that they stay ahead of the competition.

Between specific technical requirements and those sunk investments, you’re going to be seeing on-premises servers continue to be a factor for a very long time to come.

The challenges of hybrid monitoring

According to a recent Microsoft Azure Cloud Migration Report, 62% of organizations have a cloud migration or modernization strategy in place. However, simply saying ‘the cloud’ does not really mean anything. It could mean VMs in Azure, AWS, Google, or any other cloud provider. Since we’ve been managing servers remotely for more than 30 years, and VMs remotely for almost 20, this is a well-established problem space. Things get really interesting when we talk about the Platform as a Service (PaaS) offerings.

Implementing PaaS for your databases requires a number of changes in how you get things done, how you consume data, and overall how your data is managed and monitored. While moving your data into services like Amazon RDS or Azure SQL Database has a ton of benefits, there are also costs. The biggest of these costs is the need for additional training and knowledge among your staff.

One of the key places where more knowledge is needed is in monitoring your databases. Some traditionally fundamental aspects of monitoring go away, like whether or not a given machine is online. Some aspects of monitoring will be unique to the cloud platform, such as the need to track DTU or vCore use for Azure SQL Databases. Most of the usual monitoring metrics remain the same, but may be measured differently or have extra significance in a PaaS environment. For example, CPU usage tracking has extra significance when running on 'burstable' Amazon RDS instances.
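As a rough illustration of that point, the sketch below evaluates the same CPU reading differently depending on where the database runs. It is not how any particular monitoring tool works: the `Sample` class, the platform labels and every threshold are assumptions made up for this example, and it simply presumes that a DTU percentage and a CPU credit balance have already been collected from the relevant platform.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Sample:
    """One reading for a monitored database target (hypothetical shape)."""
    name: str
    platform: str  # "on_prem", "azure_sql_dtu" or "rds_burstable"
    cpu_percent: float
    host_online: Optional[bool] = None          # on-premises only
    dtu_percent: Optional[float] = None         # DTU-based Azure SQL Database only
    cpu_credit_balance: Optional[float] = None  # burstable RDS instances only


def assess(sample: Sample) -> List[str]:
    """Return warnings for one sample. All thresholds are illustrative."""
    warnings: List[str] = []

    # Machine availability only applies where you own the machine.
    if sample.platform == "on_prem" and sample.host_online is False:
        return [f"{sample.name}: host is offline"]

    # High CPU matters everywhere.
    if sample.cpu_percent >= 90:
        warnings.append(f"{sample.name}: CPU at {sample.cpu_percent:.0f}%")

    # DTU-based databases are constrained by their DTU limit.
    if sample.platform == "azure_sql_dtu" and (sample.dtu_percent or 0) >= 80:
        warnings.append(f"{sample.name}: DTU use at {sample.dtu_percent:.0f}%")

    # On burstable instances, even moderate CPU is a concern once credits run low.
    if (
        sample.platform == "rds_burstable"
        and sample.cpu_credit_balance is not None
        and sample.cpu_credit_balance < 20
    ):
        warnings.append(
            f"{sample.name}: CPU credits low ({sample.cpu_credit_balance:.0f}) "
            f"at {sample.cpu_percent:.0f}% CPU"
        )
    return warnings


estate = [
    Sample("sql-onprem-01", "on_prem", cpu_percent=45, host_online=True),
    Sample("orders-db", "azure_sql_dtu", cpu_percent=45, dtu_percent=85),
    Sample("pg-reporting", "rds_burstable", cpu_percent=45, cpu_credit_balance=5),
]
for sample in estate:
    print(sample.name, assess(sample) or "ok")
```

All three targets report the same 45% CPU, yet only the on-premises server comes back clean. The other two are flagged because of platform-specific limits that a purely local monitoring setup would never look at.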

Add to this the fact that you’re going to have a lot of on-premises data to worry about as well. You’re in a hybrid environment now, and you’re going to need a solution that can deal with it.

The need for a hybrid monitoring tool

Even as little as ten years ago, organizations traditionally had a single monolithic database, typically SQL Server, Oracle, PostgreSQL or MySQL. While those four relational databases remain the most popular, organizations have moved from monolithic estates to multi-database estates.

The 2023 Stack Overflow Developer Survey, for example, shows that developers are now typically working with three database types. Those databases can also be on-premises or in the cloud, with Flexera’s 2023 State of the Cloud Report showing that 58% of organizations are using multiple public clouds.

So, a database estate that was once relatively easy to manage and monitor has become one where different types of servers, databases and instances are in use, both on-premises and in various flavors of the cloud.

Hence the rise in the popularity of third-party monitoring tools like Redgate Monitor, which have had to adapt quickly to meet these new demands. When Redgate Monitor was first launched in 2008 under the name SQL Response, it monitored only SQL Server on-premises. It now monitors PostgreSQL as well as SQL Server and covers databases, instances and servers on AWS, Azure and Google Cloud as well as on-premises, incorporating all of the different metrics in a similar way.

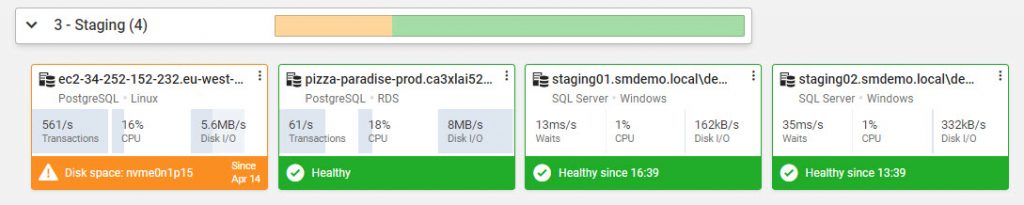

Importantly, it still enables you to monitor and manage the entire hybrid estate from a single user interface. Whether the databases are hosted on-premises, in the cloud or both, it provides an overview of the health of every instance, availability group, cluster, and VM on one central web-based interface:

It does this without compromising on the need to provide in-depth performance metrics, tailored for the requirements of each RDBMS and each platform. When a problem occurs, you can drill down quickly from top-level resource metrics like CPU, IO and memory consumption, to detailed query execution statistics that will help you pinpoint the root cause. Of course, effective monitoring will not only detect potential problems before they annoy users, but also prevent them from happening in the first place. Continuous monitoring and analysis across a wide range of metrics allows Redgate Monitor to provide projected resource usage, based on the current trend, as well as to establish baselines for important sets of metrics, so you can establish quickly when behavior deviates from the norm.
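To make the ideas of a baseline and a projection concrete, here is a minimal sketch of both. It is a generic illustration, not a description of how Redgate Monitor calculates them: the function names, the three-standard-deviation rule and the straight-line trend are all assumptions chosen for simplicity.

```python
from statistics import mean, stdev
from typing import Optional, Sequence


def deviates_from_baseline(
    history: Sequence[float], current: float, k: float = 3.0
) -> bool:
    """True if `current` is more than k standard deviations from the
    mean of `history` (the baseline). k=3 is an illustrative default."""
    if len(history) < 2:
        return False  # not enough data to form a baseline
    spread = stdev(history)
    if spread == 0:
        return current != history[0]
    return abs(current - mean(history)) > k * spread


def days_until_limit(
    daily_values: Sequence[float], limit: float
) -> Optional[float]:
    """Fit a least-squares line to one reading per day and return how many
    days after the latest reading the trend reaches `limit`.
    Returns None when usage is flat or falling."""
    n = len(daily_values)
    if n < 2:
        return None
    x_bar = (n - 1) / 2
    y_bar = mean(daily_values)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in enumerate(daily_values))
    sxx = sum((x - x_bar) ** 2 for x in range(n))
    slope = sxy / sxx
    if slope <= 0:
        return None
    intercept = y_bar - slope * x_bar
    day_at_limit = (limit - intercept) / slope
    return max(0.0, day_at_limit - (n - 1))


# CPU % at the same hour on previous days, compared with today's reading.
cpu_history = [40, 42, 38, 41, 39]
print(deviates_from_baseline(cpu_history, 55))  # well outside the usual range
print(deviates_from_baseline(cpu_history, 42))  # within the usual range

# Disk space used (GB) over five days, against a 200 GB volume.
print(days_until_limit([100, 110, 120, 130, 140], 200))
```

A real tool would need to account for seasonality, such as comparing a Monday morning with previous Monday mornings, and for growth that is not linear. Even so, the simple version shows why a long, continuous history of metrics is what makes both techniques possible.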

If you have a small footprint in the cloud, or you’re not there at all, you still have to deal with your on-premises servers. The volume of data under management there, and the demands on those systems, continue to grow just as they do everywhere else. Even as Redgate Monitor has expanded what it can do with PostgreSQL, AWS, Azure and Google Cloud, we haven’t left the on-premises systems behind. We’re expanding the capabilities of Redgate Monitor in order to support you, regardless of where your data lives.

Conclusion

As a technologist, I love the challenges that the hybrid cloud-based/on-premises environments we’re dealing with present. However, many more of us simply want to get the job done and not sweat every nuance of the differences our hybrid systems present.

When it comes to monitoring, I’d say that’s one of the biggest areas where it would be a lot easier if you could just get a single tool to do the job. Since Redgate Monitor supports everything you’re doing today, and will support the things you do in the future, as you move to the cloud, it makes for a great solution to the hybrid monitoring problem.

Tools in this post

Redgate Monitor

Real-time multi-platform performance monitoring, with alerts and diagnostics
