Six shades of masking your data

Foundry is Redgate's research and development division. We develop products and technologies for the Microsoft data platform. Each project progresses through Foundry's four-stage product development process: Research, Concept, Prototype, Beta. At each stage, the Foundry team is exploring the scope and potential for Redgate to develop a product. One of our projects, data masking, has seen us working to improve the management of sensitive data and synthesize more realistic data. To do this, we’ve talked to multiple customers and we’ve come up with six different approaches to data masking.

Keeping track of sensitive data

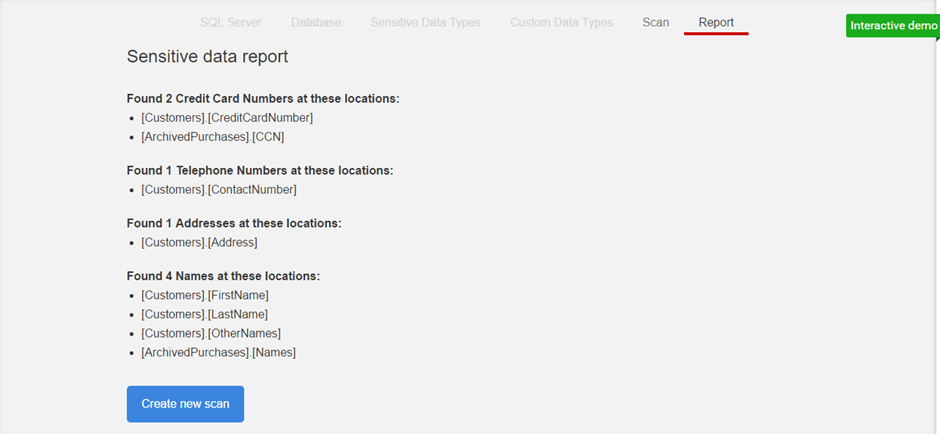

The first step in preventing sensitive data from leaving your databases is knowing where that data is. Keeping track of what data is sensitive and where that data is can be very challenging.

Our first concept application uses machine learning in order to intelligently discover sensitive data in any and all columns in a SQL Server database. It uses a combination of scanning actual column values along with the names of SQL Objects to determine if a column contains sensitive data:

Masking rules

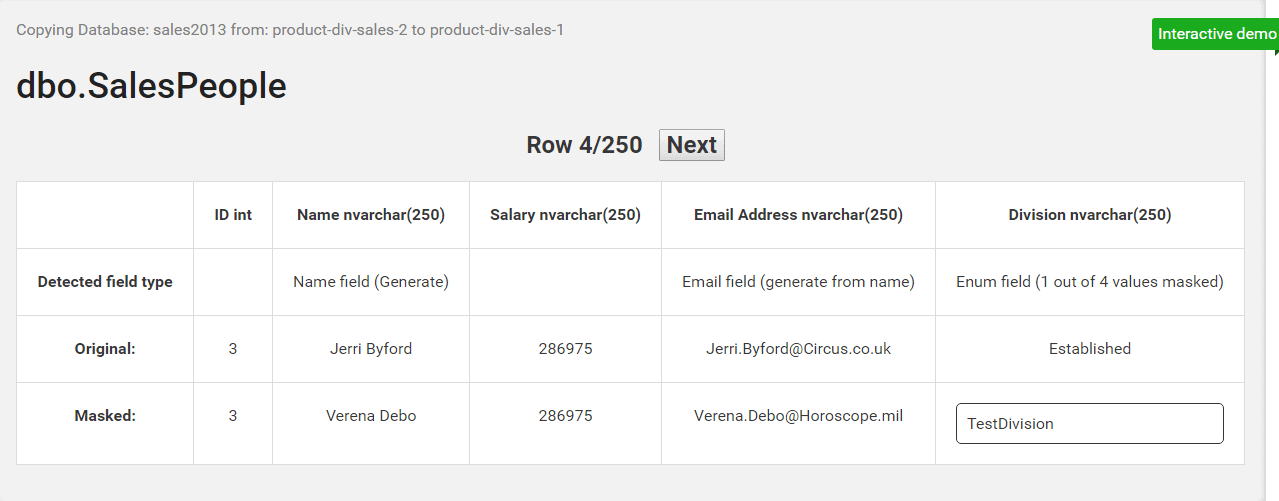

In many cases, personal data can be desensitized by applying a couple of basic masking rules to each row. For example, columns with the name ‘Name’ can have their values replaced with a random value chosen from a list of first names and surnames.

In this concept application, we show how a applying a few simple masking rules to a table can produce realistic and desensitized data:

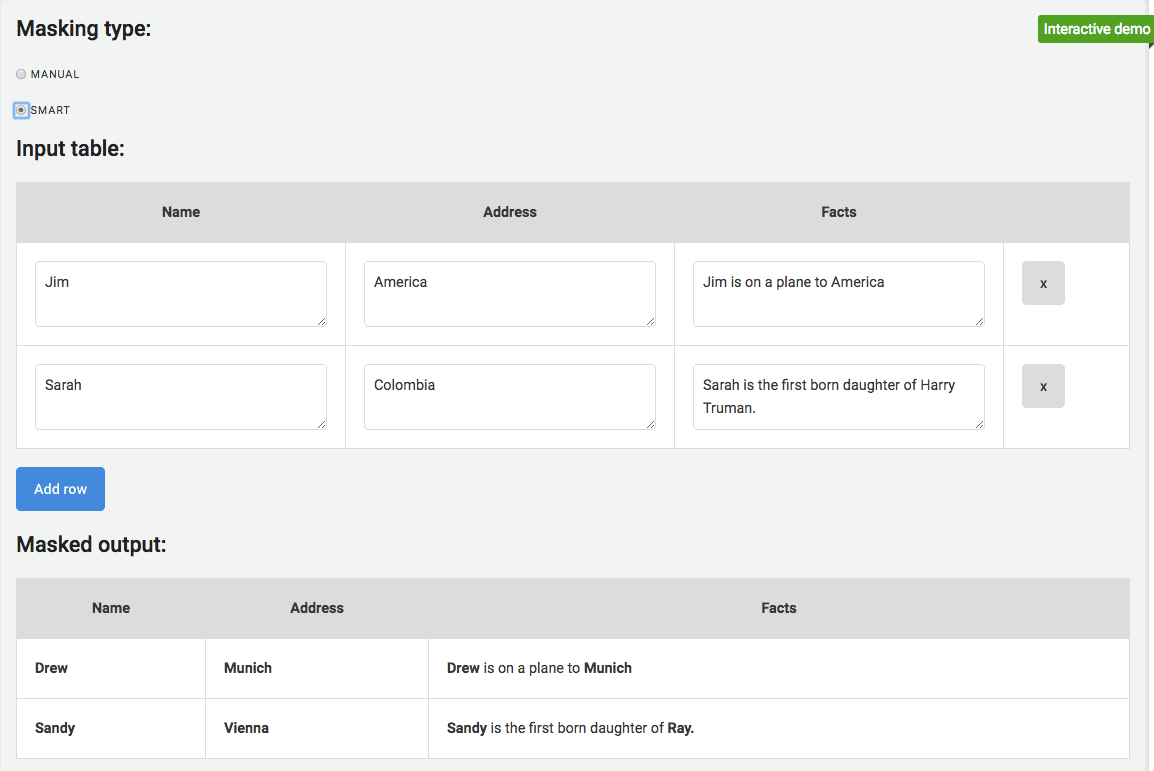

Sensitive data in large text fields

Large text fields can pose a big problem when trying to mask sensitive data from a database. Often these fields represent data that has meaning but no inherent structure, and it's not sufficient to just null them out. However, these text fields can contain unique sensitive data that is hard to detect with traditional masking tools.

This concept application explores how natural language processing could be used to find and replace sensitive data in large amounts of text:

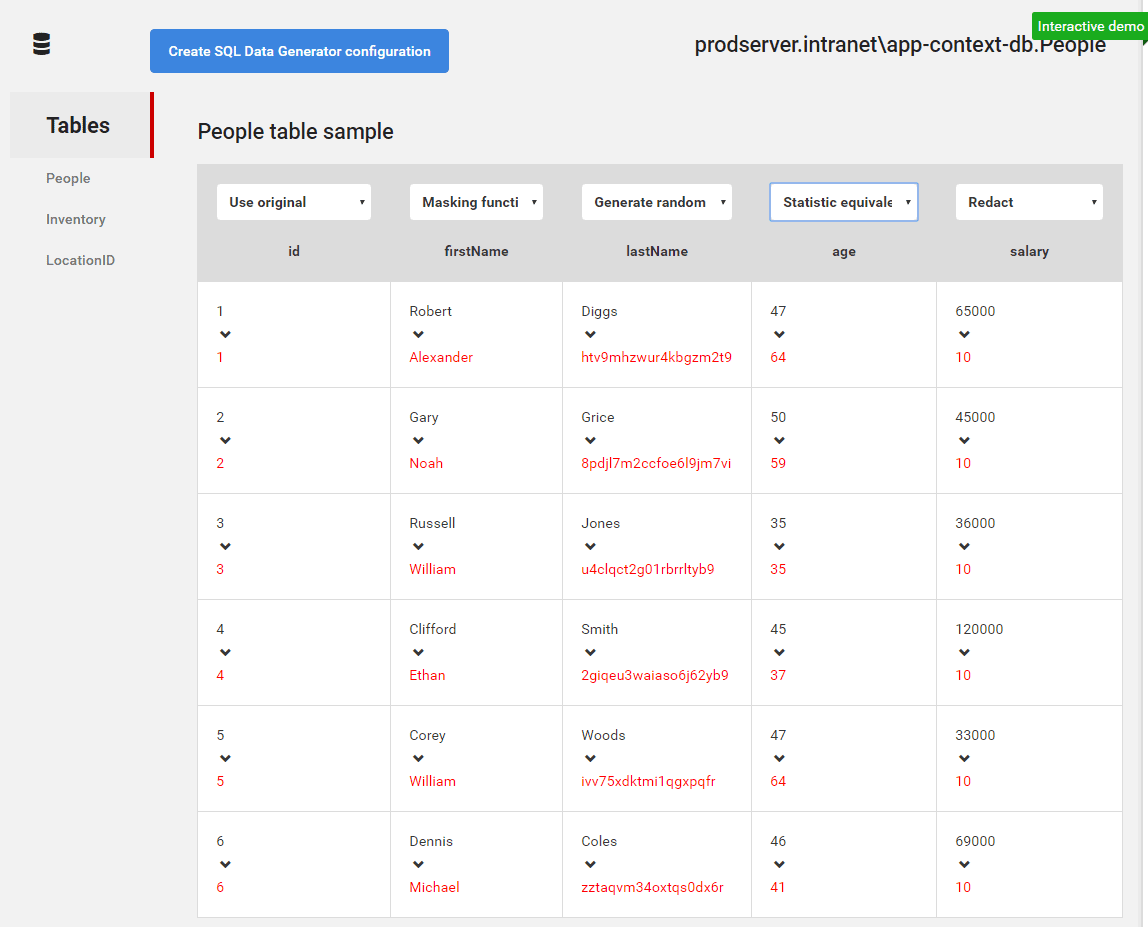

Generate random data from production

Why risk masking data when you can just generate some realistic random data instead? SQL Data Generator is really useful for filling a test or dev database with random data. However, configuring it to create sensible, production-like data can be very time consuming, especially with lots of tables and columns.

What if we could use example data from production to create a SQL Data Generator configuration in a few simple steps?

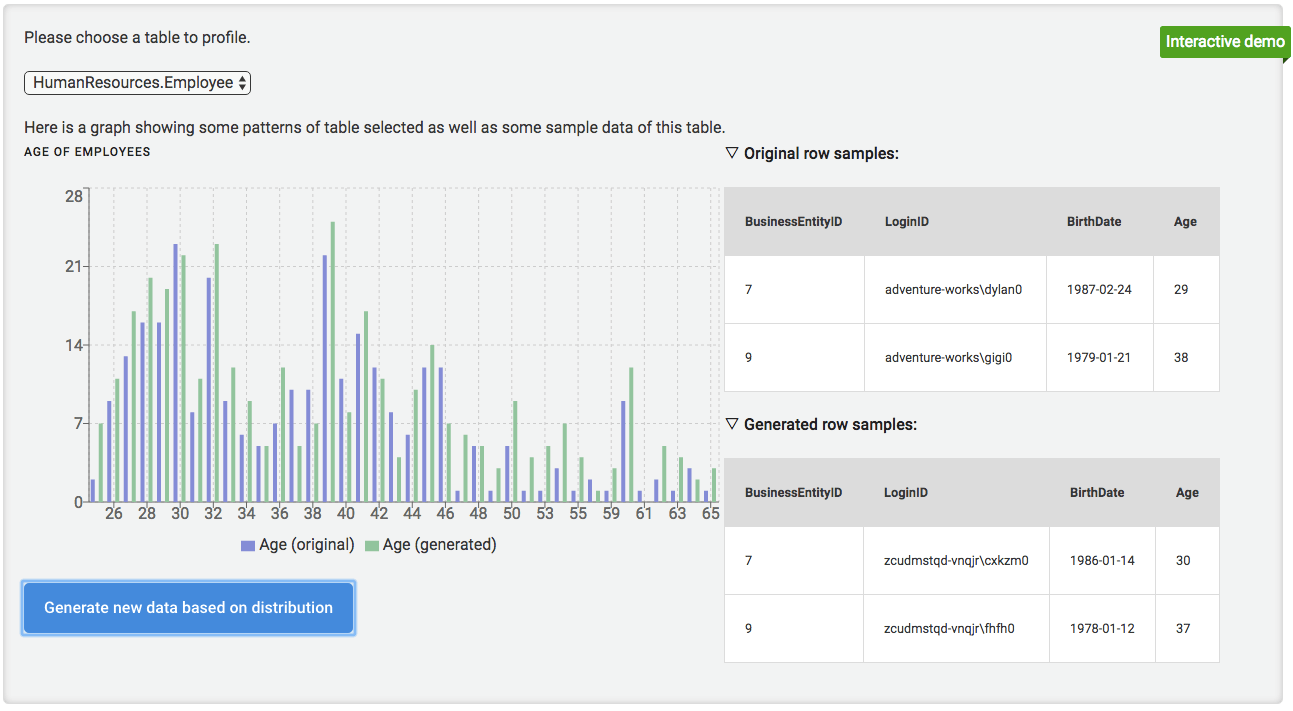

Data distribution

One of the problems with generating data row by row is that you lose useful information about the entire data set. For example, if we choose a random value for each row, then the average of the generated data will be quite different the average data in production.

Our next concept application demonstrates how we could generate data that has the a similar shape and distribution to the data in production:

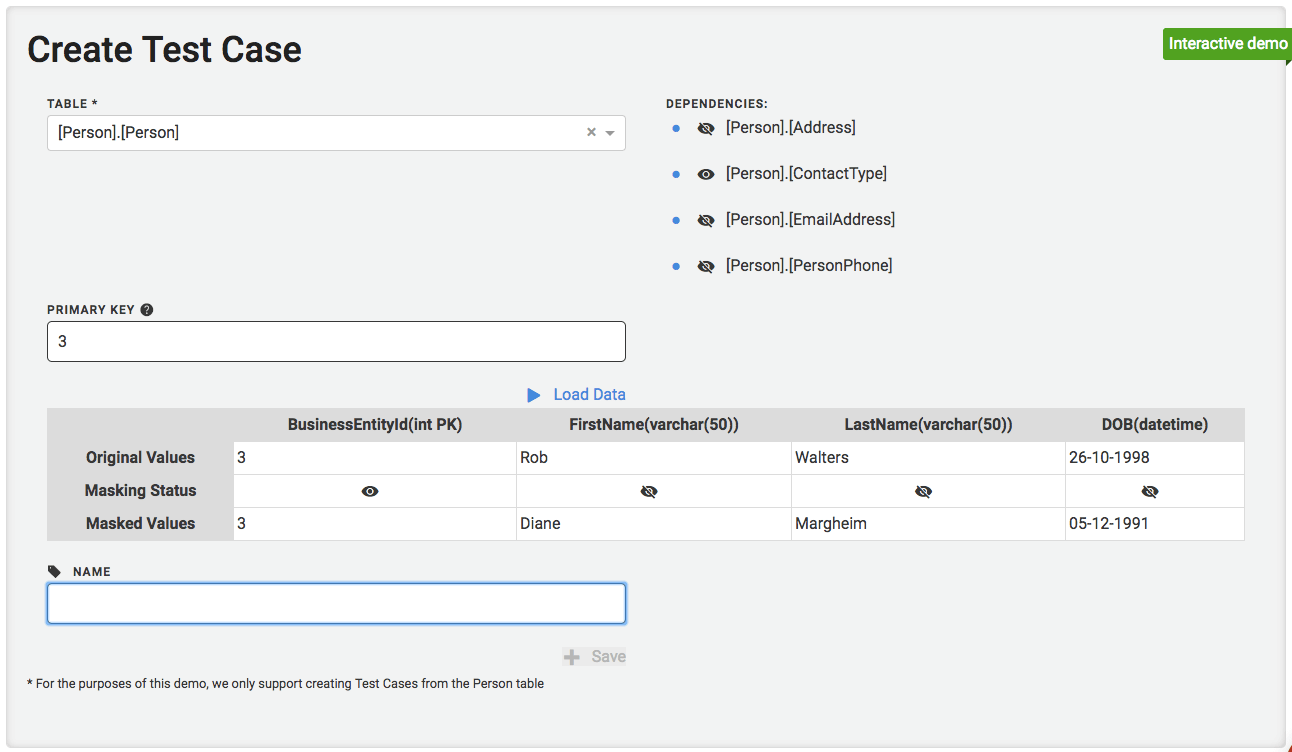

Manage test data

Creating test data by hand and scripting all the dependent data is time consuming, error prone and often misses edge cases. Testing using a representative subsample of data from production and keeping it up to date – and free from sensitive data – can be time-consuming and painful. What happens when your go-to test case closes their account?

Our final concept application looks at how we could help you create and manage test data:

Find out more

To play with these applications for yourself, head over to our demo page.

We’d also love to hear your thoughts, and to know if there are any types of masking that we’ve missed. If you want to get in touch you can drop us an email or chat with us using the intercom link on our demo page.

Loading comments...