Build and fill a database using JSON and SQL Change Automation

Phil Factor demonstrates how to export data from a database, as JSON files, validate it using JSON Schema, then build a fresh development copy of the database using SQL Change Automation, and import all the test data from the JSON files.

When you are developing a database, and doing regular builds from Source Control, you must produce a working database, of course, but it also needs data. The data you use will vary according to the type of tests you need to run. Integration test runs generally use one or more standard datasets so that, so that you can test each process, such as an end-of-day reconciliation, with a set of standard inputs and check that for a given input, your output is always the same. When you are doing performance testing you need lashings of data, of the same distribution of the real data. If you are doing user-acceptance testing, the data needs to look real, and so on. You could end up needing several sets of data. Loading the data manually can be an awful chore so it is best to automate the process.

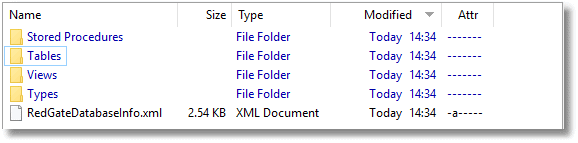

There are several ways of maintaining this data. In my experience, it is usually kept in files, which generally come from a database, or series of databases. The first task is to synchronize the source database with the current build, in case table structures have changed. We can then export the data into a file directory (one table per file), and the imported into the development copy of the database during the build process.

BCP native format is fastest way of importing or exporting data, of course, but can’t be used if the target database changes its table schemas. We can use CSV, but SQL Server is puzzlingly bad at CSV, and it is easy to get problems. As I now tend to use MongoDB for building data sets, I’m increasingly using JSON import. If it is used with JSON schema, then the data can be checked and validated well before the build, so that success at the stage of data import is well guaranteed.

The step-by-step approach

To demonstrate the principles, I’ll do it step by step. We’ll start with a copy of the PUBS database, on the same server as the test database, containing all the data we need. I also have a utils database containing the various utility procedures we need to do the leg-work of the data export-validate-import process. As we just use stored procedures, we can merely use temporary stored procedures to do the same thing.

I’ll export the data from the Pubs database, as JSON files, where each file represents the intended contents of a table, stored in a standard ‘object-within-array’ format. I’ll validate the data using JSON Schema, build the development copy of the database using SCA, and then import all the data from JSON files.

Exporting data to JSON

We need to start with a directory containing the source of our data. We’ll use the venerable Pubs database (in fact, a modified version, with plenty of data in it) just to illustrate. I’ve provided the source in the accompanying Pubs.zip file).

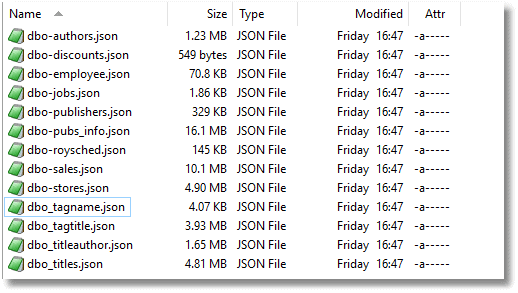

Additionally, we have the contents of PUBS, greatly augmented from the original, stored as JSON files.

In my example, I exported the data via MongoExport with optional array brackets and commas. As an alternative, we can easily use a SQL Server with the data to provide the JSON data files. I usually do this from PowerShell purely because of its superior file handling, but let’s see how to do it in SQL. The @path directory must already exist on the target server (so, in this example, ‘C:\data\RawData\PubsData’).

|

1 2 3 4 5 6 7 8 9 10 11 12 |

DECLARE @TheCommand NVARCHAR(4000) SELECT @TheCommand=' Declare @Path sysname =''C:\data\RawData\'+Db_Name()+'Data\'' DECLARE @destination NVARCHAR(MAX) = (Select @path+Replace(Replace(Replace(''?'',''.'',''-''),'']'',''''),''['','''')+''.json'') DECLARE @Json NVARCHAR(MAX) EXECUTE #SaveJsonDataFromTable @database='''+Db_Name()+''', @tablespec= ''?'', @JSONData=@json OUTPUT Execute #SaveJSONToFile @theString=@Json, @filename=@destination' EXECUTE sp_MSforeachtable @command1=@TheCommand |

I provide the source of these procedures here. They need to be compiled in on the same connection, which means in the same SSMS query window, or on the same connection in PowerShell.

Saving this data should be a trivial matter but there is a complication in that some of the CLR datatypes can cause an error, so the code is slightly more complicated than you might want. We need to output this in UTF8.

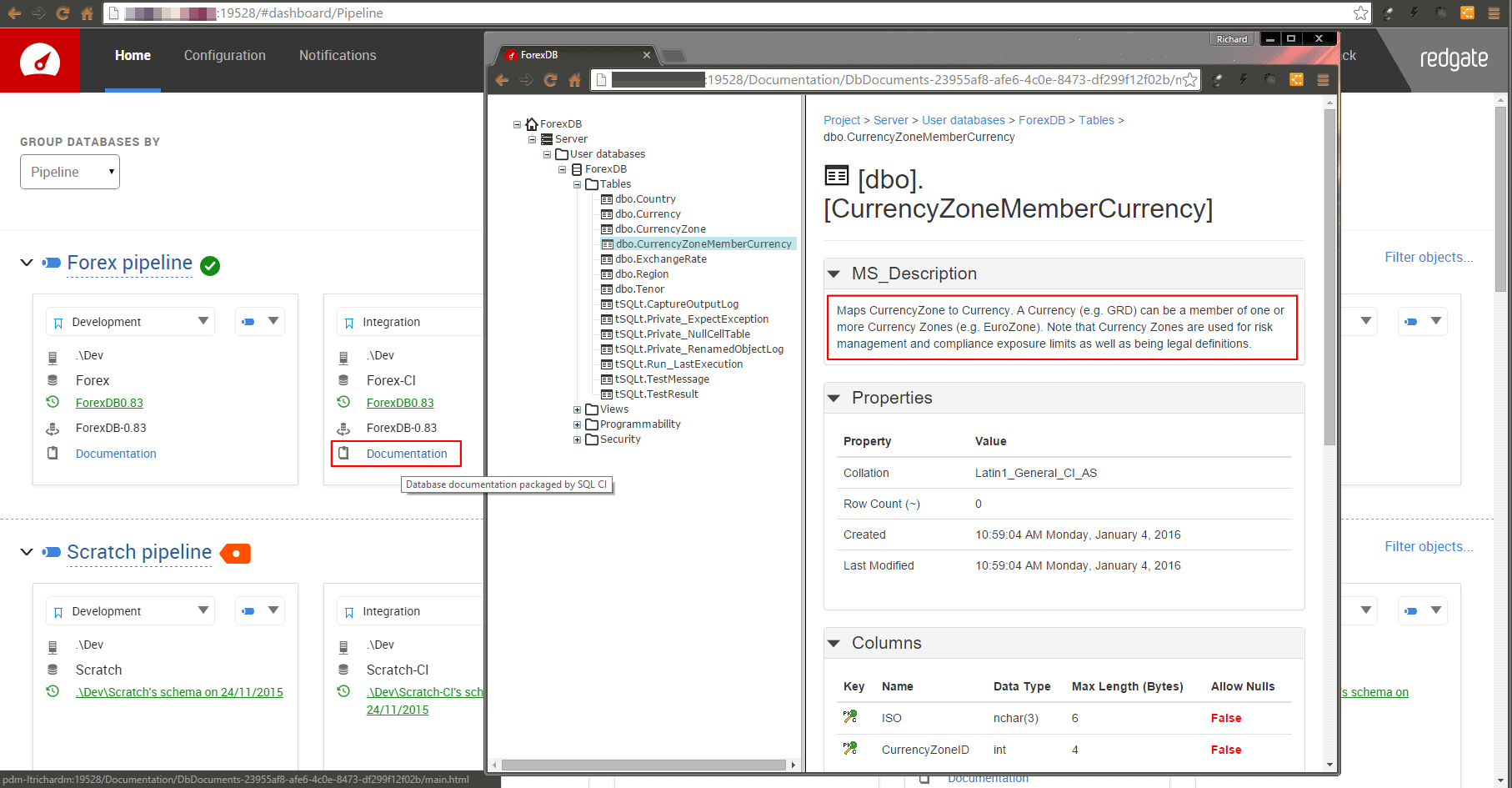

Building the database with SQL Change Automation

We can build the database easily enough on our SQL Server. First, we create the database.

|

1 2 |

USE master; CREATE DATABASE PubsBuild |

Then, with SQL Change Automation (SCA), we can very quickly build the database. In this case, I’m using SQL Server authentication, but Windows Authentication is even easier.

|

1 2 3 4 5 6 7 8 9 |

Import-Module SqlChangeAutomation -ErrorAction silentlycontinue -ErrorVariable +ImportErrors $production = New-DatabaseConnection ` -ServerInstance "MyServer" ` -Database "PubsBuild" ` -Username "MyLogin" ` -Password "MyPassword" Sync-DatabaseSchema ` -Source 'MyPathTo\pubs' ` -Target $production |

Checking the Data

If you are feeling lucky, you can skip this stage, which is to check the JSON import files to make sure that they have good data. Sometimes, if you are masking data, or generating new sets of data, or pulling data in from public sources, you’ll find that things can go wrong.

We can generate a JSON schema for the JSON files from our new database, because any SQL Server database knows what it can ‘eat’. We can easily get that information, and we can add additional information such as the SQL datatypes of each column and the column order of our tables. We want this JSON Schema because we can use it to check the data files to make sure that the data is all there for the columns we need, and generally of a type that can be coerced into a SQL Server datatype.

We can do this in SQL if we can use xp_cmdshell on the built database. Otherwise we can use PowerShell or any .NET scripting. I usually use PowerShell scripting because SQL Server is notoriously unreliable with producing or consuming the standard UTF-8 format that is standard for JSON files. However, we’ll show this in SQL. Again, the target server directory must exist.

|

1 2 3 4 5 6 7 |

USE PubsBuild DECLARE @TheCommand NVARCHAR(4000) SELECT @TheCommand='DECLARE @ourJSONSchema NVARCHAR(MAX) --our JSON Schema EXECUTE #CreateJSONSchemaFromTable @TableSpec='''+Db_Name()+'.?'',@JSONSchema=@ourJSONSchema OUTPUT DECLARE @destination NVARCHAR(MAX) = (Select ''C:\data\RawData\'+Db_Name()+'Schema\''+Replace(Replace(Replace(''?'',''.'',''-''),'']'',''''),''['','''')+''.json'') Execute #SaveJSONToFile @theString=@ourJSONSchema, @filename=@destination' EXECUTE sp_MSforeachtable @command1=@TheCommand |

I give the source of these routines here. We can now use PowerShell to run the checks. Note that if you are using MongoDB as your source, it adds its own additional primary key, of a particular BSON datatype. We won’t want it, so we simply ignore that data.

We use NewtonSoft’s JSON Schema Validator, which will flush out any problems if we specify the checks in the JSON file.

I’ve arranged the data in two directories, schema and data, and the files have the same name in each (see Pubs.zip). The path to this is defined by the variable $OurDirectory.

Execute this PowerShell on your server:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 |

$OurDirectory='OurPathToTheData' $NewtonsoftJsonPath = Resolve-Path -Path "lib\Newtonsoft.Json.dll" $NewtonsoftJsonSchemaPath = Resolve-Path -Path "lib\Newtonsoft.Json.Schema.dll" Add-Type -Path $NewtonsoftJsonPath Add-Type -Path $NewtonsoftJsonSchemaPath # define the validator type $source = @' public class Validator { public static System.Collections.Generic.IList<string> Validate(Newtonsoft.Json.Linq.JToken token, Newtonsoft.Json.Schema.JSchema schema) { System.Collections.Generic.IList<string> messages; Newtonsoft.Json.Schema.SchemaExtensions.IsValid(token, schema, out messages); return messages; } } '@ Add-Type -TypeDefinition $source -ReferencedAssemblies $NewtonsoftJsonPath,$NewtonsoftJsonSchemaPath Get-Childitem "$($ourDirectory)\PubsData\*.json" -Filter *.json|Select name|foreach{ $filename=$_.Name $JSONData = [IO.File]::ReadAllText("$($ourDirectory)\PubsData\$filename") $Schema = [IO.File]::ReadAllText("$($ourDirectory)\PubsSchema\$filename") $tokenisedData=[Newtonsoft.Json.Linq.JToken]::Parse($JsonData) $tokenisedSchema = [Newtonsoft.Json.Schema.JSchema]::Parse($Schema) $ErrorMessages = [Validator]::Validate($tokenisedData,$tokenisedSchema) if ($ErrorMessages.Count -eq 0) {write-host "The data of $filename is valid against the schema"} else {$ErrorMessages|Select-Object -First 5| foreach{ write-warning "data file $filename $_"}} } |

After a bit of sorting out of issues with the data, we get

The data of dbo-authors.json is valid against the schema The data of dbo-discounts.json is valid against the schema The data of dbo-employee.json is valid against the schema The data of dbo-jobs.json is valid against the schema The data of dbo-publishers.json is valid against the schema The data of dbo-pub_info.json is valid against the schema The data of dbo-roysched.json is valid against the schema The data of dbo-sales.json is valid against the schema The data of dbo-stores.json is valid against the schema The data of dbo-TagName.json is valid against the schema The data of dbo-TagTitle.json is valid against the schema The data of dbo-titleauthor.json is valid against the schema The data of dbo-titles.json is valid against the schema

Importing the data

So now we have the data and we have the database. What can go wrong?

- We need a bit of fiddling because the

OPENJSONfunction can’t have certain deprecated datatypes in its ‘explicit schema’ syntax, - OpenJSON cannot use some of Microsoft’s own CLR datatypes.

- We must cope properly with identity fields.

- We need to temporarily disable all constraints before we start and turn them back on when we finish.

I generally use PowerShell for this: We can import this via SQL but here I’m doing so purely to illustrate that it can be done, more or less. The worst problem I found was that it is impossible to read in a UTF8 text file (the standard for a JSON file) and preserve the characters! You can do it in BCP but not OPENROWSET for some reason. This is said to work but doesn’t:

|

1 2 |

SELECT bulkcolumn FROM OPENROWSET (BULK 'OurPathTo\utf8testfile.txt', SINGLE_CLOB, CODEPAGE='65001') AS TheText |

Fortunately, this database is from the old world of ASCII, so we can do the best we can in SQL. We execute the following SQL

|

1 2 3 4 5 6 7 8 9 |

USE PubsBuild --disable all constraints for all tables EXEC sp_msforeachtable 'ALTER TABLE ? NOCHECK CONSTRAINT all' EXECUTE sp_MSforeachtable @command1= ' Execute #SaveJsonDataToTable @Tablespec =''?'', @database=''PubsBuild'', @path=''C:\data\RawData\pubsData\''' ---- --enable all constraints for all tables EXEC sp_msforeachtable 'ALTER TABLE ? WITH CHECK CHECK CONSTRAINT all' |

The source of the procedure that does the real work is attached to the article and stored here.

A scripted approach

Here we have a script that creates as many databases as you need on as many servers as you want, using whichever data sets you choose. For each database, you can provide a source code directory, a JSON data directory (JSONData), and a JSON schema directory (JSONSchema). The JSONData directory must correspond to the database, in terms of the tables and their metadata.

In this example script, I’ve given the information for the databases, servers and login in a PowerShell data structure. Usually, I turn this into a PowerShell cmdlet that takes the config as a filename. However, this script form allows much easier debugging and change. The current data structure is to give a minimal example that you can build on.

The script works in a similar way to the manual approach, but I create a temporary stored procedure to do the hard work, and use SMO for the slow dignified bits. This is a more satisfactory approach, if only because one can read in the JSON files within PowerShell and they can be anywhere visible to your workstation on the network. By using just temporary stored procedures for the heavy lifting, we keep the database and server clean without a lasting trace of our activities.

I have not embedded the routine that tests the JSON data, because I usually do that separately. Also, the generation of the JSON schema and the JSON data is normally a separate process. As you can imagine, the generation of the schema must be done by doing a database build from source control. It need have no data in it at all. The schema can then be generated and then tested against the data. Normally, one would wish to add checks to the schema, such as Regexes. (wonderful for the checking of dates, email addresses and the like).

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 61 62 63 64 65 66 67 68 69 70 71 72 73 74 75 76 77 78 79 80 81 82 83 84 85 86 87 88 89 90 91 92 93 94 95 96 97 98 99 100 101 102 103 104 105 106 107 108 109 110 111 112 113 114 115 116 117 118 119 120 121 122 123 124 125 126 127 128 129 130 131 132 133 134 135 136 137 138 139 140 141 142 143 144 145 146 147 148 149 150 151 152 153 154 155 156 157 158 159 160 161 162 163 164 165 166 167 168 169 170 171 172 173 174 175 176 177 178 179 180 181 182 183 184 185 186 187 188 189 190 191 192 193 194 195 196 197 198 199 200 201 202 203 204 205 206 207 208 209 210 211 212 213 214 215 216 217 218 219 220 221 222 223 |

set-psdebug -strict $ErrorActionPreference = "stop" # <#variables that you need to fill in for each project. Normally I have this as a separate file and read the relevant data file in according to the project being built The script adds to the config object as it is executed #> $config = @{ # The directory to store the log in. eg; D:\MyDatabaseproject\Logs 'LogDirectory' = 'S:\work\programs\SQL\ScriptsDirectory\Logs'; #the name of the scripting activity or project 'project' = 'Pubs'; 'Databases' = @{ 'first' = #a target database. Use a blank string for the user for windows auth. @{ 'ServerInstance' = 'MyServer'; 'Database' = 'Daniel'; 'Username' = 'PhilFactor'; 'Source' = 'S:\work\programs\SQL\ScriptsDirectory\MyServer\pubs\scripts'; 'DataSource' = 'S:\work\programs\SQL\ScriptsDirectory\MyServer\pubs\Data'; 'SQLCompareOptions' = '' }; 'Second' = #a target database. Use a blank string for the user for windows auth. @{ 'ServerInstance' = 'MyServer'; 'Database' = 'Lion'; 'Username' = 'PhilFactor'; 'Source' = 'S:\work\programs\SQL\ScriptsDirectory\MyServer\pubs\scripts'; 'DataSource' = 'S:\work\programs\SQL\ScriptsDirectory\MyServer\pubs\data'; 'SQLCompareOptions' = '' } } } # and some handy constants $MS = 'Microsoft.SQLServer' $My = "$MS.Management.Smo" $TheLogFile = "$($config.LogDirectory)\logfile.txt" #Load SMO assemblies $Errors = @() Import-Module sqlserver -DisableNameChecking -ErrorAction silentlycontinue -ErrorVariable +Errors #check and if necessary create all directories specified by the config that can be created $DirectoriesThatCanBeCreated = @("$($config.LogDirectory)\$($config.project)") $DirectoriesThatCanBeCreated | foreach{ # If necessary, create the directory for the artefact if (-not (Test-Path -PathType Container $_)) { # we create the directory if it doesn't already exist New-Item -ItemType Directory -Force -Path $_ ` -ErrorAction silentlycontinue -ErrorVariable +Errors; } } <# here we run a check that all the data we need is there #> $DirectoriesThatMustExist = @() $DirectoriesThatMustExist += $config.Databases.GetEnumerator() | foreach{ $_.Value.Source } $DirectoriesThatMustExist += $config.Databases.GetEnumerator() | foreach{ $_.Value.DataSource } $DirectoriesThatMustExist | group { $_ } | foreach{ $_.name } | foreach{ if (-not (Test-Path -PathType Container $_)) { $Errors += "Directory $($_) could not be found!" } } <# make sure all the connections are servicable- that we can log into the servers #> if ($errors.Count -eq 0) { $config.Databases.GetEnumerator() | foreach{ $ConnectionErrors = @() # to store any connection errors in $Database = $_ $db = $Database.Value; $conn = new-object "$MS.Management.Common.ServerConnection" $conn.ServerInstance = $db.ServerInstance if ($db.username -ieq '') { # Crikey, this is easy, windows Passwords. Don't you love 'em? $conn.LoginSecure = $true; $db.Connection = New-DatabaseConnection ` -ServerInstance $db.ServerInstance -Database $db.Database ` -ErrorAction silentlycontinue -ErrorVariable +Errors; } else { <# This is more elaborate a process than you might expect because we can't assume that we can use Windows authentication, because of Azure, remote servers outside the domain, and other such complications. We can't ever keep passwords for SQL Server authentication as part of the static script data. At this stage, we ask for passwords if they aren't known, and otherwise store them as secure strings on file in the user area, protected by the workstation security. #> #create a connection object to manage credentials $conn = new-object "$MS.Management.Common.ServerConnection" $conn.ServerInstance = $db.ServerInstance $encryptedPasswordFile = ` "$env:USERPROFILE\$($db.Username)-$($db.ServerInstance).txt" # test to see if we know about the password in a secure string stored in the user area if (Test-Path -path $encryptedPasswordFile -PathType leaf) { #has already got this set for this login so fetch it $encrypted = Get-Content $encryptedPasswordFile | ConvertTo-SecureString $Credentials = ` New-Object System.Management.Automation.PsCredential($db.Username, $encrypted) } else #then we have to ask the user for it { #hasn't got this set for this login $Credentials = get-credential -Credential $SourceLogin $Credentials.Password | ConvertFrom-SecureString | Set-Content "$env:USERPROFILE\$SourceLogin-$SourceServerName.txt" } $conn.LoginSecure = $false; $conn.Login = $Credentials.UserName; $conn.SecurePassword = $Credentials.Password; $db.Connection = ` New-DatabaseConnection -ServerInstance $db.ServerInstance -Database $db.Database ` -Username $conn.Login -Password $conn.Password ` -ErrorAction silentlycontinue -ErrorVariable +Errors; } $db.ServerConnection = $conn; #this sets our server connection for the database } } <# We Kill any existing versions of the databases and create the new blank databases. Be very careful not to kill our Datasource! Normally, you'd back up existing versions of databases just in case #> if ($errors.Count -eq 0) { $config.Databases.GetEnumerator() | where Name -inotin ('DataSource') | foreach{ $Db = $_.Value $DestinationServerObject = new-object ("$My.Server") $db.ServerConnection if ($DestinationServerObject.Version -eq $null) { $errors += "Could not connect to the server $($db.ServerInstance) with the credentials" } else { #if the database already exists, then kill it If (@($DestinationServerObject.Databases | % { $_.Name }) -contains $db.Database) { $DestinationServerObject.KillDatabase($db.Database) } $DestinationDatabaseObject = ` New-Object ("$My.Database") ($DestinationServerObject, $db.Database) $DestinationDatabaseObject.Create() if ($DestinationDatabaseObject.name -ne $db.Database) { $Errors += "Can't create the database '$($db.Database)' in '$($db.ServerInstance)" }; if ($errors.Count>0) {break;} $syncResult = Sync-DatabaseSchema -Source $db.Source -Target $db.Connection ` -AbortOnWarningLevel None -SQLCompareOptions $db.SQLCompareOptions ` -ErrorAction silentlycontinue -ErrorVariable +Errors 3>>$TheLogFile $procedure = @' CREATE OR ALTER PROCEDURE #SaveJsonValueToTable (@Table sysname, @Database sysname, @TheJSONData NVARCHAR(MAX)) AS DECLARE @SelectStatement NVARCHAR(200)=(SELECT 'Select * from '+@database+'.'+@Table) Declare @parameters nvarchar(max) DECLARE @hasIdentityColumn INT DECLARE @columnlist NVARCHAR(4000) SELECT @parameters=String_Agg(QuoteName(name)+' '+ CASE f.system_type_name WHEN 'hierarchyid' THEN 'nvarchar(30)' WHEN 'geometry'THEN 'nvarchar(100)' WHEN 'geography' THEN 'nvarchar(100)' WHEN 'image' THEN 'Varbinary(max)' WHEN 'text' THEN 'Varchar(max)' WHEN 'ntext' THEN 'Nvarchar(max)' ELSE f.system_type_name end+ ' ''$."'+name+'"''',', '), @hasIdentityColumn =MAX(Convert(INT,is_identity_column)), @columnlist=String_Agg(name,', ') from sys.dm_exec_describe_first_result_set (@SelectStatement, NULL, 1) f IF @parameters IS NULL RAISERROR('cannot execute %s',16,1,@selectStatement) Declare @command nvarchar(max)=(SELECT ' use '+@database+' Delete from '+@table+ CASE WHEN @hasIdentityColumn>0 THEN ' SET IDENTITY_INSERT '+@table+' ON ' ELSE '' END+' INSERT INTO '+@table+' ('+@columnList+') SELECT '+@columnList+' FROM OpenJson(@jsonData) WITH ( '+@parameters+' ); '+ CASE WHEN @hasIdentityColumn>0 THEN ' SET IDENTITY_INSERT '+@table+' OFF ' ELSE '' END) EXECUTE sp_executeSQL @command, N'@jsonData nvarchar(max) output', @jsonData = @ThejsonData; '@ $SqlConnection = New-Object System.Data.SqlClient.SqlConnection $SqlConnection.ConnectionString = ` $db.Connection.ConnectionString.UnmaskedValue + ';MultipleActiveResultSets=True;' $SqlConnection.Open() $sqlCommand = $sqlConnection.CreateCommand() $sqlCommand.CommandText = $procedure $null=($sqlCommand.ExecuteReader()).Close $sqlCommand = $sqlConnection.CreateCommand() $sqlCommand.CommandText = "EXEC sp_msforeachtable 'ALTER TABLE ? NOCHECK CONSTRAINT all'" $null=($sqlCommand.ExecuteReader()).Close $DestinationDatabaseObject.Tables | foreach { $filename = "$($_.schema)-$($_.Name)" -replace '[\\\/\:\.]', '-' $Tablename = "[$($_.schema)].[$($_.Name)]" $JSONdata = [IO.File]::ReadAllText( ` #get the JSON data file "$($Db.datasource)\JSONdata\$($filename).json") #execute this script, using the existing connection $SqlCmd = $sqlConnection.CreateCommand() $SqlCMD.CommandType = [System.Data.CommandType]::StoredProcedure $SqlCMD.CommandTimeout = 300 $SqlCMD.CommandText = '#SaveJsonValueToTable' [Void]$SqlCMD.Parameters.AddWithValue('Table', $Tablename) [Void]$SqlCMD.Parameters.AddWithValue('Database', $db.Database) [Void]$SqlCMD.Parameters.AddWithValue('TheJSONData', $JSONdata) $SqlCmd.ExecuteNonQuery() } $sqlCommand = $sqlConnection.CreateCommand() $sqlCommand.CommandText = ` "EXEC sp_msforeachtable 'ALTER TABLE ? WITH CHECK CHECK CONSTRAINT all'" $null=($sqlCommand.ExecuteReader()).Close $SqlConnection.Close() } } } # try to keep the error handling in one place All errors should fall # through to here if ($Errors.count -gt 0) #if we couldn't import something { $Errors | foreach{ write-warning "The build gave an error '$($_)'" "$((Get-Date).ToString()) - '$($_)' ">>$TheLogFile; } } |

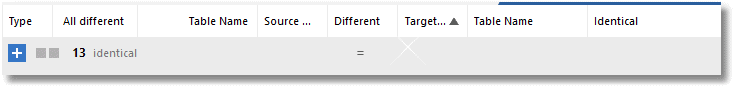

And after it runs we can easily check that all is OK with SQL Data Compare!

Conclusions

Storing your data for development work as JSON confers several advantages. You can exchange data with any application or service that can consume JSON data, you can edit the data easily in a JSON editor, use lightning fast data masking that doesn’t leave a trace in the database, and you can very easily validate the data before you insert it. These validation checks include some that can’t be done in SQL Server, such as RegEx checks. JSON can store tabular data in several formats, some of which are almost as economical as CSV. I haven’t noticed data import to be any slower than CSV.

JSON seems to have suddenly grown up.