What is SQL Change Automation?

This article describes the basic principles of SQL Change Automation, and how it works to provide a consistent and repeatable way of automating as much as possible of the database build, test, and deployment processes.

It is easy to deliver a small SQL Server test database without extra software tools, but once you edge into the real world you will soon need a consistent and repeatable way of automating as much as possible of the build, test, and deployment processes.

SQL Change Automation (SCA) is a software framework that uses PowerShell to help you automate the way you build, test, and deploy SQL Server databases. It is designed to fit, and co-exist with, the application’s existing development toolchain, source control, build, integration, tracking, and reporting systems. The build and release components come with add-ins that allows SCA to work with all major build, continuous integration and release management servers. SCA will adapt to whatever methodology you choose. It doesn’t insist on a particular delivery process. For example, it allows developers to store in version control either a set of migration scripts, to describe the sequence of changes required to move the database from one version to the next, or to store just the latest CREATE script for each database object. From either starting point, SCA aims for a design that allows as rapid as possible a delivery of database changes to testing and pre-production environments. It also permits as much as possible of the process to inspected, including the generated scripts, warnings, test results and changes.

Gathering all the ‘deployment bits’ into a NuGet package

SCA keeps all the components of the build together as files within a zipped directory called a NuGet package. It stores the schema and static data, but also extra metadata that describes the contents of the package, and the project reference of the project to which it belongs. A tool such as Octopus Deploy or PowerShell Remoting can deploy the package across a network, to update any target database so that it matches the version described by in the package.

Unlike Microsoft’s DacPac/BacPac, also a zipped directory, the more familiar NuGet package is an open standard that follows the Open Packaging Conventions. It is already used for packaging libraries to be used in Visual Studio. Not only does this mean that the components of the database are packaged together for archiving and publishing, but also that the metadata, such as version number, can be read by a range of tools commonly used by developers. This allows the database delivery to be more closely integrated with the application development cycle, if this proves to be necessary.

For a SQL Server database of any size, a database release will involve many components, on top of the SQL DDL code and some static data, and changing the version of the database will mean a change to some of these other components as well. There is likely to be external files, SSIS components, .NET libraries, PowerShell scripts, R and python scripts and modules, and a host of other components that are essential for the working database. To deploy the bits that SCA doesn’t handle, you can add PowerShell scripts to the ‘tools’ directory of the NuGet package, which will be called during the first-time installation, update or removal process of the package. The same NuGet package can then be used by other tools in the deployment toolchain for those more specialized database components.

Working with existing build and integration systems

SCA takes two approaches to working with the other tools selected by the delivery team. At its simplest, it can be used as a standalone set of PowerShell Cmdlets from the command-line interface (CLI).

Alternatively, it provides a range of add-ins to extend some of the more common build, continuous integration and deployment tools. For example, there is TeamCity plugin, a Visual Studio Team Services (VSTS) Build extension and a VSTS Release extension. The VSTS extensions also work with Team Foundation Server (TFS). There is also a Bamboo add-in, a Jenkins plugin, and Octopus Deploy step templates, and a TFS plugin.

Database source control

The most essential service to any database build and deployment process is to provide a means of creating each database object in the correct dependency order. A team-based database development is likely to decide to store database objects such as tables, views, triggers and routines separately. If, for example, a table references another via a foreign key constraint, both tables must exist before the constraint can be added, yet it is perfectly legitimate for a foreign key constraint to be defined within the table build script. In any database, there are likely to be a host of dependencies that will cause a build to fail if done in the wrong order. SCA figures out the correct dependency order for a successful build.

You can, if you wish, create an SCA project within Visual Studio by import an existing database, and using SCA to generate in source control the scripts to build a new version of the database. For database migrations, it will generate the set of change scripts describing how to alter the metadata of the database, as defined by its constituent DDL creation scripts, from one database version to another, whilst preserving the data held within it.

Alternatively, SCA will build a new database from the latest versions of the CREATE scripts for each object, generated by linking a database to a source control repository using SQL Source Control, and then committing any subsequent changes using this tool. It can also use them to upgrade a database to a newer version, assuming you also provide any additional change scripts required to describe the correct migration route for any changes that affect existing data.

Beyond this, SCA can use any source control methodology, even with just a single build script, as long as the directory structure is one SQL Compare can work with.

From any of these starting points, the processes to automate database builds, migrations and deployments, with SCA, is very similar, and can use the same toolset.

Automating builds from source control with SCA

The Build components of SCA take as its input your database, as it’s represented in source control. There are separate processes or phases that can:

- Build document and validate the database

- Export and review the source code

- Test the code by running unit, integration and regression tests, as well as generate the test data

- Publish the database build artifact to a NuGet package, which can be used by your release management system to create a new database version, from an empty database, or to update previously installed versions of the database.

In the average development, this will be a regular, possibly daily, activity. It is worth setting up SCA early in the project. It might take a little time and patience, initially, to get it running in a PowerShell script, but it will run from then on with little effort. The benefit of automating these processes will soon kick in.

SCA deals only with the database itself. Once it has created the NuGet package, it can also be used to contain the resources and scripts to install additional database components such as SSIS projects, Agent tasks, replication, or R modules. These must be added to the NuGet package once the NuGet package is built, along with their respective PowerShell post-installation scripts.

Build

In this phase of the overall process, SCA verifies that it can build the database from the build script, migration script or object source. It runs pre-build validation syntax checks, on various components, to catch cases where someone has checked-in SQL source code that will cause an error at build. To run these checks, it creates a temporary version of the database, either purely from the SQL DDL code, or by executing the migration scripts and then building the various routines (procedures, functions, triggers, and so on).

If it is building from an object-level source in the directory structure, such as produced by SQL Source Control or SQL Compare, it works out the correct order for the building of the entire database. SCA can also additionally perform the following activities:

- Add Documentation – it is best to document your database objects within the SQL DDL source code. These individual parts of the documentation can be gathered into a document within the package that can then be used to inspect the source and check changes to the functionality. It also allows the governance processes such as security and compliance to make their checks directly on the source code, without having to repeatedly refer to separate documentation. It keeps the documentation with the database version to which it applies.

- Check for SQL Code smells and likely issues – numerous checks can be made, automatically, to ensure that the code complies with current policies. All issues are reported. These checks can be configured to ensure that they align with the requirements of the organization

- Run unit tests – SCA can rerun tSQLt tests at build time, either directly on the database, or on a database schema taken from a scripts folder, a NuGet package. With SCA you can specify a specific test to run, an entire test suite, or all the tests to run. It provides a standard report on the results, which can be seen from your continuous integration system, and it can also export it to a JUnit or MSTest file.

Export and Review

At various stages of the process, the team, or others, will need to review the the script that will build a database to the new version, or to update a release database to the build version, and other resources, such as the change report and deployment warnings. They may need to send them on for external checks, such as a security sign-off. SCA can export a build artifact, writing it to a directory or ZIP file.

It can include the following:

- The SQL update script containing all the changes that will be made

- A list of the database objects that will change as a result of running the script

- SQL code differences for each object before and after the update.

- Changes to static data, showing warnings of any potential data loss and the SQL update script that will be run.

- A list of any additional warnings related to running the update script – a full list is available at https://documentation.red-gate.com/sc13/deploying-data-sources/deployment-warnings/full-list-of-deployment-warnings .

- a representation of the state of each database before running the update, and the desired state after the update.

- Metadata about the database deployment resources and the SQL Compare options used in comparisons.

- Any SQL Compare filters that have been used when creating the database deployment resources

These review stages are essential in a large development project, with involvement from a range of expertise outside the development team. It allows governance to view changes directly and, with this review stored correctly in a repository, to track back to where changes were made.

Test

SCA can maintain a test cell to run unit, tests and integration and regression tests. It can update a test database to the build version, via synchronization. As described earlier, it can run tSQLt unit tests directly. Having verified the build, you’ll then want to fill the database with realistic test data and run various end-to-end database processes, checking that the output is as expected, each time.

With SCA you can:

- Run a SQL Data Generator project to fill a database with mock data – the newly-built database can be filled with mock data from a SQL Data Generator project, in preparation for integration tests

- Assess code coverage – SCA can work with any commonly-used test framework, such as tSQLt, NUnit or Selenium. It uses SQL Cover to exercise the SQL procedures and functions in order to generate an industry-standard code coverage report and set a minimum acceptable code coverage threshold. You can specify a baseline threshold of code coverage below which the build will fail.

More complex integration tests must be done via ad-hoc PowerShell scripting outside SCA, or using a test framework.

Publish

This final step of the “build” phase publishes a database package to a NuGet feed. It is used to create the NuGet package for the database and all its assets. SCA wraps up everything required for the deployment in this NuGet package, with some additional metadata. The NuGet Package can be used either to create a new database at this build version, or update an existing database to the build version, which is encapsulated in the package.

SCA provides the means of creating or over-writing a package, or to add, remove and alter part of the contents of any NuGet release package. This means that you can, for example, have the deployment script checked and amended by a DBA. The publishing process:

- Provides a synchronization or build script for a target database – exporting database deployment resources lets you review the update script and other update resources, such as the change report and deployment warnings.

- Deploys static data contained in a NuGet package, scripts folder or zip file – if a database version is built from an empty database (SCA does not create a database), it is likely to require the loading of reference data. This is best done from the package, if there are likely to be changes over time. It can also be done from a file directory, even a zipped one.

- ‘Versions’ the target database – if the target database, when checked after the synchronization process, proves to be identical to the version contained in the NuGet Package, or live database if preferred, SCA updates the version number held within the database so that all other processes can be certain of the version of any copy of the database, without requiring a comparison by SCA

Synchronizing the target database using the NuGet database package

Once the new database version is published, the NuGet package can be used to update an empty database to the build version, or to update an existing version of a database to the same level, in terms of the metadata (table structures, views and routines), while leaving the data in place. This process can be as simple or fine-grained as required, and it is possible to include or exclude database objects, as required.

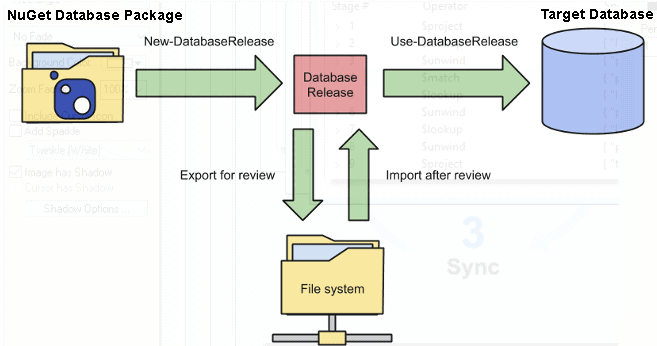

Figure 1

SCA can integrate directly with a release management tool such as Octopus deploy to automate deployment of database schema changes, directly to the development and test servers, as well as to pre-production database servers, such as QA and Staging.

At any point in the deployment pipeline, SCA can, if necessary, respond to workflow requirements that involve supervision or sign-off. It is even possible to produce synchronization scripts and reports for inspection, editing and review as part of the process. Any changes to the synchronization script can be imported into the package for deployment.

If a database is synchronized, it is purely one-way, so that any database schema changes that were not saved in source control before the start of the build process, and therefore not in the NuGet package, will be overwritten by this process and therefore lost from any development machine that is upgraded.

NuGet packages are designed to contain PowerShell scripts that control the way the package is installed and they can include pre-installation and post-installation PowerShell scripts, as well as extra assets, such as more complex database components like SSIS projects, R or Python modules, or the configuration of replication. Any extra asset can be added to the package at build time, but the task of initially creating the PowerShell scripts is outside the scope of SCA, and is done after the NuGet package is published.

SCA and SQL Clone for provisioning multiple test and development servers

Often, you’ll need to build or update several databases, using the newly built version of the database, as represented by the NuGet package, whether it be for development, or for various types of testing. SCA is designed to deal with the whole spectrum of database releases, but there are times where SQL Clone can help to speed the provisioning process for development or test servers.

Fortunately, SCA and SQL Clone can work together and both can be PowerShell-driven. SCA’s Build process is still essential. It takes the code from source control, validates that it can be compiled, checks it for style, unit-test coverage and policy-conformance, and finally builds a database from it that includes any ‘static’ data that is required for the database to run.

Testing to the max

In the test process, a large database will need to include integration tests, where an entire process is run from a known data set, and the results verified against a confirmed and agreed version. There will be a range of other testing, depending largely on context, to check for such things as scalability, resilience, user-interface and data feeds.

To do this quickly will usually require tests to be run in parallel, because they can take a considerable time to run one after another. Whatever hardware and virtualization is used, several identical versions of the new build must be updated to the current version, ready for the tests. Each database can then be stocked with test data, either from the package, or from SQL Data Generator, or from an external link.

However, if you’re when dealing with large volumes of test data, and many test databases, then SQL Clone is faster and takes fewer resources. Once you’ve SCA to produce one stocked database, at the correct version, you can use SQL Clone to create an image of it, and then deploy clones to each of the test servers.

See How to Automatically Create and Refresh Development and Test Databases using SQL Clone and SQL Toolbelt for the details of the workflow using Clone and SQL Compare

Keeping the developers up-to-date

Similarly, when working with most commercial database applications, developers will also need anonymized data that conforms closely in style, distribution and characteristics, with real data, and it pays to start with a common version of the data, as well as the metadata. However, this data takes time to develop and prepare, and it is likely to change as the database application progresses.

You can use SCA to create the build, and then produce a migration script to upgrade the previous build to the new version. This is then rolled out to each developer for their use, perhaps with a shared server as well. SCA can load anonymized data from file into a database or ensure that the data is the same as the development dataset using synchronization. However, at a certain scale, SQL Clone will be faster and take fewer network resources.

SCA can first ensure that the metadata and data in the database is at the right version. Then it can pass this to SQL Clone, which can create an image from that before deploying clones to each development server. Where build time is critical, it is therefore better to integrate SQL Clone into the provisioning.

Conclusions

The database delivery process needs to be:

- Resilient – by keeping changes in a single package, and by doing initial checks on the source code, the automated build process is less likely to be stopped due to an irrecoverable problem.

- Secure – the build source stays with the database version, so that changes can be reliably audited to be sure when a change is made. The deployment ‘artifact’, with all the assets, need be stored in just one network location. As the process is automated, it is easier to provide logging, and permissions can be more easily limited, with fewer ‘god-like’ logins required. With adequate reporting of changes, only a limited amount of source code needs to be widely disseminated.

- Repeatable – by allowing the whole process to be scripted, and having a single ‘source of truth’, there is less opportunity for ‘finger trouble’.

- Quick – SCA is flexible in the way that it can handle deployments, depending on the size of the database, and can use synchronization to change a database from one state to another via a migration script. With the addition of SQL Clone, the process can provision large test and development databases even faster.

- Visible – via reports and logging, it is far easier for team members to keep up-to-date with changes in the database.

SCA will adapt to your preferred delivery process, and is designed with automation in mind. It positively encourages integration with other development tools, wherever possible. It is not the best choice for the dilettante, or novice developer, but is aimed at teams that are committed to professional quality, close teamwork, and rapid deployment, within a large organization or enterprise.