Realistic, simulated data for testing, development and prototypes

Generating realistic test data is a challenging task, made even more complex if you need to generate that data in different formats, for the different database technologies in use within your organization. Dave Poole proposes a solution that uses SQL Data Generator as a ‘data generation and translation’ tool.

Generating realistic test data, which reflects accurately the nature and distribution of the data it is emulating, is a challenging task. The task is made more complex if you need to generate that data in different formats, for the different database technologies in use within your organization.

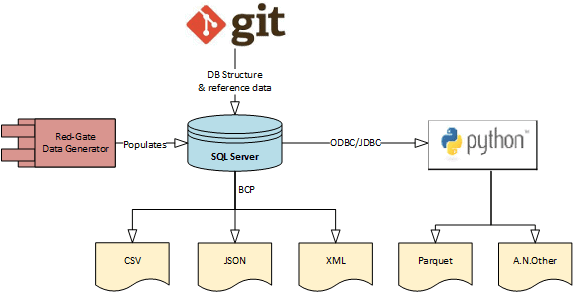

This article proposes a scheme for using Redgate SQL Data Generator (SDG) as a ‘data generation and translation’ tool. We generate the data using a free copy of SQL Server Express, and then use standard bulk copy mechanisms to translate it to various formats, then distribute it out to the different data technologies and platforms that require a copy of the data. The tool becomes a mechanism to support ‘polyglot persistence’.

SDG comes with a useful set of ‘pre-canned’ generators of various types, which will get you started, but which you’ll doubtless need to customize for your own needs. We can customize these generators within SDG GUI, but then they are ‘tied’ to one SDG project.

What we need instead, to make SDG a more useful team resource, is a specific set of standalone generators, which will work with any SDG project, and which generate realistic data, as well as reflecting your language and naming conventions.

Uses for a data generator

In my case, there are three main drivers for the use of a data generator:

- Privacy and personal data regulation, explicitly GDPR

- Demonstrating the expected behavior of an application, in the context most relevant to a user, during user acceptance testing

- Performance and load testing across different data technologies

In addition, the data generation technique had to make it easy to distribute the generated data, in a variety of formats, across the different platforms and data storage technologies that comprise our estate.

Privacy and personal data regulation

In EU states and the United Kingdom GDPR comes into full force on May 25th, 2018. Under GDPR, we must know who is consuming which data and for what purposes, and whether they have permission to do so. We must also take all appropriate measure to protect any personal and sensitive data that the organization stores or processes.

Under GDPR, Shadow IT (i.e. systems and practices that contravene these policies) presents two quantifiable threats:

- Data breaches = €20million or 4% of organization turnover, whichever is highest.

- Failure to implement administrative processes to protect personal data privacy = €10million or 2% of organization turnover.

These levels of fines, in addition to the publicity surrounding such breaches and the ensuing reputational damage, means that the risk posed by copying a live database for testing and development purposes becomes unacceptable to business stakeholders.

The risk is mitigated by the capability of generating realistic test data in other environments without the need for copying the data from a live database.

Demonstrating system behavior to the user

The more realistic the data the easier it is for your stakeholder to decide whether what you are delivering meets their needs. Not everyone can grasp an example that uses abstract or unrealistic data. I have witnessed a personalization project descend into farce, because a combination of ‘made up’ product attributes resulted in what was misconstrued as an application quality problem.

Performance testing

Hardware, the number of concurrent users, and user behavior all have a strong influence on performance metrics, but so too do data volumes and the distribution of values within that data.

Test data that is realistic, in this regard, should produce more trustworthy results. How a realistic distribution of values can be achieved will be revealed later in the article.

Distributing data across platforms

I first came across the term polyglot persistence in an article by Martin Fowler. It describes the use of different database technologies, depending on what is appropriate to the problem being solved. In my case, we had to distribute generated data in a variety of forms, as required by each of the data storage and processing technologies that we supported. Also, much of our estate has migrated to Linux and we have reached the tipping point where there is a reluctance to invest in technology that runs solely on Windows.

On the face of it, SQL Data Generator (SDG) is a specialist tool for filling SQL Server databases with ‘simulated’ data. However, given that SQL Server 2017 is available on Linux, and with a little ingenuity, it did not preclude it as a possible solution. We could use SQL Server Express database to generate the data, and then translate it to a variety of different formats, as appropriate for the technology used in the target system.

Generate data in SQL Server Express, export using BCP

The ability to carry out an unattended install of SQL Server means that we can propose SQL Server Express as a disposable component in a data generation solution. The database only need exist for the purposes of data generation. Using SQL Server Express, we can generate up to 10 GB of data and then bulk-export it in the required file format, via BCP. Where we require more than 10GB of data, with careful design we can build a solution that operates in batches.

We can build the SQL Server Express database from version control. A good idea learned from Red-Gate Source Control is to keep reference data in the repository as well as the database structure. A key point here is that we’re using data structures that represent the data storage needs of the target system (such as a MongoDB collection). We’re not just “importing relational tables into MongoDB“.

As long as we can represent our desired data structure in its equivalent form within SQL Server, then we can use the BCP utility to ‘translate’ the generated data into CSV, JSON or XML files, which we can then import into the technology of choice.

Figure 1: Generate data in SQL Server Express then export in a variety of formats

Most database systems have a bulk import facility for CSV data. In the NOSQL world, for example, MongoDB, Couchbase and ElasticSearch all support bulk import from both CSV and JSON.

We may need more exotic file formats such as Parquet files to test an Apache Spark process built to interrogate Parquet files. In this case these could be generated by using Python with Pandas data frames and PyArrow to generate the required Parquet files. Another example of an exotic file format is TOPAS (Tour Operator Product Availability Standard). Python makes it easy to assemble almost any bespoke format required.

The scheme described in Figure 1 works well for generating smaller data sets, for larger, or more ‘specialist’, data sets, it sometimes makes sense to be able to import at least some parts of the real ‘production’ data.

Using data from production systems

While we generate data from scratch, where possible, there are some valid cases for transferring production data to non-production systems. For example:

- Large product catalogues

- HTTP user agents lists from real web site visitors

- Enriched datasets based on postal code

The source ‘production’ database could be a relational database, but equally a NoSQL data store, and therefore the data can arrive from the production source in a variety of formats.

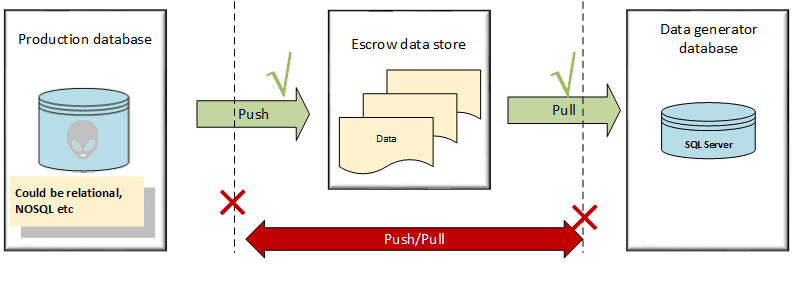

On principle, we cannot allow any non-production system to connect directly to any production system. In our case, we use an ‘escrow data facility’, analogous to SQL Server’s publisher/distributor/subscriber model.

Figure 2: The Escrow data facility as ‘intermediary’ between the production database, and the data generator database

As you can see, our production system is not allowed to talk directly to our data generator; it can only push data to the escrow data store. Our data generator cannot request data from the production system; it can only pull from the escrow data store.

As noted, data in the escrow store will be in a variety of formats, including CSV, JSON or XML, depending on the originating system. Ideally, we want a format that can be imported into SQL Server using BCP or BULK INSERT.

Our escrow store is strictly segregated and is a secure source of data. It cannot push data out and it is careful about what systems it allows to push data to it. Similarly, it maintains tight control over which systems can pull data from it and where. This level of control becomes extremely important under GDPR, as discussed above.

Generating realistic data using SDG

Over the following sections, I’ll describe how I used SDG, with various bespoke data configuration techniques, to generate realistic data for our ‘polyglot’ systems.

I will not attempt to reproduce the Redgate SDG documentation, nor or the material on this tool’s Product Learning pages. Instead, I’ll show how the tool can be tweaked and stretched for your needs.

I think of the application, as installed, as giving us four things:

- A framework

- A user interface

- Useful base data and configuration, in the form of built-in generators

- Command line support

When generating data for commercial databases, the built-in generators will only get you so far. However, they do illustrate the range of capabilities of the package and the techniques that can be employed within its user interface, and they also serve as useful examples that you can adapt, to build generators that are appropriate for your industry, or nationality, or based on your naming conventions.

To do this, we need to look at the way in which the pre-canned data generators work, and then understand how to adapt their behavior, by customizing the associated configuration file or data source. You can do this interactively, within the GUI, and once you have the desired behavior, you can then create a bespoke, standalone generator, which will work with any SDG project, and publish its XML configuration file in version control, for sharing within your organization.

What do we mean by realistic data?

I noted previously that, to be useful for performance and load testing as well as for demonstrating system behavior to prospective users, the data we generate has to be ‘realistic’. Before we start generating this data, let’s clarify what that really means. Realistic data has four main characteristics:

- Believable values – first name attributes should be representative as such; a date of birth should be within expected bounds; we should not see Methuselah’s mum in a list of customers

- Believable value spread – for example, we would not expect an even spread of single, married, divorced people in the MaritalStatus column of our data set. In the UK population the figures are 25%, 49% and 17%.

- Believable entities – the values for a record representing an entity must make sense. If a person has a salutation of “Dr”, then a date of birth less than 20 years ago would be questionable.

- Believable referential relationships – a customer would have one or more orders; an order may have one or more line-items.

Most programming languages have a library for producing mock data values. This is commonly referred to as a “Faker” library but these will only satisfy our first characteristic of realistic data. Redgate’s SQL Data Generator can satisfy them all.

Preparing your database for use with SDG

Proper planning prevents poor performance.

Calculate the size of the data you wish to generate and pre-size your database and log files to allow for that volume of data.

Data generation can be quite intensive so I prefer to put the database in bulk recovery mode.

|

1 |

ALTER DATABASE MyDatabase SET RECOVERY BULK_LOGGED; |

Listing 1

The data model within our SQL Server 2017 instance needs to minimize the amount of work the Data Generator has to do. Certainly, if possible, you will want to:

- Denormalize any one-to-zero-or-one relationships.

- Index to support foreign keys

- Avoid clustered indexes, if you can.

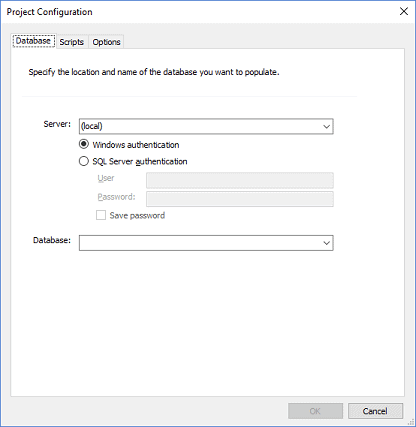

Connecting SDG to your database

When you start the SDG application, you will be faced with a simple Project Configuration dialogue, shown in Figure 3.

Figure 3: Configuring the SDG project

When building your SDG project for the first time you may decide to ignore the Scripts and Options tab. However, these become useful after you are satisfied that your initial data previews are giving you the results you need.

The Scripts tab allows you to specify several scripts, and the order in which they run, as pre/post data generation steps.

The Options tab allows you to specify the following optimizations:

- Whether you wish to enable any triggers and check constraints

- Whether you wish to generate data in batches, and if so how big the default batch size should be. Alternatively, whether you wish to generate data in a single transaction

- Whether you wish to auto-generate previews of the data you wish to create

After entering the SQL Server instance and choosing your database the application will scan your schema and make its best guess as to which of its pre-canned generators best fits your schema.

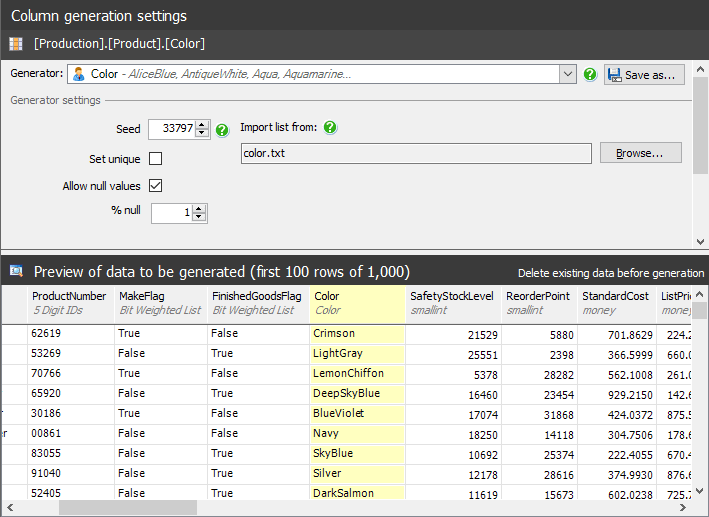

Pre-canned data generators

Through the SDG user interface, we can see that there are 70+ different pre-canned data generators. Where possible, the application will try and match a database field to one of those generators. The example in Figure 4 illustrates the use of the Color generator.

Figure 4: The built-in SDG Color generator

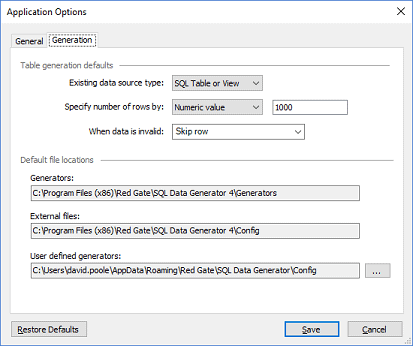

We can see where the pre-canned generators are installed by selecting the Tools | Application Options menu, which will display the dialogue shown in Figure 5.

Figure 5: The location of the DLLs and configuration files for the built-in generators

The Generators folder contains the DLLs that carry out the data generation

Of greater interest, at this stage, is the External files folder, which contains the data and configuration that define how the Generators are used. It is here that we can see the 70+ XML files that define how the different pre-canned generators work.

Returning to our Color generator, if we look at the files in C:\Program Files (x86)\Red Gate\SQL Data Generator 4\Config\, we can see a pair of files called Color.

- Color.txt contains the possible colors

- Color.xml contains the rules that cause the generator to be used.

Listing 2 shows the XML configuration file.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 |

<?xml version="1.0" encoding="utf-8"?> <generators> <generator type="RedGate.SQLDataGenerator.ExpressionGenerators.FileListGenerator" name="Color" description="AliceBlue, AntiqueWhite, Aqua, Aquamarine..." category="Personal"> <property name="FileName">color.txt</property> <type type="string"/> <matches field="^Color$" score="50" minlen="15"/> <matches field="Color" score="30" minlen="15"/> </generator> </generators> |

Listing 2

The rules that are revealed for the “Color” generator are as follows.

- Uses a FileListGenerator to pick up its values from a file called color.txt.

- Lists the generator under the Personal category

- Expects a string (

varchar,nvarchar,char,nchar) - Expects the fields against which it matches to be at least 15 characters long

- Looks for field names in the database that match, or are similar to, ‘Color’ and apply a score to express the likelihood that the column stores colors:

- If it can find a field called Color (

^means start of a string and$means the end), then it will give that a score of 50 - If it finds a field name containing the word Color then it will give it a score of 30

- If it can find a field called Color (

Suppose that we had a separate generator which generates RGB color patterns. To make sure that this generator gets chosen in preference to the standard color generator, we would have to ensure that a match against a field called RGB_color would result in a score greater than 30.

Of course, in the UK we spell colour with a “u”, so this generator would not work against schemas that use UK spelling. However, it is very easy to change the regex matching expressions to use colou?r, which will work with both UK and US spellings.

We can edit both the text file containing the values, and the XML file containing the behavior. We must restart the application for such changes to take effect.

The mechanisms that are available to generate values are shown in the table below

| Generator | Comment |

| Credit Card number | Ensures that a pseudo credit card number is generated that will pass the checksum test for a credit card. |

| CSV | Choose a value as imported from a CSV file |

| Default values | Does not generate a value. It accepts the default value for the columns |

| File list | Similar to the CSV |

| Foreign key | References a value from another table |

| Python Script | Iron Python data generator. These can be used to perform complex data generation logic. Iron means “It Runs On .Net” and is compatible with Python 2.7 |

| RegEx Generator | A valid RegEx will cause values matching the RegEx pattern to be generated. |

| Simple Expression | Iron Python expression. These are simple enough that it might not be apparent to the user that they are actually writing Python. |

| SQL Statement | Allows data values to be chosen from a SQL Server database. This is why the abilty to preload our SQL Server 2017 database with reference data is an important capability. |

| SQL Type | Matches a default generator to the field type |

| Text Shuffler | Will choose words at random from the supplied text. |

| Weighted List | These allow us to assign values and probabilities to those values. For example, we could make one gender more prevalent to another |

These mechanisms are used for several genuinely useful pre-canned generators. These pre-canned generators provide an excellent illustration of how to build your own.

Building a bespoke generator for UK driving offences

Let us suppose that we needed a Data Generator to produce UK Driving Offence codes. A quick look on Wikipedia reveals that these are four character codes, with a limited list of two character prefixes and 2 digit suffixes. There are several possible ways to create this bespoke generator.

Using a RegEx Generator

I decide that a RegEx generator is a good fit for my use case. For the sake of the example, I will use a simplified RegEx, so some codes will be invalid.

I create a file called C:\Program Files (x86)\Red Gate\SQL Data Generator 4\Config\ UKDrive.xml, as shown in Listing 3.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 |

<?xml version="1.0" encoding="iso-8859-1"?> <generators> <generator type="RedGate.SQLDataGenerator.ExpressionGenerators.Regexp.RegexpGenerator" name="UK Driving Offences" description="AC10, CD20, DD40, " category="Motoring"> <property name="Expression">(AC|BA|CD|CU|DD|DR|IN|LC|MS|MW|PC|SP|TS|TT|UT|MR)[1-9]0</property> <matches field=".*Offence.*Code.*" score="90" minlen="4"/> <type type="string"/> </generator> </generators> |

Listing 3

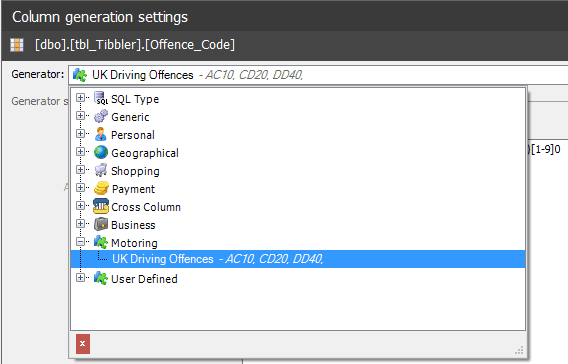

After restarting SDG, I find that my generator will assign to any field with the word “Offence” followed by the word “Code”. I also find that my UK Driving Offences generator is available from the drop-down box in the application user interface.

Figure 6: The custom UK Driving Offences generator

Using a File List Generator

Alternatively, I may decide to store the actual driving offence codes in a file called UKDrive.txt. This would mean that I would change my UKDrive.XML file as follows.

|

1 2 |

<generator type="RedGate.SQLDataGenerator.ExpressionGenerators.Regexp.RegexpGenerator" |

should be changed to:

|

1 2 |

<generator type="RedGate.SQLDataGenerator.ExpressionGenerators.FileListGenerator" |

And:

|

1 |

<property name="Expression">(AC|BA|CD|CU|DD|DR|IN|LC|MS|MW|PC|SP|TS|TT|UT|MR)[1-9]0</property> |

Should be changed to:

|

1 |

<property name="FileName">UKDrive.txt</property> |

Restarting SDG, and our bespoke generator is now working as a random selection from a file.

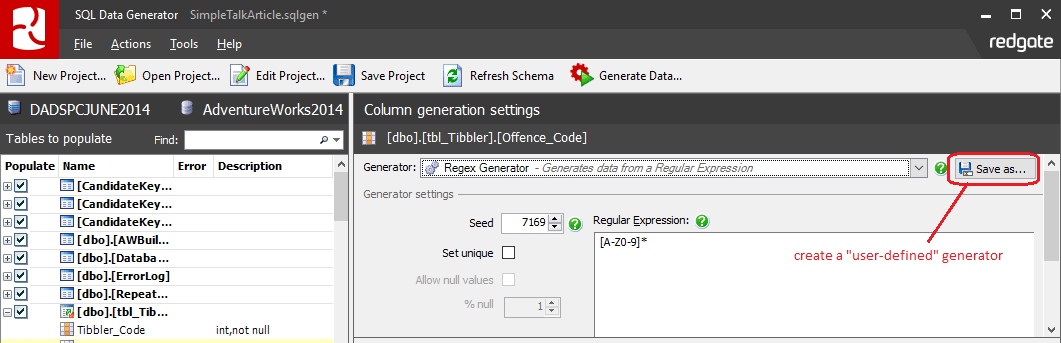

Customizing a pre-canned generator

There is a third way in which I could have set up the data generation; I could have chosen the appropriate “Generic” data generator, adjusted it as required, and clicked the Save as button, as shown in Figure 7.

Figure 7: Customizing and renaming a pre-canned generator

I chose not to do this because my colleagues may need the definition of a driving offence generator for a different project. Any generators built through the GUI are embedded in the specific SDG project file. A project file will only work if the team use a shared database. By defining the generator as a file, I can put the generator configuration and data into source control, to be shared by my team, and for use on any database requiring that generator.

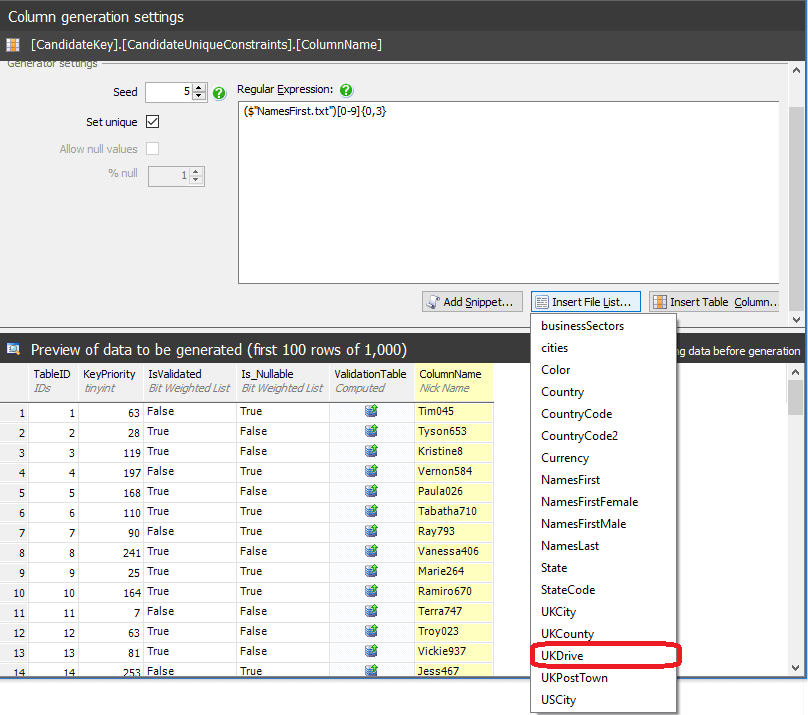

Using the File List Generator with regular expressions

The file based approach has another advantage in that file contents can be used within Regular Expression generators. In Figure 8, we can see that our UKDrive file now shows up in the dropdown list to allow us to use file contents in conjunction with regular expressions.

- ($”UKDrive.txt”) means choose a random value from the UKDrive.txt file

- $[IsValidated] means incorporate the value of the

IsValidatedcolumn.

Figure 8: Using file-based generators with regular expressions

Modelling data distributions using a bespoke weighted list

Through its support for weighted lists, SDG allows us to model the realistic distribution of values. Taking MaritalStatus as an example, we may be able to run a simple query against a data warehouse as follows.

|

1 2 3 |

SELECT MaritalStatus,COUNT(*) AS RecordCount FROM Customer.ContactDetails GROUP BY MaritalStatus |

Listing 4

From the results of the query, we can assemble information as shown in the following table:

| From Query | Supplementary Information | ||

|---|---|---|---|

| MaritalStatus | RecordCount | Description | % |

| D | 9,100 | Divorced/Dissolved | 9 |

| M | 47,000 | Married/Civil Partnership | 47 |

| P | 2,700 | Separated | 3 |

| S | 34,000 | Single | 34 |

| W | 6,600 | Widowed | 7 |

| U | 600 | Unknown | 1 |

What is the best way to implement this distribution via a generator? Again, there are a few options.

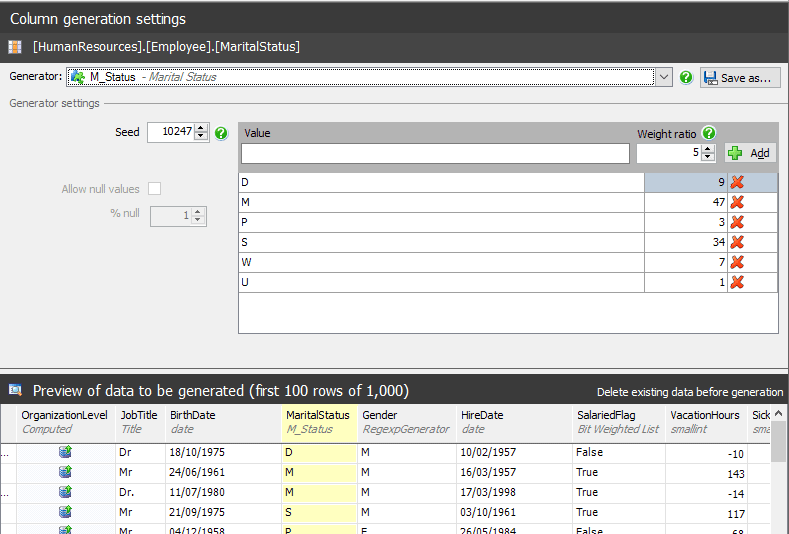

Customize the pre-canned Marital Status generator

I could use the SDG user interface with the Generic/Weighted list generator to set up the list manually, as shown in Figure9.

Figure 9: Customizing the weighted list for the pre-canned Marital Status generator

This has several disadvantages:

- It is time consuming

- It is specific to a one Data Generator project tied to a specific database

- Changes in customer profile of marital status will require manual alteration of the generator

What I want is a generator that can be set up dynamically and work with whatever Data Generator project I may wish to create. Perhaps the ability to create standalone generators through the GUI and the ability to import a pre-weighted list would be useful enhancements in future versions of SDG.

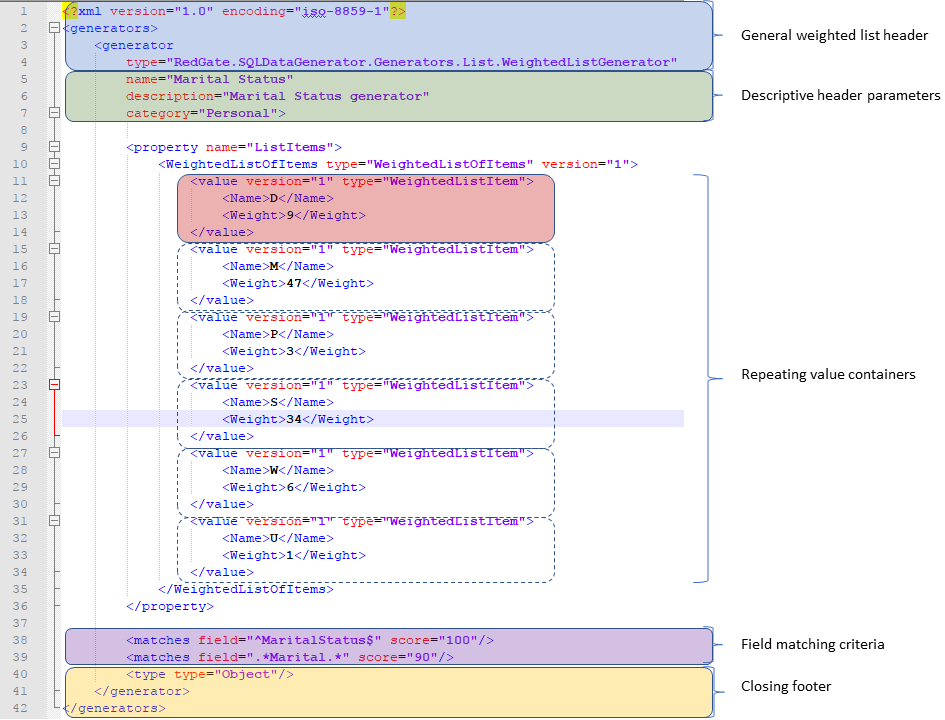

Creating a Bespoke Weighted List

Fortunately, Redgate supply a number of sample configurations that include a WeightedListGenerator.xml. For SDG 4.0, these are held in C:\Program Files (x86)\Red Gate\SQL Data Generator 4\UserExample\Config

Using the WeightedListGenerator.xml file as a template, I create my own MaritalStatus.xml and save it into C:\Program Files (x86)\Red Gate\SQL Data Generator 4\Config.

Figure 10: Creating a bespoke Marital Status generator

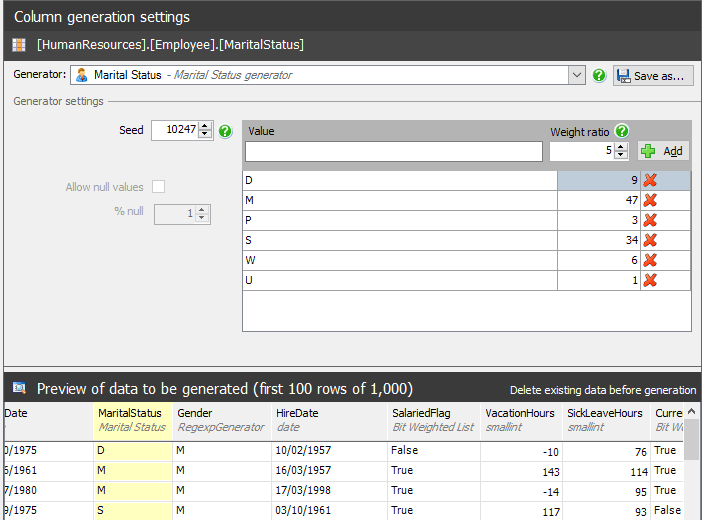

Our bespoke Marital Status configuration gives us the result we want, which is to be used automatically when a new Data Generator project is started.

Figure 11: The bespoke Marital Status generator is used automatically for any SDG project

If we were to use the escrow facility described earlier to obtain our marital status profile, then we could generate the XML file from it using almost any programming language. The task of doing so is straight forward.

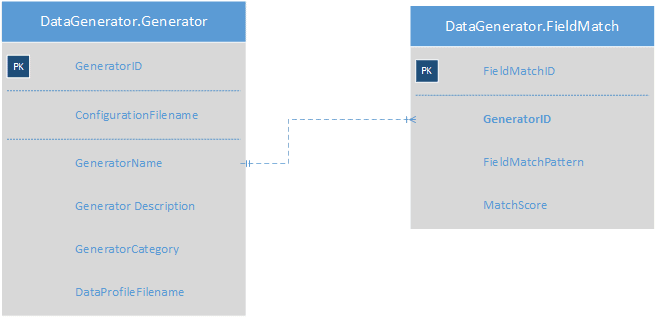

We could take it a stage further and make the creation of our bespoke weighted list data generators entirely data driven.

Figure 12: Schema to support dynamic creation of generator configuration files

Where:

- ConfigurationFilename is the config file we wish to generate

- DataProfileFilename is the file we obtained from our escrow facility containing the names and distribution percentage we wish to base the file on

In building a data driven mechanism, we would address the challenges presented by a manual process:

- Rapid generation of data generator weighted list configurations

- Generators shared across projects and teams

- Scheduled tasks keep both the profiles and the generators up to date

- Easy maintenance of data generator field matching patterns to work with our particular database design

Exporting data from our data generator

Having generated realistic data, using a set of tailored generators that the whole team can share, we now need a means to translate this data in the required formats, for distribution across our data platform.

Delimited text files

Most databases have a facility to bulk import delimited text files. This means that the standard SQL Server bcp utility is well suited to the task.

We do have to consider that our target systems may have specific needs and fortunately bcp caters for these through some critical command switches.

- Code page differences through the -C switch

- Support for Unicode through the -w switch

- Choice of row terminators through the -r switch

- Choice of field delimiters through the -t switch

In my experience, most problems concerned with import or export of character based data with SQL Server can be resolved by some combination of the switches described above.

JSON and XML export

The bcp utility can also be used to provide a rapid export in JSON or XML format.

I have found that database systems that can import JSON documents prefer each document to be represented as a single line. This requires you to use a specific SELECT within SELECT pattern when writing a query.

|

1 2 3 4 5 |

SELECT( SELECT BusinessEntityID, Title, FirstName, MiddleName, LastName, Suffix FOR JSON PATH, WITHOUT_ARRAY_WRAPPER ) FROM Person.Person |

Listing 5

I have also found that writing the output from a JSON query into a database table and then using BCP on that table is a more robust and faster approach than trying to query and export in one step, particularly if your Data Generator database is running on low spec hardware.

Other formats

There are various means of achieving a fixed width export using the bcp utility however these are cumbersome. Fixed width export is best achieved using an ETL tool.

Parquet is a compressed columnar format that is becoming increasingly popular in the Big Data world. The easiest way I have found of generating Parquet files is to use Python Pandas data frames with PyArrow. The caveat is that Pandas is extremely memory inefficient and large data exports can be time consuming.

Closing notes on performance and usage

By its nature, Redgate SQL Data Generator must perform a write intensive operation against a database. If a database has many objects, you may find that the most practical use of the tool is to have separate Data Generator projects aligned to specific subject areas in the database. Trying to use a single project to populate the entire database is simply expecting too much.

Nevertheless, SDG presents us with a huge boost for the task of generating realistic test data. Even with our ability to generate bespoke generator files there remains a considerable amount of work to ensure that all data elements conform to business rules and that sensible values are produced in every case. The goal of generating realistic test data is to improve the quality of the overall application. In my experience quality is the reward for attention to detail and effort.