Planning a Database Testing Strategy for Flyway

With Flyway, you can adopt a test-driven development strategy that will allow you to test and evaluate databases, and database objects, at every phase of the database development lifecycle. The further down the delivery pipeline that bugs appear, the more costly in time and resources they are to fix. This approach will allow you to catch many of them before the database change even gets committed to version control, making a continuous delivery process much easier to adopt and sustain.

This article is part of a series on the requirements and practicalities of database testing, with Flyway:

- Testing Databases: What’s Required?

- Planning a Database Testing Strategy for Flyway

- Test-Driven Development for Flyway Using Transactions

- Basic Functional Testing for Databases using Flyway

- Running Unit and Integration Tests during Flyway Migrations

- Performance Testing Databases with Flyway and PowerShell

- Running Structured Database Tests in Flyway

- Running Database Assertion Tests with Flyway

Also relevant are the series of related articles on Test Data Management.

The road to database test automation

When testing database changes, many simple unit and integration tests can be embedded in the Flyway migration script itself. In addition, Flyway Teams has a callback feature that will allow it execute SQL files or PowerShell scripts before and after every migration. These ‘callbacks’ were designed to make it easy to perform many of the chores associated with database development such as testing, documenting and source management. They allow Flyway to be the center of a series of automated, task-based processes, with testing being the most obvious example, although I’ve explained in other articles how I also use these script callbacks to automatically run a range of other ancillary processes too, such as generating build scripts, models and reports.

In subsequent articles in this series, I’ll show how to use this callback feature to automatically run a range of different types of database test, each time Flyway delivers a new version of the database. Here, I’ll start by describing what you need to consider before you start writing the tests: the sort of database tests you need to plan for, and their purpose, the test environments you’ll require and the test data management strategies to provide all the different types of test data you need for the tests. Finally, I’ll suggest a tiered, stepwise approach to introducing database testing to Flyway developments.

Why write database tests?

Databases can, and do, make catastrophic mistakes through being poorly tested. The elaborate and unexpected ways in which a database can go awry is unimaginable to anyone who hasn’t experienced them. The experienced database developer will test everything possible, at every opportunity.

Database testing may look like obsessive caution to the onlooker, but it accelerates productivity like nothing else, makes you far more aware of good coding practices, and ensures that you sleep well at night when your application goes live. Sure, your code has failed frequently in development, but you’ve recovered things rapidly, in the privacy of your feature branch. Your colleagues are deprived of any evidence of your rashness or impetuous coding habits.

You may never have experienced the subsequent feeling of liberation, where you have a freedom to try things out and experiment in the sure knowledge that you get instant feedback of the unfortunate consequences of your actions. Tests are your safety-net in a development task that can otherwise go seriously wrong. This article will hopefully explain how testing can be done as quickly and painlessly as possible.

Before you start writing tests…

Relational databases, unlike applications, have built-in features to ensure that data itself is internally consistent and correctly formatted. Constraints are the database’s most effective way of testing and enforcing the integrity and consistency of the data, whether they be check constraints, nullability, default, foreign key or unique constraints. A database without constraints can never be reliable. See Managing Database Code Quality in a Flyway Development for further details.

All necessary constraints must be in place before you can assess the coverage required of unit tests. There is no point, for example, in elaborately testing for duplicate data with code when it can be done much more effectively with a UNIQUE constraint.

Types of database test

I’ve written previously about the various type of database tests that is required for corporate applications, see Testing Databases: What’s Required?, so here I’ll focus on the details of performing these tests during a Flyway development.

Unit Tests

Unit testing is performed on each deterministic routine, in isolation, to ensure that it returns a predictable result for each specific set of inputs that is used. They are required for all modules, such as procedures, functions, views and rules. Unit testing is done using small, immutable datasets containing only data that the object can reference directly. Ideally, the test dataset will include the edge-case values that over time tend to sneak into, and cause problems for, even the best-designed database.

The most obvious advantage of any migrations-based approach to database development is that we can add test code directly to the migration file. Generally, you can’t do that with a build script because by convention it can only have DDL code, and because any test code could usually only be run on another connection, after the build is complete and committed.

Flyway migration scripts allow DML as well as DDL so we can unit test the migration as it is being applied. We simply incorporate the required test procedure directly into the migration that creates or alters the table or code module, taking care that the tests are written without leaving any permanent objects. They must clean up after themselves if the test succeeds.

If you are using a sensible RDBMS that allows DDL rollback (DB2, PostgreSQL/EnterpriseDB, Derby, and SQL Server) then you can also design a system where Flyway will automatically roll back any faulty migration that fails its unit and integration tests. If there is a problem that would affect the version, such as a financial miscalculation, you can signal a failure by creating an error. Unless you have changed the default settings, this will cause Flyway to roll back the script.

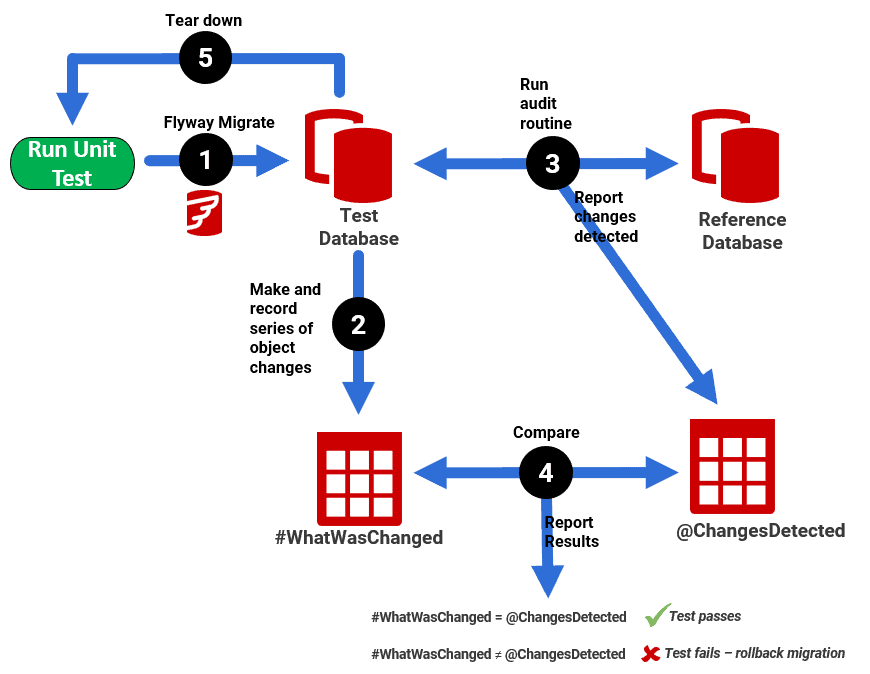

The following example shows a migration that creates a new audit routine with an automated unit test that verifies that it works correctly:

To illustrate how all this is done, in my Pubs database project for Flyway, I’ve added a SQL Server procedure in the migration V1.1.11__AddProcedureWithTest.sql that incorporates a series of unit tests. There another example here: How to Test SQL Server Functions and Procedures using SQL Prompt.

My article Testing Flyway Migrations Using Transactions shows an example for SQL Server, where a failing unit test raises an SQL error message, with the Raiserror statement, causing Flyway to roll back the migration.

Integration Tests

To maintain quality and find problems as you develop, it pays to do continuous integration Testing to ensure that your database and application(s) still work accurately after every migration. The objective is, again, to run tests automatically with each migration. If a test fails, then the migration cannot become part of a release.

Although it is easy to ensure that your own work is free of obvious errors, there is still a potential danger. Your clever function could pass all unit tests but subsequently fail due to its input data being truncated by another part of a process. Imagine a financial value, such as a unit price or a balance. There are plenty of things that can go wrong: just one floating-point datatype in a long processing chain of properly constructed numeric data types, for example, can cause a change in a financial value, and a subsequent crisis in the organization. After all, financial calculations are either correct or wrong. There are no shades of grey.

We need to test business processes as well as individual units. One of the most pressing tasks of the database developer is to write integration tests to verify that the values produced at the end of the chain of tasks are what the organization has deemed to be correct. Taking a simple example, if a customer places a product in a basket, does the database correctly put a temporary lock on the database stock level. Is it released appropriately? Are the end-of-day accounting reports correct for a sample day’s trading?

The strategy I adopt is to record a sample of the processing, such as the trading actions involved in the above purchase process. On a website application, for example, you’d record a few minutes of trading, capturing all calls the application makes to the database as part of a purchase (discounts, refunds, quotations, shipping costs and so on) and then get the organization to sign off on the figures they expect from this sample of trading.

This might sound fatuously simple, but then you’d be wrong. An organization might have different conventions for rounding monetary values up or down when calculating discounts or tax. In my experience, it is unusual for a database to agree with a spreadsheet. I once did a customer-facing application for a retail bank where few of the calculations survived thorough testing. Usually, the fault was due to long-term errors in the manual process, or arcane office practices for rounding values.

To do this type of end-to-end integration testing as part of a Flyway development, you need to:

- Run the set up to establish a known data state – typically this will mean running the migration to create the new database version then importing a standard test dataset. Different tests may require different test datasets.

- Run the process (or simulate it using a scripted process that runs the same sequence of actions)

- Check the final data state matches that which your business rules dictate it should be

- Report the results – this sort of testing must provide enough of the right sort of diagnostic information to make it easy to resolve the problems, and make sure that subsequent migrations are healthier

- Run then a ‘teardown’ process to restore things to how they were at the start.

In step 1, it’s best probably best to use BCP to hold the test datasets if you’re using SQL Server (see Bulk Loading Data via a PowerShell Script in Flyway for an example), and for all other databases I’d advise using JSON. I’ll cover this a little more in the Data Strategies and issues section, later in the article.

If end-to-end processes are run within the database, then integration testing with Flyway is likely to entail running several SQL batch scripts. Alternatively, if the application drives the processes, it might involve using a ‘stub’ of the application, a defined interface between application and database, with enough functionality to perform the application’s part of the process.

Since we don’t want a migration to succeed if it is flawed, it is useful to trigger the integration tests as soon as the migration completes, within the same connection and under the same transaction.

With Flyway we can only do this if we can run the tests as a single SQL callback file (e.g., afterEachMigrate). Even then, it is difficult to maintain because the tests will vary between each version. If running the test involves executing several files, then you’ll need to run them from a batch or PowerShell script callback, but you would then be unable to roll back the transaction because you’d be using another connection.

A simpler approach is to maintain all the resources for integration tests, functions, tables, datasets and procedures, within a separate schema so that the body of tests builds in sync with the database. The tests are then triggered at the end of the migration. This ensures that the integration tests are appropriate for the version. It also allows a transaction rollback to prevent the migration being successfully applied if the integration tests fail. The only complication is that the testing schema must never become part of the release, so the production branch would require a migration to remove it.

Performance Testing

Performance tests with a dataset the size of the production system (either the real size or the envisaged size, if not yet in production) will help to ensure that nothing in a migration run has compromised the performance of the database. If the tests are repeated with a series of different-sized datasets, it is usually termed scalability testing rather than performance testing.

Performance testing has a different objective to integration testing, so it is usually done in parallel, as a separate exercise. It is almost always done once the team have a release candidate. It is unlikely to be done by a Flyway callback because testing will tend to change in nature with the version of the database.

‘Penetration’ testing

Pen Testing (a.k.a. ‘ethical hacking’) aims to assess the quality of the measures that are in place to protect the confidentiality, integrity, and availability of corporate information.

It is usually best done using a published set of tests. These tend to be regularly updated. It is hard for a developer involved in the creation of a database to conceive of the various ways of breaching the data, so it is best managed by a separate team. The tests will involve checking and testing the different security controls, tools, and measures that are designed to protect the Database Management System (DBMS). These tests are important and can expose flaws in the database’s security that seem startlingly obvious when examined by the organization’s legal advisors after a data breach.

Test environments

As far as possible, developers should run automated unit tests and integration tests before merging any changes into the Develop branch. However, this isn’t enough. Developers tend to write automated tests for expected and known bugs; this is fine for preventing regressions, but we also need to test for the unexpected, and to develop and perform both exploratory tests . This is usually done by test experts. If the database passes all the existing integration tests, then we can release the new version for performance and penetration testing.

Flyway works well with the test function because it can be used to provision any number of databases to the same version, whatever their current state.

Development

Any competent database developer will hope to do as much unit and integration testing as possible before any code is committed to the development branch. A bug is best caught early. However, the database developer, unlike a tester, doesn’t enjoy breaking things. Testing is a different expertise and attitude. The developer, however, is well-placed to automate the existing body of tests so that testing can concentrate on developing tests for the new features.

Test

The test cell is the best place for performance testing as this will generally require more storage space, and specialized techniques. The same goes for penetration testing. It is also here that you’ll perform user-acceptance testing. Under UAT, one would generally include system testing (combining all processes tested by integration testing) as well as usability tests.

Staging

A staging environment must resemble your production environment as closely as possible. Staging will also be under the same security constraints as production, so it is the only point at which any database that will contain personal or financial data can be tested with the existing production data.

By the time a release candidate reaches the Staging server, the work of the testing ‘environment’ will already have ensured that the release is bug free. The test cycle is Staging is likely to be the same as are used in integration testing. What has changed is the data. Also, because the test cycle will be run as part of operations, it must be as automated as possible. It must also be integrated with your organization’s continuous integration (CI) process.

The migrations and associated scripts are simply used to update the metadata of the staging server after it is restored from production backups. This underlines the point that migration files for a real database application should never involve data loading: The loading of a development data set must always be done separately. A migration must always be able to conserve existing data to enable table splits and other changes in data design, but not load new data.

Data strategies and issues

Because it is such an obvious cause of data breaches, developers no longer want to use real data for development work, especially if it contains Personally identifiable information (PII). This is even more important for developers working from home.

Development data can be generated, or in some cases it can be a masked and obfuscated version of the real data. Integration tests, as discussed above, require standard data sets, it becomes much easier to verify a test run because you can know what the result of an aggregation operation should be and will have had this confirmed by the users of the database. Basically, the database developer should be able to create, load and save different datasets without batting an eyelid.

Although it’s possible to load relatively small quantities of development or test data via a migration, it’s not a wise approach, generally. You’d need to maintain the separation of data from metadata in database development work and ensure that none of these data insertion scripts enter the ‘main’ branch, where they could then be applied to a production server.

It is safer to load the data as a separate process. This is not as simple as it might seem because data required for testing and development should, obviously, only be loaded if it isn’t there already, and the data files will vary from version to version in line with the current table structure. Since the migrations will be used to upgrade databases as well as provisioning new databases to the correct version, the data loading process will need to be scripted via a batch process or a scripting language such as PowerShell or Python. In Flyway, the obvious choice is to use a callback after a ‘version’ migration run. This would check the version of the database and then either check that the dataset was complete and correct or delete the current dataset and load the appropriate dataset.

When you extract data from a database, or load it into a database, the most convenient way of storing it is usually JSON, simply because of the range of support for it. CSV is the most economical form of storage, but few database tools conform to the standard, so you are likely to get hassle if you choose it. I’ve written an artilce comparing the various options, Saving Data to Various Formats, Using SQL.

My own preference is to use JSON (see Transferring Data with JSON in SQL Server) together with JSON Schema so that the script can test whether the dataset matched the table (see How to validate JSON Data before you import it into a database, together with code.

How to start introducing database testing to Flyway developments

If you currently don’t do any specific database testing, how or where do you start?

Below, I’ve made some suggestions for a ‘staged’ approach to introducing various types of tests, as part of a Flyway development. I won’t go into any detail on the database code quality checks that will also be automated at various points, as I’ve covered that separately here: Managing Database Code Quality in a Flyway Development

Level 1: unit tests and team code reviews

- Create unit tests for all deterministic functions that can be executed as part of a migration.

- Create a system to allow code reviews on any code before it becomes part of the development version of the database. (e.g., during a merge)

- Create one or more consistent realistic test datasets without any production data, using a data generator.

- Provide ways of changing a test data set outside the database, loading it in and exporting it via automated repeatable processes.

- Create a way of relating the versions of the test data to the database versions (unlikely to be one-to-one).

Level 2 – Scripted integration tests

- Develop simple integration tests for all the major processes identified in the database code – these will require a consistent dataset and the ability to compare results with table sources, to check that a result is exactly as expected

- Produce scripts that can ensure a standard input for integration tests before a test is run (e.g., table source or input parameters)

- Automate the suite of integration tests and run them for every new version created by Flyway

- Automated checks for coding standards, code quality and database design issues, to supplement manual code reviews with a

Level 3 – CI Automation and collaboration

- Fully automated CI process for routine unit and integration tests

- Ad-hoc tests supplement the integration tests so as to check for issues that would then be candidates for automation

- Cross-team collaboration on testing – isolated, branch-based development allows Security, Ops Compliance experts to collaborate on testing much earlier in the development cycle.

Summary

Testing must be as automated as possible, and it is relevant to all stages in the development lifecycle. The earlier that bugs and mistakes are identified, the less they will disrupt progress. It is foolish to rely purely on tests. Databases must be designed to be self-testing where possible to avoid bad data, using constraints and triggers. They must be designed in such a way as to make unit testing and integration testing easy, and to provide enough information to make it easy to track bugs.

Flyway Teams makes it far easier to hook in automated testing to the database upgrade process. Not only can each migration file be tested individually, but also the results of each migration run can have the appropriate tests.

By facilitating the appropriate test process at the correct point in the database Development process, it is easier to deliver new database features more quickly.