The Enterprise Roadmap to Reducing Costs While Increasing Value

Every enterprise now faces the same challenge: doing more with less in a demanding economic environment. That means releasing value to customers sooner while controlling infrastructure costs, retaining skilled staff, and reducing risk.

With technology now central to the way many enterprises offer, package and deliver their products and services, it has a big part to play here. Microsoft CEO Satya Nadella summed it up perfectly in the keynote to Microsoft Inspire 2022 when he said:

Digital technology is a deflationary force in an inflationary economy.

DevOps has a part to play as well because it’s been shown to deliver value to customers faster by streamlining and automating the processes that drive digital technology, and providing tangible business benefits. A widely used benchmark for rating software delivery performance and DevOps is the four key metrics used in the Accelerate State of DevOps Report, which rates organizations on their frequency of deployments, the lead time for changes, their change failure rate, and the time it takes to restore service following a failure.

High performing organizations which use DevOps typically deploy changes and updates multiple times per day compared to low performers which deploy once every few months. Their lead time for changes is also in days not months, and their change failure rate is 0%-15% rather than 46%-60%. Importantly, their time to restore service after a failure is less than one day compared to between one week and one month seen in low performers.
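
To make the four metrics concrete, here is a minimal sketch of how they might be calculated from a team's own deployment records. The `Deployment` record, its field names, and the `four_key_metrics` function are hypothetical and purely illustrative; the report itself gathers these figures by survey, and real pipelines will store this data differently.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class Deployment:
    """Hypothetical record of one production deployment."""
    committed_at: datetime                  # when the change was committed
    deployed_at: datetime                   # when it reached production
    failed: bool = False                    # did it cause a failure in production?
    restored_at: Optional[datetime] = None  # when service was restored, if it failed


def four_key_metrics(deployments: list[Deployment], period_days: int) -> dict:
    """Summarize the four key metrics for deployments made over `period_days` days."""
    if not deployments or period_days <= 0:
        raise ValueError("need at least one deployment and a positive period")

    lead_times = [d.deployed_at - d.committed_at for d in deployments]
    failures = [d for d in deployments if d.failed]
    restore_times = [
        d.restored_at - d.deployed_at for d in failures if d.restored_at is not None
    ]
    mean_restore = (
        sum(restore_times, timedelta()) / len(restore_times) if restore_times else None
    )
    return {
        "deployments_per_day": len(deployments) / period_days,
        "median_lead_time": median(lead_times),
        "change_failure_rate": len(failures) / len(deployments),
        "mean_time_to_restore": mean_restore,
    }
```

Tracking these numbers over time, rather than as a one-off snapshot, is what shows whether changes to tooling and process are actually moving a team toward the high-performer ranges quoted above.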

DevOps also extends to database development, which the State of DevOps Report has called out as a key technical practice that can drive high performance. As far back as 2018, the report revealed that integrating database development into software delivery contributes positively to performance, so that changes to the database no longer slow processes down or cause problems during deployments.

All of which delivers real advantages, as Jon Forster, Senior Global Program Manager at Fitness First, discovered after introducing database DevOps with Redgate:

Code goes from months to deploy, to days. When we want to bring out new functionality for the customer, we can do it really quick. That's the real benefit.

But how do you get to be a high performer, deploying changes faster while at the same time reducing the lead time for changes, the change failure rate, and the mean time to recover? For enterprises, this means focusing on three key areas:

- Unlocking efficiency and innovation

- Accelerating the speed of software delivery

- Enhanced risk management

Unlocking efficiency and innovation

Enterprises typically have large, disparate IT teams working on different projects for departments and functions across the whole business. There will be a number of databases in use along with many applications, some developed in-house, some provided by third parties, which serve both internal needs as well as providing products and services for customers.

It’s a complex picture which is often complicated further by developers working in different ways, using a variety of tools, and following processes that vary between and often within teams. Some developers and teams will be comfortable writing code for both the application they’re working on and the database it connects to. Others won’t be.

It works but the speed of development and deployments is slowed down, frequently by the very desire to release changes faster, as the IT team at the leading financial cooperative in North America, Desjardins, discovered:

So many people were deploying to so many databases, it was hard to keep track of who was deploying what and when.

For Desjardins, as with many large IT teams, one of their biggest challenges was standardizing processes and tooling across different environments and databases.

The biggest step here is to normalize the technology stack and replace the varying approaches to software development and range of legacy tools and solutions in place with a standard set of processes and technologies that are scalable.

A great way to encourage this is to introduce version control within and across teams. By committing code changes to a shared repository, a single source of truth is maintained, cooperation and collaboration are encouraged, and siloed development practices begin to disappear.

This is just as true for the database code, which should also be version controlled, preferably by integrating with and plugging into the same version control system used for applications. Capturing the changes in version control reduces the chance of problems arising later in the development process, and also provides an audit trail of who made what change, when and why.
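
As an illustration of what version-controlled database changes can look like in practice, the sketch below applies numbered migration scripts in order and records each one in a history table, which doubles as a simple audit trail. It is a simplified stand-in using Python's built-in `sqlite3` module, not the implementation of any particular tool; the script names, the `schema_history` table, and the `migrate` function are all assumptions made for the example.

```python
import re
import sqlite3

# Hypothetical migration scripts, keyed by file name as they might sit in a repository.
MIGRATIONS = {
    "V2__add_email_to_customer.sql": "ALTER TABLE customer ADD COLUMN email TEXT;",
    "V1__create_customer.sql": (
        "CREATE TABLE customer (id INTEGER PRIMARY KEY, name TEXT NOT NULL);"
    ),
}

# Assumed naming pattern: V<version>__<description>.sql
NAME_PATTERN = re.compile(r"^V(\d+)__(\w+)\.sql$")


def pending_migrations(conn, migrations):
    """Return (version, name, sql) tuples not yet in the history table, in version order."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS schema_history ("
        "version INTEGER PRIMARY KEY, script TEXT NOT NULL, "
        "applied_at TEXT NOT NULL DEFAULT CURRENT_TIMESTAMP)"
    )
    applied = {row[0] for row in conn.execute("SELECT version FROM schema_history")}
    pending = []
    for name, sql in migrations.items():
        match = NAME_PATTERN.match(name)
        if match is None:
            raise ValueError(f"unexpected migration name: {name}")
        version = int(match.group(1))
        if version not in applied:
            pending.append((version, name, sql))
    return sorted(pending)


def migrate(conn, migrations):
    """Apply pending migrations in order and record each one; return the versions applied."""
    applied_now = []
    for version, name, sql in pending_migrations(conn, migrations):
        conn.execute(sql)
        conn.execute(
            "INSERT INTO schema_history (version, script) VALUES (?, ?)", (version, name)
        )
        conn.commit()
        applied_now.append(version)
    return applied_now
```

Because every environment runs the same ordered scripts from the same repository, running `migrate` a second time applies nothing, and the history table shows which change was applied and when.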

As well as introducing version control, it’s good practice to equip development teams with realistic, sanitized copies of the production database in their own dedicated environment. This will avoid unpredictable performance problems further down the line because developers are working on realistic datasets, while minimizing the risk of overwriting each other’s changes.

Further standardization efforts include equipping development teams with a unified toolset to help with onboarding new team members, and establishing coding standards and formatting guidelines. As a result, efficiency will increase, and teams will be free to collaborate and innovate.

Want to find out more? Read the Desjardins case study and discover how Desjardins uses Flyway to standardize the way migration scripts are created and versioned across its multi-database estate, which includes Oracle, SQL Server and PostgreSQL.

Accelerating the speed of software delivery

The automation that DevOps encourages removes laborious, manual processes which, in turn, frees up developer time, reduces errors, and makes it simple for teams to collaborate. It does all this while accelerating the speed of releases and making the development process more secure.

It works too. By automating the release process for database changes, BGL Insurance made it faster and much more reliable, and saved hours of time and effort for support staff:

Releases are now deployed the same day they are signed off, normally within ten minutes of approval, with no possibility of overwriting code.

It starts with version controlling both application and database code, which keeps track of every change, and makes sure everyone is working on the latest version of the code. With version control in place, continuous integration (CI) can be introduced, providing the first automation step. As soon as code changes are checked into version control, CI triggers an automated build which tests the changes and immediately flags any errors in the code. If a build fails, it can be fixed efficiently and re-tested, so a stable current build is always available.

The opportunity then arises to take the DevOps approach further by aligning the development of database scripts with application code in release management as well as CI. Many application developers already use CI to automatically test their code, and release management tools to automate application deployment. Database developers can join them.

The result? Continuous delivery for databases. Continuous delivery extends DevOps thinking from software development through to deployment. Rather than regarding the release of software as a separate activity, continuous delivery means software is always ready for release.

Additional data management, migration, and monitoring processes may be required to safeguard data, but the broader benefits of continuous delivery gained by standardizing deployment and release processes through automation remain the same for the database.

By automating onerous processes so they are quick, reliable, and predictable, DBAs and development teams are freed to concentrate on more important tasks like high availability, replication, downstream analysis, and alerts and backups.

Want to find out more? Read the BGL Insurance case study to see how they resolved many deployment issues in their database development process by establishing the same kind of CI and CD pipelines that were in use in application development.

Enhanced risk management

Including the database in DevOps has been shown to drive higher software delivery performance. It stops the database being a bottleneck in the process, minimizes deployment errors reaching production, enables teams to release updates and features faster, and releases value to customers sooner.

In order to achieve those advantages, high performing teams typically use a copy of the production database to test their proposed changes against. Having a local, private database to work with empowers developers to try out new approaches without impacting the work of others.

Moving toward this way of working, however, is not a trivial process for database teams that need to balance their resources in terms of time, storage cost, and information security.

Copies of production databases need to be realistic and truly representative of the original in order to retain their referential integrity and distribution characteristics. Using an anonymous dataset, for example, or a smaller subset of the production database, will not truly validate that changes produce the desired results, nor will it allow developers to estimate the performance impact of those changes.

Providing those copies can be problematic, however, as South Africa’s leading provider of general insurance, Santam, found:

Restoring databases for development environments used to take between four and six hours.

Partly this is down to size. Databases tend to be large and complex, which means developers need to be allocated significant storage, and DBAs need to allow hours of time to copy and provision them. Here, data virtualization technology should be employed to reduce the size of those copies.

It’s also because databases contain personally identifiable information (PII), which often needs to be de-identified or masked in order to remain compliant with data privacy regulations, while also protecting enterprises against data breaches.

Hence the need to provision database copies in a faster, more streamlined way that fits in with DevOps workflows, by using tools which take advantage of virtualization to reduce the size of database copies, and masking data as part of the process to reduce risk.
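
One way to mask data without breaking the relationships between tables is deterministic pseudonymization, where the same input always maps to the same masked value. The sketch below is a minimal illustration of that idea using Python's standard `hmac` and `hashlib` modules. The function names, the `user_` prefix, and the sample rows are assumptions for the example; this is not how any specific masking tool works, and a production approach would also need to handle formats, data types, and key management.

```python
import hashlib
import hmac


def mask_value(value: str, secret: bytes, prefix: str = "user") -> str:
    """Replace a sensitive value with a keyed, repeatable pseudonym."""
    digest = hmac.new(secret, value.encode("utf-8"), hashlib.sha256).hexdigest()
    return f"{prefix}_{digest[:12]}"


def mask_rows(rows: list[dict], columns: set[str], secret: bytes) -> list[dict]:
    """Return copies of `rows` with the named columns masked; other columns are untouched."""
    return [
        {
            key: mask_value(val, secret) if key in columns and val is not None else val
            for key, val in row.items()
        }
        for row in rows
    ]


# Hypothetical sample data: two tables that join on the customer's email address.
customers = [{"email": "ann@example.com", "name": "Ann"}]
orders = [{"order_id": 1, "email": "ann@example.com", "total": 42.0}]

secret = b"rotate-me"  # illustrative only; a real key belongs in a secrets store
masked_customers = mask_rows(customers, {"email", "name"}, secret)
masked_orders = mask_rows(orders, {"email"}, secret)
```

Because the mapping is repeatable for a given key, the masked `email` in `masked_orders` still matches the one in `masked_customers`, so joins behave as they do in production while the original values never reach the development copy.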

Further protection efforts include enabling the self-service of database copies, refreshing those copies without manual intervention, and building up an inventory of the data estate through data classification.

As a result, development teams will be able to produce higher quality code while protecting sensitive data and remaining compliant with any relevant legislation.

Want to find out more? Read the Santam case study to understand how they automated the rapid delivery of masked, high-quality database copies using 95% less storage space.

Summary

Database DevOps is now a natural part of any digital transformation and modernization initiative. We’ve seen how it can help to unlock efficiencies, accelerate software delivery, and reduce risk. Importantly, it can do so by integrating with and using the same infrastructure already in place for application development.

Like any DevOps initiative, it will involve introducing new tools to help in the streamlining and automation of database development processes and workflows. With IT budgets under pressure, the return on investment needs to be established, both in terms of the business benefits to be gained and the dollar value waiting to be realized.

If you'd like to learn more about how Redgate have helped organizations across multiple sectors achieve efficiencies while reducing risk, check out our case studies page.

Alternatively, visit our Enterprise solution page to find out more.

Read next

Blog post

Enabling digital transformation – and data modernization – with DevOps

I don’t need to highlight the impact of the last few years on the world and its businesses. Companies that once completely dismissed the idea of remote working now embrace online offices, with many now operating fully remotely. Externally, the marketplace is shifting too, and opportunities for creating and realizing value can be found in new, less familiar places. The pandemic amplified the digital landscape; as a result, there is no fear and resistance to transformation anymore: everyone wants to transform themselves. And this trend of digital transformation hasn’t reached its peak either; it will simply continue to grow. Innovative

Blog post

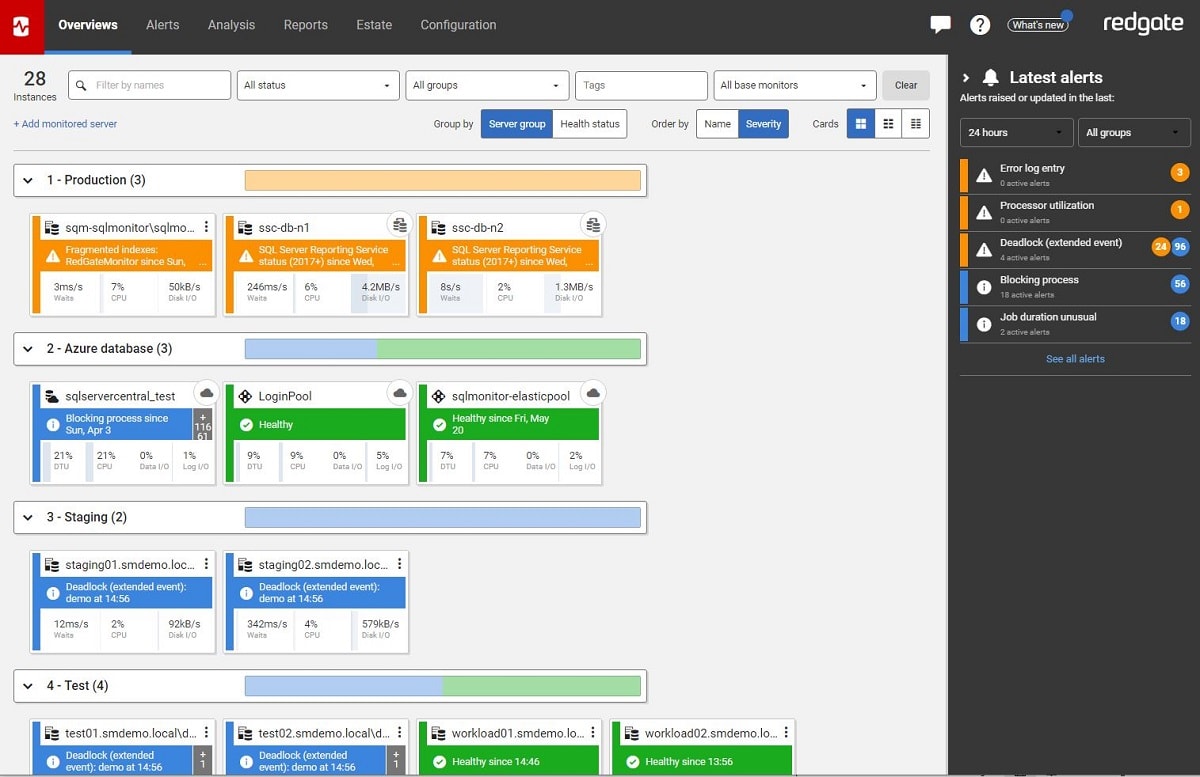

Gaining a competitive edge with database monitoring

I was recently joined by Chris Yates, Senior Vice President, Managing Director of Data and Architecture at Republic Bank for our webinar, Gain the competitive edge with a monitoring tool. The session sparked an insightful conversation around estate monitoring from the perspective of a Senior VP, and I wanted to share some of the key take-aways. With years and years of experience as first a DBA and now a VP responsible for data and architecture, Chris has a deeply informed and valuable viewpoint about how companies can use database monitoring to gain real business value, as you’ll find from the

Loading comments...