Simpler Enterprise Test Data Management using Database Instance Provisioning

Business applications are rarely self-contained, often depending on multiple interconnected databases, each playing a vital role in the functioning of the system. How can we create, refresh, and maintain test data for these complex database systems, and still support dedicated databases and test-driven development processes? This article explains how database instance provisioning helps meet this challenge.

Follow the series

▶ What are Clones? – Get Started with Clones – Development and Testing with Clones

When you survey your database estate you will doubtless find that you need to maintain test data not just for one but for a range of databases, often interdependent, and managed by various applications and projects or even by third-party vendors. This article explains how teams can use the database provisioning, or cloning, component of Redgate Test Data Manager, to create and manage test data for these larger and more complex database systems.

It describes how, as the application (read: database) landscape becomes more complex, Redgate Test Data Manager can still support the use of dedicated development and test environments, through use of containers, data virtualization and database instance provisioning. Each environment reflects the production environment as closely as possible and therefore allows complete and accurate database testing.

Limitations of single-database provisioning

When developing and testing complex database applications, developers often find they have access only to the database on which they are directly working, even if it has dependencies on other databases and other servers. The problem is that these “missing dependencies” will cause build errors during development.

One option is to find them all, and then use temporary ‘stub objects’ to account for them, so that you can develop and test one database out of the ‘set’ without access to others. However, without full access to the complete set of databases, tests might miss critical interactions, leading to false positives or undetected issues that only surface in production. For example, a change in one database could inadvertently affect the data integrity or performance of another, creating cascading problems that are difficult to trace and resolve.

A proper Test Data Management (TDM) strategy will allow all databases to be provisioned together as a cohesive unit, so we can ensure that our testing is comprehensive and reflective of the real production environment. This is not just a nice-to-have: it is a necessity for maintaining the integrity, performance, and reliability of integrated software systems.

Datasets, plural

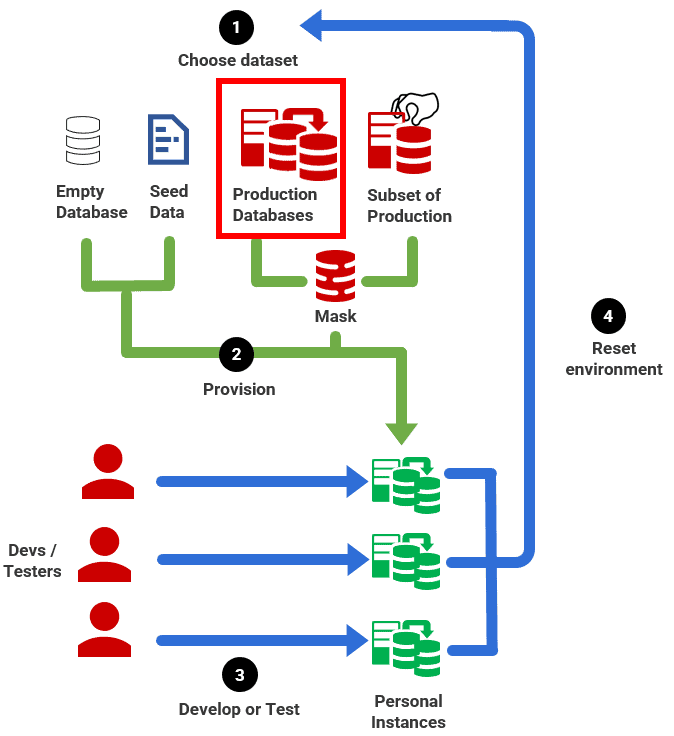

In a previous article, Better Database Development: The Role of Test Data Management, I discussed the role of TDM in delivering a better development and testing experience, and therefore more reliable database changes. The following 4-step TDM workflow is taken from that article, but adapted for the need to be able to choose datasets for inter-connected production databases and then provision them as multi-database development and test instances:

- Choose dataset – choose the required data set, appropriate for the sort of tests that need to be run. In this case, we need to create a ‘dataset’ from a multi-database production system.

- Provision – use the chosen test data to provision personal, ‘dedicated’ database instances, one per developer (or per branch of development).

- Develop or Test – each developer develops and tests code independently, before merging their work into the main development branch.

- Reset environment – developers should be able to reset databases, on demand, so that tests can be run consistently and repeatedly.

How to provision and reset multi-database environments

How do we achieve the above test data management strategy? Even if developers had access to ‘sanitized’ backups of every production database, they’d then need a process that restored each backup in turn, every time they needed to create or reset a dedicated development or test environment. This would take far too long to be viable for test-driven development and require a lot of storage capacity.

This is where data virtualization technologies and tools can help a lot. In particular, the ability to do whole instance provisioning with Test Data Manager makes this process so much simpler. To provide some context for this statement, I’ll start by briefly describing some of the challenges we had to overcome to achieve instance provisioning using SQL Clone (per-database provisioning, SQL Server only)

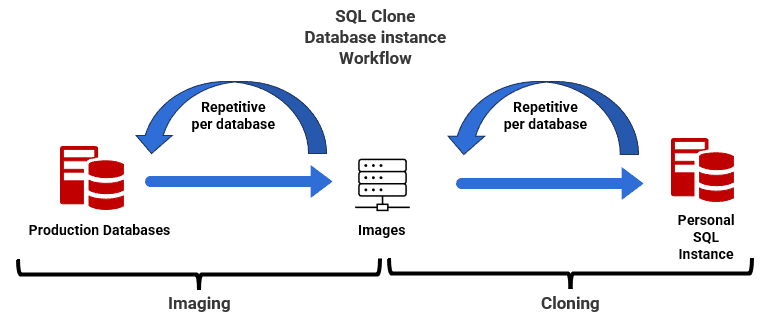

Recreating an instance using per-database provisioning (SQL Clone)

I’ve described in previous articles, such as Automated Provisioning of Multi-Database Environments in a Flyway Development, how SQL Clone and PowerShell automation enable dedicated, multi-database dev and test environments for a SQL Server-only organization.

SQL Clone works on a per-database basis, so when creating or refreshing a database instance we establish the database instance, configured correctly, and then use SQL Clone and PowerShell to recreate each database, one by one. This means that each time we run the pipeline, a script will provision our instance with cloned databases, following predefined steps and configurations. The automated process then repetitively provisions new databases (clones), recreating what the production instance should look like:

Each image creation takes about as long as restoring a database backup but is done infrequently and can be run as an overnight job. After that, each instance creation takes only about 5-10 seconds per database.

Developers then apply schema changes, such as by using Flyway migration scripts. When resetting the environment to start on a new task, the automated process will need to delete each database one by one, in the correct dependency order, and then recreate them all. While the use of clones makes this process much faster, it can still become a time-consuming task when the ‘instance reset’ needs to happen repeatedly, such as during a test run.

Despite the challenges, we have this system working smoothly in SQL Clone, albeit with a lot of PowerShell “heavy lifting”. We have even implemented a database stashing process allowing developers and testers to work on multiple priorities in parallel, without losing work, although again it’s a time-consuming method, because the whole database-by-database instance recreation starts over again, each time a developer switches branch.

With whole-instance provisioning in Redgate Test Data Manager, all these tasks become both much simpler and much faster.

Whole instance provisioning with Redgate Test Data Manager

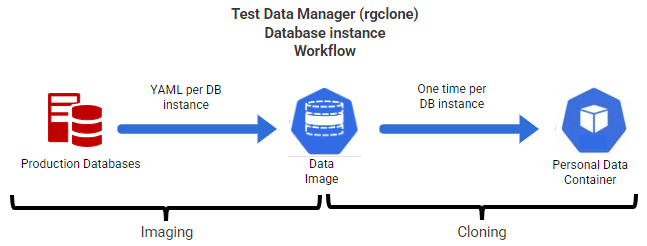

Through its rgclone component, Redgate Test Data Manager provides cross-RDBMS support for multi-database systems, with the ability to capture an entire database instance in a single data image. This ensures that all interdependencies are maintained and mirrored accurately in all the environments where production-like test data is needed.

Clones and Containerization

The technical implementation of database cloning in Test Data Manager relies on a concept called containerization. Containers are an evolution of virtual machines (VMs). But while VMs include an entire operating system within each instance, containers virtualize the operating system itself. This distinction means that the OS is not duplicated across containers, making them faster to boot and significantly more lightweight in terms of both size and resource consumption. Redgate Test Data Manager then exploits built-in data virtualization technology to remove the need to duplicate the data across all containers. It is stored only once, and then each container shares access to the data in a data image, with only subsequent changes being saved for each data container.

Whereas SQL Clone delivers a database on an existing server, Redgate Test Data Manager delivers a complete and working database instance running in a data container. Therefore, it is designed to handle multiple databases that need to be managed together, making it much easier to manage and deploy complete databases instances. When you create a data image using rgclone, it will:

- Spin up an empty, containerized database instance, from a Data Image Definition Template (YAML)

- Restore into it all the required databases, from source backup files

- Save all the database files into a sharable, read-only data image

This, with a single command, gives us an exact replica of the original database instance. Once this image is created, you can quickly, again with a single command, spin up multiple clones from this image, as data containers. Each clone will be a working instance with all the databases, and their data.

This process is much faster. With just one command we can, without any custom PowerShell script, create a dedicated data container. The developer can then apply the necessary migration scripts to produce a new feature, for example, and then test the latest version thoroughly, with all the data and interdependencies in place, exactly as they are in the production system. When a developer or tester needs to reset an instance to its original state, they just use the rgclone CLI to decommission the container, in seconds, and replace it with a new one.

More advanced techniques like database stashing also become simpler with Redgate Test Data Manager, where the user easily switches between containers in seconds with the proxy feature. Your “localhost” connection will be proxied to a data container and by running the proxy command you can virtually switch this “localhost” connection between containers.

Conclusion

Managing test data across multiple interdependent databases is essential for enterprise database development. By choosing the correct TDM strategy, setting up a proper workflow and using the right tools, you and your team can:

- Get all the databases and all the data in a single unit, running on the correct version of the DBMS

- Benefit from rapid creation and reset of environments, which enables test-driven development and rapid testing for databases

- Prevent frustration in development, improving code quality, and reducing time to market for new features.

This article focused on the why, the next articles in this series will look at the how and will cover technical details and code example for implementing the proposed TDM workflow.