Deploying Data and Schema Together with SQL Compare or SQL Change Automation

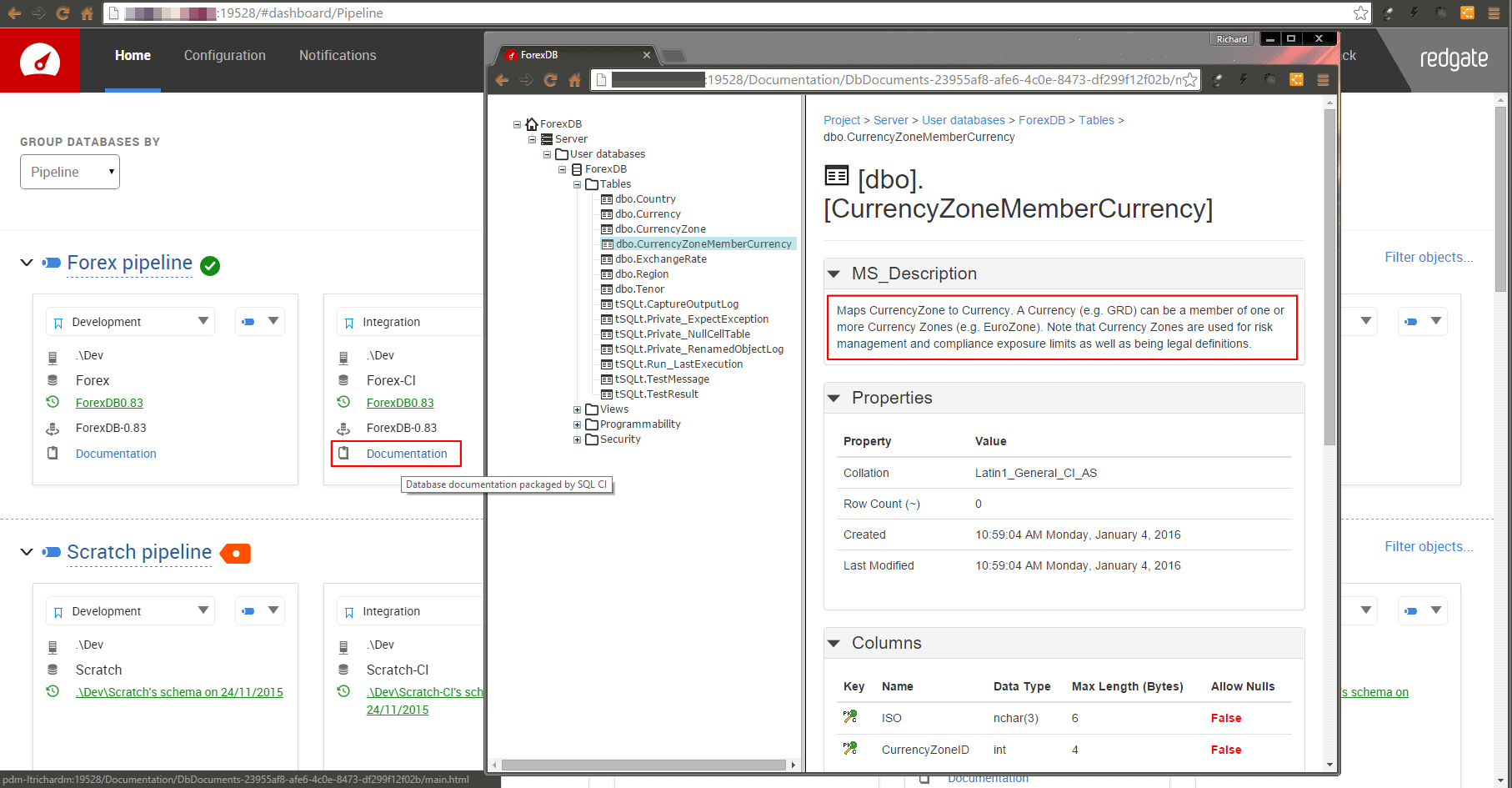

You want to use SQL Compare or SQL Change Automation (SCA) to create or update a database, and at the same time ensure that its data is as you expect. You want to avoid running any additional PowerShell scripting every time you do it, and you want to keep everything in source control, including the data. You just want to keep everything simple. Phil Factor demonstrates how it's done, by generating MERGE scripts from a stored procedure.

If you haven’t got a lot of data, or if you just have a few tables that need some static data, then you can do it easily using SQL Compare or SCA by adding a post-deployment script, which will run as part of the synchronization. Otherwise, it is just one extra script to run as part of the deployment process.

We’re going to use a technique I’ve described in a previous article, Using Custom Deployment Scripts with SQL Compare or SQL Change Automation, which involves running a series of MERGE scripts to ensure that the database has the data that you want. To create these MERGE scripts, I use a PPP (Pretty Powerful Procedure), which I’ll introduce here. Writing these scripts by hand is laborious. You are seldom likely to need to repeat the chore of creating a script from scratch, but you will need to adapt it whenever the corresponding table is altered.

So, what is the problem?

Re-engineering a database would be simple were it not for the data. However long we delay the chore in development, the problem of deploying schema changes while maintaining the data intact will always hit us when we come to deploy to UAT, staging and production. Whichever way we choose to create a new version of a database, after a substantial re-engineering, we will always run the risk of encountering difficulties in dealing with the existing data, especially if tables are split, combined or renamed.

Although I always advocate testing your data migration scripts throughout development, there are also times when you just need a working system, with data of some description, built from source control. To do this, we have alternatives:

- Build the metadata and import the data subsequently using BCP (see Recreating Databases from Scratch with SQL Change Automation).

- ‘Migrate’ the data from the old schema to the new in the affected tables as part of the build (Handling Tricky Data Migrations during State-based Database Deployments)

- Deal with the problem with pre- and post- deployment scripts, keeping DML activities firmly away from the DDL used to build the database. (Using Custom Deployment Scripts with SQL Compare or SQL Change Automation)

Usually, we only do a build once from source, and then synchronize it with the various database copies in development and testing. Generally, the modern relational database knows how to preserve the data when you issue ALTER database commands, and the synchronization tools, such as SQL Compare, know a lot of tricks to preserve the data when SQL Server can’t. However, there is always a time when this becomes impossible because there is too much uncertainty. At that point, you need to spell it out with T-SQL scripts, however you do it.

The technique I’ll describe can only be used with ‘data at rest’, which isn’t being continually updated. It is great for dev data though, so it would suit a lot of development and testing work. If you wish to use the same technique to synchronize UAT, staging or production, such as after a table split, you’ll need to ‘quiesce’ the database by cutting all other connections, and do the data merging dynamically at the moment you do the split. I’ll be explaining how to do this in another article.

The Merge

Imagine we wanted some code that ensured that a table contained just the data we wanted, no more, no less. We use a MERGE statement so that we do only the necessary insertions or deletions. Let’s take a simple example, from AdventureWorks:

```sql
SET IDENTITY_INSERT [Adventureworks2016].[HumanResources].[Department] ON;
MERGE INTO [Adventureworks2016].[HumanResources].[Department] AS target
  USING (VALUES
    (1, 'Engineering', 'Research and Development', '2008-04-30T00:00:00'),
    (2, 'Tool Design', 'Research and Development', '2008-04-30T00:00:00'),
    (3, 'Sales', 'Sales and Marketing', '2008-04-30T00:00:00'),
    (4, 'Marketing', 'Sales and Marketing', '2008-04-30T00:00:00'),
    (5, 'Purchasing', 'Inventory Management', '2008-04-30T00:00:00'),
    (6, 'Research and Development', 'Research and Development', '2008-04-30T00:00:00'),
    (7, 'Production', 'Manufacturing', '2008-04-30T00:00:00'),
    (8, 'Production Control', 'Manufacturing', '2008-04-30T00:00:00'),
    (9, 'Human Resources', 'Executive General and Administration', '2008-04-30T00:00:00'),
    (10, 'Finance', 'Executive General and Administration', '2008-04-30T00:00:00'),
    (11, 'Information Services', 'Executive General and Administration', '2008-04-30T00:00:00'),
    (12, 'Document Control', 'Quality Assurance', '2008-04-30T00:00:00'),
    (13, 'Quality Assurance', 'Quality Assurance', '2008-04-30T00:00:00'),
    (14, 'Facilities and Maintenance', 'Executive General and Administration', '2008-04-30T00:00:00'),
    (15, 'Shipping and Receiving', 'Inventory Management', '2008-04-30T00:00:00'),
    (16, 'Executive', 'Executive General and Administration', '2008-04-30T00:00:00')
  )source(DepartmentID, Name, GroupName, ModifiedDate)
  ON source.DepartmentID = target.DepartmentID
  WHEN NOT MATCHED BY TARGET THEN
    INSERT ( DepartmentID, Name, GroupName, ModifiedDate )
    VALUES ( DepartmentID, Name, GroupName, ModifiedDate )
  WHEN NOT MATCHED BY SOURCE THEN
    DELETE;
SET IDENTITY_INSERT [Adventureworks2016].[HumanResources].[Department] OFF;
```

This statement will ensure that the table has a row for every key value in the source, and no others, based on the values of the primary key. For development work, we don’t care too much if the non-key columns are different. If you also need to ensure that the values in the other columns are the same, then you would need to add a WHEN MATCHED clause that updates the values if necessary. Its general syntax is:

([WHEN MATCHED [AND <clause_search_condition>] THEN <merge_matched> ] [...n])
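As a rough sketch of what that extra clause might look like for the Department example, the statement below updates a matched row only when one of its non-key columns differs. The source is deliberately cut down to two rows, so the `WHEN NOT MATCHED BY SOURCE` branch is left out here; with the full row set you would keep it. It also assumes that none of the compared columns is nullable, because a NULL-safe comparison needs more work.

```sql
SET IDENTITY_INSERT [Adventureworks2016].[HumanResources].[Department] ON;
MERGE INTO [Adventureworks2016].[HumanResources].[Department] AS target
  USING (VALUES
    -- only two rows shown, for brevity
    (1, 'Engineering', 'Research and Development', '2008-04-30T00:00:00'),
    (2, 'Tool Design', 'Research and Development', '2008-04-30T00:00:00')
  ) source(DepartmentID, Name, GroupName, ModifiedDate)
  ON source.DepartmentID = target.DepartmentID
  -- update only the rows whose non-key values have drifted from the source
  WHEN MATCHED AND (target.Name <> source.Name
                 OR target.GroupName <> source.GroupName
                 OR target.ModifiedDate <> source.ModifiedDate) THEN
    UPDATE SET Name = source.Name,
               GroupName = source.GroupName,
               ModifiedDate = source.ModifiedDate
  WHEN NOT MATCHED BY TARGET THEN
    INSERT ( DepartmentID, Name, GroupName, ModifiedDate )
    VALUES ( DepartmentID, Name, GroupName, ModifiedDate );
SET IDENTITY_INSERT [Adventureworks2016].[HumanResources].[Department] OFF;
```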

Are you really going to hand-craft code like this for all the tables in a database? Not likely. This is the output from the PPP, called #SaveMergeStatementFromTable, which I’ll show later:

```sql
DECLARE @TheStatement NVARCHAR(MAX)
EXECUTE #SaveMergeStatementFromTable
  @tablespec='Adventureworks2016.[HumanResources].[Department]',
  @Statement=@TheStatement OUTPUT;
PRINT @TheStatement
```

It creates a MERGE script from the table you specify in @tablespec, using a multi-row VALUES clause to create a data source. It returns this script as an output variable, which you can then just execute. This will ensure that the table has the correct number of rows, with the correct values in the key fields. Is this a complete and wonderful solution? Not quite. It works very well for small tables, but the multi-row VALUES clause doesn’t scale well, and is best broken up into smaller statements. With larger tables, you eventually reach a size where you need to TRUNCATE the table and then import the data into it, using native BCP. If you wish to do a MERGE, you BCP the data into a temporary table, index it up nicely, and then use that as the source for your MERGE, rather than use a table value constructor.
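For the larger-table route, a minimal sketch might look like the following. It is not part of the PPP. The staging table name and the file path are hypothetical, and it assumes the file was exported from the same table in native format (`bcp ... out ... -n`). It uses BULK INSERT rather than the bcp command line so that the whole thing stays in T-SQL; the primary key on the staging table provides the index.

```sql
-- Hypothetical staging table; column types are assumed to match HumanResources.Department
CREATE TABLE #DepartmentStaging
  (DepartmentID SMALLINT NOT NULL PRIMARY KEY,
   Name NVARCHAR(50) NOT NULL,
   GroupName NVARCHAR(50) NOT NULL,
   ModifiedDate DATETIME NOT NULL);

-- Hypothetical path to a native-format export of the table
BULK INSERT #DepartmentStaging
  FROM 'C:\data\RawData\Department.bcp'
  WITH (DATAFILETYPE = 'native');

SET IDENTITY_INSERT [Adventureworks2016].[HumanResources].[Department] ON;
MERGE INTO [Adventureworks2016].[HumanResources].[Department] AS target
  USING #DepartmentStaging AS source
  ON source.DepartmentID = target.DepartmentID
  WHEN NOT MATCHED BY TARGET THEN
    INSERT ( DepartmentID, Name, GroupName, ModifiedDate )
    VALUES ( DepartmentID, Name, GroupName, ModifiedDate )
  WHEN NOT MATCHED BY SOURCE THEN
    DELETE;
SET IDENTITY_INSERT [Adventureworks2016].[HumanResources].[Department] OFF;
DROP TABLE #DepartmentStaging;
```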

You can also import JSON from a file or held within the script and use that as a table source. For this article, I would like to stick with the simple, and demonstrate the principles using multi-row VALUES statements that can be kept easily in source control for building development databases, or for adding static data.
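To illustrate the JSON alternative, here is a small sketch of a table source built with OPENJSON, which needs SQL Server 2016 or later and database compatibility level 130 or higher. The JSON document and the column types are assumptions made up for the illustration. The SELECT could stand in for the VALUES constructor inside the USING clause of a MERGE.

```sql
-- Illustrative JSON held within the script; it could equally be read from a file
DECLARE @json NVARCHAR(MAX) = N'[
  {"DepartmentID":1,"Name":"Engineering","GroupName":"Research and Development","ModifiedDate":"2008-04-30T00:00:00"},
  {"DepartmentID":2,"Name":"Tool Design","GroupName":"Research and Development","ModifiedDate":"2008-04-30T00:00:00"}
]';

-- Shred the JSON array into a rowset with typed columns
SELECT DepartmentID, Name, GroupName, ModifiedDate
  FROM OPENJSON(@json)
  WITH (DepartmentID SMALLINT '$.DepartmentID',
        Name NVARCHAR(50) '$.Name',
        GroupName NVARCHAR(50) '$.GroupName',
        ModifiedDate DATETIME '$.ModifiedDate');
```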

Creating a data set of MERGE statements

There are different ways of doing this. One is to use SQL from SSMS. To do this, you’d need read and write access to just the part of the file system reserved for this sort of activity. For the demo, I’ve created a directory ‘c:\data\RawData‘ to which I’ve given SQL Server access. You’ll also need to temporarily allow the use of xp_cmdshell. For those of a nervous disposition, at some point I’ll also provide a PowerShell Script that can be used instead.
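If xp_cmdshell isn’t already enabled, it can be switched on for the duration of the work with sp_configure, and switched off again afterwards. This is a sketch that assumes you have the server-level permission to change configuration options:

```sql
-- Temporarily allow xp_cmdshell
EXECUTE sp_configure 'show advanced options', 1;
RECONFIGURE;
EXECUTE sp_configure 'xp_cmdshell', 1;
RECONFIGURE;

-- ... generate and write out the MERGE scripts here ...

-- Switch it off again when finished
EXECUTE sp_configure 'xp_cmdshell', 0;
RECONFIGURE;
EXECUTE sp_configure 'show advanced options', 0;
RECONFIGURE;
```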

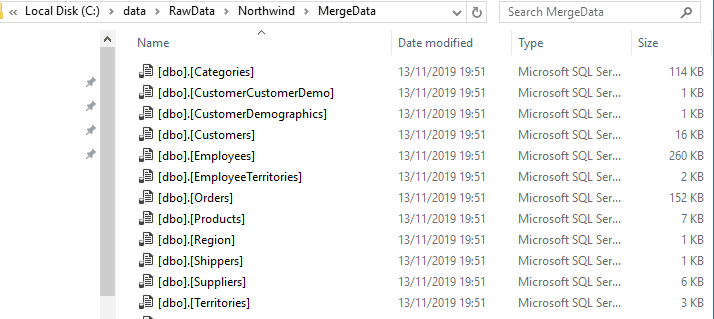

We are going to try it on the venerable NorthWind database, just out of nostalgia and because it won’t tax even the meagerest file system. The script will execute the #SaveMergeStatementFromTable PPP (so you’ll need to create it first of course; see later), providing the name of each table in turn as the source. For the sp_msforeachtable (and sp_msforeachdb) system procedure, SQL Server substitutes the name of the table or database for the placeholder (‘?‘) in the string you provide it as a parameter. The PPP produces the corresponding MERGE statement in each case (@CreatedScript). For each table, we use its MERGE statement to populate a temporary table (##myTemp) and then write it out to its own file in the specified directory (don’t miss off the trailing backslash in the path):

```sql
USE northwind
DECLARE @ourPath sysname ='C:\data\RawData\Northwind\MergeData\';
DECLARE @TheServer sysname =@@ServerName
Declare @command NVARCHAR(4000)= '
print ''Creating SQL Merge file for ?''
DECLARE @CreatedScript NVARCHAR(MAX)
EXECUTE #SaveMergeStatementFromTable @TableSpec=''?'', @Statement=@CreatedScript OUTPUT
CREATE TABLE ##myTemp (Bulkcol nvarchar(MAX))
INSERT INTO ##myTemp (Bulkcol) SELECT @CreatedScript
print ''Writing out ?''
EXECUTE xp_cmdshell ''bcp ##myTemp out '+@ourPath+'?.SQL -c -C 65001 -T -S '+@TheServer+' ''
DROP TABLE ##myTemp'
EXECUTE sp_msforeachtable @command
GO
```

Which, if successful, gives this:

If we are to incorporate this data deployment into a schema synchronization, with SQL Compare or SCA, we are going to want just one script. We also need to disable constraints at the start and enable them all when we’ve done. This requires a very slight modification to the approach, but the principle remains the same.

```sql
USE northwind
DECLARE @TotalScript NVARCHAR(MAX)
DECLARE @DestinationDatabase sysname='WestWind'
DECLARE @ourPath sysname ='C:\data\RawData\Northwind\MergeData\TotalFile.sql';
DECLARE @TheServer sysname =@@ServerName
DROP TABLE IF exists ##myTemp
CREATE TABLE ##myTemp (Bulkcol nvarchar(MAX))
DECLARE @DisableConstraints nvarchar(4000)='Print ''Disabling all table constraints''
'
SELECT @DisableConstraints=@DisableConstraints+'ALTER TABLE [WestWind].[dbo].'+QuoteName(name)+' NOCHECK CONSTRAINT ALL
' FROM sys.tables
INSERT INTO ##myTemp (BulkCol) SELECT @DisableConstraints
DECLARE @command NVARCHAR(4000)= '
print ''Creating SQL Merge file for ?''
DECLARE @CreatedScript NVARCHAR(MAX)
EXECUTE #SaveMergeStatementFromTable @TableSpec=''?'',@DestinationDatabase='''+@DestinationDatabase+''',@Statement=@CreatedScript OUTPUT
INSERT INTO ##myTemp (Bulkcol) SELECT coalesce(@CreatedScript,'''')+''
''
'
SELECT @command
EXECUTE sp_msforeachtable @command
print 'Writing out file'
DECLARE @BCPCommand NVARCHAR(4000)='bcp ##myTemp out '+@ourPath+' -c -C 65001 -T -S '+@TheServer
EXECUTE xp_cmdshell @BCPCommand
DECLARE @endCommand VARCHAR(4000)= (SELECT 'ECHO EXEC sp_msforeachtable ''ALTER TABLE ? WITH CHECK CHECK CONSTRAINT all'' >>'+@ourPath)
EXECUTE xp_cmdshell @endCommand
DROP TABLE ##myTemp
```

Oddly, when SQL Compare executes the synch script, it can, if you aren’t careful, come up with errors for code that works fine when the script is executed in SSMS. This explains why constraints are disabled table-by-table. It also explains why I avoid GO batch delimiters.

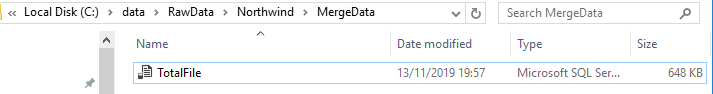

This produces a single file …

If this file is executed directly in SSMS it will check every table and do the necessary insertions and deletions to ensure that the data is the same. When appended to the synchronization script, it will do the same thing to the database you’ve just created or altered.

If you wish to deploy to a database with a different name, you can set the @DestinationDatabase optional parameter of #SaveMergeStatementFromTable to the name of the database you are creating or synchronizing, as I’ve demonstrated in the previous code. If you need a script that relies on a previous script to provide the database context (SQL Compare and SCA will do this), then you can suppress the database part of the three-part name by setting the @DontSpecifyTheDatabase optional parameter to 1.
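As a sketch of the second option, a call along these lines should produce a MERGE statement that names the table without its database, so that it runs in whatever database context the synchronization script has already set. It assumes the PPP has been created in the current session; Northwind’s Categories table is used purely as an example.

```sql
DECLARE @TheStatement NVARCHAR(MAX);
EXECUTE #SaveMergeStatementFromTable
  @TableSpec = 'Northwind.dbo.Categories', -- example source table
  @DontSpecifyTheDatabase = 1,             -- leave the database out of the target name
  @Statement = @TheStatement OUTPUT;
PRINT @TheStatement;
```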

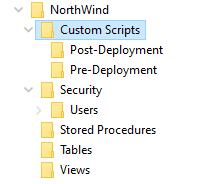

Now we have the file, we can put it in Source Control. OK, I’m pretending here because I’m not developing Northwind. All I’ve done is to create a source control directory by using SQL Compare to compare the Northwind database to an empty directory. I’ve then added the Custom Scripts directory with its Post-Deployment and Pre-Deployment subdirectories.

With a real database, you can very simply run the SQL to generate the MERGE statements whenever the tables are modified. The merge statements rely on the tables they act on having the same number of columns, with the same names, as when the statements were generated. If they do not, there will be an error. If you modify the tables in the source scripts so that one or more of the tables in the target database will be different, then the corresponding post-synchronization merge statements will need to conform to the new design.

I now put the merge script (TotalFile.sql) into the Post-Deployment directory. Once this technique is developed, the file can be written to directly by your SQL Batch file or PowerShell script by specifying the correct path to the appropriate post-deployment directory.

From then on, I can use this directory to synch the data as well as the schema. SQL Compare will append the script to the end of the build script that it generates and use it to fill the tables. Note that it is important to disable constraints before the data-filling operation and enable them afterwards. This can cause problems if you have deliberately disabled constraints in the object build scripts in source control, but we’ll ignore that problem.

The Pretty-Powerful Procedure

I’ve used a temporary procedure to do the donkey work in creating the MERGE scripts. It is one in a series of procedures I use to extract data, which I maintain at https://github.com/Phil-Factor/JSONSQLServerRoutines.

If you specify a table, this PPP creates a MERGE statement. It must be run on SQL Server 2017 or later, because it uses the STRING_AGG function. It can be altered to run on SQL Server 2016 if you use the XML concatenation trick.
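To show the difference, here is an illustrative comparison of the two ways of concatenating a list of column names. It is not the procedure’s actual code, and the table name is just an example. The first query needs SQL Server 2017 or later; the second uses the XML concatenation trick and should work on earlier versions.

```sql
-- SQL Server 2017 and later: STRING_AGG
SELECT STRING_AGG(QUOTENAME(c.name), ', ') WITHIN GROUP (ORDER BY c.column_id)
  FROM sys.columns AS c
  WHERE c.object_id = OBJECT_ID('HumanResources.Department');

-- Earlier versions: FOR XML PATH, with STUFF to remove the leading separator
SELECT STUFF(
  (SELECT ', ' + QUOTENAME(c.name)
     FROM sys.columns AS c
     WHERE c.object_id = OBJECT_ID('HumanResources.Department')
     ORDER BY c.column_id
     FOR XML PATH(''), TYPE).value('.', 'nvarchar(max)'),
  1, 2, '');
```

The `TYPE` directive with `.value()` avoids characters such as `<` and `&` being returned as XML entities.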

It creates a table source using multi-row VALUES statements, comprising the data in the table, and uses this as the source of a MERGE statement, which you can then execute on a destination table whose name you provide. As discussed, this technique is only practicable for small tables, because the use of the VALUES clause means that it degrades with scale.

The source table or query can be specified either by the ‘database.schema.table‘ format for @tablespec, or by supplying the table name, schema and database individually. You can provide a query instead, though in this case you will need to provide the name of the target table. Your query will provide the source data and must produce the correct table source, with the right columns, in the correct order, for the target table you specify.

Elaborating the system

As well as table names, you can provide queries to this routine. The query must produce a result that is the same as the destination table, excluding of course calculated columns. This effectively allows you to specify how, for example, the resulting tables for a table split can be filled. Normally, with the MERGE we ascertain the primary key from the table passed to the procedure. However, a query result can’t have a primary key. You can get around this in a couple of ways.

Firstly, you can feed a temporary table that you’ve filled with the required test data, and then add a primary key. We pass the data in the temp table to the procedure via a query using the temporary table as the source:

```sql
USE AdventureWorks2016;
SELECT TOP 100 Customer.PersonID, AccountNumber, Identity(INT, 1, 1) AS uniquifier,
  PersonType, Title, FirstName, MiddleName, LastName, Suffix, AddressLine1,
  AddressLine2, City, PostalCode, Name
  INTO #tempTable
  FROM Sales.Customer
    INNER JOIN Person.Person
      ON Customer.PersonID = Person.BusinessEntityID
    INNER JOIN Person.BusinessEntityAddress
      ON Person.BusinessEntityID = BusinessEntityAddress.BusinessEntityID
    INNER JOIN Person.Address
      ON BusinessEntityAddress.AddressID = Address.AddressID
    INNER JOIN Person.AddressType
      ON BusinessEntityAddress.AddressTypeID = AddressType.AddressTypeID;
ALTER TABLE #tempTable ALTER COLUMN PersonID INTEGER NOT NULL;
ALTER TABLE #tempTable
  ADD CONSTRAINT MyTempPKConstraint PRIMARY KEY CLUSTERED (PersonID, AccountNumber, uniquifier);
DECLARE @TheStatement NVARCHAR(MAX);
EXECUTE #SaveMergeStatementFromTable @Query = 'Select top 100 * from #tempTable',
  @Destination = 'MyTempTable', @Statement = @TheStatement OUTPUT;
PRINT @TheStatement;
DROP TABLE #tempTable;
```

Alternatively, you can specify the primary key to use, if you prefer.

```sql
USE AdventureWorks2016;
DECLARE @TheStatement NVARCHAR(MAX);
EXECUTE #SaveMergeStatementFromTable @Query = '
SELECT top 10 Customer.PersonID, AccountNumber, PersonType, Title, FirstName,
  MiddleName, LastName, Suffix, AddressLine1, AddressLine2, City, PostalCode, Name
  FROM Sales.Customer
    INNER JOIN Person.Person
      ON Customer.PersonID = Person.BusinessEntityID
    INNER JOIN Person.BusinessEntityAddress
      ON Person.BusinessEntityID = BusinessEntityAddress.BusinessEntityID
    INNER JOIN Person.Address
      ON BusinessEntityAddress.AddressID = Address.AddressID
    INNER JOIN Person.AddressType
      ON BusinessEntityAddress.AddressTypeID = AddressType.AddressTypeID',
  @Destination = 'MyTempTable',
  @PrimaryKeys='PersonID, AccountNumber',
  @Statement = @TheStatement OUTPUT;
PRINT @TheStatement;
```

Conclusion

It comes as a surprise to do a SQL Compare and find that both data and schema are the same as the source database. One only needs to prepare a new post-deployment script if the table design changes in the database, but as it is all automated, I suspect that this wouldn’t be too much trouble anyway. I used the multi-row VALUES clause because it seemed less radical than the use of JSON to hold the data, but I reckon that the use of JSON would allow larger tables.

Why not just use native BCP to stock the tables? Doing it the way I’ve demonstrated here means that a normal SQL Compare or SCA deployment will do the trick without additional scripting. Everything is held with the database scripts.