The Dangers of using Float or Real Datatypes

There is an old joke about floating-point arithmetic:

"If I cut a cake in three, each piece is 0.33 of the cake. If I stick all three pieces back together that gives me 0.99 of the cake. Where has the rest of the cake gone?"

-- "Simple. That's the little bit stuck on the knife"

Floating-point arithmetic is all about tolerating and managing approximation in order to avoid overflow errors in calculations. In the real world, we usually care about precision in numbers and will, instead, sacrifice space and resources in order to avoid overflow.

Whereas science works happily within a margin of error, precision matters in business accounting. When I was a cub programmer, I once wrote what I thought to be a perfectly suitable way of calculating the profit of stockbroker deals. In a million pounds, it was a penny or two out at the most. I was well-pleased. It used the calculations inherent in the PL/1 compiler that we used at the time to develop financial packages. I showed them the finely crafted application, and they were horrified. A penny out in a million pounds seemed to the hardboiled city traders to be reckless. They wouldn't have it. I was forced to write a binary-coded-decimal (BCD) package in assembler code that was precisely accurate.

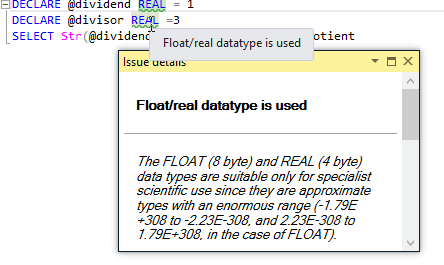

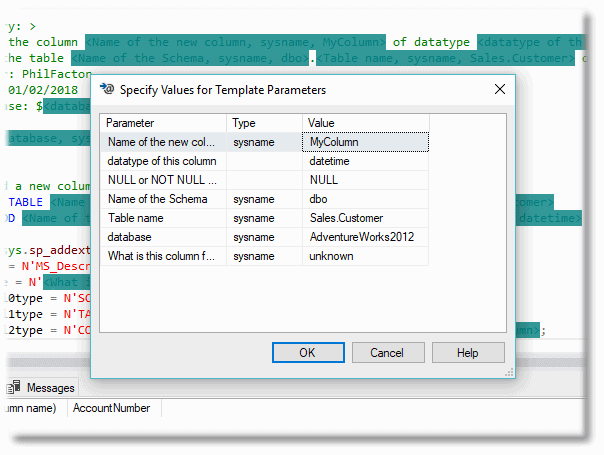

SQL Prompt has a code analysis rule (BP023) that will alert you to the use of FLOAT or REAL datatypes, due to the significant inaccuracies they can introduce to the sort of calculations that many organizations will routinely perform on their SQL Server data.

Approximate-number data types

Floating-point arithmetic was devised at a time when it was a priority to save memory while giving a versatile way of doing calculations that involved large numbers. Although it is still useful for many types of scientific calculations, particularly those that conform to the double-precision IEEE 754 standard for floating point arithmetic, it is, of necessity, a compromise. The clue is in the name of this type of data and arithmetic: 'approximate'. Floating point numbers cannot accurately represent all real numbers: additionally, floating point operations cannot accurately represent all arithmetical operations. However, the range of magnitude of the number that they can hold is far greater than is possible in other numeric types, even if it isn't always accurately held.

The problems that arise from use of floating-point calculations are due to round-off during complex calculations, and are most often seen if the data is 'ill-conditioned', so that small changes in input are magnified in the output. The inaccuracies are far less apparent with increased precision of the representation of the numbers, but they are still present, nonetheless. There are also some esoteric restrictions in the use of numbers that are valid but can't be represented in floating point, such as tan(π/2), but these are likely to excite only mathematicians.

SQL Server floating point datatypes

The SQL Standard has three floating point, approximate data types, REAL, DOUBLE PRECISION and FLOAT(n). SQL Server conforms to this except it has no DOUBLE PRECISION datatype, using FLOAT(53) instead. The FLOAT(24) and FLOAT(53) datatypes corresponds to Binary32 (Single) and Binary64 (double) in the IEEE 754 standard, and are stored in 4 and 8 bytes, and 7 and 16 digits held, accordingly. They are useful when it is important that calculations produce the same result as an application using the .NET framework that also uses IEEE 754. The double precision type is also required when numbers exceed in their magnitude the maximum allowed by the DECIMAL datatype (38 digits) though with loss in precision. Approximate numbers cannot, of course, be reliably used in any test of equality, such as a WHERE clause.

Calculations using the REAL datatype (single precision)

I will try out the REAL datatype. The FLOAT(24) datatype, or smaller, reacts the same way. The first thing to remember when experimenting with floating point numbers in SQL Server is that SSMS renders a floating point number in a way that disguises small differences. For example:

|

1

|

SELECT Convert(REAL,0.100000001490116119384765625)

|

…gives 0.1

To see more accurately what value is stored in a floating point number, you have to use the STR() function, specifying the precision you actually want.

|

1

2

3

4

5

|

/*I'm being a little unfair here, because the decimal number 0.1 is not representable

in floating-point; the exact binary representation would have a "1100" sequence

continuing endlessly:*/

DECLARE @FirstApproximate REAL = 0.1

SELECT Str(@FirstApproximate,20,16) –should be 0.100000001490116119384765625

|

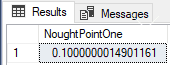

Already, this is alarming. We are, after all, dealing with data with millions of rows so small errors will stack up unless, like 'bankers rounding', they average out. That error is already close to the 'penny in a million pounds' (1/ 240000000) that I mentioned in the introduction!

Let's avoid 0.1 and put it down to a freak of floating point. How about dividing 1 by 3. Surely this couldn't be a problem?

|

1

2

3

4

5

|

DECLARE @dividend REAL = 1

DECLARE @divisor REAL =3

SELECT Str(@dividend /@divisor,20,16) as quotient

--produces 0.3333333432674408

--should be 0.3333333333333333

|

Oops. It got it wrong. OK, it is a tiny error but remember my story about the bankers. An answer is either right or it is wrong, there are no shades of grey for the men in grey suits. In business school, there is only a tick and a cross. No sign that means 'near enough'.

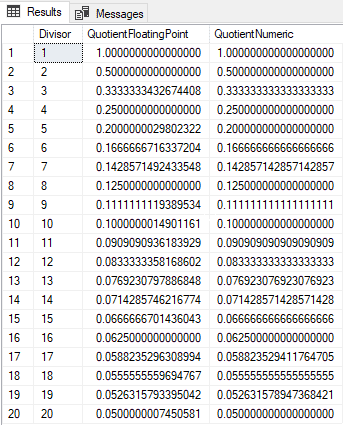

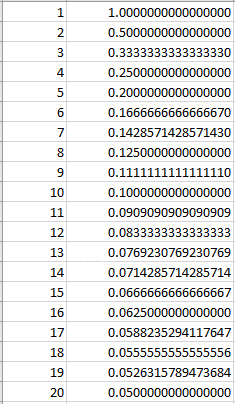

A simple test is to divide one by numbers from one to twenty. What could go wrong?

We can store the results of the floating point and numeric calculation, both converted to strings and we then compare the strings (be warned that STR() can put in a leading space which makes for a complication).

|

1

2

3

4

5

6

7

8

9

10

11

12

13

14

15

|

DECLARE @divisor REAL, @dividend REAL = 1

DECLARE @FloatingPointCalculations TABLE (Divisor INT, QuotientFloatingPoint VARCHAR(20), QuotientNumeric VARCHAR(20));

DECLARE @ii INT = 1;

DECLARE @iiMax INT = 20;

WHILE (@ii <= @iiMax)

BEGIN

SELECT @divisor = @ii;

INSERT INTO @FloatingPointCalculations (Divisor, QuotientFloatingPoint,

QuotientNumeric)

SELECT @ii AS divisor, Str(@Dividend / @divisor, 20, 16) AS QuotientFloatingPoint,

Convert(VARCHAR(20), 1.0000000 / @ii) AS QuotientNumeric;

SELECT @ii += 1;

END;

SELECT The.Divisor, The.QuotientFloatingPoint, The.QuotientNumeric

FROM @FloatingPointCalculations AS The;

|

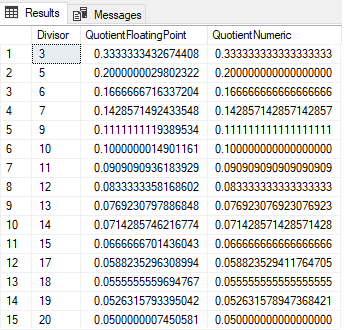

Now, what if we list the rows where the numbers don't match?

|

1

2

3

|

SELECT The.Divisor, The.QuotientFloatingPoint, The.QuotientNumeric

FROM @FloatingPointCalculations The

WHERE Left(LTrim(The.QuotientFloatingPoint),16)<> Left(LTrim(The.QuotientNumeric),16)

|

Ugh! Only where the divisor was 1, 2, 4, 8 or 16 was there a correct result.

In case you are hoping that somehow the float was accurate, and the numeric version wasn't, here is the numeric quotient calculated in Excel:

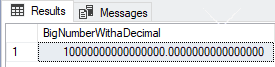

Calculations using FLOAT(25) or over (double precision)

If you use double precision floating point, FLOAT(25) or over, the tests are all passed, because of the STR() function allows a maximum of sixteen places to the right of the decimal point. If there are more than 16 then the result is truncated. The double precision datatype has sixteen digits, whereas the single precision datatype has seven. You'll also have seen that the single precision datatype gets the first seven digits right. Likewise, the double-precision gets the first sixteen digits right. We can just broaden the number to see the approximation.

|

1

2

|

DECLARE @FirstApproximate FLOAT(53) = 10000000000000000.1

SELECT Str(@FirstApproximate,40,16) AS BigNumberWithaDecimal

|

That fractional part has vanished, hasn't it? It is probably only a tiny difference, but in some calculations, it can cause problems.

Conclusion

Floating point arithmetic is fast and economical on storage but provides an approximate result. It is suitable for well-conditioned scientific applications but not for financial calculations, which demands that a number is either 'right' or 'wrong'. It also has the extra disadvantage in a database because you cannot reliably and consistently test two approximate numbers for equality.

It is not correct to say that you should never use floating point numbers in SQL datatypes or in arithmetic. Approximate types are there in the SQL standard for a purpose. I would nowadays always stick with the double precision floating-point datatype in SQL Server, where there is a suitable requirement. They are great for such purposes as modelling weather systems, or plotting trajectories, but not for the types of calculations for which the average organization is likely to use a database.

If you spot erroneous use of these types, then you should switch to a suitable DECIMAL/NUMERIC type instead. If you know you need floating point arithmetic and can explain why, then you probably know enough to avoid the pitfalls of floating point, such as the one that occurred in the famous Patriot missile failure that led directly to 28 deaths.

Loading comments...