Choosing Between Table Variables and Temporary Tables (ST011, ST012)

People can, and do, argue a great deal about the relative merits of table variables and temporary tables. Sometimes, as when writing functions, you have no choice; but when you do you’ll find that both have their uses, and it's easy to find examples where either one is quicker. In this article, I'll explain the main factors involved in choosing one or the other, and demonstrate a few simple 'rules' to get the best performance.

Assuming you follow the basic rules-of-engagement, then you should consider table variables as a first choice when working with relatively small data sets. They are easier to work with and they trigger fewer recompiles in the routines in which they're used, compared to using temporary tables. Table variables also require fewer locking resources as they are 'private' to the process and batch that created them. SQL Prompt implements this recomendation as a code analysis rule, ST011 – Consider using table variable instead of temporary table.

If you are doing more complex processing on temporary data, or need to use more than reasonably small amounts of data in them, then local temporary tables are likely to be a better choice. SQL Code Guard includes a code analysis rule, based on his recommendation, ST012 – Consider using temporary table instead of table variable, but it's not currently implemented in SQL Prompt.

Pros and cons of table variables and temporary tables

Table variables tend to get 'bad press', because queries that use them occasionally result in very inefficient execution plans. However, if you follow a few simple rules, they are a good choice for intermediate 'working' tables, and for passing results between routines, where the data sets are small and the processing required is relatively straightforward.

Table variables are very simple to use, mainly because they are "zero maintenance". They are scoped to the batch or routine in which they are created, and are removed automatically once it completes execution, and so using them within a long-lived connection doesn't risk 'resource hogging' problems in tempdb. If a table variable is declared in a stored procedure, it is local to that stored procedure and cannot be referenced in a nested procedure There are also no statistics-based recompiles for table variables and you can't ALTER one, so routines that use them tend to incur fewer recompiles than those that use temporary tables. They are also not fully logged, so creating and filling them is faster and requires less space in the transaction log. When they are used in stored procedures, there is less contention on system tables, under conditions of high concurrency. In short, it is easier to keep things neat and tidy.

When working with relatively small data sets, they are faster than the comparable temporary table. However, as the number of rows increases, beyond approximately 15K rows, but varying according to context, then you can run into difficulties, mainly due to their lack of support for statistics. Even the indexes that enforce PRIMARY KEY and UNIQUE constraints on table variables do not have statistics. Therefore, the optimizer will use a hard-coded estimation of 1 row returned from a table variable, and so will tend to choose operators optimal for working with small data sets (such as Nested Loops operator for joins). The more rows in the table variable, the larger the discrepancies between estimation and reality, and the more inefficient become the optimizer's plan choices. The resulting plan is sometimes frightful.

The experienced developer or DBA will be on the lookout for this sort of problem, and be ready to add the OPTION (RECOMPILE) query hint to the statement that uses the table variable. When we submit a batch containing a table variable, the optimizer first compiles the batch at which point the table variable is empty. When the batch starts executing, the hint will cause only that single statement to recompile, at which point the table variable will be populated and the optimizer can use the real row count to compile a new plan for that statement. Sometimes, but rarely, even this won't help. Also, over-reliance on this hint will negate to some extent the advantage that table variables have of causing fewer recompiles than temporary tables.

Secondly, certain index limitations with table variables become more of a factor when dealing with large data sets. While you can now use the inline index creation syntax to create non-clustered indexes on a table variable, there are some restrictions, and there are still no associated statistics.

Even with relatively modest row counts, you can encounter query performance issues if you try to execute a query that is a join, and you forget to define a PRIMARY KEY or UNIQUE constraint on the column you are using for the join. Without the metadata that they provide, the optimizer has no knowledge of the logical order of the data, or whether the data in the join column contains duplicate values, and will likely choose inefficient join operations, resulting in slow queries. If you're working with a table variable heap, then you can only use it a simple list that is likely to be processed in a single gulp (table scan). If you combine both use of the OPTION (RECOMPILE) hint, for accurate cardinality estimations, and a key on the join column to give the optimizer useful metadata, then for smaller data sets you can often achieve query speeds similar to or better than using a local temporary table.

Once row counts increase beyond a table variable's comfort zone, or you need to do more complex data processing, then you're best switching to use temporary tables. Here, you have the full options available to you for indexing, and the optimizer will have the luxury of using statistics for each of these indexes. Of course, the downside is that temporary tables come with a higher maintenance cost. You need to make sure to clear up after yourself, to avoid tempdb congestion. If you alter a temporary table, or modify the data in them, you may incur recompiles of the parent routine.

Temporary tables are better when there is a requirement for a large number of deletions and insertions (rowset sharing). This is especially true if the data must be entirely removed from the table, as only temporary tables support truncation. The compromises in the design of table variables, such as the lack of statistics and recompiles, work against them if the data is volatile.

When it pays to use table variables

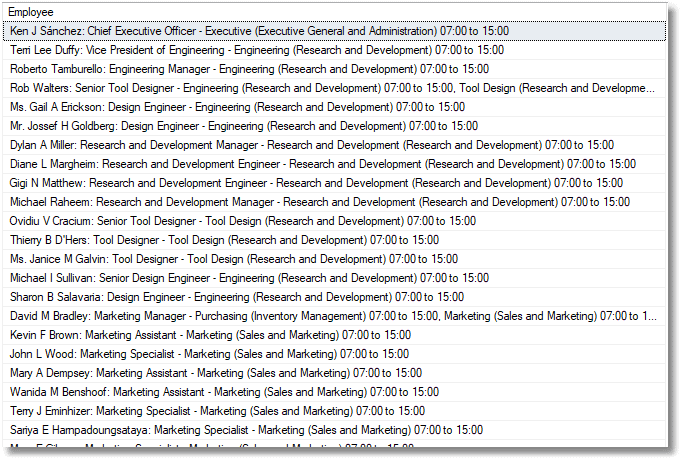

We'll start with an example where a table variable is ideal, and results in better performance. We will produce a list of employees for Adventureworks, which department they work in, and the shifts they work. We're dealing with a small data set (291 rows).

We will put the results in a second temporary table, as if we were passing the result on to the next batch. Listing 1 shows the code.

|

1

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

17

18

19

20

21

22

23

24

25

26

27

28

29

30

31

32

33

34

35

36

37

38

39

40

41

42

43

44

45

46

47

48

49

50

51

52

53

54

55

56

57

58

59

60

61

62

63

64

65

66

67

68

69

70

71

72

73

74

75

76

77

78

79

80

81

82

83

84

85

86

87

88

89

90

91

92

93

|

USE AdventureWorks2016;

--initialise out timer

DECLARE @log TABLE (TheOrder INT IDENTITY(1,1),

WhatHappened varchar(200), WHENItDid Datetime2 DEFAULT GETDATE())

CREATE TABLE #employees

(Employee NATIONAL CHARACTER VARYING(500) NOT NULL);

----start of timing

INSERT INTO @log(WhatHappened)

SELECT 'Starting My_Section_of_code'--place at the start

--start by using a table variable for workpad

DECLARE @WorkPad TABLE

(NameOfEmployee NATIONAL CHARACTER VARYING(100) NOT NULL,

BusinessEntityID INT PRIMARY KEY NOT NULL,

NationalIDNumber NATIONAL CHARACTER VARYING(15) NOT NULL);

INSERT INTO @WorkPad

(NameOfEmployee, BusinessEntityID, NationalIDNumber)

SELECT Coalesce(Person.Title + ' ', '') + Person.FirstName + ' '

+ Coalesce(Person.MiddleName + ' ', '') + Person.LastName

+ ': ' + Coalesce(Person.Suffix, '') + Employee.JobTitle,

Employee.BusinessEntityID, Employee.NationalIDNumber

FROM HumanResources.Employee

INNER JOIN Person.Person

ON Person.BusinessEntityID = Employee.BusinessEntityID;

INSERT INTO #Employees(Employee)

SELECT TheList.NameOfEmployee + ' - '

+ Coalesce(

Stuff(

(SELECT ', ' + Department.Name + ' ('

+ Department.GroupName + ') '

+ Convert(CHAR(5), Shift.StartTime) + ' to '

+ Convert(CHAR(5), Shift.EndTime)

FROM HumanResources.EmployeeDepartmentHistory

INNER JOIN HumanResources.Department

ON Department.DepartmentID = EmployeeDepartmentHistory.DepartmentID

INNER JOIN HumanResources.Shift

ON Shift.ShiftID = EmployeeDepartmentHistory.ShiftID

WHERE EmployeeDepartmentHistory.BusinessEntityID =

TheList.BusinessEntityID

FOR XML PATH(''), TYPE).value('.', 'varchar(max)'), 1,2,''),'?')

AS Department

FROM @WorkPad TheList;

INSERT INTO @log(WhatHappened) SELECT 'The use of a Table Variable took '--where the routine you want to time ends

--now use a temp table for workpad instead

CREATE TABLE #WorkPad

(NameOfEmployee NATIONAL CHARACTER VARYING(100) NOT NULL,

BusinessEntityID INT PRIMARY KEY NOT NULL,

NationalIDNumber NATIONAL CHARACTER VARYING(15) NOT NULL);

INSERT INTO #WorkPad

(NameOfEmployee, BusinessEntityID, NationalIDNumber)

SELECT Coalesce(Person.Title + ' ', '') + Person.FirstName + ' '

+ Coalesce(Person.MiddleName + ' ', '') + Person.LastName

+ ': ' + Coalesce(Person.Suffix, '') + Employee.JobTitle,

Employee.BusinessEntityID, Employee.NationalIDNumber

FROM HumanResources.Employee

INNER JOIN Person.Person

ON Person.BusinessEntityID = Employee.BusinessEntityID;

INSERT INTO #Employees(Employee)

SELECT TheList.NameOfEmployee + ' - '

+ Coalesce(

Stuff(

(SELECT ', ' + Department.Name + ' ('

+ Department.GroupName + ') '

+ Convert(CHAR(5), Shift.StartTime) + ' to '

+ Convert(CHAR(5), Shift.EndTime)

FROM HumanResources.EmployeeDepartmentHistory

INNER JOIN HumanResources.Department

ON Department.DepartmentID = EmployeeDepartmentHistory.DepartmentID

INNER JOIN HumanResources.Shift

ON Shift.ShiftID = EmployeeDepartmentHistory.ShiftID

WHERE EmployeeDepartmentHistory.BusinessEntityID =

TheList.BusinessEntityID

FOR XML PATH(''), TYPE).value('.', 'varchar(max)'), 1,2,''),'?')

AS Department

FROM #WorkPad TheList;

INSERT INTO @log(WhatHappened)

SELECT 'The use of a temporary Table took '--where the routine you want to time ends

DROP TABLE #Employees

DROP TABLE #WorkPad

/* now we see how long each took. */

SELECT ending.WhatHappened, DateDiff(ms, starting.WHENItDid, ending.WHENItDid) AS ms

FROM @log AS starting

INNER JOIN @log AS ending

ON ending.TheOrder = starting.TheOrder + 1;

--list out all the timings

|

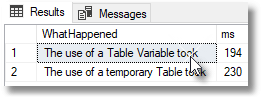

And here is a typical result on my slow test machine:

Using a temporary table is consistently slower, though individual runs can vary quite a lot.

The problems of scale and forgetting to provide a key or a hint

What's the performance like if we join two table variables? Let's try it out. For this example, we need two simple tables, one with all the common words in the English language (CommonWords), and the other with a list of all the words in Bram Stoker's 'Dracula' (WordsInDracula). The TestTVsAndTTs download includes the script to create these two tables and populate each one from its associated text file. There are 60,000 common words, but Bram Stoker only used 10,000 of them. The former is well outside the break-even point, where one starts to prefer temporary tables.

We'll use four simple, outer join queries, testing the result for NULL values, to find out the common words that aren't in Dracula, common words that are in Dracula, words in Dracula that are uncommon, and finally another query to find common words in Dracula, but joining in the opposite direction. You'll see the queries shortly, when I show the code for the Test Rig.

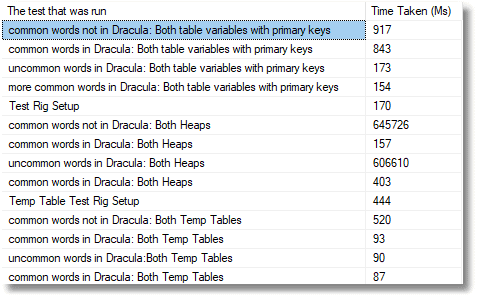

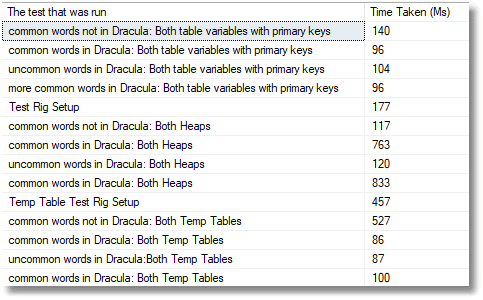

Following are the results of the initial test runs. In the first run, both table variables have primary keys, and in the second they are both heaps, just to see if I'm exaggerating the problems of not declaring an index in a table variable. Finally, we run the same queries with temporary tables. All tests were run, deliberately, on a slow development server, for purposes of illustration; you will get very different results with a production server.

The results show that when the table variables are heaps, you run the risk of the query running for ten minutes rather than 100 milliseconds. These give a great example of the ghastly performance you can experience if you don't know the rules. Even when we use primary keys, though, the number of rows we're dealing with mean that using temporary tables is now twice as fast.

I won't delve into the details of the execution plans behind these performance metrics, other than to give a few broad explanations of the main differences. For the temp table queries the optimizer, armed with a full knowledge of cardinality and the metadata from the primary key constraints, chooses an efficient Merge Join operator to perform the join operation. For the tables variable with primary keys, the optimizer knows the order of the rows in join column, and that they contain no duplicates, but assumes it's only dealing with one row, and so chooses instead a Nested Loops join. Here, it scans one table and then for each row returned performs individual seeks of the other table. This becomes less efficient the larger the data sets, and is especially bad in the cases where it scans the CommonWords table variable, because it results in over 60K seeks of the Dracula table variable. The Nested Loops join reaches 'peak inefficiency' for two, ten-minute queries using table variable heaps, because it entails thousands of table scans of CommonWords. Interestingly, the two "common words in Dracula" queries perform much better and this is because, for those two, the optimizer chose instead a Hash Match join.

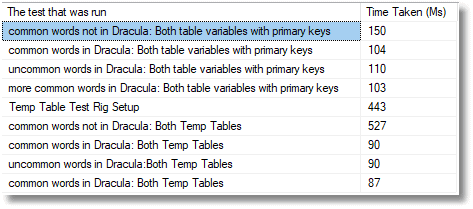

Overall, the temp tables look to be the best choice, but we're not finished yet! Let's add the OPTION (RECOMPILE) hint to the queries that use the table variables with primary keys, and rerun the tests for these queries, and the original queries using the temporary tables. We leave out the poor heaps for the time being.

As you can see, the performance advantage of the temporary table vanishes. Armed with correct row counts and ordered inputs, the optimizer chooses the far more efficient Merge Join.

What, you wonder, would happen if you gave those poor heaps the OPTION (RECOMPILE) hint too? Lo, the story changes for them so that all three timings are much closer.

Interestingly, the two "common words in Dracula" queries that were fast even on heaps are now much slower. Armed with the correct row counts, the optimizer changes its strategy, but because it still has none of the useful metadata available to it when we define constraints and keys, it makes a bad choice. It scans the CommonWords heap then attempts a "partial aggregation", estimating that it will aggregate down from 60K rows to a few hundred. It doesn't know that there are no duplicates, so in fact it doesn't aggregate down at all, and the aggregation and subsequent join spill to tempdb.

The Test Rig

Please note that this is the test rig in its final form showing roughly equal performance for the three different types of table. You will need to remove the OPTION (RECOMPILE) hints to get back to the original.

|

1

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

17

18

19

20

21

22

23

24

25

26

27

28

29

30

31

32

33

34

35

36

37

38

39

40

41

42

43

44

45

46

47

48

49

50

51

52

53

54

55

56

57

58

59

60

61

62

63

64

65

66

67

68

69

70

71

72

73

74

75

76

77

78

79

80

81

82

83

84

85

86

87

88

89

90

91

92

93

94

95

96

97

98

99

100

101

102

103

104

105

106

107

108

109

110

111

112

113

114

115

116

117

118

119

120

121

122

123

124

125

126

127

128

129

130

131

132

133

134

135

136

137

138

139

140

141

142

143

144

145

146

147

148

149

150

151

152

153

154

155

156

157

158

159

160

161

162

163

164

165

166

167

168

169

170

171

172

173

174

175

176

177

178

179

180

181

182

183

184

185

186

187

188

189

|

USE PhilFactor;

--create the working table with all the words from Dracula in it

DECLARE @WordsInDracula TABLE

(word VARCHAR(40) NOT NULL PRIMARY KEY CLUSTERED);

INSERT INTO @WordsInDracula(word) SELECT WordsInDracula.word FROM dbo.WordsInDracula;

--create the other working table with all the common words in it

DECLARE @CommonWords TABLE

(word VARCHAR(40) NOT NULL PRIMARY KEY CLUSTERED);

INSERT INTO @CommonWords(word) SELECT commonwords.word FROM dbo.commonwords;

--create a timing log

DECLARE @log TABLE

(TheOrder INT IDENTITY(1, 1),

WhatHappened VARCHAR(200),

WhenItDid DATETIME2 DEFAULT GetDate());

----start of the timing (never reported)

INSERT INTO @log(WhatHappened) SELECT 'Starting My_Section_of_code';

--place at the start

---------------section of code using table variables

--first timed section of code using table variables

SELECT Count(*) AS [common words not in Dracula]

FROM @CommonWords AS c

LEFT OUTER JOIN @WordsInDracula AS d

ON d.word = c.word

WHERE d.word IS NULL

OPTION(RECOMPILE);

INSERT INTO @log(WhatHappened)

SELECT 'common words not in Dracula: Both table variables with primary keys ';

--where the routine you want to time ends

--Second timed section of code using table variables

SELECT Count(*) AS [common words in Dracula]

FROM @CommonWords AS c

LEFT OUTER JOIN @WordsInDracula AS d

ON d.word = c.word

WHERE d.word IS NOT NULL

OPTION(RECOMPILE);

INSERT INTO @log(WhatHappened)

SELECT 'common words in Dracula: Both table variables with primary keys ';

--where the routine you want to time ends

--third timed section of code using table variables

SELECT Count(*) AS [uncommon words in Dracula ]

FROM @WordsInDracula AS d

LEFT OUTER JOIN @CommonWords AS c

ON d.word = c.word

WHERE c.word IS NULL

OPTION(RECOMPILE);

INSERT INTO @log(WhatHappened)

SELECT 'uncommon words in Dracula: Both table variables with primary keys ';

--where the routine you want to time ends

--last timed section of code using table variables

SELECT Count(*) AS [common words in Dracula ]

FROM @WordsInDracula AS d

LEFT OUTER JOIN @CommonWords AS c

ON d.word = c.word

WHERE c.word IS NOT NULL

OPTION(RECOMPILE);

INSERT INTO @log(WhatHappened)

SELECT 'more common words in Dracula: Both table variables with primary keys ';

--where the routine you want to time ends

---------------section of code using heap variables

DECLARE @WordsInDraculaHeap TABLE(word VARCHAR(40) NOT NULL);

INSERT INTO @WordsInDraculaHeap(word) SELECT WordsInDracula.word FROM dbo.WordsInDracula;

DECLARE @CommonWordsHeap TABLE(word VARCHAR(40) NOT NULL);

INSERT INTO @CommonWordsHeap(word) SELECT commonwords.word FROM dbo.commonwords;

INSERT INTO @log(WhatHappened) SELECT 'Test Rig Setup ';

--where the routine you want to time ends

--first timed section of code using heap variables

SELECT Count(*) AS [common words not in Dracula]

FROM @CommonWordsHeap AS c

LEFT OUTER JOIN @WordsInDraculaHeap AS d

ON d.word = c.word

WHERE d.word IS NULL

OPTION(RECOMPILE);

INSERT INTO @log(WhatHappened) SELECT 'common words not in Dracula: Both Heaps ';

--where the routine you want to time ends

--second timed section of code using heap variables

SELECT Count(*) AS [common words in Dracula]

FROM @CommonWordsHeap AS c

LEFT OUTER JOIN @WordsInDraculaHeap AS d

ON d.word = c.word

WHERE d.word IS NOT NULL

OPTION(RECOMPILE);

INSERT INTO @log(WhatHappened) SELECT 'common words in Dracula: Both Heaps ';

--where the routine you want to time ends

--third timed section of code using heap variables

SELECT Count(*) AS [uncommon words in Dracula ]

FROM @WordsInDraculaHeap AS d

LEFT OUTER JOIN @CommonWordsHeap AS c

ON d.word = c.word

WHERE c.word IS NULL

OPTION(RECOMPILE);

INSERT INTO @log(WhatHappened) SELECT 'uncommon words in Dracula: Both Heaps ';

--where the routine you want to time ends

--last timed section of code using heap variables

SELECT Count(*) AS [common words in Dracula ]

FROM @WordsInDraculaHeap AS d

LEFT OUTER JOIN @CommonWordsHeap AS c

ON d.word = c.word

WHERE c.word IS NOT NULL

OPTION(RECOMPILE);

INSERT INTO @log(WhatHappened) SELECT 'common words in Dracula: Both Heaps ';

--where the routine you want to time ends

---------------section of code using Temporary tables

CREATE TABLE #WordsInDracula (word VARCHAR(40) NOT NULL PRIMARY KEY);

INSERT INTO #WordsInDracula(word) SELECT WordsInDracula.word FROM dbo.WordsInDracula;

CREATE TABLE #CommonWords (word VARCHAR(40) NOT NULL PRIMARY KEY);

INSERT INTO #CommonWords(word) SELECT commonwords.word FROM dbo.commonwords;

INSERT INTO @log(WhatHappened) SELECT 'Temp Table Test Rig Setup ';

--where the routine you want to time ends

--first timed section of code using Temporary tables

SELECT Count(*) AS [common words not in Dracula]

FROM #CommonWords AS c

LEFT OUTER JOIN #WordsInDracula AS d

ON d.word = c.word

WHERE d.word IS NULL;

INSERT INTO @log(WhatHappened) SELECT 'common words not in Dracula: Both Temp Tables ';

--where the routine you want to time ends

--Second timed section of code using Temporary tables

SELECT Count(*) AS [common words in Dracula]

FROM #CommonWords AS c

LEFT OUTER JOIN #WordsInDracula AS d

ON d.word = c.word

WHERE d.word IS NOT NULL;

INSERT INTO @log(WhatHappened) SELECT 'common words in Dracula: Both Temp Tables ';

--where the routine you want to time ends

--third timed section of code using Temporary tables

SELECT Count(*) AS [uncommon words in Dracula ]

FROM #WordsInDracula AS d

LEFT OUTER JOIN #CommonWords AS c

ON d.word = c.word

WHERE c.word IS NULL;

INSERT INTO @log(WhatHappened) SELECT 'uncommon words in Dracula:Both Temp Tables ';

--where the routine you want to time ends

--last timed section of code using Temporary tables

SELECT Count(*) AS [common words in Dracula ]

FROM #WordsInDracula AS d

LEFT OUTER JOIN #CommonWords AS c

ON d.word = c.word

WHERE c.word IS NOT NULL;

INSERT INTO @log(WhatHappened) SELECT 'common words in Dracula: Both Temp Tables '; --where the routine you want to time ends

DROP TABLE #WordsInDracula;

DROP TABLE #CommonWords;

SELECT ending.WhatHappened AS [The test that was run],

DateDiff(ms, starting.WhenItDid, ending.WhenItDid) AS [Time Taken (Ms)]

FROM @log AS starting

INNER JOIN @log AS ending

ON ending.TheOrder = starting.TheOrder + 1;

--list out all the timings

|

Listing 2

Conclusions

There is nothing reckless about using table variables. They give a better performance when used for the purposes for which they were intended, and they do their own mopping-up. At a certain point, the compromises that give them a better performance (not triggering recompiles, not providing statistics, no rollback, no parallelism) become their downfall.

Often, the SQL Server pundit will give sage advice about the size of result that will cause problems for a table variable. The results I've shown you in this article will suggest to you that this oversimplifies the issues. There are two important factors: if you have a result of over, let us say, 1000 rows (and this figure depends on context) then you need to have a PRIMARY KEY or UNIQUE key for any queries that join to a table variable. At a certain point, you will also need to trigger a recompile to get a decent execution plan, which has its own overhead.

Even then, performance can suffer badly, especially if you're performing more complex processing, because the optimizer still has no access to statistics, and so no knowledge of the selectivity of any query predicate. In such cases, you'll need to switch to using temporary tables.

Further Reading

- http://sqlblog.com/blogs/paul_white/archive/2012/08/15/temporary-tables-in-stored-procedures.aspx

- https://blogs.msdn.microsoft.com/sqlprogrammability/2007/01/18/11-0-temporary-tables-table-variables-and-recompiles/

- http://www.sqlservercentral.com/blogs/bit-barbarian/2016/02/02/should-i-use-optionrecompile/

Loading comments...