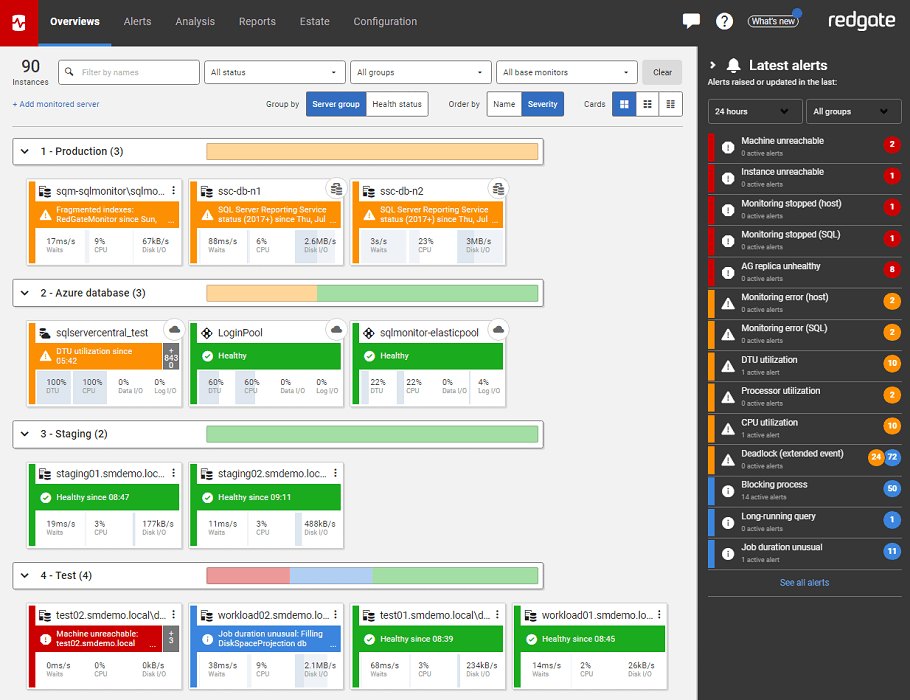

Monitoring Distributed SQL Servers using SQL Monitor

If your SQL Server estate is large, or installed across different, isolated networks, or both, then you need a distributed monitoring solution. It is very easy to set up multiple base monitors in SQL Monitor, and then to manage the monitoring, alerting and troubleshooting for your entire SQL Server estate, from a single web interface.

Many organizations are experiencing rapid expansion and diversification of their SQL Server estate to include Cloud, VMWare and other platforms, alongside traditional on-premise servers. This article explains the basic architecture and components of SQL Monitor, and then how to set up a distributed monitoring solution. It will allow a single administrative team to manage and monitor activity on all SQL Servers, across diverse networks and infrastructures, and so to identify resource bottlenecks, query performance problems, and other common errors and issues across the entire estate, from a single web interface.

SQL Monitor Basic Architecture

In a typical, basic SQL Monitor architecture, we’d have a dedicated monitoring server, on which we install the SQM Monitoring Service, a.k.a. Base Monitor. This is a normal Windows service that connects remotely to each monitored server and collects the alerts and diagnostic data, using a combination of WMI and T-SQL. It stores all this data in a SQL Monitor repository.

SQM raises an alert whenever an ‘event’ occurs (such as a SQL Server error or a job failure) or whenever some pre-defined, but configurable, threshold value for some alert metric (such as disk space usage, or query duration) is exceeded. When you view alert details in the SQL Monitor web interface, the web application asks for data, the base monitor retrieves it from the repository. Users can drill into the list of all the alerts raised on the monitored servers, along with the data collected for that alert, as well as various snapshots, aggregations and summaries of resource usage and query activity on the server around the time of the alert, helping us to determine quickly what might have caused it.

Figure 1 shows a basic 3-tier SQL Monitor architecture, consisting of the SQL Monitor Web Interface, the Base Monitor and the monitored servers.

Figure 1

Distributed monitoring of servers on different networks

If you try to use the architecture in Figure 1 to monitor servers in isolated networks, then it will introduce some complexities, and potentially some security issues. For example, the server estate may be geographically dispersed, or co-located but separated by security protocols, or the estates may be distributed across different infrastructure, including on-premise production servers, but with certain business functions now using cloud-based SQL Servers, and with some development and tests servers running on virtualized systems, and so on.

Figure 2 shows an example where the estate includes five on-premise servers in New York, three in Tokyo, and four servers hosted on Azure, although the dashed lines could represent any securely-isolated network.

The SQL Monitor icon at the top represents a single set of SQL Monitor components i.e. we have one base monitor (and associated repository) responsible for connecting to, and collecting data from, all servers.

Figure 2

This architecture presents several challenges. Opening ports from a single SQL Monitor installation, into the networks at each location, represents an increased ‘surface area’ for attack. Also, if the single Windows service account for the base monitor is compromised, the attacker would have access to servers at all locations. In addition, if you’re using SQL Monitor for servers that are geographically remote, then latency and bandwidth constraints could reduce the performance and reliability of the monitoring solution. Even when this approach can be delivered reliably and securely (such as by using VPNs), it is typically time-consuming to implement, and usually requires assistance from network and security teams.

One solution might be to install a complete set of SQL Monitor components in each location, as show in Figure 3.

Figure 3

This is more secure, and more scalable if the number of servers at each location is high. However, now you need to log in to multiple accounts, and will need to open a different UI for each location.

The best solution is to exploit SQL Monitor’s support for multiple base monitors, all connected to a single User Interface. In Figure 4, each set of servers is assigned to that network’s base monitor, and associated SQL Monitor repository, and each base monitor sends it’s monitoring data to a single web server and UI.

Figure 4

Now we can monitor the entire estate using a single SQL Monitor interface. This architecture is more secure, since we only need to open one port into each location. It removes bandwidth and connection-related issues from the diagnostic data collection process. It is scalable; just add a base monitor per 200 servers for best performance. Finally, it is no more expensive to set up, in terms of license cost, since SQL Monitor is licensed purely in terms of the number of “entities” monitored, and we can simply split the license between multiple base monitors.

How to set up distributed monitoring

To setup Multiple Base Monitors, just go to the Configuration | Base Monitor Connection page in the SQL Monitor UI. On that page, you can add additional Base Monitors.

Figure 5

When you have more than one Base Monitor, one will be designated as the Primary Base Monitor (by default the first one you install, but you can change this), and it will handle the authentication of SQL monitor users. If the Primary Base Monitor cannot be reached, monitoring will continue as normal but you will not be able to log in to the web front-end until you reestablish a connection to it. The Primary Base Monitor also handles all reporting, including managing report definitions and mailing generated reports.

Once the connections are saved, to setup the monitored servers, go to Configuration | Monitored servers. On that page, you can switch between Base Monitors and assign each of the monitored servers to a specific Base Monitor, as shown in Figure 6.

Figure 6

There are some basic requirements for setting up multiple Base Monitors. For example, all base monitors, and the Web Server, should be running the same version of SQL Monitor; you’ll see an error if you try to connect from a web server to a base monitor that is a different version. You can find other troubleshooting advice in the documentation, as well as guidance on how to upgrade SQL Monitor, when running multiple base monitors.

Monitoring large estates using distributed monitoring

A classic trap that catches some monitoring solutions, especially manual solutions, is that as they grow, they become heavyweight, and intrusive, collecting an ever-growing stream of highly detailed metrics. They install agents that run complex, heavyweight queries, or set trace flags to capture ‘verbose output’, or request ‘specialist’ metrics that SQL Server can collect only by scanning every page in the source table. While a DBA might find it useful to have in-depth data for every metric, it’s only manageable when monitoring a small number of databases; this monitoring strategy doesn’t scale. Also, it increases substantially the danger of the observer effect, where the act of collecting performance data from a target server affects the performance of that server.

By contrast, SQM has been designed and tuned to minimize the impact of diagnostic data collection on the monitored servers, and to allow SQM to scale smoothly to monitor large number of SQL Server instances. It installs no agents on the target servers. By default, it collects a carefully curated set of data required to catch and diagnose common SQL Server-related problems, rather than gathering large volumes of data which is unlikely to be of value. It doesn’t collect detailed profiling data, by default, but does allow you to turn it on for limited periods as required. If a metric is known to have a high collection overhead (such as index fragmentation, for example), SQM collects it only infrequently, but again allows you to configure this.

Overall, you can expect SQM to collect only around 150-450 MB of data per day for a typical server hosting a single SQL Server instance, depending on the number of databases per instance, and the workload running on the server. SQM stores the text of SQL statements, the details of blocking processes, and query plans, as well as the alerts. If the server is subject to many requests per second, and especially if the workload includes frequent “large-scale”, reporting style queries, then the volume of data collected will be higher.

All of this means that even the single base monitor architecture, as described in figure 1, will typically scale to around 200 servers. Beyond that, the speed and responsiveness of SQL Monitor will start to degrade, due to the bottleneck of having a single service orchestrate the collection of data from so many servers, and write all the data to a single SQL Monitor repository.

Of course, If you have more than 200 servers to monitor, then they’re likely to be in a network configuration that makes multiple base monitors more appropriate anyway, but a useful side-effect is that you can now scale smoothly to monitor very large estates, with up to 200 servers in each network location.

Conclusions

If your SQL Server estate is distributed across different, isolated networks, or your server estate is very large (>200 servers), or both, then you need a distributed monitoring solution. It is very easy to set up multiple base monitors in SQL Monitor, and it will allow you to collect all of the diagnostic data you need, securely, and then to manage the monitoring and alerting and performance troubleshooting for your entire SQL Server estate, from a single web UI.

Tools in this post

Redgate Monitor

Real-time multi-platform performance monitoring, with alerts and diagnostics