SQL Clone Quick Tip: Offloading DBCC checks

If you guard against database corruption by restoring backups to a secondary server, licensed as per production, and then running DBCC CHECKDB integrity checks, then you might save a lot of restore time, and disk space, by offloading this task to a clone.

If corruption creeps into a database, and from there into its backup chain, it can and will derail the best-laid Disaster Recovery plans. How, as a DBA, do you guard against this? The first line of defense is SQL Server’s CHECKSUM verification, but to be certain that a database is corruption-free, you need to run regular DBCC CHECKDB checks on that database.

Unfortunately, this is a resource-intensive task that you won’t want to run during normal hours of operation, as it will affect the performance of user transactions. However, for very large databases, these database integrity checks can take so long that they cannot be completed within a standard maintenance window.

What are your options in this situation? The most common response is to offload the integrity checks to a restored database backup, on a secondary, production-licensed server. However, if you already have SQL Clone set up, for database provisioning, then an alternative might be to offload DBCC checks, and possibly other administrative jobs, to a clone database on a server licensed as per the production server.

CHECKSUM verification

SQL Server runs lightweight CHECKSUM verification automatically (by default) to detect on-disk corruption of data pages. Whenever SQL Server writes a data page to disk, it calculates a checksum and stores the value in the page header. Subsequently, when it reads that page into memory, it recalculates the checksum and compares it to the value stored in the header. If the values match, then no corruption occurred while the page was stored in the I/O subsystem; if they don’t match, then SQL Server raises a corruption error, which might look something like this:

```
Msg 824, Level 24, State 2, Line 1
SQL Server detected a logical consistency-based I/O error: incorrect checksum (expected: 0x9a3e399c; actual: 0x9a14b99c). It occurred during a read of page (1:184) in database ID 23 at offset 0x00000000170000 in file 'D:\SQLDATA\ForcingFailures.mdf'. Additional messages in the SQL Server error log or system event log may provide more detail. This is a severe error condition that threatens database integrity and must be corrected immediately. Complete a full database consistency check (DBCC CHECKDB). This error can be caused by many factors; for more information, see SQL Server Books Online.
```
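Since this protection depends on the database's page verification setting, it can be worth confirming that CHECKSUM is actually in use. The following is a minimal sketch rather than a definitive procedure; `SalesDB` is a hypothetical database name used only for illustration.

```sql
-- List the page verification setting for every database on the instance
SELECT name, page_verify_option_desc
FROM sys.databases
ORDER BY name;

-- Switch a database to CHECKSUM if it uses TORN_PAGE_DETECTION or NONE
-- (SalesDB is a hypothetical name)
ALTER DATABASE [SalesDB] SET PAGE_VERIFY CHECKSUM;

-- Pages that have already raised checksum or I/O errors are recorded here
SELECT database_id, file_id, page_id, event_type, error_count, last_update_date
FROM msdb.dbo.suspect_pages;
```

Note that enabling CHECKSUM does not stamp existing pages; a page only receives a checksum the next time SQL Server writes it to disk.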

Of course, this means that SQL Server only detects on-disk corruption at the point it reads the data from the disk, into memory. What if on-disk data corruption affects a row that has not yet been read, since it was last updated, and you subsequently capture that row in a backup operation?

We can guard against this by extending the CHECKSUM verification into our database backup operations, as shown in Listing 1, so that every page written to the backup is verified as free from this form of corruption.

```sql
BACKUP DATABASE <DatabaseName>
TO DISK = '<Backup_location>.bak'
WITH CHECKSUM
GO
```

Listing 1: Backup WITH CHECKSUM syntax

SQL Server will recalculate the checksum for each page as it reads it from disk and writes it to the backup file. If it finds a page that fails this test, the backup will fail. If the backup succeeds then the backup is valid…or maybe not.

The problem with relying solely on CHECKSUM is that it verifies only that no corruption occurred between writing the data to disk and reading it back out. It does not detect in-memory corruption. What happens if a data page cached in memory is updated, and then some in-memory corruption occurs, perhaps due to a faulty memory chip, before the modified page is written back to disk?

On writing that data page to disk, SQL Server will simply calculate a checksum value on the corrupted page and write it into the page header. When it reads that page during a subsequent BACKUP...WITH CHECKSUM operation, it will recalculate the checksum and, assuming no additional problems occurred in the I/O subsystem, the values will match and the corrupted page will be included in the backup.
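For completeness, the checksums stored in a backup file can be re-verified after the fact, and the backup history shows which backups were taken with checksums. This is a sketch under assumptions: the backup path is hypothetical, and the same limitation described above applies, since a page that was corrupted in memory before its checksum was calculated will still pass.

```sql
-- Re-read the backup file and re-verify page and backup checksums
-- (the path is a hypothetical example)
RESTORE VERIFYONLY
FROM DISK = N'D:\Backups\SalesDB.bak'
WITH CHECKSUM;

-- Confirm which recent full backups were taken WITH CHECKSUM
SELECT TOP (10) database_name, backup_finish_date, has_backup_checksums
FROM msdb.dbo.backupset
WHERE type = 'D'              -- 'D' = full database backup
ORDER BY backup_finish_date DESC;
```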

DBCC CHECKDB

The only way to be sure your database, and therefore its backups, are totally corruption-free is to run DBCC CHECKDB integrity checks on a regular basis. This tool fully validates the page structure and content of all data pages, and will check the logical and physical integrity of all the objects in the specified database, and so will catch corruption as early as possible.

However, it performs extensive checks, and so eats up a lot of server resources. If you run it concurrently with user workloads, it will have a performance impact on those applications.

It can also take a long time to run, depending on the size and complexity of the database. If you only need to check relatively few databases, of modest size, you can run the task during the weekly maintenance window. If that’s not viable, then you might consider running a cut-down version of the task, DBCC CHECKDB <DatabaseName> WITH PHYSICAL_ONLY. However, as you might expect, this will skip certain types of check, so is not without risk.
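As a rough illustration of the two variants discussed above, the commands might look like this. `SalesDB` is a hypothetical database name, and the `NO_INFOMSGS` and `ALL_ERRORMSGS` options are a common choice to keep the output focused on errors, not something the checks require.

```sql
-- Full check: logical and physical integrity of every object in the database
DBCC CHECKDB (N'SalesDB') WITH NO_INFOMSGS, ALL_ERRORMSGS;

-- Cut-down check: page and record header structure plus allocation consistency,
-- skipping the more expensive logical checks
DBCC CHECKDB (N'SalesDB') WITH PHYSICAL_ONLY, NO_INFOMSGS;
```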

Most teams who find they can’t run these checks on the production system during maintenance windows end up offloading the task to another server, and running the checks on a restored backup. However, if you’re managing a large SQL Server estate, populated with very large databases, then this can require extra servers and storage, just to offload the database integrity check workload, and will involve copying very large backups across your networks.
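The restore-and-check approach described above might be sketched as follows. Every name here is an assumption for illustration: the share path, the target file locations, the logical file names (`SalesDB` and `SalesDB_log`) and the `SalesDB_Check` database name would all need to match your own environment.

```sql
-- Restore the latest full backup under a temporary name on the secondary server
RESTORE DATABASE [SalesDB_Check]
FROM DISK = N'\\backupshare\SalesDB.bak'                      -- hypothetical path
WITH CHECKSUM,
     MOVE N'SalesDB'     TO N'E:\SQLDATA\SalesDB_Check.mdf',  -- hypothetical logical/physical names
     MOVE N'SalesDB_log' TO N'E:\SQLLOGS\SalesDB_Check.ldf',
     RECOVERY;

-- Run the full integrity checks against the restored copy
DBCC CHECKDB (N'SalesDB_Check') WITH NO_INFOMSGS, ALL_ERRORMSGS;

-- Remove the restored copy to free the disk space once the checks complete
DROP DATABASE [SalesDB_Check];
```

The restore step is where the time and storage cost arises, because the secondary server needs room for a full copy of each database being checked.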

If you’re already using SQL Clone for database provisioning, then you might have already solved this conundrum. Rather than run the integrity checks as a separate task, you can offload them to your clone databases.

Offloading DBCC checks to SQL Clone

Let’s say you take automated nightly full backups of your databases, using the WITH CHECKSUM syntax shown in Listing 1.

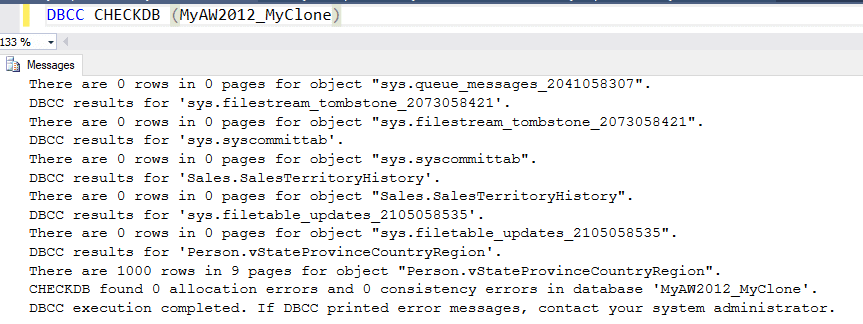

If you’ve already created images from any of these backups, then you can offload DBCC checks for those databases to clones. Simply create a clone from each image, and run the DBCC checks on the clone database. You can use SQL Clone’s PowerShell interface to automate this.

The CPU processing load associated with the DBCC task will be on the client hosting the clone, so you’ll probably want each clone on its own machine, unless you’re going to run the checks serially, for one database after another.

You’ll want the image location to have plenty of disk space and disk I/O capacity, since each clone will be reading every page in its parent image during the DBCC checks. You’ll also want a fast network connection between client and image.
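If several clones share one machine and you opt to run the checks serially, a simple loop on that SQL Server instance is one way to do it. This is only a sketch: it assumes a hypothetical naming convention in which every clone created for this purpose ends in `_DbccClone`, and it does not cover creating or removing the clones themselves.

```sql
DECLARE @name sysname, @sql nvarchar(max);

-- Pick up every online database that follows the (hypothetical) clone naming convention
DECLARE clone_cursor CURSOR LOCAL FAST_FORWARD FOR
    SELECT name
    FROM sys.databases
    WHERE name LIKE N'%[_]DbccClone'
      AND state_desc = N'ONLINE';

OPEN clone_cursor;
FETCH NEXT FROM clone_cursor INTO @name;

WHILE @@FETCH_STATUS = 0
BEGIN
    -- QUOTENAME guards against unusual characters in the database name
    SET @sql = N'DBCC CHECKDB (' + QUOTENAME(@name) + N') WITH NO_INFOMSGS, ALL_ERRORMSGS;';
    PRINT N'Checking ' + @name;
    EXEC sys.sp_executesql @sql;   -- one database at a time, so the checks never overlap
    FETCH NEXT FROM clone_cursor INTO @name;
END

CLOSE clone_cursor;
DEALLOCATE clone_cursor;
```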

Summary

A DBA needs a multi-pronged defense against the threat of data corruption. BACKUP...WITH CHECKSUM is a useful tool, but you also need to schedule regular consistency checks for each database, using DBCC CHECKDB. Weekly checks are optimal. If you’re struggling to fit these checks into your weekly maintenance window, then you can consider offloading the checks for some or all of the databases to SQL Clone.

Of course, there are other tasks that you might also consider offloading, such as ‘heavy’ end-of-month reports (AR reconciliation reports, for example), in cases where running them on production significantly impacts the performance of user transactions. You might also consider offloading to SQL Clone tasks such as testing patches and updates, or testing new indexes.

We’d be interested to know of any issues you foresee in offloading DBCC checks to SQL Clone, and about any other administrative tasks you might consider offloading.
