Migrating SQL Monitor’s Base Service to .NET Core

Josh Crang explains why SQL Monitor will be switched to run on .NET Core, and how the team tested the potential performance improvements in the monitoring service.

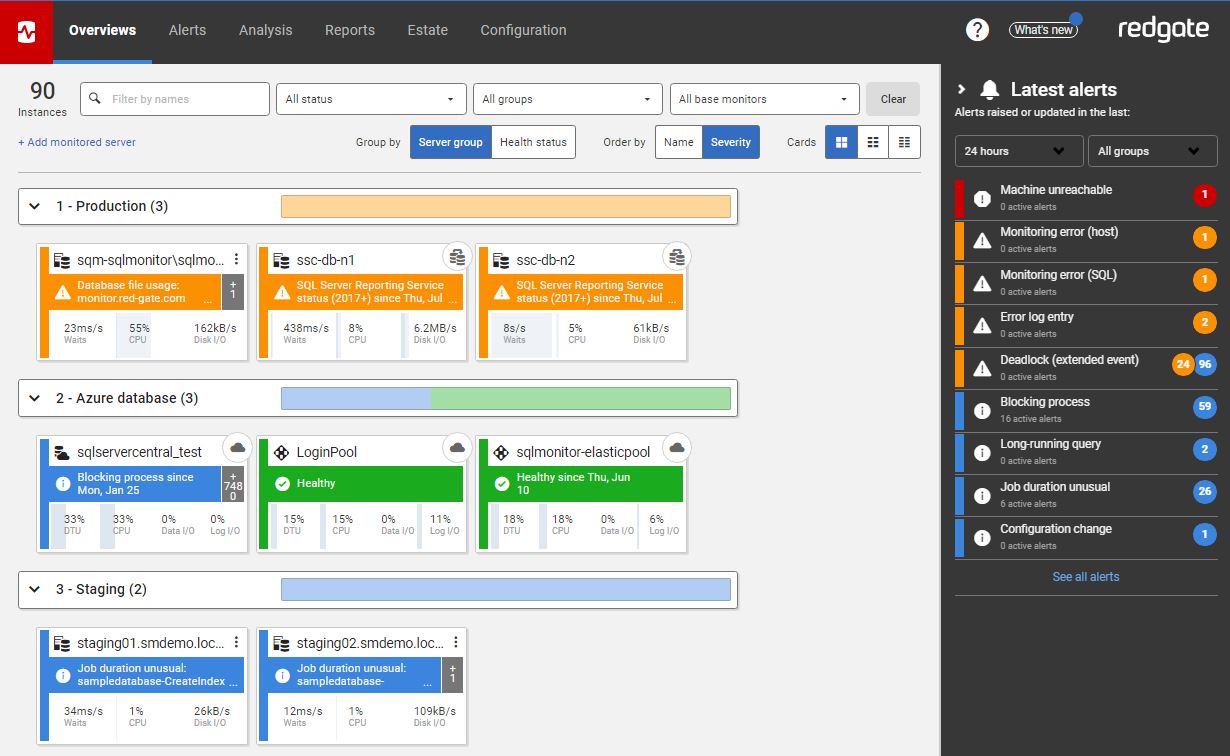

SQL Monitor is made of two main components: a base monitor and a website. The base monitor is responsible for gathering, storing, and alerting on data. The website displays all this data in a user-friendly format accessible from a web browser.

Over the last few months, the SQL Monitor team have been focusing on improving the performance of the base monitor to allow you to monitor more servers from a single base monitor. We have already released several incremental improvements to reach this goal. This article explains a significant upcoming change: migrating the base monitor service from .NET Framework to .NET Core.

.NET Framework to .NET Core

.NET Core is Microsoft’s cross-platform successor to .NET Framework. One of its biggest promises is a significant improvement in performance – something we were interested to see if SQL Monitor’s base monitor could benefit from.

The base monitor connects to each of the machines and SQL Server instances on a network domain. On a schedule, it runs a series of data collection tasks on each ‘target’, which sample data for a range of machine-, instance- and database-level metrics. Several factors affect the number of SQL Server instances you can expect to monitor from a single base monitor, but we’re aiming for 200 SQL Server instances from a single, well-spec’d base monitor server (see Monitoring Distributed SQL Servers using SQL Monitor for further details).

The next sections describe our testing methodology and results. The results have been encouraging enough that we’re asking existing SQL Monitor users to contact us if they’d like to be ‘early adopters’ and test the performance improvements offered by our new .NET Core base monitor on their own systems (see the end of the article for details).

Testing methodology

Part of our work on performance was giving all developers a reliable way to test how a change affects the overall performance of the system, without always needing access to hundreds of SQL Server instances to monitor. To allow this, we added support in SQL Monitor for mocking out its data collection – replacing real data collection with fake data inspired by some of our larger customers’ estates. SQL Monitor still processes, stores, and alerts on this data exactly as though it came from real machines and SQL Server instances.
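To illustrate the idea of mocked data collection, here is a minimal sketch in Python. It is not SQL Monitor’s actual implementation: the `Sample`, `Collector` and `FakeCollector` names, the metric names and the value ranges are all assumptions made for the example. The point it shows is that the rest of the pipeline receives the same shape of data whether the collector is real or fake.

```python
import random
from dataclasses import dataclass
from typing import Protocol


@dataclass
class Sample:
    target: str
    metric: str
    value: float


class Collector(Protocol):
    """Anything that can return samples for a target (real or fake)."""

    def collect(self, target: str) -> list[Sample]: ...


class FakeCollector:
    """Hypothetical stand-in that fabricates samples instead of querying a server."""

    def __init__(self, seed: int = 0) -> None:
        self._rng = random.Random(seed)  # seeded so test runs are repeatable

    def collect(self, target: str) -> list[Sample]:
        # Metric names and ranges are illustrative assumptions.
        return [
            Sample(target, "cpu_percent", self._rng.uniform(0, 100)),
            Sample(target, "batch_requests_per_sec", self._rng.uniform(0, 5000)),
        ]


def run_collection(collector: Collector, targets: list[str]) -> list[Sample]:
    # Downstream code (processing, storage, alerting) sees the same Sample
    # shape regardless of which collector produced it.
    samples: list[Sample] = []
    for target in targets:
        samples.extend(collector.collect(target))
    return samples


# 150 fake instances, two samples each.
targets = [f"fake-instance-{i:03d}" for i in range(150)]
samples = run_collection(FakeCollector(seed=42), targets)
```

Swapping the collector behind a shared interface is one common way to do this; it lets the test run exercise processing and storage without touching a real server.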

To compare the performance of SQL Monitor under .NET Framework and .NET Core, we ran SQL Monitor using this faked data collection over two days with 150 instances – switching to .NET Core after the first day. The SQL Server data repository and base monitor were hosted on modestly spec’d machines (base monitor: a 3.30GHz i5-6600 with 16GiB memory; data repository: a 3.50GHz i5-7600 with 32GiB memory).

We chose to use 150 instances since this is more than this single base monitor could reasonably handle, so we’d see meaningful changes in two health metrics:

- Data collection tasks per second: Measures the overall throughput of the system. A single data collection task collects all data for a given target on a given schedule. For example, this may be all data on localhost collected every 15 seconds.

- Median runnable task time: Measures the system’s capacity to keep up with data collection tasks. Data collection tasks are scheduled to run at a regular frequency (for example, every 15 seconds). Median runnable task time measures how long tasks spend in a ready-to-run state before they are run. In a system with a median runnable task time of 10 seconds, you’d expect an every-15-seconds task to run every 25 seconds. Ideally, this should be under five seconds.
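The relationship between median runnable task time and the effective sampling interval can be sketched as follows. This is an illustration of the arithmetic described above, not SQL Monitor’s own code: the function names and the timestamps are hypothetical.

```python
from statistics import median


def median_runnable_time(ready_at, started_at):
    """Median wait, in seconds, between a task becoming ready and starting."""
    waits = [start - ready for ready, start in zip(ready_at, started_at)]
    return median(waits)


def effective_interval(scheduled_interval, median_runnable):
    """Approximate time between consecutive runs of a scheduled task."""
    return scheduled_interval + median_runnable


# Hypothetical timestamps (seconds) for five tasks on a 15-second schedule.
ready_at = [0.0, 15.0, 30.0, 45.0, 60.0]
started_at = [9.0, 25.0, 40.0, 56.0, 70.0]

wait = median_runnable_time(ready_at, started_at)  # waits are 9, 10, 10, 11, 10
interval = effective_interval(15.0, wait)

print(wait)      # 10.0
print(interval)  # 25.0
```

With a 10-second median wait, a task scheduled every 15 seconds effectively runs every 25 seconds, which matches the example in the list above.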

Results

Let’s start by looking at data collections. To the left of the graph, you’ll see how many data collection tasks were run per second under .NET Framework. To the right is the same metric, where the only change is the switch to the .NET Core runtime. This shows a clear improvement. Over the period shown, .NET Framework manages around 109 data collections per second, whereas .NET Core reaches 137 data collections per second: a speedup of over 25% just by changing the runtime.
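For readers who want to check the throughput figure, the speedup follows directly from the two rates quoted above. The helper name below is an assumption made for the example.

```python
def relative_speedup(baseline, improved):
    """Fractional improvement of `improved` over `baseline`."""
    return (improved - baseline) / baseline


framework_rate = 109  # data collections per second under .NET Framework
core_rate = 137       # data collections per second under .NET Core

speedup = relative_speedup(framework_rate, core_rate)
print(f"{speedup:.1%}")  # 25.7%
```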

Median runnable task time, too, shows a great improvement. When running under .NET Framework, the median runnable task time never falls below 10 seconds. Under .NET Core, this metric is stable at around three and a half seconds. In real terms, this means metrics that should be sampled every 15 seconds will be sampled roughly every 18 seconds instead of every 25 seconds.

Next steps

We’re already running both versions of the base service internally, as well as on our monitor.red-gate.com demo site. The improvements we’ve seen are significant enough that we’re aiming to replace the .NET Framework version with the .NET Core version for all customers soon. If you are interested in taking part in our early-access program and being among the first to experience the performance improvements, please contact the team (email sqlmonitorfeedback@red-gate.com).
