At Stack Overflow, our environment has multiple implementations of a largely – but not 100% – identical schema. (By “schema,” I mostly mean the set of tables within a database.) I sometimes need to retrieve or update data across a large number of sites. Let’s say, pulling or removing information from the Users table, and related tables, across all of our databases.

This gets complicated.

Because:

- Each of our Q & A sites has its own database, and each database has a table called

Users(and some metadata is split out into a separateUserMetadatatable). - A few sites are special, like Area 51, where the

Userstable looks a little different (it is wider, because theUserMetadatasplit isn’t present here for historical reasons). - A few others look like Q & A databases, like Chat. They also contain a table called

Users, but here the table is used differently and the column structure is unique. - Stack Overflow for Teams is more complex. A team’s tables are shared with others within a single database, with the boundary being a schema instead of a database. There is no table called

dbo.Users, but a database may contain multipleUserstables, such asTeam0001.Users,Team0002.Users, and so on. I’ll deal with this special case in a future post.

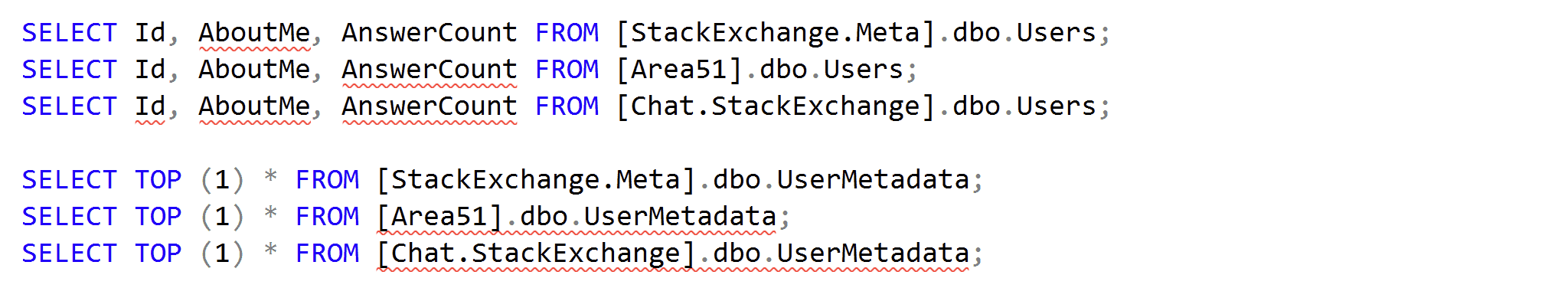

IntelliSense highlights a few of these differences straight away:

Schema has “drifted” in these and other cases, for example when features are only applicable to one site, team, or product. Features like Collectives, Saves, or Staging Ground can lead to new columns or even entire tables that are only present in some databases.

This is okay, and there are many ways to deal with drift – even drift by design. But that’s not the point of this post. Because even if I could convince everyone all of our databases should have identical schema, I can’t snap my fingers and make it happen overnight. In the meantime, I have to deal with these differences.

A fake example.

Imagine I want to collect a list of users with more than one answer and who mention groundhogs in the “About Me” section of their profile. In any Q & A database, I can issue the following query:

|

1 2 3 4 5 6 7 8 |

SELECT u.Id, u.AnswerCount FROM Users AS u INNER JOIN UserMetadata AS um ON u.Id = um.UserId WHERE um.AboutMe LIKE N'%groundhogs%' AND u.AnswerCount > 1; |

That works fine across “normal” site databases.

But there are exceptions.

In the Area 51 database, that query fails. As mentioned above, there is no UserMetadata table, and AnswerCount is not aggregated anywhere. I have to write the query differently:

|

1 2 3 4 5 6 7 8 9 10 |

SELECT u.Id, AnswerCount = COUNT(p.Id) FROM Users AS u INNER JOIN Posts AS p ON u.Id = p.OwnerUserId AND p.PostTypeId = 2 WHERE u.AboutMe LIKE N'%groundhogs%' GROUP BY u.Id HAVING COUNT(p.Id > 1); |

In the Chat database, this query makes no sense, because there are no answers. So I want an easy way for this database to be skipped entirely.

The problem.

I don’t want to hard-code lists of databases that happen to support one version of the schema or another right now. This reminds me of browser detection techniques that rely on the user agent string the browser presents, rather than testing the required functionality. Picture maintaining a list of all possible user agent strings and keeping it up to date. This is simply unmanageable, and I don’t want to do it for database names, either. I want it to be dynamic, so I don’t have to update some list somewhere every time a new database gets added, existing schema drift gets corrected, or new schema drift appears. And sometimes new schema drift will appear, like with new features, as mentioned before. We know about this drift; it goes through PRs and reviews, and is what I call controlled drift. The technique I use relies on knowing the schemas are well controlled, and being aware of changes coming out. So if someone goes rogue and creates a new table in Area51 called dbo.MoreUserMetadata, and starts stuffing information about groundhogs in a random column there without anyone’s knowledge, that data will go unnoticed. That is one caveat to the solution I’ll propose.

A piece of the puzzle.

I have to repeat this process for a growing list of queries. So, in our central DBA database, I have an Actions table that I use to validate the metadata in each database before attempting to run a given query there.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 |

USE DBA; GO CREATE TABLE dbo.Actions ( ActionID int, QueryText nvarchar(max), CheckTable1 nvarchar(128), CheckColumn1 nvarchar(128), CheckTable2 nvarchar(128), CheckColumn2 nvarchar(128) ); |

I put these queries into the table (minus the statement terminator, since these will later be placed inside a common table expression):

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 |

INSERT dbo.Actions ( ActionID, QueryText, CheckTable1, CheckColumn1, CheckTable2, CheckColumn2 ) VALUES (1, N'SELECT u.Id, u.AnswerCount FROM Users AS u INNER JOIN UserMetadata AS um ON u.Id = um.UserId WHERE um.AboutMe LIKE N''%groundhogs%'' AND u.AnswerCount > 1', N'Users', N'AnswerCount', N'UserMetadata', N'AboutMe'), (2, N'SELECT u.Id, AnswerCount = COUNT(p.Id) FROM Users AS u INNER JOIN Posts AS p ON u.Id = p.OwnerUserId AND p.PostTypeId = 2 WHERE u.AboutMe LIKE N''%groundhogs%'' GROUP BY u.Id HAVING COUNT(p.Id) > 1', N'Users', N'AboutMe', NULL, NULL); |

As I loop through each database, I build dynamic SQL that checks for the proper metadata before replacing tokens and executing the query. To demonstrate, first, let’s set up a few databases that meet our various criteria:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 |

CREATE DATABASE StackOverflow; GO USE StackOverflow; -- only query 1 can run here GO CREATE TABLE dbo.Users(Id int, AnswerCount int); CREATE TABLE dbo.UserMetadata(UserId int, AboutMe nvarchar(max)); INSERT dbo.Users VALUES(1,5),(2,0); INSERT dbo.UserMetadata VALUES(1,'I love groundhogs!'); GO CREATE DATABASE Chat; -- neither query can run here GO USE Chat; GO CREATE TABLE dbo.Users(UserId int); INSERT dbo.Users VALUES(1),(2); GO CREATE DATABASE Area51; -- only query 2 can run here GO USE Area51; GO CREATE TABLE dbo.Users(Id int, AboutMe nvarchar(max)); CREATE TABLE dbo.Posts(Id int, OwnerUserId int, PostTypeId int); INSERT dbo.Users VALUES(5,'I hate groundhogs!'); INSERT dbo.Posts VALUES(1,5,2),(2,5,2); GO |

I’ll use a cursor to step through each action within each database, and check if it has supporting metadata. I keep it flexible so that I can check one or two tables, and zero or one columns in each table. Why zero? For some queries, I know the columns exist in all databases, so I don’t need to check.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 61 62 63 64 65 66 67 68 69 70 71 72 73 74 75 76 77 78 79 80 81 82 83 84 85 86 87 88 89 90 91 92 93 94 95 96 97 |

DECLARE @debug bit = 0; /* set to 1 to see output instead of execution */ DECLARE @go bit, @actions cursor, @actionId int, @db sysname, @context nvarchar(255), @checkSQL nvarchar(max), @queryText nvarchar(max), @t1 nvarchar(128), @c1 nvarchar(128), @t2 nvarchar(128), @c2 nvarchar(128), @tCheck nvarchar(255) = N'EXISTS (SELECT 1 FROM sys.tables AS t WHERE name = ', @cCheck nvarchar(256) = N'EXISTS (SELECT 1 FROM sys.columns AS c WHERE c.object_id = t.object_id AND c.name = ', @params nvarchar(max) = N'@t1 nvarchar(128), @c1 nvarchar(128), @t2 nvarchar(128), @c2 nvarchar(128), @go bit OUTPUT'; /* temp table to hold the results from each database */ DROP TABLE IF EXISTS #Results; CREATE TABLE #Results(ActionID int, Source nvarchar(255), UserId int, AnswerCount int); /* cursor to loop through each action for each database */ SET @actions = cursor FOR SELECT act.ActionID, db.name, act.QueryText, act.CheckTable1, act.CheckColumn1, act.CheckTable2, act.CheckColumn2 FROM dbo.Actions AS act CROSS JOIN sys.databases AS db WHERE db.state = 0 AND db.database_id > 4 ORDER BY db.name, act.ActionID; OPEN @actions; FETCH @actions INTO @actionId, @db, @queryText, @t1, @c1, @t2, @c2; WHILE @@FETCH_STATUS <> -1 BEGIN /* set the right database context, and construct the metadata check depending on whether we need to validate one or two tables and/or columns. The tables/columns are passed into the dynamic SQL based on what comes back from the Actions table. */ SELECT @context = QUOTENAME(@db) + N'.sys.sp_executesql', @checkSQL = N'IF ' + @tCheck + N'@t1' + CASE WHEN @c1 IS NOT NULL THEN N' AND ' + @cCheck + N'@c1)' ELSE N'' END + N')' + CASE WHEN @t2 IS NOT NULL THEN N' AND ' + @tCheck + N'@t2' + CASE WHEN @c2 IS NOT NULL THEN N' AND ' + @cCheck + N'@c2)' ELSE N'' END + N')' ELSE N'' END + N' SET @go = 1; ELSE SET @go = 0;'; IF @debug = 1 BEGIN PRINT CONCAT_WS(char(13), @db, 'Action:', @ActionID, 'CheckSQL:', @checkSQL); PRINT CONCAT('@t1/@c1: ',@t1, '.' + @c1, char(13), '@t2/@c2: ',@t2, '.' + @c2); END /* check if the metadata exists in the target database: */ EXEC @context @checkSQL, @params, @t1, @c1, @t2, @c2, @go OUTPUT; IF @debug = 1 BEGIN PRINT CONCAT('Should action #', @actionId, ' run here (', @db, ')? ', @go); END /* if it does, we're good to go! */ IF @go = 1 BEGIN /* wrap the query in a CTE so we can inject action/DB: */ SET @queryText = N'WITH cte AS (' + @QueryText + ') SELECT @ActionID, DB_NAME(), * FROM cte;'; IF @debug = 1 BEGIN PRINT CONCAT('Would have run:', char(13), @queryText); END ELSE BEGIN INSERT #Results(ActionID, Source, UserID, AnswerCount) EXEC @context @queryText, N'@ActionID int', @actionId; END END FETCH @actions INTO @actionId, @db, @queryText, @t1, @c1, @t2, @c2; END IF @debug = 0 BEGIN SELECT ActionID, Source, UserID, AnswerCount FROM #Results; END |

Results.

Even though I have very different schema across (many!) different databases, this technique allows me to pull a consolidated result without knowing which ones store the same data in slightly different ways:

If you are trying to get a handle on what the code does, the parameter @debug provides some insight. If you execute the script with @debug = 1, you’ll see this output instead of the results. This shows what code is produced and executed by sp_executesql:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 |

Area51 Action: 1 CheckSQL: IF EXISTS (SELECT 1 FROM sys.tables AS t WHERE name = @t1 AND EXISTS (SELECT 1 FROM sys.columns AS c WHERE c.object_id = t.object_id AND c.name = @c1)) AND EXISTS (SELECT 1 FROM sys.tables AS t WHERE name = @t2 AND EXISTS (SELECT 1 FROM sys.columns AS c WHERE c.object_id = t.object_id AND c.name = @c2)) SET @go = 1; ELSE SET @go = 0; @t1/@c1: Users.AnswerCount @t2/@c2: UserMetadata.AboutMe Should action #1 run here (Area51)? 0 Area51 Action: 2 CheckSQL: IF EXISTS (SELECT 1 FROM sys.tables AS t WHERE name = @t1 AND EXISTS (SELECT 1 FROM sys.columns AS c WHERE c.object_id = t.object_id AND c.name = @c1)) SET @go = 1; ELSE SET @go = 0; @t1/@c1: Users.AboutMe @t2/@c2: Should action #2 run here (Area51)? 1 Would have run: WITH cte AS (SELECT u.Id, AnswerCount = COUNT(p.Id) FROM Users AS u INNER JOIN Posts AS p ON u.Id = p.OwnerUserId AND p.PostTypeId = 2 WHERE u.AboutMe LIKE N'%groundhogs%' GROUP BY u.Id HAVING COUNT(p.Id) > 1) SELECT @ActionID, DB_NAME(), * FROM cte; ... repeat for every database ... |

I’ll follow up soon with details on some of the more special cases.

Load comments