A couple of weeks ago, I was in Las Vegas presenting several Exchange Server sessions at one of the many IT conferences that they have out there. After one of my sessions, I was having a conversation with one of the other conference attendees. During the conversation, he made an off-the-cuff remark that Exchange 2007 runs so well with a default configuration that you don’t even have to worry about optimizing Exchange anymore.

Maybe it was because I was still jet lagged, or because my mind was still on the session that I had just presented, but the remark didn’t really register with me at the time. Later on though, I started thinking about his comment, and while I don’t completely agree with it, I can kind of see where he was coming from.

I started working with Exchange at around the time when people were first moving from Exchange 4.0 to version 5.0. At the time, I was working for a large, enterprise-class organization with roughly 25,000 mailboxes. Although those mailboxes were spread across several Exchange Servers, the servers’ performance left a lot to be desired. I recall spending a lot of time trying to figure out things that I could do to make the servers perform better.

Although there is no denying how poorly our Exchange Servers performed, I think that the server hardware had more to do with the problem than Exchange itself. For example, one night I was having some database problems with one of my Exchange Servers. Before I attempted any sort of database repair, I decided to make a backup copy of the database. In the interest of saving time, I copied the database from its usual location to another volume on the same server.

All of the server’s volumes were using “high speed” RAID arrays, but it still took about three and a half hours to back up a 2 GB information store. My point is that Exchange has improved a lot since the days of Exchange 5.0, but the server hardware has improved even more dramatically. Today, multi-core CPUs and terabyte hard drives are the norm, but they were more or less unheard of back in the day.

When the guy at the conference commented that you don’t even have to worry about optimizing Exchange 2007, I suspect that perhaps he had dealt with legacy versions of Exchange on slow servers in the past. In contrast, Exchange 2007 uses a 64-bit architecture, which means that it can take full advantage of the CPU’s capabilities and that it is no longer bound by the 4 GB memory limitation imposed by 32-bit operating systems.

Although Exchange 2007 does perform better than its predecessors, I would not go so far as to say that optimization is no longer necessary. Think about it this way… If you’ve got an enterprise grade Exchange Server, but you’ve only got 20 mailboxes in your entire organization, then that server is going to deliver blazing performance. If you start adding mailboxes though, you are eventually going to get to the point at which the server’s performance is going to start to suffer.

This illustrates two points. First, the Exchange 2007 experience is only as good as what the underlying hardware is capable of producing. Second, even if your server is running well, future growth may require you to optimize the server in an effort to maintain the same level of performance that you are enjoying now. Fortunately, there are some things that you can do to keep Exchange running smoothly. I will spend the remainder of this article discussing some of these techniques.

My Approach to Exchange 2007 Optimization

To the best of my knowledge, Microsoft has not published an Exchange 2007 optimization guide. They do offer some lists of post installation tasks, but most of the tasks on the list are related more to the server’s configuration than to its performance. Although there isn’t an “optimization guide” so to speak, the Exchange 2007 Deployment Guide lists a number of optimization techniques, and for all practical purposes serves as the optimization guide. Since I can’t cover all of the techniques listed in the guide within a limited amount of space, I want to talk about some optimization techniques that have worked for me.

As I have already explained, the underlying hardware makes a big difference as to how well Exchange is going to perform. For the purposes of this article, I am going to assume that you have already got the appropriate hardware to meet your needs. If you have insufficient hardware capabilities for the workload that the server is carrying, then these techniques may or may not help you.

The Microsoft Exchange Best Practices Analyzer

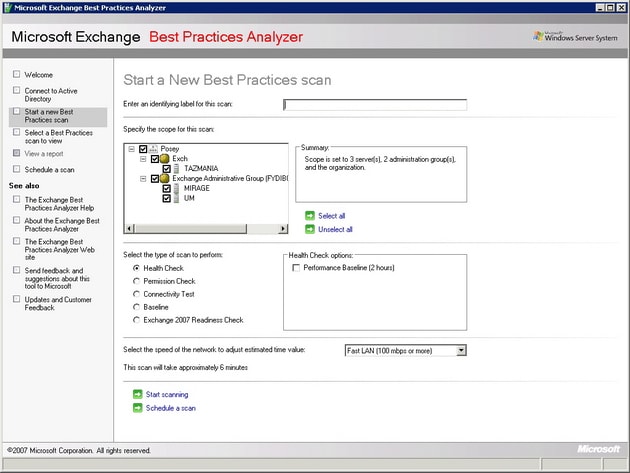

If you were to ask me what the single most important (non-hardware-related) thing you could do to optimize Exchange Server is, I would probably tell you to run the Microsoft Exchange Best Practices Analyzer (ExBPA). Although the ExBPA, shown in Figure A, was originally designed as a configuration analysis tool, and has been largely used by the IT community as a security tool, what it really does is scan your Exchange Server organization and make sure that it is configured according to Microsoft’s recommended best practices. Many of Microsoft’s best practices for Exchange Server are related to security, but there are plenty of performance and reliability related recommendations as well.

Figure A

The Microsoft Exchange Best Practices Analyzer makes sure that your Exchange organization is configured according to Microsoft’s best practices.

The ExBPA started life as a downloadable utility for Exchange 2003. Microsoft recommends this tool so strongly though, that they actually included it in the Exchange Management Console for Exchange Server 2007, at the very top of the list of tools in the Toolbox.

One thing that you need to keep in mind is that Microsoft is a dynamic organization. They are constantly doing research on the ways that their products are being used, and sometimes their recommended best practices end up changing as a result of that research. This means that optimizing Exchange 2007 is not a set it and forget it operation. You need to periodically run the ExBPA to see if any of Microsoft’s recommendations for your organization have changed. Keep in mind though that if you are using Microsoft’s System Center Operations Manager or System Center Essentials, they will automatically run ExBPA on a daily basis.

Disk I/O

If you are an Exchange veteran, then you might have been a little surprised when I listed running the ExBPA as the single most important step in optimizing an Exchange Server. In every previous version of Exchange, the most important thing that you could do from a performance standpoint was to optimize disk I/O.

There are a couple of reasons why I didn’t list optimizing disk I/O as my top priority. First, if an Exchange Server has a serious problem in which the configuration of the disk subsystem is affecting performance, then the ExBPA should detect and report the issue when you run a performance baseline scan. More importantly though, you can always count on your users to tell you when things are running slowly.

Another reason why disk I/O isn’t my top priority is because Exchange Server 2007 uses a 64-bit architecture, which frees it from the 4 GB address space limitation imposed by 32-bit operating systems. Microsoft has used this larger memory model to design Exchange 2007 in a way that drives down read I/O requirements. This improves performance for database reads, although the performance of database writes has not really improved.

Of course, that doesn’t mean that the old rules for optimizing a disk subsystem no longer apply. It is still important to place the transaction logs and the databases onto separate volumes on high speed RAID arrays for performance and fault tolerance reasons. Keep in mind that you can get away with using two separate volumes on one physical disk array, but doing so is not an ideal arrangement unless you divide your bank of disks into separate arrays so that each volume resides on separate physical disks.

If the same physical disks are used by multiple volumes, then fault tolerance becomes an issue unless the array is fault tolerant. That’s because if there is a failure on the array, and both volumes share the same physical disks, then both volumes will be affected by the failure.

Even if the volume is fault tolerant, spanning multiple volumes across the same physical disks is sometimes bad for performance. In this type of situation, the volumes on the array are competing for disk I/O. If you separate the volumes onto separate arrays or onto separate parts of an array though, you eliminate competition between volumes for disk I/O. Each volume has free rein over the disk resources that have been allocated to it.

Storage Groups

As was the case in previous versions of Exchange, Microsoft has designed Exchange 2007 so that multiple databases can exist within a single storage group, or you can dedicate a separate storage group to each individual database.

Microsoft recommends that you limit each storage group to hosting a single database. This does a couple of things for you. First, it allows you to use continuous replication, which I will talk about later on. Second, it helps to keep the volume containing the storage group’s transaction logs from becoming overwhelmed by an excessive number of transactions coming from multiple databases, assuming that you use a separate transaction log volume for each storage group.

Using a dedicated storage group for each database means that the transaction log files for the storage group only contain transaction log entries for one database. This makes database level disaster recovery much easier, because you do not have to deal with transaction log data from other databases.

Mailbox Distribution

Since Exchange Server can accommodate multiple Exchange Server databases, it means that you have the option of either placing all of your mailboxes into a single database, or of distributing the mailboxes among multiple databases. Deciding which method to use is actually one of the more tricky points of optimizing Exchange Server’s performance. There are really two different schools of thought on the subject.

Some people believe that as long as there isn’t an excessive number of mailboxes, it is best to place all of the mailboxes within a single store. The primary advantages of doing so are ease of management and simplified disaster recovery.

There is also however an argument to be made for distributing Exchange mailboxes among multiple stores (in multiple storage groups). Probably the best argument for doing so is that if a store level failure occurs then not all of your mailboxes will be affected. Only a subset of the user base will be affected by the failure. Furthermore, because the store is smaller than it would be if it contained every mailbox in the entire organization, the recovery process tends to be faster.

Another distinct advantage to using multiple mailbox stores is that, assuming that each store is placed on separate disks from the other stores and the transaction logs are also distributed onto separate disks, the I/O requirements for each volume are greatly decreased, because only a fraction of the total I/O is being directed at any one volume. Of course, the flip side to this is that this type of configuration costs more to implement because of the additional hardware requirements.

As you can see, there are compelling arguments for both approaches, so which one should you use? It really just depends on how much data your server is hosting. Microsoft recommends that you cap the mailbox store size based on the types of backups that you are using. For those running streaming backups, Microsoft recommends limiting the size of a store to no more than 50 GB. For organizations using VSS backups without a continuous replication solution in place, they suggest limiting your database size to 100 GB. This recommendation increases to 200 GB if you have a continuous replication solution in place.

There are a couple of things to keep in mind about these numbers though. First, the limits above address manageability, not performance. Second, the database’s size may impact performance, but it ultimately comes down to what your hardware can handle. Assuming that you have sufficient hardware, Exchange 2007 will allow you to create databases with a maximum size of 16 TB.
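As a rough illustration of how these caps translate into a database count, here is a minimal Python sketch. The function name and the strategy labels are made up for this example; only the size limits come from the recommendations quoted above. It answers the manageability question only and says nothing about whether your hardware can keep up.

```python
import math

# Recommended maximum database sizes (in GB) quoted in the text,
# keyed by backup strategy. The key names are illustrative labels,
# not Exchange terminology.
RECOMMENDED_MAX_DB_GB = {
    "streaming": 50,
    "vss": 100,
    "vss_with_continuous_replication": 200,
}


def databases_needed(total_mailbox_data_gb, backup_strategy):
    """Return the smallest number of databases that keeps each one under the cap."""
    cap_gb = RECOMMENDED_MAX_DB_GB[backup_strategy]
    # Round up, and never return fewer than one database.
    return max(1, math.ceil(total_mailbox_data_gb / cap_gb))


if __name__ == "__main__":
    for strategy in RECOMMENDED_MAX_DB_GB:
        print(strategy, databases_needed(450, strategy))
```

With 450 GB of mailbox data, the sketch suggests nine databases for streaming backups, five for VSS backups, and three when continuous replication is in place.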

If you do decide to split an information store into multiple databases, there are some hardware requirements that you will need to consider beyond just the disk configuration. For starters, each database is going to consume some amount of CPU time, although the actual amount of additional CPU overhead varies considerably.

You are also going to have to increase the amount of memory in your server as you increase the number of storage groups. Microsoft’s guidelines state that a mailbox server should have at least 2 GB of memory, plus a certain amount of memory for each mailbox user. The table below illustrates the per user memory requirements:

| User Type | Definition | Amount of Additional Memory Required |
| --- | --- | --- |
| Light | 5 messages sent / 20 messages received per day | 2 MB |
| Medium | 10 messages sent / 40 messages received per day | 3.5 MB |
| Heavy | Anything above average | 5 MB |

The 2 GB base memory, and the per user mailbox memory requirements make a couple of assumptions. First, it is assumed that the server is functioning solely as a mailbox server. If other server roles are installed, then the base memory requirement is increased to 3 GB, and the per user mailbox requirements remain the same.
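The arithmetic behind these guidelines is simple enough to sketch in a few lines of Python. This is only an estimate under stated assumptions: the function and dictionary names are hypothetical, 1 GB is treated as 1,024 MB, and the base figure assumes no more than four storage groups.

```python
# Base memory in MB, assuming 1 GB = 1024 MB and no more than four storage groups.
BASE_MEMORY_MB = {
    "mailbox_only": 2 * 1024,  # server runs only the mailbox role
    "multi_role": 3 * 1024,    # other server roles are also installed
}

# Additional memory per mailbox, in MB, taken from the user type table.
PER_USER_MB = {"light": 2.0, "medium": 3.5, "heavy": 5.0}


def estimated_memory_mb(user_counts, multi_role=False):
    """Estimate total memory (MB) from a mapping of user type to mailbox count."""
    base = BASE_MEMORY_MB["multi_role" if multi_role else "mailbox_only"]
    per_user = sum(PER_USER_MB[profile] * count
                   for profile, count in user_counts.items())
    return base + per_user


if __name__ == "__main__":
    mix = {"light": 500, "medium": 1000, "heavy": 200}
    print(estimated_memory_mb(mix))                   # mailbox role only
    print(estimated_memory_mb(mix, multi_role=True))  # additional roles installed
```

For a hypothetical mix of 500 light, 1,000 medium, and 200 heavy users, the per user portion comes to 5,500 MB on top of the base requirement.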

These recommendations assume that the server has no more than four storage groups though. Each storage group consumes some memory, so Microsoft requires additional memory as the number of storage groups increases.

When Microsoft released SP1 for Exchange 2007, they greatly decreased the amount of memory required for larger numbers of storage groups. For example, a server with 50 storage groups requires 26 GB of memory in the RTM release of Exchange 2007, but when SP1 is installed, the requirement drops to 15 GB. The table below illustrates how the base memory requirement changes as the number of storage groups increases.

| Number of Storage Groups | Exchange 2007 RTM Base Memory Requirements | Exchange 2007 Service Pack 1 Base Memory Requirements |
| --- | --- | --- |
| 1-4 | 2 GB | 2 GB |
| 5-8 | 4 GB | 4 GB |
| 9-12 | 6 GB | 5 GB |
| 13-16 | 8 GB | 6 GB |
| 17-20 | 10 GB | 7 GB |
| 21-24 | 12 GB | 8 GB |
| 25-28 | 14 GB | 9 GB |
| 29-32 | 16 GB | 10 GB |
| 33-36 | 18 GB | 11 GB |
| 37-40 | 20 GB | 12 GB |
| 41-44 | 22 GB | 13 GB |
| 45-48 | 24 GB | 14 GB |
| 49-50 | 26 GB | 15 GB |
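If you want to look these figures up programmatically, for example in a capacity planning script, the table can be encoded as a short Python sketch. The names below are hypothetical, and the sketch assumes that the row between 37-40 and 45-48 covers 41 to 44 storage groups.

```python
# Each entry: (highest storage group count in the range, RTM GB, SP1 GB).
# Assumption: the row between 37-40 and 45-48 covers 41 to 44 storage groups.
BASE_MEMORY_TABLE = [
    (4, 2, 2), (8, 4, 4), (12, 6, 5), (16, 8, 6), (20, 10, 7),
    (24, 12, 8), (28, 14, 9), (32, 16, 10), (36, 18, 11),
    (40, 20, 12), (44, 22, 13), (48, 24, 14), (50, 26, 15),
]


def base_memory_gb(storage_groups, sp1=True):
    """Return the base memory requirement in GB for a storage group count."""
    if not 1 <= storage_groups <= 50:
        raise ValueError("storage group count must be between 1 and 50")
    for upper_bound, rtm_gb, sp1_gb in BASE_MEMORY_TABLE:
        if storage_groups <= upper_bound:
            return sp1_gb if sp1 else rtm_gb


if __name__ == "__main__":
    print(base_memory_gb(50, sp1=False))  # RTM figure for 50 storage groups
    print(base_memory_gb(50))             # SP1 figure for 50 storage groups
```

The per user memory from the earlier table still has to be added on top of whatever base figure this returns.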

One Last Disk Related Consideration

Exchange Server 2007 Enterprise Edition allows you to use up to 50 storage groups. I have only worked with a deployment of this size on one occasion, but I made an interesting observation that I had never seen specifically pointed out in any of the documentation.

Normally, server volumes are referenced by drive letters. If you have a server with 50 stores, all on separate volumes, then you more than exhaust the available drive letters. As such, you will have to address the volumes as mount points rather than drive letters.

Backups

I don’t know about you, but when I think about optimizing a server’s performance, backups are not usually the first thing that comes to mind. Even so, my experience has been that you can get a big performance boost just by changing the way that nightly backups are made. Let me explain.

Most organizations still seem to be performing traditional backups, in which the Exchange Server’s data is backed up to tape late at night. There are a couple of problems with this though. For starters, the backup process itself places a load on the Exchange Server, which often translates into a decrease in the server’s performance until the backup is complete. This probably isn’t a big deal if you work in an organization that has a nine to five work schedule, but if your organization is a 24 hour a day operation then a performance hit is less than desirable.

Another aspect of the nightly backup that many administrators don’t consider is that a nightly backup almost always coincides with the nightly automated maintenance tasks. By default, each night from midnight to 4:00 AM, Exchange performs an automated maintenance cycle.

This automated maintenance cycle performs several different maintenance tasks, including an online database defragmentation. These maintenance tasks tend to be I/O intensive, and the effect is compounded if a backup is running against a database at the same time that the maintenance tasks are running.

There are a few ways that you can minimize the impact of the maintenance cycle and the backup process. One recommendation that I would make would be to schedule the maintenance cycle so that it does not occur at an inopportune moment.

The maintenance cycle occurs at the database level. If you’ve got multiple databases, then by default the maintenance cycle will run on each database at the same time. Depending on how many databases you’ve got, you may be able to schedule the maintenance cycle so that it is only running against one database at a time. Likewise, you may also be able to work out a schedule that prevents the maintenance cycle and the backup from running against the same database at the same time.

If you want to see the current maintenance schedule for a database, open the Exchange Management Console, and navigate through the console tree to Server Configuration | Mailbox. Next, select your Exchange mailbox server in the details pane, followed by the store that you want to examine. Right click on the store and then select the Properties command from the resulting shortcut menu. You can view or modify the maintenance schedule from the resulting properties sheet’s General tab.
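Before changing any schedules, it can help to plan the slots on paper first. The following Python sketch shows one simple way to do that: it splits a single nightly window into equal, non-overlapping slots, one per database. It does not talk to Exchange at all; the function name and database names are invented for the example, and the midnight to 4:00 AM window is just the default mentioned above.

```python
from datetime import datetime, timedelta


def stagger_maintenance(databases, window_start="00:00", window_hours=4):
    """Split one nightly window into equal, non-overlapping slots per database."""
    if not databases:
        raise ValueError("at least one database is required")
    start = datetime.strptime(window_start, "%H:%M")
    slot = timedelta(hours=window_hours) / len(databases)
    schedule = {}
    for index, name in enumerate(databases):
        slot_start = start + index * slot
        slot_end = slot_start + slot
        schedule[name] = (slot_start.strftime("%H:%M"),
                          slot_end.strftime("%H:%M"))
    return schedule


if __name__ == "__main__":
    # Hypothetical database names, used only for illustration.
    for name, (begin, end) in stagger_maintenance(["DB1", "DB2", "DB3", "DB4"]).items():
        print(name, begin, end)
```

Keep in mind that the more databases you have, the shorter each slot becomes, so you may need a longer overall window to give the maintenance tasks enough time to finish. The same approach can be used to keep the backup window for a database away from its maintenance slot.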

Another way that you can mitigate the overhead caused by the backup process is to take advantage of Cluster Continuous Replication (CCR). Although CCR is no substitute for a true backup, CCR does use a process called log shipping to create a duplicate of a database on another mailbox server. It is possible to run your backups against this secondary cluster node rather than running them against your primary Exchange Server. That way, the primary Exchange Server is not impacted by the backup.

If you do decide to use CCR, then you will have to keep in mind that you are only allowed to have one database in each storage group. Otherwise, the option to use CCR is disabled.

The Windows Operating System

Another aspect of the optimization process that is often overlooked is the Windows operating system. Exchange rides on top of Windows, so if Windows performs poorly, then Exchange will too.

One of the best things that you can do to help Windows to perform better is to place the pagefile onto a dedicated hard drive (or better yet, a dedicated array). You should also make sure that the pagefile is sized correctly. Microsoft normally recommends that the Windows pagefile should be 1.5 times the size of the machine’s physical memory. There is a different recommendation for Exchange 2007 though. Microsoft recommends that you set the pagefile to equal the size of your machine’s RAM, plus 10 MB. If you use a larger pagefile, then the pagefile will eventually become fragmented, leading to poor performance.
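To see how much the two pagefile recommendations differ, here is a small Python sketch of the arithmetic. The function names are hypothetical, and sizes are expressed in megabytes with 1 GB treated as 1,024 MB.

```python
def general_pagefile_mb(ram_mb):
    """General Windows guidance from the text: 1.5 times physical memory."""
    return int(ram_mb * 1.5)


def exchange_pagefile_mb(ram_mb):
    """Exchange 2007 guidance from the text: physical memory plus 10 MB."""
    return ram_mb + 10


if __name__ == "__main__":
    ram_mb = 16 * 1024  # a server with 16 GB of RAM, assuming 1 GB = 1024 MB
    print(general_pagefile_mb(ram_mb))
    print(exchange_pagefile_mb(ram_mb))
```

On a server with 16 GB of RAM, the Exchange recommendation works out to a pagefile roughly 8 GB smaller than the general rule would produce.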

Finally, make sure that your server is running all of the latest patches and drivers. You should also take the time to disable any services that you don’t need. Every running service consumes a small amount of system resources, so disabling unneeded services can help you to reclaim these resources.

Conclusion

In this article, I have explained that while Exchange Server has improved over the years, there are still a lot of things that you can do to help Exchange to perform even better. This is especially important in larger organizations in which the server’s finite resources are being shared by many different users.
