The series so far:

- Oracle sequences: The basics

- Oracle sequences: RAC

- Typical uses of Oracle sequences

- Oracle sequences – 12c features including “identity”

In my first article on Sequences, I reviewed the fundamental reason why Oracle Corp. introduced the mechanism (i.e. synthetic/surrogate keys) and the mechanical threats (cache size and index contention) that still had to be addressed by the developer/DBA. In this article, I’ll spend most of my time explaining how the problems can get worse (and be addressed) as you move from single-instance Oracle to multi-instance Oracle (RAC). I’ll end the article by mentioning the newer features of sequences that appeared in the upgrades from 12c to 19c, emphasizing one RAC scalability feature.

NOTE: Literally minutes after I had emailed the final draft of this article to the Simple Talk editor, a question came up on the Oracle-L list server asking why duplicate values were appearing from a sequence with scale and extend enabled when the queries accessing it were running parallel. The answer arrived shortly afterwards: this is due to (unpublished) bug 31423645, fixed in Oracle 19.11 with back-ports possible for earlier versions of Oracle.”

RAC

Whenever you move from single-instance to multi-instance, the fundamental problem is how to avoid excessive competition between instances for “popular” data blocks. This generic issue turns into two specific issues for sequences:

- Refreshing the sequence “cache” updates a specific row in table

sys.seq$. - Every session that’s inserting consecutive values into an index will be trying to access the same “right-hand / high-value” index leaf block.

There is another issue with sequences and RAC, though, that has to be considered before worrying about possible hot spots. How do the instances co-ordinate their use of sequence values and avoid the risk of two instances using the same value? There are two solutions: the default noorder mechanism where each instance behaves as if it doesn’t know about the other instances. The other is the order option, where the instances continuously negotiate through the use of global enqueues to determine which instance should be responsible for the sequence at any moment.

Default Mechanism (noorder)

Imagine you have created a sequence with the basic command

|

1 |

create sequence s1 cache 5000; |

As demonstrated in the previous article, Oracle creates a row in the seq$ table with highwater = 1 and cache = 5000. The first instance to call for s1.nextval reads this row into its global memory area and returns the value 1 to the calling session, updating the table to set the table’s highwater to 5001. (Note: I have said that the “instance” is calling for a value, more accurately, I should say a session in the instance.)

What happens when the next instance calls for s1.nextval? It will do what any instance normally does; it will read the current values of the row from seq$ and say “the current high value is 5001, the cache size is 5000; I will update seq$.highwater to 10,001 and return the value 5001 to the session”. If a third instance then calls for s1.nextval, the same procedure takes place – it reads the current state of the row, updates the highwater to 15,001, and returns 10,001 to the user session.

Any of the three instances could be the first to exhaust its cache – when it does, it will read the seq$ row again, update the highwater by 5,000 and return the value that used to be the highwater. If this is the first instance, its call to nextval will jump from 5,000 to 15,001.

The upshot of this noorder mechanism is that each instance will be working its way through a different range of numbers, and there will be no overlaps between instances. If you had sessions that logged on to the database once per second to issue a call to nextval (and they ended up connecting through a different instance each time), then the values returned would appear to be fairly randomly scattered over a range dictated by “number of instances x cache size.” Uniqueness would be guaranteed, but ordering would not.

What does this do for the two hot spots, though? I chose a cache size of 5,000 rather than leaving it to default to 20 so that there would be a reasonably large gap between instances that would help to address both points. As it stands, the seq$ block for the sequence’s row would move between instances to be updated at a fairly low frequency. The instances will spend most of their time inserting key values into leaf blocks that are spaced a few blocks apart in the index with intermittent collisions each time an instance refreshes its cache. To a very large degree, optimizing a sequence in a RAC system simply means setting a big enough cache size – in some cases cache 1e6 would be a perfectly reasonable setting.

This isn’t a complete solution to all the performance problems, and I’ll have more to say about that after a brief diversion into sequences created with the non-default order option.

Sequences with ORDER

If you’re running single-instance Oracle, then your sequence values are always generated in order. Some of the values may get lost, some of them may be used out of order by the sessions that acquired them, but there is only one source for “the next value.” That value is generated by the instance as “the previous value” plus “the increment,” so the values are always generated in order.

As noted above, in multi-instance Oracle, the instances will, by default, have separate non-overlapping caches that will be out of synch with each other by an amount relating to the cache size. When you view the sequence from a global perspective, there’s no guarantee that values will be generated in order – and that’s where the RAC-specific order option comes into play.

If you declare a sequence with the order option, Oracle adopts a strategy of using a single “cache” for the values and introduces a mechanism for making sure that only one instance at a time can access and modify that cache. Oracle does this by taking advantage of its Global Enqueue services. Whenever a session issues a call to nextval, the instance acquires an exclusive SV lock (global enqueue) on the sequence cache, effectively saying, “who’s got the most up to date information about this sequence – I want control”. The one instance holding the SV lock in exclusive mode is then the only instance that can increment the cached value and, if necessary, update the seq$ table by incrementing the highwater. This means that the sequence numbers will, once again, be generated in order.

The immediate penalty you pay for invoking the order option is that you serialize the generation of values. The rate at which you can generate sequence numbers is dictated by the rate at which the Global Enqueue Server processes (LCK0/LMD) can manage to move the SV lock for the sequence between the instances. Unless your sequence is only supposed to supply values at a fairly low rate, you probably don’t want to use this option – it doesn’t scale.

It is an odd detail that while the sequence information is passed between instances through the SV enqueue, the enqueue statistics (v$enqueue_stat) won’t show any gets on the SV enqueue. (The system wait events (v$system_event) will report waits for enq: SV - contention, but the individual sessions (v$session_event) will show these waits only as events in waitclass other.)

Performance Impact

All the performance issues that appear in single-instance Oracle reappear in multi-instance Oracle but tend to get worse because of the need for the instances to co-ordinate their activity through the Global Cache Service or Global Enqueue Service (or both).

In particular, when an instance needs to update seq$, it may need to call the Global Cache service to get exclusive access (gc current get) to the block that it needs to update. Similarly, if the sequence is being used to generate a unique key, then the instance may also need to call the global cache service to get exclusive access to the relevant index leaf block, and the “right-hand/high-value” problem that appears in single-instance Oracle can become a disaster area in multi-instance Oracle.

The possible threats are made a little more subtle, though, by the choice between declaring the sequence as order or noorder. There are 4 combinations to consider:

Noorder / cache N

Order / cache N

Noorder / nocache

Order / nocache

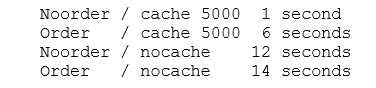

To give you some idea of the effect of the different options, I set up a 3-node RAC system and ran a simple PL/SQL block on each node to do 1,000 single-row inserts of sequence.nextval with a 1/100 second sleep between inserts. I then tested each of the 4 options above (with N = 5000 for the cache tests). The actual work done for the inserts was tiny (less than 1 second CPU); the excess wait time due to RAC-related wait events was as follows (in order of excess time lost):

Clearly, cache is better than nocache, and noorder is better than order. A large cache with noorder is by far the most efficient option. The overheads of global enqueue management for ordering are significant, as are the overheads of maintaining the seq$ table.

There are defects to this specific test, of course. First, it’s running VMs, so the virtual interconnect speeds are slower than they would be on big dedicated hardware; secondly, I haven’t included any indexes in this test, and the different patterns of index contention could be significant; finally, I’m only using one session per instance to do the inserts while production systems are likely to see different patterns of contention between sessions that are running on the same instance.

There are too many possible variations in the patterns of activity that different applications might have, so it’s not sensible for me to create and report tests for all of them. Just remember that you need to think about how your application will be working, then design a couple of models to work out the most appropriate strategy for your circumstances. I will, however, make a few general comments on how the different options may affect the performance of your production system based on my initial statement about the two critical points: refreshing the sequence cache and inserting into the sequence-based unique index.

Noorder / Cache N

Refreshing the cache: an instance that reaches its high water and needs to refresh its cache will have to acquire the relevant seq$ block in exclusive mode. For low cache values (e.g. the default 20), this may happen very frequently and introduce significant waits for “gc” (global cache) events. For large values (e.g. the 5,000 in my test), this may be sufficiently rare that any “gc” waits are insignificant compared to the rest of the workload.

Inserting into the index: imagine two instances and a sequence with a cache size of 5,000 where node 1 has been busier than Node 2. Node 1 is currently inserting values in the range 25,001 to 30,000 and has just reached 28,567. Node 2 is inserting values in the range 5,001 to 10,000 and has just reached 9,999. The two instances are inserting into different index leaf blocks, so there’s no contention. After one more insert, Node 2 needs to refresh its cache, so it now starts to insert values in the range 30,001 to 35,000, but Node 1 is currently inserting a few values just a little larger than 28,567. The two nodes will be inserting into the same high-value leaf block for a little while until that leaf block splits and probably leaves Node 1 inserting into one leaf block and Node 2 inserting into the one just above it. For a couple of seconds, there might be a fierce battle for ownership between the two instances, and you’re likely to see waits for various “gc” events for the index, including “gc cr block busy”, “gc buffer busy release” and “gc buffer busy acquire”. I’ll comment on the workaround, or damage limitation mechanism, for this behaviour on my way to the section on 12c enhancements.

Order / Cache N

Refreshing the cache: the comments from “noorder/cache N” regarding “gc” waits still apply, but since only one instance will need to update the seq$ table at any one moment, the impact will be greatly reduced. But this savings has been at the cost of the continuous stream of waits for the SV enqueue.

Inserting into the index: If you don’t do something to avoid the issue, the contention on the right-hand / high-value leaf block on the index will be huge. Every instance will constantly be demanding the block in current mode, and you will see a lot of waits for “gc” events for the index; you may also see several waits for “enq: TX – index contention” as the current high_value block splits. Of course, if you take action to avoid the contention on the high-value index leaf block, you must have done something that stops consecutive values belonging to the same index leaf block, which means they can’t be consecutive anymore. This suggests you didn’t really need the “order” option.

Noorder / NoCache

Refreshing the cache: If there’s no “cache”, the row in seq$ has to be updated on every single call to sequence.nextval. Every instance will request exclusive access to the relevant block and show lots of time lost on various “gc” waits. However, since updating the highwater also results in the dictionary cache (rowcache) being updated, there will also be a lot of time spent waiting on “row cache lock” events.

Inserting into the index: Even though you’ve specified noorder, the effect of the nocache means that the values supplied will be very well ordered with no “cache-size” gap between instances. All the instances will insert into the same high-value leaf block at the same time. There will be “gc” waits on the index as well as on seq$. Given all the waits for seq$ and row cache locks, the extra time lost due to these waits for the index leaf block may not be extreme.

Order / Nocache

Refreshing the cache: As for noorder/nocache, there is no cache, so every call to sequence.nextval is a request to update the seq$ table and invalidate the row cache entry for the sequence on all instances. In fact, I could see no difference between order/nocache and noorder/nocache. Possibly the difference in timing between these two cases in my test was purely one of luck.

Inserting into the index: Again, the argument of noorder/nocache applies.

Performance – further thoughts

From the list of 4 combinations, it should be clear that noorder/cache N with a large cache is the most scalable option, as it separates the (index) activity of the instances and minimizes the contention for updating the seq$ table. However, it still has effects that might need further consideration.

First is the simple observation that you can expect the index to be roughly twice the “packed” size. If you do a test on a single instance inserting into an indexed column the values from 1 to 1,000,000 from one session and the values from 1,000,001 to 2,000,000 from another session, you will find that the lower range of values will result in 50/50 leaf block splits. In contrast, the upper range of values will result in 90/10 splits. If you have N instances doing inserts from a sequence with a large cache, all but the instance using the top-most range of values will be doing 50/50 leaf block splits all the time. The only comment I can make on this is that it isn’t nice, but it’s probably not terribly important.

More significant is that I’ve ignored the effects of multiple sessions in each instance using the sequence for inserts. In a single instance (of a multi-instance RAC), you could have many sessions inserting values that are very similar, and though this won’t generally produce contention between instances, it can produce contention between sessions in the same instance (i.e. buffer busy waits, index ITL waits, etc.). The “single instance” workaround I mentioned in the previous article of adding a factor like (1 + mod(sid, 16)) * 1e10 to the value supplied by the sequence is a positive danger in these circumstances. For example, entries generated by session 99 of instance 1 might end up in the same index leaf block as rows generated by session 99 of instance 2. You need to go one step further for RAC. In addition to adding a factor to spread sessions across multiple leaf blocks, you also need to add a factor that ensures that different instances will stay separated by adding an “instance factor” like (1 + mod(instance,999) * 1e14 as well. And this brings me to 12c Enhancements.

Sequences in 12c

Oracle 12c (12cR2) brings several enhancements to sequences. You can associate a sequence with a table column using the identity mechanism. Alternatively, you can use “sequence.nextval” as a default value for a column if you’d rather not declare an identity. You can create sequences that can be used “locally” – i.e. they are private to a session. You can restart a sequence. Finally, 12c automates the business of minimizing index contention on RAC by introducing the “scale” option (though it’s not documented in the SQL Reference manual and probably shouldn’t be used until 18c).

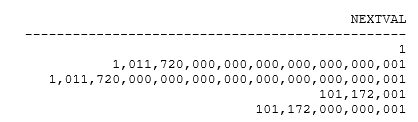

Here’s a little script that produces the same pattern of results on 12.2.0.1 and 19c, demonstrating the new automatic scalability option:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 |

create sequence s1; create sequence s2 scale; create sequence s3 scale extend; create sequence s4 maxvalue 1e8 scale; create sequence s5 maxvalue 1e8 scale extend; create sequence s6 maxvalue 1e5 scale; set linesize 48 column nextval format 999,999,999,999,999,999,999,999,999,999,999,999 select s1.nextval, s2.nextval, s3.nextval, s4.nextval, s5.nextval from dual / |

I’ve declared the first three sequences without a maxvalue (which means the implicit maxvalue is 1e28 – 1) and the next two with an explicit maxvalue of 1e8. I’ve given the final sequence a maxvalue of 1e5, but for reasons that will become clear, I haven’t tried to select it from dual.

All six sequences have supplied the value 1 – with some adjustments – as their first value. Apart from the simple declaration of s1, the results have all been expanded to include a leading six-digit number: 101720. The first three digits are (100 + mod(instance_number,100)), and the next three are mod(sid,1000). This matches exactly the sort of strategy I’ve described above and in the previous article to minimize contention on index leaf blocks (at the cost of seeing 50/50 leaf block splits almost everywhere.)

The significance of the extend option is visible when you compare s2 with s3 or s4 with s5. In the absence of the extend option, the value created by the six-digit prefix has to fall within the defined maxvalue. This means the largest possible “raw” sequence number will be 6 orders of magnitude lower than you expected. (And that’s why I didn’t try selecting s6.nextval from dual – it would have raised Oracle error “ORA-64603: NEXTVAL cannot be instantiated for S6. Widen the sequence by 1 digits or alter sequence with SCALE EXTEND”). If you include the extend option, the prefix is multiplied by enough powers of 10 that your actual specified maxvalue can be reached without raising an error.

It’s a nice thought that your code no longer needs to impose scalability on the use of sequences, but you do still have to do a little design work before you take advantage of this option. If you’ve implemented something like this new “double prefix” in the past to maximize the scalability of your sequences, you will already be familiar with the problem: you need to decide the smallest possible safe maxvalue for a sequence before you create the sequence.

Imagine you actually needed “raw” values up to 1e8 (ca. 6 bytes), then a suitably selected maxvalue with scale and extend would give you a 15 digit number (ca. 9 bytes). If you hadn’t specified a maxvalue, your sequence would become a 28 (or 32, with extend) digit number. This means a typical 15 (or 17) bytes which would leave you storing an extra 6 (or 8) bytes per row, per index entry in the primary key, per row in any child table, and per index entry in any “foreign key index”. This may not matter very much if it avoids a performance threat, but it’s a detail that requires a deliberate choice – you should always try to be aware of consequences, even if you decide to ignore them.

Summary

This article reviewed sequences in light of the basic contention threat that is always present in any multi-instance (RAC) system and identified two hot spots: updates to the seq$ table as a sequence cache is refreshed, and the high-value index leaf block if nothing is done to spread the sequence values used by different instances across different leaf blocks.

It’s shown that the default RAC behaviour of sequences – where every instance gets its own range of values – helps to avoid the index contention, though a large cache size is needed for the best effect. At the same time, it demonstrated that a large cache size minimizes the frequency of updates to, hence competition for, the seq$ table.

The order option decreases scalability as it makes every instance compete for the current high-value index leaf block. It also adds overhead as the sequence information is passed around the instances through the Global Enqueue service.

Even though you can reduce cross-instance contention by setting a large cache size, you will still have some contention between one pair of instances each time an instance refreshes its cache. There will still be contention between sessions within each instance as they compete for the “instance-local” high-value index leaf block. This leads us to the idea of pre-fixing the sequence value with a two-part prefix constructed from the instance number and session number. In 12c (though documented only in 18c), Oracle allows creating a sequence that handles this work with no extra coding required. However, the necessary static declaration does require deciding whether to use some extra space to avoid any risk of running out of sequence values.

I’ve also briefly mentioned a couple of new features in 12c that add a little extra power and convenience to sequences. The next article will start looking at examples of the various ways to use sequences, and how they work might sometimes be surprising.

Load comments