Getting HTML content into SQL Server is a recurring integration task where structured data lives on a web page (or in an HTML file) and needs to become relational rows for querying. Direct approaches (SSIS web source, CSV-after-Excel-paste, regex against raw HTML) tend to break on real-world HTML’s irregularities – missing closing tags, nested structure, mixed content.

This article demonstrates a more robust approach using the HTML Agility Pack (HAP), a .NET library that parses real-world HTML reliably, driven from PowerShell

In brief, the approach is:

(1) use Invoke-WebRequest to fetch the page;

(2) load the HTML into a HAP HtmlDocument;

(3) use XPath or LINQ queries against the document tree to extract tables, lists, or specific elements;

(4) stage the extracted data in a PowerShell DataTable;

(5) write the DataTable to SQL Server via Write-SqlTableData or bulk-copy.

The same pattern generalises to any structured web-source ingestion – price monitoring, reference-data feeds, scheduled HTML-report scraping. Works on any Windows machine with PowerShell and the HAP NuGet package.

The problem at hand

Quite a lot of developers would like to read the data reliably from websites, usually in order to subsequently load the data into a database. There are several ways of doing so, and I’ve used most of them. If it is a one-off process, such as getting the names of countries, colours, or words for snow, then it isn’t much of a problem. If you need to do it more regularly when data gets updated, then it can become more tedious.

Any system that you use is likely to require constant maintenance because of the shifting nature of most websites. There are a number of snags which aren’t always apparent when you’re starting out with this sort of ‘web-scraping’ technology. From a distance, it seems easy.

The HTML table

An HTML table is the most obvious place to find data. An HTML table isn’t in any way equivalent to a database table. For a start, there seems to be wide range of opinions about how an HTML data table should be structured. The data, too, must always be kept at arms-length within the database until it is thoroughly checked. Some people dream of being able to blast data straight from the web page into a data table. So easily, the dream can become a nightmare.

Imagine that you have an automated routine that is set up to get last week’s price movements for a commodity from a website: Sugar Beet, let us say. Fine. Because the table you want doesn’t have any id or class to uniquely identify it within the website, you choose instead to exploit the fact that it is the second table on the page. It all works well, and you are unaware that the first table, used for formatting the headings and logo prettily, is replaced by some remote designer in another country with a CSS solution using DIVs. The second table then becomes the following table, containing the prices for an entirely different commodity: Oilseed Rape.

Because the prices are similar and you do not check often, you don’t notice, and the business takes decisions on buying and selling sugar-beet based on the fluctuations in the price of Oilseed Rape. Other things can go wrong. Designers can change tables by combining cells, either vertically or horizontally. The order of columns can change (some designers apparently don’t think that column headings are ‘cool’). Other Websites use different table structures or don’t use TH tags for headings. Some designers put in extra columns in a table of data purely as ‘spacers’, for sub headings, pictures, or fancy borders.

There are plenty of ways of fighting back against sloppy web-pages to do web-scraping. You can get to your data more reliably if it is identified by its ID or the class assigned to it, or if it is contained within a consistent structure. To do this, you need a rather efficient way of getting to the various parts of the webpage.

If you are doing an aggregation and ‘warehousing’ of data from a number of sources you need to do sanity checks. Is the data valid and reasonable? Has it fluctuated beyond the standard deviation? Is it within range? You need to do occasional cross-checks of a sample of the data with other sources of the same data.

In one company I worked for, the quantitive data for product comparisons with competitors were stored in Metric units for some products and Imperial units for others, without anyone noticing for months. It only came to light when we bought one of the competitors and their engineers then questioned the data. Any anomalies, however slight they seem have to be logged, notified and then checked by an Administrator. There must be constant vigilance. Only when you are confident can you allow data updates.

I see DBAs as being more like data-zoo-keepers than data-police. I like to check the data in a transit area within the database in order to do detailed checking before then using the data to update the database.

The alternatives

Of the various methods of extracting data from HTML tables that I’ve come across…

- Regex dissection.

- This can be made to work but it is bad. Regexes were not designed for slicing up hierarchical data. An HTML page is deeply hierarchical. You can get them to work but the results are overly fragile. I’ve used programs in a language such as Perl or PHP in the past to do this, though any .NET language will do as well.

- Recursive dissection.

- This will only work for well-formed HTML. Unfortunately, browsers are tolerant of badly-formed syntax. If you can be guaranteed to eat only valid XHTML, then it will work, but why bother when the existing tools exist to do it properly.

- Iterative dissection:.

- This is a handy approach in a language like SQL, when you have plenty of time to do the dissection to get at the table elements. You have more control that in the recursive solution, but the same fundamental problems have to be overcome: The HTML that is out there just doesn’t always have close-tags such as ‘</p>’, and there are many interpretations of the table structure out there. Robyn and I showed how this worked in TSQL here.

- ODBC.

- Microsoft released a Jet driver that was able to access a table from an HTML page, if you told it the URL and used it if you were feeling lucky. It is great when this just works, but it often disappoints. It is no longer actively maintained by Microsoft. I wrote about it here.

- Driving the browser

- Sometimes, you’ll need to drive the IE browser as a COM object. This is especially the case if the site’s contents is dynamic or is refreshed via AJAX. . You then have to read the DOM via the document property to get at the actual data. Let’s not go there in this article! In the old days, we used to occasionally use LINX text browser for this, and then parse the data out of the subsequent text file.

- OCR.

- You may come across a Flash site, or one where the data is done as ‘text as images’. Just screendump it and OCR the results. (for one-offs I use Abbyy screenshot reader!).

- XPath queries

- You can use either the .NET classes for XML or the built-in XQuery in SQL Server to do this. It will only work for valid XHTML.

- XSLT

- This is always a good conversation-stopper. ‘easier to use XSLT’. I’ve never tried it for this purpose, but there will always be someone who will say that it is soooo easy. The problem is that you are not dealing with XML or, for that matter, XHTML.

- HTML Agility Pack

- This is so easy that it makes XPath seem quite fun. It works like standard XPath, but on ordinary HTML, warts and all. With it you can slice and dice HTML to your heart’s content.

Using the HTML Agility Pack with PowerShell.

The HTML Agility Pack (HAP) was originally written by Simon Mourier, who spent fourteen years at Microsoft before becoming the CTO and co-founder of SoftFluent. The HAP is an assembly that works as a parser, building a read/write DOM and supporting plain XPath or XSLT. It exploits dotNet’s implementation of XPath to allow you to parse HTML files straight from the web.

The parser has no intrinsic understanding of the significance of the HTML tags. It treats HTML as if it were slightly zany XML. The HAP It works in a similar way to System.XML, but is very tolerant with malformed HTML documents, fragments or streams that are so typically found around the Web. It can be used for a variety of purposes such as fixing or generating HTML pages, fixing links, or adding to the pages. It is ideal for automating the drudgery of creating web pages, such as creating tables of contents, footnotes, or references for HTML documents. It is perfect for Web-scrapers too. It can even turn a web page into an RSS feed with just an XSLT file as the binding. It can be downloaded from here. It has been recently converted so as to now support LINQ to Objects via a ‘LINQ to Xml’-like interface

It isn’t possible to use the HTML Agility Pack easily from within SQL Server, though you could probably write a CLR library pretty quickly. The downside is that you’d need to access the internet from your production server, which would be silly. However, driving the HAP from PowerShell, or any other language that you might prefer, is pretty easy.

First step

Here is some PowerShell that lists out some classical insults as text, from a test website. Quite simply, it downloads the file, gulps it into the HAP as a parsed file and then pulls the text out of the second table. (‘(//table)[2]’ means the second table, wherever it is in the document, whereas ‘(//table)[2]’ means the second table of the parent element, which isn’t quite the same thing)

|

1 2 3 4 5 |

add-type -Path 'C:\Program Files (x86)\htmlAgilityPack\HtmlAgilityPack.dll' $HTMLDocument = New-Object HtmlAgilityPack.HtmlDocument $wc = New-Object System.Net.WebClient $result = $HTMLDocument.LoadHTML($wc.DownloadString("http://www.simple-talk.com/blogbits/philf/quotations.html")) $HTMLDocument.DocumentNode.SelectSingleNode("(//table)[2]").InnerText |

This will give a list of insults. You would need to install the Agility Pack and give the path to it or put it in your Global Assembly Cache (GAC). In this, and subsequent PowerShell scripts, I’m giving quick ‘n dirty code that gets results, along with some test HTML pages, just so you can experiment and get a feel for it.

You’ll notice that this first script extracts the meat out of the sandwich by the means of XPath queries. Normally, ordinary humans have no business looking at these, but in certain circumstances they are very useful.

Of course, if you’re a database programmer, you’ll probably be familiar with XPath. Here is an example of shredding XHTML document fragments via XPath using SQL. I give just a small part of the document. Make sure that, in your mind’s eye, the precious text is surrounded by pernicious adverts and other undesirable content.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 |

DECLARE @xml xml SELECT @XML='<body> <ol id="insults""> <li>You have two ears and one mouth so you might listen the more and talk the less.</li> <li>You like your friends to be just clever enough to comprehend your cleverness and just stupid enough to admire it.</li> <li>You own and operate a ferocious temper. </li> <li>There is nothing wrong with you, that a miracle couldn''t cure.</li> <li>Why be disagreeable, when with a little effort you could be impossible? </li> <li>You look as if you had been poured into your clothes and had forgotten to say when.</li> </ol> </body> ' SELECT @xml.query('//li[2]/text()')--text of second element SELECT Loc.query('.') FROM @xml.nodes('/body/ol/li/text()') as T2(Loc) |

However, that works with XML documents, or XHTML fragments, but not HTML.

Getting the contents of a list

Let’s try PowerShell’s .NET approach with a list (OL, in this case), which is the other main data-container you’re likely to find in an HTML page

|

1 2 3 4 5 6 |

add-type -Path 'C:\Program Files (x86)\htmlAgilityPack\HtmlAgilityPack.dll' $HTMLDocument = New-Object HtmlAgilityPack.HtmlDocument $wc = New-Object System.Net.WebClient $HTMLDocument.LoadHTML($wc.DownloadString("http://www.simple-talk.com/blogbits/philf/listExample.html")) $list=$HTMLDocument.DocumentNode.SelectNodes("(//ol)[1]/li") #only one list foreach ($listElement in $list) {$listElement.InnerText.Trim() -replace ('\n', "`r`n ") -replace('(?<=\s)\s' ,'') } |

This is fun. Not only that but it is resilient too. You’ll notice that I’ve corrected, in this example, the interpretation of all the white-space within the text of the tag as being significant, just by using a couple of regex substitutions. If you execute this from xp_cmdshell in SQL Server, you’re laughing, since you can read it straight into a single-column table and from there into the database.

Getting data that isn’t in a table into an array

Let’s try something more serious. The front page of Simple-Talk.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 |

add-type -Path 'C:\Program Files (x86)\htmlAgilityPack\HtmlAgilityPack.dll' $HTMLDocument = New-Object HtmlAgilityPack.HtmlDocument $wc = New-Object System.Net.WebClient $HTMLDocument.LoadHTML($wc.DownloadString("http://www.simple-talk.com/default.aspx")) $Table=@() $articleDetails=$HTMLDocument.DocumentNode.SelectNodes("//div[@class='middle']/div[@class='articlesummary']") foreach ($articleDiv in $articleDetails) { $Tuple = "" | Select Title, Author, Synopsis $Tuple.Title = $articleDiv.SelectSingleNode("a").innertext $Tuple.Author = $articleDiv.SelectSingleNode("div[1]").innertext $Tuple.Synopsis = $articleDiv.SelectSingleNode("div[2]").innertext $Table +=$Tuple } $Table |

Here, you’ll immediately notice that we are specifying nodes by their class. We could use their IDs too. We’ve specified a couple of DIVs by their order within each of the articleDetails nodes. Where am I getting all this information on how to address individual parts of an HTML document via XPath? Well, we have a new wallchart which we have just published that has the information in it, and maps the functionality between XPath, CSS and javascript DOM. It should help a lot with the trickier bits of web-scraping.

We have also created a multidimensional array in which to put the data. This makes it easier to manipulate once we’ve finished.

Getting your data into SQL Server

Now that we have this data, what can we do to it? The easiest approach is to write it to file and then read it in to SQL Server via BCP. There is no need to explain how to read a file into SQL Server as this is already well-covered in Simple-Talk, here , here, and here. For the PowerShell file, we simply change the last line with

|

1 |

$Table | export-CSV 'SimpleTalkArticles.csv' |

I’ve left out any optional switches for simplicity. Or you can use Using the Export-Clixml Cmdlet if you wish to do so, to export it as an XML file.

There are a number of switches you can use to fine-tune the export of this XML data to file . You can even do this …

|

1 |

$Table |ConvertTo-HTML |

…in order to convert that data into a table in an HTML file (or a table fragment if you prefer)! If you then write it to file, it can then be read into Excel easily,

At this stage, I hope you feel empowered to create some quick-‘n-dirty PowerShell routines to grab HTML data from the internet and produce files that can then be imported into SQL Server or Excel.

The next stage would be to import into a SQL Server table from a PowerShell script. You can call a PowerShell script directly from SQL Server and import the data it returns into a table. Chad Miller has already covered an ingenious way of doing this without requiring a DataTable. If you are likely to want to import a lot of data, then a DataTable might be a good alternative.

Using a datatable

In this next ‘quick and dirty’ script we’ll create a DataTable that can then be exported to SQL Server. The most effective way of doing this is to use Chad Miller’s ‘Write-DataTable’ function. You can, if the urge takes you, write to an XML file as if it were a multidimensional array, and thence gulp it into SQL Server.

|

1 2 |

$DataTable.WriteXml(".\TableData.xml") $DataTable.WriteXmlSchema(".\TableData.xsd") |

To take out some tedious logic, we’ll do a generic table-scraper that doesn’t attempt to give the proper column names but gives, for each cell, the cell value, the row of the cell and the column of the cell. The headers are added as row 0 if they can be found. With this information, it is pretty easy to produce a SQL Server result, as we’ll show later on.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 |

add-type -Path 'C:\Program Files (x86)\htmlAgilityPack\HtmlAgilityPack.dll' $HTMLDocument = New-Object HtmlAgilityPack.HtmlDocument $wc = New-Object System.Net.WebClient $result = $HTMLDocument.LoadHTML($wc.DownloadString("http://www.simple-talk.com/blogbits/philf/TestForHTMLAgilityPack.html")) #create the table and the columns $DataTable = New-Object system.Data.DataTable 'TableData' $datacol1 = New-Object system.Data.DataColumn Row,([int]) $datacol2 = New-Object system.Data.DataColumn Col,([int]) $datacol3 = New-Object system.Data.DataColumn Value,([string]) #add the columns to the DataTable $DataTable.columns.add($datacol1) $DataTable.columns.add($datacol2) $DataTable.columns.add($datacol3) #iterate through the cells in the third table foreach ($Cell in $HTMLDocument.DocumentNode.SelectNodes("(//table)[3]//tr/td")) { $ThisRow=$DataTable.NewRow() $ThisRow.Item('Value')=$Cell.InnerText.Trim() -replace ('\n', "`r`n ") -replace('(?<=\s)\s' ,'') $ThisRow.Item('Col')=$Cell.XPath -creplace '(?sim)[^\s]*td\[([\d]{1,6})\]\s*\z', '$1' #column $ThisRow.Item('Row')=$Cell.XPath -creplace '(?sim)[^\s]*tr\[([\d]{1,6})\]/td\[[\d]{1,6}\]\s*\z', '$1' #row $DataTable.Rows.Add($ThisRow) } #and iterate through the headers (these may be in a thead block) foreach ($heading in $HTMLDocument.DocumentNode.SelectNodes("(//table)[3]//th")) { $ThisRow=$DataTable.NewRow() $ThisRow.Item('Value')=$heading.InnerText.Trim() $ThisRow.Item('Col')=$heading.XPath -creplace '(?sim)[^\s]*th\[([\d]{1,6})\]\s*\z', '$1' #column $ThisRow.Item('Row')=0 $DataTable.Rows.Add($ThisRow) } $DataTable |

This may look a bit wacky. The reason is that we’re taking advantage of a short-cut. The HTML Agility Pack returns the XPath of every HTML element that it returns, so you have all the information you need about the column number and row number without iterative counters. It assumes that the table is regular, without colspans or rowspans. Here is an example from one of the cells that we just parsed.

|

1 |

/html[1]/body[1]/table[1]/tr[47]/td[1] |

See that? It is the first table (table[1]) row 47, column 1 isn’t it. You want the rightmost figures too, in case you have nesting. You just have to parse it out of the XPath string. I used regex to get the ordinal values for the final elements in the path. The sample file has three tables, you can try it out with the other two by changing the table[1] to table[2] or table[3]

If we export the DataTable to XML using the …

|

1 |

$DataTable.WriteXml(".\TableData.xml") |

..in the end of the last PowerShell script, then we can soon get the result into a SQL table that is easy to manipulate.

|

1 2 3 |

DECLARE @Data XML SELECT @Data = BulkColumn FROM OPENROWSET(BULK 'C:\workbench\TableData.XML', SINGLE_BLOB) AS x |

In this example, I’ve left out most of the rows so that you can try this, out of sequence, without needing the table.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 |

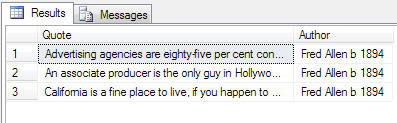

DECLARE @data XML SET @Data= '<?xml version="1.0" standalone="yes" ?> <DocumentElement> <TableData> <Row>1</Row> <Col>1</Col> <Value>Advertising agencies are eighty-five per cent confusion and fifteen per cent commission.</Value> </TableData> <TableData> <Row>1</Row> <Col>2</Col> <Value>Fred Allen b 1894</Value> </TableData> <TableData> <Row>2</Row> <Col>1</Col> <Value>An associate producer is the only guy in Hollywood who will associate with a producer.</Value> </TableData> <TableData> <Row>2</Row> <Col>2</Col> <Value>Fred Allen b 1894</Value> </TableData> <TableData> <Row>3</Row> <Col>1</Col> <Value>California is a fine place to live, if you happen to be an orange.</Value> </TableData> <TableData> <Row>3</Row> <Col>2</Col> <Value>Fred Allen b 1894</Value> </TableData> <TableData> <Row>0</Row> <Col>1</Col> <Value>Quotation</Value> </TableData> <TableData> <Row>0</Row> <Col>2</Col> <Value>Author</Value> </TableData> </DocumentElement>' Select max(case when col=1 then value else '' end) as Quote, max(case when col=2 then value else '' end) as Author from (SELECT x.y.value('Col[1]', 'int') AS [Col], x.y.value('Row[1]', 'int') AS [Row], x.y.value('Value[1]', 'VARCHAR(200)') AS [Value] FROM @data .nodes('//DocumentElement/TableData') AS x ( y ) ) rawTableData group by row having row >0 order by row |

Yes, you’re right, we’ve used XPath once again to produce a SQL Result.

By having the data with the same three columns whatever the data, you can simplify the transition to proper relational data.You can define a Table-valued Parameter to do lot of the work for you or even pass the data from PowerShell to SQL Server using a TVP. It makes the whole process very simple.

Using a web-scraper within an application

So, how would you go about scraping a site? The data is there but generally you have no idea of the structure of the site. I usually start by checking that the site can be read by this technique (dynamic content requires a very different technique) and that robots have not been specifically forbidden. If it requires authentication, a POST, or any other complication such as the user-agent, then it is better to use a more specialised tool to do the download. I like both CURL and WGET.

With most pages, it is easy to work out where the data that you want is held, just by inspecting the source. FireBug in Firefox will help you locate an element that is difficult to find. For the more complicated sites, it is simple to scan a site for a particular word or phrase in the InnerText using HAP, and get back the absolute XPath for the elements that have the phrase in their Innertext. You can also sometimes locate your data by the HREF of an anchor . This snippet displays the link for every anchor on the page and its corresponding XPath.

|

1 2 3 4 |

foreach( $link in $HTMLDocument.DocumentNode.SelectNodes("//a[@href]")) { $link.Attributes["href"].value +' is at address ' + $link.XPath } |

..or you can search for text, either within the element (contains) , starts-with and get the XPath of the elements

|

1 2 3 4 |

foreach( $element in $HTMLDocument.DocumentNode.SelectNodes("//td[contains(text(),'Fred') ]")) { $element.XPath + ' --- '''+$element.innerText+ '''' } |

With a fair wind and an XPath reference wallchart, a great deal is possible. If, for example, the data always has the same title, even if its location in the page varies, you can write a script that gets gets its location purely by looking for the heading. Some data is in ordinary paragraph tags, but you can still get at them via XPath if they follow a particular heading. XPath has a great deal of magic for awkward data-gathering.

For data-gathering, I generally use dedicated PCs within the domain. These need need very little power, so you can use old nags. I never let SQL Server itself anywhere near the internet. On these PCs, I have a scheduled task that runs a script that downloads the next task (ID and parameters) from the SQL Server, and runs it if it is due, returning the data to SQL Server, using windows authentication. Each task corresponds to a single data collection on one site. All errors and warnings are logged, together with the taskID, the User, and time of day, within the SQL Server database. When the data is received, it is scrubbed, checked, compared with the existing data and then, if all is well, the delta is entered into the database.

Conclusions

Using the HTMLAgilityPack is great for the run-of-the-mill reading of data, and you may never hit its limitations, unless of course you are scraping the contents of an AJAX site, or the data is in flash. isn’t perfect, since the HTML file is treated without understanding the semantics of HTML. This is fine up to a level of detail, but HTML tables really are awful because they allow you to mix presentation and meaning. The Colspan and Rowspan have no equivalent meaning in any sensible data table, and make extracting the data more tiresome than it need be.

Although I’ve tended to use RegEx queries in the past, I’m now convinced that the HTML Agility Pack is a more sensible approach for general use in extracting data from HTML in .NET

Load comments