Pseudonymizing a Database with Realistic Data for Development Work

How to use SQL Data Generator, and PowerShell to obfuscate personal data (names), while retaining the same distribution of data, so that the test database behaves like the original.

For this demonstration, we will take AdventureWorks and produce a pseudonymized copy for development work. The aim is to surgically alter just the data that can identify individuals but leave everything else intact. I’ve shown how to do something similar before, for a subset of the data, in Pseudonymizing your data with SQL Data Generator. However, this time we want to work with the whole database, and we also need to ensure that the new data has the same overall data distribution as the old data. We want the data to look uncannily like the real thing.

For the demonstration, we will restrict ourselves to removing just the names in a single table, and replacing them with randomised names, generated only from the characteristics of the real names, to get the same distribution as the original. Hopefully, the result is suitable for development work as well as testing.

Although we will use SQL Data Generator to do this, the principle also works with Data Masker for SQL Server, and you will want to use that tool if dealing with any relationships. The spoof data is generated according to a Markov distribution, in order to give it extra verisimilitude, and my Spoofing Data… series on Simple-Talk provides further details on using Markov chains in T-SQL, so I won’t repeat much of that detail here.

The Process

This process, once set up, is infinitely repeatable via PowerShell automation so that you can easily make it part of your daily build. It is designed to get over the problem that entirely generated data doesn’t ever conform to the same distribution of data as the original, or even to look much like the original data. Remember that were this real data, you would still need to keep the resulting data under the same security regime as the original, because it is pseudonymized rather than completely anonymized. Most of the data is left intact, so a determined hacker could identify a few individuals in pseudonymized data

Preparing a copy of the database with its data

In this demonstration, I’m using a database called Phasael, which is just copy of AdventureWorks2016, including the data. You can restore from a backup, attach a copy of the mdf file, or use the technique I show here, Deploying Multiple Databases from Source Control using SQL Change Automation, using SCA and BCP.

For the sake of this demonstration, we are only going to alter just one table, Person.Person. In reality, we’d need to find, and replace, all the personally identifiable information, such as the address information, and so on. We aren’t going to mess with any relationships, as defined by the FOREIGN KEY constraints. For that, you’d need Data Masker for SQL Server.

In SSMS, run Listing 1 on Phasael, to disable constraints. Don’t worry, this is a temporary thing you do when changing data and it is reversible, as I’ll eventually show.

|

1 |

EXEC sp_msforeachtable "ALTER TABLE ? NOCHECK CONSTRAINT all" |

Listing 1

Preparing to make lists of data

Now we create the new data in SQL, using the real data as inspiration. This is done only once when starting this process, and thereafter only occasionally, if the characteristics of the original data change significantly.

We create a new utility database or use an existing one on the same server as the database we are obfuscating. To start, we create some utility procedures that we’ll use to generate lists of column values that contain spoof values that conform as closely as possible to real values.

Surnames, for example, might look like this: Serez, Wara, Garson, Subran, Ree, Perris, Gomirez, Zhans, Lopez, Riversen, Murkera, Herry, Munoz, Natterrez, Shan, Raje, Zhe, Kumarro, Simmon, Johns, Lopez, Liu, Kin or Gomez.

A Markov table merely records the frequency of every set of adjacent three-letter combinations (trigraphs). Factor, for example will be composed of ‘Fac’ (position 1), ‘act’ (position 2), ‘cto’ (position 3), and ‘tor’ (position 4). Add a few thousand last names into the mix, and there will be several trigraphs that can follow ‘Fac’. If we then select ‘Fac’ by random numbers, according to its frequency in the distribution we would choose one of them to get the next letter. Obviously, they would all start with ‘ac’. You can do it randomly, or by frequency in the population. We would choose the latter, though the former provides funnier results, and more akin to ‘smudging’. You then just continue finding each trigraph, each of which contributes a letter to the final string. When you reach the point where a trigraph is a string-terminator, then you’ve got your value.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 61 62 63 64 65 66 67 68 69 70 71 72 73 74 75 76 77 78 79 80 81 82 83 84 85 86 87 88 89 90 91 92 93 94 95 96 97 98 99 100 101 102 103 104 105 106 107 108 109 110 111 112 113 114 115 116 117 118 119 120 121 122 123 124 125 126 127 128 129 130 131 132 133 134 135 136 137 138 139 140 141 142 143 144 145 146 147 148 149 150 151 152 153 154 155 156 157 158 159 160 161 162 163 164 165 166 167 168 169 170 171 172 173 174 175 176 177 178 179 180 181 182 |

/* drop anything that uses the two types we need to create */ IF Object_Id('dbo.MarkovTableFrom', 'IF') IS NOT NULL DROP FUNCTION dbo.MarkovTableFrom; GO IF Object_Id('dbo.FakedStringFrom') IS NOT NULL DROP FUNCTION dbo.FakedStringFrom; GO /* create the two types we will use as table-valued parameters */ IF EXISTS (SELECT * FROM sys.types WHERE types.name LIKE 'StringValue') DROP TYPE StringValue; /* Create a table type to store strings in. */ CREATE TYPE StringValue AS TABLE (String NVARCHAR(50) NOT NULL, Category INT NOT NULL DEFAULT 1); GO IF EXISTS (SELECT * FROM sys.types WHERE types.name LIKE 'Markov') DROP TYPE dbo.Markov; GO /* Create a table type to store Markov data in. */ /* Object: UserDefinedTableType dbo.Markov */ CREATE TYPE dbo.Markov AS TABLE (trigraph NCHAR(3) NOT NULL, iteration TINYINT NOT NULL, frequency INT NOT NULL, totalInSet INT NOT NULL, runningTotal INT NOT NULL, TheOrder INT NOT NULL, Choices INT NOT NULL, TheEND BIT NOT NULL, Category INT NOT NULL PRIMARY KEY CLUSTERED (iteration ASC, trigraph ASC, category) WITH(IGNORE_DUP_KEY = OFF)); GO /* Create a view to sneak into a function. Random numbers are detected and they are not allowed. */ IF Object_Id('dbo.SingleRandomNumber') IS NOT NULL DROP VIEW dbo.SingleRandomNumber GO CREATE VIEW [dbo].[SingleRandomNumber] AS SELECT Rand() AS RandomNumber; GO IF Object_Id('MarkovTableFrom') IS NOT NULL DROP FUNCTION MarkovTableFrom; GO CREATE FUNCTION MarkovTableFrom /** Summary: > in our table, we will take every three-character trigraph in the word sequence and calculate the frequency with which it appears in the collection passed to the routine. This is simple to do with a GROUP BY clause. The quickest way I've found of doing this is by a cross-join with a number table (I've used a VALUE table here to reduce dependencies). The result is then fed to a window expression that calculates the running total of the frequencies. You'll need this to generate the right distribution of three-character triads within the list of sample words This version of the Markov table has extra fields, more than is strictly necessary, in order to make it easier to do set-based operations using it. Author: Phil Factor Date: 10/07/2018 Database: Utility Examples: - Select trigraph, iteration, frequency, runningTotal, theOrder, choices, TheEnd from dbo.MarkovTableFrom(@MyStringValues) Returns: > A Markov Table **/ (@SampleStrings StringValue READONLY) RETURNS TABLE --WITH ENCRYPTION|SCHEMABINDING, .. AS RETURN -- (trigraph, iteration, frequency ,runningTotal, theOrder, choices, TheEnd) (WITH MarkovBasics AS (SELECT Frequencies.trigraph, Frequencies.Iteration, Frequencies.frequency, Sum(Frequencies.frequency) OVER (PARTITION BY Frequencies.Iteration, Left(Frequencies.trigraph, 2) ORDER BY Frequencies.frequency, Frequencies.trigraph) AS RunningTotal, Row_Number() OVER (PARTITION BY Substring( Frequencies.trigraph, 1, 2), Frequencies.Iteration ORDER BY (SELECT 1)) AS TheOrder, 0 AS choices, CASE WHEN Left(Frequencies.trigraph, 2) LIKE '%|%' THEN 1 ELSE 0 END AS TheEnd, Sum(Frequencies.frequency) OVER (PARTITION BY Frequencies.Iteration, Left(Frequencies.trigraph, 2) ORDER BY (SELECT 1)) AS totalInSet, Frequencies.category FROM (SELECT Substring( ' ' + Coalesce(p.String, '') + '|', f.iteration, 3) AS trigraph, f.iteration, Count(*) AS frequency, p.Category FROM @SampleStrings AS p CROSS JOIN (VALUES (1),(2),(3),(4),(5),(6),(7),(8),(9),(10), (11),(12),(13),(14),(15),(16),(17),(18),(19),(20), (21),(22),(23),(24),(25),(26),(27),(28),(29),(30), (31),(32),(33),(34),(35),(36),(37),(38),(39),(40) ) f(iteration) WHERE Substring( ' ' + Coalesce(p.String, '') + '|', f.iteration, 3) <> '' GROUP BY Substring( ' ' + Coalesce(p.String, '') + '|', f.iteration, 3), f.iteration, p.Category) AS Frequencies(trigraph, Iteration, frequency, category) ) SELECT MarkovBasics.trigraph, f.iteration, MarkovBasics.frequency, MarkovBasics.RunningTotal, MarkovBasics.totalInSet, MarkovBasics.TheOrder, f.choices, MarkovBasics.TheEnd, MarkovBasics.category FROM MarkovBasics INNER JOIN ( --we add in the number of choices at any point and give each choice an id 1..n SELECT Count(*) AS choices, Substring(MarkovBasics.trigraph, 1, 2) AS leading, MarkovBasics.Iteration FROM MarkovBasics GROUP BY Substring(MarkovBasics.trigraph, 1, 2), MarkovBasics.Iteration) f(choices, leading, iteration) ON f.iteration = MarkovBasics.Iteration AND f.leading = Substring(MarkovBasics.trigraph, 1, 2)); GO IF Object_Id('dbo.FakedStringFrom') IS NOT NULL DROP FUNCTION dbo.FakedStringFrom; GO CREATE FUNCTION dbo.FakedStringFrom /** Summary: > This function builds a string by random numbers from a Markov table passed to it via a TVP. Each time it is called, it gives a different string, and in this algorithm, the first three characters of each string are provided will fit the distribution of the words used to create the markov table. The following characters are chosen with equal probability just to increase the 'noise' of the word (you can tweak this) Author: Phil Factor Date: 10/07/2018 Database: Utility Examples: - SELECT dbo.FakedStringFrom(@CountryMarkov) FROM AdventureWorks2016.Person.Person AS P; Returns: > A string **/ (@MarkovTable AS Markov READONLY) RETURNS NVARCHAR(50) --WITH ENCRYPTION|SCHEMABINDING, ... AS BEGIN DECLARE @RowCount INT, @ii INT, @NewWord VARCHAR(50) = ' ', @done INT = 1; DECLARE @EntireRange INT --for matching the sample distribution = (SELECT Sum([@MarkovTable].frequency) FROM @MarkovTable WHERE [@MarkovTable].iteration = 3), @Random FLOAT = (SELECT TOP 1 SingleRandomNumber.RandomNumber FROM SingleRandomNumber); DECLARE @MaxLength INT = 50; --now we can use markov chains to assemble the word SELECT @ii = 1, @RowCount = 1, @done = 0; WHILE((@ii < @MaxLength) AND (@RowCount > 0)) BEGIN --we can make our choice based on its distribution but --it tends to reproduce more of the real data and less made up SELECT @NewWord = @NewWord + Right(M.trigraph, 1) FROM @MarkovTable M WHERE M.iteration = @ii AND M.trigraph LIKE Right(@NewWord, 2) + '_' AND --(choices=1 --OR (@Random * M.totalInSet) BETWEEN (M.runningTotal - M.frequency) AND M.runningTotal; SELECT @Random = SingleRandomNumber.RandomNumber FROM SingleRandomNumber; SELECT @RowCount = @@RowCount, @ii = @ii + 1; END; RETURN Replace(LTrim(@NewWord), '|', ''); END; GO |

Listing 2

Making the lists of data as tables

Now we can generate lists of the randomised spoof data, from markov chains. There will be a table for each list, just to keep things simple. We will generate lists for the FirstName, MiddleName, LastName, Title and Suffix columns.

This will take some time but remember that you only need to do it once, until the nature of the original data changes significantly.

I’ve generated this script by cut ‘n paste from a single batch that did just one column, so this is less arduous than it looks. You can, of course, do this with a stored procedure, but even that isn’t entirely as elegant as you’d imagine.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 61 62 63 64 65 66 67 68 69 70 71 72 73 74 75 76 |

/* Firstly, let's get the firstnames from Adventureworks Person.Person.FirstName */ DECLARE @firstnameStrings StringValue, @FirstnameMarkov Markov; INSERT INTO @firstnameStrings (String) SELECT P.FirstName FROM AdventureWorks2016.Person.Person AS P; INSERT INTO @FirstnameMarkov (trigraph, iteration, frequency, totalInSet, runningTotal, TheOrder, Choices, TheEND, category) SELECT trigraph, iteration, frequency, totalInSet, runningTotal, theOrder, choices, TheEnd, category FROM dbo.MarkovTableFrom(@firstnameStrings); SELECT dbo.FakedStringFrom(@FirstnameMarkov) AS Firstname INTO FirstName FROM AdventureWorks2016.Person.Person AS P; /* Now get the middlenames from Adventureworks Person.Person.middleName */ DECLARE @middlenameStrings StringValue, @middlenameMarkov Markov; INSERT INTO @middlenameStrings (String) SELECT Coalesce(P.MiddleName, '') FROM AdventureWorks2016.Person.Person AS P; INSERT INTO @middlenameMarkov (trigraph, iteration, frequency, totalInSet, runningTotal, TheOrder, Choices, TheEND, category) SELECT trigraph, iteration, frequency, totalInSet, runningTotal, theOrder, choices, TheEnd, category FROM dbo.MarkovTableFrom(@MiddleNameStrings); SELECT dbo.FakedStringFrom(@middlenameMarkov) AS middlename INTO middleName FROM AdventureWorks2016.Person.Person AS P; -- and the last names DECLARE @LastnameStrings StringValue, @LastnameMarkov Markov; INSERT INTO @LastnameStrings (String) SELECT P.LastName FROM AdventureWorks2016.Person.Person AS P; INSERT INTO @LastnameMarkov (trigraph, iteration, frequency, totalInSet, runningTotal, TheOrder, Choices, TheEND, category) SELECT trigraph, iteration, frequency, totalInSet, runningTotal, theOrder, choices, TheEnd, category FROM dbo.MarkovTableFrom(@LastNameStrings); SELECT dbo.FakedStringFrom(@LastnameMarkov) AS Lastname INTO LastName FROM AdventureWorks2016.Person.Person AS P; ---and the suffix DECLARE @SuffixStrings StringValue, @SuffixMarkov Markov; INSERT INTO @SuffixStrings (String) SELECT Coalesce(P.Suffix, '') FROM AdventureWorks2016.Person.Person AS P; INSERT INTO @SuffixMarkov (trigraph, iteration, frequency, totalInSet, runningTotal, TheOrder, Choices, TheEND, category) SELECT trigraph, iteration, frequency, totalInSet, runningTotal, theOrder, choices, TheEnd, category FROM dbo.MarkovTableFrom(@SuffixStrings); SELECT dbo.FakedStringFrom(@SuffixMarkov) AS Suffix INTO Suffix FROM AdventureWorks2016.Person.Person AS P; -- finally the titles DECLARE @TitleStrings StringValue, @TitleMarkov Markov; INSERT INTO @TitleStrings (String) SELECT Coalesce(P.Title, '') FROM AdventureWorks2016.Person.Person AS P; INSERT INTO @TitleMarkov (trigraph, iteration, frequency, totalInSet, runningTotal, TheOrder, Choices, TheEND, category) SELECT trigraph, iteration, frequency, totalInSet, runningTotal, theOrder, choices, TheEnd, category FROM dbo.MarkovTableFrom(@TitleStrings); SELECT dbo.FakedStringFrom(@TitleMarkov) AS Title INTO Title FROM AdventureWorks2016.Person.Person AS P; |

Listing 3

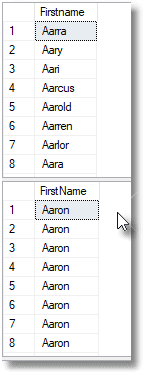

Let’s just test out what we’ve done. Whereas the original had 56 people whose first name started with ‘Aar‘, all of which were Aaron, the Markov chains have introduced a rich variety of 49 names beginning with ‘Aar‘, because the string ar_ (where ‘_‘ means any character!) occurs with many different third characters in the data at the second position.

|

1 2 |

SELECT * FROM dbo.FirstName WHERE FirstName.Firstname LIKE 'Aar%' SELECT FirstName FROM Adventureworks2016.person.person WHERE Firstname LIKE 'Aar%' |

Listing 4

It yields the results in Figure 1.

Figure 1

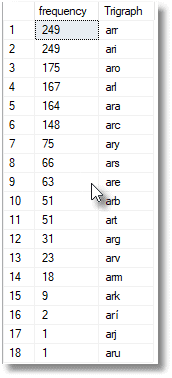

We can see this variety by using this query:

|

1 2 3 4 5 |

SELECT Count(*) AS Frequency, Substring(Person.FirstName, 2, 3) AS Trigraph FROM AdventureWorks2016.Person.Person WHERE Person.FirstName LIKE '_ar%' GROUP BY Substring(Person.FirstName, 2, 3) ORDER BY Count(*) DESC; |

Listing 5

Which gives:

Figure 2

Note that some data can’t be generated via Markov chains. Credit cards, for example, require a different technique, see Spoofing Data Convincingly: Credit Cards. Also, I show how to do dates in Spoofing Data Convincingly: Masking Continuous Variables.

The whole point is to make the spoofed data convincing enough to fool the casual glance, and pass obvious CHECK constraints, whilst keeping the distribution of the original.

Copying the tables to files

Now we have these lists as tables, it is the work of a moment to turn them into ASCII lists in your filesystem. We’ll use PowerShell for this. This script will do any lists of a column in a table in a database, as long as it is on the source server, defined by $SourceServerName.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 61 62 63 64 65 66 67 68 69 70 71 72 |

$SourceServerName = 'MyServer' # the server which contains the tables we take the lists from $SourceLogin = 'Mylogin' #Leave blank for Windows authentication $SourceOfLists = @( #specify the column and the qulalified table name, @{ ColumnName = 'Firstname'; TableName = 'MyDatabase.dbo.Firstname'; Filename = 'Firstname' }, @{ ColumnName = 'MiddleName'; TableName = 'MyDatabase.dbo.MiddleName'; Filename = 'MiddleName' }, @{ ColumnName = 'Lastname'; TableName = 'MyDatabase.dbo.Lastname'; Filename = 'Lastname' }, @{ ColumnName = 'Title'; TableName = 'MyDatabase.dbo.Title'; Filename = 'Title' }, @{ ColumnName = 'Suffix'; TableName = 'MyDatabase.dbo.Suffix'; Filename = 'Suffix' } ) $DestionationListDirectory = 'S:\work\SQL\Lists\'#or wherever #none of our errors are recoverable $ErrorActionPreference = "Stop" $MS = 'Microsoft.SQLServer' if (-not (Test-Path -PathType Container $DestionationListDirectory)) { # we create the script directory (normally you get static data from source control $null = New-Item -ItemType Directory -Force -Path "$DestionationListDirectory"; } #now we can save each list <a id="post-465983-_Hlk522725608"></a>#create a connection object # is this Windows Authentication or UserID/Password credentials? $connection = New-Object -TypeName System.Data.SqlClient.SqlConnection $connectionString = "Server=$SourceServerName;Integrated Security=True;" if ($SourceLogin -ne '') #if no login specified, then it was a windows login { $connectionString = "Server=$SourceServerName;Integrated Security=False;" if (Test-Path -path "$env:USERPROFILE\$SourceLogin-$SourceServerName.txt" -PathType leaf) { #has already got this set for this login so fetch it $encrypted = Get-Content "$env:USERPROFILE\$SourceLogin-$SourceServerName.txt" | ConvertTo-SecureString $encrypted.MakeReadOnly() $Credentials = New-Object System.Data.SqlClient.SqlCredential($SourceLogin, $encrypted) } else { #hasn't got this set for this login $AutomationCredentials = get-credential -Credential $SourceLogin $AutomationCredentials.Password | ConvertFrom-SecureString | Set-Content "$env:USERPROFILE\$SourceLogin-$SourceServerName.txt" ($password = $AutomationCredentials.Password).MakeReadOnly() $Credentials = New-Object System.Data.SqlClient.SqlCredential( ($AutomationCredentials.UserName -replace '^.*\\|@.*$'), $password ) } } $connection.Credential = $Credentials $connection.ConnectionString = $connectionString $connection.Open() | out-null $handler = [System.Data.SqlClient.SqlInfoMessageEventHandler] { param ($sender, $event) $global:message = "$($message)`n $($event.Message)" }; $connection.add_InfoMessage($handler); $connection.FireInfoMessageEventOnUserErrors = $true $SourceOfLists | foreach{ $list = $_; $cmd = new-Object System.Data.SqlClient.SqlCommand(@" Select $($list.ColumnName) from $($list.TableName) "@, $connection) $cmd.CommandTimeout = 10 $rdr = $cmd.ExecuteReader() #because we are getting a result back $columnWeWant = $list.ColumnName do { $datatable = new-object System.Data.DataTable $datatable.Load($rdr) $TheList=$datatable | foreach{ $_."$columnWeWant" } [io.file]::WriteAlllines("$($DestionationListDirectory)$($list.Filename).txt",$theList) } while ($rdr.IsClosed -eq $false) $rdr.Close() } |

Listing 6

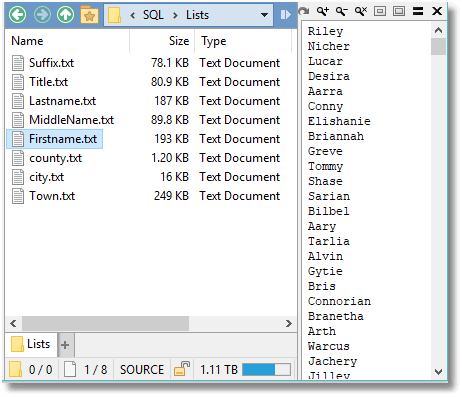

If you run this, then you will find your lists written out as text files in your file system.

Figure 3

If you do a lot of database testing, you are likely to end up with many of these lists, and they are very handy for the purpose. It is possible to stitch these into tables using a technique I demonstrate in the blog ‘Spoofing Data Convincingly: Doing the Shuffle and Zip’.

Creating the SQL Data Generator project file

Now we are ready to create the SQL Data Generator project file (.sqlgen)that we can then use to automate this process.

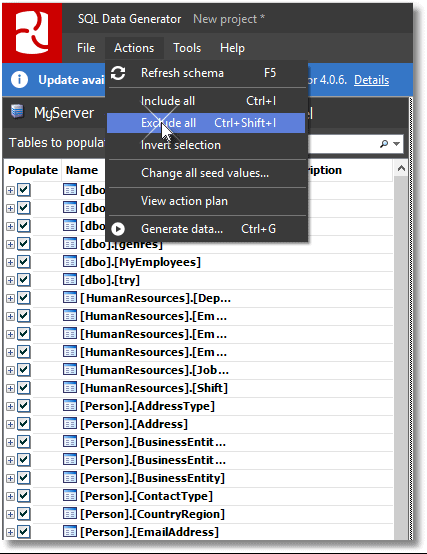

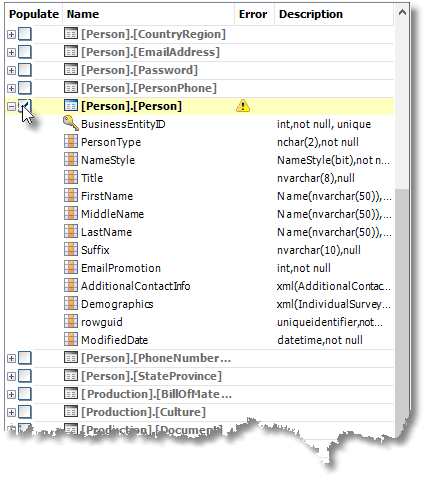

Open SQL Data Generator (SDG), start a new project, and set it to the database we need to pseudonymize, Phasael. Then, exclude all the tables, as in the illustration, by clicking on Actions | Exclude All.

Figure 4

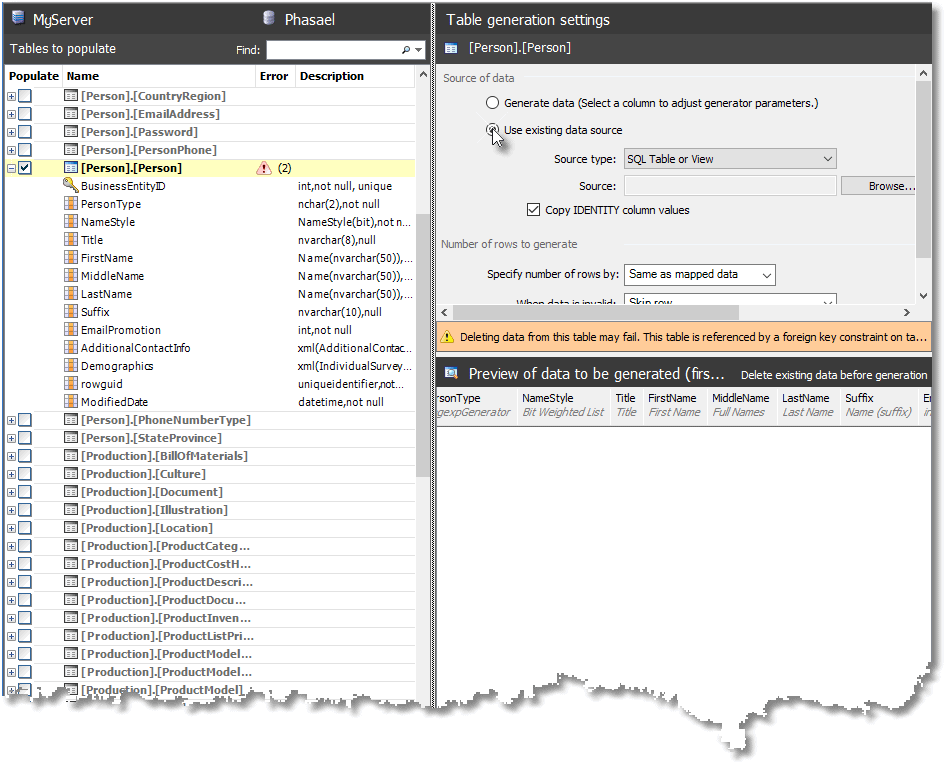

SDG now has nothing to do, so our next job is to select the Person.Person table:

Figure 5

You will see a warning to the effect the Person.Person is referenced by a FOREIGN KEY constraint, so SDG can’t delete the data in the table before filling it. However, it doesn’t know that we already disabled the CHECK constraints, so we can ignore these warnings.

Now we specify a generator for the entire table, which will be used for all the columns as appropriate. At the moment, SDG has only one table-level generator, but it is extremely powerful, because individual columns can be subsequently over-ridden.

Figure 6

Hit Browse… to select an existing data source and specify the server and database to use as the source of the table. By default, SDG will look for the source table in the Phasael database, but of course the source mustn’t be the same database and table as the one we are stocking with data, so specify the original copy of the database, AdventureWorks2016, instead.

Select the Person.Person table and hit Finish. We have now populated our Person.Person table in Phasael with the original data. SQL Data Generator is nervous at this point, because it hasn’t detected that we’ve disabled constraints. Fortunately, SQL Data Generator will always attempt an operation, despite its warnings and misgivings.

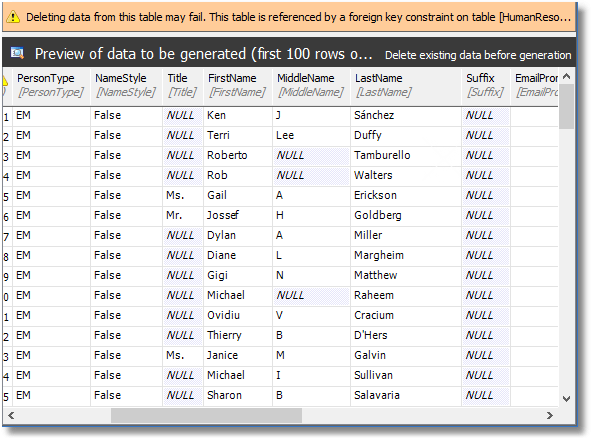

Figure 7

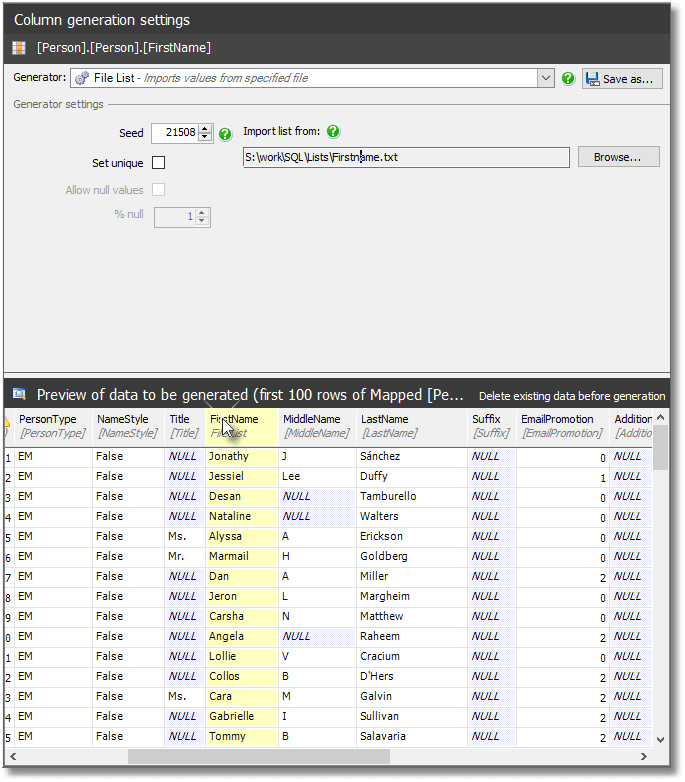

If we select any column in the preview pane, above, we can over-ride its generator. We want to therefore over-ride the generator for our individual name-related columns, while keeping the rest of the data intact.

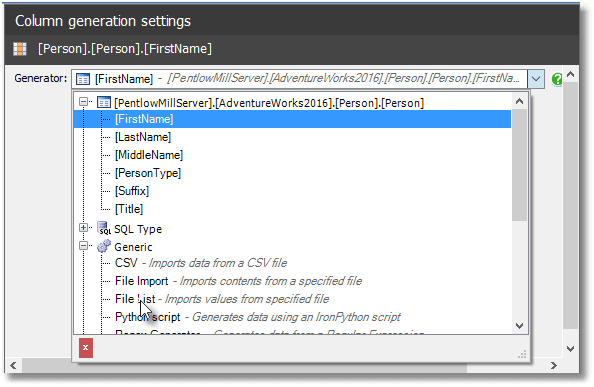

Figure 8

We want the File List generator, so we click it and SDG will choose one of the pre-supplied file lists from its Config folder. Hit Browse, and point it instead to our FirstName.txt file, and lo…the spoof data from our SQL and PowerShell machinations is now in the preview pane.

Figure 9

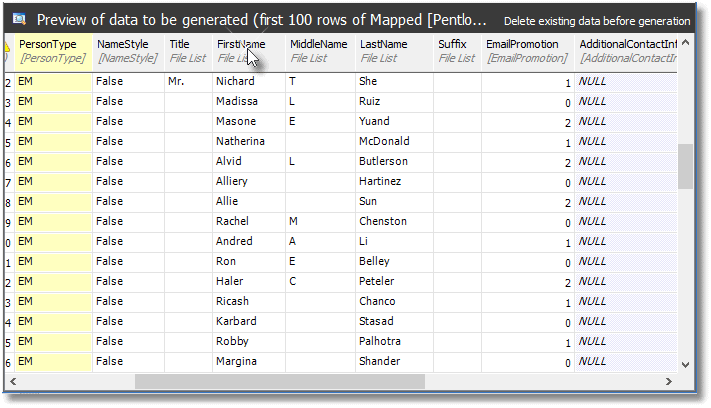

Now we just repeat this process for the four other lists, and we get this:

Figure 10

So, now, the names bear no resemblance to the originals. The final step is to see if our generator will work despite SQL Data Generator’s nervous warnings, so hit Actions | Generate Data and SDG will present you with this action plan:

The data generation actions are listed in execution order.

Delete

|

1 2 3 4 5 |

Removing all the rows from table [Person],[Person] using 'DELETE FROM [Person], [Person]" Generate Data Generating data for table [Person],[Person] with data from [MyServer].[AdventureWorks2016].[Person].[Person], The number of rows inserted will be the same as the number of rows in the source data |

And when you hit the Generate Data button, it does it. Once the data generation process completes, you now have the Phasael database, with obfuscated name data.

Make sure that you save the .sqlgen project file, which is an XML file with all your settings. With this, you can repeat the process as many times you need. If you plan to run this pseudonymization process often, it is worth nailing down all your settings, such as the location and name of lists, and the servers you use for the process. These SQLGEN projects are easily modified, but they are laborious.

The last thing you need to do is pop back over to SSMS and re-enable constraints on the our partially-obfuscated Phasael database.

|

1 |

EXEC sp_msforeachtable "ALTER TABLE ? WITH CHECK CHECK CONSTRAINT all" |

Listing 7

Tidying up

This is, of course, only a demonstration, but if you are doing this for real, you need to be careful not to leave artefacts. We deleted the old rows from the database, but you have to be sure that you haven’t left the old data in the transaction log, or the data pages, when doing this for real with sensitive data. The best way is to BCP all the data across, from the database to a freshly-built copy, but this is only practical with small databases.

Automating the process

I show how to automate a SQL Data generation in my article, Automatically filling your SQL Server test databases with data. To see how to do this for a whole range of development machines, see Automatically build-and-fill multiple development databases using PowerShell and SQL Data Generator.

Conclusions

The worst part of preparing development databases with data that has the characteristics of the real data, but without any real personal data, is the time it takes to do it. Plenty of solutions work with small amounts of data. With this solution, once the SQLGEN file is prepared, the only slow parts are the preparation of the spoof data and the actual deletion of the existing data.

The lists of spoofed data don’t have to be of the entire set of data, since SQL Data Generator will cheerfully repeat its contents randomly for as long as you wish, and you only create the lists once. The deletion process won’t worry you much if it is automated and can run overnight.

I’d like to emphasize that this process can be done without SQL Data Generator, but only with a lot of hard work, testing and maintenance. There is no excuse nowadays for developing databases with production data that contains sensitive or personal data.