My previous article, SQLXML Bulk Loader Basics, introduced you to the core functionality of SQLXML Bulk Loader, covering how to load multiple types of XML data files, from the simple table, to multiple separate tables, through to more complex, nested XML structures.

This article will introduce you to some of the more advanced tricks that the tool has up its sleeves. It’s very much an extension of the previous article, and uses the same CarSales_Staging database for the demos, so you’ll need to read that one first, if you’re new to the tool. I’ll demonstrate how to:

- Load attribute-centric XML files

- Use Optimize SQLXML Bulk Loader for fast bulk loads

- Enable or disable constraints

- Enable or disable SQLXML Bulk Loader transactions

- Create and drop the destination tables automatically

- Load XML data into XML columns in SQL Server tables

- Handle unmapped data

If you want to follow along with the examples, you can download the sample files by clicking on the Code Download link, in the article rating box at the start of the article. Throughout the article, the code listing caption provides the name of the relevant file in the code download bundle.

You can, of course, specify any database as the destination for your XML data. However if you do this it will be up to you to tweak the load scripts to use your destination database instead of the sample database. And remember to set your server and instance name in the .vbs load files instead of mine!

Using Attribute-Centric XML Source Files

All of the examples in the previous article used as source data only element-centric XML files, such as that shown in Listing 1.

|

1 2 3 4 5 6 7 8 |

<?xml version = "1.0" encoding = "UTF-8"?> <ROOT> <Invoice> <ID>3</ID> <ClientName>John Smith</ClientName> <County>Staffs</County> </Invoice> </ROOT> |

Listing 1: Element-centric XML file

It’s equally possible to handle attribute-centric XML files, and even use a source file the combines the two formats, such as the one in Listing 2. Here you can see that ID is an XML attribute, whereas ClientName and County are XML elements.

|

1 2 3 4 5 6 7 |

<?xml version = "1.0" encoding = "UTF-8" ?> <ROOT> <Invoice ID = "3"> <ClientName>John Smith</ClientName> <County>Staffs</County> </Invoice> </ROOT> |

Listing 2: Clients_Simple_EntityAndAttribute.xml

Listing 3 shows the schema file to handle the bulk load of a source file (such as the one described in Listing 2) that combines both element-and attribute-centric XML.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 |

<xsd:schema xmlns:xsd = "http://www.w3.org/2001/XMLSchema" xmlns:sql = "urn:schemas-microsoft-com:mapping-schema"> <xsd:element name = "Invoice" sql:relation = "Client_XMLBulkLoad" > <xsd:complexType> <xsd:sequence> <xsd:element name = "ClientName" type = "xsd:string" sql:field = "ClientName"/> <xsd:element name = "County" type = "xsd: string" sql:field = "County"/> </xsd:sequence> <xsd:attribute name = "ID" type = "xsd:integer" /> </xsd:complexType> </xsd:element> </xsd:schema> |

Listing 3: Clients_Simple_EntityAndAttributeCentric.xsd

As you saw in the previous article, the schema file needs to describe not only the structure of the XML file, but also include the requisite annotations that enable SQLXML Bulk Loader to map the data to the destination table in SQL Server. This example uses the sql:relation annotation to specify the destination table, and then the xsd:element annotation to map the data to the columns in the table. It also uses the xsd:attribute annotation which (unsurprisingly) maps XML attributes to columns in the destination database.

In case you do not have the previous article to hand, the SQL to create the destination table is given in Listing 4.

|

1 2 3 4 5 6 7 8 9 10 |

CREATE TABLE CarSales_Staging.dbo.Client_XMLBulkLoad ( ID INT NULL , ClientName NVARCHAR(1000) NULL , Address1 NVARCHAR(1000) NULL , Town NVARCHAR(1000) NULL , County NVARCHAR(1000) NULL , Country NUMERIC(18, 0) NULL ); GO |

Listing 4: Client_XMLBulkLoad.sql

To test this short example, double click on the VBScript file defined in Listing 5 to invoke SQLXML Bulk Load and load the data. The target table is Client_XMLBulkLoad, and once again I’ve assume the .xml and .xsd files are stored in C:\SQLXMLArticle.

|

1 2 3 4 5 |

objBL = CreateObject("SQLXMLBulkLoad.SQLXMLBulkload.4.0") objBL.ConnectionString = "provider=SQLOLEDB;data source=ADAM02\SQLEXPRESS2014; database=CarSales_Staging; integrated security=SSPI" objBL.ErrorLogFile = "C:\SQLXMLArticle\SQLXMLBulkLoadImporterror.log" objBL.Execute("C:\SQLXMLArticle\Clients_Simple_EntityAndAttributeCentric.xsd", "C:\SQLXMLArticle\Clients_Simple_EntityAndAttribute.xml") objBL = Nothing |

Listing 5: SQLXMLBulkLoadMixedAttributeXML.vbs

This simple example is an indication of how you can mix and match element- and attribute-centric XML in the source file. Once again, as is so often the case with SQLXML Bulk Loader, the essential point of focus will inevitably be a carefully adapted XSD file. Once the XSD file maps to the source file you can load this data into the destination table quickly and easily.

Configuring the Load Process

The VBScript files we’ve used up until now to load XML files into SQL Server with SQLXML Bulk Loader have been extremely simple (not to say fairly elementary). All they do is:

- Establish a connection

- Specify an error log file

- Execute the load process

There are many ways in which we can configure the load process, depending on your balance of priorities between speed, security and data validation. All of these possibilities are accessible by configuring the available parameters of the SQLXMLBulkLoad object that you create in the first line of the script. Any SQLXML Bulk Load parameter can be added anywhere to the file before the objBL.Execute line.

As the aim here is to concentrate on the SQLXMLBulkLoad object and its properties in the examples that follow, I will continue to use the source file Clients_Simple_EntityAndAttribute.xml that you saw in the first example in this article, along with its companion XSD file Clients_Simple_EntityAndAttributeCentric.xsd.

Optimizing a Load for Speed

When we execute SQLXML Bulk Loader, it does not read the entire source XML file into memory; rather it reads the XML data as a stream. As it reads the data, it finds the database tables, creates the appropriate records from the XML source data, and then inserts the records into the database tables.

Any reduction in load times is based on taking advantage of this stream-based data load process. While it is difficult to offer exhaustive advice on optimizing loads, the ground rules are as follows:

- Try to negotiate “table dump” XML files (the second example that you saw in the previous article; Listing 7) with the people who supply you with the source data. This approach loads the tables of data successively. Simple XML source data organized into XML structures that map to SQL Server tables will load much faster than complex nested source XML patterns.

- Ensure that the tables into which the data is bulk loaded are empty. This can minimize logging which can reduce system resource utilization and often mean a reduction in load time.

- Remove all indexes from the destination tables before the load and recreate them afterwards. This approach, even if it means initially loading the XML data into a heap, and reapplying or recreating the indexes once the data load has finished, will nearly always prove to be faster than loading into a table with indexes. The usual caveats apply, however. You will have to test the principle on each data load as circumstances will vary.

- Place the source files as close as possible, in network terms, to the SQL Server, using the fastest possible network connection.

- Lock the destination table

- Do not enable transacted mode (this is explained a little later in the article).

- Disable

CHECKconstraints (also explained shortly). Applying constraints after a load process is nearly always the faster option, even if some of the load time gained is subsequently “lost” whenCHECKconstraints are enabled after the load process has finished.

While only practice and experimentation will determine the best solution for your environment, you could get close to 90% of BCP native data load speeds if you respect all the conditions outlined above. In any case, feel free to experiment with the various possibilities until you have settled on an optimal approach for your specific data load.

Locking the Destination Table for Faster Bulk Loads

One simple but powerful option is to use the ForceTableLock option to place a table lock on the destination table. The availability of this option is probably no surprise, since in T-SQL you have the WITH (TABLOCK) hint, in BCP you have -h "TABLOCK" and BULK INSERT has TABLOCK.

Use of ForceTableLock can deliver a noticeable reduction in load times. Be warned, however, that if SQLXML Bulk Load cannot obtain a lock on the table, the load will fail. To lock the destination table simply add the following line to the VBScript file that you are using to load the data (assuming you created objBL as the SQLXMLBulkLoad object):

|

1 |

objBL.ForceTableLock = True |

Enable or Disable Constraints?

SQLXML Bulk Load behaves in a very similar fashion to BCP and BULK INSERT in many respects, and it’s handling of CHECK constraints is similar too. They are disabled by default for a bulk load, but if you ever need to enable them you add the following line to the VBScript file:

|

1 |

objBL.CheckConstraints = True |

Setting this parameter ensures that the data being inserted into the tables applies all constraints that have been specified on the tables (PRIMARY and FOREIGN KEY constraints, for example). The process fails if this parameter is set to True, and constraints are violated. If you are inheriting an SQLXML load process, it is worth casting an eye on the VBScript file to ensure that CHECK constraints are handled in the way you want.

Loading the Data as Part of a Transaction

Bulk loading data within a transaction is much slower but if you want to trade a slower data load against moderately increased security, then you can carry out the load as a transacted operation.

During a transacted load, SQLXML Bulk Load will store the XML data as separate tables in a temporary file, and then load the data using Transact-SQL BULK INSERT. In some cases, this two-phase approach can help when debugging errors, particularly when shredding complex XML, as errors will surface before the data load begins. This can help you avoid partly populated destination tables and the consequent obligation to carry out potentially lengthy delete operations.

As for other bulk load techniques, using a transacted load does not guarantee that you can roll back the entire load if the process fails. If you are lucky you might see part of the load rolled back. In most cases, you will be faced with a partial load.

The following couple of lines of code add transactional support to an SQLXML Bulk Load VBScript file:

|

1 |

objBL.TempFilePath = "C:\Articles\SQLXMLBulkLoader" objBL.Transaction = True |

The Transaction parameter is simply a transaction flag and the TempFilePath parameter sets a temporary file path that SQLXML Bulk Load can use during the load. It really helps if this can be a fast disk array and for large imports, you have to ensure that there is enough disk space at the TempFilePath location. Also, it must be a shared location that is not only accessible to the service account of the target instance of SQL Server, but also to the account that is running the bulk load application.

If you are bulk loading on a local server you need to ensure that the temporary file path is a UNC path. In any case, you must set the TempFilePath property if:

You are bulk loading to a remote server.

You want to specify the folder to store the temporary files. This means that you can choose a disk where you are sure there is enough space, or a disk that is perhaps faster than other available drives. Otherwise SQLXML Bulk Loader will use the Windows system temporary file path specified in the TEMP environment variable.

Listing 6 offers an example of a very slightly more complex VBS file that uses transactions, enables constraints, applies a table lock and specifies the temporary file path. It loads the source data from the file that you saw at the start of this article and applies all these load options at once.

|

1 2 3 4 5 6 7 8 9 |

objBL = CreateObject("SQLXMLBulkLoad.SQLXMLBulkload.4.0") objBL.ConnectionString = "provider=SQLOLEDB;data source=Adam02\SQLEXPRESS2014; database=CarSales_Staging; integrated security=SSPI" objBL.TempFilePath = "C:\Articles\SQLXMLBulkLoader" objBL.Transaction = True objBL.CheckConstraints = True objBL.ForceTableLock = True objBL.ErrorLogFile = "C:\SQLXMLArticle\SQLXMLBulkLoadImporterror.log" objBL.Execute("C:\SQLXMLArticle\Clients_Simple_EntityAndAttributeCentric.xsd", "C:\SQLXMLArticle\Clients_Simple_EntityAndAttribute.xml") objBL = Nothing |

Listing 6: SQLXMLBulkLoadTransactionsCheckTempPath.vbs

However, if you really want to be sure of being able to start over in case of a load failure, the optimal solution is to load the XML data into staging tables that you truncate before each load. This will have repercussions for your overall process, and could push you towards an ELT (Extract Load Transform) approach rather than a classic ETL. Loading a file into an empty table or partition, which can be truncated in case of an error during the load process, is probably a faster solution as well as almost certainly more reliable.

Other Data Handling Options

SQLXML Bulk Loader offers several parameters that we can use to force SQLXML Bulk Loader to create and drop destination tables automatically, handle overflow data and validate the XML source.

Creating and Dropping the Destination Tables Automatically

So far in this tour of SQLXML Bulk Loader, we have always created any necessary destination tables using T-SQL DDL. This is not strictly necessary, as SQLXML Bulk Loader can create the destination tables from the schema file definitions.

Using SQLXML Bulk Loader to create tables is a bit of a blunt instrument, but it can save a lot of time when you start coding an XML Bulk Load process. You can always have SQLXML Bulk Loader create the initial destination tables that you fine-tune later. Another option that SQLXML Bulk Loader offers is to drop the destination tables as part of the load process, and recreate them each time. This means that you can, in effect, control the DDL from the schema file.

The SchemaGen option indicates that the destination tables should be created. Simply add the option to the VBScript file, as follows:

|

1 |

objBL.SchemaGen = True |

While it is possible to create generic destination tables using the type = "xsd:" annotation, you can achieve much greater precision as far as the shape of the destination table is concerned if you specify the sql:datatype in the schema file. This means that you will have to extend the schema file to specify the exact data type of the SQL Server column that will be created by SQLXML Bulk Loader. This is probably best understood with a simple example. Listing 7 shows the XSD file from Listing 3, with examples of the sql:datatype annotation added. To prove the point that the destination table will be created during the load process I have changed the table name to Client_XMLBulkLoadCreated. Needless to say, this table is not (yet) in the destination database.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 |

<xsd:schema xmlns:xsd = "http://www.w3.org/2001/XMLSchema" xmlns:sql = "urn:schemas-microsoft-com:mapping-schema"> <xsd:element name = "Invoice" sql:relation = "Client_XMLBulkLoadCreated"> <xsd:complexType> <xsd:sequence> <xsd:element name = "ClientName" type = "xsd:string" sql:field = "ClientName" sql:datatype= "NVARCHAR(25)"/> <xsd:element name = "County" type = "xsd: string" sql:field = "County" sql:datatype= "NVARCHAR(25)"/> </xsd:sequence> <xsd:attribute name = "ID" type = "xsd:integer" sql:field = "ID" sql:datatype= "INT" /> </xsd:complexType> </xsd:element> </xsd:schema> |

Listing 7: Clients_CreateDestination.Xsd

As you can see, each mapped field now has the SQL data type specified, and this will be the data type of the column that will be created in the destination table. The field name will, inevitably, be the name specified in the sql:field annotation. Equally inevitably the table name is taken from the sql:relation annotation. Adding these annotations will not impinge on a data load in any way if you are not using SQLXML Bulk Loader to create the destination tables.

What you need now is the VBS file that will create the database table as part of the data load. Listing 8 shows you what this file looks like.

|

1 2 3 4 5 6 |

objBL = CreateObject("SQLXMLBulkLoad.SQLXMLBulkload.4.0") objBL.ConnectionString = "provider=SQLOLEDB;data source=Adam02\SQLEXPRESS2014; database=CarSales_Staging; integrated security=SSPI" objBL.SchemaGen = True objBL.ErrorLogFile = "C:\SQLXMLArticle\SQLXMLBulkLoadImporterror.log" objBL.Execute("C:\SQLXMLArticle\Clients_CreateDestination.xsd", "C:\SQLXMLArticle\Clients_Simple_EntityAndAttribute.xml") objBL = Nothing |

Listing 8: SQLXMLBulkLoadCreateDestination.vbs

If all you want to do is to create destination tables and not load the data, then you can use the BulkLoad parameter to prevent the data loading. For instance, if you add the following options to the VBScript file, SQLXML Bulk Load will only create the tables and not load the data:

|

1 2 |

objBL.SchemaGen = True objBL.BulkLoad = False |

If you want to drop and re-create the destination tables and then load the data from the source files afresh with each data load operation then the code is:

|

1 2 |

objBL.SchemaGen = True objBL.SGDropTables = True |

Be warned, however, that if the tables exist and you set SchemaGen = True without setting SGDropTables = True as well, then the table generation will fail.

When creating destination tables you need to ensure that the XML data types correspond to SQL Server data types that you require. The following table resumes the generic mapping that is applied:

| SQL Data Type | XML Data Type |

| bigint | long |

| binary | base64Binary |

| bit | boolean |

| char | string |

| datetime | dateTime |

| decimal | decimal |

| float | double |

| image | base64Binary |

| int | int |

| money | decimal |

| nchar | string |

| ntext | string |

| nvarchar | string |

| numeric | decimal |

| real | float |

| smalldatetime | dateTime |

| smallint | short |

| smallmoney | decimal |

Note that if you do not specify the SQL data type and lengths, then SQLXML Bulk Loader will convert each XML Data Type in this table into the corresponding SQL data type, and set its own default lengths for character fields.

Loading XML data into XML data type columns

If the mapping schema specifies an XML data type column, using the sql:datatype="xml" annotation, then SQLXML Bulk Loader can copy XML child elements for the mapped field from the source document into this column as XML. That is, the specified data is not “shredded” as other XML elements and attributes in the source document are, but is loaded as pure XML. This happens regardless of whether we’re creating destination tables manually, or automatically via SQLXML Bulk Load,

For this to work, of course, the destination column in the SQL Server table must also be of the XML data type. So you will see in Listing 9 the SQL DDL for a new destination table – that contains an XML column named Biography.

|

1 2 3 4 5 6 7 8 9 10 |

CREATE TABLE [dbo].[Client_XMLBulkLoadWithXML] ( ID int NULL, ClientName nvarchar(1000) NULL, Address1 nvarchar(1000) NULL, Town nvarchar(1000) NULL, County nvarchar(1000) NULL, Country numeric(18, 0) NULL, Biography XML ) |

Listing 9: Client_XMLBulkLoadWithXMLData.sql

Listing 10 shows an XML source file which includes XML data that must be loaded as XML into the biography column of the destination table in SQL Server.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 |

<?xml version = "1.0" encoding = "UTF-8"?> <CarSales> <Client> <ID >3</ID> <ClientName>John Smith</ClientName> <Address1>4, Grove Drive</Address1> <Town>Uttoxeter</Town> <County>Staffs</County> <Country>1</Country> <Biography> <ROOT> <School>Oxford University</School> </ROOT> </Biography> </Client> <Client> <ID>7</ID> <ClientName>Slow Sid</ClientName> <Address1>2, Rue des Bleues</Address1> <Town>Avignon</Town> <County>Vaucluse</County> <Country>3</Country> <Biography> <ROOT> <School>La Sorbonne</School> </ROOT> </Biography> </Client> </CarSales> |

Listing 10: Clients_Simple_Doc.xml

To load XML data you need to add another element to the schema file to specify that an XML source element is to be handled as “pure” XML. The schema file will look like the one in Listing 11:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 |

<xsd:element name = "CarSales" sql:is-constant = "1" > <xsd:complexType> <xsd:sequence> <xsd:element name = "Client" sql:relation = "Client_XMLBulkLoadWithXML" maxOccurs = "unbounded"> <xsd:complexType> <xsd:sequence> <xsd:element name = "ID" type = "xsd:integer" sql:field = "ID" /> <xsd:element name = "ClientName" type = "xsd:string" sql:field = "ClientName" /> <xsd:element name = "Address1" type = "xsd:string" sql:field = "Address1" /> <xsd:element name = "Town" type = "xsd:string" sql:field = "Town" /> <xsd:element name = "County" type = "xsd:string" sql:field = "County" /> <xsd:element name = "Country" type = "xsd:decimal" sql:field = "Country" /> <xsd:element name="Biography" type="xsd:string" sql:field = "Biography" sql:datatype="xml"/> </xsd:sequence> </xsd:complexType> </xsd:element> </xsd:sequence> </xsd:complexType> </xsd:element> </xsd:schema> |

Listing 11: Clients_WithXMLData.xsd

Finally, not to say inevitably you will need a VBS file that loads the data. Listing 12 shows a script to do this. The script in Listing 12 uses the XML and XSD files from listings 10 and 11.

|

1 2 3 4 5 |

objBL = CreateObject("SQLXMLBulkLoad.SQLXMLBulkload.4.0") objBL.ConnectionString = "provider=SQLOLEDB;data source=Adam02\SQLEXPRESS2014; database=CarSales_Staging; integrated security=SSPI" objBL.ErrorLogFile = "C:\SQLXMLArticle\SQLXMLBulkLoadImporterror.log" objBL.Execute("C:\SQLXMLArticle\Clients_WithXMLData.xsd", "C:\SQLXMLArticle\Clients_Simple_Doc.xml") objBL = Nothing |

Listing 12: SQLXMLBulkLoadWithPureXML.vbs

Anticipating Overflow Data

What will happen if the source XML contains more attributes or elements than are contained in the XSD file? In other words, if this data is not mapped? Unless SQLXML Bulk Loader is told what to do with such “overflow” data, it is as if the source data never existed. The source data will simply not be loaded into SQL Server, and no error message will be generated. This can be dangerous in practice because it is never easy to explain to managers or clients that you have lost or ignored their data.

Fortunately, there is a simple technique to catch overflow data. You can then handle any unmapped data elements or attributes separately, or use the resulting flags as an indicator that some more work needs to be done on the schema file.

Handling overflow data is a two-step process. First, add a column to the destination table using the name you specified in the schema file. Listing 13 shows a new table that is ready to handle overflow data:

|

1 2 3 4 5 6 7 8 9 10 11 |

CREATE TABLE [dbo].[Client_XMLBulkLoadWithOverflow] ( ID int NULL, ClientName nvarchar(1000) NULL, Address1 nvarchar(1000) NULL, Town nvarchar(1000) NULL, County nvarchar(1000) NULL, Country numeric(18, 0) NULL, DataOverflowColumn NVARCHAR(MAX) ) GO |

Listing 13: Client_XMLBulkLoadWithOverflow.sql

Second, for each table mapping in the schema file, specify which column will handle any and all overflow. For example, in Listing 14 you can see an XSD file that has an overflow field defined for the XML element Client.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 |

<xsd:schema xmlns:xsd="http://www.w3.org/2001/XMLSchema" xmlns:sql = "urn:schemas-microsoft- com:mapping-schema"> <xsd:element name = "CarSales" sql:is-constant = "1" > <xsd:complexType> <xsd:sequence> <xsd:element name = "Client" sql:relation = "Client_XMLBulkLoadWithOverflow" maxOccurs = "unbounded" sql:overflow-field="DataOverflowColumn"> <xsd:complexType> <xsd:sequence> <xsd:element name = "ID" type = "xsd:integer" sql:field = "ID" /> <xsd:element name = "ClientName" type = "xsd:string" sql:field = "ClientName" /> <xsd:element name = "Address1" type = "xsd:string" sql:field = "Address1" /> <xsd:element name = "Town" type = "xsd:string" sql:field = "Town" /> <xsd:element name = "County" type = "xsd:string" sql:field = "County" /> <xsd:element name = "Country" type = "xsd:decimal" sql:field = "Country" /> </xsd:sequence> </xsd:complexType> </xsd:element> </xsd:sequence> </xsd:complexType> </xsd:element> </xsd:schema> |

Listing 14: Clients_WithOverflow.xsd

Of course, you can set the type and length of the overflow column to whatever you feel appropriate. Indeed, you can create multiple overflow columns and attribute separate overflow columns to different source XML entities.

Finally you will need a source XML file that contains an unmapped element or two (Comments and CreditStatus, in this example). Listing 15 demonstrates this.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 |

<?xml version = "1.0" encoding = "UTF-8"?> <CarSales> <Client> <ID >3</ID> <ClientName>John Smith</ClientName> <Address1>4, Grove Drive</Address1> <Town>Uttoxeter</Town> <County>Staffs</County> <Country>1</Country> <Comments>None</Comments> </Client> <Client> <ID>7</ID> <ClientName>Slow Sid</ClientName> <Address1>2, Rue des Bleues</Address1> <Town>Avignon</Town> <County>Vaucluse</County> <Country>3</Country> <CreditStatus>-100</CreditStatus> </Client> </CarSales> |

Listing 15: Client_XMLBulkLoadWithSourceOverflow.xml

Of course, you will need a VBS file to run this process. Listing 16 loads the data from the XML file using the overflow mapping in the XSD file. Interestingly, this does not require you to tweak the VBS script in any way, other than to add the relevant XML and XSD files, of course.

|

1 2 3 4 5 |

objBL = CreateObject("SQLXMLBulkLoad.SQLXMLBulkload.4.0") objBL.ConnectionString = "provider=SQLOLEDB;data source=Adam02\SQLEXPRESS2014; database=CarSales_Staging; integrated security=SSPI" objBL.ErrorLogFile = "C:\SQLXMLArticle\SQLXMLBulkLoadImporterror.log" objBL.Execute("C:\SQLXMLArticle\Clients_WithOverflow.xsd", "C:\SQLXMLArticle\Client_XMLBulkLoadWithSourceOverflow.xml") objBL = Nothing |

Listing 16: SQLXMLBulkLoadOverflow.vbs

Now when you run the SQLXML import, any data not mapped in the XML schema file will be inserted into the overflow column, for each row. With an overflow column in place (or more than one if you really need it) you can trap unforeseen data and then work out how to reprocess the file more successfully.

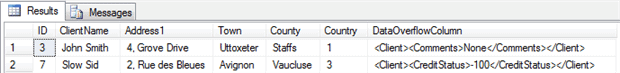

To give you a better idea of what happens when you capture unmapped overflow data, take a look at Image 1 that shows you the result of running the process described in this section.

Image 1. Capturing overflow data

Validating the XML File against the Schema File

If you are feeling particularly nervous about a large import failing part-way through, due to poorly structured source data, you can validate the source XML data file against the XSD schema. Be aware, however that this can take a long time for a large data file. Nonetheless schema validation can be a valuable early warning of an impending load failure. This is especially true if you are loading data into non-empty tables in circumstances where attempting to reset the data to its previous state could be painful.

The VBScript file in Listing 17 will validate a data file and if the validation is successful it will proceed to load the file. The files used are those used in the first XML data load from the previous article. These files can be found in the accompanying download and are:

- A data file: C:\SQLXMLArticle\Clients_Simple.xml

- A schema file: C:\SQLXMLArticle\SQLXMLBulkLoadImport_Simple.xsd

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 |

Dim xs src = " C:\Articles\SQLXMLBulkLoader\SQLXMLBulkLoadImport_Simple.xsd" xs = CreateObject("MSXML2.XMLSchemaCache.6.0") xs.add("", src) Dim xd xd = CreateObject("MSXML2.DOMDocument.6.0") xd.schemas = xs xd.async = False xd.Load("C:\SQLXMLArticle\Clients_Simple.xml") If xd.parseError.errorCode = 0 Then objBL = CreateObject("SQLXMLBulkLoad.SQLXMLBulkload.4.0") objBL.ConnectionString = "provider=SQLOLEDB;data source=ADAM02;database= CarSales_Staging;integrated security=SSPI" objBL.ErrorLogFile = "error.log" objBL.Execute("C:\SQLXMLArticle\SQLXMLBulkLoadImport_Simple.xsd", "C:\SQLXMLArticle\Clients_Simple.xml") objBL = Nothing |

Listing 17: ValidateAndLoad.vbs

Note that you are creating and using a DOM object to run asynchronously so that it will not return control to the caller before the validation is finished.

Conclusion

A whole host of options and extensions lie hidden beneath the apparently anodyne simplicity of SQLXML Bulk Loader. I have tried to show you how you can build on the core knowledge that you acquired in the previous article to push this little-known but amazingly efficient utility to its limits. If you are faced with XML files of any size to load into SQL Server then you could be well advised to consider using SQLXML Bulk Loader as your tool of choice. It will almost certainly impress you with its speed and power.

SQL Server 2012 Data Integration Recipes

If you like what you read in this article and want to learn more about data integration using SQL Server, then please take a look at my book, SQL Server 2012 Data Integration Recipes (Apress, December 2012).

Load comments