The late Jim Gray once said that in the early days of SQL, “We had no idea what we were doing!” However, that is not completely true. What we were doing was mimicking the technologies that had gone before. The first SQL engines put each table in a separate physical file. We had file systems that had been in use for decades. We had lots of code for handling those files, in particular, all kinds of variations on index sequential access methods (ISAM). But data modeling introduced something we hadn’t had before: the concept of data integrity being enforced declaratively instead of procedurally.

In the dark ages of file systems, if we wanted to restrict a field in a record to particular values, then we had to have a program to enforce this rule. Actually, it was worse than that because we had to have every program enforce this rule if it made a modification in the file. The idea of having a general CHECK() constraint on a column simply did not exist. COBOL gave us some display formatting on fields with the PICTURE clause, but this had nothing to do with the relationships in the data.

Here’s a relatively straightforward example from the old days. You have an inventory file that shows all the goods that you sell and an orders file that shows who placed what orders. The integrity rule is pretty simple: you can’t sell anything that you don’t have in the inventory. You would go to the Orders file record, loop through the items that were ordered, which would be in a repeating group called the OCCURS clause in COBOL and match them to the inventory. If you had the item in inventory, you would execute one procedure (in COBOL, this would be a PERFORM paragraph statement). If you didn’t have items, you would execute a second procedure.

REFERENCES Clause

The <references specification> is the simplest version of a referential constraint definition:

|

1 2 3 |

<references specification> ::= [CONSTRAINT <constraint name>] REFERENCES <referenced table name>[(<reference column list>)] |

What this says is that the value in this column of the referencing table must appear somewhere in the referenced table’s columns which are named in the constraint. Notice the terms “referencing” and “referenced” are not the same as the “parent” and “child” terms used in network databases. Those terms were based on pointer chains that were traversed in one direction; that is, you cannot find a path back to the parent node from a child node in the network. Another difference is that the referencing and referenced tables can be the same table. There is also no such thing as a “link table” in RDBMS; that’s another network database term.

Furthermore, the referenced column must have a UNIQUE constraint. A PRIMARY KEY is a special case of a UNIQUE constraint that also implied NOT NULL on all its columns. If the referenced columns are in a UNIQUE constraint, then the target table must have one and only one NULL in that column. The NULLs will match in the referencing table. If no <reference column list> is given, then the PRIMARY KEY of the referenced table is assumed to be the target. There is no rule to prevent several columns from referencing the same target columns. For example, you might have a table of flight crews that has pilot and copilot columns that both reference a table of certified pilots. A table can also reference itself (this can get tricky and involves turning constraints on and off). A circular reference is a relationship in which one table references a second table, which in turn references the first table. The old gag about “you cannot get a job until you have experience, and you cannot get experience until you have a job!” is the classic version of this.

As a general design principle, it’s much more convenient to have a tree structured span of references. In particular, it makes referential actions much more predictable. Now I need to define “referential actions” and show how they work.

Referential Actions

The very first SQL engines behaved pretty much like procedural code language files. When TRIGGERs were added to the language, you could still do integrity checks in procedural code, but now it was in one place, the DDL, and not have to repeat it in every module of code. But people began to notice the same coding patterns were being used over and over in about 80% of these TRIGGERs. So, we added declarative subclauses for the most common situations. This means that the SQL engine can optimize these cases, which is not possible with triggers.

We decided that the REFERENCES clause can have two sub-clauses that take actions when a “database event” changes the referenced table. The two database events are updates and deletes and the sub-clauses look like this:

|

1 2 3 4 5 6 7 |

<referential triggered action> ::= <update rule> [<delete rule>] | <delete rule> [<update rule>] <update rule> ::= ON UPDATE <referential action> <delete rule> ::= ON DELETE <referential action> <referential action> ::= CASCADE | SET NULL | SET DEFAULT | NO ACTION |

When the referenced table is changed, one of the referential actions is set in motion by the SQL engine.

1) The CASCADE option will change the values in the referencing table to match the value (if any) in the referenced table. This is a very common programming technique that allows you to set up a single table as the trusted source for an identifier. This way, the system can propagate changes automatically.

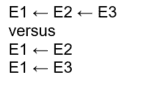

The ON DELETE CASCADE is probably the most common option. The reason is that in data modeling we talk about having “strong” and “weak” entities. A weak entity (such as the Order Details) can exist only if they have a reference back to a strong entity (Orders). You can build chains of weaker and weaker entity references to any depth and spread it out in a tree structure that begins at the strongest entity. Let’s use ← to mean “references” and look at the possible ways you can chain a strong entity, E1, and it’s two weaker entities, E2 and E3.

The difference can be subtle. Imagine that E1 is an order. In the first case, E2 might be order items like a back-to-school supply kit. This kit is made up of individual items (pencils, pens, crayons, paper, etc.) from E3. In this model, you can delete from or add individual items to a kit. Whatever you do, it’s still a back-to-school kit until you remove all the items.

In the second case, E2 might be an order item, and E3 could be delivery options. In theory, you could have an order, E1, that is empty and still deliver it. That doesn’t make much sense in the real world, but it is allowed by the data model.

2) The SET NULL option will change the values in the referencing table to a NULL. Obviously, the referencing column needs to be NULL-able, but the referenced column does not.

3) The SET DEFAULT option will change the values in the referencing table to the default value of that column. Obviously, the referencing column needs to have some DEFAULT declared for it, but each referencing column can have its own default in its own table.

A little-known feature of SQL is the DEFAULT VALUES clause in the INSERT INTO statement where a single row is inserted containing only DEFAULT values for every column. The syntax is: INSERT INTO <table name> DEFAULT VALUES; as a shorthand for INSERT INTO <table name> VALUES (DEFAULT, DEFAULT,… DEFAULT).

4) The NO ACTION option explains itself. Nothing is changed in the referencing table, and a warning message about reference violation might be raised. If a REFERENCES constraint does not specify any ON UPDATE or ON DELETE subclause, then NO ACTION is implicit.

Full ANSI/ISO Standard SQL has more options about how matching is done between the referenced and referencing tables. Full ANSI/ISO Standard SQL also has deferrable constraints. This lets the programmer turn a constraint off during a session so that the table can be put into a state that would otherwise be illegal. However, at the end of a session, all the constraints are enforced. Many SQL products have implemented these options, and they can be quite handy, but I will not mention them anymore. In SQL Server, you have to explicitly turn the constraints on and off with the statement. Please remember to give your constraints names, so this feature will be easy to use.

|

1 2 3 4 5 |

-- Disable/enable all table constraints ALTER TABLE <table name> [NOCHECK | CHECK] CONSTRAINT ALL; -- Disable/enable single constraint ALTER TABLE <table name> [NOCHECK | CHECK] CONSTRAINT <constraint name>; |

It is also possible to use system procedures that will enable or disable all the constraints in the entire database. I can’t give a good reason for wanting to do this, and it sounds likely to be very dangerous.

This weak and strong entity model is very simple. It may not look that way at first, but full E-R modeling can get more elaborate, and it’s tough to support in SQL.

POINTER Chains

I’m probably one of the few people who still remember WATCOM. It was a spinoff from the University of Waterloo in Canada. The University produces some of the best systems programmers I’ve ever worked with, but they could not build a useful human interface. They also produced an SQL compiler which was eventually sold to Sybase.

Their SQL product knew the difference between a referenced and referencing table. The referenced columns in the key were materialized (one way, one place, one time) then the referencing tables built pointer chains back to that occurrence. Basically, they took a lesson from the old network databases (IMS, IDMS, Total, etc.). This meant that no matter how big the key was, the references to it used a simple pointer. It also meant doing joins on primary and foreign keys is fast and cheap (we got really good at scanning pointer chains back in the old network days!). DRI (declarative referential integrity) actions to cascade the updates were also insanely fast; the system simply changed the reference and left the pointers alone.

Similar tricks can be done with SQL products that use hashing and columnar databases. This is one of the reasons that a REFERENCES clause is actually more abstract and is, therefore, nothing like a link.

E-R Modeling

In 1976, Peter Chen introduced Entity-Relationship (E-R) modeling. Variations on his diagramming technique quickly appeared, differing mostly in the graphics. This is still an excellent tool for data modeling today, but it takes a little care to generate DDL from the diagrams.

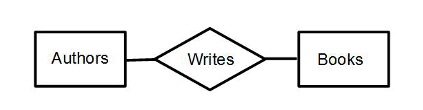

The basic symbols are fairly simple. Entities are shown by rectangles, relationships among the entities are indicated by a diamond, and connecting lines between the diamonds and rectangles show the relationships. Some simple rules are that a relationship has to apply to one or more entities, that two or more entities in a relationship have to be connected,

and so forth. There are additional symbols to show what kind of relationship the entities have with each other.

Explaining this is probably easier to do with a simple example. Consider the relationship between authors and their books. The relationship is authorship, or you can just use the verb “write” to keep things simple.

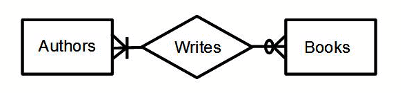

A vertical line means one member of the entity set must be involved in the relationship. Think of the digit one. A circle on the connecting line means no members can be involved; think of a zero.

A “crow’s foot” is the symbol for “many,” which means zero or more. For example, this diagram says at least one, but possibly more authors are involved in the authorship relation. On the books side, there are some options. A circle – crows foot would mean zero or more books are written by the author or authors.

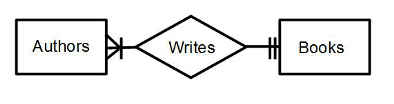

On the other hand, two vertical slashes mean that the author or authors have written precisely one book no more no less.

There have been a few experimental database products that implemented these notations, but SQL is so dominant they never really got anywhere.

WITH CHECK OPTION

A little-used feature in SQL can be used to fake constraints at this level. It is the WITH CHECK OPTION clause on a VIEW that has existed since the SQL–89 standards. To explain this, consider the VIEW

|

1 2 3 4 |

CREATE VIEW V1 AS SELECT col1 FROM Foobar WHERE col1 =’A’; |

The view is updatable. This means that it applies to one and only one table that is capable of getting to one and only one row unambiguously. An update like this can be performed:

|

1 |

UPDATE V1 SET col1 =’B’; |

The update works just fine, but now rows which were previously returned by the VIEW disappear because they no longer meet the WHERE clause condition. An INSERT statement into the view could also put values into the base table whose rows don’t show up in the VIEW.

The WITH CHECK OPTION makes the system look at the WHERE clause in the VIEW definition. If an insertion or update fails the test, the SQL engine rejects the changes, and the VIEW remains the same. The full ANSI/ISO standard this feature is a more elaborate and includes cascade options.

To fake a constraint, you can use a relatively simple [NOT] EXISTS () constraints on a VIEW to create the conditions. For example, to assure that orders have at least one order item, you can create a VIEW on the join of Orders and Order_Details. This would mean that an order must match to one or more order details to show up in the Orders_2 view. Please note that the base tables are still in the schema. You have to make a decision to use only the VIEW and use DCL to prevent user access to those base tables.

|

1 2 3 4 5 6 7 8 9 |

CREATE VIEW Orders_2 AS SELECT O.order_nbr, .. FROM Orders AS O WHERE EXISTS (SELECT * FROM Order_Details AS D WHERE D.order_nbr = O.order_nbr) WITH CHECK OPTION; |

Now simply use Orders_2 in your queries. You can still use the base table, Orders. You might want to sit down and play with all the options that you can implement in such a VIEW.

Conclusion

Yes, putting in ER style constraints is a good bit of work for the programmer. But you need to ask yourself is it worth the effort. When do you really need data integrity? Is there any hope in the future for some help from SQL? The answer is yes, and we would find it when we get an implementation of the CREATE ASSERTION statement. This essentially is a CHECK() constraint which applies to the schema as a whole, rather than to columns within a single table. This is why the constraint names are global rather than local.

Load comments