At its most basic level, Oracle databases are built around data based within tables held within the database, and the moulding of that data into information and meaning. Under this model, data is created within the database or imported into it.

There is, however, a third way. Using external tables, Oracle can query the contents of flat files held outside the database, in pretty much the same way that you would query a more normal, vanilla, database table.

Our sales department, for example, use a non-Oracle application. At the close of each day they export the day’s sales into a csv file which they save in an agreed location. This means that all I need do is create an external table which points at this file, and I am then able to query the data and use it to populate tables in my Oracle database. Using an external table makes this process pretty much painless; in the old days we needed a morass of scripts, data entry and loaders to achieve the same thing.

Restrictions

There are, as you would expect, some restrictions. External tables are powered by the ORACLE_LOADER access driver, and therefore can only read data stored in formats that can be loaded using SQL*Loader. Most of the files I receive are comma-separated and that suits me just fine, but with a little cleverness, we can even use XML.

Secondly, using external tables you cannot perform DML on your flat files; no deletes, no updates. And frankly, you should not want to. Think of yourself as a Hollywood-style bank robber: go in, grab the data, get out. In other words: query the external table, use that data to populate other tables, and then leave it alone. Don’t start making it an ordinary working part of your application; that’s just crazy.

There is a good reason for this, and that brings us to our third restriction: you cannot index external tables. This means, of course, that you should not expect an external table to perform at the speed of an ordinary table. So be a bank robber: go in, grab the data to populate other tables, and leave.

Finally, and this isn’t exactly a restriction per se, the flat file that the external table is built upon must be located somewhere accessible to Oracle. As you know, directories are Oracle’s means of mapping OS paths. So ensure your flat file is located in a folder that can be reached by Oracle directory, and that all the read privileges are present and correct.

Creating an External Table

To create an external table we use the following syntax: CREATE TABLE … ORGANIZATION EXTERNAL. The CREATE TABLE section of the statement is identical to the usual syntax that you would use to create a table: and the ORGANIZATION EXTERNAL section is made up of the access parameters (also known as the opaque format spec) and information about the location of the source file.

Let’s shine some light on the opaque format spec. The access parameters are made up of record format information, field definitions and any column transformations.

The record_format_info clause is optional. When present it must begin with the RECORDS keyword.

These are some of the subclauses you may wish to use:

- DELIMITED BY: This subclause expresses how the fields are delimited and terminated.

- LOAD WHEN: Using this subclause we can supply a conditional statement by which the decision to load or not load a record is made.

- BADFILE: Specifies a file (including a valid directory) to which erroring records should be written. If a record does not pass the LOAD WHEN condition it is not written to the bad file. Instead there is a subclause, DISCARDFILE, that specifies where such records should be logged.

- LOGFILE: A valid Oracle directory and file name for all logging.

- SKIP: This specifies a number of records that should be skipped before loading begins. If, for example, your csv begins with a header record, you will want to skip the first record.

The field_definitions clause details the fields in the data file and outlines how they build up the record. This clause must begin with the FIELDS keyword.

- ENCLOSED BY: Shows the string(s) that encapsulates each field.

- TERMINATED BY: Specifies the string that terminates the fields or the keyword WHITESPACE.

The column transformations outline any transformations that should be applied to each column. Transformations are specified column-by-column.

- NULL: If you apply the NULL transform, the column will be set to null irrespective of what is in the data file.

- CONSTANT: With the CONSTANT transform, the column will be set to the specified string for all records.

Example

An example will probably help you make some sense out of my huge spewing of subclauses.

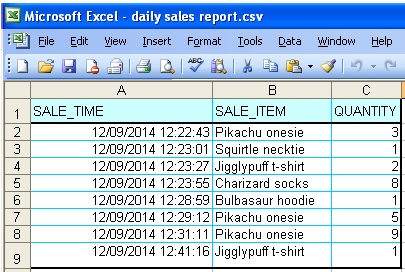

Let’s assume the Sales team create a daily spreadsheet of sales details, and we need to build an external table to query it.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 |

CREATE TABLE ext_daily_sales ( Sale_time DATE, Sale_item VARCHAR2(100), Quantity NUMBER ) ORGANIZATION EXTERNAL (TYPE ORACLE_LOADER DEFAULT DIRECTORY sales_dir ACCESS PARAMETERS ( RECORDS DELIMITED BY NEWLINE LOAD WHEN (quantity > 1) BADFILE sales_dir:'dailysales.bad' LOGFILE sales_dir:'dailysales.log' DISCARDFILE sales_dir:'dailysales-discarded.log' SKIP 1 FIELDS delimited by ',' (sale_time char(20) DATE_FORMAT DATE mask "dd/mm/yyyy hh24:mi:ss", Sale_item, Quantity) ) LOCATION ('daily sales report.csv') ); |

There are a few things to note. The RECORDS and FIELDS keywords must be present – unless you completely exclude the record_format_info and/or field_definition clauses. Secondly, your file names – as used, for example, in the badfile and logfile subclauses – should contain your Oracle directory name concatenated to your file name by means of a colon. If you omit the directory name, the file will be created in your DEFAULT DIRECTORY.

XML

At first glance there seems to be very little leeway at the heart of the external table functionality; however, with just a little cunning, we can use it to read XML files.

Assume the Daily Sales Total report that we receive is an XML file. We could create an external table upon it.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 |

CREATE TABLE ext_sales_totals ( Sale_date DATE, Sale_item VARCHAR2(100), Quantity NUMBER ) ORGANIZATION EXTERNAL (TYPE ORACLE_LOADER DEFAULT DIRECTORY sales_dir ACCESS PARAMETERS ( RECORDS DELIMITED BY '</Item>' LOGFILE 'sales_totals.log' FIELDS ( Sale_date CONSTANT SYSDATE, Sale_item CHAR(100) ENCLOSED BY '<Name>' AND '</Name>', Quantity CHAR(5) ENCLOSED BY '<Quantity>' AND '</Quantity>') ) LOCATION ('sales totals.xml') ); |

Modifying External Tables

The sands can shift beneath the feet of an external table quite easily. At the close of each business day, the sales team create new versions of daily sales report.csv and sales totals.xml and overwrite the old copies of these files. That way, when we rerun our scripts the next morning, we have a whole new suite of data to upload.

If, however, you need to make structural – DDL – amendments to your external table, this is also possible. If, for example, your default directory or your file name changes, you can issue the following alter statements.

|

1 2 |

ALTER TABLE ext_daily_sales DEFAULT DIRECTORY new_sales_dir; |

|

1 2 |

ALTER TABLE ext_sales_totals LOCATION ('new sales totals.xml'); |

Load comments