Why should a DBA learn PowerShell ? It is all about solutions. In this article, I want to explain how one can integrate PowerShell, TSQL, SQL Jobs and SQL WMI alerts into a complete solution. I will go further in this topic in my new written project along three great friends. Stay Tuned, as we will soon have a complete guide about day-to-day solutions for the DBA, using PowerShell and SQL Server.

When you read about using PowerShell and SQL Server, you are usually learning about the way that you use PowerShell to access SQL Server. Sometimes, instead, you’ll want to use PowerShell directly from SQL Server to create solutions. You may want to do it from TSQL, getting data back in a form that can then be inserted into a table, or execute it on the server from SSMS. You might want to run PowerShell scripts from the SQL Server Agent, or to set up sophisticated alerts using WMI that then execute jobs that are written in PowerShell. I’ll be showing you how to do all this; but let’s take things in easy stages.

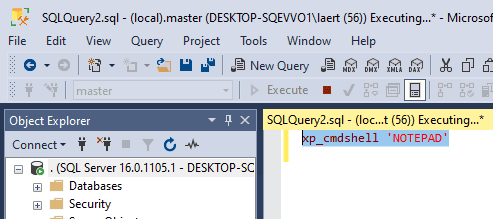

Running PowerShell scripts from SSMS Query Editor

Did you know that you can run your PowerShell cmdlets and functions, along with their parameters, very simply from the Management Studio (SSMS) Query Editor, executing them on the server? Yeah, by using xp_cmdshell. Before I start showing you how to use xp_cmdshell to run PowerShell cmdlets from within TSQL, I must make you aware that, by enabling xp_cmdshell on a server, you’re creating potential security issues. There are good reasons why xp_CmdShell is disabled by default.

When using xp_CmdShell to run PowerShell in SSMS , you’ll Just need to remember three things:

- Use ‘Results to text’ in SSMS, from the menu item Query -> ‘Results to…’ to see an easily-readable output from PowerShell.

- You are not running PowerShell on your own machine, but on the server that is hosting the SQL Server instance you’re connected to. The userID which you are using to open a connection will need execute permissions. You’ll need to make sure that your PowerShell profile has all the functions installed that you need to run. What account is this? If you’re using a SysAdmin account, then the PowerShell command will be executed under the ‘SQL Server service account’, which is the security context in which the SQL Server service is running. Otherwise, you’ll need to set up a proxy account on that server using sp_

xp_CmdShell_proxy_account - Make sure that PowerShell is installed to the correct version on the host server.

Once you’ve enabled xp_CmdShell, and you have the necessary permissions to use it, PowerShell can give you valuable information easily. I’ll show you a couple of examples: Getting Disk space, and seeing what services are running.

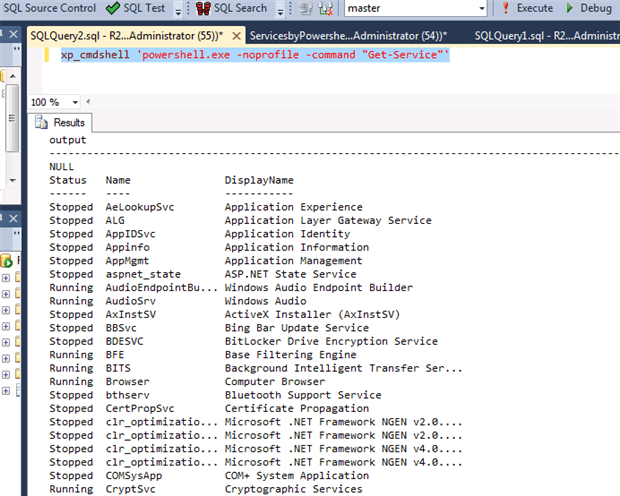

Seeing what services are running

We’ll start simply by listing all the services on the server.

|

1 |

xp_cmdshell 'PowerShell.exe -noprofile Get-Service' |

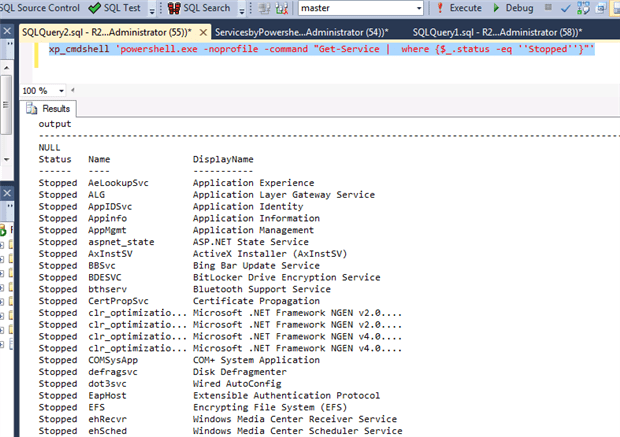

You have the full list of services, whatever their status. What if you wanted only those that had stopped? You’ll need to combine two CmdLets to do this in a pipeline , so it is now time to use a command-line parameter to run the command, the -Command parameter

|

1 |

xp_cmdshell 'PowerShell.exe -noprofile -command "Get-Service | where {$_.status -eq ''Stopped''}"' |

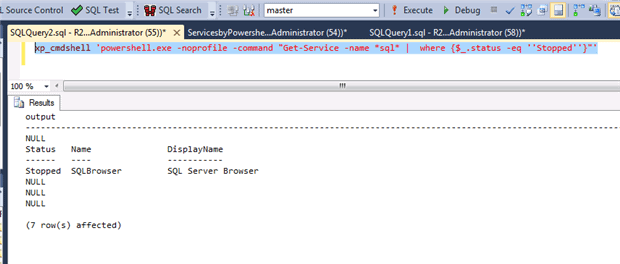

And with only a small change we can see all the SQL services that have stopped.

|

1 |

xp_cmdshell 'PowerShell.exe -noprofile -command "Get-Service -name *sql* | where {$_.status -eq ''Stopped''}"' |

But you can also query a server remotely once it has been configured :

|

1 |

xp_cmdshell 'PowerShell.exe -noprofile -command "Get-Service -computername ObiWan -name *sql* | where {$_.status -eq ''Stopped''}"' |

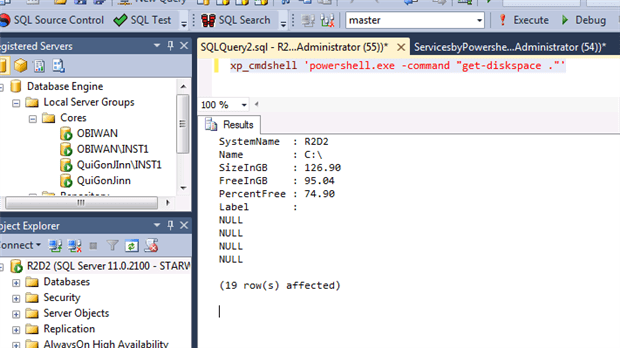

Getting the free disk space

Here, we are using a Get-Diskspace function (@sqlvariant) for the host of the SQL Server instance. This requires a function that you can download at the bottom of the article, and which will need to be placed on the server.

|

1 |

xp_cmdshell 'PowerShell.exe -command "get-diskspace ."' |

To get the disk space for a different, remote, server, for example, use …

|

1 |

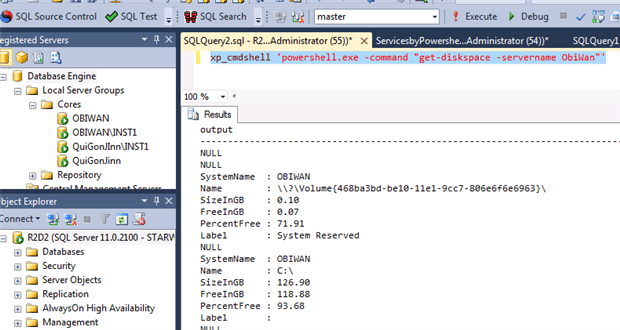

xp_cmdshell 'PowerShell.exe -command "get-diskspace -servername ObiWan"' |

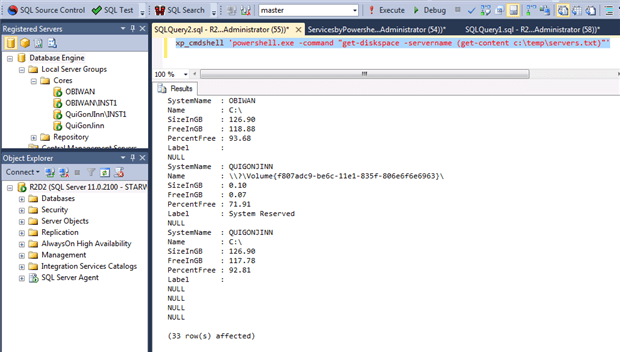

To get the disk space for all the Servers into a file called Servers.txt:

|

1 |

xp_cmdshell 'PowerShell.exe -command "get-diskspace -servername (get-content c:\temp\servers.txt)"' |

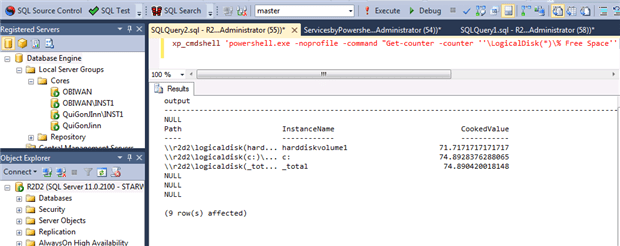

Alternatively, to get just the percentage disk space, you can also use Get-Counter and \LogicalDisk(*)\% Free Space to get all counter instances. You can do this locally, for the host of your instance ….

|

1 |

xp_cmdshell 'PowerShell.exe -noprofile -command "Get-counter -counter ''\LogicalDisk(*)\% Free Space'' | select -expand countersamples"' |

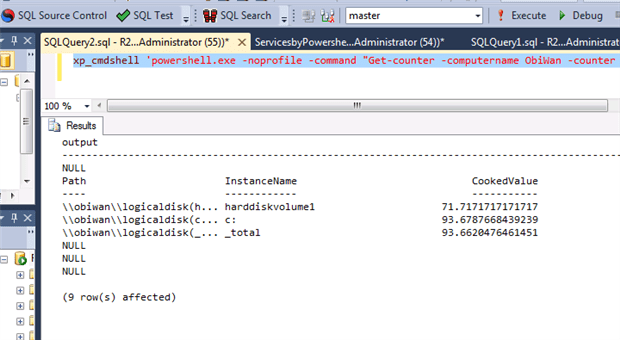

…or for a remote server

|

1 |

xp_cmdshell 'PowerShell.exe -noprofile -command "Get-counter -computername ObiWan -counter ''\LogicalDisk(*)\% Free Space'' | select -expand countersamples"' |

So can you do more than this and run scripts the same way? Well, no, because there is a limitation. You can’t use the ” (double-quote) character, which is essential for PowerShell, because it is used in the command-line parameter to delimit the script-fragment being executed. To do this, you’ll need to save the script as a file and execute that.

Returning data from PowerShell

If you run a PowerShell CmdLet in xp_cmdshell, how do you get the data back into SQL as tabular data? We’ve shown you the output, but it is not immediately obvious as to how to read it. Xp_cmdshell actually returns a table consisting of a single column called ‘output’. You can insert it into a table using INSERT..EXEC, but INSERT..EXEC has certain restrictions. You cannot nest them and it cannot contain an output clause.

However we can use this method to return an XML representation of the PowerShell objects being returned. All we then have to do is to shred it into a relational form and create a table.

Taking a more refined version of the previous PowerShell command

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 |

DECLARE @XML_as_String VARCHAR(MAX) DECLARE @Percentage XML --create a table variable for the data to go into, preserving the order of insertion DECLARE @XML TABLE (TheXML VARCHAR(2000), theOrder INT IDENTITY(1,1) PRIMARY KEY) --insert the XML into the table, line by line INSERT INTO @XML(TheXML) EXECUTE xp_cmdshell '@PowerShell -noprofile -command "Get-counter -counter ''\LogicalDisk(*)\% Free Space'' | select -expand countersamples | select @{Name=''Disk''; e={$_.InstanceName}}, @{Name=''%FreeSpace''; e={''{0:n2}'' -f $_.CookedValue}}|ConvertTo-XML -As string" ' --now assemble the XML as a string in the correct order SELECT @XML_as_String=COALESCE(@XML_as_String,'') + theXML FROM @XML WHERE theXML IS NOT NULL ORDER BY theOrder --now save it into an XML variable SELECT @Percentage = @XML_as_String -- the entity-attribute-value result that comes from the XML has to be pivoted SELECT MAX (CASE WHEN attribute='Disk' THEN VALUE ELSE '' END ) AS [Disk], CAST (MAX (CASE WHEN attribute='%FreeSpace' THEN VALUE ELSE '' END )AS numeric(9,2)) AS [% Free Space] FROM ( SELECT --shred the XML into an EAV table along with the number of the object in the collection [property].value('(./text())[1]', 'Varchar(20)') AS [Value], [property].value('@Name', 'Varchar(20)') AS [Attribute], DENSE_RANK() OVER (ORDER BY [object]) AS unique_object FROM @Percentage.nodes('Objects/Object') AS b ([object]) CROSS APPLY b.object.nodes('./Property') AS c (property) ) PowerShell GROUP BY UNIQUE_object |

This will give the result

|

1 2 3 4 5 6 |

Disk % Free Space -------------------- --------------------------------------- c: 91.38 d: 79.61 _total 83.53 (3 row(s) affected) |

Which any DBA will recognise as data! What have we done here? We have chosen to create an XML representation of the report which was then returned to SQL Server line by line. We had to re-assemble it into an XML file and shred it in the way that we needed. This is laborious for an ad-hoc request but it makes a lot of sense for a scheduled monitoring job.

Running Scripts That has Posh Jobs on a SQL Agent Job

PowerShell can be run from the scheduler to do regular jobs such as ETL. Although this generally takes little more effort than testing it in the PowerShell ISE, just sometimes PowerShell gives you a culture-shock. Sometimes things happen that you don’t expect, even though they make sense when you think about it later. For example, I recently developed a script that created a lot of PowerShell Jobs. For some reason, when I ran it in the PowerShell Command-line console, it all worked fine. When I then ran it on the SQL Server scheduler, using a CMDExec jobtype, calling PowerShell, nothing happened: and there was no error message in Jobs History.

The script invoked a process that retrieved all the windows updates applied to a list of servers in the past 24 hours, and saved the results into a SQL Server Table within a repository server. It was using runspaces, though what I’ll describe will be useful for anyone that is using background jobs. I was using PowerShell to create jobs that ran in parallel, one for each server I was getting information from. I was getting a list of servers from a file called ‘c:\temp\Servers.txt’ and for each server name, I was starting a background job on the local computer. This job then obtained the windows update information for the server which was then filtered by a Where cmdlet for only those within the past day. The results were reported back to a tbl_WindowsPatches table in a SQL Server repository. The code is this:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 |

Get-Content c:\temp\Servers.txt | % { Start-Job -Name $_ -InitializationScript{Ipmo Functions -Force -DisableNameChecking} ` -scriptblock { ( Get-WindowsUpdates -ComputerName $args[0] ` | Where {$_.UpdateDate -ge ((Get-Date).adddays(-1))} ` | select CurrentDate, ` ServerName, ` UpdateClientApplicationID, ` UpdateDate,` UpdateDescription, ` UpdateTitle ) | Out-DataTable ` | Write-DataTable -ServerInstance R2D2 ` -Database SQLServerRepository -TableName tbl_WindowsPatches } -ArgumentList $_ } |

You can download the Get-WindowsUpdates , Out-Datatable and Write-DataTable functions at the top of this article in the Functions.psm1 file . In this case I have a module called Functions that join all these functions . As I am using PowerShell jobs and it runs in another runspace, these functions are not visible. So I need to explicit load them in , in the functions module, in the line

$_ -InitializationScript{Ipmo Functions -Force -DisableNameChecking}

Why should it have worked in the PowerShell console, but not when run from the SQL Server Agent? My first test was to create a .bat and run :

|

1 |

PowerShell.exe "C:\Temp\Automation\GetWindowsUpdates.ps1" |

Ok. So what is happening ? Nothing was stored. My script was creating the jobs, each of which was running in its own independent runspace, and then it was closing the PowerShell session. The script seemed to run in an open command console, but not when it was closed immediately after the script was run. Were these separate jobs being closed prematurely when the parent session was closed?

To check that this was the problem, I added, to the line in the .bat file that executed PowerShell, the parameter –noexit so as to prevent the closure of the session…

|

1 |

PowerShell.exe -noexit "C:\Temp\Automation\GetWindowsUpdates.ps1" |

… and it worked. Why? My script was creating the jobs, each of which was running in its own independent runspace, and then closed the PowerShell session. The PowerShell jobs hadn’t been completed when the main session was closed, so nothing was returned to the table, but no error was raised. Why was the session closed before the jobs had completed? It was because the PowerShell jobs run in another runspace, but within the same session that was called. The session must not be closed until all PowerShell jobs finish.

What do I need to do? All I have to do is to wait until all PowerShell Jobs are finished, it is as simple as that. I’d forgotten to add a ‘wait-job *’!

|

1 2 |

Get-Job | Wait-Job | Out-Null Remove-Job -State Completed |

The final code is :

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 |

Get-Content c:\temp\Servers.txt | % { Start-Job -Name $_ -InitializationScript{Ipmo Functions -Force -DisableNameChecking} ` -scriptblock { ( Get-WindowsUpdates -ComputerName $args[0] ` | Where {$_.UpdateDate -ge ((Get-Date).adddays(-1))} ` | select CurrentDate, ` ServerName, ` UpdateClientApplicationID, ` UpdateDate,` UpdateDescription, ` UpdateTitle ) | Out-DataTable ` | Write-DataTable -ServerInstance R2D2 ` -Database SQLServerRepository -TableName tbl_WindowsPatches } -ArgumentList $_ } Get-Job | Wait-Job | Out-Null Remove-Job -State Completed |

I save this code into C:\Temp\Automation\GetWindowsUpdates.ps1 on the server,

The Command to run as a CMDExec SQL Agent Step is :

|

1 |

PowerShell.exe "C:\Temp\Automation\GetWindowsUpdates.ps1" |

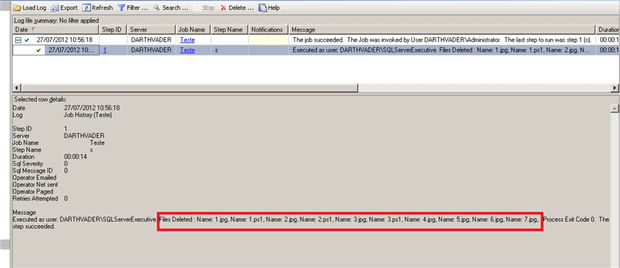

Outputting SQL Agent Job PowerShell in Job History

Although I’ve shown you how to get data from a PowerShell job that is running in a batch, there are times when all you need is a record of what a script did, so you can check afterwards

If, for example, you have a SQL Agent PowerShell Job that deletes old files in a log shipping process, and you want to output a list of the files that were removed in the Job History you can just to use write-output. This script shows you what happens:

|

1 2 3 4 5 6 7 8 |

$FilesRemoved = 'Files Deleted : ' gci "c:\test\*.*" | foreach { $FilesRemoved += "Name: {0}, " -f $_.name Remove-Item $_.fullname } write-output $FilesRemoved |

Then if you look at your Job History, you’ll then see the list.

The trick to get this to work with SQL Agent PowerShell Job, is that instead of using

|

1 |

$FilesRemoved += Name: $($_.name)" |

you need to use format specifier :

|

1 |

"Name: {0}, " -f $_.name |

Triggering PowerShell jobs with SQL WMI Alerts

This solution is using PowerShell scripts of course, SQL Server Jobs and a SQL WMI Alert

Imagine that you have a download folder on a SQL Server host that has several files downloaded automatically by FTP. It is called FTPDownload. A file with a specific name is downloaded once a day. The contents of this file must be loaded into a staging table in another SQL Server.

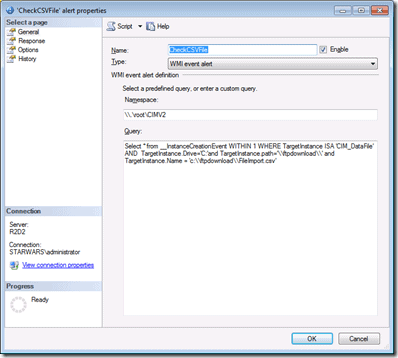

Firstly, lets create the Windows Query Language (WQL) query to monitor the specific file in a specific folder : for us the folder is c:\FTPDownload and the file is FileImport.CSV

|

1 |

@wmi_query=N'Select * from __InstanceCreationEvent WITHIN 300 WHERE TargetInstance ISA ''CIM_DataFile'' AND TargetInstance.Drive=''C:''and TargetInstance.path=''\\ftpdownload\\'' and TargetInstance.Name = ''c:\\ftpdownload\\FileImport.csv''', |

To a complete explanation about the WMI and WQL I suggest you read the excellent ebook from my good friend and PowerShell Jedi Ravikanth Chaganti –WMI Query Language via PowerShell

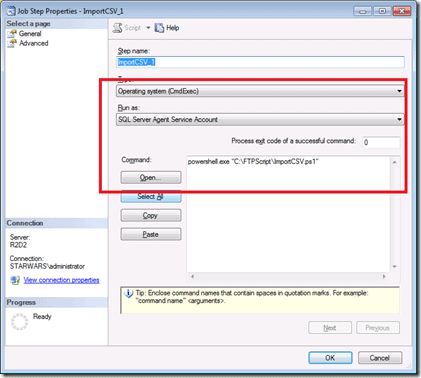

Then Lets create the Job called IMPORTCSV with a PowerShell code called importCSV.ps1 on c:\FTPScripts (you’ll need to get these CmdLets from SQLPSX)

|

1 2 3 4 5 6 7 8 9 10 11 12 |

try { $DataImport = Import-Csv -Path "c:\FTPDownLoad\FileImport.csv" -ErrorAction Stop $DataTable = Out-DataTable -InputObject $DataImport Write-DataTable -ServerInstance YOURSERVER -Database YOURDATABASE -TableName YOURTABLE -Data $DataTable $Msg = "FileImport.csv successfully imported" Rename-Item -Path "c:\FTPDownLoad\FileImport.csv" -NewName "c:\FTPDownLoad\FileImport_$(Get-date -format 'yyyyMMdd').csv" Write-Output $Msg } catch { $ex = $_.Exception Write-Error "$ex.Message" throw "Failure" } |

Now it is time to create the SQL WMI Alert to monitor the arrival of this file in the FTP folder, based on our WQL :

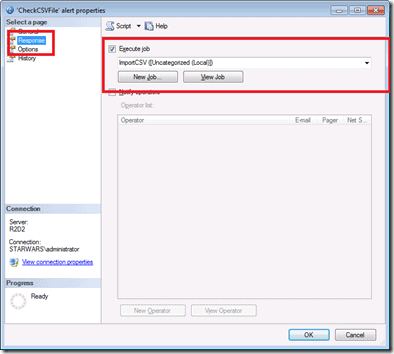

Now let’s set the response to the alert to execute the IMPORTCSV job

Here is the code that creates the alert if you’d rather do it via TSQL and you already have the ID of the job that you wish to execute when the alert is fired.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 |

USE [msdb] GO /****** Object: Alert [CheckCSVFile] Script Date: 8/9/2012 8:47:15 PM ******/ EXEC msdb.dbo.sp_delete_alert @name=N'CheckCSVFile' GO /****** Object: Alert [CheckCSVFile] Script Date: 8/9/2012 8:47:15 PM ******/ EXEC msdb.dbo.sp_add_alert @name=N'CheckCSVFile', @message_id=0, @severity=0, @enabled=1, @delay_between_responses=0, @include_event_description_in=0, @category_name=N'[Uncategorized]', @wmi_namespace=N'\\.\root\CIMV2', @wmi_query=N'Select * from __InstanceCreationEvent WITHIN 300 WHERE TargetInstance ISA ''CIM_DataFile'' AND TargetInstance.Drive=''C:''and TargetInstance.path=''\\ftpdownload\\'' and TargetInstance.Name = ''c:\\ftpdownload\\FileImport.csv''', @job_id=N'990ef94a-a96d-41f2-809d-323c5e60d375' GO |

And all is done . Every time that a file called Fileimport.csv is created on folder c:\FTPDownload then, 5 minutes later, (the reason for the ‘WITHIN 300’ clause in the WQL), the alert is fired and the job is then run. Why 300? Just to allow time for the file to arrive and be written to disk.

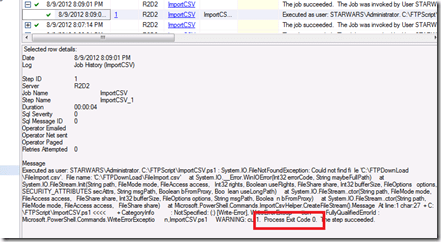

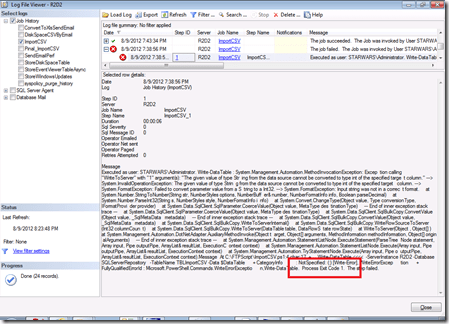

If you have some problem with the file and the routine generates a error, the job will finish with no errors even you using Try-Catch. This is because the exit code is 0. The error will be recorder on the job history if you look for it, but that job should finish with an error being flagged to SQL Server Agent.

How to solve this ? Just add the line ‘throw “Failure” ‘ into the catch block and then change the exit code to 1 :

|

1 2 3 4 5 |

} catch { $ex = $_.Exception Write-Error "$ex.Message" throw "Failure" } |

and the Job will finish with an error :

Now that you’ve got that running, you can send an email using PowerShell or by the SQL job, informing whether the job was successful . it is up to you!

For now that is it folks! I hope you guys liked it the Posh DBA series. Some cool stuff are coming .

Acknowledgements:

As usual, I cannot forget the awesome Jedi that is always helping this young Padawan and , of course, everyone that is needing help.

My good friends Ravikanth Chaganti, Shay Levy, my editor Andrew Clarke and the mysterious Sir Phil Factor (thanks for the XML part, Phil) Sir Bob Beauchemin , my brother Mark Broadbent and all people that kindly give their time and knowledge to share.

Load comments