The series so far:

- Storage 101: Welcome to the Wonderful World of Storage

- Storage 101: The Language of Storage

- Storage 101: Understanding the Hard-Disk Drive

- Storage 101: Understanding the NAND Flash Solid State Drive

- Storage 101: Data Center Storage Configurations

- Storage 101: Modern Storage Technologies

- Storage 101: Convergence and Composability

- Storage 101: Cloud Storage

- Storage 101: Data Security and Privacy

- Storage 101: The Future of Storage

- Storage 101: Monitoring storage metrics

- Storage 101: RAID

These days, discussions around storage inevitably lead to the topics of convergence and composability, approaches to infrastructure that, when done effectively, can simplify administration, improve resource utilization, and reduce operational and capital expenses. These systems currently fall into three categories: converged infrastructure, hyperconverged infrastructure (HCI), and composable infrastructure.

Each infrastructure, to a varying degree, integrates compute, storage, and network resources into a unified solution for supporting various types of applications and workloads. Although every component is essential to operations, storage lies at the heart of each one, often driving the entire architecture. In fact, you’ll sometimes see them referred to as data storage solutions because of the vital role that storage plays in supporting application workloads.

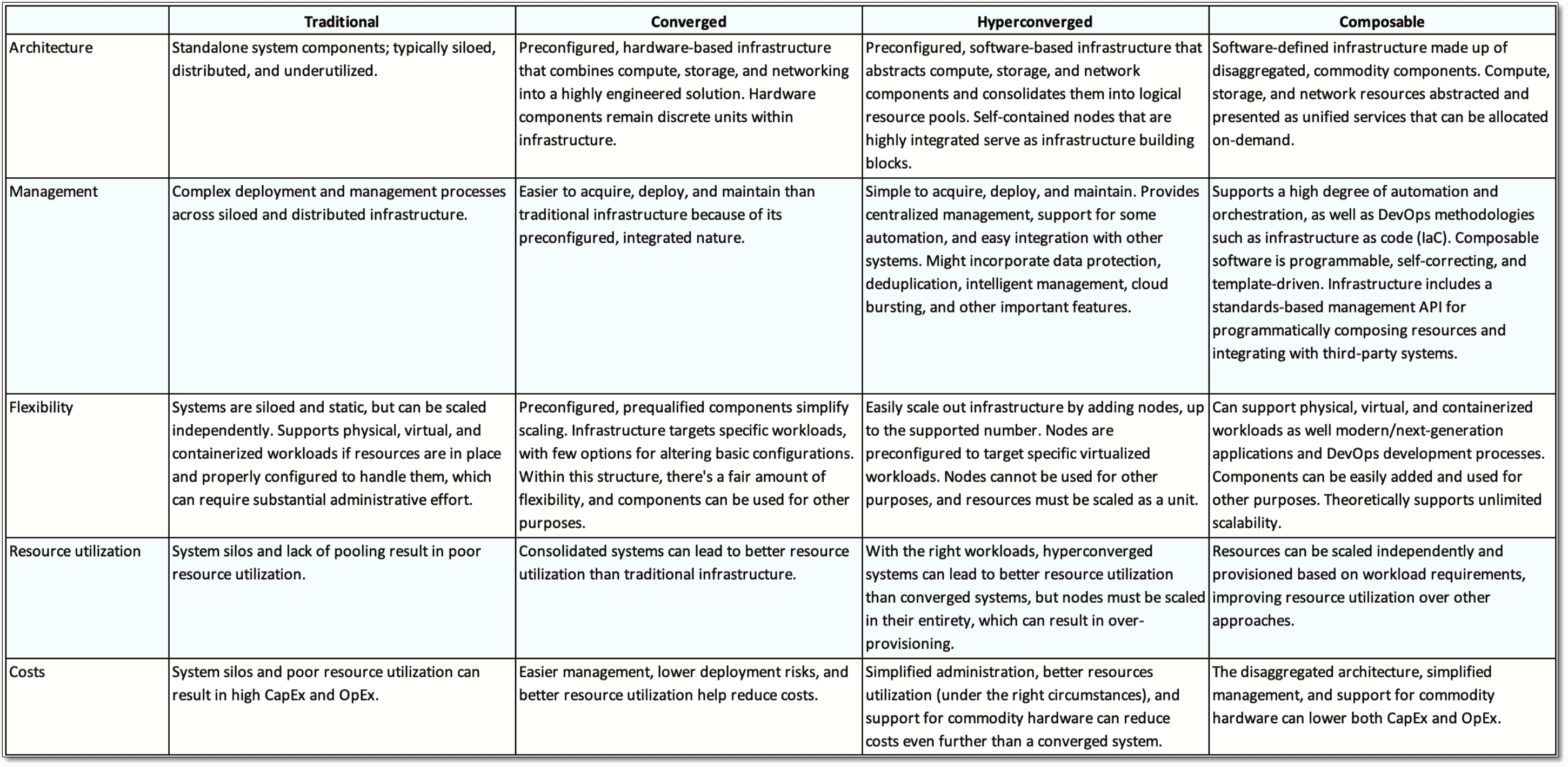

Convergence and composability grew out of the need to address the limitations of the traditional data center, in which systems are siloed and often over-provisioned, leading to higher costs, less flexibility, and more complex management. Converged, hyperconverged, and composable infrastructures all promise to address these issues, but take different approaches to getting there. If you plan to purchase one of these systems, you need to understand those differences in order to choose the most effective solution for your organization.

Before discussing each infrastructure, review this matrix, which summarizes the differences.

Converged Infrastructure

A converged infrastructure consolidates compute, storage, network, and virtualization technologies into an integrated platform optimized for specific workloads, such as a database management system or virtual desktop infrastructure (VDI). Each component is prequalified, preconfigured, and assembled into a highly engineered system to provide a complete data solution that’s easier to implement and maintain than a traditional infrastructure.

The components that make up a converged infrastructure can be confined to a single rack or span multiple racks, depending on the supported workloads. Each component serves as a building block that works in conjunction with the other components to create a unified, integrated platform.

Despite this integration, each component remains a discrete resource. In this way, you can add or remove individual components as necessary, while still retaining the infrastructure’s overall functionality (within reason, of course). In addition, you can reuse a removed component for other purposes, an important distinction from HCI.

A converged infrastructure can support a combination of both hard-disk drives (HDDs) and solid-state drives (SSDs), although many solutions have moved toward all-flash storage. The storage is typically attached directly to the servers, with the physical drives pooled to create a virtual storage area network (SAN).

In addition to the hardware components, a converged infrastructure includes virtualization and management software. The platform uses the virtualization software to create resource pools made up of the compute, storage, and network components so they can be shared and managed collectively. Applications see the resources as pooled capacities, rather than individual components.

Despite the virtualization, the converged infrastructure remains a hardware-based solution, which means it doesn’t offer the agility and simplicity that come with software-defined systems such as hyperconverged and composable infrastructures. Even so, when compared to a traditional infrastructure, a converged infrastructure can help simplify management enough to make it worth serious consideration.

The components that make up a converged infrastructure are deployed as a single platform that’s accessible through a centralized interface for controlling and monitoring the various systems. In many cases, this eliminates the need to use the management interfaces available to the individual components. At the same time, you can still tune those components directly if necessary, adding to the system’s flexibility.

Another advantage to the converged infrastructure is that the components are prevalidated to work seamlessly within the platform. Not only does this help simplify procuring the components, but it also makes it easier to install them into the infrastructure, a process that typically takes only a few minutes. That said, it’s still important to validate any new storage you add to the infrastructure to ensure it delivers on its performance promises. Prevalidated components also reduce deployment risks because you’re less likely to run into surprises when you try to install them.

There are plenty of other advantages as well, such as quick deployments, reduced costs, easy scaling and compatibility with cloud computing environments. Even so, a converged infrastructure is not for everybody. Although you have flexibility within the platform’s structure, you have few options for altering the basic configuration, resulting in less flexibility should you want to implement newer application technologies.

In addition, a converged infrastructure inevitably leads to vendor lock-in. Once you’ve invested in a system, you’ve essentially eliminated products that are not part of the approved package. That said, you’d be hard-pressed to deploy any highly optimized and engineered system without experiencing some vendor lock-in.

If you choose a converged infrastructure, you have two basic deployment options. The first is to purchase or lease a dedicated appliance such as the Dell EMC VxBlock 1000. In this way, you get a fully configured system that you can deploy as soon as it arrives. The other option is to use a reference architecture (RA) such as the HP Converged Infrastructure Reference Architecture Design Guide. The RA provides hardware and configuration recommendations for how to assemble the infrastructure. In some cases, you can even use your existing hardware.

Hyperconverged Infrastructure

The HCI platform takes convergence to the next level, moving from a hardware-based model to a software-defined approach that abstracts the physical compute, storage, and (more recently) network components and presents them as shared resource pools available to the virtualized applications.

Hyperconvergence can reduce data center complexity even further than the converged infrastructure while increasing scalability and facilitating automation. HCI may add features such as data protection, data deduplication, intelligent management, and cloud bursting.

An HCI solution is typically made up of commodity compute, storage, and network components that are assembled into self-contained and highly integrated nodes. Multiple nodes are added together to form a cluster, which serves as the foundation for the HCI platform. Because storage is attached directly to each node, there is no need for a physical SAN or network-attached storage (NAS).

Each node runs a hypervisor, management software, and sometimes other specialized software, which work together to provide a unified platform that pools resources across the entire infrastructure. To scale out the platform, you simply add one or more nodes.
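To make the pooling idea concrete, here is a minimal sketch in Python. It is not how any HCI product is implemented. The `Node` and `Cluster` classes and the node sizes are hypothetical, chosen only to show that every node you add contributes both compute and storage to the shared pools.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Node:
    """One HCI node; the sizes used below are made-up illustration values."""
    name: str
    cpu_cores: int
    storage_tb: float


@dataclass
class Cluster:
    """A cluster that presents its nodes' resources as shared pools."""
    nodes: list = field(default_factory=list)

    def add_node(self, node: Node) -> None:
        # Scaling out: each new node contributes compute and storage together.
        self.nodes.append(node)

    @property
    def pooled_cores(self) -> int:
        return sum(n.cpu_cores for n in self.nodes)

    @property
    def pooled_storage_tb(self) -> float:
        return sum(n.storage_tb for n in self.nodes)


# Start with a three-node cluster of identical (hypothetical) nodes.
cluster = Cluster()
for i in range(3):
    cluster.add_node(Node(f"node-{i + 1}", cpu_cores=32, storage_tb=20.0))

print(cluster.pooled_cores, cluster.pooled_storage_tb)  # 96 60.0
```

Applications would see only the pooled totals, not the individual nodes behind them.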

Initially, HCI targeted small-to-midsized organizations that ran specific workloads, such as VDI. Since then, HCI has expanded its reach to organizations of all sizes, while supporting a broader range of workloads, including database management systems, file and print services, email servers, and specialized solutions such as enterprise resource planning.

Like a converged infrastructure, a hyperconverged system offers a variety of benefits, beginning with centralized management. You can control all compute, storage and network resources from a single interface, as well as orchestrate and automate basic operations. In addition, an HCI solution reduces the amount of time and effort needed to manage the environment and carry out administrative tasks. It also makes it easier to implement data protections while ensuring infrastructure resilience.

An HCI solution typically comes as an appliance that is easy to deploy and scale. An organization can start small with two or three nodes (usually three) and then add nodes as needed, with a minimal amount of downtime. The HCI software automatically detects the new hardware and configures the resources. Upgrades are also easier because you’re working with a finite set of hardware/software combinations.

An HCI solution can integrate with other systems and cloud environments, although this can come with some fairly rigid constraints. Even so, the integration can make it easier to accommodate the platform in your current operations. The solution can also help reduce costs by simplifying administration and improving resource utilization while supporting the use of commodity hardware.

Despite these advantages, an HCI solution is not without its challenges. For example, vendor lock-in is difficult to avoid, as with the converged infrastructure, and HCI systems are typically preconfigured for specific workloads, which can limit their flexibility. In addition, nodes are specific to a particular HCI platform. You can’t simply pull a node out of the platform and use it for other purposes, nor can you add nodes from other HCI solutions.

HCI’s node-centric nature can also lead to over-provisioning. For example, you might need to increase storage but not compute. However, you can’t add one without the other. You must purchase a node in its entirety, resulting in more compute resources than you need. Fortunately, many HCI solutions now make it possible to scale compute and storage resources separately or have taken other steps to disaggregate resources. However, you’re still limited to a strictly defined trajectory when scaling your systems.
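The over-provisioning effect is easy to see with a little arithmetic. The following Python sketch assumes a strictly node-based platform where compute and storage can only be purchased together. The node size and the workload figures are hypothetical values picked for illustration, not numbers from any vendor.

```python
import math


def nodes_for_demand(cores_needed: int, storage_needed_tb: float,
                     cores_per_node: int, storage_per_node_tb: float) -> int:
    """Whole nodes required when compute and storage come only as a bundle."""
    by_cores = math.ceil(cores_needed / cores_per_node)
    by_storage = math.ceil(storage_needed_tb / storage_per_node_tb)
    # The scarcer resource dictates how many nodes must be purchased.
    return max(by_cores, by_storage)


# Hypothetical node size and workload demand (illustration only).
CORES_PER_NODE = 32
STORAGE_PER_NODE_TB = 20.0

cores_needed = 64          # compute demand stays flat
storage_needed_tb = 120.0  # storage demand has grown

nodes = nodes_for_demand(cores_needed, storage_needed_tb,
                         CORES_PER_NODE, STORAGE_PER_NODE_TB)
surplus_cores = nodes * CORES_PER_NODE - cores_needed

print(f"nodes required: {nodes}")                 # nodes required: 6
print(f"surplus cores purchased: {surplus_cores}")  # surplus cores purchased: 128
```

In this made-up scenario, storage alone drives the purchase of six nodes, even though two would cover the compute demand.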

You have three primary options for deploying an HCI solution. The first two are the same as for a converged infrastructure. You can purchase or lease a preconfigured appliance, such as HPE SimpliVity, or you can use an RA, such as Lenovo’s Reference Architecture: Red Hat Hyperconverged Infrastructure for Virtualization. The third option is to purchase HCI software and build the platform yourself. For example, Nutanix offers its Acropolis software for deploying HCI solutions.

Composable Infrastructure

A composable infrastructure pushes beyond the limits of convergence and hyperconvergence by offering a software-defined infrastructure made up entirely of disaggregated, commodity components. The composable infrastructure abstracts compute, storage, and network resources and presents them as a set of unified services that can be allocated on-demand to accommodate fluctuating workloads. In this way, resources can be dynamically composed and recomposed as needed to support specific requirements.

You’ll sometimes see the term composable infrastructure used interchangeably with software-defined infrastructure (SDI) or infrastructure as code (IaC), implying that they are one and the same, but this can be misleading. Although a composable solution incorporates the principles of both, it would be more accurate to say that the composable infrastructure is a type of SDI that facilitates development methodologies such as IaC. In this sense, the composable infrastructure is an SDI-based expansion of IaC.

Regardless of the labeling, the important point is that a composable infrastructure provides a fluid pool of resources that can be provisioned on-demand to accommodate multiple types of workloads. A pool can consist of just a couple of compute and storage nodes or be made up of multiple racks full of components. Organizations can assemble their infrastructures as needed, without the node-centric restrictions typical of HCI.

The composable infrastructure includes intelligent software for managing, provisioning, and pooling the resources. The software is programmable, self-correcting, and template-driven. The infrastructure also provides a standards-based management API for programmatically allocating (composing) resources. Administrators can use the API to control the environment, and developers can use the API to build resource requirements into their applications. The API also enables integration with third-party tools, making it possible to implement a high degree of automation.
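As a rough illustration of template-driven composition, the following Python sketch models a pool of disaggregated resources that can be composed and decomposed programmatically. It is a toy, in-memory model rather than any vendor's management API. The `Template` and `ResourcePool` classes, their methods, and all of the capacities are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Template:
    """Hypothetical template describing the resources a workload needs."""
    name: str
    cpu_cores: int
    storage_tb: int
    nics: int

    def request(self) -> dict:
        return {"cpu_cores": self.cpu_cores,
                "storage_tb": self.storage_tb,
                "nics": self.nics}


class ResourcePool:
    """Toy stand-in for a composable platform's disaggregated resource pools."""

    def __init__(self, cpu_cores: int, storage_tb: int, nics: int) -> None:
        self.free = {"cpu_cores": cpu_cores, "storage_tb": storage_tb, "nics": nics}
        self.composed = {}

    def compose(self, template: Template) -> None:
        """Allocate resources for a template, or raise if the pool falls short."""
        if template.name in self.composed:
            raise ValueError(f"{template.name} is already composed")
        request = template.request()
        short = [kind for kind, amount in request.items() if amount > self.free[kind]]
        if short:
            raise RuntimeError(f"insufficient resources: {', '.join(short)}")
        for kind, amount in request.items():
            self.free[kind] -= amount
        self.composed[template.name] = template

    def decompose(self, name: str) -> None:
        """Release a composed system's resources back to the pool."""
        template = self.composed.pop(name)
        for kind, amount in template.request().items():
            self.free[kind] += amount


pool = ResourcePool(cpu_cores=128, storage_tb=200, nics=8)

# Compose resources for one workload, then recompose them for another.
pool.compose(Template("ai-training", cpu_cores=96, storage_tb=150, nics=4))
print(pool.free)  # {'cpu_cores': 32, 'storage_tb': 50, 'nics': 4}

pool.decompose("ai-training")
pool.compose(Template("vdi", cpu_cores=64, storage_tb=40, nics=2))
print(pool.free)  # {'cpu_cores': 64, 'storage_tb': 160, 'nics': 6}
```

Because each resource type is tracked separately, a template can ask for a lot of storage and little compute, or the reverse, without being tied to a fixed node size.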

A composable infrastructure can support applications running on bare metal, in containers, or in VMs. The infrastructure’s service-based model also makes it suitable for private or hybrid clouds and for workloads that require dynamic resource allocation, such as artificial intelligence (AI). In theory, a composable infrastructure could be made up of any commodity hardware components, and those components could span multiple locations. In reality, today’s solutions come nowhere close to achieving this level of agility, but like SDI, this remains a goal for many.

That said, today’s composable infrastructure solutions still offer many benefits, with flexibility at the top of the list. The infrastructure can automatically and quickly adapt to changing workload requirements, run applications in multiple environments (bare metal, containers, and VMs), and support multiple application types. This flexibility also goes hand-in-hand with better resource utilization. Resources can be scaled independently and provisioned based on workload requirements, without being tied to predefined blocks or nodes.

The composable platform also simplifies management and streamlines operations by providing a single infrastructure model that’s backed by a comprehensive API, which is available to both administrators and developers. IT teams can add components to the infrastructure in plug-and-play fashion, and development teams can launch applications with just a few clicks.

The composable infrastructure makes it possible to compose and decompose resources on demand, while supporting a high degree of automation and orchestration, as well as DevOps methodologies such as IaC. These benefits—along with increased flexibility and better resource utilization—can lead to lower infrastructure costs, in terms of both CapEx and OpEx.

However, the composable infrastructure is not without its challenges. As already pointed out, these systems have a long way to go to achieve the full SDI vision, leaving customers subject to the same vendor lock-in risks that come with converged and hyperconverged solutions.

In addition, the composable infrastructure represents an emerging market, with the composable software still maturing. The industry lacks agreed-upon standards or even a common definition. For example, HPE, Dell EMC, Cisco, and Liqid all offer products referred to as composable infrastructures, but those products are very different from one another, with each vendor putting its own spin on what is meant by composability.

No doubt the market will settle down at some point, and we’ll get a better sense of where composability is heading. In the meantime, you already have several options for deploying a composable infrastructure. You can purchase an appliance that comes ready to deploy, use a reference architecture like you would a blueprint, or buy composable software and build your own. Just be sure you do your homework before making any decisions so you know exactly what you’re getting for your money.

Converged to Composable and Beyond

Choosing a converged or composable infrastructure is not an all-or-nothing prospect. You can mix and match across your organization as best meets your requirements. For example, you might implement HCI systems in your satellite offices but continue to use a traditional infrastructure in your data center. In this way, you can easily manage the HCI platforms remotely and reduce the time administrators need to spend at those sites, while minimizing the amount of space being used at those locations.

Data and storage will play a pivotal role in any infrastructure decisions. To this end, you must take into account multiple considerations, including application performance, data protection, data quantities, how long data will be stored, expected data growth, and any other factors that can impact storage. You should also consider integration with other systems, which can range from monitoring and development tools to hybrid and public cloud platforms.

Of course, storage is only part of the equation when it comes to planning and choosing IT infrastructure. You must also consider such issues as deployment, management, scalability, resource consolidation, and the physical environment. But storage will drive many of your decisions, and the better you understand your data requirements, the more effectively you can choose an infrastructure that meets your specific needs.
