- Failure #1: Not securing the physical environment

- Failure #2: Not protecting the server environments

- Failure #3: Implementing inadequate network security

- Failure #4: Not updating and patching your systems

- Failure #5: Maintaining a large surface attack area

- Failure #6: Using improper authentication

- Failure #7: Assigning the wrong service accounts

- Failure #8: Failing to control access to SQL Server resources

- Failure #9: Failing to encrypt sensitive data

- Failure #10: Following careless coding practices

- Failure #11: Not verifying SQL Server implementations

- Failure #12: Failing to audit your SQL Server instances

Failure #1: Not securing the physical environment

A database team might go through extraordinary measures to protect its SQL Server instances and the data they contain, but not necessarily extend that diligence to the physical environment, often because the assets themselves are not in the team’s control. Yet physical devices-such as servers, backup drives, or disks in a storage area network (SAN)-can be compromised just like any software or network component, so whoever is in charge of those devices better take heed.

All it takes is one disgruntled employee with the access to the physical assets to turn an organization upside down. Before anyone knows what happens, the individual can walk off with a backup drive full of credit card numbers, health records, company secrets, or other sensitive information, resulting in permanent damage to the organization’s reputation and financial well-being. Even if the data is encrypted, an employee with the necessary privileges, access to the security certificates, and a bit of know-how can make full use of that data on the open market.

Organizations serious about data security must implement defense in depth strategies that protect all layers of the data infrastructure, including the physical components, whether from inside threats or those coming from outside the organization. Every server and disk that hosts a SQL Server instance or data file must be physically protected so that only those who absolutely need access to those devices can get at them. That includes devices used for replications, mirrored databases, backups, or log files. The number of workers who have access to the physical devices should be strictly limited, with security measures scrutinized and updated regularly.

Another challenge, perhaps even more difficult to address, is trying to “enlighten” those wayward employees with privileged access who are careless with their desktops and laptops, leaving them unattended and unlocked for varying lengths of time. Anyone with less than honorable intentions can stroll along and change or copy sensitive data with a little bit of insight into the systems, not only making the careless user look bad, but again putting the company’s reputation and financial happiness on the line. If the imprudent user is a creature of habit, the miscreant thief has an even easier time getting at the sensitive data. Given the possibility of such a scenario, the only hope for the organization is employee education and IT policies that try to mitigate such risks as much as possible.

Failure #2: Not protecting the server environments

SQL Server can spread across environments far and wide, its impact reaching into machines and folders on the other side of the known network. Installation files might be located on a nearby server, log files on another device, data files on the new SAN down the hall, and backups in a remote data center. Even within the host, SQL Server also makes use of operating system (OS) files and the registry, spreading its impact even further.

Anything SQL Server touches should be protected to minimize the potential for a SQL Server instance or its data being compromised. For example, a database might contain sensitive data such as patient health records. Some of that data can end up in the log files at any given time, depending on the current operations. Even if all other files are carefully protected, a less-then-secure log file can have far reaching implications if the network or attached devices are compromised.

Database and IT teams should take every precaution to protect the files and folders that support SQL Server operations, including OS files, SQL Server installation files, certificate stores, data and log files-everything. Administrators should not assign elevated privileges to any related folder and should not share any of those folders on the network. Access to the files and folders should be limited to the greatest degree possible, granting access only to those accounts that need it. Teams should always keep in mind the principles of least privilege when it comes to NTFS permissions, that is, granting access only to the degree absolutely necessary and nothing more. The same goes for the machine as a whole. Don’t assign administrative access to a server when a user requires only limited privileges.

Failure #3: Implementing inadequate network security

When you consider all the ways a network can be hacked, it’s remarkable we can do anything safely. Weak network security can lead to viruses and network attacks and compromised data of unimaginable magnitude. Cyber-criminals able to exploit network flaws are not only able to inflict damage, but also download boatloads of data before being detected, whether credit card information, social security numbers, company strategies, or a host of other types of sensitive data.

An organization must do everything possible to protect its network and the way SQL Server connects with that network. One place to start is to ensure that you’re running the necessary antivirus software on all your systems and that the virus definitions are always up to date. Also be sure that firewalls are enabled on the servers and that the software is current. Firewalls provide network protection at the OS level and help to enforce an organization’s security policies.

In addition, configure SQL Server to use TCP/IP ports other than default one (such as 1433 or 1434). The default ports are well known and are common targets for hackers, viruses, and Trojan horses. In addition, configure each instance to use a static port, rather than allowing the number to be assigned dynamically. You might not be able to ward off all attacks, but this way you’re at least obscuring the pathways.

To further obscure your SQL Server instance, consider disabling the Browser service to prevent snoopers from being able to search for it on the network. Clients can still access the instance as long as their connections specify the correct port number or named pipe. If disabling the Browser service is not an option, at least hide any instances you don’t want easily discovered, once again forcing any client connections to have to provide the port number or named pipe.

In addition, you should disable the network protocols that are not needed. For example, it’s unlikely you need to have both the Named Pipes and TCP/IP protocols enabled. In addition, you should grant the CONNECT permission only the endpoints or logins that require it; for all others, explicitly deny the permission. If possible, avoid exposing the server that’s running SQL Server to the Internet altogether.

Failure #4: Not updating and patching your systems

Some of you might recall what happened in 2003. The SQL Slammer computer worm infected over 75,000 servers running SQL Server. Within 10 minutes of deployment, the worm infected more than 90% of vulnerable computers and took down thousands of databases. Slammer exploited a buffer-overflow vulnerability in SQL Server to carry out an unprecedented denial-of-service (DoS) attack. The rest is cyber history.

The interesting part of this story is that the bug had actually been discovered long before the attack. In fact, Microsoft provided a fix six months in advance of the DoS onslaught. The organizations that got hit by Slammer had failed to patch their SQL Server instances.

Because of the onslaught of threats aimed at SQL Server and other systems, companies like Microsoft are regularly releasing service packs and patches and security fixes and other types of updates to address any new found vulnerabilities. What these vendors can’t do, however, is force their customers to apply the updates.

In all fairness, there’s good reason why many DBAs and other administrator types can be slow to getting around to patching their systems. Applying these fixes can be a big deal. They take time and testing and careful consideration. If you apply an update to a production server without first testing it you risk taking down the entire system. Overworked DBAs must have the time in their schedules and the resources necessary to apply these updates right.

Yet such incidents as the Slammer debacle demonstrate how critical it is to keep your SQL Server instances up to date. Not only can such attacks lead to excessive resource consumption, server downtime, and corrupt data, but also to even more serious concerns. The information gathered from a successful DoS attack can be used to launch subsequent attacks, some of which might be aimed at getting at the actual data.

Microsoft is constantly releasing critical updates and fixes for both Windows and SQL Server. You can manually download and install them, or set up your systems to update the software automatically, if you can afford to take such risks. More often than not, you’ll want to first test the update and schedule a time to implement it into a production environment. It is no small matter, certainly, but keeping Windows and SQL Server current is one of the most effective strategies you can employ for protecting your system’s data.

Failure #5: Maintaining a large surface attack area

We’re not talking rocket science here, just common sense. The more SQL Server features and services you have enabled, the larger the surface attack area and the more vulnerable your system is to potential attacks. When setting up SQL Server, you should install only those services you need and disable those features you don’t plan to use anytime soon. Only the services and features for which there is a credible business need should be running.

Cybercriminals are a diligent lot, and the more you give them to work with, the happier they are. Given the proliferation of zero-day exploits, the less you can leave yourself exposed, the better. Why install Analysis Services or Integration Services on a production server when all you need is the database engine?

Some features in particularly can raise the stakes on your system’s vulnerability. For example, xp_cmdshell let’s you execute a command against the Windows host. If that feature is enabled on a SQL Server instance and a hacker gains access, xp_cmdshell can open doors to the OS itself. Other features too should be enabled only if needed, such as the Active Directory Helper service or VSS Writer service. You even have to be cautious with OPENROWSET and OPENDATASOURCE because they can facilitate access to external systems. In addition, you should consider disabling mail-related features that are not needed, such as the Database Mail service or the sp_send_mail system stored procedure. And don’t install sample databases or code on your production servers.

If you’re running SQL Server 2005, you can use the Surface Area Configuration (SAC) tool to enable or disable installed components. For whatever components SAC doesn’t address, you can use Configuration Manager, the sp_configure system stored procedure, or a tool such as Windows Management Instrumentation.

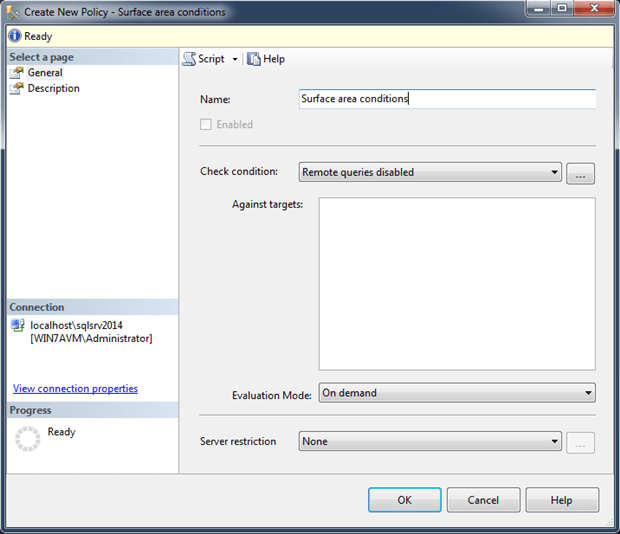

Starting with SQL Server 2008, the Policy-Based Management system replaces SAC, providing one location to address all your surface configuration needs, in addition to configuring other components. For example, the following dialog box shows a new policy named Surface area conditions, with the Remote queries disabled condition selected. The condition specifies that the OPENROWSET and OPENDATASOURCE functions should be disabled.

Whichever tools you use, the important point is to install and enable only those components you need. You can always add or enable other features later. Just don’t give hackers any more ammunition than they already have.

Failure #6: Using improper authentication

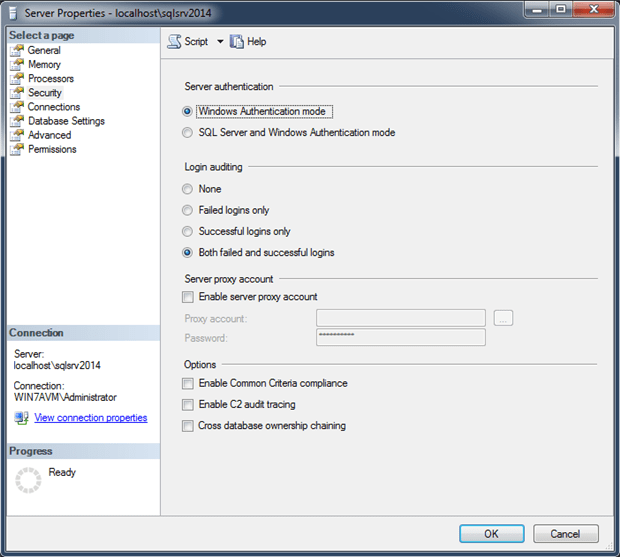

SQL Server supports two authentication modes: Windows Authentication and Mixed Mode. Windows Authentication, the default authentication type, leverages Windows local accounts and Active Directory network accounts to facilitate access to the SQL Server instance and its databases. Mixed mode authentication supports both Windows accounts and SQL Server accounts (SQL logins). You can specify the authentication mode when installing SQL Server or change it after SQL Server has been installed by updating the server properties, as shown in the following figure.

Microsoft (and just about everyone else) recommends that you use Windows Authentication whenever possible, falling back on Mixed mode only to support backward compatibility, access to SQL Server instances outside the domain, and legacy applications. Windows Authentication is more secure because it can take advantage of the Windows and Active Directory mechanisms in place to protect user credentials, such as the Kerberos protocol or Windows NT LAN Manager (NTLM). Windows Authentication also uses encrypted messages to authorize access to SQL Server, rather than passing passwords across the network. In addition, because Windows accounts are trusted, SQL Server can take advantage of Active Directory’s group and user account administrative and password management capabilities.

SQL Server accounts and passwords are stored and managed within SQL Server, with passwords being passed across the network to facilitate authentication. Not only does this make the authentication process less secure, but it also means you can’t take advantage of the password controls available to Active Directory. In addition, you must be diligent about managing the passwords within SQL Server, such as mandating strong passwords and expiration dates as well as requiring new logins to change their passwords. You must also guard against accounts being created with blank passwords, including accounts created by third-party products. Weak passwords leave your database open to brute force attacks and other nefarious activities. Blank passwords open up your databases to everyone and everything.

Also with Mixed Mode authentication, you have to be particularly wary of that powerful built-in SA account. At one time, this had actually been created with a blank password. Fortunately, that has not been the case since SQL Server 2005. Many database pros recommend that you rename the SA account. Others suggest you disable it and never use it. Still others would have you do all three, not a bad strategy, given how well known the account is and its history of abuse. At the very least, you should assign a complex password to the account and keep it disabled.

It should also be noted the SQL Server creates the SA account even if Windows Authentication is the selected mode, assigning it a randomly generated password while disabling the account. You might consider applying a complex password in this case as well, and certainly don’t enable the account.

Failure #7: Assigning the wrong service accounts

Each SQL Server service requires a Windows account to run so the service can access resources such as networks and file folders. Because orchestrating this access can be somewhat cumbersome, services are often assigned high-privileged built-in accounts such as Local System or Network Service, thus preventing those mysterious SQL Server error messages that can pop up at peculiar moments, providing information that is so obscure that even the Google gods cannot offer an answer.

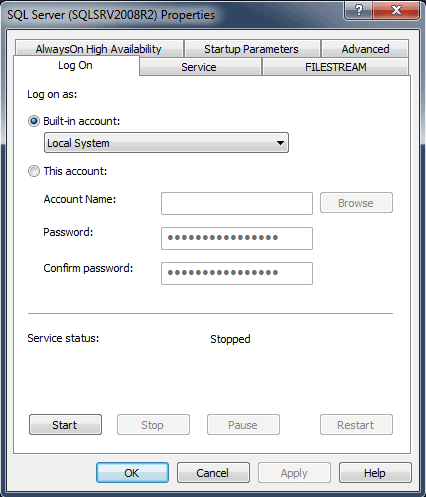

The problem with using these accounts is that, if SQL Server were to be compromised, the OS too could be compromised-to the degree that the service account has access. These built-in accounts can actually inherit elevated privileges in Active Directory, which are not required in SQL Server. For example, you can easily assign the Local System account to a SQL Server service, as shown in the following figure.

The Local System account is a very high-privileged account with extensive access to the local system. It is like granting a user administrative privileges to the server. If SQL Server were to be compromised, the would-be hacker could potentially have unrestricted access to the machine and its resources.

Despite the convenience that these built-in accounts bring, you should be following the principles of least privilege when assigning accounts to SQL Server services. The accounts should have exactly the permissions necessary for the service to do its job. You should also use a different account for each service, with the permissions set up specifically to meet the needs of that account.

For example, SQL Server Agent will likely require a different set of permissions from Integration Services. Also, avoid assigning accounts being used by other services on the same server. In addition, you must take into consideration whether the service will need access to domain resources, such as a data file on a network share. Keep in mind too that service accounts should also be governed by good password management policies, such as enforcing complex passwords and expiration dates.

Failure #8: Failing to control access to SQL Server resources

Like all aspects of SQL Server access, account access should adhere to the principles of least privilege, assigning only the permissions necessary to perform specific functions. Along with this principle, we can add another-separation of duties. This ensures against conflicts of interest and the inadvertent combination of privileges that lead to excessive access.

All too often, database administrators (or someone) will assign one or two sets of generic privileges to all users, without thought to the consequences of such action. For example, an assistant bookkeeper might need access to sales totals and quantities, but is granted access to the entire database, which includes sensitive customer information. That employee could abuse that position by modifying records or stealing data. Together, the principles of least privilege and separation of duties help to ensure that database owners maintain control over who can access SQL Server and its data and what levels of access they have.

This also goes for the applications and services accessing the database. They too should be using low-privileged accounts that grant only the access needed. In addition, be sure to remove unused default accounts created by third-party apps (as well as any other accounts not in use).

When possible, avoid granting individual accounts access to SQL Server and instead grant access to the security groups that contain these accounts, granting them the specific access they need. This helps to think in terms of separation of duties and makes it easier to manage large numbers of accounts. Also consider disabling the guest accounts in your databases so that members of the public server role can’t access those databases, unless they’ve been specifically granted the permissions.

Pay particular attention to how you’re assigning administrate permissions to users. For example, choose widely which accounts are added to the sysadmin fixed server role. Group members can do just about anything they want in SQL Server. And don’t use the SA admin account to manage SQL Server, as mentioned earlier.

You should also avoid assigning the CONTROL SERVER permission to individual accounts. It provides full administrative privileges over SQL Server and is already granted to the sysadmin group. Many database pros also recommend that you remove the Windows BUILTIN/Administrators group from the sysadmin role if it has been added. (It was added by default prior to SQL Server 2008.) Although removing the group lets you better control SQL Server access, you have to be careful doing so because it can impact your SQL Server installations.

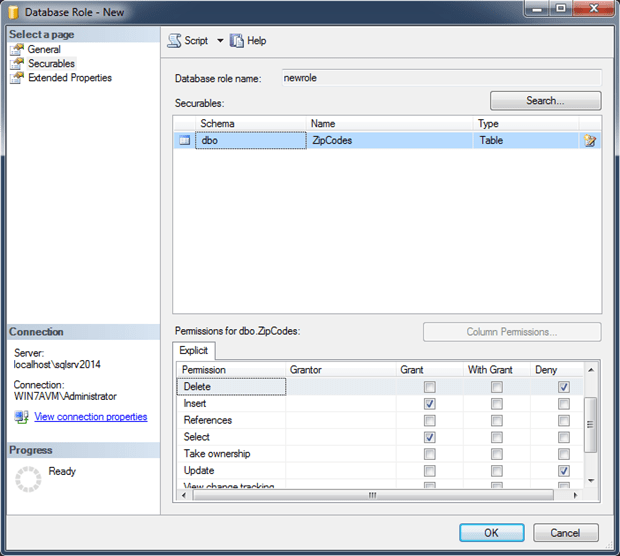

Make use of SQL Server’s hierarchical permission structure in which the principals (users and roles) can be granted or denied to the securables (the databases and its objects). For example, you can grant a principal access at the database, schema, or table level. In the following figure, the database role has been granted SELECT and INSERT privileges to the ZipCodes table, but denied UPDATE and DELETE privileges.

By taking this approach, you can ensure that users perform only specific tasks and not perform others. Again, the key is to follow the principles of least privilege and the separation of duties. This applies to administrative accounts as well as other types of accounts.

Failure #9: Failing to encrypt sensitive data

An organization less diligent about security might assume that, because SQL Server is a backend system, the databases are inherently more secure than public-facing components and be satisfied that the data is ensconced in a protective layer. But SQL Server still relies on network access and is consequently exposed enough to warrant protection at all levels. Add to this the possibility that physical components such as backup drives can be lost or stolen (most likely the latter), and you can’t help but realize that no protection should be overlooked.

Encryption is one such protection. Although it cannot prevent malicious attempts to access or intercept data, no more than it can prevent a drive from being stolen, it offers another safeguard for protecting your data, especially that super-sensitive stuff such as credit card information and social security numbers. That way, if the data has been accessed for less than ethical reasons, it is at least protected from prying eyes.

SQL Server supports the ability to encrypt data at rest and in motion. On the at-rest side, you have two options: cell-level encryption and Transparent Data Encryption (TDE). Cell-level has been around for a while and lets you encrypt individual columns. SQL Server encrypts the data before storing it and can retain the encrypted state in memory.

Introduced in SQL Server 2008, TDE encrypts the entire database, including the log files. The data is encrypted when it is written to disk and decrypted when being read from disk. The entire process is transparent to the clients and requires no special coding. TDE is generally recommended for its performance benefits and ease of implementation. If you need a more granular approach or are working with SQL Server 2005 or earlier, then go with cell-level encryption.

SQL Server can also use the Secure Sockets Layer (SSL) protocol to encrypt data transmitted over the network whether between SQL Server instances or between SQL Server and a client application. In this way, data can be protected throughout a session, making it possible to pass sensitive information over a network. Of course, SSL doesn’t protect data at rest, but when combined with TDE or cell-level encryption, data can be protected at every stage.

An important component of any encryption strategy is key management. Without going into all the gritty details of SQL Server key hierarchies, master keys, and symmetric and asymmetric keys, let’s just say you need to ensure these keys (or certificates) are fully protected. One strategy is to use symmetric keys to encrypt data and asymmetric keys to protect the symmetric keys. You should also password-protect keys, and always back up the master keys and certificates. Also back up your database to maintain copies of your symmetric and asymmetric keys, and be sure those backups are secure.

Failure #10: Following careless coding practices

After all these years, after all the press, after all the countless articles and recommendations and best practices, SQL injection still remains a critical issue for SQL Server installations. Whether because of sloppy coding within SQL Server or within the web application passing in SQL, the problem persists. Hackers are able to insert malicious code into a string value passed to the database and in the process do all sorts of damage-deleting rows, corrupting data, retrieving sensitive information. Any code that accesses SQL Server data should be vetted for potential SQL injection and fixed before going into production. Fortunately, those fixes are often quite straightforward, such as validating user input or delimiting identifiers.

But SQL injection is not the only risk. Issues can arise if the execution context within procedures, functions, or triggers is not explicitly called out. By default, the code executes as the caller, but this can lead to problems if an account with elevated privileges has been compromised. However, as of SQL Server 2005, you have more control over the execution context (using EXECUTE AS) and can specify the account to use as the execution context, thus ensuring full control over a procedure’s capabilities.

Another way coders can protect their databases is to create procedures, functions, and views to present the data without providing access to the base tables. This helps to abstract the actual schema and allows access to be controlled at the abstraction layer, restricting access altogether to the tables themselves. This approach also has the benefit of more easily accommodating changes to the application as well as to the underlying data structure. In some cases, the database team won’t have this option because of application requirements or the technologies being used, such as a data abstraction layer, but when it is possible, providing this extra layer of protection can be well worth the effort.

There are, of course, plenty of other coding practices that can result in compromised data. The key is to make certain that all code is reviewed and tested for vulnerabilities, before it is implemented in production.

Failure #11: Not verifying SQL Server implementations

Regardless of the safeguards you’ve implemented to protect your SQL Server databases and their data, security can be breached without you being aware that something is wrong. The only way you can fully protect your data is to monitor and verify your SQL Server installations. Monitoring in this sense does not refer to auditing (which we’ll discuss shortly), but to the process of assuring that everything is running as it should, the data is intact, and nothing too strange is going on.

For example, you should be monitoring CPU, memory, and disk usage, not only for performance considerations, but also to ensure you’re not seeing any anomalies that might point to such issues as illegal data downloads or malware accessing or modifying the databases and their data. Activity monitoring can also be a useful stopgap measure until you get a chance to apply the latest security patches. You should take whatever steps might help you expose abuses that would otherwise go unnoticed-until it’s too late.

You can also make use of such tools as DBCC CHECKDB, which can help uncover data corruption as a result of a cyber-attack or illegal access. Spot-checking security-related configuration settings can also be useful in discovering if a rogue user has gained access and is wreaking havoc on the permissions or other settings. In general, you want to check your databases to make sure they’re doing only what they’re supposed to be doing and that data is in the state you expect it to be.

You can also make use of such tools as SQL Server Best Practices Analyzer. The Analyzer is free and can help you gather information about security settings and identify vulnerabilities. The tool uses SQL Server recommendations and best practices to uncover potential security risks. And don’t forget the server hosting SQL Server. For example, you (or an IT administrator) can use Microsoft Security Compliance Manager to enhance the server’s security via Group Policy.

Failure #12: Failing to audit your SQL Server instances

Auditing is a big topic, too big to cover in just a few paragraphs, but it is a topic too important not to mention. One of the most beneficial steps you can take as part of a complete security strategy is to implement auditing. If you’re not monitoring user activity, you might be opening yourself up to severe consequences in the form of corrupt or compromised data.

You have several options for auditing your systems. You can use SQL Server’s built-in features (which have greatly improved since SQL Server 2008), or you can implement one of many available third-party solutions, which usually come in the form of host-based agents or network-based monitors. There are pros and cons to any solution, with performance and cost being two of the main considerations. The important point is to maintain an audit trail of all access to sensitive data. Without such an audit trail, you risk being out of compliance as well as having your data compromised.

You will, of course, have to balance the overhead that comes with auditing against the need to protect sensitive information. You’ll have to take this on a case-by-case basis. Determine the level of granularity needed to ensure a credible audit as well as which operations and data should be audited. At the very least, you should audit both successful and failed login attempts. Also keep in mind any compliance-related requirements that might govern your auditing strategy.

Load comments