DevOps, Continuous Delivery & Database Lifecycle Management

Automated Deployment

I love the human race, but I don’t believe that it is well-adapted to accurately performing routine tasks. It isn’t the best use of our time and we fail too often. We are best at the more creative and open-ended tasks, but we need time to do them, and the only way that we, in IT can do this is to automate as much of our routine tasks via scripting. For we Database Administrators, PowerShell is the key to a more satisfying work life.

In a series of two articles of which this is the first, I will show to you how I automated a review of SQL Server Best-practices for all my servers and databases, delivering the results in a Word document. This first article shows you how to export the data from SQL Server, and the second one will show you in detail how to automatically load the information into Microsoft Word. When we used to do a Best Practices review by hand, this kind process used to take four hours. Now we can do it in a few minutes. That ´s an awesome time-saving.

The Start Point

“The White Rabbit put on his spectacles. ‘Where shall I begin, please your Majesty?’ he asked.

‘Begin at the beginning,’ the King said gravely, ‘and go on till you come to the end: then stop.'”

Before we attempt to automate or script any process, we must be very clear about the process. We must know what we want to achieve in detail. I know it is obvious, but I speak from sad experience in trying to help people who, it turns out, don’t clearly know what they want from the automated process.

Actually this article is really about showing you how to automate just about everything you want; not just a SQL Server Best practices review. However, it makes a good example script of fairly defensive scripting, so let’s take this first step by creating a list of the best practices and the thresholds or suggestions that might alert you to take a closer look.

For instance, these might be like this:

- Check SQL Server Edition and Service Pack

- The Service pack should to be the last one published by Microsoft

- Check the SQL Server Server Configurations

- Default backup compression should be enabled (if applicable to the SQL Server Version)

- Max Memory should be set up to a fixed value and the OS needs to have at least 3GB free

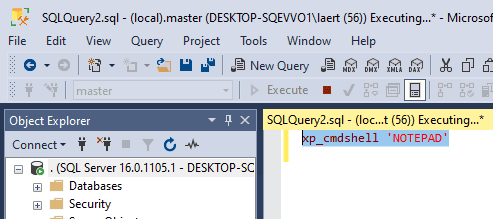

- Check if XP_CMDSHELL is enable

- Suggested value = NO

- Check if OLE Automation Procedures is enabled

- Suggested value = NO

- Check Optimize for ad–hoc workload turned ON

- Suggested value = Yes

- Database properties

- Database owner is not SA

- Auto close enabled

- Auto Shrink enabled

- CHECKSUM page verification not in use

-

…and so on…

Of course we all know that there are no universal ‘best practices’ in SQL Server, because everything is qualified by the classic phrase “it depends”: So you may want to mix the best practices that are appropriate to your environment with the thresholds from the experts whose advice we trust, along with our own experience.

The original script is too long to publish within the article but it is here. You can also download the scripts attached to this article, and so I’ll only quote the relevant bits to illustrate the points I’m making.

Operational Considerations

I wanted to make this script something that anyone can use. We are talking about a new technology and SQL Server DBAs are culturally suspicious of command line scripts that are not T-SQL, so one of my big challenge in devising this script was this:

- The user shouldn’t need to know any PowerShell, so it must be possible to run the script from one click that does everything.

- It needs to run in all the possible systems that I could think on. The final script runs in any version of Windows with at least PowerShell 2.0 and SQL Server 2005 and higher.

- The machine that is running the script doesn’t need to have Microsoft Word installed, so we need to use two scripts: One to extract the data running in the SQL Server machine and other one to run in the desktop machine to create the word document. Because PowerShell deals natively with csv files, all the data is extracted and saved in csv format.

- The script cannot be intrusive on the server, and must not create any kind of object in the SQL Server Instance

Coding Considerations

As I said in the previous section, the script needs to be so simple to use that one click will perform everything. This means that one of the most important sections is the one that checks the prerequisites for successfully running the script. We need to perform a series of checks up-front to ensure that we can keep running the script with minimal problems. For instance:

- The Script needs to collect information from several SQL Server instances, so of course it must have the right access to be able to collect data from all the instances.

- The Scripts deal with WMI, so it needs to be running with elevated permissions

- The Script will assume that all instances can be accessed using Windows authentication.

- The Script collects special data from SQL Server, so the login must be assigned the SysAdmin role in SQL Server

- SMO, at least, needs to be installed. Ok. This point is a bit contradictory. I could do everything without requiring SMO, but it would be more elaborate because of the requirement that the script needs to work by itself and in all the required platforms and versions. We reckoned that DBAs would be running SSMS, and so would already have SMO installed in the machine that is destined to run the script

- Some early versions of SQL Server don’t have the SMO objects that the newer versions have, so I need to test the version and use the metadata to collect information. At this point I need to have a consistent and robust method to invoke T-SQL metadata commands. SQLPS (the SQL Server PowerShell Host) has invoke-SQCMD, but SQL Server 2005, 2008 and 2008 R2 don’t use SQLPS as a module; they are snapins instead, so my code I would need to test it and load in the correct way accordingly. I don’t want that, so I am, instead, using invoke-SQLCMD2 from Chad Miller.

- I am using some functions from SQLPSX to connect in the SQL Server.

I needed an effective way to display a verbose step-by-step report on the screen and a log file so I created a function to do that. You can also pass the error treatment as a parameter to this function. For any error, I can choose whether it will stop the script or not.

And the most important:

KISS – L – Keep It Simple Stupid Laerte.

Coding Algorithm

The entire process is, and needs to be, simple (KISS – L). For each check you just need to collect the data, format the output object, and handle any errors. You will need to use a PSOBJECT (as this script needs to run in PowerShell 2.0 and higher, the new-object cmdlet will be used). For instance:

Some activities are relatively straightforward. Checking Disk Information is a prime example:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 |

Try { Get-WmiObject -ComputerName $MachineName -Class Win32_Volume | Where { $_.DriveType -eq 3 -or $_.DriveType -eq 4 } | Select @{ N = 'Drive Letter'; E = { $_.DriveLetter } }, @{ N = 'Label'; E = { $_.Label } }, @{ N = 'File System'; E = { $_.FileSystem } }, @{ N = 'File Allocation Unit Size (KB)'; E = { $_.BlockSize/1024 } }, @{ N = 'Compressed'; E = { $_.Compressed } } | Sort DriveLetter | Export-Csv -force -NoClobber -NoTypeInformation -Path "$($FileName)Disk.csv" } catch { Write-Log -Error "Could not generate the Disk.csv file. : Error $($_.Exception.Message) " ` -path $PathWriteLog -ErrorLogFileName $ErrorLogfileName } |

Which would give you a CSV file with something like this in it

|

1 2 3 |

"Drive Letter","Label","File System","File Allocation Unit Size (KB)","Compressed" "C:",,"NTFS","4","False" "D:",,"NTFS","4","False" |

Sometimes, however, you need to duck and dive a bit to collect the data. The data needs to be collected for a number of checks and only then, when all checks are complete, exported as CSV. As example, we need to see when the last DBCC consistency check was run: We will need to use an array to store the psobject for each of the databases that we discovered had not had a consistency check run within the previous X days. Only after all the databases have been checked do we export the array:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 |

Try { $ArraydbccLastKnownGood = @() $Server.Databases | Where-Object { (!($_.IsSystemObject)) -and $_.isaccessible } | ForEach-Object { $dbccLastKnownGood = [datetime](Invoke-Sqlcmd2 -ServerInstance $FullInstanceName ` -Database $_.name -Query "DBCC dbInfo() with TableResults" -Verbose:$false | Where-Object { $_.field -eq 'dbi_dbccLastKnownGood' } | Sort-Object -Property Value -Descending | Select -First 1 -ExpandProperty value ) $daysdbccLastKnownGood = ($dbccLastKnownGood - (Get-Date)).days if ($daysdbccLastKnownGood -lt 7) { $ArraydbccLastKnownGood += New-Object PSOBJECT -Property @{ 'Database Name' = $_.name 'Last DBCC Ran' = $dbccLastKnownGood 'Days With no DBCC' = $daysdbccLastKnownGood } } } if ($ArraydbccLastKnownGood) { $ArraydbccLastKnownGood | Select @{ N = 'Database Name'; E = { $_."Database Name" } }, @{ N = 'Last DBCC Ran'; E = { $_."Last DBCC Ran" } }, @{ N = 'Days With no DBCC'; E = { $_."Days With no DBCC" } } | Export-Csv -force -NoClobber -NoTypeInformation -Path "$($FileName)LastDBCC.csv" } } catch { Write-Log -Error "Could not generate the LastDBCC.csv file. : Error $($_.Exception.Message) " ` -path $PathWriteLog -ErrorLogFileName $ErrorLogfileName } |

Which might produce

|

1 2 3 4 5 |

"Database Name","Last DBCC Ran","Days With no DBCC" "Yoda","01/10/2016 07:08:00","11" "Chewbacca","02/10/2016 07:20:00","10 "Padme","03/10/2016 07:23:00","9" "Ahsoka","10/10/2016 07:30:00","2" |

Or, in another example where we are accumulating a number of separate checks into one CSV file, here we check the SQL Agent configurations :

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 |

Try { Write-Log -Message "Checking SQL Agent ...." ` -path $PathWriteLog -ErrorLogFileName $ErrorLogfileName -verbose $ArraySQLAgent += New-Object PSobject -Property @{ 'Check' = 'Maximum History of Rows' 'Value' = if ($server.JobServer.MaximumHistoryRows -lt 1000) { "$($server.JobServer.MaximumHistoryRows);Red" } else { $server.JobServer.MaximumHistoryRows } 'Description' = 'SQL Job Agent history should be set to default of 1000 records or higher' } $ArraySQLAgent += New-Object PSobject -Property @{ 'Check' = 'Maximum Job History of Rows' 'Value' = if ($server.JobServer.MaximumJobHistoryRows -lt 100) { "$($server.JobServer.MaximumJobHistoryRows);Red" } else { $server.JobServer.MaximumJobHistoryRows } 'Description' = 'SQL Job Agent history should be set to default of 100 records or higher' } $ArraySQLAgent += New-Object PSobject -Property @{ 'Check' = 'SQL Agent service Auto Start' 'Value' = if (!($server.JobServer.SqlAgentAutoStart)) { "$($server.JobServer.SqlAgentAutoStart);Red" } else { $server.JobServer.SqlAgentAutoStart } 'Description' = 'SQL Job Agent service should be set to automatic start' } $ArraySQLAgent += New-Object PSobject -Property @{ 'Check' = 'SQL Agent Restart' 'Value' = if (!($Server.JobServer.SqlAgentRestart)) { "$($Server.JobServer.SqlAgentRestart);Red" } else { $Server.JobServer.SqlAgentRestart } 'Description' = 'SQL Job Agent service should be set to automatic re-start' } $ArraySQLAgent | Select Check, Value, Description | Export-Csv -force -NoClobber -NoTypeInformation -Path "$($FileName)SQLAgent.csv" } catch { Write-Log -Error "Could not generate the SQL Agent.csv file : Error $($_.Exception.Message) "` -path $PathWriteLog -ErrorLogFileName $ErrorLogfileName } |

Which might produce a CSV file with..

|

1 2 3 4 5 |

"Check","Value","Description" "Maximum History of Rows","1000","SQL Job Agent history should be set to default of 1000 records or higher" "Maximum Job History of Rows","100","SQL Job Agent history should be set to default of 100 records or higher" "SQL Agent service Auto Start","True","SQL Job Agent service should be set to automatic start" "SQL Agent Restart","True","SQL Job Agent service should be set to automatic re-start" |

Ok Laerte, but where we are doing checks where there are thresholds, we would like to add colors (red, green and yellow) into the Microsoft Word document created by the CSV files (the details of this will be in the next article)

Oh boy. I need to be honest. In this case I am going to break one of my cherished maxims because we will need to use a ultra-complex algorithm. It was not easy to get this solution. I will try to explain. Lets say we are doing a VLF check and we would like to highlight in RED any values higher than 500, use yellow for VLF counts higher than 200 and have any VLFs higher than 50 highlit in green. Brace yourselves for my forthcoming Star Trek algorithm!

Well, it is simple. Just create the condition and, after the data is collected, add the semi-colon and the color. You will see in the next article that the is a reusable function to create the Word Tables which splits the data by semi-column and checks what comes after the semicolon delimiter. If the word ‘RED’ comes after the semi-column, it changes the Word background to red and the same operation to yellow or green as appropriate. KISS-L

Lets check the code that generates the CSV file with the colour annotations :

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 |

Try { Write-Log -Message "Checking VLF's..."` -path $PathWriteLog -ErrorLogFileName $ErrorLogfileName -verbose $Server.Databases | Where-Object { $_.isaccessible } | ForEach-Object { $DatabaseName = $_.name $ArrayVLF += (invoke-Sqlcmd2 -Database $DatabaseName ` -Query "DBCC LOGINFO" -ServerInstance $FullInstanceName | Measure-Object | Select-Object @{ N = 'DBName'; E = { $DatabaseName } }, @{ N = 'VLFCount'; E = { $_.count } }) } $ArrayVLF | Sort-Object VLFCount -Descending | Select-Object @{ N = 'Database Name'; E = { $_.dbname } }, @{ N = 'VLF Count'; E = { if ($($_.VLFCount) -gt 500) { "$($_.VLFCount);Red" } elseif ($($_.VLFCount) -gt 200 -and $($_.VLFCount) -lt 500) { "$($_.VLFCount);Yellow" } elseif ($($_.VLFCount) -gt 50 -and $($_.VLFCount) -lt 200) { "$($_.VLFCount);Green" } Else { $($_.VLFCount) } } } | Export-Csv -force -NoClobber -NoTypeInformation -Path "$($FileName)VLFs.csv" } catch { Write-Log -Error "Could not generate the VLFs.csv file. : Error $($_.Exception.Message) "` -path $PathWriteLog -ErrorLogFileName $ErrorLogfileName } |

The output is :

|

1 2 3 4 5 6 7 8 9 10 |

"Database Name,""VLF Count"""; "msdb,""700";"Red""" "SSISDB,""400";"Yellow""" "DummyDB,""400";"Green""" "master,""7"""; "cleartrace,""6"""; "ReportServerTempDB,""4"""; "AdventureWorksDW2014,""4"""; "model,""4"""; "tempdb,""3"""; |

Getting Started

To get started:

- Download the BestPracticesReview.ps1 and the BestPracticesReview.bat from the head of the article

- Copy these two files to the same directory

- Pay attention to the execution policy. Perhaps you need to change , using :

Set-ExecutionPolicy RemoteSigned

(more info on Using the Set-ExecutionPolicy Cmdlet) - Create a txt file in the same directory called I

nstanceNames.txtand fill with the instance names :

InstanceName

Machine\InstanceName

etc.. etc - Click on the

BestPracticesReview.bat. It will start the script as administrator. If you run it in the PowerShell ISE, make sure to run the ISE as administrator - The script will create a directory called output and log. You can find the csv in the output and the log file from your running in the log directory.

* you need to be a member of the SysAdmin role in all the Instances. If it finds an instance where you don’t have the rights, it will be skipped

Any questions please ask me – laertesqldba@outlook.com

Conclusion

In my next article, I’ll explain how to put all this information into a Microsoft Word document. From then on, it is all up to you to adapt the system to your unique requirements. Enumerate all the checks that you need to do as a routine all the servers and databases you manage, and start automating them. You’ll soon appreciate how easy it is to claw back all that time you’ve lost doing repetitive tasks and reduce the chance of getting things wrong. Remember that, if it is PowerCool, it is PowerShell!

The source to the bare-bones version of the Best Practice Check is here as HTML and can also be downloaded from a link at the head of the article

DevOps, Continuous Delivery & Database Lifecycle Management

Go to the Simple Talk library to find more articles, or visit www.red-gate.com/solutions for more information on the benefits of extending DevOps practices to SQL Server databases.

Load comments