You Just Build It, Don’t You? 12 Common Database Build Blockers

Database deployments, like the sheep of exasperated hill-farmers, often find strange and unexpected ways to self-destruct. Phil Factor describes the most common things that can go wrong, and how a reliable automated database build process can prevent messy accidents.

- The build script doesn’t compile because of script errors

- There are mutual, or circular, database dependencies

- There are cross database or linked server references

- The build script assumes a case-insensitive database whereas the target is case-sensitive

- Migration script applied to wrong target

- Migration script causes unforeseen outcome

- A migration script is accidentally re-run

- Database drift

- What you tested is not the same as what you released

- A build fails because it requires special preconditions to run successfully

- The reference data does not exist

- A database contains a vulnerability that prevents it being deployed

You just build it, don’t you?

Databases are quite unlike applications, and this is true of the way that they are built as well. You don’t compile them as you would a C# or Java application, you create them via SQL Scripts and then create within them the objects they should contain, using SQL Scripts. You can then alter them and add to them as you wish. This process can be done by several developers at once, working on the same schema. Any changes are checked to make sure that they are effective, and then saved in source control. If all changes made to the database are stored in source control, so that databases can be reliably ‘versioned’, then we can generate and test scripts that will change a database from one version to another. This means we can use the source to build or update as many copies of the database as required.

With a small database-driven application, perhaps one with no commercial processes or personal data, it is possible to work informally; one can even develop code on a backup of the database of a production application, because it will ultimately be saved in source control.

As a database grows both in size and complexity, however, the time soon comes that one must get used to the discipline of separating development, test, staging and production databases. When developing a new feature, we must try things out and assess different strategies and algorithms. We need a development server to do that. We need test builds with different types of data sets as part of integration and performance testing. We will also need to test the script that upgrades the database to the new version (the ‘migration script’) and test the effectiveness of feature switches and other devices that reduce the risks of a failed deployment. This requires a Staging server.

It is at this stage of the lifecycle of a database that you will either adopt a reliable automated database build process, or else you will be forced to become very resistant to doing any kind of rapid delivery of changes to the database. Point ‘n’ Click soon becomes very tedious.

For any regular build process, there are certain things you need to check for before it is going to work. This isn’t the fault of the build process; no development tool can work unconditional magic. We’ll describe some of the things that can break the build process.

Things that go wrong or cause difficulties

Several things can go wrong, even when managing a simple database release that adds new features to an existing database. From my own observations and experience, database deployments somehow choose original and bizarre ways to self-destruct, like some of the sheep of exasperated hill-farmers. However, there are a few common themes.

1. The build script doesn’t compile because of script errors

Sometimes the script contains errors. It is surprising when it happens because every object should be unit-tested before it is committed. In fact, it is unavoidable at times and because build processes are often lengthy, perhaps running overnight unattended with large databases, some quick pre-build checks, followed by a fix, can save a wasted day. To ensure that you’re not tripped up on the starting line like this, you can check during development, before the build and during the release process.

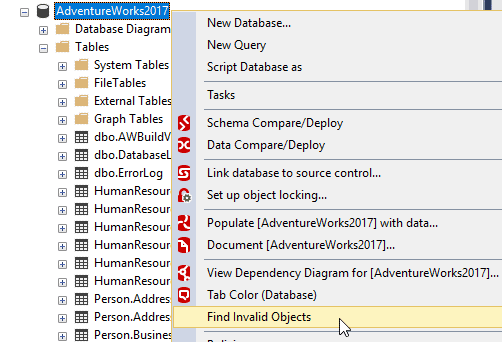

During development, SQL Prompt will check the SQL code you’re working on in SSMS or VS against a set of rules that check the code for code smells and code errors. Also, you can use the same tool to find any invalid objects (those that can’t be compiled, or will compile but can’t be executed).

Before you build the database, you can then run automated static code analysis checks on all the code in source control, using the command-line SQL Code Guard. This will find many of the syntax errors that will stop the build, but not all of them. As a final pre-build test, I use SQL Change Automation (or SQL Compare) to run a comparison of the source code against an empty directory. This, with only rare exceptions, will find any remaining build breakers, and it is then possible to fix the errors before the build is run.

Finally, SQL Change Automation (SCA) will produce a report of all code smells, including minor concerns such as deprecated code, during the release process.

2. There are mutual, or circular, database dependencies

A database project may consist of a group of connected databases on the same instance, and one or more of these databases may contain objects, such as views, which make mutual, or circular, cross-database references to objects in the other databases.

This is sometimes a design choice, instead of using schemas to partition a database into logical security spaces, or components or namespaces. The cross-database references are checked on build and will cause an error if the referenced objects don’t exist in the other database. When the references are mutual, there is no natural order of creating the databases that doesn’t break the build.

Before the build of any of the databases with cross-database references, create a script that checks that these external references exist and, if not, creates a stub that produces a result with the same metadata as the real object, but with no data. For SQL Change Automation builds, or for SQL Compare, each database will need a pre-deployment script that creates the necessary stubs.
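Before writing the stubs, it helps to know which databases are caught in a mutual reference. The following is a minimal Python sketch, not something the tools above provide: it treats the cross-database references as a graph and reports either a workable build order or the cycle that prevents one. The database names and the `references` mapping are hypothetical; in practice they would have to be extracted from the source scripts or the catalog.

```python
from graphlib import TopologicalSorter, CycleError

def build_order(references):
    """Return (order, None) if the databases can be built in sequence,
    or (None, cycle) if mutual references make that impossible.

    `references` maps each database to the set of databases it references.
    """
    try:
        # static_order() yields each database after those it depends on.
        return list(TopologicalSorter(references).static_order()), None
    except CycleError as err:
        # The second element of args is the list of nodes forming the cycle.
        return None, err.args[1]

# Hypothetical project: Sales and Billing reference each other through views.
references = {
    "Sales": {"Billing"},
    "Billing": {"Sales"},
    "Reporting": {"Sales"},
}

order, cycle = build_order(references)
if cycle:
    print("No valid build order; stubs are needed to break:", " -> ".join(cycle))
else:
    print("Build order:", order)
```

Any database that appears in a reported cycle is a candidate for the pre-deployment stub script described above.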

3. There are cross database or linked server references

When in a production server environment, a database might reference one or more other databases, either on the same instance or, more likely, on a different one. Often when building your database, the referenced database, perhaps a payroll database, is not part of the development project, so the referenced objects won’t exist, and the build will break with an error. These references are usually called ‘Linked Server references’ if they reference objects in a database on another server, possibly even a different type of database, or ‘Cross Database references’ if they are made to another database on the same instance. For the latter, we can take advantage of deferred name resolution for objects such as stored procedures, but when a routine accesses a remote object, there is no deferred name resolution.

When tackling builds where databases make cross-server references, you will either need to establish copies of all dependent databases, on the build server, or you can use synonyms to represent the remote objects, and local ‘stub’ objects to overcome the problems caused by ‘missing references’ when building the individual objects. You create a ‘mock’ or ‘stub’ for any object that is the target of a remote reference. You then provide a synonym for the foreign reference and substitute this for the actual reference. Assign the synonym to the stub you have created. This reference will now no longer create an error. Once in the appropriate environment, change the synonym to point to the correct references in the other database.

4. The build script assumes a case-insensitive database whereas the target is case-sensitive

A database may be built and work fine when its collation is case-insensitive, but if the same code is used in a case-sensitive database, it can break the build. A reference that was valid when you wrote the code in a case-insensitive database may not compile in a case-sensitive collation, if you’ve not kept to the same case. The opposite problem arises if you develop a database with a case-sensitive collation: the name of any object must be unique within a schema, and a name that is unique in a database with a case-sensitive collation may then be flagged as a duplicate in a case-insensitive database.

Be consistent with collations. Decide as early as possible which database collation is appropriate and stick to it with all development instances and all targets of a build.
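Both kinds of case problem can be looked for before the build. The sketch below is only an illustration in Python, with hypothetical object names standing in for those parsed from source control. It uses Python’s `casefold()` as a rough approximation of a case-insensitive comparison, which will not match the rules of every SQL Server collation.

```python
from collections import defaultdict

def case_collisions(object_names):
    """Group names that differ only by case: distinct objects in a
    case-sensitive database, duplicates in a case-insensitive one."""
    groups = defaultdict(set)
    for name in object_names:
        groups[name.casefold()].add(name)
    return sorted(sorted(g) for g in groups.values() if len(g) > 1)

def case_mismatched_references(defined, referenced):
    """Return (reference, definition) pairs that match only when case is
    ignored: fine in a case-insensitive database, broken in a case-sensitive one."""
    by_folded = {name.casefold(): name for name in defined}
    return sorted(
        (ref, by_folded[ref.casefold()])
        for ref in referenced
        if ref not in defined and ref.casefold() in by_folded
    )

# Hypothetical names for illustration only.
defined = {"dbo.Customer", "dbo.customer", "dbo.OrderLine"}
referenced = {"dbo.orderline", "dbo.Customer"}

print(case_collisions(defined))
print(case_mismatched_references(defined, referenced))
```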

5. Migration script applied to wrong target

A migration script is any script that is intended to change a database from one version to another, while preserving existing data. They are usually hand-cut by the developer but can also be auto-generated by tools such as SQL Compare and SQL Change Automation. A migration script that has been auto-generated will only change a target database from one specific version of a database to another version. If you try to apply such a migration script to a target database that is at a different version, or has drifted (see 8), then it is likely to fail or, worse, could cause unpredictable and unfortunate changes.

SQL Change Automation is unique in that it deals with this under the covers, and avoids the problem. More generally, scripts that are version-specific should only run with that version of the target database, so databases must have an easily-determined version. There must be a way of checking that a script directory, build package or target database is really at the version you believe it to be. SQL Change Automation, like most deployment tools, provides version numbers as extended properties of the database, which you can view in SSMS and can be read in scripts, but there are no common conventions for naming the property. See Reading, Writing, and Creating SQL Server Extended Properties.
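The principle of a version-specific script that refuses to run against the wrong target can be sketched in a few lines. This Python example uses an in-memory SQLite database purely as a stand-in for SQL Server, and a `database_properties` table as a stand-in for extended properties. The table, the `Database_Version` property name and the function names are all assumptions made for illustration.

```python
import sqlite3

class WrongVersionError(RuntimeError):
    """Raised when the target is not at the version the script expects."""

def read_version(conn):
    row = conn.execute(
        "SELECT value FROM database_properties WHERE name = 'Database_Version'"
    ).fetchone()
    return row[0] if row else None

def apply_migration(conn, from_version, to_version, statements):
    """Run `statements` only if the target is at `from_version`."""
    current = read_version(conn)
    if current != from_version:
        raise WrongVersionError(
            f"script expects {from_version}, target is at {current}"
        )
    conn.execute("BEGIN")
    try:
        for statement in statements:
            conn.execute(statement)
        # Stamp the new version in the same transaction as the changes.
        conn.execute(
            "UPDATE database_properties SET value = ? "
            "WHERE name = 'Database_Version'",
            (to_version,),
        )
        conn.execute("COMMIT")
    except Exception:
        conn.execute("ROLLBACK")
        raise

# isolation_level=None: transactions are controlled explicitly above.
conn = sqlite3.connect(":memory:", isolation_level=None)
conn.execute("CREATE TABLE database_properties (name TEXT PRIMARY KEY, value TEXT)")
conn.execute("INSERT INTO database_properties VALUES ('Database_Version', '1.0.0')")
conn.execute("CREATE TABLE customer (id INTEGER PRIMARY KEY)")

apply_migration(conn, "1.0.0", "1.1.0",
                ["ALTER TABLE customer ADD COLUMN email TEXT"])
print("Target is now at", read_version(conn))
```

Applying the same script a second time raises `WrongVersionError` before any statement runs, because the target no longer reports the version the script was written for.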

6. Migration script causes unforeseen outcome

A migration script may succeed in running but can still fail to bring the target to the correct version due to an unforeseen bug.

You must be able to check whether the migration created a target at the same level as the source. It pays to check warnings, and to do a post-deployment check. SQL Change Automation does this automatically unless you stop it doing so. With SQL Compare, you need to run a second comparison to check. Usually you can correct any differences easily, because they are usually minor, but it pays to have a rollback procedure where possible.

7. A migration script is accidentally re-run

If you rerun a migration script, you’ll usually get an error; and if the script runs within a transaction it will roll back. However, not all scripts can be run within a single transaction, and in that case the effects of re-running it accidentally can be subtle, and in some circumstances result in data being changed or destroyed.

To deal with this, either we check the metadata at the start of every change and terminate the process if there is evidence that the script has already been run, or we make the script so accommodating that it checks every change before making it. The correct term for this sort of script is ‘idempotent’. The first alternative just checks one change, such as the presence of an index that is introduced by the script, and aborts if it is already there. The accommodating script will check the metadata on every object creation or alteration to make sure that the object is in the state that we expect, before executing the ALTER script that makes the change. It will rescue a botched migration that leaves the database in an intermediate state.

You’ll be familiar with the principle of guard clauses: When SSMS generates a script for object creation, you have the option of adding a guard clause to check for object existence. It verifies whether an object with the given name exists before dropping or altering, or that an object with the given name does not exist before creating. However, although this allows you to recreate a database from source, this is not enough for a migration script because it destroys any data. A ‘safe’ migration script to upgrade a database from v1 to v2 (for example) must create v2 versions of any objects that don’t exist, ALTER the v1 version of those that do exist, contain guard clauses so that it won’t cause any harm if accidentally rerun, and will usually fix a migration that failed.

Migration scripts should be able to detect quickly if they have been run on this target before. If so, they should either abort, so that it is subsequently possible to determine how or why it happened, or else alter each database object only if necessary.
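The ‘accommodating’ style of script can be illustrated with a small sketch. It is written in Python against an in-memory SQLite database as a stand-in for SQL Server, so the metadata queries (`PRAGMA table_info`, `sqlite_master`) are SQLite’s rather than the system views you would use in T-SQL. The table, column and index names are hypothetical.

```python
import sqlite3

def column_exists(conn, table, column):
    # PRAGMA table_info returns one row per column; index 1 is its name.
    # `table` is a trusted constant here, never user input.
    return any(row[1] == column
               for row in conn.execute(f"PRAGMA table_info({table})"))

def index_exists(conn, index):
    row = conn.execute(
        "SELECT 1 FROM sqlite_master WHERE type = 'index' AND name = ?",
        (index,),
    ).fetchone()
    return row is not None

def migrate_v1_to_v2(conn):
    """Guard every change, so a rerun or a half-finished run does no harm."""
    applied = []
    if not column_exists(conn, "customer", "email"):
        conn.execute("ALTER TABLE customer ADD COLUMN email TEXT")
        applied.append("customer.email")
    if not index_exists(conn, "ix_customer_email"):
        conn.execute("CREATE INDEX ix_customer_email ON customer (email)")
        applied.append("ix_customer_email")
    conn.commit()
    return applied

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customer (id INTEGER PRIMARY KEY, name TEXT)")
conn.execute("INSERT INTO customer (name) VALUES ('Ada')")

first = migrate_v1_to_v2(conn)   # makes both changes
second = migrate_v1_to_v2(conn)  # finds nothing left to do
print(first, second)
```

Because each guard inspects the current state rather than assuming it, the same function would also complete a run that had stopped after adding the column but before creating the index.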

8. Database drift

A thoroughly tested migration script may be run on a target database to bring it to the required version, yet it will fail if the target has, in the meantime, been altered. Even if it succeeds, it will be likely to destroy the “out of process” changes, which could be a vital alteration done in an emergency to a production system by a gifted developer who dislikes ‘paperwork’, and so has not saved the alteration to source control.

It should be possible to check that the target database is at the version for which the migration script was designed. If you opt to ignore small changes, it should be possible to script out any minor changes before they are deleted, for later examination. When SQL Change Automation creates the release artifact, it imports into it the schema of your intended target database so it can check for any subsequent modifications in the target, ‘database drift’, which would invalidate the synchronization script.
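A crude form of drift check is to record a fingerprint of the target’s schema when the release is prepared and compare it again just before deployment. The Python sketch below shows the idea against an in-memory SQLite database. It is an assumption-laden illustration, not a description of how SQL Change Automation implements its check, and the `schema_fingerprint` function is hypothetical.

```python
import hashlib
import sqlite3

def schema_fingerprint(conn):
    """Hash the object definitions held in the catalog (sqlite_master here)."""
    rows = conn.execute(
        "SELECT type, name, sql FROM sqlite_master "
        "WHERE substr(name, 1, 7) <> 'sqlite_' "
        "ORDER BY type, name"
    ).fetchall()
    return hashlib.sha256(repr(rows).encode("utf-8")).hexdigest()

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customer (id INTEGER PRIMARY KEY, name TEXT)")

# Recorded when the release artifact is created.
expected = schema_fingerprint(conn)

# An 'out of process' hotfix applied directly to the target.
conn.execute("CREATE INDEX ix_customer_name ON customer (name)")

drifted = schema_fingerprint(conn) != expected
if drifted:
    print("Target has changed since the release was prepared; aborting.")
```

A hash only tells you that something changed. To script out the changes for later examination, you still need a comparison that works object by object.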

9. What you tested is not the same as what you released

A database release has been finalized, sent for testing and has passed. This may take a long time; a month is the longest in my personal experience! However, in the meantime, a developer somehow sneaks a code change into the release, in source control. When the database is then transferred to staging, the code change is incorporated into the migration script because the database in a deployment pipeline must always represent Source Control as the ‘source of truth’. The untested change can introduce a bug.

Once the deployment has started, the source code for the release must be immutable (unchanging). SQL Change Automation ensures this if you start the process by creating a project object and then using that to create a build artifact.

10. A build fails because it requires special preconditions to run successfully

A database build might rely on a feature of SQL Server that isn’t automatically installed, or it might require special database settings. It may require subsequent work after the database itself is built, for example when the database uses full-text search or needs the SQL Agent for scheduled database tasks.

The deployment process must allow for the use of pre-deployment and post-deployment scripts, stored in source control, to be considered part of the release and subject to the same rules as the code used for the database build process, such as being ‘immutable’ once the release has started. These should be able to check for preconditions and ensure that server components are in place.

11. The reference data does not exist

A database may need very specific reference data to be loaded and checked before it can work properly or be tested. Otherwise, tests will give ‘false failures’. A typical example is a table of exchange-rate data for an import/export trading company.

If a database requires reference data to function properly, it should be considered part of the deployment and saved in source control.

12. A database contains a vulnerability that prevents it being deployed

A database might pass every functional test but still fail to deploy successfully if, for example, it makes use of a deprecated feature that isn’t allowed within the company’s support contract, or it makes use of a string parameter directly in ‘dynamic SQL’ on the server.

A serious code issue, if not spotted early in the deployment process, can waste a great deal of time and cause team issues.

Code quality checks should be performed as early on as possible in the process, to prevent wasted work by team members. Ideally, checks should be made as the code is created and unit-tested, and then rerun as part of the release process. If code is in source control, then a code review process can and should be run to check this.
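The ‘string parameter in dynamic SQL’ problem is easy to demonstrate. The sketch below uses Python and an in-memory SQLite database rather than T-SQL, with hypothetical table and function names, but the principle carries over: in SQL Server the usual remedy is to pass values as parameters to `sp_executesql` rather than concatenating them into the statement.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customer (id INTEGER PRIMARY KEY, name TEXT)")
conn.executemany("INSERT INTO customer (name) VALUES (?)",
                 [("Ada",), ("Grace",)])

def find_unsafe(conn, name):
    # Vulnerable: the parameter becomes part of the SQL text itself.
    return conn.execute(
        "SELECT name FROM customer WHERE name = '" + name + "'"
    ).fetchall()

def find_safe(conn, name):
    # Safer: the value is bound as a parameter and never parsed as SQL.
    return conn.execute(
        "SELECT name FROM customer WHERE name = ?", (name,)
    ).fetchall()

malicious = "x' OR '1'='1"
leaked = find_unsafe(conn, malicious)   # the predicate is now always true
blocked = find_safe(conn, malicious)    # no customer has this literal name
print(len(leaked), len(blocked))
```

A static code analysis rule that flags string concatenation into executed SQL will catch the first pattern long before a penetration test does.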

Scripting the deployment process

To go back to the ‘dead sheep’ analogy, the team leader of any database development needs to be on the lookout for impossible things happening. For example, I once saw a database revert suddenly and inexplicably to the state it was in three months before. It turned out that a database developer was in the habit of working from an entire build script when developing, and then highlighting and running just the routine he was working on. His attention wandered and the entire script was run. His build script for the database was three months out of date, except the section he was working on.

For all the reasons I’ve outlined, and many more, it is best to promote a culture of watchfulness and suspicion about the entire development process. This makes scripting a much better approach than point ‘n’ click, for deployments. Few developers are known to their chums for being methodical and meticulous, but scripts can make them so.

As well as having a deployment process that can be repeated without any deviations or omissions, this allows you to run otherwise-boring routine checks and take tedious precautions. What do you check for? Scripts must test that everything is as you expect it to be and abort gracefully otherwise. A database object in the target that is missing, or different from what you expect, tells you that any attempt to run the script will end badly. It could be an attempt to re-run the same migration script; it could be that the target database is different to the one for which the migration script was designed. Either way, it must be checked out. The same goes for anything else unexpected. You stop the process if anything seems wrong.

The problem with a process that is entirely scripted is that it can entirely miss the obvious. It must be possible to insert places in the workflow where an intelligent human can check for the unexpected. This may seem to the inexperienced to be a silly precaution, but it has helped to avoid catastrophes. Scripted checks often forget the wildest events, such as the accidental deletion of all the tables of a production database because the target was automatically synchronized with an entirely different source and nobody eye-balled the migration script. An imminent disaster that is immediately apparent to a human can seem fine to a script.

Supporting different build methodologies

From the start of the development of any database, the end-product is the SQL source code that is required to build the new version of the database. We refer to this as the ‘schema’ definition written in Data-definition language (DDL), but it is just SQL. There are no hard rules about what can legitimately be in a build script. We talk about the difference between the ‘data’ and the ‘schema’, or metadata, but this isn’t as clear-cut as you might think because a user database is, ultimately, nothing but data about its objects, and this data is stored in system databases. In other words, databases are defined in databases. With a small database, we can build an entire database from one script, but then, as it gets bigger, we either do it at schema level or with a file for each object.

Many of the problems of database deployment are based on the difference between a table build script and a table migration script. You can build a relatively small database anew quite quickly and then fill its tables, with data saved as files. Past a certain size of data, that is no longer viable. There was a time that this build-and-fill approach was the only way to make changes to relational tables, and some database-driven applications had to be taken offline for days while they were rebuilt. It made source control easy, however. You executed one or more build scripts that did everything required to create a database to the correct version, with whatever set of data you required.

Quite quickly SQL was extended to allow tables to be altered in-situ; the ALTER TABLE command introduced the possibility of doing ‘migrations’, preserving existing data. Sometimes, preserving data isn’t entirely easy, such as when you are splitting tables. This meant that the scripts that performed the magic, called migration scripts, could become somewhat intricate.

Around fifteen years ago came the introduction of the first tools that allowed migration scripts to be generated automatically. The joy of this was that it became possible to compare a set of source scripts with a living database, and have the tool generate the migration script. For the first time, developers could save object-level scripts in source control and avoid all having to work on the same migration script simultaneously.

There are always cases where the changes to an existing database system are so radical that an auto-generated script that preserves the data just isn’t possible, and so the automated migration script must be supplemented by one that is crafted by hand to preserve the data within the new referential design of the tables. This supplementary script must then be tested, validated and held in source control. One of the consequences of this is that some developers stored their source merely as a set of migration scripts that had to be executed in the correct order to create the new version of the database. The advantage is that it makes it far more likely that your migration script will work when used to deploy from staging to production, because it will have been well-exercised in the course of the deployment pipeline. The complications of this ‘migrations-first’ approach come if a team of any size need to work on different aspects of the structure of an important table, simultaneously. You will need to merge all the changes and arbitrate on the contradictory changes to the resulting table migration script.

If development work goes on a majestic straight-line journey from its creation, there will be few scripts. If development is tortuous, with many releases, and changes in strategy, the application of many migration scripts will reflect all this, and a database build will be both slow and inefficient. To avoid this, developers found ways to ‘baseline’ from a stable evolved state of the database rather than the germination stage.

Any system of deploying database changes must accommodate both approaches. They are different only by degree because the static, or state-based, approach must check and test its auto-generated migration scripts and preserve them in source control. The migration-first approach generates the migration scripts during development, but also then needs to maintain object-level source in source control to keep track of the what, who and why of the changes.

The appropriate method depends on your style of developing databases. If you extend the Agile approach to the database, without modification, so that your understanding of the data domain will be subject to major changes, then a migration-first approach is likely to be more appropriate. This is because the overall table structure, the database model, will be subject to many changes, and this will lead to migrations that require table-splitting or relationship-changes that can never be entirely automated without supplementary scripting. Conversely, the design-up-front approach to database design is best approached using object-level source control, the ‘state-first’ approach.

The important message for any system for database deployment is that it should support both methods of building and updating databases in order to support rapid delivery. It is likely that one project will employ different methods at different stages in the database lifecycle, but only as a matter of emphasis.

Conclusion

There isn’t a royal road to database builds; they will break for a surprising number of reasons. If we build often, we are alerted to problems at a time when they are easy to fix.

Database developers take great pride in developing resilient database build scripts that never break a build, and which are impervious to the mistakes of others. There are plenty of ways to prevent a build breaking, and they involve adding ‘intelligence’ to a script to, for example, ensure that data is preserved when inter-related groups of tables are altered, or to avoid problems of mutual or circular dependency. The way that scripts are used to build a database will vary at different stages in the lifecycle of a database and with the preferences and skills of the development team. The difference between a static and migration script is purely one of emphasis and team methods. Under the covers, it is the same process.