A very common tenet used when building out a DevOps style approach to automated deployment is the concept of failing fast. You want to identify issues with the changes in your code and structures as early as possible to protect the production environment. One of the most common methods to meet this requirement is setting up a Continuous Integration (CI) environment. The need for CI is not only for application code. Integrating changes to database code and components as often as possible and testing the results of those changes makes problems visible early and fast.

CI doesn’t get rid of bugs, but it does make them dramatically easier to find and remove if the team can be sure that the cause is one of the changes that were made since the last successful integration. If a developer’s code has introduced an error or has caused another developer’s previously working piece of code to fail, then the team knows immediately. No further integrations occur until the issue is fixed.

Why is integration so important?

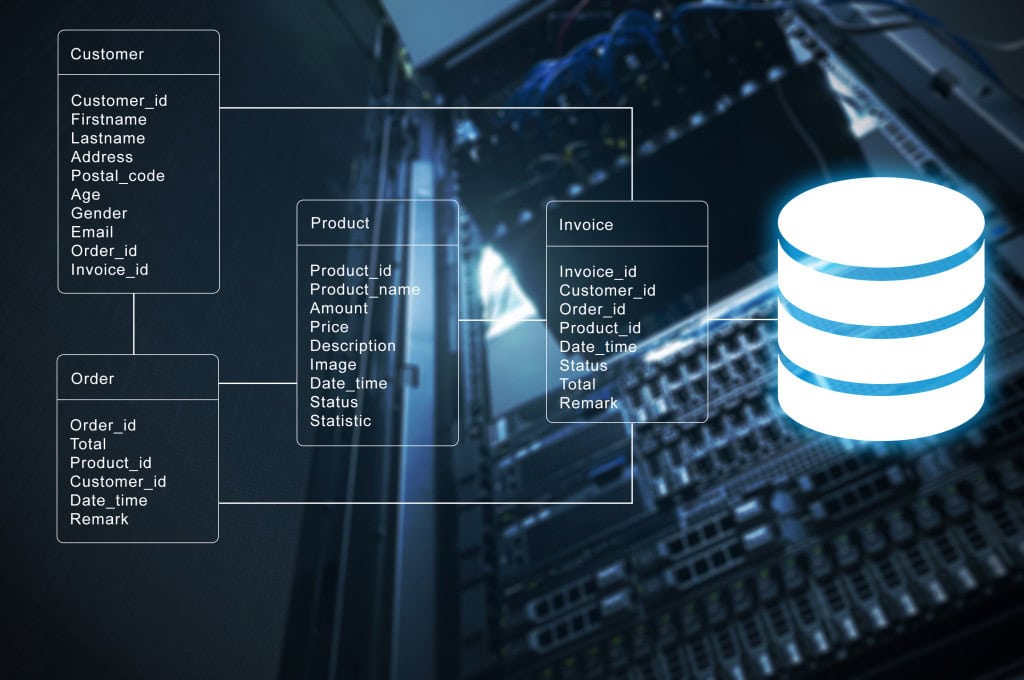

All applications are made up of components. Even an application hand-crafted from procedural code will depend on third-party components, frameworks, libraries, operating-system interfaces, data sources and databases. At the other extreme, the custom application could be a mashup of standard off-the-shelf applications.

All components are liable to upgrades and changes, especially during active development. Each time any component of an application changes to a new version, you must perform a complete build on all parts of the application that rely on that component, followed by integration, with its integration tests. With a rapidly changing application, integration must be continuous to prove, continuously, that nothing has been broken as a result of any change.

Any change to the application code requires integration, as does any change to a third-party component. Where does the database fit? From the application-perspective, the database is just another component, and a typical corporate application can be dependent on a handful of databases. A database change merely triggers the same work as any other component. The application integration tests must prove that all database access methods continue to perform correctly, according to the database interface specification.

From the database perspective, it is, in effect, an application, with its own hierarchy of components on which it depends. Just as for an application, any change to the database code or components should prompt a fresh build, followed by integration and its tests.

The purpose of Database CI, then, is precisely the same as for application CI. The development team establish a working version of the database very early in the development cycle and then continue to verify regularly that it remains in a working state as they expand and refactor the schema and database code objects. Developers integrate new and changed code into a shared version control repository several times a day. Development proceeds in small steps. Developers first write the tests that, if passed, will prove that a small new piece of functionality works. They then implement the code to make the tests pass. When the tests pass, they commit the code to “trunk” in the shared VCS, and their “commit tests” are added to the broader suite of tests for the application. Each commit, or check-in, is then verified by an automated database build or migration, and subsequent testing, allowing teams to detect problems early.

Why database CI?

CI within database development remains relatively rare when compared to application development. Inevitably, “late integration” of database changes cause headaches and delays towards the end of a project. There are often unpleasant surprises that lead to manual scripting, and a lot of tweaking of those scripts, to get the database working as expected. Database CI minimizes the element of surprise.

Database testing is different in nature, and often far more complex than application testing. The integration testing of a database has to go well beyond answering the question of whether code ‘passes’ or ‘fails’. It must go much more deeply into whether the system performs well under load, and if it scales properly. It should check that access rights are done correctly. Integration tests should, in short, ensure that the database is providing a service as expected.

However, ad-hoc integration of database changes doesn’t help. In consequence, a database release can cause a lot of subsequent problems in the QA and Production environments. The problems can stem from unreliable stored procedures, functions, and triggers to schema changes. Even a simple database change that requires no complicated data migrations can cause problems if not integrated early and tested thoroughly. For example, a change such as adding a new column can cause unexpected failures if certain applications don’t qualify column names or expect only a certain number of columns or columns in a specific order. Even more potentially damaging to the business are problems affecting data integrity, which stem largely from untested or missing data quality and referential integrity constraints.

Instead, database changes must be integrated and tested regularly and should be done continuously, if possible. Although shared-development databases are, in a sense, self-integrating, you still ought to put them through a CI process because you are obliged to test the overall behaviour of the database system and prove that a working database can be built from the changes to the component list. This includes not only the database code and schema changes but also SSIS jobs, CLR libraries, agent tasks, alerts, messaging and so on.

Database CI leads naturally to earlier and more frequent releases, which means that all the required scripts and files required to migrate a database from one version to the next will be thoroughly tested in development, and then again when deploying the release to QA and Staging. As a result, deployments to production will be rehearsed and be far more reliable. Also, the more rapidly that new functionality and improvements can be delivered, the easier it is to improve collaboration with the DBA and systems team. If you use the same tools to both repeatedly build the latest database version in the CI environment and to deploy the release to QA, Staging, and ultimately Production, then you can have more confidence that they will work as intended.

Of course, the subsequent deployment pipeline requires more than this to work. The testing in these more production-like environments is somewhat different in nature. It will encompass acceptance testing, and verify that security, capacity, availability, and performance characteristics of the production system are acceptable to the business or customer and in line with the signed-off specification of the system. This, in turn, requires a reliable process for safe delivery of production data, with the necessary obfuscations, into those environments.

Additional benefits of a well-drilled database CI process will emerge as the team gains experience with automation and DevOps-style practices. This experience leads to more automation and workflow techniques introduced into your database delivery pipeline. For example, if the team have integrated the issue-tracking system into the version control system (VCS), they can respond quickly to any critical issues. Through their CI processes, they will hopefully be able to quickly spin up an environment and establish the correct version of the database, as well as data, in a state it existed in soon before the error occurred. This often requires a “clean” version of production data (for example, minus any sensitive data elements). They can then reproduce the error, and write a failing test that will validate the fix and prevent regressions, meaning introducing a new problem while solving an existing one.

Leaving the database out of CI causes headaches, delays, and unpleasant surprises. Database CI leads to more frequent releases and ultimately delivering more value to the customer.

Commentary Competition

Enjoyed the topic? Have a relevant anecdote? Disagree with the author? Leave your two cents on this post in the comments below, and our favourite response will win a $50 Amazon gift card. The competition closes two weeks from the date of publication, and the winner will be announced in the next Simple Talk newsletter.

Load comments