Before importing JSON data from a web service or application into SQL Server, it is worth validating it in T-SQL – checking that it has the expected path structure, that all required properties are present, that values are the right SQL data types, and that they pass any relevant constraint checks. SQL Server’s JSON functions (JSON_VALUE, OPENJSON, ISJSON) provide the tools to build this validation pipeline entirely in T-SQL, without external schema validators. This article covers the validation steps in sequence: path expression parsing, metadata comparison, data type checking, constraint validation, and finally a pattern for scaling validation to large JSON payloads via temporary tables.

Articles by Phil Factor about JSON and SQL Server:

- Consuming JSON Strings in SQL Server (Nov 2012)

- SQL Server JSON to Table and Table to JSON (March 2013)

- Producing JSON Documents From SQL Server Queries via TSQL (May 2014)

- Consuming hierarchical JSON documents in SQL Server using OpenJSON (Sept 2017)

- Importing JSON data from Web Services and Applications into SQL Server(October 2017)

There are a number of reasons why database developers or DBAs will regard JSON with suspicion. They are used to the protection of a higher level of constraints and checks for imported data: They want to be sure that the metadata of the data is correct before they import it: They also want to check the data itself. At the simplest level, they want to be confident that a regular feed from a data source is as robust as possible. Why so fussy? Simply because once bad data gets into a database of any size, it is tiresome and time-consuming to extract it.

As well as taking JSON data from web-based applications, especially single-page ones, many databases rely on volatile data from web services, such as a list of currencies and their current value against the dollar. We need to be sure that each time it happens, the JSON has the same metadata or data structure as usual, and that the data is valid. Although nowadays, application data generally comes as a JSON document, we have a similar problem with other types of document-based data: XML, for example, exists in two variations: Schema-based or typed XML which is good and virtuous but has a naughty sister, schema-less or untyped XML. Typed XML is the only sort of XML you should allow in a SQL Server database. Not only is it safer to use, but it is stored much more efficiently. Untyped XML, JSON and YAML all require checks.

We’d like to do the same with JSON as we do with typed XML, and allow JSON Schema to do the donkey work; but, sadly, SQL Server don’t currently support any JSON schema binding and cannot, therefore, store JSON efficiently in a ‘compiled’ form. (note: you can get 25x compression of JSON data, and far better performance by using clustered columnstore indexes, but that is a different story)

The dominant JSON Schema is actually called ‘JSON Schema’ and is in IETF draft 6. It can describe a data format in human-readable JSON and can be used to provide a complete structural validation. PostgreSQL has postgres-json-schema for validating JSON, JavaScript has many add-ins for doing this. Net programmers have Json.NET Schema and several other alternatives.

PowerShell is the obvious place to validate your JSON via JSON.net schema, but there is, in fact, quite a lot you can do in SQL to check your JSON data. You can easily compare the metadata of two JSON documents to see if anything has changed, and you can apply constraints on JSON Data. This is because of the use of path expressions. To get started we’d better describe them, because they are essential to any serious use of JSON in SQL Server.

Path expressions

When you need to read from, or write to, values from a JSON document in SQL Server, you use JSON path expressions. These can reference objects, arrays or values.

These path expressions are needed if you call OPENJSON with the WITH clause to return a relational table-source, if you call JSON_VALUE to extract a single value from JSON text, or when you call JSON_MODIFY to update this value or append to it.

The path itself starts with a dollar sign ($) that represents the context item. The path consists of elements separated by ‘dots’ or full-stops.

The property path is a set of path steps that consist of Key names that are optionally ‘quoted’. (e.g. $.info.address.county or $.info.address.”post code”.outward). Each dot denotes that the Lvalue (left-side value) is the parent of the RValue (right-side value). If the key name starts with a dollar sign or contains special characters such as spaces then you need to use double-quoted delimiters for the key (e.g. $.info.Cost.”La Posche Hotel”). If the key name refers to an array, they are indexed with a zero-based index. (e.g. $.info.tags[0] or $.info.tags[1])

If the path references an object that exists more than once, neither JSON_Value nor JSON_modify can access the second or subsequent values. In this case, you have to use OpenJSON instead to get to all the values.

JSON paths in SQL Server can start with a keyword ‘lax’ or ‘strict’. It is an unusual requirement to want to suppress errors if a path isn’t found in the JSON document but this is the default, and you can specify this by using ‘lax’. You’d be more likely to want ‘strict’ mode, but ‘lax’ is better if you want to test whether a path value is there because you will know something is wrong by the NULL return value

You can easily use path expressions. The first function you’d probably need is a routine to tell you what these expressions actually are for any particular JSON document.

Finding out what paths there are in a JSON string or document

Here is a routine that takes a JSON string and returns a table-source containing the expressions, data types and values for the JSON that you specify.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 |

IF Object_Id('dbo.JSONPathsAndValues') IS NOT NULL DROP FUNCTION dbo.JSONPathsAndValues; GO CREATE FUNCTION dbo.JSONPathsAndValues /** Summary: > This function takes a JSON string and returns a table containing the JSON paths to the data, and the data itself. The JSON paths are compatible with OPENjson, JSON_Value and JSON_Modify. Author: PhilFactor Date: 06/10/2017 Version: 2 Database: PhilFactor Examples: - Select * from dbo.JSONPathsAndValues(N'{"person":{"info":{"name":"John", "name":"Jack"}}}') - Select * from MyTableWithJson cross apply dbo.JSONPathsAndValues(MyJSONColumn) Returns: > A table listing the paths to all the values in the JSON document with their type and their order and nesting depth in the document **/ (@JSONData NVARCHAR(MAX)) RETURNS @TheHierarchyMetadata TABLE ( -- columns returned by the function element_id INT NOT NULL, Depth INT NOT NULL, Thepath NVARCHAR(2000), ValueType VARCHAR(10) NOT NULL, TheValue NVARCHAR(MAX) NOT NULL ) AS -- body of the function BEGIN DECLARE @ii INT = 1, @rowcount INT = -1; DECLARE @null INT = 0, @string INT = 1, @int INT = 2, -- @boolean INT = 3, @array INT = 4, @object INT = 5; DECLARE @TheHierarchy TABLE ( element_id INT IDENTITY(1, 1) PRIMARY KEY, Depth INT NOT NULL, /* effectively, the recursion level. =the depth of nesting*/ Thepath NVARCHAR(2000) NOT NULL, TheName NVARCHAR(2000) NOT NULL, TheValue NVARCHAR(MAX) NOT NULL, ValueType VARCHAR(10) NOT NULL ); INSERT INTO @TheHierarchy (Depth, Thepath, TheName, TheValue, ValueType) SELECT @ii, ' |

With this, you can see what paths lead to the keys, and you can see what is in the values of those keys.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 |

DECLARE @JSONData NVARCHAR(4000) = N' { "info": { "type": 1, "address": { "town": "Colchester", "county": "Essex", "country": "England" }, "Hotels": { "La Posche Hotel": "$400", "The Salesmans Rest": "$35", "The Middling Inn": "$100"} }, "Sights": ["the Castle","The Barracks","the Hythe","St Bartolphs"] } '; SELECT * from dbo.JSONPathsAndValues(@JSONData) |

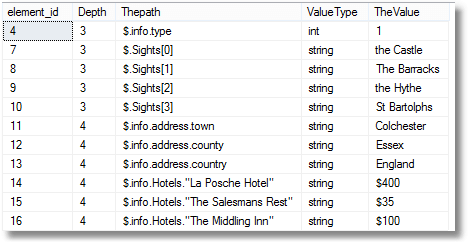

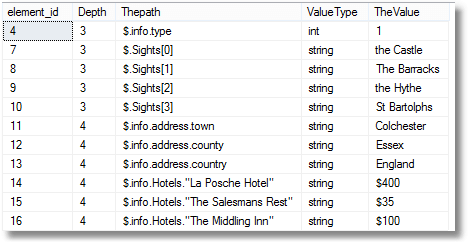

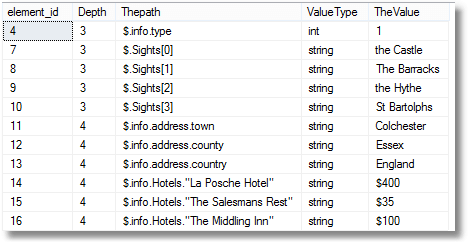

Which would give you this…

Differences between the metadata of two JSON strings

It is very easy to check two JSON strings to make sure that they have the same metadata, and report any differences that it finds.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 |

IF Object_Id('dbo.DifferenceBetweenJSONstrings') IS NOT NULL DROP function dbo.DifferenceBetweenJSONstrings GO CREATE FUNCTION dbo.DifferenceBetweenJSONstrings /** Summary: > This checks two JSON strings and returns a table listing any differences in the schema between them Author: PhilFactor Date: 20/10/2017 Database: PhilFactor Examples: - Select * from dbo.DifferenceBetweenJSONstrings(@Original,@new) - Select from MyTable cross apply dbo.DifferenceBetweenJSONstrings(FirstJ) Returns: > A table **/ ( @Original nvarchar (max),-- the original JSON string @New nvarchar (max) -- the New JSON string ) RETURNS TABLE --WITH ENCRYPTION|SCHEMABINDING, .. AS RETURN ( SELECT Coalesce(old.thePath, new.thepath) AS JSONpath, Coalesce(old.valuetype, '') + CASE WHEN old.valuetype + new.valuetype IS NOT NULL THEN ' \ ' ELSE '' END + Coalesce(new.valuetype, '') AS ValueType, CASE WHEN old.valuetype + new.valuetype IS NOT NULL THEN 'value type changed' WHEN old.thePath IS NULL THEN 'added or key changed' WHEN new.thePath IS NULL THEN 'missing' ELSE 'dunno' END AS TheDifference FROM dbo.JSONPathsAndValues(@New) AS new FULL OUTER JOIN dbo.JSONPathsAndValues(@original) AS old ON old.ThePath = new.ThePath --AND old.Valuetype=new.Valuetype WHERE old.thepath IS NULL OR new.thepath IS NULL OR old.ValueType <> new.ValueType ); Go |

As part of the build, we’d run some code like this to ensure that the function still does what you expect

|

1 2 3 4 5 6 7 8 9 10 |

Select * from dbo.DifferenceBetweenJSONstrings( '[12,32,35,49,15,56,37]','[1,2,3,4,5,6]') Select * from dbo.DifferenceBetweenJSONstrings( '[1,2,3,4,5,6]','[1,"2",3,4,5,6]') Select * from dbo.DifferenceBetweenJSONstrings( '{"id": "001","type": "Coupe","name": "Cougar","year": "2012"}', '{"id": "004","type": "Coupe","name": "Jaguar","year": "2012"}') Select * from dbo.DifferenceBetweenJSONstrings( '{"id": "001","type": "Coupe","name": "Cougar","year": "2012"}', '{"id": "001","type": "Coupe","name": "Cougar","start": "2012"}') |

Actually, to be honest, I wouldn’t do my tests this way in order to make sure the function works. I’d run all the results into a table and make sure that the entire table was what I’d expect. After all, we all do automated unit tests as part of our daily build don’t we?

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 |

DECLARE @TestData TABLE (JSONpath NVARCHAR(MAX),ValueType VARCHAR(20), TheDifference VARCHAR(20)) INSERT INTO @testdata SELECT JSONpath,ValueType,TheDifference FROM dbo.DifferenceBetweenJSONstrings( '[12,32,35,49,15,56,37]','[1,2,3,4,5,6]') UNION ALL SELECT JSONpath,ValueType,TheDifference FROM dbo.DifferenceBetweenJSONstrings( '[1,2,3,4,5,6]','[1,"2",3,4,5,6]') UNION all Select JSONpath,ValueType,TheDifference FROM dbo.DifferenceBetweenJSONstrings( '{"id": "001","type": "Coupe","name": "Cougar","year": "2012"}', '{"id": "004","type": "Coupe","name": "Jaguar","year": "2012"}') UNION all Select JSONpath,ValueType,TheDifference FROM dbo.DifferenceBetweenJSONstrings( '{"id": "001","type": "Coupe","name": "Cougar","year": "2012"}', '{"id": "001","type": "Coupe","name": "Cougar","start": "2012"}') IF (EXISTS( SELECT * FROM @testData g FULL OUTER JOIN (VALUES('$[6]','int','missing'), ('$[1]','int \ string','value type changed'), ('$.START','string','added or key changed'), ('$.year','string','missing'))f(JSONpath,ValueType,TheDifference) ON f.JSONpath=g.JSONpath WHERE f.JSONpath IS NULL OR g.JSONpath IS NULL)) RAISERROR ('the dbo.DifferenceBetweenJSONstrings routine is giving unexpected results;',16,1) |

OK. We can now compare the metadata of two JSON strings, but we can compare the values as well if we ever need to check that two JSON documents represent the same data.

Checking that the JSON is what you expect

Many Web Services send information messages or warning messages in JSON format instead of the data you expect. It could contain a variety of messages such as service interruption, subscription terminations, or daily credit-limits reached. These need to be logged and you need to bypass the import routine. Probably the quickest way to check your JSON is to do a simple JSON_VALUE call on a key/value pair that needs to be there, to see if you get NULL back. To get that path in the first place, you can use the JSONPathsAndValues function. However, it is possible that there are a number of key/value pairs that need to be there. There are plenty of ways of doing this if you have a list or table of the paths you need. I’ll use a VALUES table-source to illustrate the point.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 |

DECLARE @json NVARCHAR(MAX) = N'[ { "Order": { "Number":"SO43659", "Date":"2011-05-31T00:00:00" }, "AccountNumber":"AW29825", "Item": { "Price":2024.9940, "Quantity":1 } }, { "Order": { "Number":"SO43661", "Date":"2011-06-01T00:00:00" }, "AccountNumber":"AW73565", "Item": { "Price":2024.9940, "Quantity":3 } } ]' IF EXISTS( SELECT thepath FROM (VALUES ('$[0].AccountNumber'), ('$[0].Order.Number'), ('$[0].Order.Date'), ('$[0].Item.Price'), ('$[0].Item.Quantity'))WhatThereShouldBe(path) LEFT OUTER JOIN dbo.JSONPathsAndValues( @json ) ON WhatThereShouldBe.path=ThePath WHERE ThePath IS NULL) RAISERROR ('an essential key is missing',16,1) |

Comparing values as well as metadata

A very small tweak in the code for checking the keys will allow you to compare the values as well if you ever need to do that. Note that it checks the order of lists too.

|

1 2 3 4 5 6 7 |

SELECT FirstVersion.Thepath, SecondVersion.Thepath, FirstVersion.TheValue, SecondVersion.TheValue FROM dbo.JSONPathsAndValues('[1,2,3,4,5,7,8]') AS FirstVersion FULL OUTER JOIN dbo.JSONPathsAndValues('[1,2,3,4,5,6]') AS SecondVersion ON FirstVersion.Thepath = SecondVersion.Thepath WHERE FirstVersion.TheValue <> SecondVersion.TheValue OR FirstVersion.Thepath IS NULL OR SecondVersion.Thepath IS NULL; |

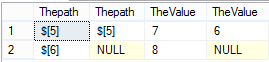

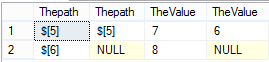

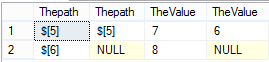

As you can see, there are two differences between the JSON strings. There are different values for the same array element (line 1 of result) and there are a different number of array elements (line 2).

Checking for valid SQL Datatypes.

That is OK as far as it goes, but what about checking that the data will actually go into SQL Server. Although there are good practices for the storage of dates in JSON, for example, there is no JSON standard. If we know our constraints, it is dead easy to check. Imagine we have some JSON which is of a particular datatype. We just define the paths of the values that we want to check and perform whatever check we need on the JSON. We can demonstrate this technique.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 |

SELECT g.ThePath, g.TheValue, CASE Coalesce(f.Datatype,'') WHEN '' THEN '' --it hasnt had a check specified WHEN 'int' THEN --need to check that it is a valid int CASE WHEN Try_Convert(INT,g.TheValue) IS NULL THEN 'Bad int' ELSE 'good' end WHEN 'DateTime' THEN --need to check that it is a valid DateTime CASE WHEN Try_Convert(DateTime,g.TheValue) IS NULL THEN 'Bad datetime' ELSE 'good' end WHEN 'Money' THEN --need to check that it is a valid Money value CASE WHEN Try_Convert(Money,g.TheValue) IS NULL THEN 'Bad money value' ELSE 'good' END WHEN 'ISO8601' THEN --need to check that it is a valid ISO8601 datetime CASE WHEN Try_Convert(DateTime,g.TheValue) IS NULL THEN 'Bad ISO8601 datetime' ELSE 'good' end ELSE '' end FROM dbo.JSONPathsAndValues('["1.7","2","23/4/2008","1 Jun 2017","5.6d","$456,000","2017-09-12T18:26:20.000","2017/10/20T18:26:20.000"]')g LEFT outer JOIN (VALUES('$[0]','int'), ('$[1]','int'), ('$[2]','DateTime'), ('$[3]','DateTime'), ('$[4]','money'), ('$[5]','money'), ('$[6]','ISO8601'), ('$[7]','ISO8601'))f(Thepath,DataType) ON f.thepath=g.Thepath |

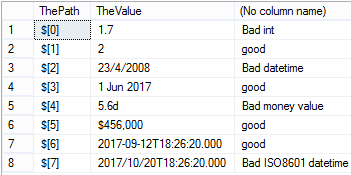

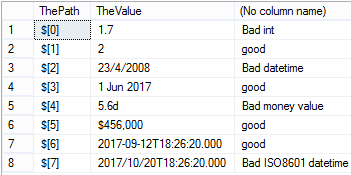

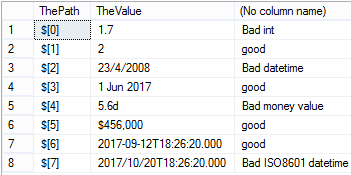

Which, in this test case would give…

You will have appreciated that, as well as the JSON, you will need a separate table source to say what type of data each value should be, and I’ve done a custom date format to show that you can refine your constraint. I’ve used a VALUES table source, but you can easily swap in a JSON one. This table source needs to be kept in sync with the case statement for it to work. In a working system, you’d want to encapsulate all this in a function.

Checking whether the values will pass constraint checks

So how would you tackle the task of checking this simple example to make sure that all these IP addresses were valid? For this example we’ll just check that there are just three dots. We don’t want to check the name, obviously.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 |

{ "name":[ "Philip", "Mildew", "Factor" ], "ipAddress":[ "80.243.543.4", "45.85.678.68", "5.8.7.9", "192.168.0.123", "34.8.8" ] } |

You wouldn’t want that last IP address in your database. It isn’t valid. You only want to check those IP values. Here, you can very easily run the check.

|

1 2 3 |

SELECT ThePath, TheValue FROM dbo.JSONPathsAndValues('{"name":["Philip","Mildew","Factor"],"ipAddress":[ "80.243.543.4","45.85.678.68","5.8.7.9","192.168.0.123","34.8.8"]}') WHERE ThePath LIKE '$.ipAddress%' AND TheValue NOT LIKE '%.%.%.%' |

And you will see the bad IP address, but it only runs the check on the list of IP addresses, which is what you want.

![]()

If you had a number of checks to do, you’d save the table-source as a table variable or temporary table and run several queries on it.

Scaling things up

Normally you are dealing with large amounts of JSON data, so you only want to parse it once into a temporary table or table variable before running all your metadata checks. The advantage of doing it this way is that, once you’ve put a good primary key on the path (beware of duplicate JSON keys: they are valid JSON – RFC 4627), the process of firstly checking that the data can be successfully coerced into the appropriate column of the destination table, and then checked that it is within bounds before finally unpicking the hierarchical JSON data into all the relational tables in the right order is much easier and well-controlled.

Using a JSON Webservice from SQL Server

Normally, you’d use SSIS or Powershell for a regular production data feed, but it is certainly possible to do it in SQL. The downside is that your SQL Server needs to have internet access, which is a security risk, and also you have to open up your security surface-area by allowing OLE automation. That said, this is how you do it.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 61 |

IF NOT EXISTS (SELECT * FROM sys.configurations WHERE name ='Ole Automation Procedures' AND value=1) BEGIN EXECUTE sp_configure 'Ole Automation Procedures', 1; RECONFIGURE; end SET ANSI_NULLS ON; SET QUOTED_IDENTIFIER ON; GO IF Object_Id('dbo.GetWebService','P') IS NOT NULL DROP procedure dbo.GetWebService GO CREATE PROCEDURE dbo.GetWebService @TheURL VARCHAR(255),-- the url of the web service @TheResponse NVARCHAR(4000) OUTPUT --the resulting JSON AS BEGIN DECLARE @obj INT, @hr INT, @status INT, @message VARCHAR(255); /** Summary: > This is intended for using web services that utilize JavaScript Object Notation (JSON). You pass it the link to a webservice and it returns the JSON string Note: > OLE Automation objects can be used within a Transact-SQL batch, but SQL Server blocks access to OLE Automation stored procedures because this component is turned off as part of the security configuration. Author: PhilFactor Date: 26/10/2017 Database: PhilFactor Examples: - > DECLARE @response NVARCHAR(MAX) EXECUTE dbo.GetWebService 'http://headers.jsontest.com/', @response OUTPUT SELECT @response Returns: > nothing **/ EXEC @hr = sp_OACreate 'MSXML2.ServerXMLHttp', @obj OUT; SET @message = 'sp_OAMethod Open failed'; IF @hr = 0 EXEC @hr = sp_OAMethod @obj, 'open', NULL, 'GET', @TheURL, false; SET @message = 'sp_OAMethod setRequestHeader failed'; IF @hr = 0 EXEC @hr = sp_OAMethod @obj, 'setRequestHeader', NULL, 'Content-Type', 'application/x-www-form-urlencoded'; SET @message = 'sp_OAMethod Send failed'; IF @hr = 0 EXEC @hr = sp_OAMethod @obj, send, NULL, ''; SET @message = 'sp_OAMethod read status failed'; IF @hr = 0 EXEC @hr = sp_OAGetProperty @obj, 'status', @status OUT; IF @status <> 200 BEGIN SELECT @message = 'sp_OAMethod http status ' + Str(@status), @hr = -1; END; SET @message = 'sp_OAMethod read response failed'; IF @hr = 0 BEGIN EXEC @hr = sp_OAGetProperty @obj, 'responseText', @Theresponse OUT; END; EXEC sp_OADestroy @obj; IF @hr <> 0 RAISERROR(@message, 16, 1); END; GO |

To use this is simple. You can use this on any of the JSONTest samples

|

1 2 3 |

DECLARE @response NVARCHAR(MAX) EXECUTE dbo.GetWebService 'http://headers.jsontest.com/', @response OUTPUT SELECT @response |

Which will give you …

|

1 2 3 4 5 6 7 |

{ "X-Cloud-Trace-Context": "54e570f5620dc6ef3b087ac6042dca03/10421626717945848480", "Host": "headers.jsontest.com", "User-Agent": "Mozilla/4.0 (compatible; Win32; WinHttp.WinHttpRequest.5)", "Accept": "*/*", "Content-Type": "application/x-www-form-urlencoded" } |

Now that we have the means to get a real service we can try it out. To try out the next example, you need to register with GeoNames.org, but they are good people running a free and useful service. (If you decide to use it in production, make sure you buy support).

Imagine that you need to find the exact geographical coordinated for any postal code in the world. You can now do this, or a whole range of geographical services. For this example, though, we’ll just list the capitals of all the countries in a defined area, together with their populations and longitude/latitude coordinates.

This web service is liable to send you messages instead of your data so be sure to check and log these.

|

1 2 3 4 |

status": { "message": "the hourly limit of 2000 credits for demo has been exceeded. Please use an application specific account. Do not use the demo account for your application.", "value": 19 }} |

In the following batch, we are merely showing this sort of message as an error.

|

1 2 3 4 5 |

Msg 50000, Level 16, State 1, Line 30 The import failed ({"status": { "message": "the hourly limit of 2000 credits for demo has been exceeded. Please use an application specific account. Do not use the demo account for your application.", "value": 19 }}) |

In this case, I should have used my own account rather than demo mode. Although the ‘demo’ name in the URL will work a few times per hour, you will need to change this for your own registered name if you’re getting stuck in.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 |

DECLARE @response NVARCHAR(4000); --get the data from the provider as JSON EXECUTE dbo.GetWebService 'http://api.geonames.org/citiesJSON?formatted=false&north=44.1&south=-9.9&east=-22.4&west=55.2&username=demo&style=full', @response OUTPUT; --now check to see if it is all there. IF EXISTS ( SELECT * FROM dbo.JSONPathsAndValues(@response) WHERE Thepath IN ('$.geonames[0].lng', '$.geonames[0].geonameId', '$.geonames[0].countrycode', '$.geonames[0].name', '$.geonames[0].fclName', '$.geonames[0].toponymName', '$.geonames[0].fcodeName', '$.geonames[0].wikipedia', '$.geonames[0].lat', '$.geonames[0].fcl', '$.geonames[0].population', '$.geonames[0].fcode' ) ) BEGIN SELECT CountryCode, name, population, latitude, longitude, id, WikipediaURL FROM OpenJson(@response) --we have to walk to the level of the array that --we are interested in. OPENjson doesn't support accessing an array at --a higher level when using the WITH clause. OUTER APPLY OpenJson(Value) WITH (CountryCode CHAR(2) '$.countrycode', Latitude NUMERIC(38, 15) '$.lat', Longitude NUMERIC(38, 15) '$.lng', Name VARCHAR(200) '$.name', Population BIGINT '$.population', wikipediaURL VARCHAR(200) '$.wikipedia', id INT '$.geonameId' ); END; ELSE RAISERROR('The import failed (%s)', 16, 1, @response); |

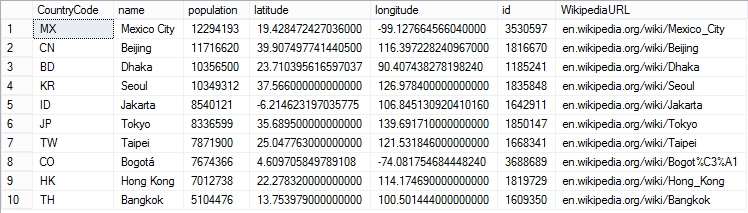

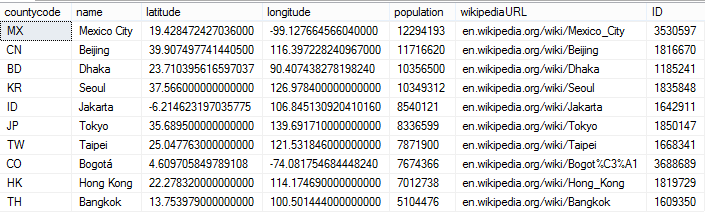

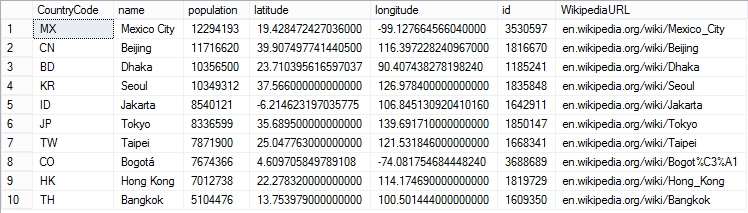

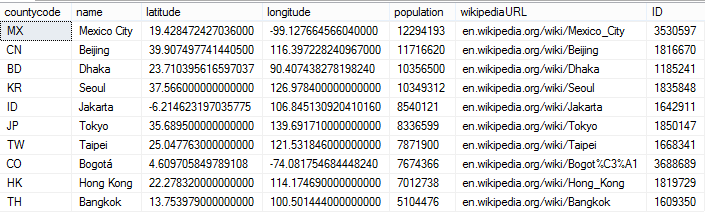

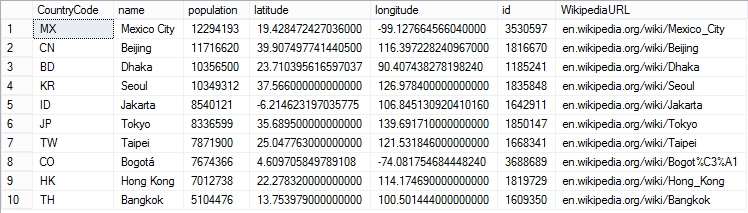

If all is well, this will give you a result something like this…

Of course, there is a lot more you can do. You can, for example, check that the data fits the datatypes in the table, and do whatever other constraint checks you need.

You can of xcourse dispense with the gymnastics of that last bit of openJSON by using what is in the output of dbo.JSONPathsAndValues

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 |

DECLARE @response NVARCHAR(4000); --get the data from the provider as JSON EXECUTE dbo.GetWebService 'http://api.geonames.org/citiesJSON?formatted=false&north=44.1&south=-9.9&east=-22.4&west=55.2&username=demo&style=full', @response OUTPUT; DECLARE @TheData table ( -- columns returned by the function element_id INT NOT NULL, Depth INT NOT NULL, Thepath NVARCHAR(2000), ValueType VARCHAR(10) NOT NULL, TheValue NVARCHAR(MAX) NOT NULL ) INSERT INTO @TheData SELECT * FROM dbo.JSONPathsAndValues(@response) SELECT Max(Convert(CHAR(2),CASE WHEN Thepath LIKE '%.countrycode' THEN Thevalue ELSE '' END)) AS countycode, Max(Convert(NVARCHAR(200),CASE WHEN Thepath LIKE '%.name' THEN Thevalue ELSE '' END)) AS name, Max(Convert(NUMERIC(38, 15),CASE WHEN Thepath LIKE '%.lat' THEN Thevalue ELSE '-90' END)) AS latitude, Max(Convert(NUMERIC(38, 15),CASE WHEN Thepath LIKE '%.lng' THEN Thevalue ELSE '-180' END)) AS longitude, Max(Convert(BigInt,CASE WHEN Thepath LIKE '%.population' THEN Thevalue ELSE '-1' END)) AS population, Max(Convert(VARCHAR(200),CASE WHEN Thepath LIKE '%.wikipedia' THEN Thevalue ELSE '' END)) AS wikipediaURL, Max(Convert (INT,CASE WHEN Thepath LIKE '%.geonameId' THEN Thevalue ELSE '0' END)) AS ID FROM @TheData GROUP BY Left(ThePath,CharIndex(']',ThePath+']')) |

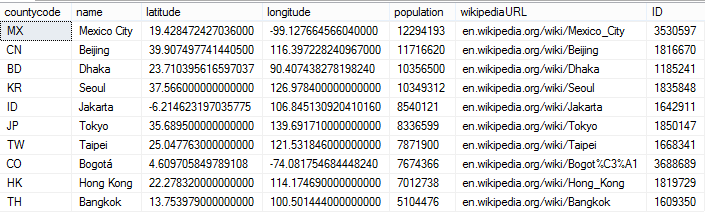

This gives the same result but saving some parsing.

Summary

I’ve set out to show how you can run all manner of checks before importing some JSON into SQL Server, such as ensuring that the JSON metadata is what you expect, finding out the full paths of the information you want, or checking that the values are valid for your datatypes and within range. It is easy to work out what is in a JSON document without having to inspect it. By showing you a simple feed from a web service, I hope I’ve shown how you can make it as robust as you require. There is a lot more you can do, of course, but I hope I’ve shown you enough to enable you to feel confident about accepting data in JSON format.

|

1 |

, ' |

With this, you can see what paths lead to the keys, and you can see what is in the values of those keys.

|

1 |

Which would give you this…

Differences between the metadata of two JSON strings

It is very easy to check two JSON strings to make sure that they have the same metadata, and report any differences that it finds.

|

1 |

As part of the build, we’d run some code like this to ensure that the function still does what you expect

|

1 |

Actually, to be honest, I wouldn’t do my tests this way in order to make sure the function works. I’d run all the results into a table and make sure that the entire table was what I’d expect. After all, we all do automated unit tests as part of our daily build don’t we?

|

1 |

OK. We can now compare the metadata of two JSON strings, but we can compare the values as well if we ever need to check that two JSON documents represent the same data.

Checking that the JSON is what you expect

Many Web Services send information messages or warning messages in JSON format instead of the data you expect. It could contain a variety of messages such as service interruption, subscription terminations, or daily credit-limits reached. These need to be logged and you need to bypass the import routine. Probably the quickest way to check your JSON is to do a simple JSON_VALUE call on a key/value pair that needs to be there, to see if you get NULL back. To get that path in the first place, you can use the JSONPathsAndValues function. However, it is possible that there are a number of key/value pairs that need to be there. There are plenty of ways of doing this if you have a list or table of the paths you need. I’ll use a VALUES table-source to illustrate the point.

|

1 |

Comparing values as well as metadata

A very small tweak in the code for checking the keys will allow you to compare the values as well if you ever need to do that. Note that it checks the order of lists too.

|

1 |

As you can see, there are two differences between the JSON strings. There are different values for the same array element (line 1 of result) and there are a different number of array elements (line 2).

Checking for valid SQL Datatypes.

That is OK as far as it goes, but what about checking that the data will actually go into SQL Server. Although there are good practices for the storage of dates in JSON, for example, there is no JSON standard. If we know our constraints, it is dead easy to check. Imagine we have some JSON which is of a particular datatype. We just define the paths of the values that we want to check and perform whatever check we need on the JSON. We can demonstrate this technique.

|

1 |

Which, in this test case would give…

You will have appreciated that, as well as the JSON, you will need a separate table source to say what type of data each value should be, and I’ve done a custom date format to show that you can refine your constraint. I’ve used a VALUES table source, but you can easily swap in a JSON one. This table source needs to be kept in sync with the case statement for it to work. In a working system, you’d want to encapsulate all this in a function.

Checking whether the values will pass constraint checks

So how would you tackle the task of checking this simple example to make sure that all these IP addresses were valid? For this example we’ll just check that there are just three dots. We don’t want to check the name, obviously.

|

1 |

You wouldn’t want that last IP address in your database. It isn’t valid. You only want to check those IP values. Here, you can very easily run the check.

|

1 |

And you will see the bad IP address, but it only runs the check on the list of IP addresses, which is what you want.

![]()

If you had a number of checks to do, you’d save the table-source as a table variable or temporary table and run several queries on it.

Scaling things up

Normally you are dealing with large amounts of JSON data, so you only want to parse it once into a temporary table or table variable before running all your metadata checks. The advantage of doing it this way is that, once you’ve put a good primary key on the path (beware of duplicate JSON keys: they are valid JSON – RFC 4627), the process of firstly checking that the data can be successfully coerced into the appropriate column of the destination table, and then checked that it is within bounds before finally unpicking the hierarchical JSON data into all the relational tables in the right order is much easier and well-controlled.

Using a JSON Webservice from SQL Server

Normally, you’d use SSIS or Powershell for a regular production data feed, but it is certainly possible to do it in SQL. The downside is that your SQL Server needs to have internet access, which is a security risk, and also you have to open up your security surface-area by allowing OLE automation. That said, this is how you do it.

|

1 |

To use this is simple. You can use this on any of the JSONTest samples

|

1 |

Which will give you …

|

1 |

Now that we have the means to get a real service we can try it out. To try out the next example, you need to register with GeoNames.org, but they are good people running a free and useful service. (If you decide to use it in production, make sure you buy support).

Imagine that you need to find the exact geographical coordinated for any postal code in the world. You can now do this, or a whole range of geographical services. For this example, though, we’ll just list the capitals of all the countries in a defined area, together with their populations and longitude/latitude coordinates.

This web service is liable to send you messages instead of your data so be sure to check and log these.

|

1 |

In the following batch, we are merely showing this sort of message as an error.

|

1 |

In this case, I should have used my own account rather than demo mode. Although the ‘demo’ name in the URL will work a few times per hour, you will need to change this for your own registered name if you’re getting stuck in.

|

1 |

If all is well, this will give you a result something like this…

Of course, there is a lot more you can do. You can, for example, check that the data fits the datatypes in the table, and do whatever other constraint checks you need.

You can of xcourse dispense with the gymnastics of that last bit of openJSON by using what is in the output of dbo.JSONPathsAndValues

|

1 |

This gives the same result but saving some parsing.

Summary

I’ve set out to show how you can run all manner of checks before importing some JSON into SQL Server, such as ensuring that the JSON metadata is what you expect, finding out the full paths of the information you want, or checking that the values are valid for your datatypes and within range. It is easy to work out what is in a JSON document without having to inspect it. By showing you a simple feed from a web service, I hope I’ve shown how you can make it as robust as you require. There is a lot more you can do, of course, but I hope I’ve shown you enough to enable you to feel confident about accepting data in JSON format.

|

1 |

, @JSONData, 'object'; WHILE @rowcount <> 0 BEGIN SELECT @ii = @ii + 1; INSERT INTO @TheHierarchy (Depth, Thepath, TheName, TheValue, ValueType) SELECT @ii, CASE WHEN [Key] NOT LIKE '%[^0-9]%' THEN Thepath + '[' + [Key] + ']' --nothing but numbers WHEN [Key] LIKE '%[$ ]%' THEN Thepath + '."' + [Key] + '"' --got a space in it ELSE Thepath + '.' + [Key] END, [Key], Coalesce(Value,''), CASE Type WHEN @string THEN 'string' WHEN @null THEN 'null' WHEN @int THEN 'int' WHEN @boolean THEN 'boolean' WHEN @int THEN 'int' WHEN @array THEN 'array' ELSE 'object' END FROM @TheHierarchy AS m CROSS APPLY OpenJson(TheValue) AS o WHERE ValueType IN ('array', 'object') AND Depth = @ii - 1; SELECT @rowcount = @@RowCount; END; INSERT INTO @TheHierarchyMetadata SELECT element_id, Depth, Thepath, ValueType, TheValue FROM @TheHierarchy WHERE ValueType NOT IN ('array', 'object'); RETURN; END; GO |

With this, you can see what paths lead to the keys, and you can see what is in the values of those keys.

|

1 |

Which would give you this…

Differences between the metadata of two JSON strings

It is very easy to check two JSON strings to make sure that they have the same metadata, and report any differences that it finds.

|

1 |

As part of the build, we’d run some code like this to ensure that the function still does what you expect

|

1 |

Actually, to be honest, I wouldn’t do my tests this way in order to make sure the function works. I’d run all the results into a table and make sure that the entire table was what I’d expect. After all, we all do automated unit tests as part of our daily build don’t we?

|

1 |

OK. We can now compare the metadata of two JSON strings, but we can compare the values as well if we ever need to check that two JSON documents represent the same data.

Checking that the JSON is what you expect

Many Web Services send information messages or warning messages in JSON format instead of the data you expect. It could contain a variety of messages such as service interruption, subscription terminations, or daily credit-limits reached. These need to be logged and you need to bypass the import routine. Probably the quickest way to check your JSON is to do a simple JSON_VALUE call on a key/value pair that needs to be there, to see if you get NULL back. To get that path in the first place, you can use the JSONPathsAndValues function. However, it is possible that there are a number of key/value pairs that need to be there. There are plenty of ways of doing this if you have a list or table of the paths you need. I’ll use a VALUES table-source to illustrate the point.

|

1 |

Comparing values as well as metadata

A very small tweak in the code for checking the keys will allow you to compare the values as well if you ever need to do that. Note that it checks the order of lists too.

|

1 |

As you can see, there are two differences between the JSON strings. There are different values for the same array element (line 1 of result) and there are a different number of array elements (line 2).

Checking for valid SQL Datatypes.

That is OK as far as it goes, but what about checking that the data will actually go into SQL Server. Although there are good practices for the storage of dates in JSON, for example, there is no JSON standard. If we know our constraints, it is dead easy to check. Imagine we have some JSON which is of a particular datatype. We just define the paths of the values that we want to check and perform whatever check we need on the JSON. We can demonstrate this technique.

|

1 |

Which, in this test case would give…

You will have appreciated that, as well as the JSON, you will need a separate table source to say what type of data each value should be, and I’ve done a custom date format to show that you can refine your constraint. I’ve used a VALUES table source, but you can easily swap in a JSON one. This table source needs to be kept in sync with the case statement for it to work. In a working system, you’d want to encapsulate all this in a function.

Checking whether the values will pass constraint checks

So how would you tackle the task of checking this simple example to make sure that all these IP addresses were valid? For this example we’ll just check that there are just three dots. We don’t want to check the name, obviously.

|

1 |

You wouldn’t want that last IP address in your database. It isn’t valid. You only want to check those IP values. Here, you can very easily run the check.

|

1 |

And you will see the bad IP address, but it only runs the check on the list of IP addresses, which is what you want.

![]()

If you had a number of checks to do, you’d save the table-source as a table variable or temporary table and run several queries on it.

Scaling things up

Normally you are dealing with large amounts of JSON data, so you only want to parse it once into a temporary table or table variable before running all your metadata checks. The advantage of doing it this way is that, once you’ve put a good primary key on the path (beware of duplicate JSON keys: they are valid JSON – RFC 4627), the process of firstly checking that the data can be successfully coerced into the appropriate column of the destination table, and then checked that it is within bounds before finally unpicking the hierarchical JSON data into all the relational tables in the right order is much easier and well-controlled.

Using a JSON Webservice from SQL Server

Normally, you’d use SSIS or Powershell for a regular production data feed, but it is certainly possible to do it in SQL. The downside is that your SQL Server needs to have internet access, which is a security risk, and also you have to open up your security surface-area by allowing OLE automation. That said, this is how you do it.

|

1 |

To use this is simple. You can use this on any of the JSONTest samples

|

1 |

Which will give you …

|

1 |

Now that we have the means to get a real service we can try it out. To try out the next example, you need to register with GeoNames.org, but they are good people running a free and useful service. (If you decide to use it in production, make sure you buy support).

Imagine that you need to find the exact geographical coordinated for any postal code in the world. You can now do this, or a whole range of geographical services. For this example, though, we’ll just list the capitals of all the countries in a defined area, together with their populations and longitude/latitude coordinates.

This web service is liable to send you messages instead of your data so be sure to check and log these.

|

1 |

In the following batch, we are merely showing this sort of message as an error.

|

1 |

In this case, I should have used my own account rather than demo mode. Although the ‘demo’ name in the URL will work a few times per hour, you will need to change this for your own registered name if you’re getting stuck in.

|

1 |

If all is well, this will give you a result something like this…

Of course, there is a lot more you can do. You can, for example, check that the data fits the datatypes in the table, and do whatever other constraint checks you need.

You can of xcourse dispense with the gymnastics of that last bit of openJSON by using what is in the output of dbo.JSONPathsAndValues

|

1 |

This gives the same result but saving some parsing.

Summary

I’ve set out to show how you can run all manner of checks before importing some JSON into SQL Server, such as ensuring that the JSON metadata is what you expect, finding out the full paths of the information you want, or checking that the values are valid for your datatypes and within range. It is easy to work out what is in a JSON document without having to inspect it. By showing you a simple feed from a web service, I hope I’ve shown how you can make it as robust as you require. There is a lot more you can do, of course, but I hope I’ve shown you enough to enable you to feel confident about accepting data in JSON format.

FAQs: Importing JSON Data from Web Services and Applications into SQL Server

1. How do I validate JSON before inserting it into SQL Server?

Use ISJSON() to confirm the string is valid JSON, then use OPENJSON() with a WITH clause to check that required properties exist with the expected data types. Use JSON_VALUE() to extract and cast individual values to SQL Server types, catching conversion errors as validation failures. For constraint validation (checking that values meet business rules like valid IP ranges or foreign key existence), add WHERE-clause checks against extracted values before the final INSERT.

2. How do I parse JSON path expressions in SQL Server?

SQL Server uses lax path mode by default: JSON_VALUE(@json, ‘$.property’) returns NULL if the path doesn’t exist rather than throwing an error. Use strict mode – JSON_VALUE(@json, ‘strict $.property’) – to raise an error when a required path is missing. OPENJSON() returns a table with key, value, and type columns for each JSON element, letting you inspect path structure programmatically without knowing the JSON schema in advance.

3. Can I call a JSON web service from SQL Server T-SQL?

Yes, using sp_invoke_external_rest_endpoint in SQL Server 2022 Azure SQL Database (Azure SQL Database and SQL Managed Instance), or using OPENROWSET with OLE DB providers in earlier versions. For production data pipelines, SSIS or PowerShell is more robust and observable. Direct SQL web service calls are useful for ad hoc queries or simple scheduled imports where full ETL infrastructure is not warranted.

4. How do I compare the metadata of two JSON strings in SQL Server?

Use OPENJSON() on both strings and compare the key-type structures returned. Extract the path expressions from each using a recursive OPENJSON call that traverses the hierarchy, then use EXCEPT or FULL OUTER JOIN between the two path sets to identify differences. The routine in this article returns paths present in one document but missing from the other, flagging structural differences before any data comparison is attempted.

Load comments