The SQL Server ‘Feature’ that everyone is Talking About: Licensing

SQL Server is a fantastic database platform. I’ve been working with it for over 16 years now. I started working with it as a developer, switched to wearing a DBA hat for a while, and have spent the last 10+ years working as a SQL Server Consultant. Early on as a consultant, I spent a lot of time helping developers, DBAs, and organizations become more familiar with the new features and benefits of SQL Server 2005 and then SQL Server 2008. I still do that with SQL Server 2012 and SQL Server 2014, but there’s no doubt that the number of features and improvements (especially for developers) have tapered off with recent releases, while conversations about newer versions of SQL Server today invariably seem to center on discussions about minimizing SQL Server Licensing costs.

The Key to Licensing: Decreasing CPU Utilization

While Microsoft certainly doesn’t win any points for making SQL Server licensing simple, the reality is that there is one simple key to approaching licensing: the less CPU you need, the fewer licenses you’ll require, and the less you’ll have to pay. (Another, related, key to licensing is to make sure that the CPUs you purchase are the fastest possible – to make sure you’re optimizing GHz per license.)

The key take-away, though, is that performance tuning ends up being a critical concern when it comes to SQL Server. Not only will keeping queries ‘lean and mean’ result in happier end users, but operations that consume less CPU ultimately require fewer licenses. Stated differently, SQL Server is easily one of the more expensive solutions that many organizations license – so a healthy focus on optimization (coupled with keen hardware choices up front) is critical to helping keep SQL Server licensing costs manageable. In this article, however, we’ll bypass looking at performance tuning and optimization, and look instead at the idea of caching – or the notion that the fastest, most scalable, and best utilization of SQL Server CPU is the query that you never run.

Putting Cache into Perspective

An effective way to put cache into perspective is to start with licensing costs. Assume two identical 8-core machines – one for SQL Server and one to act as an application server. With simplified math, it’ll cost around $3,000 per machine to license Windows Server 2012 R2. But, while Windows Server 2012 R2 supports up to 4TB of RAM, SQL Server Standard Edition 2012 and 2014 artificially constrain RAM to 64GB and 128GB per instance. Consequently, if your workloads require more RAM, SQL Server Enterprise Edition becomes the logical choice. For an 8 core machine, SQL Server will cost an additional $12, 000 (for Standard Edition) or up to $60,000 (for Enterprise Edition) to license. Ignoring hardware costs, this means that our sample 8-core SQL Server is either 5 or 20+ times more expensive to license than 8-core application server.

With such a stark difference in licensing costs, it would be foolish to not look for ways to offload things done on SQL Server over to your application servers whenever possible. Which is an ideal description of caching – or the notion of offloading redundant and even expensive projections into the cheaper memory found in application servers.

We Can’t Cache our Data Because…

Strangely enough, though, caching doesn’t make its way into far too many applications. Some common reasons that caching isn’t used include the following:

- Data is perceived as being too volatile or too sensitive to be cached. If you’re building a stock trading application to compete with the likes of NASDAQ, then some of your data shouldn’t be cached – like the actual, current, value of a given stock price. This is one of the biggest problems that management and developers will typically raise about caching (or why they don’t want to use it), so I’d like to make a small ‘preemptively’ comment about that before we dive into the more detail later in the article:The reality is that your application probably isn’t NASDAQ. Even if it was, then just about everything else in your application can and should be cached – like descriptions of the companies or stocks being traded, historical trends and reports, press releases, user preferences and trade histories, and just about anything else that you can cache in order to ‘free up’ cycles to focus on your more volatile trades. In short, caching needs to be thought of as an opportunity – not a liability. And, when done correctly, it can become a huge win – even with volatile and semi-volatile data.

- Non-trivial to implement. Caching can be very hard to implement. Not only are there major technical concerns with making sure that cache invalidation works correctly, but caching is frequently considered as a ‘cross cutting’ concern which can make it easy to end up in situations where the code that implements this functionality can feel like it litters your code-base and adds significant ‘clutter’ and complexity.

- Scalability wasn’t an initial concern. A large number of applications start out as simple prototypes where there’s very little separation of concerns and ‘forms’ or ‘web pages’ tend to talk directly to back-end databases as a means of quickly and easily addressing simple tasks. When applications like this become successful, they start to ‘bloat’ as the amount of code and functionality added to them balloons – along with the number of concurrent users. Sadly, though, the initial architecture of these solutions remains a liability – as it can be very hard to retrofit caching into solutions where data-access operations are coupled directly to UI elements.

Happily, however, there’s a way to address just about every problem listed above when it comes to caching. Granted, some fixes are more expensive than others – but the alternative (not implementing caching) can frequently be much more expensive.

Strategies for Cache Invalidation

One of my favorite jokes about development states that “there are two things that are hard about development: naming things, cache invalidation, and off-by-one errors.” Without a doubt, cache invalidation is what makes caching difficult. Adding data into cache and fetching data from cache is (other than threading concerns) a trivial set of operations. But applications that retain and distribute stale data when they shouldn’t are a nightmare for developers and users.

But the truth is, if cache invalidation can be made to work perfectly, then even arguments about data that’s “too volatile to cache” become moot – because the reality of cache invalidation is that as long as data is cached between modifications, and released as soon as it is modified, then any amount of caching on heavily used systems is almost always going to be a major performance win. In fact, this sentiment is at the heart of micro-caching – the notion that something that typically only changes every 2 to 5 seconds (i.e. highly volatile data), but which is queried a few hundred (or even thousand) times per second on a very busy system can provide significant scalability and performance benefits when cached correctly. Consequently, there are two major tips or techniques that can be used to help tame the process of reliably invalidating the cache.

With Caching, One Size Doesn’t Fit All

The first key to optimizing cache invalidation is to avoid thinking of cache as a ‘one-size-fits-all’ mechanism – rejecting the assumption that all data can and should be cached for the same amount of time. Instead, start by establishing maximum ‘thresholds’ for how long data in various parts of your application can be cached. For example, user settings and preferences might be comfortably cached (when not modified) for tens of minutes, whereas something like the lists of states and provinces used to fill a drop down might, effectively, be cached forever. Similarly, inventory levels for products being sold might only be something you can comfortably cache for seconds or tens of seconds, whereas the descriptions of the items you’re selling can probably be cached for minutes or tens of minutes without much concern.

The point is to establish these thresholds up front. This way, if you’ve set a cache duration of a semi-volatile object for a maximum of 30 seconds, if you then somehow manage to ‘flub’ the code that would invalidate this object from the cache when it’s modified, you’re looking at only a total of 30 seconds for which this object could potentially cause problems – rather than something like 20 minutes (or until memory is scavenged) otherwise. Obviously, setting max cache durations is just a mitigating technique, but the process of starting by defining volatility levels for objects helps ensure that your focus is on areas of higher volatility to make sure they’re working correctly.

Intelligent Cache Invalidation

The second key to optimizing cache invalidation is to stop using ‘simple’ string keys as lookups for cached items – as is the case with so many caching frameworks. Instead, a better technique is to define some sort of CacheKey Class that can help ensure that any and all objects are always defined the same way throughout your application, and always ‘serialize’ their details into a string cache key that avoids typos and mis-matches entirely.

Furthermore, once you’ve established ‘strongly typed’ cache keys in this manner, you can then add additional logic to help define details about the objects being cached – and use that information to ‘segment’ your cache for easier management. For example, you can easily define ‘realms’ or ‘regions’ for your cached objects as well as define whether the object being cached is just a single object, a collection of (filtered) objects, or a collection of objects representing an entire domain (like all states/provinces for a drop-down). Then, if you’ve clearly defined how a CacheKey represents itself, you can use this same convention to do lookups against a .config or .json file (for example) where you’ve defined the max durations of your cached objects based upon any number of relevant factors (e.g., what part of your application the objects are being used in, the ‘cardinality’ of the cached object(s), the type, and so on.) In short, such an approach lets you keep caching duration directives in an easy-to-manage ‘config’ file, and moves caching duration details out of your code – making caching easier to manage and modify ‘on the fly’.

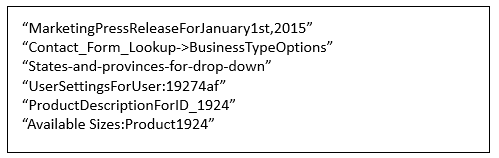

To better put this into perspective, imagine that you could ‘dump’ the keys used to identify objects in many or most applications. Doing so would typically result in something like the following:

Figure 1: A typical ‘dump’ of cache keys – where there’s no overriding convention or nomenclature – making cache invalidation error-prone and tedious because of copy-paste semantics.

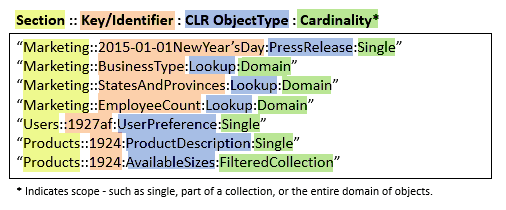

Whereas, if you take the approach of structuring details about your objects as part of the cache key – and codifying those details into a CacheKey object that always serializes these details in similar order, you get something that would look like the following:

Figure 2: By strongly-typing cached object details, you not only get clear, reproducible keys, but it quickly becomes possible to see how cache keys become logically segregated into clear, obvious, hierarchies.

Integrating Cache into Greenfield Apps

With a clear-cut convention for defining cache keys for objects to be cached (and invalidated), implementing caching within Greenfield applications is typically simple. All that needs to happen is to ensure that the same convention (enforced by strongly-typing your cache key details by means of a CacheKey class or similar structure) is used when adding objects into the cache and when informing the cache that objects need to be evicted because they’ve been modified.

Furthermore, by using ‘strongly-typed’ cache keys, it’s possible to make cache invalidation more powerful than simply evicting a single, solitary, key each time an object changes. For example, referencing Figure2 above, imagine having (thread safe) helper routines that would allow you to evict any and all cache keys that started with the text “Marketing“, or all keys for a specific user (i.e., “Users::GUIDHere:”). With such functionality, juggling the complexities of cache invalidation becomes substantially easier to manage – and to get right.

Otherwise, additional techniques that you can use when integrating cache into Greenfield applications would be to seriously consider segregating database connections by creating read-only and read-write connection strings – as a way to help further delineate and clarify where caching could and would make more sense. In a similar manner, application architectures based upon Command Query Responsibility Segregation (or CQRS) provide similar segmentation. However, as such applications obviously separate reads from writes -you’ll have to take care that writes or commands ‘know’ to invalidate cache for objects that may have been pulled out by projections. Happily, though, a custom CacheKey object can make this problem easy to address – especially when coupled with a shared service bus or sourced events that can indicate when projected objects become ‘stale’ or should be invalidated.

Integrating Cache into Existing Applications

Sadly, it’s typically much harder to integrate caching capabilities into existing applications – especially those created with any type of ORM or where data-retrieval and data-modifications are either tightly-coupled to UI, or handled by a ‘black-box’ abstraction that makes injecting caching directives and invalidation routines much harder to tackle. Still, with applications like these that continue to grow, your options are to either throw more hardware (and potentially licensing costs) at the problem, undertake performance tuning (which can be invaluable), or attempt to implement some form of caching. At this point, caching – while hard – can still be feasible.

Focus on Expensive Operations

In situations like this, one key approach to implementing caching is to avoid thinking of the need to deploy it ‘across the board’. Instead, focus on your more expensive operations and look to just cache those. To find these operations, tools like SQL Server Profiler or extended events can help you find the operations taking the longest time on your server – making them good candidates for tuning and/or caching. In cases where caching makes sense – either because the data is very ‘static’ in nature and/or because the queries are very hard or costly to tune within SQL Server, wiring up some caching capabilities around just these specific areas can represent a substantial win.

Another approach for finding problematic or expensive operations is to use ANTS Performance Profiler – which, as of version 9, not only helps you find highly problematic areas with your application code, but which can also directly tie into the SQL Server execution plans to reveal problematic operations that are caused by interactions with a SQL Server back-end. The key benefit of a tool like ANTS is that not only does it become easy to both spot problem areas and evaluate them for tuning, but the fact that ‘problem areas’ are highlighted within your code base, making it much easier to evaluate these kinds of operations as to their suitability for being wrapped by caching (and cache invalidation) routines.

Consider 3rd Party Caching Tools

Finally, for solutions or architectures where extending the existing application with caching functionality is financially (or emotionally) infeasible, it’s important to call out that there are third-party caching solutions that can help to easily and transparently add a caching tier without having to change existing applications. With these solutions (like ScaleArc for SQL Server) – which typically operate as ‘load balancers’ for SQL Server workloads – you simply change your applications (or connection strings) to point at a proxy-server instead of your actual database server. In practice, the proxy will transparently provide the ability to enable caching right out of the gate by determining which requests can be safely and conveniently cached – and then letting systems administrators easily review the most expensive queries being forwarded to their SQL Servers and then define custom caching policies for these queries as needed or logical.

This type of approach isn’t as clear-cut and clean as having your own customized code programmatically managing cache invalidation every time cached data is modified, but these solutions will still typically provide you with vastly improved caching capabilities, and will typically improve scalability and even increase availability. More importantly, they’ll do this all for much less than you’d typically pay to either retrofit older applications or spin up additional licenses for SQL Server Enterprise Edition. As such, solutions like this are well worth examination if you’re hitting scalability limits with your current SQL Server workloads.

Conclusion

Caching represents a great way to off-load expensive CPU cycles from SQL Server. For high-end apps and solutions, the best bet is to start with caching out of the gate. Done correctly, this is one area where a little effort by developers can literally save hundreds of thousands to even millions of dollars over the lifetime of applications. Yet, even in cases where caching needs to be added after the fact, there are still options and possibilities available that can still be easier to introduce than paying additional licensing costs for SQL Server (especially enterprise edition licenses). In short, you ignore caching at your own cost and peril.

Load comments