PowerShell’s Export-CLIXML and Import-CLIXML cmdlets serialise and deserialise objects to/from a custom PowerShell XML format (CLIXML = Common Language Infrastructure XML). Unlike ConvertTo-Json, CLIXML preserves the object’s original type information, which means objects round-trip through Export/Import and retain their type (System.Management.Automation.PSCredential stays a PSCredential, DateTime stays a DateTime, custom class instances retain their type for reflection).

Common use cases: (1) persisting complex object state between script runs – export at end of script, re-import at next run to resume state; (2) cross-process object handoff – one PowerShell process exports, another imports, faster and richer than text serialisation; (3) credential storage – Export-CLIXML with a PSCredential securely encrypts the password using DPAPI (Windows Data Protection API) keyed to the current user on the current machine, so only that user on that machine can decrypt; (4) test fixtures – capture complex object snapshots, import in tests for assertion fixtures.

Limitations: CLIXML files are not human-readable (unlike JSON), the format is PowerShell-specific (can’t be consumed by non-PowerShell tools), the DPAPI-encrypted credentials aren’t portable between machines or users. For human-readable or cross-language serialisation, use ConvertTo-Json; for PowerShell-to-PowerShell with type fidelity, CLIXML is the canonical choice.

As a Database Administrator, it’s essential to keep track of the systems you manage as they change over time. There are several ways to do that, and I’ve found PowerShell objects especially useful.

This article covers creating portable objects which allow you to collect data on one server and copy that data to another server. Then you can continue to work with the objects. It’s especially useful when you want to limit your work on the source server to minimize resource usage, or you want to collect data from many servers in one place and process that data as a group.

Before there was PowerShell . . .

In my early days as a DBA, I worked on Sybase SQL Server (version 4.2, for those asking) on Unix servers. To collect information from those servers, I’d use Korn shell scripts to iterate through the servers and gather the information I needed. Here’s a sample script I used over 20 years ago to collect logins across all my servers:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 |

. /software/admin/bin/SAPSSWD SA_PASSWORD=`echo $SAPSSWD` SHORTNAME=`echo $1 | cut -c1-4` echo "Using short name of .${SHORTNAME}" echo "select name,@@servername,'#' from syslogins where name like '%${SHORTNAME}%'" > /tmp/t echo "go" >> /tmp/t isql -Usa -Scli42a -i/tmp/t -P${SA_PASSWORD} | tee /tmp/t.out isql -Usa -Scli42b -i/tmp/t -P${SA_PASSWORD} | tee -a /tmp/t.out isql -Usa -Scli44a -i/tmp/t -P${SA_PASSWORD} | tee -a /tmp/t.out isql -Usa -Scli45a -i/tmp/t -P${SA_PASSWORD} | tee -a /tmp/t.out isql -Usa -Scli46a -i/tmp/t -P${SA_PASSWORD} | tee -a /tmp/t.out isql -Usa -Scli47a -i/tmp/t -P${SA_PASSWORD} | tee -a /tmp/t.out isql -Usa -Scli47b -i/tmp/t -P${SA_PASSWORD} | tee -a /tmp/t.out isql -Usa -Scli48a -i/tmp/t -P${SA_PASSWORD} | tee -a /tmp/t.out isql -Usa -Scli48b -i/tmp/t -P${SA_PASSWORD} | tee -a /tmp/t.out DATASERVERS=`cat /tmp/t.out | sed -e s/name//g | sed -e s/-//g | sed -e /.row./d` rm /tmp/t.out rm /tmp/t |

(Yes, I’d do things differently today, with the security concerns we have in today’s world.)

When I ran this script, which selects login and server name (and a #) from each of the nine servers, it returns a list in the DATASERVERS variable of all the logins and which server they are defined. (This is Korn shell on a Unix box running against Sybase servers.) In the script, isql does what you now do using sqlcmd. cli4xx is the name of the server, and the results are tee’d to both the console and to the t.out file. I used this script to find all the servers where a given login (SHORTNAME) was defined so that I could update or remove it.

The thing I’d like to point out is the line where I build the DATASERVERS variable. I’m taking the results from the queries that have been directed to the t.out file in the /tmp directory. Then I use the sed (stream editor) utility to filter out any occurrences of the string ‘name’, then the string ‘-‘, and then deleting any line that has the string ‘row’ prefixed or suffixed by 0 or more characters. (Try the command in SSMS with results to text, and the edits will make more sense.)

This method is the standard in Unix environments for piping the results of one utility into another. You have to ensure that the results of one are correctly formatted to be usable in the next utility in the pipeline.

PowerShell and .NET Objects

One of the first features I found amazing in PowerShell is the use of .NET objects for everything. What’s especially great is that I don’t have to use sed (or grep, or awk) to do all the formatting I as I did in Unix environments to get the same power when writing my scripts.

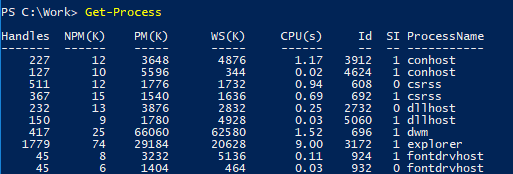

For example, if I want to see what’s running on my server, I run the Get-Process command:

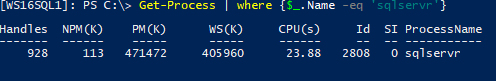

If I want to pull back a specific process name, I can pipe the results to the where-object cmdlet (aliased as just ‘where’), testing each member of the results to see if the Name property is equal to ‘sqlservr’.

|

1 |

Get-Process | where {$_.Name -eq 'sqlservr'} |

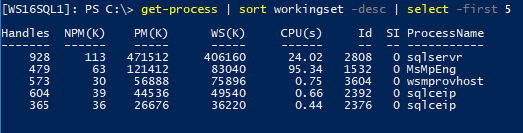

If I want to see the top five most memory-consuming processes, I can sort (sort-object) on the WorkingSet (WS) column in descending order, and then select (select-object) the first five.

|

1 |

Get-Process | sort workingset -desc | select -first 5 |

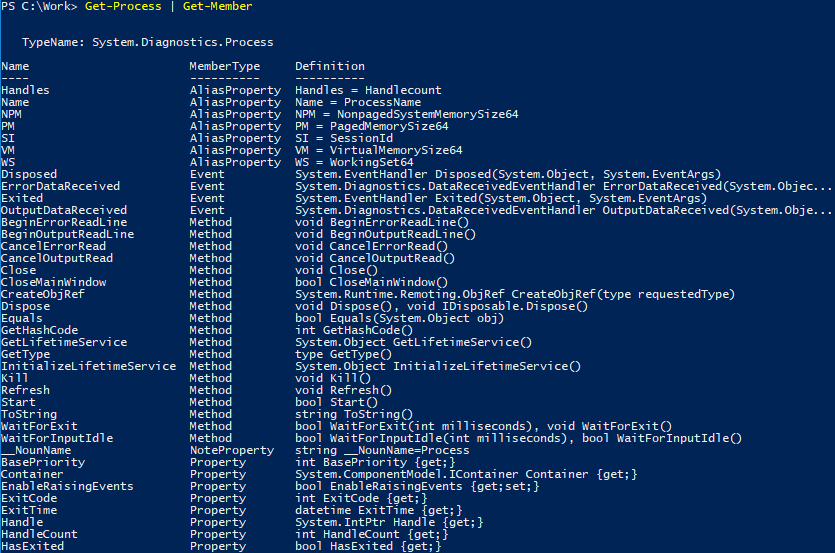

There’s no text manipulation here, as I had to do using the Korn shell 20 years ago. Objects contain properties, and I can select just the properties I want or can filter by specific (or wildcarded) values, as I need. I can discover what properties are available for a specific cmdlet by piping the command to Get-Member.

|

1 |

Get-Process | Get-Member |

As you can see, there are a lot of properties available in this object. Each object is defined by its type (in this case it’s a System.Diagnostics.Process object), and Get-Member returns the properties and methods for the object, as well as other MemberTypes.

As a consultant, I work with systems for multiple clients, and the work I do often requires that I be disconnected from the client systems. This is where PowerShell’s special CLIXML (Common Language Infrastructure XML) type comes into play.

PowerShell Object Serialization

When you move data from one computer to another in PowerShell, it does so by serializing the object – exporting to another form – into XML, usually. There are a lot of options for exporting data out of PowerShell. There’s Export-Csv, and there’s a number of options using the ConvertTo verb, including ConvertTo-Html, ConvertTo-Json, and ConvertTo-Xml. Each of these cmdlets serialize the data in the objects in different ways, but most of them strip out most of the object features. Let’s look at an example.

|

1 2 |

$sqlps = Get-Process | where {$_.Name -eq 'sqlservr'} $sqlps | ConvertTo-Xml -as String | Set-Content -path c:\work\SQLProcessXML.xml |

The results show a standard XML file with values for the individual properties.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 |

<?xml version="1.0" encoding="utf-8"?> <Objects> <Object Type="System.Diagnostics.Process"> <Property Name="Name" Type="System.String">sqlservr</Property> <Property Name="SI" Type="System.Int32">0</Property> <Property Name="Handles" Type="System.Int32">929</Property> <Property Name="VM" Type="System.Int64">7689175040</Property> <Property Name="WS" Type="System.Int64">418717696</Property> <Property Name="PM" Type="System.Int64">482795520</Property> <Property Name="NPM" Type="System.Int64">115392</Property> <Property Name="Path" Type="System.String">C:\Program Files\Microsoft SQL Server\MSSQL14.MSSQLSERVER\MSSQL\Binn\sqlservr.exe</Property> <Property Name="Company" Type="System.String">Microsoft Corporation</Property> <Property Name="CPU" Type="System.Double">27.03125</Property> <Property Name="FileVersion" Type="System.String">2017.0140.1000.169 ((SQLServer).170822-2340)</Property> <Property Name="ProductVersion" Type="System.String">14.0.1000.169</Property> <Property Name="Description" Type="System.String">SQL Server Windows NT - 64 Bit</Property> … |

CLIXML is structured differently, however, and contains a lot more information. You can also use the Export-Clixml command to export it.

|

1 |

$sqlps | Export-Clixml c:\work\SQLProcessCLIXML.xml |

The output file looks like this:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 61 62 63 64 65 66 67 |

<Objs Version="1.1.0.1" xmlns="http://schemas.microsoft.com/powershell/2004/04"> <Obj RefId="0"> <TN RefId="0"> <T>System.Diagnostics.Process</T> <T>System.ComponentModel.Component</T> <T>System.MarshalByRefObject</T> <T>System.Object</T> </TN> <ToString>System.Diagnostics.Process (sqlservr)</ToString> <Props> <I32 N="BasePriority">8</I32> <B N="HasExited">false</B> <Obj N="Handle" RefId="1"> <TN RefId="1"> <T>System.IntPtr</T> <T>System.ValueType</T> <T>System.Object</T> </TN> <ToString>7088</ToString> </Obj> <Obj N="SafeHandle" RefId="2"> <TN RefId="2"> <T>Microsoft.Win32.SafeHandles.SafeProcessHandle</T> <T>Microsoft.Win32.SafeHandles.SafeHandleZeroOrMinusOneIsInvalid</T> <T>System.Runtime.InteropServices.SafeHandle</T> <T>System.Runtime.ConstrainedExecution.CriticalFinalizerObject</T> <T>System.Object</T> </TN> <ToString>Microsoft.Win32.SafeHandles.SafeProcessHandle</ToString> <Props> <B N="IsInvalid">false</B> <B N="IsClosed">false</B> </Props> </Obj> <I32 N="HandleCount">929</I32> <I32 N="Id">2808</I32> <S N="MachineName">.</S> <Obj N="MainWindowHandle" RefId="3"> <TNRef RefId="1" /> <ToString>0</ToString> </Obj> <S N="MainWindowTitle"></S> <Obj N="MainModule" RefId="4"> <TN RefId="3"> <T>System.Diagnostics.ProcessModule</T> <T>System.ComponentModel.Component</T> <T>System.MarshalByRefObject</T> <T>System.Object</T> </TN> <ToString>System.Diagnostics.ProcessModule (sqlservr.exe)</ToString> <Props> <S N="ModuleName">sqlservr.exe</S> <S N="FileName">C:\Program Files\Microsoft SQL Server\MSSQL14.MSSQLSERVER\MSSQL\Binn\sqlservr.exe</S> <S N="BaseAddress">140702702632960</S> <I32 N="ModuleMemorySize">516096</I32> <S N="EntryPointAddress">140702702798896</S> <S N="FileVersionInfo">File: C:\Program Files\Microsoft SQL Server\MSSQL14.MSSQLSERVER\MSSQL\Binn\sqlservr.exe_x000D__x000A_InternalName: SQLSERVR_x000D__x000A_OriginalFilename: SQLSERVR.EXE_x000D__x000A_FileVersion: 2017.0140.1000.169 ((SQLServer).170822-2340)_x000D__x000A_FileDescription: SQL Server Windows NT - 64 Bit_x000D__x000A_Product: Microsoft SQL Server_x000D__x000A_ProductVersion: 14.0.1000.169_x000D__x000A_Debug: False_x000D__x000A_Patched: False_x000D__x000A_PreRelease: False_x000D__x000A_PrivateBuild: False_x000D__x000A_SpecialBuild: False_x000D__x000A_Language: English (United States)_x000D__x000A_</S> <Nil N="Site" /> <Nil N="Container" /> </Props> <MS> <I32 N="Size">504</I32> <S N="Company">Microsoft Corporation</S> <S N="FileVersion">2017.0140.1000.169 ((SQLServer).170822-2340)</S> <S N="ProductVersion">14.0.1000.169</S> <S N="Description">SQL Server Windows NT - 64 Bit</S> … |

As you can see, things are quite different.

The standard XML file contains the information in the object, but much like you’d do when you put it into a report. The CLIXML file, on the other hand, contains the entire object structure, including the object definitions of the properties within the objects. Because of this, you can import the CLIXML file, using the Import-Clixml cmdlet, and have the complete object in the variable you assign the import to.

As an example, you can use the Get-Content cmdlet to import the data into an XML variable.

|

1 |

[xml]$ps = Get-Content c:\work\SQLProcessXML.xml |

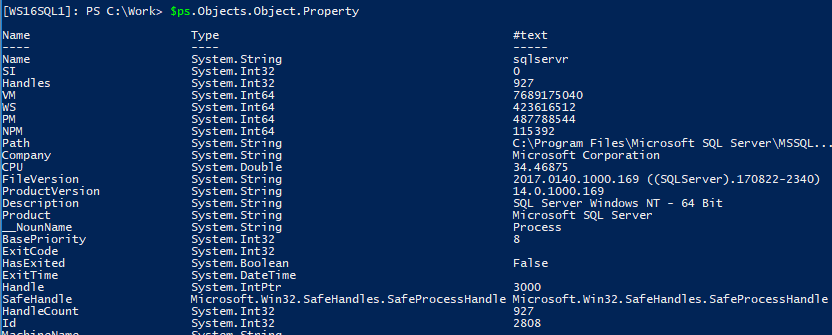

You can then navigate down to the Property elements to retrieve the values. In PowerShell, it’s fairly easy to navigate an XML object by using dots (‘.’) to separate the XML elements.

|

1 |

$ps.Objects.Object.Property |

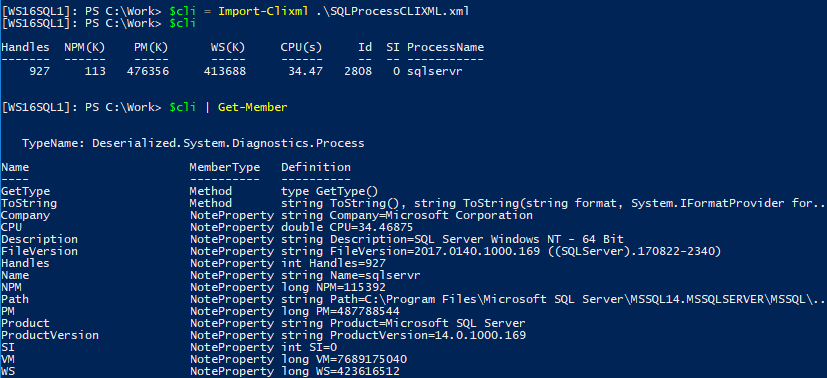

On the other hand, with CLIXML, you can use the Import-Clixml cmdlet to retrieve the original object.

|

1 |

$cli = Import-Clixml e:\work\SQLProcessCLIXML.xml |

Then, the full depth of the original object is returned.

|

1 2 |

$cli $cli | Get-Member |

As you can see, the CLIXML object retains the original object type. Note that it’s been deserialized, so you have all the data in the original object. You can access the object members as you normally would, but the original methods and nested properties are excluded. The important thing to consider is that if you’re planning on using the data in another application, you probably want to use a format appropriate to that application, like JSON, XML, CSV, etc. If, on the other hand, if you’re going to use that data in another PowerShell session, then CLIXML is the format you should use.

Object Portability

The benefit I principally get from CLIXML is the ability to capture data at client systems, then port those objects to my own systems for later analysis.

One of the first things I do when starting with a new client is gather performance metrics using the Get-Counter cmdlet. I’ve got a short script that I use to define the servers I need counters from, the counters I want, and it then uses Invoke-Command to execute the script locally on each of the defined servers.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 |

$srvrs = 'WS16SQL1','WS16SQL2','WS16SQL3' Invoke-Command -ComputerName $srvrs -ScriptBlock { $srv = $env:COMPUTERNAME $iname = 'sqlserver' $dtstr = (get-date).ToString('yyyyMddHHmm') $counters = @( "\Processor(_Total)\% Processor Time", "\Memory\Available MBytes", "\Paging File(_Total)\% Usage", "\PhysicalDisk(_Total)\Avg. Disk sec/Read", "\PhysicalDisk(_Total)\Avg. Disk sec/Write", "\System\Processor Queue Length", "\$($iname):Access Methods\Forwarded Records/sec", "\$($iname):Access Methods\Page Splits/sec", "\$($iname):Buffer Manager\Buffer cache hit ratio", "\$($iname):Buffer Manager\Page life expectancy", "\$($iname):Databases(_Total)\Log Growths", "\$($iname):General Statistics\Processes blocked", "\$($iname):SQL Statistics\Batch Requests/sec", "\$($iname):SQL Statistics\SQL Compilations/sec", "\$($iname):SQL Statistics\SQL Re-Compilations/sec" ) # Get performance counter data $ctr = Get-Counter -ComputerName $srv -Counter $counters -SampleInterval 60 -MaxSamples 1440 $ctr | Export-Clixml "c:\\work\\$($srv)_counters_$($dtstr).xml" } |

I load the names of the servers into the $srvrs variable and use that to tell Invoke-Command which servers to run the script on. In PowerShell, any executable set of commands, within a pair of braces ({ and }), is called a ScriptBlock. The commands within the ScriptBlock get the local computer name and set the date string to the current date and time. It then defines the individual counters to be gathered into a hash table called $counters. The $iname variable contains the instance name to be used in the counters. In most environments, companies use the default instance, and so I’ve coded this making that assumption.

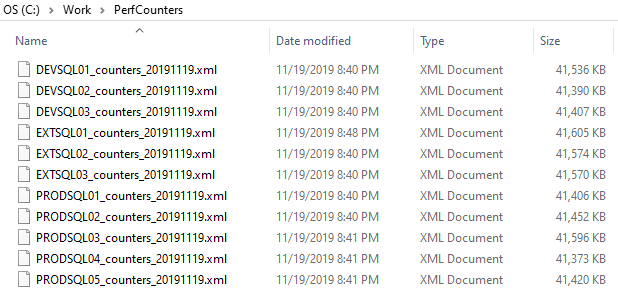

Once the variables needed for Get-Counter are set, I call Get-Counter, specifying a sample interval of 60 seconds and a maximum number of samples at 1440. Essentially, I’m gathering the counters once a minute, for 24 hours. (If you want to try out the script in a shorter time period, just modify those values.) When the Get-Counter cmdlet completes 24 hours later, it assigns the collection of counter values to the $ctr variable. It then pipes that variable to Export-Clixml, which writes the collection to a file in the local work directory with the server name and the date and time the process started in the file name.

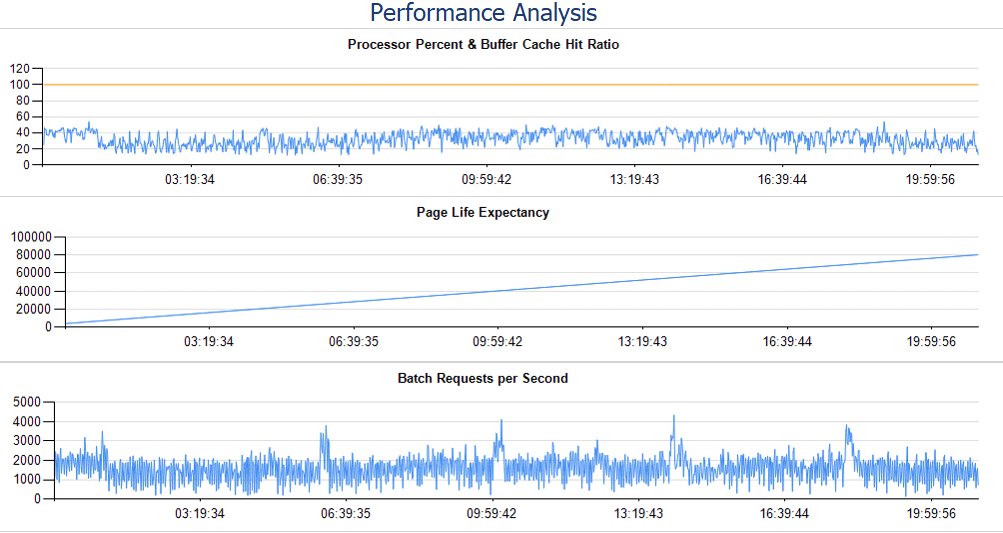

I collect these files and copy them locally to my own machine where I have a second script that imports the CLIXML files and loads the counter values into a database table. I then have reports that read that data and produce reports that show the counters in both graphical and tabular values.

Without installing anything at my client site, I’m able to gather important performance metrics and build a performance baseline against which I can evaluate any changes I make going forward. If you would like to use these reports yourself, you can download the schema and report definitions here.

SQL Power Doc

Kendal Van Dyke (@sqldba) wrote a set of PowerShell modules and scripts that, in two separate steps, collect Windows and SQL Server configuration data, and convert that data into three separate spreadsheets. One contains all the Windows operating system and server configuration data. A second workbook contains SQL Server instance, database, and Agent configuration data. A third workbook provides an analysis of the configuration data and provides an assessment of that configuration against known best practices, with links to the Microsoft documentation describing the best practice descriptions.

There’s a single download zip file you can get from GitHub and, in that file, are the modules, the scripts you need to run, and text documents explaining how to run the scripts. (Note that running the scripts requires local admin rights on each of the servers from which data is collected. This is due to the breadth of information the scripts collect, which use a combination of Windows Management Instrumentation (WMI) and SQL Server Server Management Objects (SMO) to gather its data.)

The first step of the process uses one of the “Get” scripts. I almost always use the Get-SqlServerInventoryToClixml.ps1 script. This script then searches the computers provided in the -ComputerName argument and gathers the configuration data. If I have the necessary privileges, I run this from a workstation in my client environment, and if I don’t, I ask someone who does to run it for me. It doesn’t change anything; it just collects data.

The file it produces is a CLIXML file, compressed using gzip, thus the gz extension.

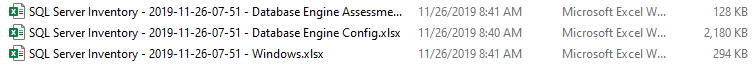

![]()

I then copy the gz file to my local machine and run the second script – Convert-SqlServerInventoryClixmlToExcel.ps1, which produces the spreadsheets I described earlier.

Caveat

I’ve run into many cases where an object I’m working with is not serializable. For example, using the brilliant scripts provided by the dbatools project, I’ve found that some of the objects I’ve retrieved are serializable (Get-DbaComputerSystem, Get-DbaDiskSpace) where others are not (Get-DbaLogin). When they are not, PowerShell throws an error.

Experiment with serialization and the CLIXML file format. You’ll find the right combination of commands that will meet your requirements.

Summary

Over the years, I’ve been successful using scripting to automate the processes of administering databases. PowerShell has been a tremendous step forward from when I started with Korn shell. It’s also important to localize your work, so you don’t use resources on server systems unnecessarily. It’s in this case, where the power of CLIXML comes in handy, to move objects from the servers that have other work to do to your local machine, where you can continue the work without using the server resources.

Load comments