PowerShell provides two main ways to accept parameters in a script: unnamed arguments using the $args array, and named parameters using the Param() block. Named parameters are the recommended approach because they provide type safety, built-in help messages, mandatory enforcement, and default values – making scripts more readable and portable across environments. This guide walks through both methods with working examples you can run immediately.

Introduction

Recently I had a client ask me to update a script in both production and UAT. He wanted any emails sent out to include the name of the environment. It was a simple request, and I supplied a simple solution. I just created a new variable:

    $envname = "UAT"

After updating the script for the production environment, I then modified the subject line for any outgoing emails to include the new variable.

At the time though, I wanted to do this in a better way, and not just for this variable, but also for the others I use in the script. When I wrote this script, it was early in my days of writing PowerShell, so I simply hardcoded variables into it. It soon became apparent that this was less than optimal when I needed to move a script from Dev\UAT into production because certain variables would need to be updated between the environments.

Fortunately, like most languages, PowerShell permits the use of parameters, but, like many things in PowerShell, there’s more than one way of doing it. I will show you how to do it in two different ways and discuss why I prefer one method over the other.

Let’s Have an Argument

The first and arguably (see what I did there) the easiest way to get command line arguments is to write something like the following:

    $param1 = $args[0]
    Write-Host $param1

If you run this from within the PowerShell ISE by pressing F5, nothing interesting will happen. This is a case where you will need to run the saved file from the ISE Console and supply a value for the argument.

To make sure PowerShell executes what you want, navigate in the command line to the same directory where you will save your scripts. Name the script Unnamed_Arguments_Example_1.ps1 and run it with the argument FOO. It will echo back FOO. (The scripts for this article can be found here.)

    .\Unnamed_Arguments_Example_1.ps1 FOO

You’ve probably already guessed that since $args is an array, you can access multiple values from the command line.

Save the following script as Unnamed_Arguments_Example_2.ps1.

|

1 2 3 |

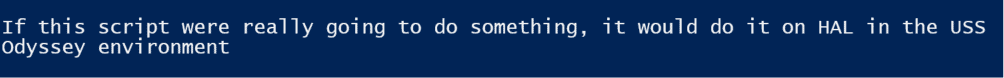

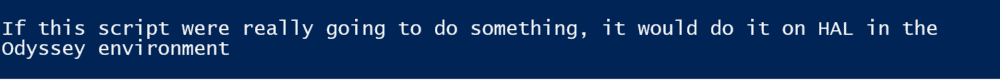

$servername=$args[0] $envname=$args[1] write-host "If this script were really going to do something, it would do it on $servername in the $envname environment" |

Run it as follows:

    .\Unnamed_Arguments_Example_2.ps1 HAL Odyssey

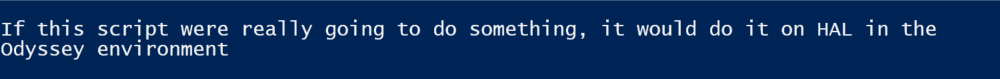

You should see:

    If this script were really going to do something, it would do it on HAL in the Odyssey environment

One nice ability of reading the arguments this way is that you can pass in an arbitrary number of arguments if you need to. Save the following example as Unnamed_Arguments_Example_3.ps1

    Write-Host "There are a total of $($args.Count) arguments"
    for ($i = 0; $i -lt $args.Count; $i++) {
        Write-Host "Argument $i is $($args[$i])"
    }

If you call it as follows:

    .\Unnamed_Arguments_Example_3.ps1 foo bar baz

You should get:

    There are a total of 3 arguments
    Argument 0 is foo
    Argument 1 is bar
    Argument 2 is baz

The method works, but I would argue that it’s not ideal. For one thing, you can accidentally pass in parameters in the wrong order. For another, it doesn’t provide the user with any useful feedback. I will outline the preferred method below.

Using Named Parameters

Copy the following script and save it as Named_Parameters_Example_1.ps1.

    param ($param1)
    Write-Host $param1

Then run it.

    .\Named_Parameters_Example_1.ps1

When you run it like this, nothing will happen.

But now enter:

    .\Named_Parameters_Example_1.ps1 test

And you will see your script echo back the word test.

This is what you might expect, but say you had multiple parameters and wanted to make sure you had assigned the right value to each one. You might have trouble remembering their names and perhaps their order. But that’s OK; PowerShell is smart. Type in the same command as above but add a dash (-) at the end.

    .\Named_Parameters_Example_1.ps1 -

PowerShell should now pop up a little dropdown that shows you the available parameters. In this case, you only have the one parameter, param1. Hit tab to autocomplete and enter the word test or any other word you want, and you should see something similar to:

    .\Named_Parameters_Example_1.ps1 -param1 test

Now if you hit enter, you will again see the word test echoed.

If you run the script from directly inside PowerShell itself, as opposed to the ISE, tab completion will still show you the available parameters, but will not pop them up in a nice little display.

Create and save the following script as Named_Parameters_Example_2.ps1.

|

1 2 |

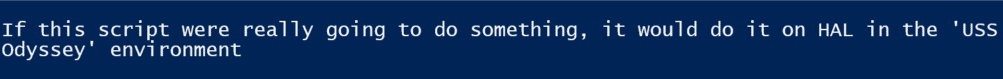

param ($servername, $envname) write-host "If this script were really going to do something, it would do it on $servername in the $envname environment" |

Note that you now have two parameters.

By default, PowerShell binds values that are passed without a name by position, in the order the parameters are declared in the param block. This means the following will work:

    .\Named_Parameters_Example_2.ps1 HAL Odyssey

The result will be:

    If this script were really going to do something, it would do it on HAL in the Odyssey environment

Here’s where the beauty of named parameters shines. Besides not having to remember what parameters the script may need, you don’t have to worry about the order in which they’re entered.

    .\Named_Parameters_Example_2.ps1 -envname Odyssey -servername HAL

This will result in the exact same output as above, which is what you should expect:

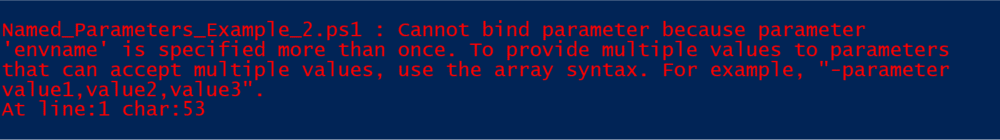

If you used tab completion to enter the parameter names, you will notice that once you've entered a value for one of the parameters (such as -envname above), tab completion for the next parameter offers only the remaining ones. In other words, PowerShell won't let you enter the same parameter twice if you use tab completion.

If you do force the same parameter name twice, PowerShell will refuse to run the script and report that it cannot bind the parameter because it is specified more than once.

One question that probably comes to mind at this point is how you would handle a parameter with a space in it. For example, how would you enter a file path like C:\path to file\File.ext?

The answer is simple; you can wrap the parameter in quotes:

    .\Named_Parameters_Example_2.ps1 -servername HAL -envname 'USS Odyssey'

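The code will result in:

    If this script were really going to do something, it would do it on HAL in the USS Odyssey environment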

With the flexibility of PowerShell and quoting, you can do something like:

    .\Named_Parameters_Example_2.ps1 -servername HAL -envname "'USS Odyssey'"

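You’ll see this message back:

    If this script were really going to do something, it would do it on HAL in the 'USS Odyssey' environment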

If you experiment with entering different values into the scripts above, you’ll notice that it doesn’t care if you type in a string or a number or pretty much anything you want. This may be a problem if you need to control the type of data the user is entering.

This leads to typing of your parameter variables. I generally do not do this for variables within PowerShell scripts themselves (because in most cases I’m controlling how those variables are being used), but I almost always ensure typing of my parameter variables so I can have some validation over my input.

Consider why this may be important. Save the following script as Named_Parameters_Example_3.ps1

|

1 2 3 |

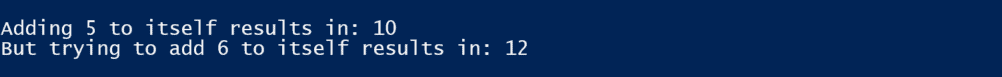

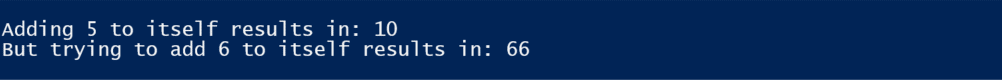

param ([int] $anInt, $maybeanInt) write-host "Adding $anint to itself results in: $($anInt + $anInt)" write-host "But trying to add $maybeanInt to itself results in: $($maybeanInt + $maybeanInt)" |

Now run it as follows:

    .\Named_Parameters_Example_3.ps1 -anInt 5 -maybeanInt 6

You will get the results you expect:

    Adding 5 to itself results in: 10
    But trying to add 6 to itself results in: 12

What if you don’t control the data being passed, and the passing program passes items in quoted strings?

To simulate that run the script with a slight modification:

    .\Named_Parameters_Example_3.ps1 -anInt "5" -maybeanInt "6"

This will result in:

    Adding 5 to itself results in: 10
    But trying to add 6 to itself results in: 66

If you instead declare $maybeanInt as an [int], as you did with $anInt, you can ensure the two values get added together rather than concatenated.
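As a minimal sketch of that change, the param block below types both parameters. It is wrapped in a function (the name Add-TypedValues is hypothetical, not one of the article's scripts) only so it can be pasted straight into a console; the same param line would work at the top of a script file.

```powershell
# Hypothetical wrapper function; the param block is what matters here.
function Add-TypedValues {
    param ([int] $anInt, [int] $maybeanInt)

    # Both values are integers by the time the body runs, so + means addition.
    "Adding $anInt to itself results in: $($anInt + $anInt)"
    "Adding $maybeanInt to itself results in: $($maybeanInt + $maybeanInt)"
}

# Quoted values are converted to integers during parameter binding.
Add-TypedValues -anInt "5" -maybeanInt "6"
```

With both parameters typed, the second line reports 12 rather than 66.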

However, keep in mind if someone tries to call the same script with an actual string such as:

    .\Named_Parameters_Example_3.ps1 Foo 6

It will return a gross error message, because PowerShell cannot convert the value Foo to an integer, so this can be a double-edged sword.
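One possible way to soften that error, sketched here on the assumption that a warning is preferable to a hard failure, is to leave the parameter untyped and test the value yourself with [int]::TryParse. The function name Add-IfInteger and the wording of the warning are hypothetical and not part of the article's scripts.

```powershell
# Hypothetical helper: accepts any value, then checks whether it is a whole number.
function Add-IfInteger {
    param ($value)

    $parsed = 0
    # TryParse returns $false rather than throwing when the text is not an integer.
    if ([int]::TryParse([string] $value, [ref] $parsed)) {
        return $parsed + $parsed
    }

    Write-Warning "'$value' is not a whole number, so nothing was added."
}

Add-IfInteger "6"      # returns 12
Add-IfInteger "Foo"    # writes a warning instead of raising an error
```

The trade-off is that you give up the automatic validation a typed parameter provides and have to decide for yourself what the script should do with bad input.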

Using Defaults

When running a script, I prefer to make it require as little typing as possible and to eliminate errors where I can. This means that I try to use defaults.

Modify the Named_Parameters_Example_2.ps1 script as follows and save it as Named_Parameters_Example_4.ps1

    param ($servername, $envname='Odyssey')
    Write-Host "If this script were really going to do something, it would do it on $servername in the $envname environment"

And then run it as follows:

    .\Named_Parameters_Example_4.ps1 -servername HAL

Do not bother to enter the environment name. You should get:

    If this script were really going to do something, it would do it on HAL in the Odyssey environment

This isn’t much savings in typing but does make it a bit easier and does mean that you don’t have to remember how to spell Odyssey!

There may also be cases where you don’t want a default parameter, but you absolutely want to make sure a value is entered. You can do this by testing to see if the parameter is null and then prompting the user for input.

Save the following script as Named_Parameters_Example_5.ps1.

    param ($servername, $envname='Odyssey')
    # $null goes on the left so the test still behaves if an array is passed in
    if ($null -eq $servername) {
        $servername = Read-Host -Prompt "Please enter a servername"
    }
    Write-Host "If this script were really going to do something, it would do it on $servername in the $envname environment"

You will notice that this combines both techniques: a default value for $envname, and a test to see whether $servername is null that prompts the user for a value if it is.

You can run this from the command line in multiple ways:

    .\Named_Parameters_Example_5.ps1 -servername HAL

It will do exactly what you think: use the passed in servername value of HAL and the default environment of Odyssey.

But you could also run it as:

    .\Named_Parameters_Example_5.ps1 -envname Discovery

In this case, it will override the default environment with Discovery and prompt the user for the server name. To me, this is the best of both worlds.

There is another way of ensuring your users enter a parameter when it’s mandatory.

Save the following as Named_Parameters_Example_6.ps1

    param ([Parameter(Mandatory)]$servername, $envname='Odyssey')
    Write-Host "If this script were really going to do something, it would do it on $servername in the $envname environment"

and run it as follows:

    .\Named_Parameters_Example_6.ps1

You’ll notice it forces you to enter the servername because you made that mandatory, but it still used the default environment name of Odyssey.

You can still enter the parameter on the command line too:

    .\Named_Parameters_Example_6.ps1 -servername HAL -envname Discovery

And PowerShell won’t prompt for the servername since it’s already there.
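The Parameter attribute can also carry a HelpMessage, which PowerShell displays if the user types !? at the mandatory prompt. The sketch below combines that with a typed parameter and a default. It is written as a function so it can be run directly in a console; the function name Show-Target and the help text are hypothetical.

```powershell
# Hypothetical function; the same param block could sit at the top of a script file.
function Show-Target {
    param (
        # Shown when the user types !? at the prompt for the missing value.
        [Parameter(Mandatory, HelpMessage = 'Name of the server to act on')]
        [string] $servername,

        [string] $envname = 'Odyssey'
    )

    "If this script were really going to do something, it would do it on $servername in the $envname environment"
}

# Supplying the mandatory value up front avoids the prompt entirely.
Show-Target -servername HAL
```

Calling Show-Target with no arguments in an interactive session would prompt for servername, just as the script above does.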

Using an Unknown Number of Arguments

Generally, I find named parameters far superior to merely reading the arguments from the command line. One area where reading the arguments is a tad easier is when you need to handle an unknown number of arguments.

For example, save the following script as Unnamed_Arguments_Example_4.ps1

    Write-Host "There are a total of $($args.Count) arguments"
    for ($i = 0; $i -lt $args.Count; $i++) {
        $diskdata = Get-PSDrive $args[$i] | Select-Object Used,Free
        Write-Host "$($args[$i]) has $($diskdata.Used) Used and $($diskdata.Free) free"
    }

Then call it as follows:

    .\Unnamed_Arguments_Example_4.ps1 C D E

You will get back results for the amount of space free on the drive letters you list. As you can see, you can enter as many drive letters as you want.

One attempt to write this using named parameters might look like:

    param($drive1, $drive2, $drive3)

    $diskdata = Get-PSDrive $drive1 | Select-Object Used,Free
    Write-Host "$($drive1) has $($diskdata.Used) Used and $($diskdata.Free) free"
    if ($drive2 -ne $null) {
        $diskdata = Get-PSDrive $drive2 | Select-Object Used,Free
        Write-Host "$($drive2) has $($diskdata.Used) Used and $($diskdata.Free) free"
        if ($drive3 -ne $null) {
            $diskdata = Get-PSDrive $drive3 | Select-Object Used,Free
            Write-Host "$($drive3) has $($diskdata.Used) Used and $($diskdata.Free) free"
        }
        else { return }
    }
    else { return } # don't bother testing for drive3 since we didn't even have drive 3

As you can see, that gets ugly fairly quickly as you would have to handle up to 26 drive letters.

Fortunately, there’s a better way to handle this using named parameters. Save the following as Named_Parameters_Example_7.ps1

    param($drives)
    foreach ($drive in $drives) {
        $diskdata = Get-PSDrive $drive | Select-Object Used,Free
        Write-Host "$($drive) has $($diskdata.Used) Used and $($diskdata.Free) free"
    }

If you want to check the space on a single drive, then you call this as you would expect:

    .\Named_Parameters_Example_7.ps1 C

On the other hand, if you want to test multiple drives, you can pass an array of strings.

This can be done one of two ways:

    .\Named_Parameters_Example_7.ps1 C,D,E

Note that there are commas separating the drive letters, not spaces. This lets PowerShell know that this is all one parameter. (An interesting side note: if you do put a space after the comma, it will still treat the list of drive letters as a single parameter; the comma basically eats the space.)

If you want to be a bit more explicit in what you’re doing, you can also pass the values in as an array:

    .\Named_Parameters_Example_7.ps1 @("C","D","E")

Note that in this case, you do have to qualify the drive letters as strings by using quotes around them.
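If you also want the parameter itself to declare that it takes a list, one option is to type it as a string array. The sketch below is an illustration only: the function name Show-Drives is hypothetical, and it simply reports what it received rather than calling Get-PSDrive, so it runs the same way on any machine regardless of which drives exist.

```powershell
# Hypothetical function illustrating a typed array parameter.
function Show-Drives {
    param ([string[]] $drives)

    # A single value is wrapped into a one-element array during binding.
    "Received $($drives.Count) drive name(s)"
    foreach ($drive in $drives) {
        "Would check drive $drive"
    }
}

Show-Drives C                 # one element
Show-Drives C,D,E             # three elements, comma-separated
Show-Drives @("C","D","E")    # three elements, explicit array
```

Because the parameter is typed as [string[]], the loop always sees an array of strings, whichever calling style is used.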

Conclusion

Hopefully, this article has given you some insight into the two methods of passing variables to PowerShell scripts. This ability, combined with the ability to read JSON files covered in a previous article, should give you a great deal of power to control what your scripts do and how they operate. And now I have a script to rewrite!

FAQs: How to Use Parameters in PowerShell

1. What is a parameter in PowerShell?

A parameter in PowerShell lets you pass values into scripts or functions so they behave dynamically instead of using hard‑coded data. Parameters improve flexibility and reuse.

2. How do I define a parameter in a PowerShell script?

You define parameters with the param() block at the top of your script, listing each variable you want to accept.

3. What’s the difference between unnamed and named parameters in PowerShell?

Unnamed parameters come from the $args array and rely on position, while named parameters are declared in a param() block and can be passed in any order with the -parameterName value syntax.

4. Can PowerShell parameters have default values?

Yes – you can set default values in the param() block so a parameter uses the default if no value is provided.

5. How do I make a parameter required?

Use the [Parameter(Mandatory)] attribute in the param() declaration to force the user to supply a value before the script runs.

6. Can I accept multiple values with a single parameter in PowerShell?

Yes – you can define a parameter to accept an array of values, letting users pass multiple entries at once.

7. Why should I use parameters instead of hard‑coding values?

Using parameters makes your scripts easier to maintain, more reusable, and less error-prone when moving between environments like Dev, UAT, and Prod. The same principle scales directly into cloud workflows, as shown in PowerShell functions for reusability in Azure.
