The series so far:

- DevOps and application delivery

- DevOps application delivery pipeline

- Database DevOps

- DevOps security privacy and compliance

- DevOps Database Delivery

- 10 Guidelines for Implementing DevOps

DevOps is both a mindset and a software development methodology. As a mindset, DevOps is about communication, collaboration, and information sharing. As a methodology, it’s about merging development and operations into a unified, integrated effort. At the heart of the DevOps methodology is the application delivery pipeline, which drives the development and deployment processes forward throughout the application lifecycle.

This article is the second in a series about DevOps. In the first article, I introduced you to many of the basic concepts typically associated with DevOps, including a brief discussion about the DevOps process flow. In this article, I continue that discussion with an explanation of the technologies that make up the application delivery pipeline—the underlying structure that supports this flow.

Introducing the Application Delivery Pipeline

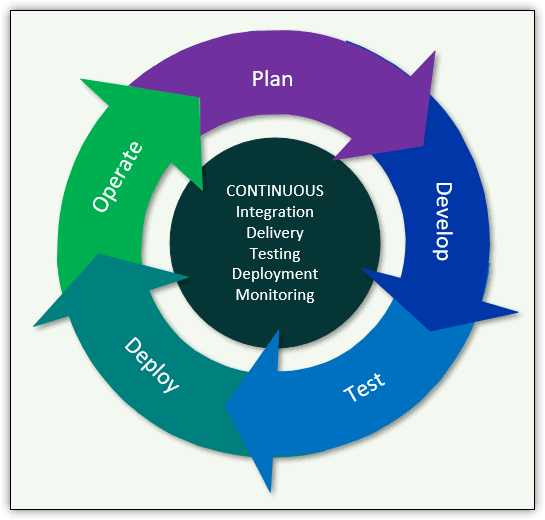

DevOps unifies the application delivery process into a continuous flow that incorporates planning, development, testing, deployment and operations, with the goal of delivering applications more quickly and easily. Figure 1 provides an overview of this delivery flow and its emphasis on continuous, integrated services.

Figure 1. The continuous services of the DevOps methodology

The continuous services (integration, delivery, testing, deployment, and monitoring) are essential to the DevOps process flow. Before I go further with this discussion, however, I should point out that documentation about DevOps practices and technologies often varies from one source to the next, leading to confusion about specific terms and variations in the process flow. Despite these differences, the underlying principles remain the same: development and operations coming together to optimize, speed up, and otherwise improve application delivery.

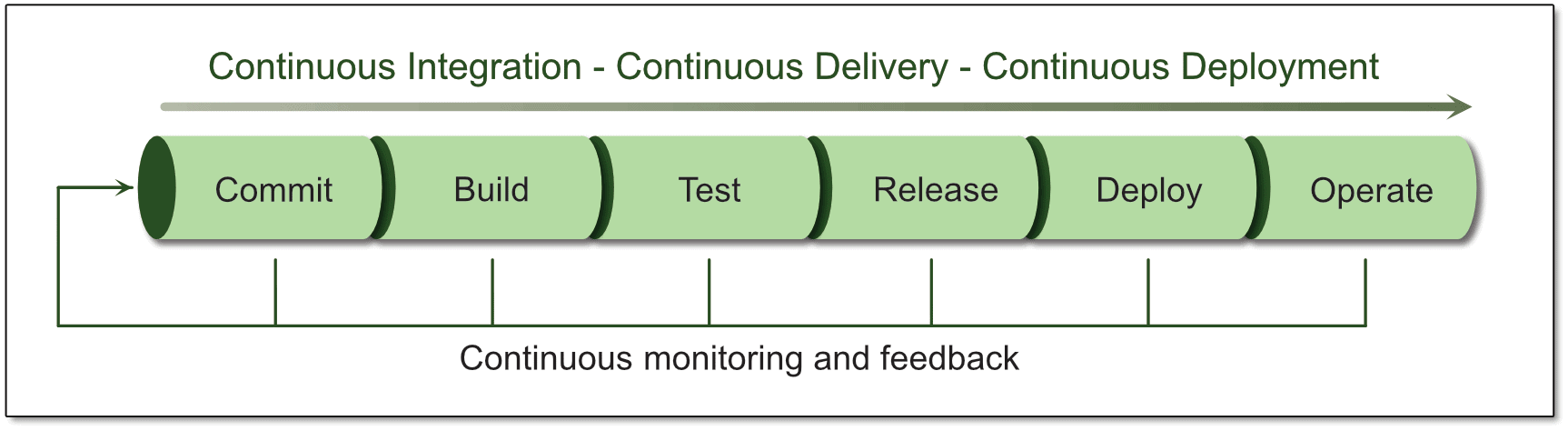

With this in mind, consider the application delivery pipeline that drives the DevOps process flow. One way to represent the pipeline is to break it down into six distinct stages—Commit, Build, Test, Release, Deploy, and Operate. The pipeline begins with code being committed to a source control repository and ends with the application being maintained in production, as shown in Figure 2. Notice that beneath the pipeline is a monitoring and feedback loop that ties the six stages together and keeps application delivery moving forward while supporting an ongoing, iterative process for regularly improving the application.

Figure 2. The DevOps application delivery pipeline

You’ll find plenty of variations of how the pipeline is represented, but the idea is the same: to show a continuous process that incorporates the full application lifecycle—from developing the applications to operating them in production. What the figure does not reflect is the underlying automation that makes the process flow possible, although it’s an integral part of the entire operation.
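As a rough illustration of the six stages and the feedback loop beneath them, here is a minimal Python sketch. Only the stage names come from the figure; the `run_pipeline` function and the idea of each stage being a callable that returns success or failure are assumptions made for illustration, not how any particular DevOps tool works.

```python
from typing import Callable, Dict, List, Tuple

# The six stage names come from the article; everything else is illustrative.
STAGES = ["Commit", "Build", "Test", "Release", "Deploy", "Operate"]


def run_pipeline(
    stage_actions: Dict[str, Callable[[], bool]],
) -> Tuple[List[str], List[str]]:
    """Run the stages in order, stopping at the first failure.

    Returns (completed_stages, feedback). The feedback list stands in for
    the monitoring and feedback loop that spans every stage.
    """
    completed: List[str] = []
    feedback: List[str] = []
    for stage in STAGES:
        # Hypothetical default: a stage with no action simply succeeds.
        action = stage_actions.get(stage, lambda: True)
        ok = action()
        feedback.append(f"{stage}: {'ok' if ok else 'failed'}")
        if not ok:
            break  # later stages never run for a failed change
        completed.append(stage)
    return completed, feedback
```

Calling `run_pipeline({"Test": lambda: False})` returns `(["Commit", "Build"], ["Commit: ok", "Build: ok", "Test: failed"])`: the change never reaches Release, and the feedback records where it stopped.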

Also integral to the pipeline are the concepts of continuous integration (CI), continuous delivery (CD), and continuous deployment (CD). My use of the same acronym for both continuous delivery and continuous deployment is no accident. In fact, it points to one of the most common sources of confusion when it comes to the DevOps delivery pipeline.

You’ll often see the delivery process referred to as the CI/CD pipeline, with no clear indication whether CD stands for continuous delivery or continuous deployment. Although you can rely on CI referring to continuous integration in this context, it’s not always clear how CI differs from the two CDs, how the two CDs differ from each other, or how the three might be related. To complicate matters, the terms continuous delivery and continuous deployment are often used interchangeably, or one is used to represent the other.

To help bring clarity to this matter, I’ll start by dropping the use of acronyms and then give you my take on these terms, knowing full well you’re likely to stumble upon other interpretations.

I’ll start with continuous integration, which refers to the practice of automating application build and testing processes. After developers create or update code, they commit their changes to a source control repository. Each commit launches an automated build and testing operation that validates the code. (The automated tests typically include unit and integration tests.)

Developers can see the results of the build/testing process as soon as it has completed. In this way, individual developers can check in frequent and isolated changes and have those changes verified shortly after they commit the code. If any defects were introduced into the code, the developer knows immediately. At the same time, the source control system might roll back the changes committed to the central repository, depending on how continuous integration has been implemented.

With continuous integration, development teams can discover problems early in the development cycle, and the problems they do discover tend to be less complex and easier to resolve.
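To make the idea concrete, the sketch below shows the kind of check a continuous integration job might run after each commit, using Python's standard `unittest` module. The `apply_discount` function, its tests, and `run_ci_checks` are hypothetical names invented for this example; a real setup would have the CI server run the project's actual test suite.

```python
import unittest


def apply_discount(price: float, percent: float) -> float:
    """Hypothetical application code that a developer has just committed."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return round(price * (1 - percent / 100), 2)


class ApplyDiscountTest(unittest.TestCase):
    """Unit tests the CI job runs against every commit."""

    def test_typical_discount(self) -> None:
        self.assertEqual(apply_discount(200.0, 25), 150.0)

    def test_rejects_invalid_percent(self) -> None:
        with self.assertRaises(ValueError):
            apply_discount(100.0, 150)


def run_ci_checks() -> bool:
    """Run the suite and report pass/fail, as a CI job would after a commit."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(ApplyDiscountTest)
    result = unittest.TextTestRunner(verbosity=0).run(suite)
    return result.wasSuccessful()
```

If a later commit broke `apply_discount`, `run_ci_checks()` would return `False` and the developer would learn about the defect shortly after checking in the change.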

The CD Conundrum

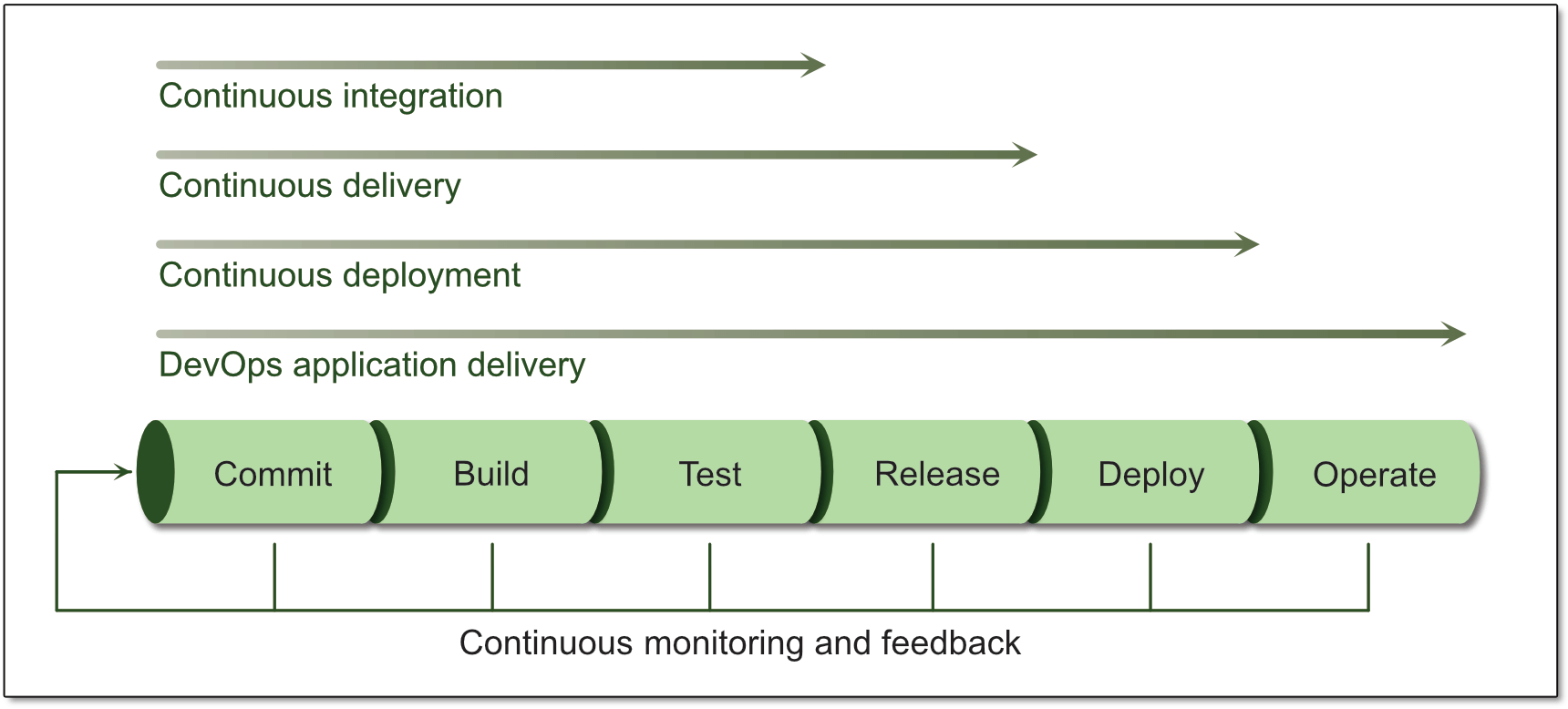

Continuous integration is often associated with the Commit, Build, and Test phases of the application delivery pipeline. Continuous delivery takes the application delivery process one step further by adding the Release stage, which is where the application is prepared and released for production. The Release stage includes test and code-release automation that ensures the code is ready for deployment. As part of this process, the code is compiled and delivered to a staging area in preparation for production.

Like continuous integration, continuous delivery is a highly automated process that allows application updates to be released more frequently. In some documentation, continuous delivery is considered a replacement for continuous integration, incorporating the integration operations into its workflow. In other documentation, continuous delivery is treated as an extension of continuous integration, with one building on the other. In either case, the results are the same.

Continuous deployment moves the DevOps pipeline one step further by incorporating the Deploy phase into the delivery process. Continuous deployment presents the same muddle as continuous integration and continuous delivery; that is, continuous deployment is sometimes described as a replacement for the other services or as an extension to them. Once again, the results are the same, only this time, the pipeline’s capabilities are extended in order to automatically deploy the application to production.

In continuous delivery, an application is automatically staged in preparation for production, but it must be manually deployed to production. Before that, however, the application must be manually reviewed and verified, which can represent a significant bottleneck in the software release cycle.

Continuous deployment addresses this issue by adding automation to the equation, making it (theoretically) possible for changes to go live within minutes after the code has been checked into the repository and verified by the automated processes.

The flipside of this is that continuous deployment also eliminates the safeguards that come with manual verifications. For this reason, application delivery teams that implement continuous deployment must be certain to adhere to best coding and testing practices to ensure the application is production-ready.
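The difference between the two models comes down to whether a person stands between staging and production. The following Python sketch captures that single decision. The `Mode` enum, the `next_step` function, and the status strings are all assumptions made for illustration.

```python
from enum import Enum


class Mode(Enum):
    CONTINUOUS_DELIVERY = "continuous delivery"
    CONTINUOUS_DEPLOYMENT = "continuous deployment"


def next_step(
    mode: Mode,
    automated_checks_passed: bool,
    manually_approved: bool = False,
) -> str:
    """Decide what happens to a change once the automated checks finish."""
    if not automated_checks_passed:
        return "rejected"
    if mode is Mode.CONTINUOUS_DEPLOYMENT:
        # No manual gate: passing the automated checks is enough to go live.
        return "deployed to production"
    # Continuous delivery: the change is staged automatically, but a person
    # must review and approve it before it reaches production.
    if manually_approved:
        return "deployed to production"
    return "staged, awaiting manual approval"
```

With the same passing change, `next_step(Mode.CONTINUOUS_DELIVERY, True)` returns `"staged, awaiting manual approval"`, while `next_step(Mode.CONTINUOUS_DEPLOYMENT, True)` returns `"deployed to production"`. The second path is faster, but the automated checks are the only safeguard.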

Figure 3 helps to distinguish between the three continuous services, showing how each service addresses one more step on the pipeline. The figure also shows DevOps application delivery in its entirety in order to stress the importance of viewing the process as one continuous workflow, no matter how you break down or label the services.

Figure 3. The continuous services in the application delivery pipeline

As with the other figures in this article, Figure 3 is meant only to provide a conceptual overview of the application delivery process. No doubt you’ll find many variations on this theme.

Automation and Monitoring

Regardless of how you define or categorize the continuous services, what lies at the core of application delivery is a fully automated process that drives the DevOps pipeline from development through production.

Automation makes it possible to release application updates more easily and frequently while eliminating the need to perform the same mundane tasks over and over again. Automation also lets you easily deploy applications repeatedly, while ensuring that each task in the delivery process is performed correctly.

Just as important as automation is the need for continuous monitoring and feedback at every stage of the DevOps pipeline. Monitoring and feedback are essential to improving the application as well as the delivery process itself. Monitoring also makes it possible to track application usage, performance, and other metrics, while ensuring that any issues that do arise are caught and resolved as quickly as possible.
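A monitoring check can be as simple as comparing collected metrics against agreed limits and raising an alert when a limit is exceeded. The Python sketch below assumes hypothetical metric names (`error_rate`, `p95_latency_ms`) and a hypothetical `check_metrics` function; real monitoring tools provide far richer collection and alerting.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Threshold:
    metric: str     # name of the metric being watched
    maximum: float  # highest acceptable value


def check_metrics(
    samples: Dict[str, float], thresholds: List[Threshold]
) -> List[str]:
    """Return one alert message per breached or missing metric."""
    alerts: List[str] = []
    for threshold in thresholds:
        value = samples.get(threshold.metric)
        if value is None:
            # A metric that stops reporting is itself worth an alert.
            alerts.append(f"{threshold.metric}: no data")
        elif value > threshold.maximum:
            alerts.append(
                f"{threshold.metric}: {value} exceeds {threshold.maximum}"
            )
    return alerts
```

For example, with thresholds `[Threshold("error_rate", 0.01), Threshold("p95_latency_ms", 500)]` and samples `{"error_rate": 0.002, "p95_latency_ms": 730}`, the function returns `["p95_latency_ms: 730 exceeds 500"]`.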

DevOps-Friendly Technologies

Today’s application development strategies incorporate several technologies that go hand-in-hand with the DevOps methodology. Three particularly important technologies are containerization, microservices, and infrastructure as code (IaC).

Containerization refers to the process of implementing applications within containers. A container is similar to a virtual machine (VM) in terms of providing an environment for running applications separately from the host operating system (OS). However, the container is more lightweight and portable than the VM. Each container is packaged with all the essential runtime components, such as files and libraries, but only what is necessary to host an application. Unlike a VM, a container does not include an entire OS.

Containers let you deploy applications in small, resource-efficient packages that can run on any machine that supports containerization, eliminating many of the dependencies that come with deploying traditional applications. Containerized applications also boot and run faster than traditional applications, requiring less of the host’s resources. At the same time, they’re highly scalable, making them well suited to the continuous delivery and deployment models.

Microservices is an application architecture for breaking an application down into small, reusable services. Each service performs a specific business function, communicating with the other services through exposed APIs (typically standards-based REST APIs). At the same time, the services remain independent from each other, which means a service can fail without bringing down the entire application. You can easily integrate microservices into your DevOps process flow because of their flexibility, scalability, and function-specific packaging.
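The claim that one service can fail without bringing down the whole application can be sketched in a few lines of Python. Here the services are plain functions standing in for calls to REST APIs. The `catalog` and `recommendations` services and the `call_services` helper are hypothetical names used only for illustration.

```python
from typing import Callable, Dict


def call_services(services: Dict[str, Callable[[], str]]) -> Dict[str, str]:
    """Call each service independently so one failure cannot stop the rest."""
    responses: Dict[str, str] = {}
    for name, call in services.items():
        try:
            responses[name] = call()
        except Exception as exc:
            # Degrade gracefully: record the failure and keep going.
            responses[name] = f"unavailable ({exc})"
    return responses


def catalog() -> str:
    """Hypothetical healthy service."""
    return "42 products"


def recommendations() -> str:
    """Hypothetical service that is currently failing."""
    raise ConnectionError("timeout")
```

Calling `call_services({"catalog": catalog, "recommendations": recommendations})` returns `{"catalog": "42 products", "recommendations": "unavailable (timeout)"}`: the application still serves the catalog even though recommendations are down.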

IaC is particularly well suited to the DevOps era. In fact, many consider IaC to be an essential component of the DevOps application delivery process, helping to ease the development effort while better preparing the applications for production. With IaC, it’s also quick and easy to set up multiple environments that share the same configuration.

To implement IaC, you can create scripts that define the deployment environments for your applications. For example, you can determine how to configure networks, load balancers, VMs, or other components, regardless of the environment’s initial state. In this way, you can ensure that all your development and test environments properly mimic the production environment.
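The phrase "regardless of the environment’s initial state" is the key property: the script declares the desired end state, and the tooling works out what to change. The Python sketch below illustrates that idea with a hypothetical `DESIRED` definition and a `plan` function; the component names and settings are invented for this example and do not reflect any specific IaC tool's format.

```python
from typing import Dict, List

Environment = Dict[str, Dict[str, object]]

# Hypothetical desired state shared by development, test, and production.
DESIRED: Environment = {
    "network": {"cidr": "10.0.0.0/16"},
    "load_balancer": {"port": 443},
    "vm": {"count": 3, "size": "medium"},
}


def plan(current: Environment, desired: Environment) -> List[str]:
    """List the actions needed to move `current` to `desired`."""
    actions: List[str] = []
    for name, config in desired.items():
        if name not in current:
            actions.append(f"create {name}")
        elif current[name] != config:
            actions.append(f"update {name}")
    for name in current:
        if name not in desired:
            actions.append(f"delete {name}")
    return actions
```

Starting from an empty environment, `plan({}, DESIRED)` returns `["create network", "create load_balancer", "create vm"]`. Once the environment already matches, `plan(DESIRED, DESIRED)` returns `[]`, which is what makes it safe to run the same definition repeatedly against every environment.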

I should also add that cloud computing can be an important DevOps ally. The cloud provides the type of flexibility and elastic scalability that is so well suited to the DevOps approach. For example, an organization might use cloud storage to support its DevOps infrastructure because it can be quickly and automatically provisioned and scaled as application requirements change.

DevOps Tools

Organizations that plan to adopt the DevOps methodology require a number of tools. One of the most important is a source control solution for maintaining file versions. Solutions such as Git, GitHub, or Subversion let you perform such tasks as tracking changes, rolling back commits, and comparing file versions. An effective source control solution also allows developers to check in code from any location, while enabling collaboration between them.

You’ll also need tools for managing and automating the various operations that make up the application delivery pipeline. For example, you’ll require tools that continuously build, test, and deploy your code. One such tool is Jenkins, an open source automation server that lets you automate a wide range of pipeline tasks.

Most applications must have a way to store data, and often it is in SQL Server. Since a production database cannot easily be replaced like a service or application files, database changes are often a bottleneck in bringing new application features online. Consider a tool such as Redgate’s SQL Change Automation, which can bring the database into the DevOps pipeline. With full PowerShell support, it is compatible with automation tools like Jenkins.

You might also benefit from a project management tool such as Apache Maven, which follows the project object model (POM) for managing a project’s build, reporting, and documentation. In addition, you’ll likely need a unit testing framework such as JUnit, NUnit, TestNG, or RSpec, or an acceptance testing tool such as Calabash, Cucumber, or FitNesse.

Another consideration is what tools you need to manage infrastructure. For example, you might require a configuration management tool such as Chef, Puppet, or Ansible to support your IaC deployments. These tools integrate with the process flow to let you provision and configure infrastructure components at scale. You might also need a containerization tool such as Docker or a container orchestration tool such as Kubernetes.

When considering tools, also take into account your monitoring requirements. For example, Nagios lets you continuously monitor your system, network, and infrastructure for performance and security issues. An effective monitoring solution should be able to alert you to problems when they occur, find the root causes of those problems, and help you optimize your systems and plan for infrastructure.

The DevOps Application Delivery Pipeline

When preparing to implement the DevOps methodology, you should assess the tools you have on hand to determine which ones, if any, can support the application delivery process and what additional tools you might need. Be sure to take into account issues related to integration and interoperability, as well as what it will take to deploy and learn the new tools and the processes they support.

Above all, remember that DevOps is not only a methodology but also a mindset that should be adopted by everyone who participates in the process. True, they still need to learn about the logistics of application delivery and the tools it takes to support that process, but more importantly, they should understand the necessity of open communication, collaboration, and information sharing in order to ensure that the DevOps effort can succeed.
