This is the first part of a mini-series about Parallel Execution Skew and how to address it.

Introduction

So what does “Parallel Execution Skew” mean? With Oracle Enterprise Edition you can have Oracle automatically spread the processing of a single SQL statement across several worker processes in the hope to complete the processing in less elapsed wall-clock time.

Note: The “Oracle Parallel Execution” feature of Enterprise Edition covers more than just the parallel processing of SQL statements, Oracle can actually do parallel processing of other tasks, too, like crash recovery, backup/recovery, index builds, export etc. but here I specifically refer to the parallel processing of SQL execution

The important point to understand with Parallel Execution is that in order to perform parallel processing, a given task – in this case here the execution of a SQL statement – has to be divided and distributed across multiple worker processes and it only can speed up the execution if the processing is spread (ideally) evenly across the involved processes. In case there is an uneven distribution of work, the overall execution time depends on the processes that have to perform the majority of work, and in consequence other involved processes that perform the minority of work will be idle during that time.

Since Parallel Execution comes with significant overhead (start-up/coordination/communication/implementation overhead, refer to below links for more background information), which means that with Parallel Execution the database actually has to perform more work than with a comparable serial execution, in case of significant Parallel Execution Skew it is not uncommon to end up with an execution that isn’t much faster than serial execution, or in worst case even slower. Or in other words, as long as the work distribution is not even, there is room for improvement in terms of execution time.

Therefore, if you want to make the most of Parallel Execution, it is very important to check for Parallel Execution Skew as it can significantly influence the performance of Parallel Execution no matter how powerful the underlying system is and how much resources you try to throw at your SQL statement execution.

Note: I won’t go into too much details here how to determine if a SQL execution has a problem with Parallel Execution Skew – if you are interested in more background information, please refer to below mentioned resources. This series here focuses on how to address Parallel Execution Skew if an SQL execution is actually affected by it

– OTN article mini-series “Understanding Parallel Execution”

– AllThingsOracle.com webinar “Analyzing and Troubleshooting Oracle Parallel Execution”

– Multi-part video tutorial on my blog on detecting and analysing Parallel Execution Skew

So why does it sometimes happen that Oracle can’t automatically ensure an even work distribution? The Oracle query optimizer automatically generates the rules for the work distribution in case of Parallel SQL Execution and therefore follows certain generic strategies. For more complex operations like aggregations, sorts and joins Oracle follows certain rules to ensure in principle an efficient and correct processing.

For example, in order to process the join of two row sources of similar size using Parallel Execution, Oracle uses the join key(s) as criteria to distribute the work rather than distribute the data randomly. Why? Because Oracle has to ensure that data with the same join keys of the two row sources will be processed by the same worker, otherwise the join wouldn’t produce correct results – consider a random distribution where join key value “a” of row source 1 will be processed by worker no. 3, but join key value “a” of row source 2 will be processed by a different worker and therefore not finding a match in this worker’s processed slice of rowsource 1. The join would discard this data as it doesn’t find a match, clearly an incorrect result.

Baseline

Before we look at an example of Parallel Execution Skew, in order to have a baseline where we can see a good work distribution, here is our test case set-up and a simple two table join that can be run with serial and parallel execution to see how parallel execution in an ideal case can speed up the execution.

Test case table set-up:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 |

create table t_1 compress as select /*+ use_nl(a b) */ rownum as id , rpad('x', 100) as filler from (select /*+ cardinality(1e5) */ * from dual connect by level <= 1e5) a, (select /*+ cardinality(20) */ * from dual connect by level <= 20) b ; exec dbms_stats.gather_table_stats(null, 't_1', method_opt=>'for all columns size 1') create table t_2 compress as select rownum as id , case when rownum <= 5e5 then mod(rownum, 2e6) + 1 else 1 end as fk_id_skew , rownum as fk_id_uniform , case when rownum <= 5e5 then mod(rownum, 2e6) + 1 else null end as fk_id_sparse , rpad('x', 100) as filler from (select /*+ cardinality(1e5) */ * from dual connect by level <= 1e5) a, (select /*+ cardinality(20) */ * from dual connect by level <= 20) b ; exec dbms_stats.gather_table_stats(null, 't_2', method_opt=>'for all columns size 1') alter table t_1 parallel 4 cache; alter table t_2 parallel 4 cache; |

Note: If you want to reproduce the example and you’re using Oracle 12c it is important to stick to the same METHOD_OPT parameter used above – we’ll later see that Oracle 12c introduces a new feature that can detect and deal with Parallel Execution Skew automatically (at present in a limited number of scenarios) – and this feature requires a histogram to be triggered. Using FOR ALL COLUMNS SIZE 1 prevents the histogram creation and therefore allows reproducing the skew problem in 12c, too.

The table structure is straightforward for those two tables, the only noteworthy being the different variations of the T_2 FK column – one having a uniform value distribution (FK_ID_UNIFORM, actually representing a 1:1 relationship), one having one very popular value (FK_ID_SKEW), and the third one being another variation of “popular” having a lot of NULLs (FK_ID_SPARSE).

Note: Setting the tables to CACHE enables from 11.2 on the new in-memory Parallel Execution feature. Prior to this Parallel Execution Servers always bypassed the buffer cache and used direct path reads – a strategy optimized for reading large segment via full segment scans since direct path reads don’t have the overhead of maintaining the buffer cache as part of the physical read operation. With the in-memory Parallel Execution feature Parallel Execution Servers can make (in RAC even optimized) use of the buffer cache. For more information see this post by Tanel Poder for example

And here is the simple query that can be run once serially, then using Parallel Execution:

Serial execution:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 |

select count(t_2_filler) from ( select /*+ monitor no_parallel leading(t_1 t_2) use_hash(t_2) no_swap_join_inputs(t_2) */ t_1.id as t_1_id , t_1.filler as t_1_filler , t_2.id as t_2_id , t_2.filler as t_2_filler from t_1 , t_2 where t_2.fk_id_uniform = t_1.id and regexp_replace(t_2.filler, '^\s+([[:alnum:]]+)\s+$', lpad('\1', 10), 1, 1, 'c') >= regexp_replace(t_1.filler, '^\s+([[:alnum:]]+)\s+$', lpad('\1', 10), 1, 1, 'c') ); |

Note: The query makes use of the new statement level hint syntax of the NO_PARALLEL hint – this is officially supported only from 11.2 on. In previous releases it requires the object level hint per object, like NO_PARALLEL(T_1) NO_PARALLEL(T_2). Instead you could also simply disable parallel query in the session: ALTER SESSION DISABLE PARALLEL QUERY to achieve the same on session level.

The remaining hints used (except the MONITOR hint to request Real-Time SQL Monitoring of the execution) dictate a specific join order (LEADING), join method (USE_HASH) and hash join operation order (NO_SWAP_JOIN_INPUTS)

Parallel Execution:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 |

select count(t_2_filler) from ( select /*+ monitor leading(t_1 t_2) use_hash(t_2) no_swap_join_inputs(t_2) pq_distribute(t_2 hash hash) */ t_1.id as t_1_id , t_1.filler as t_1_filler , t_2.id as t_2_id , t_2.filler as t_2_filler from t_1 , t_2 where t_2.fk_id_uniform = t_1.id and regexp_replace(t_2.filler, '^\s+([[:alnum:]]+)\s+$', lpad('\1', 10), 1, 1, 'c') >= regexp_replace(t_1.filler, '^\s+([[:alnum:]]+)\s+$', lpad('\1', 10), 1, 1, 'c') ); |

Note: There is an additional hint that is specific to Parallel Execution: PQ_DISTRIBUTE. For joins this hint tells the optimizer what kind of Parallel Distribution method to use for the join between T_1 and T_2 – in this case a hash distribution based on the join key. For more details regarding distribution methods and the PQ_DISTRIBUTE hint, please refer to my OTN Mini-Series “Understanding Parallel Execution” (see link above)

Note that I used Oracle 12c for all my tests.

On my test system the serial execution takes approx. 57 seconds, whereas at a DOP (Degree Of Parallelism) of 4 the Parallel Execution takes approx. 15 seconds (I have 12 cores / 24 CPUs in my test system so plenty of CPU resources available). So the Parallel Execution in this particular case really scales very well, thanks to the simplicity of the query and the fact that it is completely CPU bound and not constrained by any I/O. In real-life it is more common to see Parallel Execution not to scale that well, for several reasons, two of them being the overhead of Parallel Execution for more complex execution plans and I/O constraints.

Obviously the processing has been split very evenly among the Parallel Execution Servers, otherwise the Parallel Execution couldn’t be four times faster at a DOP of 4.

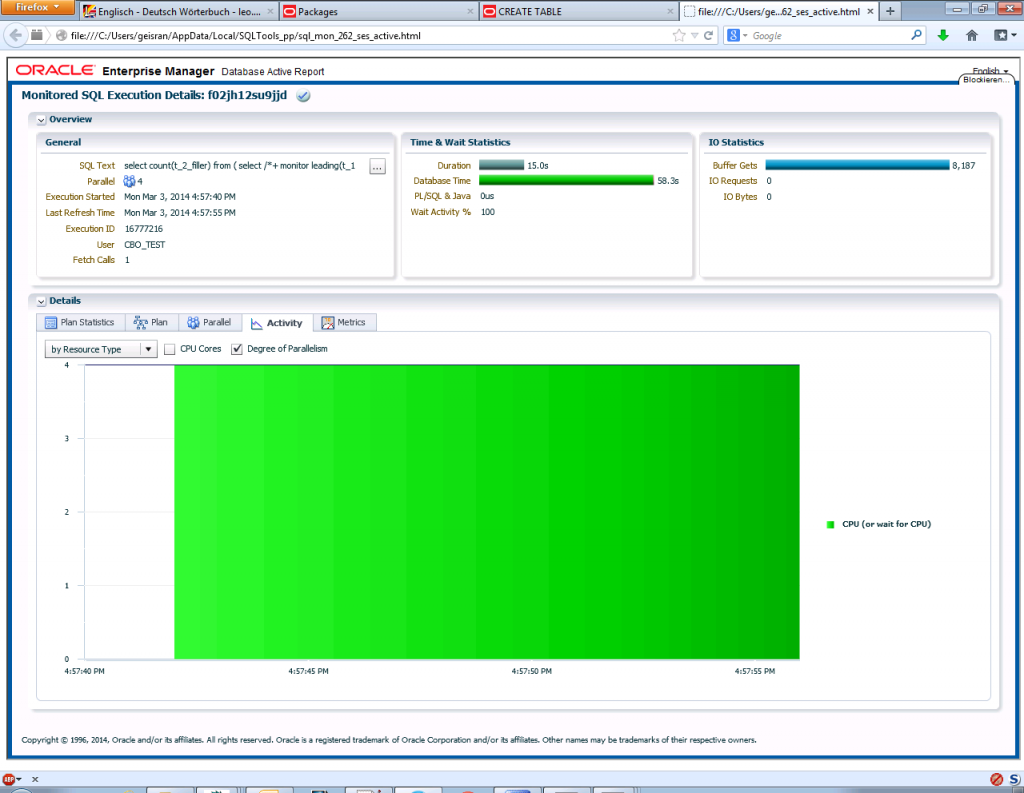

We can see that confirmed for example by looking at the “Activity” tab of a Real-Time SQL Monitoring report (only available if you happen to have an additional Diagnostics + Tuning Pack license):

The “Activity” tab tells us that apart from a short duration at the beginning (the start-up overhead of Parallel Execution) four sessions were active all the time, so obviously the work was very evenly distributed among the Parallel Execution Servers.

Another interesting information from the Real-Time SQL Monitoring report is the “Database Time” in the “Time & Wait Statistics” section of the overview: It shows us a duration of 15 seconds, but a “Database Time” of 58 seconds – which is very similar to the duration / database time of the serial execution. So in total the database performed a similar amount of work, but it completed faster because the processing was spread among four worker processes.

Note: This is a simplified example that processes a very small amount of data and in principle is CPU bound by evaluating a silly regular expression. Therefore it minimizes the Parallel Execution overhead and scales pretty well provided you have sufficient CPU resources available. Typically with real-life examples that process larger amounts of data and use more complex execution plans you won’t achieve the almost perfect scaling as you see with this simple example. If you want to see the effect of the overhead more clearly see this post on my blog where I demonstrate a three table join based on the same test data setup.

Now that we’ve seen Parallel Execution scaling well we’ll have a look in the next part of the series at a quite similar case where the work distribution limits the speedup achieved.

Load comments