This is the last part of my series on Execution plans, and features an option which is only available if you have licensed the Diagnostic and Performance Packs. It’s a feature that allows you to watch the flow of data through an execution plan as the query is running, typically through the graphic interface supplied by Enterprise Manager (or Grid Control, or Cloud Control). There is, however, a command line interface to the tool that you can use to get a text snapshot of a query as it runs, reporting the state either as flat text, an (unreadable) HTML file, or in a form that reproduces the graphic images supplied through EM / GC / CC.

Example

The command line interface to SQL Monitoring comes through the package dbms_sqltune (hence the need for the licensing) in two major functions: report_sql_monitor_list() and report_sql_monitor() ; these functions return a CLOB but there are two related functions that return XMLTYPE; both functions have a large number of parameters (11 and 19 respectively for the list and detail functions) but the functions all have default values so, in the simplest case, you could run a script like the following (the output is, unfortunately, extremely wide):

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 |

set long 250000 set linesize 254 set pagesize 100 set trimspool on set heading off column text_line format a254 select dbms_sqltune.report_sql_monitor_list() text_line from dual ; select dbms_sqltune.report_sql_monitor() text_line from dual ; |

The output from this code will produce a text of all the SQL statements that have been monitored in the recent past and are still available in the library cache, followed by the execution plan and actual resource usage of just the single most recently executed statement. If you want to produce an HTML output that you can view through a web browser then add the parameter type = > ‘HTML’ to the two calls; or, if you want a more advanced browser display that emulates the OEM output, add the parameter type => ‘ACTIVE’.

Although there are far more parameters available, it’s likely that the most commonly useful one would be the one that allows you to identify a specific SQL statement by referencing its SQL_ID: sql_id => ‘{some sql_id}’

Here’s an example of the output from an 11.2.0.4 instance where I’ve selected a single sql_id for a parallel “create table as select”. First the “list” section:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 |

SQL Monitoring List ===================== =================================================================================================================================================================================== | Status | Duration | SQL Id | Exec Id | Start | User | Module/Action | Dop | DB Time | IOs | SQL Text | =================================================================================================================================================================================== | DONE | 372s | g12jqmxu3vqhu | 16777216 | 03/02/2015 21:50:34 | TEST_USER | SQL*Plus/- | 2 | 713s | 8560 | create table t3 as select /*+ use_merge(t2) | | | | | | | | | | | | leading(t1 t2) monitor parallel(2) */ | | | | | | | | | | | | t1.object_name obj1, t2.object_name obj2, | | | | | | | | | | | | count(*) ct from t1, t2 where t2.owner = t1.owner | | | | | | | | | | | | group by t1.object_na... | =================================================================================================================================================================================== SQL Monitoring Report |

You’ll notice in the SQL text that I’ve included the hint /*+ monitor */. This wasn’t necessary for my example as Oracle will automatically monitor any parallel statement and any statement that it estimates will take more than 6 seconds to complete. I created the t1 and t2 tables as “select * from all_objects where rownum <= 5000”, so the join on “owner” is going to do something a little nasty. And here’s the detail of what happened:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 61 62 63 64 65 66 67 68 69 70 71 72 73 74 75 76 77 78 79 80 81 82 83 84 85 86 87 88 89 90 91 |

SQL Monitoring Report SQL Text ------------------------------ create table t3 as select /*+ use_merge(t2) leading(t1 t2) monitor parallel(2) */ t1.object_name obj1, t2.object_name obj2, count(*) ct from t1, t2 where t2.owner = t1.owner group by t1.object_name, t2.object_name Global Information ------------------------------ Status : DONE Instance ID : 1 Session : TEST_USER (20:3335) SQL ID : g12jqmxu3vqhu SQL Execution ID : 16777216 Execution Started : 03/02/2015 21:50:34 First Refresh Time : 03/02/2015 21:50:34 Last Refresh Time : 03/02/2015 21:56:46 Duration : 372s Module/Action : SQL*Plus/- Service : SYS$USERS Program : sqlplus@linux11 (TNS V1-V3) Global Stats ================================================================================================= | Elapsed | Cpu | IO | Concurrency | Other | Buffer | Read | Read | Write | Write | | Time(s) | Time(s) | Waits(s) | Waits(s) | Waits(s) | Gets | Reqs | Bytes | Reqs | Bytes | ================================================================================================= | 713 | 21 | 407 | 1.63 | 284 | 80856 | 4052 | 933MB | 4508 | 1GB | ================================================================================================= Parallel Execution Details (DOP=2 , Servers Allocated=4) ================================================================================================================================================================================== | Name | Type | Server# | Elapsed | Cpu | IO | Concurrency | Other | Buffer | Read | Read | Write | Write | Wait Events | | | | | Time(s) | Time(s) | Waits(s) | Waits(s) | Waits(s) | Gets | Reqs | Bytes | Reqs | Bytes | (sample #) | ================================================================================================================================================================================== | PX Coordinator | QC | | 0.48 | 0.03 | 0.03 | 0.07 | 0.35 | 170 | 2 | 16384 | | . | log file sync (1) | | p000 | Set 1 | 1 | 350 | 8.43 | 196 | 1.02 | 144 | 40290 | 2011 | 463MB | 2241 | 747MB | buffer busy waits (1) | | | | | | | | | | | | | | | log buffer space (19) | | | | | | | | | | | | | | | log file switch (archiving needed) (117) | | | | | | | | | | | | | | | log file switch (checkpoint incomplete) (5) | | | | | | | | | | | | | | | rdbms ipc reply (1) | | | | | | | | | | | | | | | direct path read temp (157) | | | | | | | | | | | | | | | direct path write (2) | | | | | | | | | | | | | | | direct path write temp (24) | | | | | | | | | | | | | | | flashback log file sync (1) | | p001 | Set 1 | 2 | 359 | 8.47 | 211 | 0.55 | 139 | 40300 | 2039 | 469MB | 2267 | 753MB | buffer busy waits (1) | | | | | | | | | | | | | | | log buffer space (16) | | | | | | | | | | | | | | | log file switch (archiving needed) (117) | | | | | | | | | | | | | | | log file switch (checkpoint incomplete) (4) | | | | | | | | | | | | | | | log file switch completion (1) | | | | | | | | | | | | | | | control file sequential read (2) | | | | | | | | | | | | | | | db file sequential read (4) | | | | | | | | | | | | | | | direct path read temp (175) | | | | | | | | | | | | | | | direct path write temp (30) | | p002 | Set 2 | 1 | 2.34 | 1.99 | | | 0.35 | 51 | | . | | . | | | p003 | Set 2 | 2 | 1.81 | 1.60 | | | 0.21 | 45 | | . | | . | | ================================================================================================================================================================================== SQL Plan Monitoring Details (Plan Hash Value=3638022418) ==================================================================================================================================================================================================================== | Id | Operation | Name | Rows | Cost | Time | Start | Execs | Rows | Read | Read | Write | Write | Mem | Temp | Activity | Activity Detail | | | | | (Estim) | | Active(s) | Active | | (Actual) | Reqs | Bytes | Reqs | Bytes | (Max) | (Max) | (%) | (# samples) | ==================================================================================================================================================================================================================== | 0 | CREATE TABLE STATEMENT | | | | 313 | +60 | 5 | 2 | | | | | | | 1.41 | log file sync (1) | | | | | | | | | | | | | | | | | | buffer busy waits (2) | | | | | | | | | | | | | | | | | | rdbms ipc reply (1) | | | | | | | | | | | | | | | | | | control file sequential read (2) | | | | | | | | | | | | | | | | | | db file sequential read (4) | | 1 | PX COORDINATOR | | | | 1 | +372 | 5 | 2 | | | | | | | | | | 2 | PX SEND QC (RANDOM) | :TQ10002 | 6M | 36152 | 10 | +363 | 2 | 2 | | | | | | | | | | 3 | LOAD AS SELECT | | | | 329 | +44 | 2 | 2 | | | 571 | 570MB | 8M | | 40.70 | log buffer space (35) | | | | | | | | | | | | | | | | | | log file switch (archiving needed) (234) | | | | | | | | | | | | | | | | | | log file switch (checkpoint incomplete) (9) | | | | | | | | | | | | | | | | | | log file switch completion (1) | | | | | | | | | | | | | | | | | | Cpu (7) | | | | | | | | | | | | | | | | | | direct path write (2) | | | | | | | | | | | | | | | | | | flashback log file sync (1) | | 4 | HASH GROUP BY | | 6M | 36152 | 371 | +2 | 2 | 13M | 3839 | 930MB | 3839 | 930MB | 101M | 721M | 57.04 | Cpu (19) | | | | | | | | | | | | | | | | | | direct path read temp (332) | | | | | | | | | | | | | | | | | | direct path write temp (54) | | 5 | PX RECEIVE | | 6M | 35 | 44 | +1 | 2 | 13M | | | | | | | 0.14 | Cpu (1) | | 6 | PX SEND HASH | :TQ10001 | 6M | 35 | 40 | +2 | 2 | 13M | | | | | | | | | | 7 | MERGE JOIN | | 6M | 35 | 40 | +2 | 2 | 13M | | | | | | | | | | 8 | SORT JOIN | | 5000 | 12 | 40 | +2 | 2 | 5000 | | | | | 225K | | | | | 9 | PX BLOCK ITERATOR | | 5000 | 11 | 1 | +2 | 2 | 5000 | | | | | | | | | | 10 | TABLE ACCESS FULL | T1 | 5000 | 11 | 1 | +2 | 32 | 5000 | | | | | | | | | | 11 | SORT JOIN | | 5000 | 12 | 41 | +1 | 5000 | 13M | | | | | 422K | | 0.70 | Cpu (5) | | 12 | PX RECEIVE | | 5000 | 11 | 1 | +2 | 2 | 10000 | | | | | | | | | | 13 | PX SEND BROADCAST | :TQ10000 | 5000 | 11 | 1 | +2 | 2 | 10000 | | | | | | | | | | 14 | PX BLOCK ITERATOR | | 5000 | 11 | 1 | +2 | 2 | 5000 | | | | | | | | | | 15 | TABLE ACCESS FULL | T2 | 5000 | 11 | 1 | +2 | 32 | 5000 | | | | | | | | | ==================================================================================================================================================================================================================== |

I’ve picked a parallel example to give you some idea of how much information about the parallelism is available above and beyond the details supplied by dbms_xplan.display_cursor(), but I’m going talk about the details in a fairly generic fashion.

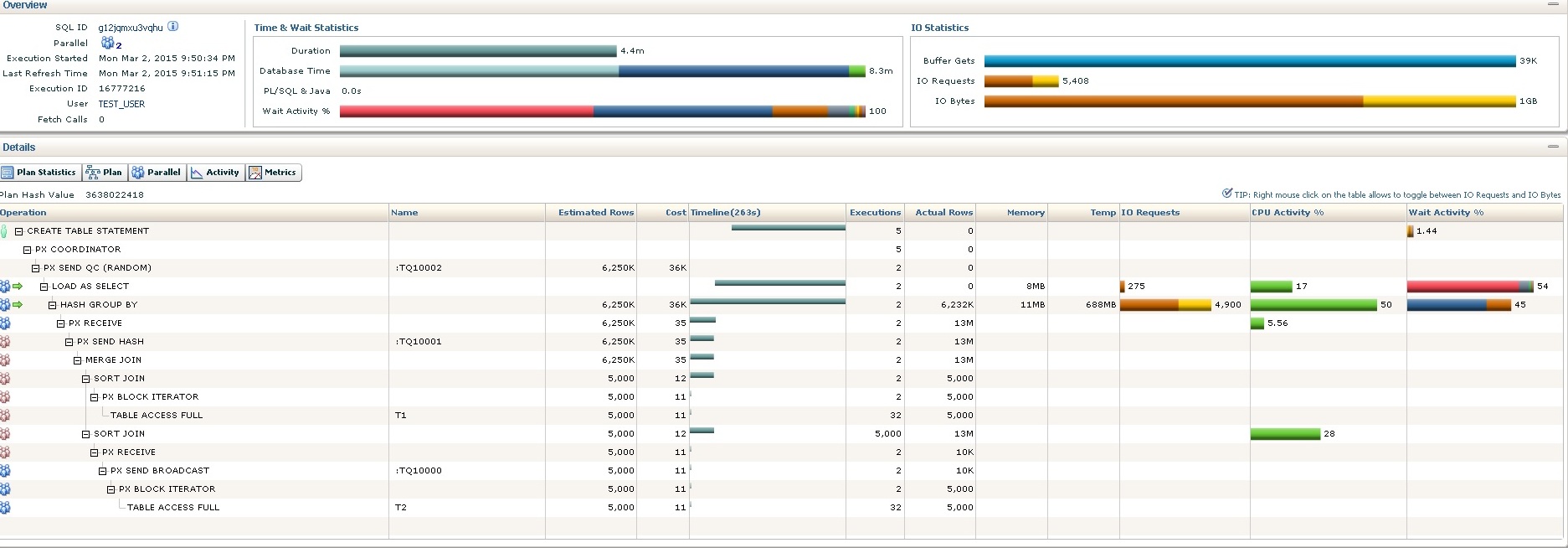

I captured this text output after the CTAS has completed, but you can run the report while a statement is still executing – unlike the dbms_xplan.display_cursor() strategy you don’t have to wait for a statement to complete. Here’s the initial OEM display for the same query, partway through execution:

Things to note (OEM)

In the Overview section you can see at the top left that this query ran parallel 2; the “Time and Wait Statistics” show that the elapsed time (so far) was 4.4 minutes while the DB time was 8.3 minutes (which means, roughly, that at any moment one set of PX slaves was active and the other inactive); of the DB time nearly half was User I/O (dark blue) and half was “Other” (light blue), with a small fraction spent on CPU. Fortunately we also have the “Wait Activity %” bar showing us a (rare) big pink splash that explains the “Other”: my archivelog destination was full so the CTAS stopped until I freed some space. On a live screen you can hover the mouse over these bars to see what each colour represents. The “IO Statistics” gives you a summary of logical and physical I/O (the darker brown is writes, the lighter/yellow is reads).

At the top of the Details section you can see there are 5 buttons which give you different pieces of data – including the information about PX slaves that is printed in the text output. This is followed by the execution plan with all the detail of work down and time spent for each line of the plan. The time information (CPU Activity% and Wait Activity%) comes from v$active_session_history (ASH). At the left of the plan you can see little clusters of “people” which mark the operations that have operated in parallel (I think there really ought to be a marker on the PX SEND QC (RANDOM) operation as well); and on the 4th and 5th lines of the plan you can see a pair of green arrows identifying the lines of the plan that are currently active. I’m going to read through the plan (in order of rowsource generation) describing the detail.

In the last few lines of the plan, PX slave set 1 does a parallel tablescan of t2 and broadcasts the rows to PX slave set 2 (every slave gets every row). There are 5,000 rows in t2, which is why the “Actual Rows” is 5,000 for the scan and (since we’re parallel 2) 10,000 for the send and receive. Generally we see 2 executions of most operations because there are two slaves in a slave set – however we can infer that the tablescan was split into 16 rowid ranges per slave when we see the 32 executions of the TABLE ACCESS FULL operations. (Parallel execution means that the cardinality check that “Estimated rows * Executions = Actual rows” doesn’t apply – you have to think very carefully about what’s happening.) Looking at the “Timeline” column we can see that both tablescans started almost immediately the statement started, and ended very quickly (The time-granularity is only down to 1 second, so there’s plenty of room for little errors to appear).

After the first scan and broadcast, though, we see the parallel sort at line 12 apparently lasting a long time – claiming 28% of the CPU time of the whole query and generating 13M rows because it executed 5,000 times! This is a misleading feature of the way execution plans (including serial ones) display the merge join. Ideally I’d like to see this “SORT JOIN” line split into two operations; it would be nice to see a “SORT JOIN” line that sorts 5,000 rows very quickly, and then a parent called something like “MERGE PROBE” which is the operation that would be executed once for every row in the first rowsource. As it is the “SORT JOIN” line sorts once (for each slave in PX Slave set 2) copying the data into memory in sorted order after which each slave will scan chunks of table t1 and, for each row, probe their private memory copies of the sorted t2 data. (Remember there are 5,000 rows in t1, which is why we get 5,000 executions of the probe.)

The 13M rows generated by the merge join at line 8 are then distributed by hash back to the slaves in PX Slave set 1. The PX RECEIVE at operation 6 claims to use 5.56% of the CPU of the whole query, but that’s probably subject to some bad luck in sampling – in reality the CPU time is probably spread between the send and the receive, and some of the CPU time for the HASH GROUP BY should probably have been allocated to the PX RECEIVE, but ASH samples once per second and for a very small number of samples you have to assume that there are some sampling anomalies around the edges.

As PX Slave Set 1 receives the join data each slave starts doing a HASH GROUP BY; from the colour bars you can see that this takes a lot of reads and writes (to temp) and a lot of CPU. We can also see from the “Timeline” that the HASH GROUP BY started very shortly after the statement started to execute: the tablescans were very quick and the hash group by can start as soon as the first few rows are joined; we can see then that the hash group by goes on for a very long time after the merge join has finished sending its data. There are two reasons for this: first is simply that the volume of data for the group by is large and spills to disc and has to be re-read before the group by can complete – but the merge join (along with the two sort joins that feed it) ends when it has supplied all its data to the hash group by. The “Timeline” tells us that the LOAD AS SELECT started very soon after the MERGE JOIN ended; I am a little puzzled by this but I think it’s an indication that the hash group by spilled to disc in a large number of small partitions, and was therefore able to produce the very first set of aggregate results immediately after the last row of the incoming data set appeared.

The second reason that the group by goes on after the merge join (and would do, even if the group by completed in memory) is that it has to feed its result set to the LOAD AS SELECT operation and can’t complete until the data is written to disc, and the writing has taken a long time. The reason for the exaggerated completion time shows up in the pink Wait I/O bar – there’s a huge amount of time lost to “log file switch (archiving needed)”; I was running in archivelog mode with FORCE LOGGING enabled and my fast_recovery_area was full, so everything stopped until I noticed that something was wrong and cleared some space.

I said at the outset that I snapped this picture from OEM before the statement had completed. That’s why the 2nd and 3rd lines of the plan have an empty Timeline – they haven’t yet done anything. I’ll have to admit to being puzzled by the fact that the 1st line (create table) seems to start a little while after the 4th (load as select) – but perhaps this is an indication of the moment when the first space allocations in the tablespace were made as the hash group by produced its first batch of data.

You’ll notice two things about the first few lines of the plan – their Actual Rows is zero, they haven’t produced anything yet; ultimately (because we are running parallel 2) we will see that they all produce 2 rows. The other little oddity is that the first two lines of the plan show 5 executions rather than 2 – but that’s an effect of the “shared parallel cursor” – there are actually 5 sessions sharing the same child cursor as this query runs.

Things to note (Text)

There isn’t really a lot extra to say about the text output. It’s nice that you can generate it if you don’t have access to OEM; and it’s nice that it can make the list of wait events much clearer in complicated cases (the little patches of colour that show up in OEM for rare cases can be too small to notice or click on).

In my example I ran the text report after the CTAS has completed, so the final figures are different – but for the completed lines the printed figures in the “Start Active” (how many seconds through the execution were we before this operation started) and “Time Active (s)” (for how long was this operation active) give you a little better precision in terms of sequencing.

The summary of parallel activity is very nice – and in this case shows that one set of PX slaves did almost all the work; but that information is also available through OEM if you click on the “Parallel” button.

Conclusion

This closing article in the series on reading Execution plans covered an option that is available only if you’ve got the relevant licenses. Although hinting with /*+ gather_plan_statistics */ or setting the parameter statistics_level to all can give you a summary of the work done when a query completes, the SQL Monitor features adds two key benefits – it can show you the workload accumulating as the query runs so you can choose to terminate a statement if you can see that it’s going to take a very long time to complete, and it gives you the detail of the underlying ASH data for each line of the plan so you know far more about how the time is being used. The extremely visual nature of the OEM display also makes it very easy to spot unexpected patterns of behaviour that you might not predict or expect from reading the bare text of what might seem to be a reasonable execution plan.

Load comments