As a database professional, your most important duty is to protect the data under your care. This is something that I believe most any DBA would agree with; yet, in many cases, you may unknowingly neglect one of the core aspects of data protection, namely tracking who is viewing and modifying the data itself. Most any competent data professional would agree with the need for things like backups, integrity checks, etc., and those certainly are critical (it’s not really going to matter who modified data if you lose it all due to a failed disk). But in today’s world where regulations and risk of data breach abound, they are no longer sufficient to fulfil your duties.

All one needs to do is look at the data protection and regulatory rules affecting many businesses to see this. Here are two clear examples.

PCI-DSS

The Payment Card Industry Data Security Standard, or PCI-DSS, defines rules that companies who accept, store, and process credit card data must follow. While not an actual regulation, in that it is not enforced by any governmental organization, the penalties and potential for lost revenue if an entity is found to be non-compliant are no less substantial.

PCI-DSS is a set of twelve rules, ranging from securing physical access to systems (rule 9) to anti-virus (rule 5) and network security (rule 1). One of the rules I’ve found more complex is rule 10, which requires, among other things, implementing “automated audit trails for all system components” that are involved in the processing and storage of cardholder data[1]. For example, you must be able to show who accessed cardholder data, both from the perspective of viewing and modifying.

GDPR

The Global Data Protection Regulation, or GDPR, it a set of regulations passed by the European Union to protect personally identifiable information (or PII for short). Among its many provisions, several point to the need for robust auditing of access to relevant data. For example, Article 25, “Data protection by design and default”, states that “The controller shall implement appropriate technical and organizational measures for ensuring that, by default, only personal data which are necessary for each specific purpose of the processing are processed.”[2] Article 33, “Notification of a personal data breach to the supervisory authority”, requires that organizations holding personal data “shall without undue delay and, where feasible, not later than 72 hours after having become aware of it, notify the personal data breach to the supervisory authority”.[3] I would argue that, without an accurate record of who accessed your data, you will be very hard pressed to meet either of these requirements.

Having established a clear need for tracking access to data, there is one more item to discuss before getting into specifics, namely the question of where the audit data is kept. In the case of those subject to PCI-DSS, the rule is quite clear, in that rule 10.5.3 states “Promptly back up audit trail files to a centralized log server or media that is difficult to alter.” [4] This should surprise no one familiar with common-sense security, in that the systems holding the logs should be well segregated and secured from the systems generating them. The practical implication of this is that while you may generate the relevant audit records on the systems holding the data (for this purpose, an installation of SQL Server), you should not solely keep it there. In addition, you should ensure that no one component of the system can delete data already logged to the central location; otherwise, an attacker could remove their tracks before you had a chance to see them.

Having laid these foundations, it’s time to learn how you might implement a solution.

Overall Solution Design

To recap the stated requirements:

- Audit all access to relevant data, including viewing and modification.

- Audit any activity around the granting of access to the data itself.

- No automated component of the system should have access to delete the audit records in their eventual home (both short and long term).

- Finally, transmit these audit logs to a secure location outside the area where they are generated, such that compromise of the original system does not put the logs themselves at risk.

Before delving deeper, I want to note that the solution shown here is minimal, and certainly should not be considered adequate to meet the needs of all organizations. For example, it does not involve functionality such as automated analysis of logs or alerting based on defined criteria being met. For example, you may need to be notified when certain kinds of access occurs (such as someone being granted access to sensitive data), or when certain normal patterns of usage are violated (such as when data access occurs from a machine not normally accessing the data, or by a user from an unusual location). These are more complex issues to solve, and as a leader for a team facing them, I can state that they require complex and often expensive solutions. Take this solution only as a starting point, from which you may expand to better suit your particular circumstances. Also, for the sake of expediency, the article may take specific shortcuts that would not be acceptable in a live system.

The solution will combine two main components: SQL Server Audit and Azure Log Analytics.

SQL Server Audit

Available since SQL Server 2014, SQL Server Audit is a feature that allows you to track certain kinds of activity against an instance of SQL Server. These include data access (both view and modify), administrative setting changes, and privilege modifications to name several. This data is collected locally and can be sent to various destinations, such as physical files, Windows logs, and Azure blob storage. Initially, certain features were restricted to only Enterprise edition of SQL Server, such as database level auditing; thankfully, starting with SQL Server 2016 Service Pack 1, all features are available in Standard edition as well, making the feature accessible even to organizations who cannot afford an Enterprise license.

In this solution, SQL Server Audit will serve as the means to collect the necessary audit data. Previously, this likely would have involved either a third-party tool or the creation of a SQL Profiler trace, both of which carry cost and risk. With SQL Server Audit, the functionality is relatively easy to set up, once you understand some fundamental concepts. This article covers how to make use of SQL Audit’s ability to log events to the Windows Application event log, where they can be picked up for processing.

Azure Log Analytics

The Azure Log Analytics platform is designed to provide robust data analytics and visualization for bulk log data. In this case, it provides a place to view data while preventing the need for another piece of infrastructure to manage. You could easily substitute something fancier, such as Elasticsearch, Splunk, or any other platform of your choosing. If your organization already uses something else, I’d encourage you not to go outside the box.

In order to capture the audit events, you will make use of Log Analytic’s feature for collecting Windows event log data.

Time to get building!

Pre-Requisites

Before beginning this exercise, there are a few things you will need.

- An instance of SQL Server 2016 SP1 or higher, minimum Standard Edition (Developer Edition will work fine as well).

- An Azure subscription, with a configured Azure Log Analytics Workspace.

- The Azure Monitor agent must be installed on the operating system hosting SQL Server.

- A basic understanding of Azure Log Analytics query language.

The SQL Server need not be hosted in Azure, though that is ideal for lab purposes. (Note: For more information about using Azure Log Analytics to collect the audit logs on SQL Servers hosted outside of Azure VMs, see this documentation.)

Here are some guides for how to create a subscription, create a SQL Server virtual machine, and create an Azure Log Analytics Workspace.

- Purchase a new Azure subscription (hint: if you already own a Visual Studio / MSDN subscription, you can get an Azure subscription with some monthly credit as a benefit, see this guide for more details)

- Create a SQL Server VM in Azure

- Create an Azure Log Analytics Workspace

- Installing the Log Analytics agent

- Get Started with Log Analytics in the Azure Portal

- Get Started with queries in Log Analytics

TIP: When creating the Log Analytics workspace, choose the region closest to where your virtual machine will be (or if the virtual machine is in Azure, pick the same region if possible). Log Analytics is only available in certain regions, which you can see by browsing this link and scrolling down until you see “Log Analytics” in the list of services.

Auditing Setup

This section covers how to set up a basic server and database level audit in SQL Server and show how the events are logged to the local Windows event log.

SQL Audit configurations have three components: server audits, server audit specifications, and database audit specifications. I’ll discuss each in a little more detail.

Server Audits

A Server Audit object defines how audit data should be logged. For example, you can define outputs such as:

- Files, both on the local file system or a remote share

- Windows event logs, specifically the Application or (with some extra setup) the Security log

- URLs, used mainly for storing audit data in Azure Blob Storage

In this case, you are going to log data to the Windows Application event log. See Microsoft’s documentation for more details.

Server Audit Specifications

A Server Audit Specification object defines what groups of events should be audited at the server level. This includes a great many options, some of which should be used with caution as they may generate a great deal of data.

In this case, you would like to audit the following kinds of server level events:

- Changes to audit settings

- Changes (DDL) to any database object

- Ownership changes on any database object (which might allow a user to have different privileges)

- Changes to permissions on any database level object

- Changes to ownership at the database level (e.g. changing the principal that owns a database)

- Changes to permissions at the overall database level (e.g. granting rights to a principal on an entire database)

- Changes to database level role memberships

- Changes to server level objects

- Changes to ownership of server level objects

- Changes to permissions on server level objects

- Changes to server level permissions

- Changes to server level role memberships

Refer to Microsoft’s documentation for more information about server audits.

Database Audit Specifications

A Database Audit Specification object defines what groups of events should be audited at the database level. Unlike Server Audit Specifications, these are defined on a database by database basis. Many of these are database level equivalents to the action groups available in the Server Audit Specification object. For example, the DATABASE_ROLE_MEMBER_CHANGE_GROUP action group is present in both Database and Server Audit Specifications; in the case of the former, it will only be applied in databases where the audit specification is present, whereas in the latter it will be applied across all databases in an instance.

A good rule of thumb is If you need to audit some kind of action across all databases on an instance, your best bet is likely to do so within a Server Audit Specification, not a Database Audit Specification. There are trade-offs to this, however, such as less granularity in terms of filtering. An excellent example of this is relevant to the scenario, specifically the need to audit access to and modification of data in specific tables, which will be the primary use case for this Database Audit Specification.

Say that you have one or more tables that you must audit access to, across many similar databases on a system. This being the case, you could do so in several ways.

- You could add the SCHEMA_OBJECT_ACCESS_GROUP action group to a Server Audit Specification. This will audit all access to any table across all databases on the instance. Certainly, very comprehensive, but likely also generating a large volume of data, some or most of which might not be necessary depending upon your needs.

- You could add the SCHEMA_OBJECT_ACCESS_GROUP action group to a Database Audit Specification in each of the databases that need to be audited. This will audit all access to any table in any database that has the audit specification defined. Depending on what percentage of databases on the instance you need to audit, and what percentage of tables within those databases must be audited, this may be a useful solution (but still very likely to generate a lot of data).

- You could add specific tables to a Database Audit Specification, using Database Level Audit Actions. This would let us very granularly define what tables require what kinds of access.

Microsoft’s documentation provides more details.

Constructing the audit

Reviewing the list of things to audit, and the various ways which might meet these requirements, you can now create the audit objects and see data being logged to the local Application event log.

First, create the Server Audit object, which will define how the data is logged.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 |

CREATE SERVER AUDIT SQLAudit TO APPLICATION_LOG; ALTER SERVER AUDIT SQLAudit WITH (STATE=ON); Next, create the Server Audit Specification. CREATE SERVER AUDIT SPECIFICATION SQLServerAuditSpec FOR SERVER AUDIT SQLAudit ADD (AUDIT_CHANGE_GROUP), ADD (DATABASE_OBJECT_CHANGE_GROUP), ADD (DATABASE_OBJECT_OWNERSHIP_CHANGE_GROUP), ADD (DATABASE_OBJECT_PERMISSION_CHANGE_GROUP), ADD (DATABASE_OWNERSHIP_CHANGE_GROUP), ADD (DATABASE_PERMISSION_CHANGE_GROUP), ADD (DATABASE_ROLE_MEMBER_CHANGE_GROUP), ADD (SERVER_OBJECT_CHANGE_GROUP), ADD (SERVER_OBJECT_OWNERSHIP_CHANGE_GROUP), ADD (SERVER_OBJECT_PERMISSION_CHANGE_GROUP), ADD (SERVER_OPERATION_GROUP), ADD (SERVER_PERMISSION_CHANGE_GROUP), ADD (SERVER_ROLE_MEMBER_CHANGE_GROUP)--, /* -- Uncomment this section to enable auditing of all object access -- across all databases on the server level instance. USE WITH -- EXTREME CAUTION AS THIS MAY GENERATE A LOT OF DATA. ADD (DATABASE_OBJECT_ACCESS_GROUP), ADD (SCHEMA_OBJECT_ACCESS_GROUP) */ WITH (STATE=ON); |

TIP: You will see above that I have left a commented section that would enable the auditing of table/object access at the server level. As discussed above, this is likely only useful in certain cases and should be avoided unless absolutely necessary.

Once both of these objects have been created, run a few statements in order to generate some audit records.

|

1 2 3 4 5 6 |

CREATE LOGIN BadLogin WITH PASSWORD = '!m$0B@d'; ALTER SERVER ROLE sysadmin ADD MEMBER BadLogin; GRANT CONTROL SERVER TO BadLogin; CREATE ENDPOINT BadEndpoint AS TCP (LISTENER_PORT=55001) FOR TSQL(); GRANT CONNECT ON ENDPOINT::BadEndpoint TO BadLogin; GRANT CONNECT ON ENDPOINT::[TSQL Default TCP] to [public]; |

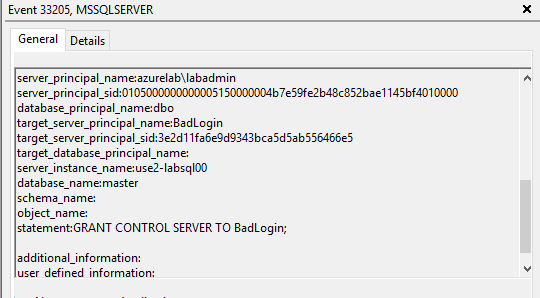

After running these commands, open Event Viewer and browse to the Application event log. You should see several log records that look similar to this one.

Next, use a Database Audit Specification to capture statements against a particular table.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 |

CREATE DATABASE TestDB; GO USE [TestDB]; GO CREATE TABLE MySensitiveTable (col1 int, col2 char(1)); GO CREATE DATABASE AUDIT SPECIFICATION SqlDatabaseAudit FOR SERVER AUDIT SqlAudit ADD (SELECT, INSERT, DELETE, UPDATE ON dbo.MySensitiveTable BY Public) WITH (STATE=ON); GO INSERT INTO dbo.MySensitiveTable ( col1, col2 ) VALUES ( 1, -- col1 - int 'A' -- col2 - char ); SELECT * FROM dbo.MySensitiveTable mst; DELETE FROM dbo.MySensitiveTable; |

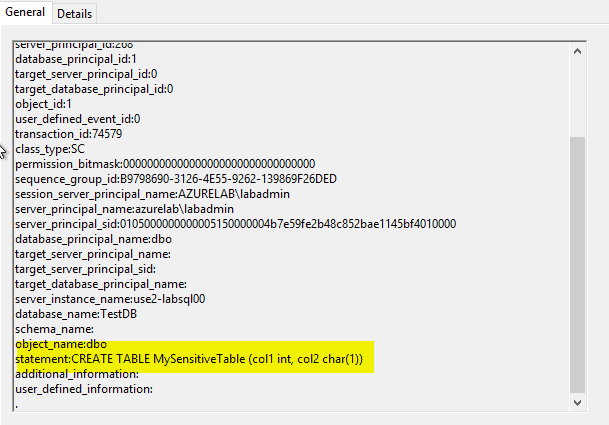

Looking in Event Viewer, you’ll now see these captured as well.

It’s now time to proceed to the next step, which is configuring the Azure Log Analytics agent to collect the necessary event logs.

Azure Log Analytics Agent

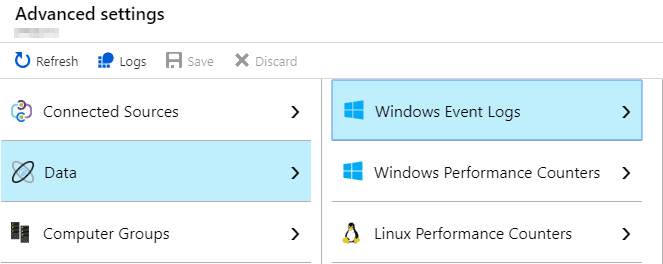

By default, the Log Analytics agent does not collect Windows Event Log data. Fortunately, this is easy to do in the central Log Analytics Workspace configuration in the Azure Portal.

To configure the Log Analytics agent residing on the computer(s) hosting SQL Server, you need to complete these steps.

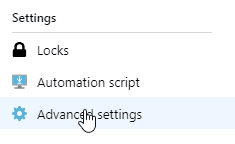

- Navigate to the “Advanced Settings” blade for the OMS Workspace you created.

- Click “Data”, then “Windows Event Logs”.

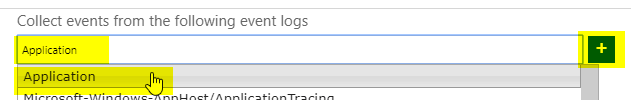

- Type in “Application” in the search box, select the same text in the resulting drop-down, then click the small “+” button.

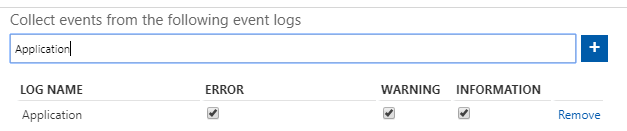

- Ensure that all available checkboxes are selected.

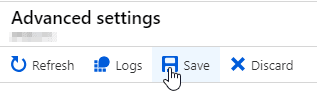

- Click the “Save” button along the top of the screen to save the configuration.

Once you have completed this, go back to SQL and run some more commands, such as the following.

|

1 2 3 4 5 6 7 8 9 10 11 |

USE [master]; GO ALTER SERVER ROLE sysadmin DROP MEMBER [BadLogin]; USE TestDB; GO INSERT INTO MySensitiveTable VALUES (2,'B'); SELECT * FROM MySensitiveTable; DELETE FROM MySensitiveTable; |

Now, navigate to the Logs blade in your OMS Workspace, and run a query such as the following:

|

1 2 3 |

Event | where Source == 'MSSQLSERVER' | top 100 by TimeGenerated desc |

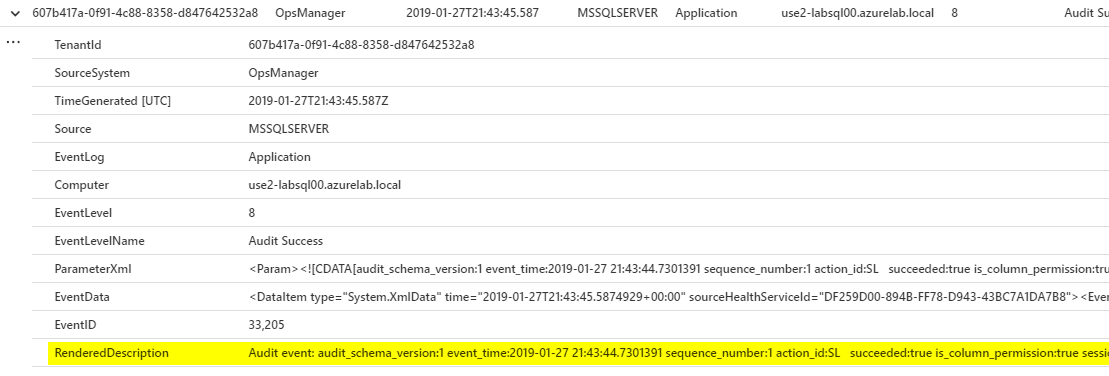

Note that it may take a few minutes for the audit to show up, so you may have to rerun it. Looking at the results, expand a few of the events and you should see something like the following.

Note the highlighted field in particular. In this basic configuration, unfortunately, Log Analytics bunches a lot of the useful data together in one field, which does make it somewhat hard to read. In future explorations I hope to be able to get the data parsed in a more readable fashion, and perhaps also explore how one might set up alerts based on certain kinds of actions, such as additions to highly privileged groups or access to sensitive data.

Summary

In this article, I’ve shown how to configure a basic SQL Audit configuration, including the Server Audit (how data is logged), Server Audit Specification (what actions get audited at a server-wide level), and Database Audit Specification (what actions get audited on a database by database case) objects. I’ve also shown how easy it is to get this data out of the local system and into an Azure Log Analytics Workspace. While this certainly meets the stated requirements, there are some limitations to this model.

First, as shown previously, the data is not particularly readable, which also means it is hard to alert on or analyze further. Second, this configuration will result in all Information level Application Event Log entries being ingested into the Log Analytics Workspace. Depending on what applications are installed on the host computer, this could result in a lot of data that may or may not have used (though in general I’d say that aggregating log data as a whole is useful, especially in security conscious environments).

My eventual goal is to take this basic setup and address each of these issues. While this will necessitate some additional complexity, in the end, the added value will more than likely outweigh that. Stay tuned!

References

1. See section 10.2 of https://www.pcisecuritystandards.org/documents/PCI_DSS_v3-2-1.pdf

2. https://gdpr-info.eu/art-25-gdpr/

3. https://gdpr-info.eu/art-33-gdpr/

4. See section 10.5.3 of https://www.pcisecuritystandards.org/documents/PCI_DSS_v3-2-1.pdf

Load comments