In the past decade, software development processes, application and infrastructure architectures, and the underlying technologies have gone through many changes. Organizations that started with on-premises data centers have moved to hardware virtualization, private and public clouds, containers, and now serverless applications. Along the way, many organizations are moving away from monolithic architectures toward microservices and serverless models. This article focuses on running container workloads in Microsoft Azure.

Brief background about containers

After hardware virtualization became commonplace, the question arose of how much faster and more efficient application development and deployment could be made. Containers were an obvious answer. A container virtualizes at the operating system layer: it shares the host's kernel rather than booting a guest operating system of its own. All you need is your application code and its dependent libraries packaged into a single image. The main advantage is that you avoid booting, and maintaining, another full operating system on top of the host machine just to run your application.

Figure 1 shows the virtualization of containers:

Figure 1

Microsoft Azure’s Options to run the container workloads

Microsoft Azure provides many options to build the container infrastructure and run the container applications quickly. Here is a list of the container services offered by Microsoft Azure:

- Azure Container Instances

- Azure Kubernetes Service (AKS)

- Azure Batch

- Azure Service Fabric

- Azure Functions

- Azure App Service

This article focuses on Azure Container Instances (ACI) and Azure Kubernetes Service (AKS).

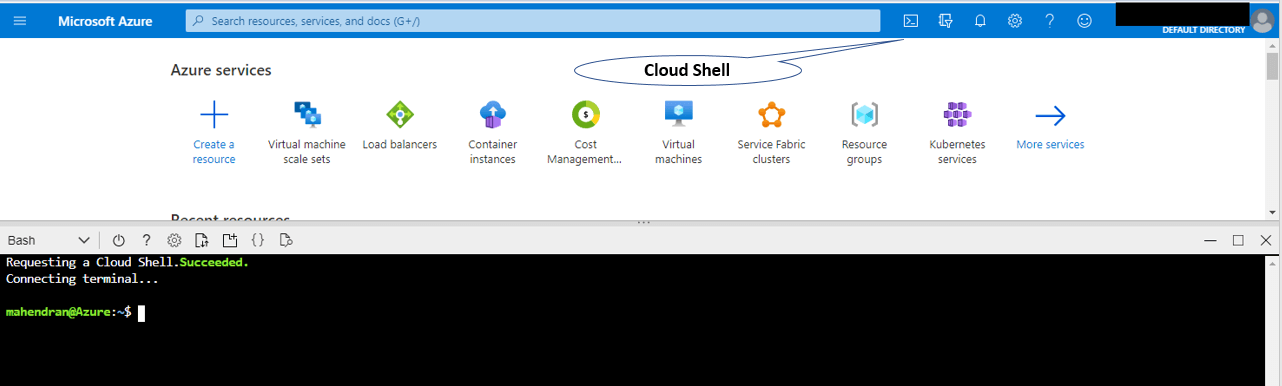

Azure Cloud Shell

Before exploring containers in Azure, here is a brief introduction to the Cloud Shell. The Cloud Shell is a browser-accessible interactive shell. It authenticates you automatically once you log in to the Azure Portal and launch it. You can choose between Bash and PowerShell. All the required packages are already installed, so you do not need to install the Azure command-line tools and packages on your local machine. To access Cloud Shell, click the Cloud Shell icon after you log in to the Azure Portal, as shown in Figure 2.

Figure 2

You can also open the full Cloud Shell window at https://shell.azure.com/. You can use either the Azure Command-Line Interface (CLI) or Azure PowerShell. This article uses CLI commands throughout.

If you have multiple subscriptions, you may want to ensure you are in the correct one. Use this command to see the default Azure subscription:

    az account show

Use this command to change subscriptions:

    az account set --subscription 'my azure subscription or ID'
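If you script the steps in this article, it can help to fail early when the wrong subscription is active. The following is a minimal sketch, not part of the original walkthrough: `check_subscription` is a hypothetical helper name, and the expected subscription name is a value you supply.

```shell
# Hypothetical helper: stop early when the active subscription is not the expected one.
# $1 = expected subscription name (an assumed value you provide).
check_subscription() {
  local expected="$1"
  local current
  # `az account show` reports the default subscription; the query keeps only its name.
  current="$(az account show --query name --output tsv)" || return 1
  if [ "$current" != "$expected" ]; then
    echo "Active subscription is '$current', expected '$expected'" >&2
    return 1
  fi
  echo "Using subscription: $current"
}
```

For example, `check_subscription 'my azure subscription' || exit 1` at the top of a script prevents the later commands from creating resources in the wrong subscription.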

Azure Container Registry

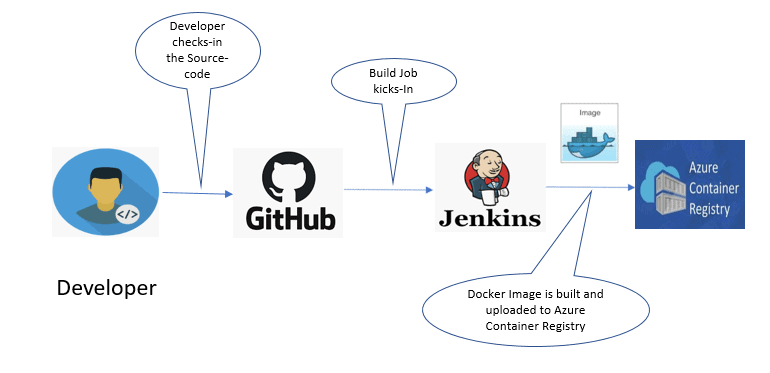

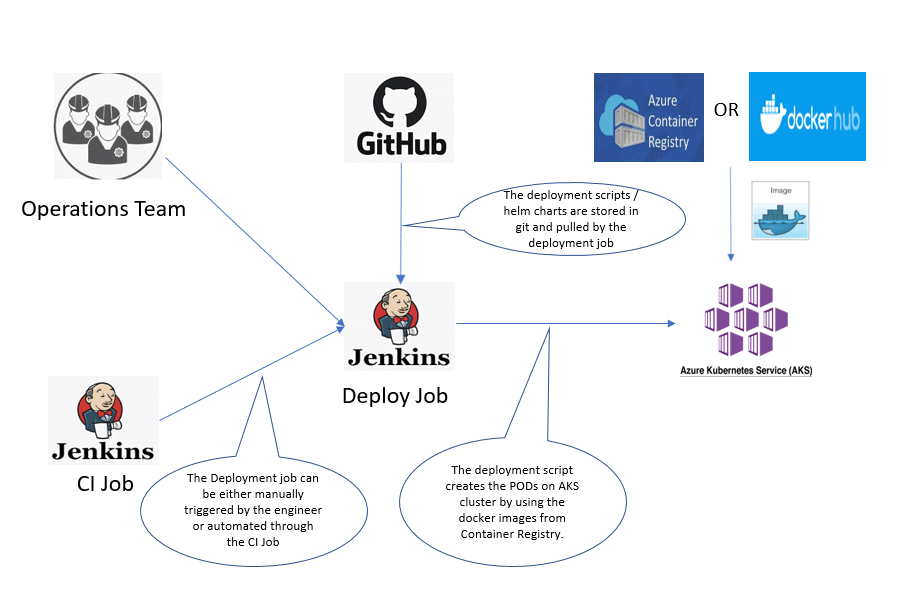

Before moving on to the container services in Azure, you should understand the Azure Container Registry (ACR). The Azure Container Registry is a private registry that stores the Docker images that get deployed to your environment. It is based on the open-source Docker Registry 2.0. Docker images can be pushed to the Container Registry as part of the development workflow (CI/CD pipeline). It is secure, and access can be controlled through service principals and role-based access control (RBAC). It supports both Windows and Linux images. Azure Container Registry Tasks can be used to streamline the CI/CD pipeline. A typical CI workflow is shown in Figure 3.

Figure 3

Apart from Azure Container Registry, you can also use Docker Hub as your container repository. At the enterprise level, the private container registry service offered by the cloud provider is usually preferred for reasons of security, easy administration, and cost management. As the goal of this article is to explain the container services offered by Azure, I am going to use Docker images available in a public Docker Hub repository.

Azure Container Instances

Azure Container Instances is suitable for running isolated containers for use cases like simple applications, task automation, and build jobs. Full container orchestration is not possible with Azure Container Instances. However, creating a multi-container group is still possible. That means your main application container can be combined with supporting containers, such as logging or monitoring containers. This is achieved by sharing the host machine, local network, and storage.

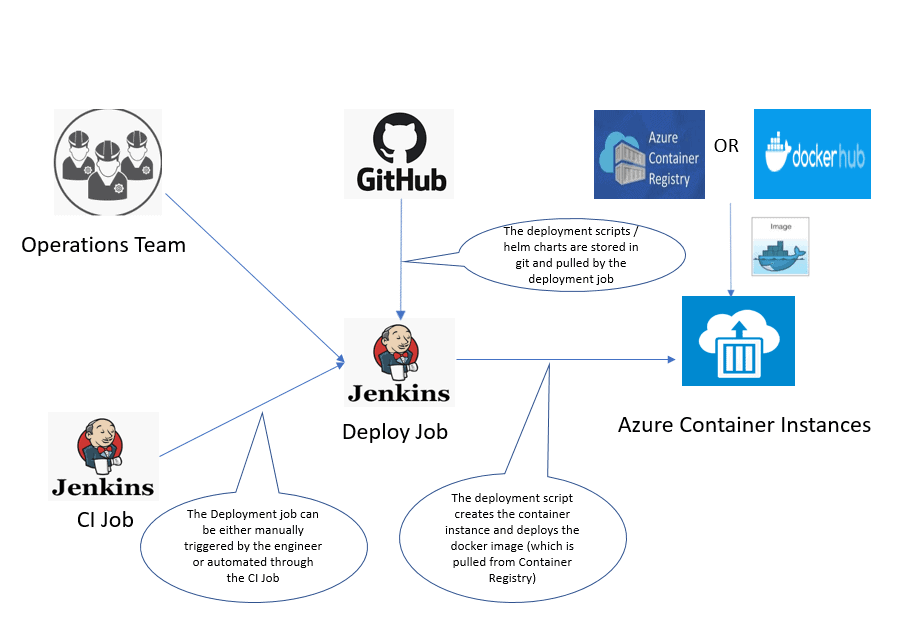

Container Instance Deployment

You can create container instances through the Command Line Interface, Azure Portal, PowerShell, or Azure Resource Manager (ARM) templates. The appropriate deployment method depends on your CI/CD pipeline requirements. PowerShell, ARM templates, or Terraform are preferable for full automation. An ARM template is simply a JSON file that defines the infrastructure and configuration for your Azure resources using declarative syntax. The process of creating the container instances and deploying the images is shown in Figure 4.

Figure 4

Create and run a container instance

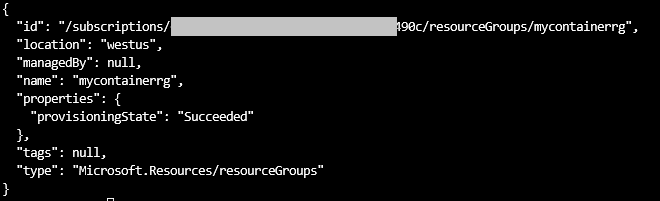

The first step is to create a resource group. All the resources created in this article will be assigned to this resource group named mycontainerrg.

    az group create --name mycontainerrg --location westus

If the above command succeeds, you should see the output below, and the new resource group is created.

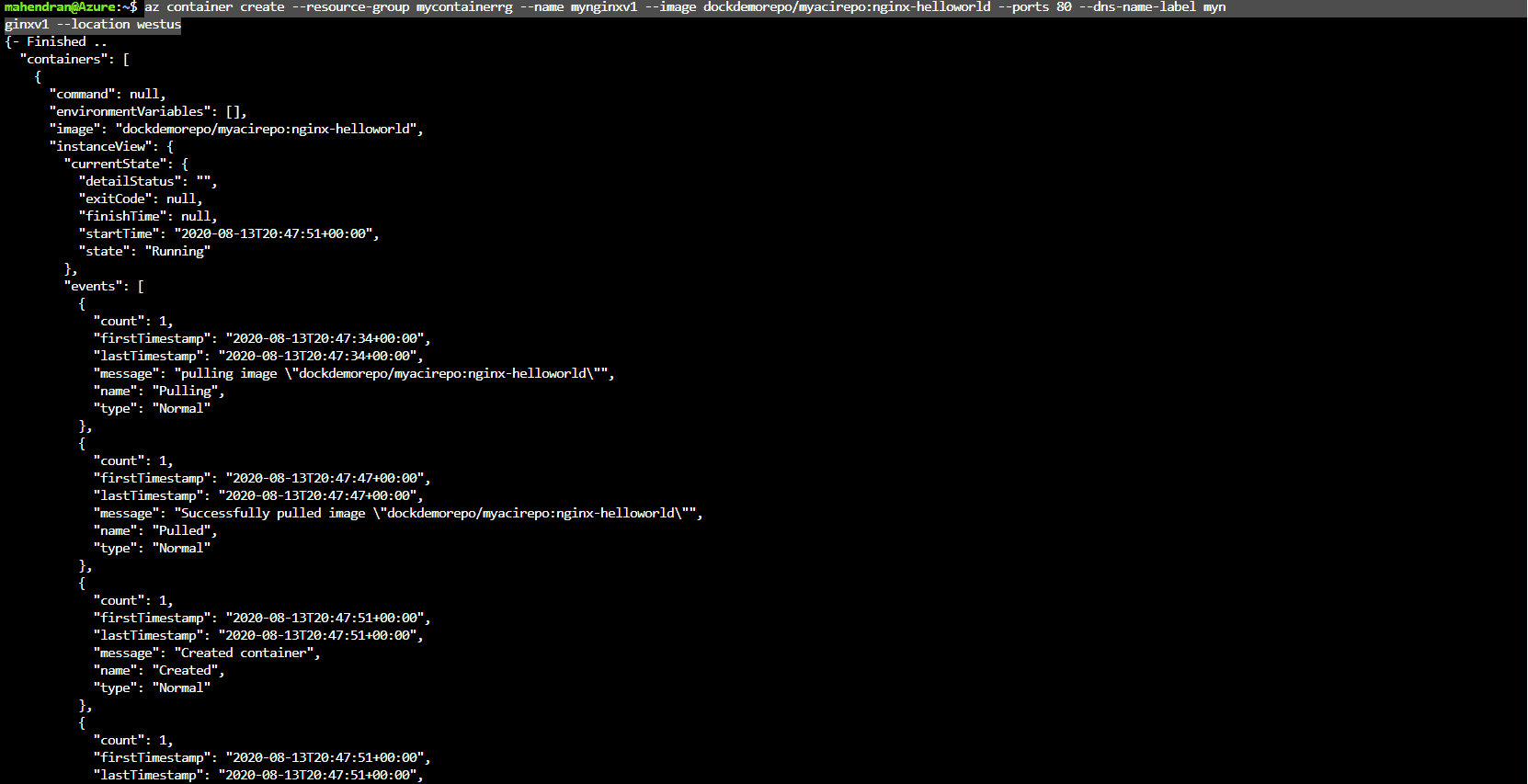

Now create the container instance. This example deploys a container instance with a public IP address and DNS name, which makes the container reachable from the internet. As mentioned before, you can use images from either Docker Hub or the Azure Container Registry. If the image name does not include a registry's fully qualified name, the image is pulled from Docker Hub. To use an image from your private Azure Container Registry instead, set the “--image” option to a value of the form “$FQDN-FOR-AZURE-CONTAINER-REGISTRY/$repositoryname:$tag” (for example, mycontrreg01.azurecr.io/mynginximage/nginx:v1).

    az container create --resource-group mycontainerrg --name mynginxv1 --image dockdemorepo/myacirepo:nginx-helloworld --ports 80 --dns-name-label mynginxv1 --location westus
    # The value for --dns-name-label must be unique. If the name is already taken by someone else in the same location (westus), the command fails.
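The rule for the image value can be sketched as a small shell function. This is an illustration only: `image_ref` is a hypothetical helper name, and it simply joins the parts in the form described above, leaving out the registry when the image should come from Docker Hub.

```shell
# Hypothetical helper: build the value passed to --image.
# $1 = registry login server (empty string means Docker Hub)
# $2 = repository name, $3 = tag
image_ref() {
  local registry="$1" repository="$2" tag="$3"
  if [ -n "$registry" ]; then
    # Private registry: prefix the repository with the registry's FQDN.
    printf '%s/%s:%s\n' "$registry" "$repository" "$tag"
  else
    # No registry given: the short form is resolved against Docker Hub.
    printf '%s:%s\n' "$repository" "$tag"
  fi
}
```

With the names used in this article, `image_ref "" dockdemorepo/myacirepo nginx-helloworld` yields the Docker Hub form, while `image_ref mycontrreg01.azurecr.io mynginximage/nginx v1` yields the private-registry form.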

Once the container is successfully created, the command prints the container's configuration, including its FQDN.

To stop and start the container, you can use the commands below:

    az container stop --name mynginxv1 --resource-group mycontainerrg
    az container start --name mynginxv1 --resource-group mycontainerrg

To view the container-related logs, you can use this command:

    az container logs --name mynginxv1 --resource-group mycontainerrg

To execute the shell commands inside the container, you can use the command below:

    az container exec --exec-command /bin/bash --container-name mynginxv1 --resource-group mycontainerrg --name mynginxv1

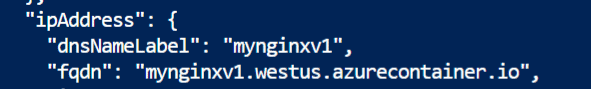

You can access the web page served by NGINX by using the FQDN provided in the container creation output.

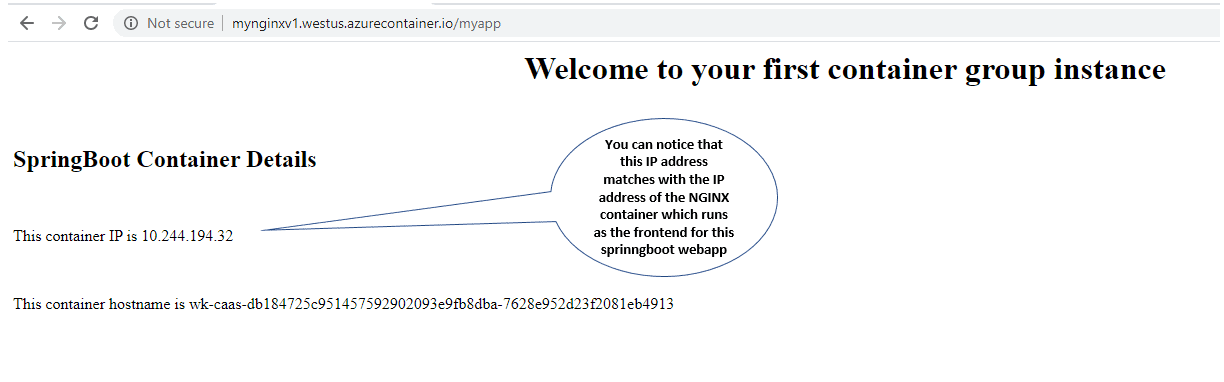

The page should look like Figure 5.

Figure 5
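If you no longer have the creation output at hand, the FQDN can be looked up again. The sketch below assumes the container was created with a DNS name label as shown earlier; `container_url` is a hypothetical helper name.

```shell
# Hypothetical helper: print the HTTP URL of a container instance that has a public DNS label.
# $1 = resource group, $2 = container (group) name
container_url() {
  local rg="$1" name="$2" fqdn
  # ipAddress.fqdn is only populated when a DNS name label was assigned.
  fqdn="$(az container show --resource-group "$rg" --name "$name" \
    --query ipAddress.fqdn --output tsv)" || return 1
  if [ -z "$fqdn" ]; then
    echo "No FQDN assigned to $name" >&2
    return 1
  fi
  echo "http://$fqdn"
}
```

For example, `curl -I "$(container_url mycontainerrg mynginxv1)"` requests the page headers from the command line instead of a browser.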

Container Group

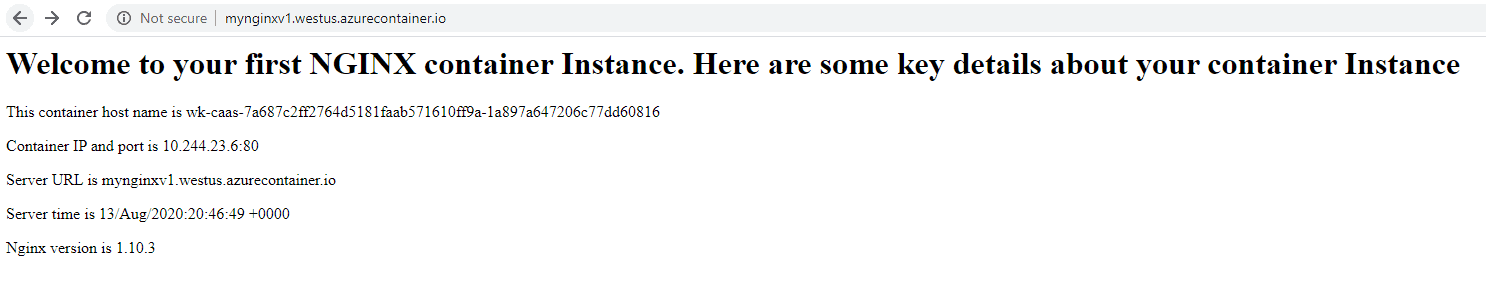

An Azure container group provides the capability to run multiple containers on the same host machine. All the containers in the container group share the resources, network, and storage volumes. Azure supports two methods of running container groups.

With the first method, you create multiple containers in the container group, and those containers connect to each other using localhost and the port each container listens on. One container port can be exposed to the internet through a public IP address and DNS name. However, this does not serve the purpose of multi-service architectures. In other words, you cannot deploy large-scale production workloads with hundreds of services connecting to each other.

The second method is to create multiple containers on an Azure virtual network, but this has limitations, too. Only Linux containers are supported; Windows is not yet supported. These containers also cannot be accessed through the internet: you can neither assign a public IP address nor place a load balancer in front of the containers (a limitation of the load balancers). Finally, global virtual network peering is not possible, which limits container access to within the virtual network.

In this section, you’ll create a container group with two containers running on two different ports that connect to each other. This is method 1 mentioned above. Figure 6 shows the architecture diagram describing the configuration.

Figure 6

The container groups can be created using two methods.

- Azure Resource Manager Template

- YAML file

This example uses the yaml method to create the container group. The easiest way is to export the existing container configuration to the yaml file and update that yaml file with the extra container configuration.

Below is the command to export the existing container's yaml file. It exports the configuration of the container instance (mynginxv1) created in the previous section of this article.

    az container export --output yaml --name mynginxv1 --resource-group mycontainerrg --file mynginxv1.yaml

The above command should create a file named “mynginxv1.yaml”.

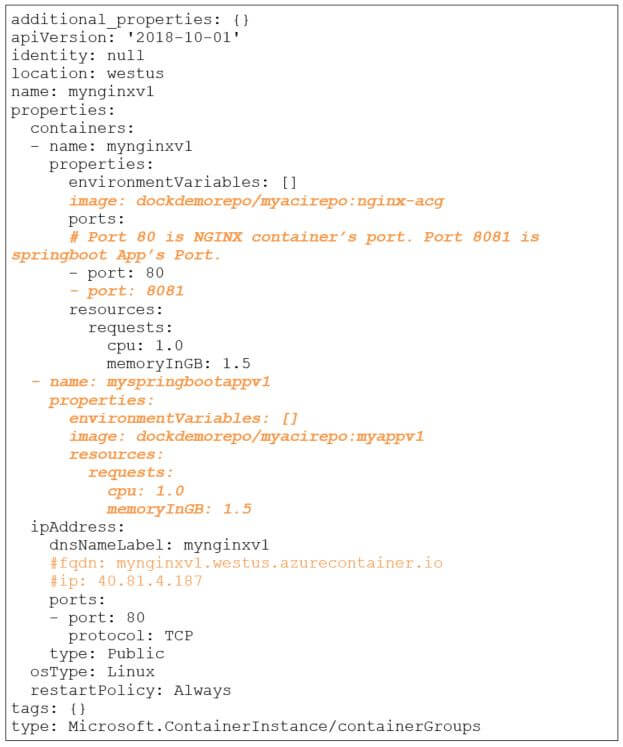

Now edit the yaml file created in the previous step and add the new container’s configuration. The changes are highlighted in the image after the code. You can use any editor you are familiar with; I used the vi editor.

    additional_properties: {}
    apiVersion: '2018-10-01'
    identity: null
    location: westus
    name: mynginxv1
    properties:
      containers:
      - name: mynginxv1
        properties:
          environmentVariables: []
          image: dockdemorepo/myacirepo:nginx-acg
          ports:
          # Port 80 is the NGINX container's port. Port 8081 is the Spring Boot app's port.
          - port: 80
          - port: 8081
          resources:
            requests:
              cpu: 1.0
              memoryInGB: 1.5
      - name: myspringbootappv1
        properties:
          environmentVariables: []
          image: dockdemorepo/myacirepo:myappv1
          resources:
            requests:
              cpu: 1.0
              memoryInGB: 1.5
      ipAddress:
        dnsNameLabel: mynginxv1
        #fqdn: mynginxv1.westus.azurecontainer.io
        #ip: 40.81.4.187
        ports:
        - port: 80
          protocol: TCP
        type: Public
      osType: Linux
      restartPolicy: Always
    tags: {}
    type: Microsoft.ContainerInstance/containerGroups

Before creating the container group, be sure to delete the container you created in the previous section using this command:

    az container delete --name mynginxv1 --resource-group mycontainerrg

The next step is to create the container group using the below command.

    az container create --resource-group mycontainerrg --file mynginxv1.yaml

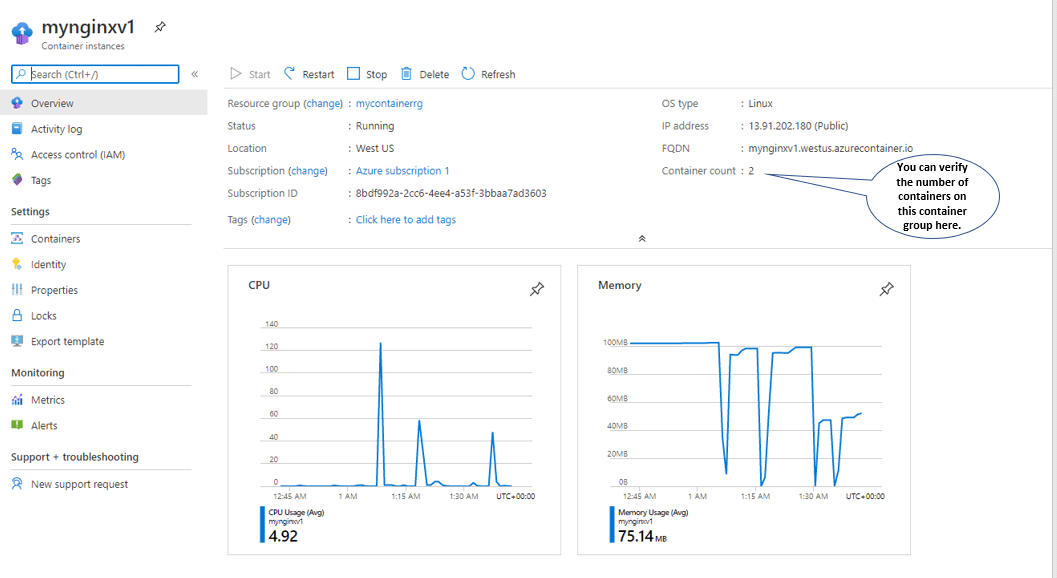

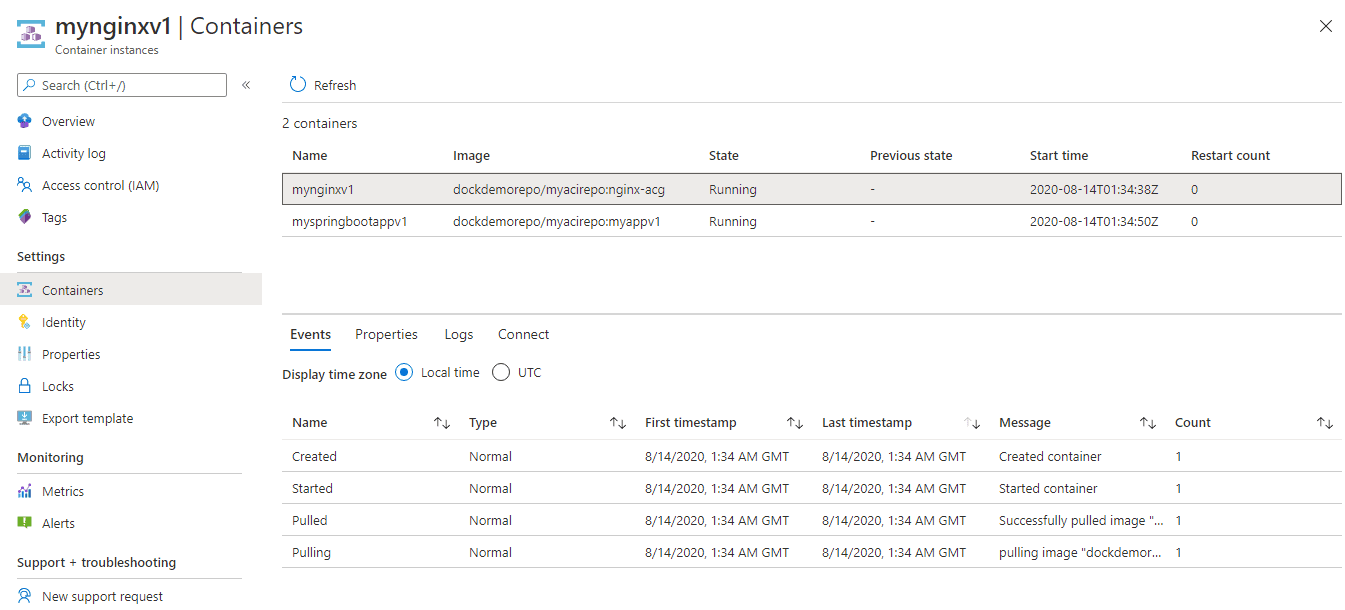

You can verify that the containers were created through the Azure Portal, as shown in Figures 7 and 8.

Figure 7

Figure 8
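The same check can be done from the command line. The sketch below polls the container group until it reports the state Running; `wait_for_group` is a hypothetical helper name, and the default of 30 attempts at 10-second intervals is an arbitrary assumption.

```shell
# Hypothetical helper: poll a container group until its state is "Running".
# $1 = resource group, $2 = container group name
# $3 = number of attempts (default 30), $4 = seconds between attempts (default 10)
wait_for_group() {
  local rg="$1" name="$2" attempts="${3:-30}" delay="${4:-10}"
  local state="" i=1
  while [ "$i" -le "$attempts" ]; do
    # instanceView.state holds the state of the whole container group.
    state="$(az container show --resource-group "$rg" --name "$name" \
      --query instanceView.state --output tsv 2>/dev/null)" || state=""
    if [ "$state" = "Running" ]; then
      echo "Container group $name is running"
      return 0
    fi
    sleep "$delay"
    i=$((i + 1))
  done
  echo "Container group $name not running after $attempts checks (last state: ${state:-unknown})" >&2
  return 1
}
```

Calling `wait_for_group mycontainerrg mynginxv1` right after the create command gives a script a clear success or failure signal before it tries to reach the containers.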

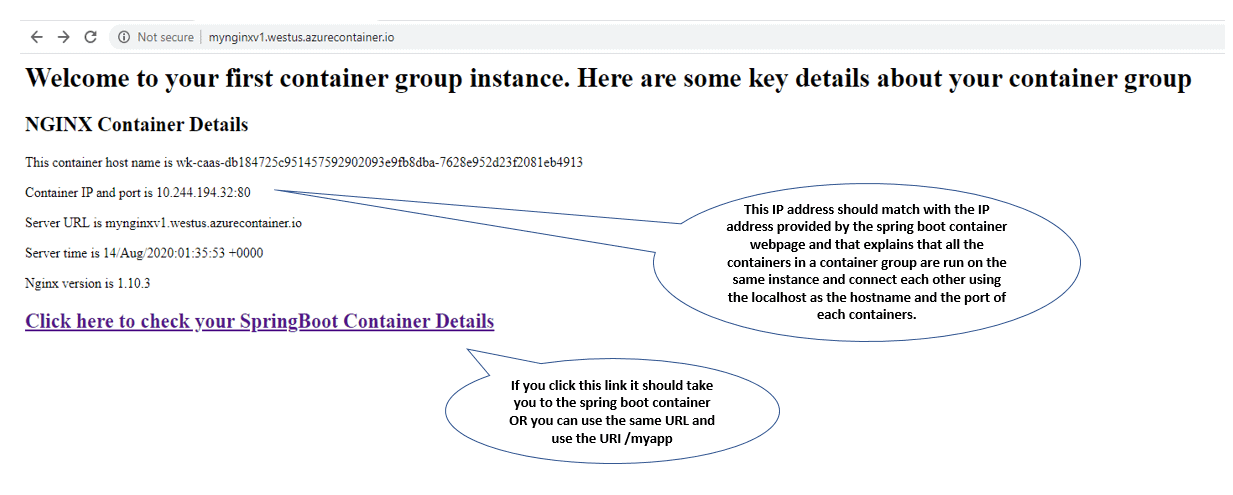

You can access both containers, as shown in Figure 9 and Figure 10.

Figure 9

Figure 10

As the previous pictures show, both containers have the same IP address, which confirms that multiple containers can run in the same container group and connect to each other.

Azure Kubernetes Service (AKS)

Azure Kubernetes Service (AKS) is a managed Kubernetes offering. It enables easy deployment and management of Kubernetes clusters in Azure, and Azure handles the monitoring and maintenance of the clusters for you. Azure manages the Kubernetes master nodes and leaves the management of the worker nodes to you. The Kubernetes service itself is free; you pay only for the worker nodes that run the PODs (in Kubernetes, a POD is a group of one or more containers). An AKS cluster can be created through the CLI, Resource Manager templates, Terraform, or the Azure Portal.

Azure Kubernetes Service is suitable for production container workloads that demand full orchestration capabilities like scheduling, failover, service discovery, scaling, health monitoring, advanced networking/routing, security, and easy application upgrades. Azure Kubernetes Service supports both Linux and Windows containers.

Next, you’ll create a Kubernetes cluster and deploy to it the same Docker image that was deployed to Azure Container Instances earlier. This example uses the Azure CLI to create the cluster and deploy the application.

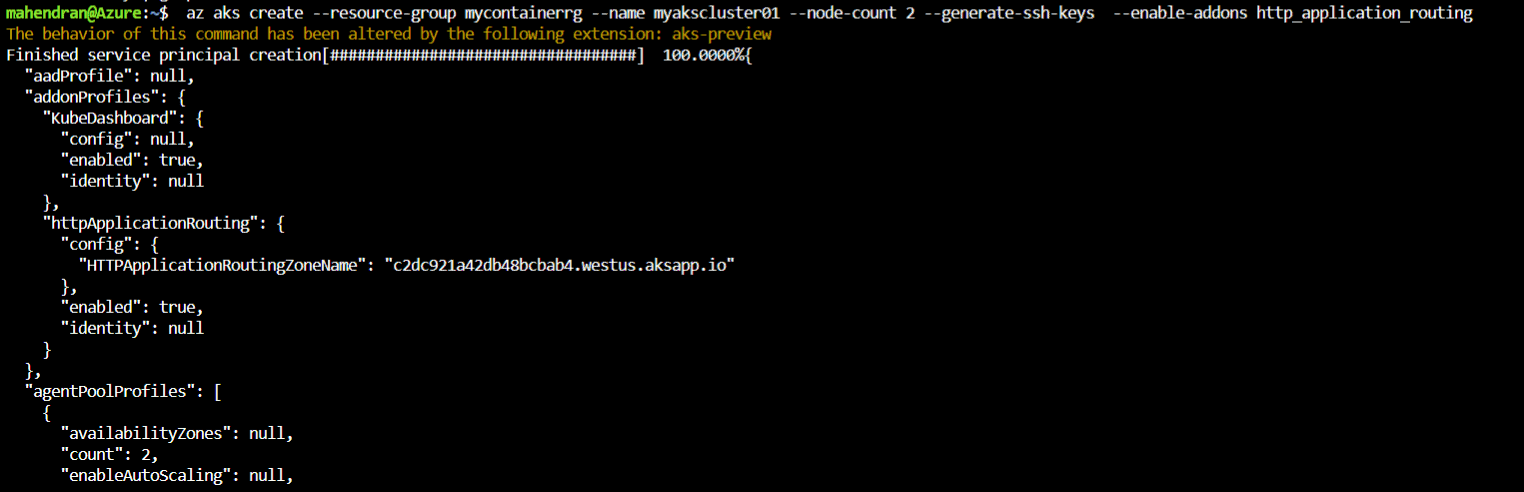

Create an Azure Kubernetes Cluster with 3 Nodes

    az aks create --resource-group mycontainerrg --name myakscluster01 --node-count 3 --generate-ssh-keys --enable-addons http_application_routing

https://github.com/Azure/azure-cli-extensions/issues/1187

If the above command is successful, you should see the below output and successful cluster creation.

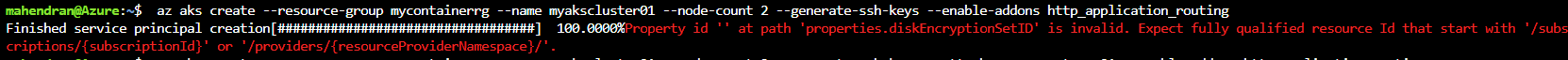

Please note – I ran into the below error, and the reason is the azure AKS CLI version. The Azure CLI on my cloud-shell had the bug related to this error. So, I had to add the “aks-preview” extension to resolve the error.

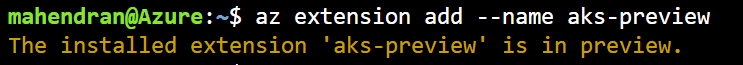

You can add the aks-preview extension by using the command below:

    az extension add --name aks-preview

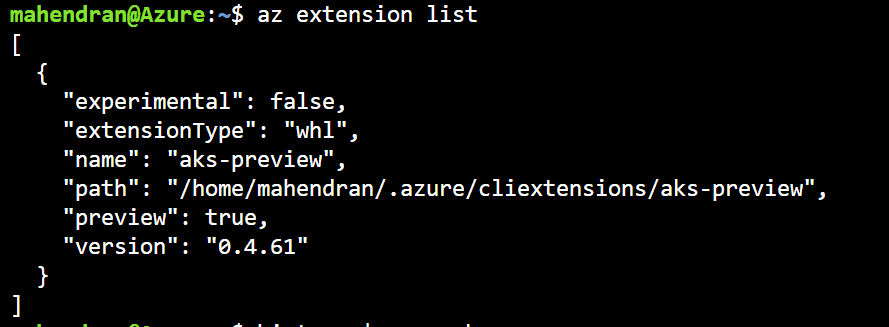

You can list the extensions by using the command below:

    az extension list

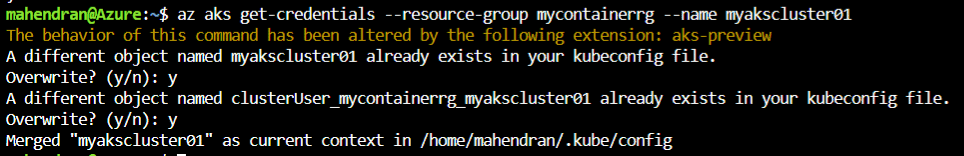

After creating the cluster, execute the following command to configure kubectl in your Cloud Shell (or on your local machine, if you are running the commands from there).

    az aks get-credentials --resource-group mycontainerrg --name myakscluster01

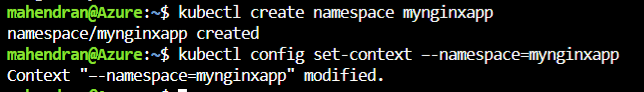

Now, create a new namespace for the application. Namespaces logically group the cluster resources in Kubernetes and are used in environments with many users spread across multiple teams or projects.

    kubectl create namespace mynginxapp

You can set this namespace at the kubectl config level so that all future kubectl commands execute under this namespace:

    kubectl config set-context --current --namespace=mynginxapp

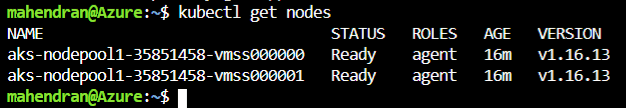

Now, you can use kubectl commands to interact with and manage the Kubernetes cluster.

    kubectl get nodes
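When scripting, you may want to confirm that all three worker nodes are ready before deploying anything. The following sketch counts the nodes whose STATUS column is exactly Ready; `ready_node_count` is a hypothetical helper name, and the parsing assumes the default column layout of `kubectl get nodes`.

```shell
# Hypothetical helper: count nodes whose STATUS column is exactly "Ready".
# Nodes reported as "NotReady" or "Ready,SchedulingDisabled" are not counted.
ready_node_count() {
  # --no-headers drops the column titles; STATUS is the second column.
  kubectl get nodes --no-headers | awk '$2 == "Ready" { n++ } END { print n + 0 }'
}
```

For the cluster created above, a script could continue only when `[ "$(ready_node_count)" -eq 3 ]` holds.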

Below are the commands to stop and start the worker nodes in the AKS cluster. The az vmss list command lists the virtual machine scale set information; take the resource group and scale set name from its output and use them in the next commands:

    az vmss list
    az vmss stop --resource-group MC_MYCONTAINERRG_MYAKSCLUSTER01_WESTUS --name aks-nodepool1-35851458-vmss
    az vmss start --resource-group MC_MYCONTAINERRG_MYAKSCLUSTER01_WESTUS --name aks-nodepool1-35851458-vmss

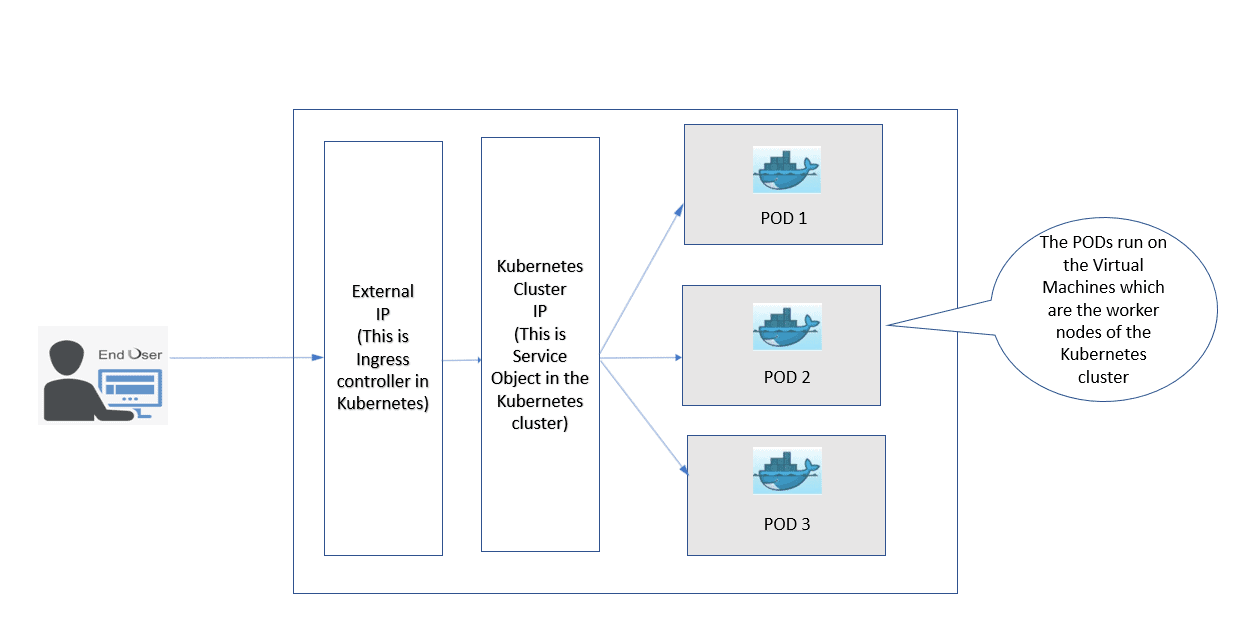
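Instead of reading the resource group and scale set name from the full listing, you can look them up for one cluster. This is a sketch under the assumption that the worker nodes run in virtual machine scale sets inside the cluster's node resource group; `aks_scale_sets` is a hypothetical helper name.

```shell
# Hypothetical helper: print "<node resource group> <scale set name>" for each
# scale set that backs the given AKS cluster.
# $1 = resource group of the cluster, $2 = cluster name
aks_scale_sets() {
  local rg="$1" cluster="$2" node_rg
  # nodeResourceGroup is the MC_... group that holds the worker node resources.
  node_rg="$(az aks show --resource-group "$rg" --name "$cluster" \
    --query nodeResourceGroup --output tsv)" || return 1
  az vmss list --resource-group "$node_rg" --query "[].name" --output tsv |
    while IFS= read -r vmss; do
      printf '%s %s\n' "$node_rg" "$vmss"
    done
}
```

Each output line provides the two values needed for the --resource-group and --name options of az vmss stop and az vmss start.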

The next step is to deploy the application to the Kubernetes cluster. First create the deployment yaml file, and then apply that yaml to the Kubernetes cluster using the kubectl command. The POD deployment flow is shown in Figure 11.

Figure 11

The diagram in Figure 12 describes the architecture pattern of the application.

Figure 12

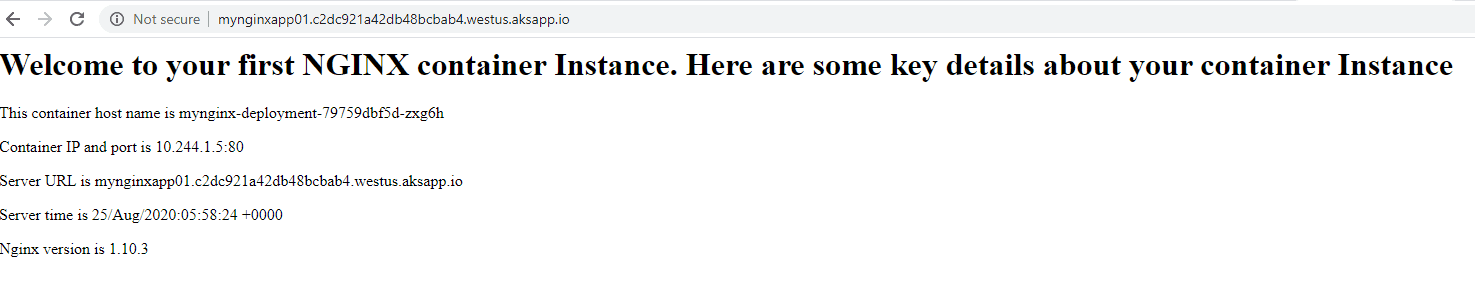

The yaml file below (save it as mynginxapp01.yaml) deploys the application to the Kubernetes cluster, creates the ClusterIP service, and creates the Ingress resource that enables external access to the application. Before saving the file, look for the host value and replace the zone part of it with the HTTPApplicationRoutingZoneName (for example, c2dc921a42db48bcbab4.westus.aksapp.io) from the output of the cluster creation. You can also execute the command “az aks list”; its output shows this value.

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: mynginx-deployment
    spec:
      selector:
        matchLabels:
          app: mynginxapp01
      replicas: 2
      template:
        metadata:
          labels:
            app: mynginxapp01
        spec:
          containers:
          # If you need to deploy multiple containers on the same POD, you can add the container configs below. This POD contains one container.
          - name: mycluster01
            image: dockdemorepo/myacirepo:nginx-helloworld
            ports:
            - containerPort: 80
            resources:
              requests:
                cpu: 1
                memory: 250Mi
              limits:
                cpu: 1
                memory: 250Mi
            livenessProbe:
              httpGet:
                path: /myhtml.html
                port: 80
              initialDelaySeconds: 10
              periodSeconds: 5
            readinessProbe:
              httpGet:
                path: /myhtml.html
                port: 80
              initialDelaySeconds: 10
              periodSeconds: 5
    ---
    apiVersion: v1
    kind: Service
    metadata:
      name: my-external-lb
    spec:
      ports:
      - port: 80
        protocol: TCP
        targetPort: 80
      selector:
        app: mynginxapp01
      type: ClusterIP
    ---
    apiVersion: extensions/v1beta1
    kind: Ingress
    metadata:
      name: mynginxapp01
      annotations:
        kubernetes.io/ingress.class: addon-http-application-routing
    spec:
      rules:
      - host: mynginxapp01.c2dc921a42db48bcbab4.westus.aksapp.io
        http:
          paths:
          - backend:
              serviceName: my-external-lb
              servicePort: 80
            path: /
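The host value for the Ingress rule can also be assembled from the command line. The sketch below assumes the cluster was created with the HTTP application routing add-on, as in this article, and that the add-on exposes its DNS zone under the query path shown; `ingress_host` is a hypothetical helper name.

```shell
# Hypothetical helper: build the Ingress host name "<app>.<routing zone>".
# $1 = application name used as the host prefix
# $2 = resource group of the cluster, $3 = cluster name
ingress_host() {
  local app="$1" rg="$2" cluster="$3" zone
  # Assumption: the add-on reports its DNS zone under this addonProfiles path.
  zone="$(az aks show --resource-group "$rg" --name "$cluster" \
    --query addonProfiles.httpApplicationRouting.config.HTTPApplicationRoutingZoneName \
    --output tsv)" || return 1
  if [ -z "$zone" ]; then
    echo "HTTP application routing zone not found for $cluster" >&2
    return 1
  fi
  echo "$app.$zone"
}
```

Running `ingress_host mynginxapp01 mycontainerrg myakscluster01` prints the value to place in the host field of the yaml file.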

After creating this file, use the below command to create the Kubernetes resources.

    kubectl apply -f mynginxapp01.yaml

This command should create the resources listed below on your AKS cluster.

- A Deployment with 2 PODs (replicas: 2 in the yaml file).

- The ClusterIP service, which routes traffic from the Ingress controller to the PODs.

- The Ingress resource, which exposes the application externally.
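To confirm that the deployment finished rolling out, a check along the following lines can be used. It is a sketch only: `verify_deployment` is a hypothetical helper name, the defaults reuse the namespace, deployment name, and label from this article, and the 120-second timeout is an arbitrary assumption.

```shell
# Hypothetical helper: wait for the rollout to finish, then list the application's PODs.
# $1 = namespace (default mynginxapp), $2 = deployment name (default mynginx-deployment)
verify_deployment() {
  local namespace="${1:-mynginxapp}" deployment="${2:-mynginx-deployment}"
  # rollout status blocks until all replicas are available or the timeout expires.
  kubectl rollout status "deployment/$deployment" --namespace "$namespace" --timeout=120s &&
    kubectl get pods --namespace "$namespace" --selector app=mynginxapp01 --output wide
}
```

Calling `verify_deployment` after kubectl apply returns a non-zero exit code if the PODs do not become available in time, for instance when the liveness or readiness probes fail.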

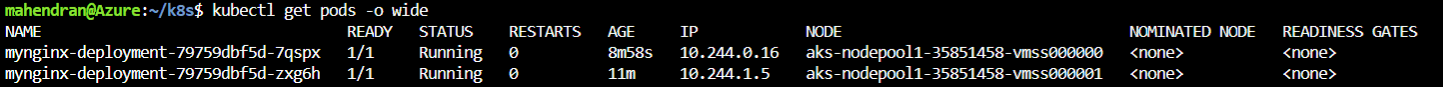

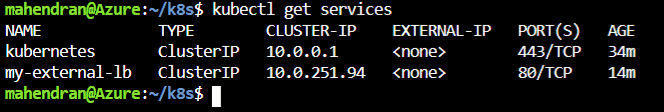

You can get the details of these resources using the below commands.

|

1 |

$kubectl get pods -o wide |

|

1 |

$kubectl get services |

|

1 |

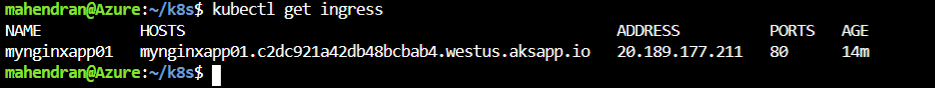

$kubectl get ingress |

Now, you can access the deployed application, as shown in Figure 13.

Virtual Nodes

Azure provides the capability to extend the Kubernetes service into the container instance service through the Virtual Nodes. Using virtual nodes, you can quickly launch the PODs without spending extra effort to scale up or scale out your Kubernetes worker nodes (virtual machine scale sets). To enable this feature, you should use the advanced Kubernetes networking provided by Azure CNI. However, using virtual nodes has its own limitations:

- Host aliases cannot be used.

- Cannot deploy DaemonSets to the virtual nodes.

- Cannot use a service principal to pull ACR images. You must use Kubernetes secrets for this.
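As a rough sketch of how the feature is switched on for an existing cluster: the function below wraps the add-on command. It assumes the cluster already uses Azure CNI networking and that a dedicated subnet for the virtual nodes exists in the cluster's virtual network; `enable_virtual_nodes` and the subnet name are hypothetical.

```shell
# Hypothetical helper: enable the virtual node add-on on an existing AKS cluster.
# $1 = resource group, $2 = cluster name
# $3 = name of an existing subnet reserved for virtual nodes (assumed to exist)
enable_virtual_nodes() {
  local rg="$1" cluster="$2" subnet="$3"
  if [ -z "$subnet" ]; then
    echo "A subnet name for the virtual nodes is required" >&2
    return 1
  fi
  az aks enable-addons --resource-group "$rg" --name "$cluster" \
    --addons virtual-node --subnet-name "$subnet"
}
```

A call might look like `enable_virtual_nodes mycontainerrg myakscluster01 myVirtualNodeSubnet`, where the subnet name is a placeholder for one you created.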

Summary

Azure Container Instances can be used to run light workloads and simple applications like build jobs or task automation. For large-scale production workloads, with hundreds of interconnected services developed and managed by many service teams, Azure Kubernetes Service is the better choice.

This article walked you through creating both solutions using the Azure Cloud Shell and Azure CLI commands.
