When I first recovered from the surprise of seeing the huge number of heaps in the database project that I’d just become involved with, I was left with a burning curiosity as to how and why it had been done that way. After all a heap, which is a table without a clustered index, isn’t generally useful in SQL Server. It was then explained to me that all Clustered Indexes have been removed to curb the heavy frequency of deadlocks that afflicted the system. When they reengineered their database structure, the deadlocks indeed went, but the team had not considered the drawbacks that would be the inevitable consequence of working with heaps – and these drawbacks were on top of the disadvantages of losing the indexes! In this article, I’ll be describing just one of these drawbacks, that of the complications of DELETE operations on heaps.

Preamble

I am not one of those who argue that heaps have no place in a SQL Server database. I personally favor using heaps as often as possible: Data Warehouses are good candidates for heaps. My own opinion about heaps has been influenced by two very interesting blog posts about the difference of Heaps and Clustered Indexes.

- Unreasonable defaults: Primary Key as Clustering Key Markus Wienand

- Clustered Index vs. Heap Thomas Kejser

Although it is generally better to use clustered indexes than heaps, especially for reasons of maintenance, it is not universally so. However, you need to be aware of the drawbacks of heaps. The reason for these “drawbacks” are based on the structure of a nonclustered index. A nonclustered index in a heap stores the position of the record because there is no order criteria for a lookup. If a heap gets rebuilt, all the nonclustered indexes have to be updated, too because the position of the records changes. I recommend to my customers that, before they decide between a heap and a clustered index, they should analyze the following:

- the workloads

- the type of SELECT statements

- the impact to nonclustered indexes

- the maintenance requirements (fragmentation, forwarded records, DML operations)

When all conditions are perfect, then it is better to use a Heap rather than a Clustered Index. When one of these conditions are wrong, then you are likely to regret the choice of heaps.

A customer of mine failed to analyze these three issues, focusing only on the second option. What the team didn’t explore sufficiently was the deep impact of DELETE operations on Heaps and the impact of UPDATE operations in conjunction with so called Forwarded Records (you can read more about the drawbacks of Forwarded Records here: ‘How forwarded records are read and processed in a sql server heap‘. To illustrate the impact in DELETE operations, we’ll need to run a test.

Environment for test

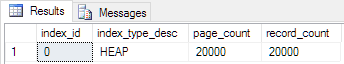

We will use a simple heap structure for all the following examples. The size of a record is 8,004 bytes so only one record will fit on a data page. The table will be filled with 20,000 records.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 |

-- Create a HEAP for the demo CREATE TABLE dbo.demo_table ( Id INT NOT NULL IDENTITY (1, 1), C1 CHAR(8000) NOT NULL DEFAULT ('Das ist nur ein Test') ); GO -- Now we fill the table with 20,000 records SET NOCOUNT ON; GO INSERT INTO dbo.demo_table WITH (TABLOCK) (C1) SELECT TOP 20000 text FROM sys.messages; When the table is filled up, we will have a total of 20,001 data pages in the buffer pool of the Microsoft SQL Server. -- what resource of the table dbo.demo_table are in the buffer pool now! ;WITH db_pages AS ( SELECT DDDPA.page_type, DDDPA.allocated_page_file_id, DDDPA.allocated_page_page_id, DDDPA.page_level, DDDPA.page_free_space_percent, DDDPA.is_allocated sys.dm_db_database_page_allocations ( DB_ID(), OBJECT_ID(N'dbo.demo_table', N'U'), NULL, NULL, 'DETAILED' ) AS DDDPA ) SELECT DOBD.file_id, DOBD.page_id, DOBD.page_level, DOBD.page_type, DOBD.row_count, DOBD.free_space_in_bytes, DP.page_free_space_percent, DP.is_allocated FROM sys.dm_os_buffer_descriptors AS DOBD INNER JOIN db_pages AS DP ON ( DOBD.file_id = DP.allocated_page_file_id AND DOBD.page_id = DP.allocated_page_page_id AND DOBD.page_level = DP.page_level ) WHERE DOBD.database_id = DB_ID() ORDER BY DP.page_type DESC, DP.page_level DESC, DOBD.page_id, DOBD.file_id; |

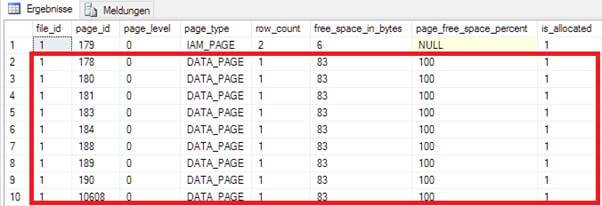

Every data page of the heap is completely filled up.

With the next statement 1,000 records will be deleted from the Heap. Due to the fact that only ONE record fits on a data page, we would expect that the page for each deleted record would then become empty, and ready to be recycled.

|

1 2 3 4 |

-- Now we delete half of the records SET ROWCOUNT 2000; DELETE dbo.demo_table WHERE Id % 2 = 0; |

By analyzing the buffer pool we can see when data is deleted from a heap and the data pages become free.

We would expect that empty data pages will be deallocated and given back to the database, but what has happened is very different. The result above shows the allocated buffer pool. The empty data pages allocate the buffer pool and – although they don’t have any data stored – they consume 8.192 bytes. With 1,000 deleted records this adds up to a wasted reservoir of nearby 8 MB in the buffer pool.

Why is this happening? We need to investigate further.

Reading data pages in a Heap

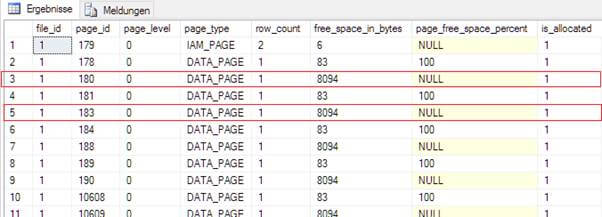

When a heap is used, data can only be accessed by a Table Scan. A Table Scan means always accessing the entire table. When all data has been read, the data will be filtered by the dedicated predicate.

|

1 |

SELECT</code> <code>* FROM</code> <code>dbo.demo_table WHERE</code> <code>Id = 10 OPTION</code> <code>(QUERYTRACEON 9130); |

The above example generates the following execution plan. Please note that TF 9130 has been used to make the FILTER-Operator visible in the execution plan!

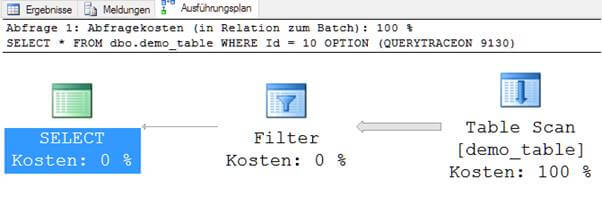

The structure of a Heap is, by definition, a bunch of data without any specific order. For this reason a Heap works like a jigsaw puzzle, and finding data in the heap is the same as having to check every single piece of the puzzle. Another important difference to any index structure is the fact that data pages don’t have a sequence. A single data page does not know where the next or the previous one is.

A data page in a heap is isolated and controlled by the IAM page[1] (Index Allocation Map) of the table. Think about the IAM as the container which holds all the data pages together. When, for example, Microsoft SQL Server accesses page 110 it does not know what or where the next one is. For that reason, Microsoft SQL Server reads the IAM data page first to determine what pages it has to read for a full table scan. Based on this information, the process can start and every single data page can be read. This process is called “Allocation Scan”[2].

Deleting records from a Heap

By the very fact that a Heap does not have any ordering key, it then seems obvious that a DELETE or UPDATE operation requires a TABLE SCAN in the same way as the reading process.

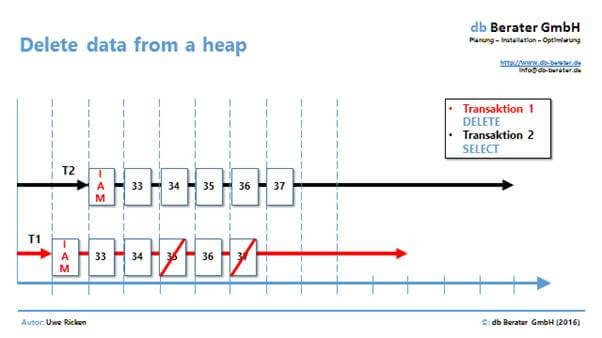

The above picture shows two processes which want to access the heap. While transaction 1 (T1) is accessing the table for a DELETE operation the Transaction 2 (T2) wants to read the data for a different process.

Both processes have to read the IAM page first. As soon as information on the IAM has been consumed, both processes can start with their work. What would then happen if T1 deallocates page 36 once the records have been deleted and the page becomes empty? If T1 deallocates the page, then the process with T2 would run into a failure. Because T2 has already read the IAM it expects to be able to read the data page.

For this reason, Microsoft SQL Server left all data pages from a heap as allocated, but empty, data pages for the Heap structure. This guarantees that deletes are independent of any other process which access the heap.

Deallocation of empty data pages

There are four ways of deallocating empty data pages back to the database after deletes so that they can be reused.

- Use a Clustered Index instead of a Heap

- Rebuild the Heap by using ALTER TABLE[3]

- Delete the records with an exclusive table lock

- Use automatic lock escalation when huge amount of data are deleted

Option 1 and 2 does not need any more explanation, so let’s just focus on the last two options:

Deleting records WITH (TABLOCK)

The simplest way of removing empty data pages from a heap is via the exclusive locking of the table. But keep in mind that there is no such thing as a free lunch. Blocking the table from other processes will lead to a system that will not scale. As long as the DELETE process locks the table, no other process can access the table. The only exception is the “SNAPSHOT ISOLATION” but explaining the pros and cons of this technique is outside the scope of this article.

The next example (with the new created table) demonstrates the behavior.

— Now we delete 2000 records

|

1 2 3 4 |

BEGIN TRANSACTION; GO DELETE dbo.demo_table WITH (TABLOCK) WHERE Id <= 2000; |

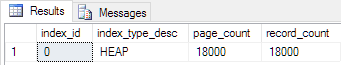

As soon as the process has finished, Microsoft SQL Server has released 2,000 pages back to the database.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 |

-- what pages have been allocated by the table SELECT DDIPS.index_id, DDIPS.index_type_desc, DDIPS.page_count, DDIPS.record_count FROM sys.dm_db_index_physical_stats ( DB_ID(), OBJECT_ID(N'dbo.demo_table', N'U'), 0, NULL, N'DETAILED' ) AS DDIPS |

Microsoft SQL Server can release the data pages from the table without risk because its IAM is protected with an exclusive lock from use by any other process.

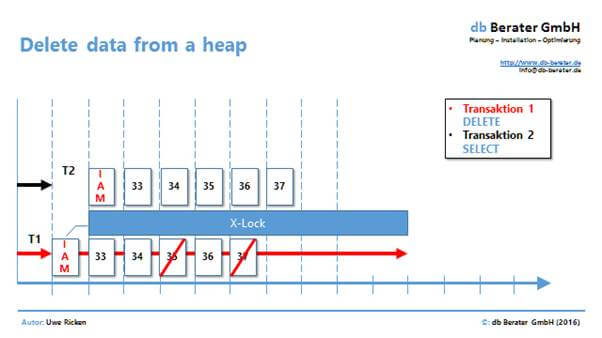

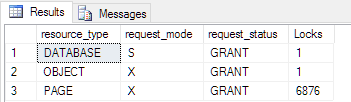

Transaction 1 (T1) starts the DELETE process with an exclusive lock on the table. This exclusive lock extends to the IAM, too. As long as the DELETE process is processing, it holds this exclusive lock on the table. While T1 is processing the task, the transaction 2 (T2) has to wait. A SELECT statement cannot start because in order to do so, T2 need to read the IAM page. The obvious drawback of this type of process implementation is the loss of scalability.

|

1 2 3 4 5 6 7 8 9 10 11 |

- output of aquired / released locks DBCC TRACEON (3604, 1200, -1); GO -- delete 1,000 records SET ROWCOUNT 2000; DELETE dbo.demo_table WITH (TABLOCK) WHERE Id % 2 = 0; GO -- deactivate the output of locks DBCC TRACEOFF (3604, 1200, -1); GO |

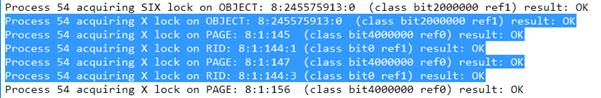

The above code example demonstrates the locking behavior of the process. The following depiction shows the implemented locks.

The DELETE process requires first an X-lock on the table (OBJECT: 8:245575913). After the table has been locked exclusively the data pages and records can/will be locked, too for deallocation. Due to the exclusive lock on the table, the IAM is “safe” and no other process can access the table.

Lock Escalation with huge amount of data

By using the TABLOCK hint, we were able to show that a controlled process can be initiated to delete and deallocate data pages from a Heap. A strange behavior might occur if you delete a huge amount of data from a table without exclusive locking of the table. Microsoft SQL Server is using “Lock escalation”[4] to take memory pressure from the system when too many locks would otherwise hog the available RAM.

To escalate locks, the Database Engine attempts to change the intent lock on the table to the corresponding full lock. If the lock escalation attempt succeeds and the full table lock is acquired, then all heap or B-tree, page (PAGE), or row-level (RID) locks held by the transaction on the heap or index are released. If the full lock cannot be acquired, no lock escalation happens at that time and the Database Engine will continue to acquire row, key, or page locks. The Database Engine does not escalate row or key-range locks to page locks, but escalates them directly to table locks. Similarly, page locks are always escalated to table locks.

The rough threshold for lock escalation is ~5,000 locks. If we want to delete >= 5,000 records from a Heap it is likely that Microsoft SQL Server will set an exclusive-lock (Xlock) on the table. Once the table is fully locked, no other process can then read the IAM page so it is safe to delete and release the data pages in the table.

The following example demonstrates a lock escalation and its side effects to the release of data pages in the Heap. The given demo table is filled with 20,000 records and a DELETE command will delete half of them without any table hints!

To filter the log activities of the DELETE process only we use a named transaction. This technique makes it much easier to filter for dedicated log records.

|

1 2 3 4 5 6 7 8 |

-- Now we delete half of the records BEGIN TRANSACTION demo; GO DELETE dbo.demo_table WHERE Id % 2 = 0; GO COMMIT TRANSACTION demo; GO |

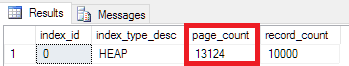

When the 10,000 records have been deleted, we check again the allocated data pages and find the following results:

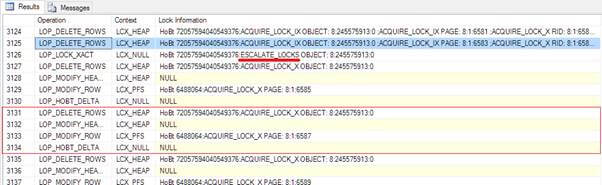

Until we investigate a bit deeper, the counter of the allocated data pages makes no sense. We’d expect all 20,000 data pages or, because of lock escalation, only 10,000 data pages. While the DELETE process did happen, the following locks have been recorded:

Let’s do a little math: We started with 20,000 allocated records and 6,876 pages are exclusively locked. When we subtract the locked pages, the result is exactly the value of the remaining data pages from the above picture. A look into the transaction log will give the answer WHY there is such a curious number of pages left!

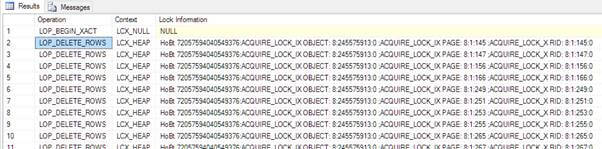

This picture shows the start of the DELETE process in the transaction log. You can clearly see the row-based locking for every single DELETE command (AQUIRE_LOCK_X_RID). At the beginning Microsoft SQL Server didn’t need a lock escalation and it deletes “row by row” with granular locks.

Beginning with line 3,126 in the transaction lock the situation completely changes. Up to this point Microsoft SQL Server has deleted 3.124 records from the table WITHOUT deallocating the data pages. Microsoft SQL Server couldn’t deallocate the pages because there was NO exclusive lock on the table!

Beginning with record 3,125 Microsoft SQL Server has too many locks open, and needs to escalate the locks (Line 3,126). When the table is locked, Microsoft SQL Server changes the way that it deletes records from the table:

- The record will be deleted from the data page (LOP_DELETE_RECORD)

- The header of the page will be updated (LOP_MODIFY_HEADER)

- The PFS Page need to be updated, too (LOP_MODIFY_ROW | LCK_PFS)

- Deleting the allocation in the IAM (LOP_HOBT_DELTA)

To sum up: the first records will be deleted without deallocation of pages because the table is not exclusively locked. When an internal threshold is reached and a lock escalation happens, all ongoing DELETE statements will deallocate the data pages and release them back to the database.

Conclusion

Heaps have lots of advantages and disadvantages. Before you start with the implementation of heaps in your database you have to remember that they are high-maintenance in comparison to Clustered Indexes. A Heap handles DML-Operations completely differently; so a Heap should be the choice when:

- The table is consuming data primarily with INSERT

- The table is autarchic and has no relation to any other table in the database

- The columns of the table are using fixed-length datatypes, thereby avoiding Forwarded Records.

If you have volatile data in your table and you need to delete data from a heap, you would do better with a Clustered Index. This is because data pages will not automatically be deallocated from a Heap and your database will be growing unnecessarily. By using exclusive locks on the table when deleting rows, you will lose the great benefit of granularity and concurrency for this object. This is an unusual risk that you have to consider when you work with Heaps.

Thank you for reading!

Load comments