Executive Summary

Extended Events in SQL Server provide granular event monitoring – but without automation, the data collection process requires a DBA to manually start sessions, wait for events, and extract results. This article covers automating the collection pipeline: creating permanent tables to store event XML and parsed results; building a stored procedure that manages the Extended Events session lifecycle (create if missing, read new events, store to permanent tables, truncate the ring buffer); scheduling execution via SQL Agent; and a complete deadlock monitoring example. The stored procedure approach provides a reusable template that any SQL Server monitoring requirement can adapt.

Extended Events are an excellent way to collect data about a SQL Server that provides a vast array of events that can be used for performance monitoring, troubleshooting, or auditing a server.

While using Extended Events is not overly complex, building a reliable system to collect, parse, and store events over time without any data loss can be challenging.

This article dives into one method for collecting and retaining all event data for a specific set of events. A system like this is highly customizable and provides a solid starting point for Extended Events development. This should be viewed as a collection of puzzle pieces; individual pieces can be adjusted as needed to produce a monitoring solution that fits the needs of a given situation, even if it is vastly different from what is demonstrated here.

Recap

The previous article walked through the creation, configuration, and use of Extended Events with code and customization provided for the reader. The next steps are to turn a temporary process into a permanent one.

Creating the process requires permanent database objects to manage XML indexing and store event XML and the resulting event data. For data to be useful for monitoring, alerting, and analytics, it needs a permanent place to reside. How this data is managed after collection is up to the user. It can be retained forever, for a shorter time frame (if forever is too long), and/or centralized to a location where event data from many servers is aggregated. Centralization may be beneficial regardless of the source details if the central location is a server built for analytics.

Often in projects like this, alerting and monitoring occur close to the source data. In contrast, analytics occur elsewhere to ensure a separation between these processes, their cost in computing resources, and their function.

Note that the stored procedure created in this article uses xp_cmdshell. PowerShell may be easily substituted for this system stored procedure if needed. For the purposes of demonstration, though, having all code in a single script made these concepts easier to understand than by combining the script with PowerShell.

Reading Event Data into permanent tables

Having a permanent target table to store the XML and the results of the XML parsing process is an important step. Some organizations may choose to move the files to another server for processing or store data in another system. For the demonstration, tables will be created in SQL Server to store both the XML and the values extracted from it. Depending on its size, a columnstore index may be beneficial for the table containing the resulting data. If tens of millions of rows are to be stored, use a columnstore index; otherwise, page compression should suffice.

The following code creates two tables: One for the XML and one for the metrics that result from its shredding. It also creates an XML collection as a prerequisite that will allow for indexing the XML column:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 61 62 63 64 65 |

CREATE XML SCHEMA COLLECTION dbo.extended_events_xml_schema_collection AS N'<xs:schema attributeFormDefault="unqualified" elementFormDefault="qualified" xmlns:xs="http://www.w3.org/2001/XMLSchema"> <xs:element name="event"> <xs:complexType> <xs:sequence> <xs:element name="data" maxOccurs="unbounded" minOccurs="0"> <xs:complexType> <xs:sequence> <xs:element type="xs:string" name="value"/> <xs:element type="xs:string" name="text" minOccurs="0"/> </xs:sequence> <xs:attribute type="xs:string" name="name" use="optional"/> </xs:complexType> </xs:element> <xs:element name="action" maxOccurs="unbounded" minOccurs="0"> <xs:complexType> <xs:sequence> <xs:element type="xs:string" name="value"/> </xs:sequence> <xs:attribute type="xs:string" name="name" use="optional"/> <xs:attribute type="xs:string" name="package" use="optional"/> </xs:complexType> </xs:element> </xs:sequence> <xs:attribute type="xs:string" name="name"/> <xs:attribute type="xs:string" name="package"/> <xs:attribute type="xs:dateTime" name="timestamp"/> </xs:complexType> </xs:element> </xs:schema>'; GO CREATE TABLE dbo.extended_events_xml ( extended_events_xml_id INT NOT NULL IDENTITY(1,1) CONSTRAINT PK_extended_events_xml PRIMARY KEY CLUSTERED, sample_time_utc DATETIME2(3) NOT NULL, event_data_xml XML(dbo.extended_events_xml_schema_collection) NOT NULL) WITH (DATA_COMPRESSION = PAGE); CREATE NONCLUSTERED INDEX NCI_extended_events_xml_sample_time_utc ON dbo.extended_events_xml (sample_time_utc); CREATE PRIMARY XML INDEX PXMLI_extended_events_xml_event_data_xml ON dbo.extended_events_xml (event_data_xml); CREATE TABLE dbo.extended_events_data (extended_events_data_id INT NOT NULL IDENTITY(1,1) CONSTRAINT PK_extended_events_data PRIMARY KEY CLUSTERED, sample_time_utc DATETIME2(3) NOT NULL, database_name VARCHAR(128) NOT NULL, event_name VARCHAR(50) NOT NULL, session_id SMALLINT NOT NULL, cpu_time BIGINT NOT NULL, duration BIGINT NOT NULL, physical_reads BIGINT NOT NULL, logical_reads BIGINT NOT NULL, writes BIGINT NOT NULL, row_count BIGINT NOT NULL, client_app_name VARCHAR(128) NOT NULL, client_host_name VARCHAR(128) NOT NULL, username VARCHAR(128) NOT NULL); CREATE NONCLUSTERED INDEX NCI_extended_events_data_sample_time_utc ON dbo.extended_events_data (sample_time_utc) WITH (DATA_COMPRESSION = PAGE); |

The tables are structured to allow the data to be organized chronologically. dbo.extended_events_xml is a temporary repository for XML retrieved from Extended Events files and will not retain data longer than is needed to process it. dbo.extended_events_data will contain the fields shredded from the XML and is intended to be a more permanent home for Extended Events data.

What remains are two steps to manage event data:

- Read XML data from the Extended Events file and write it to dbo.extended_events_xml.

- Shred that XML and insert the results into dbo.extended_events_data.

This example reads the file created in the first article. Reading the XML takes the function sys.fn_xe_file_target_read_file and inserts the results into dbo.extended_events_xml:

|

1 2 3 4 5 6 7 |

INSERT INTO dbo.extended_events_xml (sample_time_utc, event_data_xml) SELECT timestamp_utc AS sample_time_utc, CAST(event_data AS VARCHAR(MAX)) FROM sys.fn_xe_file_target_read_file( 'C:\SQLBackup\Sql_Server_Query_Metrics*.xel', NULL, NULL, NULL); |

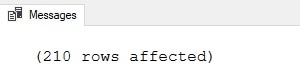

Note that the three NULLs in the function can be replaced with parameters that adjust how to read the file. For our work, the entire file will be read from start to finish. Therefore, the additional parameters may be left NULL. The following confirms that work was done:

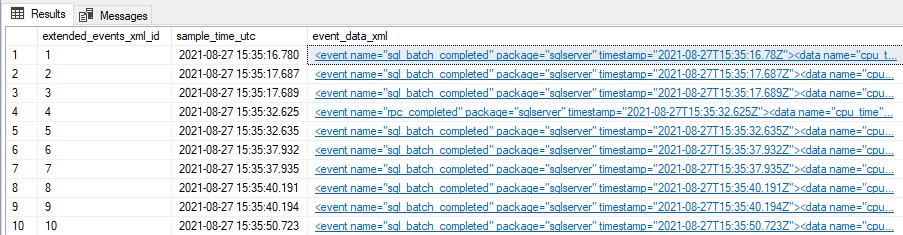

The contents of the table can be confirmed by selecting from the XML table:

|

1 2 3 4 |

SELECT * FROM dbo.extended_events_xml; |

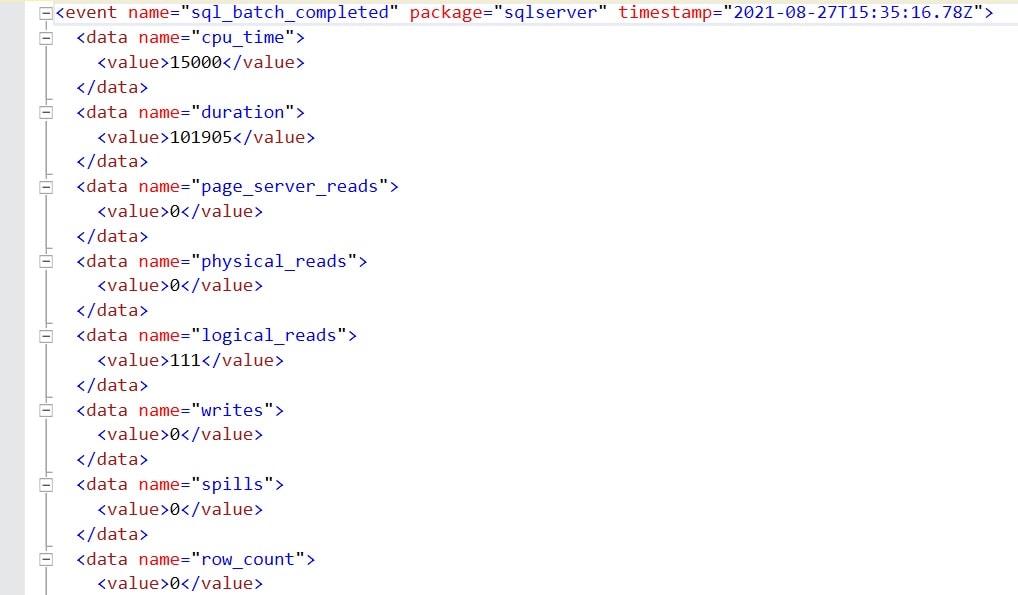

Clicking on any of the XML results displays the raw XML:

The results show some of the fields available in the XML, such as query duration, logical reads, and CPU time. The next step is to parse that XML into those data elements and insert them into dbo.extended_events_data, where they can then be used for analysis:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 |

INSERT INTO dbo.extended_events_data (sample_time_utc, database_name, event_name, session_id, cpu_time, duration, physical_reads, logical_reads, writes, row_count, client_app_name, client_host_name, username) SELECT sample_time_utc, event_data_xml.value('(event/action[@name="database_name"]/value)[1]', 'SYSNAME') AS database_name, event_data_xml.value('(event/@name)[1]', 'VARCHAR(50)') As event_name, event_data_xml.value('(event/action[@name="session_id"]/value)[1]', 'SMALLINT') AS session_id, event_data_xml.value('(event/data[@name="cpu_time"]/value)[1]', 'BIGINT') AS cpu_time, event_data_xml.value('(event/data[@name="duration"]/value)[1]', 'BIGINT') AS duration, event_data_xml.value('(event/data[@name="physical_reads"]/value)[1]', 'BIGINT') AS physical_reads, event_data_xml.value('(event/data[@name="logical_reads"]/value)[1]', 'BIGINT') AS logical_reads, event_data_xml.value('(event/data[@name="writes"]/value)[1]', 'BIGINT') AS writes, event_data_xml.value('(event/data[@name="row_count"]/value)[1]', 'BIGINT') AS row_count, event_data_xml.value( '(event/action[@name="client_app_name"]/value)[1]', 'VARCHAR(128)') AS client_app_name, event_data_xml.value( '(event/action[@name="client_hostname"]/value)[1]', 'VARCHAR(128)') AS client_host_name, event_data_xml.value('(event/action[@name="username"]/value)[1]', 'SYSNAME') AS username FROM dbo.extended_events_xml; |

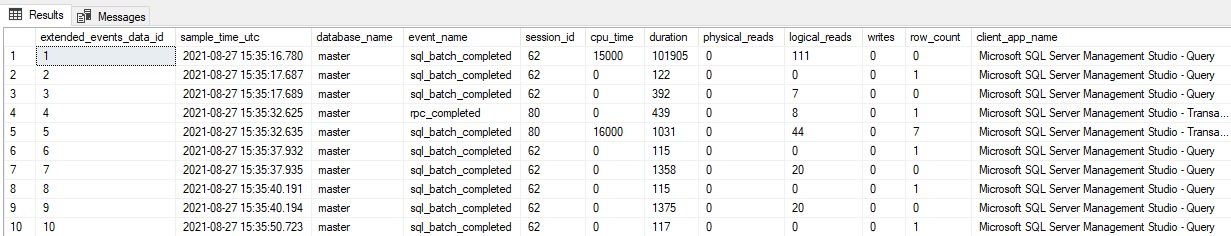

This parses the XML column and pulls out each component of interest to this demo. Additional data elements available in the XML are not used here (result, spills, statement, etc.) but could be if needed. Note that not every XML field is populated in every event, therefore NULL (or defaults) may be needed for some columns in the output table. The results can be confirmed by selecting from dbo.extended_events_data:

|

1 2 3 |

SELECT * FROM dbo.extended_events_data; |

The results show an easy-to-use dataset that has been derived from the event XML. Data will likely be found that may not be useful for a given application, such as:

- Events from system databases

- Events with no reads/writes/CPU/rows returned

- Events from specific logins/hosts

- Events with zero duration

Feel free to add filters to the Extended Events session to remove any scenarios that are not useful for a given application. Unneeded event data will consume extra computing resources throughout this process, and therefore it is beneficial to trim the data set to only what is needed and nothing more.

Automating the data dollection

With each of the building blocks defined, the fun part begins! A process can be built to automate everything introduced thus far, reducing the need for manual intervention, cleanup, or maintenance of Extended Events or the resulting data. The prerequisites for this process are the creation of the following objects, and all introduced previously in this article:

- The XML schema collection, called dbo.extended_events_xml_schema_collection

- The XML table created above, called dbo.extended_events_xml

- The event data table created above, called dbo.extended_events_data

There are many ways to approach this challenge, and no one way is the “right” way. It is up to the consumer of this code to decide how to implement it and adjust it to best fit their database environment.

The simplest way to do this is to encapsulate each of the following T-SQL code blocks into their own chunks of dynamic SQL:

- Create Extended Events session

- Start Extended Events session

- Stop Extended Events session

- Drop Extended Events session

- Read Extended Events log files

- Delete old Extended Events log files

This process allows for code reuse, improved maintainability, and the ability to modify code more easily. The guts of this process will be simplified into a short sequence of dynamic SQL or stored procedure calls, allowing that logic to be adjusted quickly and with minimal effort and, more importantly, minimal risk. (The complete stored procedure can be downloaded here.)

A stored procedure will be created that accepts three parameters:

|

1 2 3 4 |

CREATE PROCEDURE dbo.process_extended_events @extended_events_session_name VARCHAR(MAX), @extended_events_file_path VARCHAR(MAX), @stop_and_drop_all_extended_events_sessions_and_files BIT |

The first two parameters specify a generic Extended Events session name and a place to store event data. This will be modified later on with a suffix to ensure that a new session can quickly be created while events from the old session are read.

The last parameter is used to clean up all Extended Events sessions and data for the session specified in the other parameters. When set to 1, it:

- Stops the Extended Events session, if started.

- Drops the Extended Events session, if it exists.

- Deletes event log files for Extended Events session matching the prefix of the session name provided in @extended_events_session_name

This parameter is useful for testing, cleanup, or when the use of this process is complete.

To streamline this code, as many steps as possible are encapsulated into variables or blocks of T-SQL. The first of these steps retrieves details about the current Extended Events session, if one exists:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 |

DECLARE @current_extended_events_session_name VARCHAR(MAX); DECLARE @does_event_session_exist BIT = 0; DECLARE @is_event_session_started BIT = 0; IF EXISTS (SELECT * FROM sys.dm_xe_sessions WHERE dm_xe_sessions.name LIKE @extended_events_session_name + '%') BEGIN SELECT @does_event_session_exist = 1; SELECT @is_event_session_started = 1; SELECT @current_extended_events_session_name = dm_xe_sessions.name FROM sys.dm_xe_sessions WHERE dm_xe_sessions.name LIKE @extended_events_session_name + '%'; END ELSE IF EXISTS (SELECT * FROM sys.server_event_sessions WHERE server_event_sessions.name LIKE @extended_events_session_name + '%') BEGIN SELECT @does_event_session_exist = 1; SELECT @current_extended_events_session_name = server_event_sessions.name FROM sys.server_event_sessions WHERE server_event_sessions.name LIKE @extended_events_session_name + '%'; END |

This code retrieves 3 details about Extended Events:

- Does an Extended Events session with the specified name exist?

- Is the Extended Events session started?

- What is the currently running Extended Events session name, if one exists?

This information simplifies code later on as it allows a variable to be checked, rather than system views. It also allows for reuse of this info whenever needed.

Extended Events created by this process will automatically append a sequential suffix to the Extended Events session name that is passed into the stored procedure. This ensures uniqueness and the ability to read data from old sessions after the new session has started. Code is needed to try and collect the suffix and will do so like this:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 |

DECLARE @session_number_previous INT; DECLARE @session_number_next INT; IF @does_event_session_exist = 1 BEGIN SELECT @session_number_previous = CASE WHEN ISNUMERIC(RIGHT(@current_extended_events_session_name, 1)) = 1 AND LEN(@current_extended_events_session_name) <> LEN(@extended_events_session_name) THEN SUBSTRING(@current_extended_events_session_name, LEN(@extended_events_session_name) + 1, LEN(@current_extended_events_session_name) - LEN('query_metrics')) ELSE 0 END END SELECT @session_number_next = @session_number_previous + 1; |

This populates two variables that will contain the previous session suffix, as well as the next session suffix. If no session exists, then the new session will be suffixed with “1”.

The next step reuses code from earlier in this article. The only difference is that variables such as file name and session name are spliced into dynamic SQL based on the parameters supplied to the stored procedure. For example, the following command will create the Extended Events session:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 |

DECLARE @next_extended_events_session_name VARCHAR(MAX) = @extended_events_session_name + CAST(@session_number_next AS VARCHAR(MAX)); DECLARE @extended_events_session_create_command NVARCHAR(MAX); SELECT @extended_events_session_create_command = ' CREATE EVENT SESSION ' + @next_extended_events_session_name + ' ON SERVER ADD EVENT sqlserver.rpc_completed ( ACTION ( sqlserver.client_app_name, sqlserver.client_hostname, sqlserver.database_name, sqlserver.session_id, sqlserver.username) WHERE (sqlserver.client_app_name NOT LIKE ''SQLAgent%'')), ADD EVENT sqlserver.sql_batch_completed ( ACTION ( sqlserver.client_app_name, sqlserver.client_hostname, sqlserver.database_name, sqlserver.session_id, sqlserver.username) WHERE (sqlserver.client_app_name NOT LIKE ''SQLAgent%'')) ADD TARGET package0.event_file (SET FILENAME = ''' + @extended_events_file_path + @next_extended_events_session_name + '.xel'', MAX_FILE_SIZE = 1000, -- 1000MB MAX_ROLLOVER_FILES = 3) WITH ( EVENT_RETENTION_MODE = ALLOW_SINGLE_EVENT_LOSS, MAX_DISPATCH_LATENCY = 15 SECONDS, MAX_MEMORY = 1024MB, STARTUP_STATE = OFF);'; |

Now that this code is stored in @extended_events_session_create_command, it can be executed using sp_executesql any time. This process will be followed for all subsequent processes, and the code will not be repeated here as it has already been introduced. The entirety of this stored procedure is attached to this article for testing, customization, and use.

After this work is complete, the following commands will exist:

- @extended_events_session_create_command

- @extended_events_session_start_command

- @extended_events_session_stop_command

- @extended_events_session_file_delete_command

- @extended_events_session_file_delete_all_command***

- @extended_events_session_drop_command

- @extended_events_read_data_command

Note that @extended_events_session_file_delete_all_command is used when no previous sessions exist and removes any files with the Extended Events session name provided by @extended_events_session_name.

The last component of this process consists of the following logic:

- If

@stop_and_drop_all_extended_events_sessions_and_files= 1- Does

@is_event_session_started= 1?- If yes, then stop the current session.

- If

@does_event_session_exist= 1- Then drop the current session.

- Delete all log files for the session name provided.

- Does

- If

@stop_and_drop_all_extended_events_sessions_and_files= 0- If

@does_event_session_exist= 0- Then Delete all log files for the session name provided.

- Create the new session.

- Start the new session.

- If

@does_event_session_exist= 1- If

@is_event_session_started= 1- Stop the existing session.

- Create the new session.

- Start the new session.

- Read data from the previous session.

- Drop the previous session.

- Delete log files from the previous session.

- If

- If

If the parameter is passed in to stop and drop all sessions, then the logic above checks for the state of the previous session before stopping it, dropping it, and deleting files.

Otherwise, the process checks to see if a session already exists and if it is started, and then walks through the steps needed to stop it, create a new session, and read data from the previous session.

Why is this logic so involved? The goal is to minimize lost events. If the old session were read before the new session was started, then any events that occur in between would never be captured by Extended Events and would be lost to us.

The table dbo.extended_events_xml is truncated after each use. Once events are read, there is no need to retain the raw XML anymore.

Customize! Customize! Customize!

This process is expected to be modified to meet the needs of a given organization. With near certainty, the collected events will be adjusted to capture different events, actions, and possibly other bits of metadata collected along the way (such as the database server name).

The XML table can be reused as-is without any modifications.

The data table will need to be adjusted to account for different data elements being parsed from the XML. This table does not need to include columns for XML elements that will be ignored but should have columns for any elements that are to be retained.

Deadlock example

If there was a need to automate event collection for deadlocks, the following could be used as the Extended Events session create command:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 |

DECLARE @extended_events_session_create_command NVARCHAR(MAX); SELECT @extended_events_session_create_command = ' CREATE EVENT SESSION ' + @next_extended_events_session_name + ' ON SERVER ADD EVENT sqlserver.xml_deadlock_report ( ACTION(sqlserver.client_app_name, sqlserver.client_hostname, sqlserver.database_name)) ADD TARGET package0.asynchronous_file_target (SET FILENAME = ''' + @extended_events_file_path + @next_extended_events_session_name + '.xel'', MAX_FILE_SIZE = 1000, -- 1000MB MAX_ROLLOVER_FILES = 3) WITH ( EVENT_RETENTION_MODE = ALLOW_SINGLE_EVENT_LOSS, MAX_DISPATCH_LATENCY = 15 SECONDS, MAX_MEMORY = 1024MB, STARTUP_STATE = OFF);'; |

To start, there would be value in dumping the Extended Events data into the XML table and examining it to determine which columns should be maintained and which may not be needed. There are likely some columns that are not needed and many that will be the same as those used thus far in this article. Others, such as the deadlock graph, would be essential.

Note that @next_extended_events_session_name was previously calculated above. The code used to populate this (and other dependant variables) can be reused.

Automating Extended Events data collection

The stored procedure presented (and attached) in this article represents a process that can be executed regularly and will automatically manage an Extended Events session.

By making each step of the process a modular component, the ability to test, customize, and maintain this code is far simpler than it would be otherwise. With this structure, it is possible to completely change how an Extended Events session is read without affecting the other building blocks of the process.

The learning curve to using Extended Events via T-SQL is not trivial, but focusing on macro commands rather than individual queries helps make it easier to dive into and become familiar with the process.

As with any homegrown process, customization is essential to getting the most out of it. Microsoft has documented Extended Events quite well:

Quickstart: Extended events in SQL Server – SQL Server | Microsoft Docs

Many writers have shared their tips and tricks for how to capture various events and view the resulting event data. Combining that knowledge with automation makes this into a process that is easy to deploy, manage, and scale, regardless of whether it is needed on one server or one hundred.

FAQs: Automating Extended Events data collection

1. How do I store SQL Server Extended Events data permanently?

Create a table to hold the raw event XML: CREATE TABLE dbo.ExtendedEventData (EventXML XML, CollectedAt DATETIME DEFAULT GETDATE()). Use INSERT INTO dbo.ExtendedEventData SELECT target_data FROM sys.dm_xe_session_targets WHERE target_name = ‘ring_buffer’ AND event_session_address = (SELECT address FROM sys.dm_xe_sessions WHERE name = ‘YourSessionName’). Parse the XML into structured columns with a second table and XQuery extraction. Schedule this collection via a SQL Agent job on a regular interval to build a historical record.

2. How do I automate Extended Events session management with a stored procedure?

Build a stored procedure that: (1) Checks if the Extended Events session exists (SELECT FROM sys.server_event_sessions) and creates it if not; (2) Starts the session if not running (ALTER EVENT SESSION…ON SERVER STATE = START); (3) Reads event data from the ring buffer target using sys.dm_xe_session_targets; (4) Inserts new events into the permanent table; (5) Optionally truncates the ring buffer after collection using ALTER EVENT SESSION…DROP TARGET then re-adds it. Execute this stored procedure on a schedule via SQL Agent for hands-off event collection.

3. How do I capture SQL Server deadlocks with Extended Events?

Create an Extended Events session that captures the system_health.xml_deadlock_report event or create a custom session: CREATE EVENT SESSION [DeadlockCapture] ON SERVER ADD EVENT sqlserver.xml_deadlock_report WITH (MAX_DISPATCH_LATENCY = 5 SECONDS) ADD TARGET package0.ring_buffer; START the session and let it run. When a deadlock occurs, the ring_buffer stores the XML deadlock graph. Query and parse the XML to extract victim, process, and resource information. The automation pattern from this article can schedule periodic extraction to a permanent table for historical deadlock analysis.

4. What is the difference between Extended Events and SQL Server Profiler?

SQL Server Profiler (and SQL Trace) is the legacy SQL Server event monitoring tool. It is deprecated and should not be used in new implementations. Extended Events is the replacement: lower overhead (no separate monitoring process), more flexible event filtering and action definition, ring buffer and file targets that don’t require an active monitoring session, and integration with SQL Server Management Studio for session creation and analysis. Extended Events supports thousands of events vs Profiler’s hundreds, with better performance impact.

Load comments