- In-Memory OLTP – Part 1: Monitoring

- In-Memory OLTP – Part 2: Memory-Optimized Tables

- In-Memory OLTP – Part 3: Row Structure and Indexes

In-Memory OLTP (IMOLTP) introduced to SQL Server not only a new engine, but also some new concepts. A database that is truly working with IMOLTP contains several Memory-Optimized Objects including, in SQL Server 2016, memory-optimized tables, memory-optimized table variables, natively-compiled Stored Procedures, Indexes and scalar user-defined functions.

Thanks to the Interop component that we can have, memory-optimized objects and traditional objects can work together. However, in order to achieve the best performance, it is best to create as many memory-optimized objects as possible to work together within IMOLTP.

What are the prerequisites to start using memory-optimized tables?

In order to support Memory-Optimized Tables (MOT), there are some requirements and steps to follow.

Starting with the basics, you’ll need a 64-bit Developer or Enterprise version of SQL Server. Don’t forget that the In-Memory OLTP feature was introduced on SQL Server 2014, so you must install SQL 2014 or later. If you are planning to use IMOLTP on Azure SQL Database, make sure that you’re running at least V12.

The IMOLTP feature is not a separate component. As soon as you have the SQL Server Engine installed, you can start using the feature.

SQL Server Management Studio is now a separate, and free, product. It is a good idea to download the latest version of this tool in order to take advantage of the continuous compatibility improvements with IMOLTP.

It is important to have enough available memory as well. But what do I mean by “enough”? In order to provide the right amount of memory, we need to estimate the size of the data that will be stored in-memory. Unfortunately, this is not the only factor to take into account when sizing the server memory. Because of the memory-needs of the row-versioning strategy used for Memory-Optimized Tables, and in order to support table changes, Microsoft recommends that at least double the estimated table size is available.

The memory provisioning is not that straightforward: remember that if you have other databases or tables in the same server, whether or not they are using the In-memory OLTP feature, you will need to provide memory for those components as well. If the system is sharing its resources, you will need to take that into account.

Recommended Memory = ( Estimated MOT Data Size x 2 ) + Estima ted Memory f or other components

In case you already have MOTs in your database, you can get more information about the table size by using the sys.dm_db_xtp_table_memory_stats DMV.

In order to find more details about how to calculate the size of each MOT row, Microsoft published this good resource with practical examples.

It is a very good idea to enable the Resource Governor in order to control the memory consumption of the MOTs. This is not mandatory, but can help avoiding server-wide “low memory” problems. I’ll go into the details of this in a later article.

Another requirement is related to disk space. If you are new to the In-Memory technology, this may look weird, but in fact the disk is still being used (the purpose of the disk will be explained later on in this article). The recommendation is to have, at least double the estimated size for MOT available as free disk space.

Still on the subject of the disks, the old and well-tried recommendations are still valid: it is best to have a dedicated disk for this purpose, enable Instant File Initialization (IFI), and don’t forget ‘The faster is the disk, the better will be the performance’.

Memory-optimized Table – two different approaches

Let’s now finally start talking about the MOTs. There are two different types, with different requirements and characteristics: Durable and Non-Durable tables.

Both Durable and Non-Durable MOTs are fully operating in memory. This means that all the table rows, whether active or not, will be in-memory all the time once the SQL Server Engine process is running. All the read and write operations are being done directly in the server’s memory.

The main difference between them is related to the data lifecycle, and the way that the rows are managed. This will result in different performance results, but there are always some compensations.

The RAM memory is a very efficient way to store data, but it is volatile. This means that if we lose power and the server shuts down, all the data stored in memory will be lost. That is why we have two different types of MOT.

By default, if a MOT is created without an explicit reference of its kind, a Durable MOT will be created. This kind of table has a similar behaviour to the traditional disk-based tables, keeping the data even if the server, or the instance, is restarted. But how does it work?

In order to ensure that the data stored in the memory will be available in case of a SQL Server instance restart, or if an unfortunate crash happens, all the operations targeting the Durable MOTs are logged and stored in the disk. As you can imagine, both the logging and saving the data to disk are expensive operations, but in order to minimize the performance impact, a delayed durability strategy can be used: the user executes the command and will receive a “success” message, however, the data will be saved to disk afterwards. This strategy has a performance benefit, but won’t assure that your data will be safe if your server crashes during this process. In summary, the default MOT type is Durable, which keeps the data even if the server is restarted, but there are some disadvantages related to performance.

On SQL Server 2014, there’s a “soft” limitation of 256 GB per database for Durable MOTs. This means that the sum of the data contained on all the Durable MOTs cannot exceed this amount. From SQL Server 2016, this recommendation was increased to 2 TB per database. This is not a hard limit, just a recommendation given by Microsoft, based on their tests.

The other type of Memory-Optimized Table is “Non-Durable”. As its name says, this is a volatile table. Because of this characteristic, there’s no need to either have operations logged or to save the data to the disk, which will eliminate the need for and disk access. This kind of table offers the best performance benefit, with the penalty of data loss if the server crashes or if the instance/server is restarted.

Both Durable and Non-Durable MOTs are supported on databases with traditional disk-based tables. It is possible to create both Durable and Non-Durable MOTs in the same database.

It is important to mention that, on the first version of In-Memory OLTP in SQL Server 2014, a MOT table could not be changed after its creation, meaning that simple actions such as creating new columns, changing data types or tuning indexes are not possible in that version. From SQL Server 2016 the ALTER TABLE statement is supported, bringing much more flexibility to databases with MOTs. Be aware that while the change is being processed, SQL Server will require double the amount of memory that is currently being used by the table in order to succeed. SQL Server 2016 also introduced the possibility of use Foreign Keys and Nullable columns.

Memory-optimized Table Variable – What is that?

Taking advantage of Memory-Optimized Table Type (MOT Type), another structure compiled and optimized to work in memory, it is possible to create a Memory-Optimized Table Variable (MOT Variable).

A MOT Variable is similar to a traditional Table Variable, the main difference is that on this kind of object the disk is not used, just the memory. This way, there’s no TempDB access or any sort of disk I/O.

Another advantage is that MOT Variables are using the same structure of MOTs, which are highly optimized to work in-memory. The memory allocated to MOT Variables is freed up as soon as the variable is out of scope, even the invalid rows.

Native Compilation

On In-Memory OLTP, the “Native Compilation” is the process of converting the T-SQL code into native code, which brings the benefit of a more efficient data access and query execution, since – unlike traditional SQL Server objects – the code is no longer interpreted during the execution phase.

Both Memory-Optimized Tables and Memory-Optimized Table Types are compiled, resulting in a DLL file. Those DLLs are recompiled on every database or instance restart, based on the metadata stored in the database.

The following query will help us to identify where the compiled DLLs are physically stored in the server:

|

1 |

SELECT name, description FROM sys.dm_os_loaded_modules WHERE description = 'XTP Native DLL' |

In a nutshell, the DLLs will be stored in a folder called “xtp” that will be created the instance’s default datafiles location. If you want to relocate those files, the only way is to change the default datafile location in the instance’s properties (the server should be restarted).

Row structure

Pages and Extents are part of the structure of traditional disk based tables. The rows for this kind of table are identified by page number and offset. This is not true of Memory-Optimized Tables.

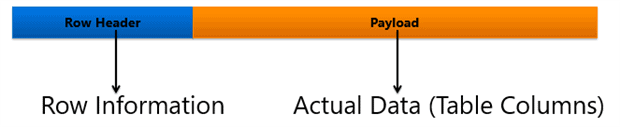

In order to provide a better efficiency, the rows for MOTs are organized differently, as below:

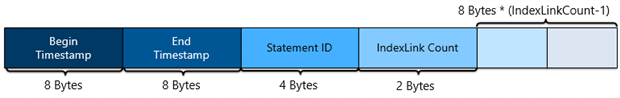

In order to allow concurrent reads and writes, rows from MOTs are versioned, resulting in the following structure for the Row Header:

The Begin and End Timestamp is used to control the “Row Visibility”. The Begin Timestamp contains the time of the creation of the row. Once the End timestamp is set, the row is interpreted as “removed”. It is important to understand that a removed row will still be stored in memory for a while. Once the Garbage Collector runs and there are no more active transactions with a time prior to the one set as End Timestamp, the row will be cleared from memory. Notice that a removed row can be generated not only from DELETE statements, but also from UPDATE statements. Under the hood, an UPDATE is doing an INSERT and a DELETE, because it is more efficient to create a new row with the modified data than to update the current one. Aborted INSERT transactions are also a possible cause for deleted rows.

The Statement ID is the unique ID value for the statement that created the row. The “IndexLink Count” is a number indicating how many indexes are referencing the row.

The remaining slots are used to store index pointers, which are linking the rows of a particular table. That is the reason why every memory-optimized table must have at least one index.

Memory-Optimized File Group

In order to create Memory-Optimized Objects, it is a requirement that a Memory-Optimized Filegroup (MOFG) has already been created in the instance.

A MOFG is a kind of Filegroup based on FILESTREAM, and its role is to hold containers in which the durable Memory-Optimized Tables will store their data. Even if you are planning to just use Non-Durable MOTs, the existence of a MOFG is still required.

As I’ve mentioned, a MOFG can have several containers where Checkpoint Files will be stored. Those kind of files are used to keep track of the data for all durable tables. We will dive into this topic in another article.

Some facts about MOFG:

- Even though they are based on FILESTREAM, there’s no need to enable this in order to create a MOFG.

- A database can have only one MOFG.

- A MOFG may hold multiple containers, which is a pointer to an OS folder.

- From SQL Server 2016, each container have its own Offline Ceckpoint Thread, allowing a better scalability when persisting the data.

- It is a good practice, in terms of I/O, to have the containers created in different disks.

Code time! How do you create a Memory-Optimized Table?

Now that we introduced the basics about the In-Memory OLTP structure, its dependencies and requirements, it is time to see how it works. Notice that every Memory-Optimized Table should have at least one index, as mentioned earlier in this article. The code to create the index will be included in the example, but indexes will be the subject of my next article in this series. The code is fully compatible with SQL Server 2016.

First of all, let’s create a database containing a Memory-Optimized Filegroup. This Filegroup will have two containers:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 |

CREATE DATABASE [FootballStatsIMOLTP] ON PRIMARY ( NAME = N'FootballStatsIMOLTP', FILENAME = N'F:\SQL_DATA\FootballStatsIMOLTP.mdf' ) LOG ON ( NAME = N'FootballStatsIMOLTP_log', FILENAME = N'G:\SQL_LOG\FootballStatsIMOLTP_log.ldf') GO -- Memory Optimized FG ALTER DATABASE [FootballStatsIMOLTP] ADD FILEGROUP [MemoryOptimizedFG] CONTAINS MEMORY_OPTIMIZED_DATA GO -- Adding the containers ALTER DATABASE [FootballStatsIMOLTP] ADD FILE (name='FootStatsContainer01', filename='F:\InMemoryContainer\FootStatsContainer01') TO FILEGROUP [MemoryOptimizedFG] GO ALTER DATABASE [FootballStatsIMOLTP] ADD FILE (name='FootStatsContainer02', filename='E:\InMemoryContainer\FootStatsContainer02') TO FILEGROUP [MemoryOptimizedFG] GO |

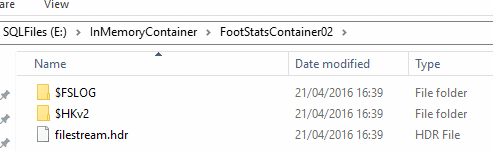

The database called ‘ FootballStatsIMOLTP ‘, and the MOFG with two containers are now created. If you open the Windows File Explorer on one of the container’s paths, you will already find a folder structure defined, as well as some files.

At this stage, we have all that is needed to start creating Memory-Optimized Objects. So let’s now create a Memory-Optimized Table.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 |

CREATE TABLE [dbo].[Fixtures]( [fixtureID] [int] NOT NULL IDENTITY(1,1) PRIMARY KEY NONCLUSTERED, [seasonID] [int] NULL, [date] [datetime] NULL, [status] [nvarchar](50) NULL, [matchday] [int] NULL, [homeTeamName] [nvarchar](5) NULL, [awayTeamName] [nvarchar](50) NULL, [goalsHome] [int] NULL, [goalsAway] [int] NULL, [homeTeamID] [int] NULL, [awayTeamID] [int] NULL ) WITH (MEMORY_OPTIMIZED=ON) GO |

You can see that the CREATE TABLE command is almost the same. The key here is the MEMORY_OPTIMIZED=ON clause. Notice that we are not explicitly stating whether the table is durable or non-durable. This means that the default will be used, so the table is a Durable MOT. In order to explicitly define the table’s durability, we need to add ” DURABILITY= SCHEMA_ONLY | SCHEMA_AND_DATA” to the command, where SCHEMA_ONLY defines Non-Durable MOTs and SCHEMA_AND_DATA to Durable MOTs.

The Durable table is created, but now I need to create a Non-Durable table. I can do this executing the following:

|

1 2 3 4 5 6 7 8 9 |

CREATE TABLE dbo.[GameFacts] ( ID int NOT NULL IDENTITY (1, 1) PRIMARY KEY NONCLUSTERED, fixtureID int NOT NULL, comment varchar(100) NOT NULL, dateAndTime datetime NOT NULL, CONSTRAINT FK_Fixture FOREIGN KEY (fixtureID) REFERENCES dbo.Fixtures (fixtureID) ) WITH (MEMORY_OPTIMIZED=ON, DURABILITY=SCHEMA_ONLY) GO |

In order to create a Non-Durable table, the DURABILITY parameter need to be set, as this is not the default value for MOTs. You might have also noticed that a Foreign Key is being created. This wasn’t supported on SQL Server 2014 for MOTs and is one of the improvements on SQL Server 2016.

Load comments