Database Maintenance Tips and Tricks: Part 1.

I do not know many people who actually enjoy routine database maintenance tasks. They can be quite monotonous and require more in the way of pedantry than creativity from the DBA. However, there can be no doubt that database maintenance is important. No matter how brilliant the application is, or how perfectly-designed the database, the system will very soon cease to function correctly without proper maintenance

While database maintenance may never be the most motivating activity that a DBA can undertake, I’ve found that, if you are prepared to make a real effort to learn SQL Server internals and to understand what is going behind the scenes, the task quickly becomes much more interesting, even intriguing.

In this series of the articles, I would like to discuss some SQL Server maintenance internals and, along the way, present a few tips, tricks and traps that DBAs should be aware of when performing database maintenance. In this particular instalment, I will cover:

- Some general issues surrounding disk space requirements

- Disk space and the DBCC CHECKDB consistency check

- Snapshot folder space and transactional replication

How much disk space do I need?

This question, or its close relation, “How fast will the database grow?” is one that customers ask very often. At first glance, it seems to be a quite straightforward, innocuous-looking question, but in reality one must consider many different factors in order to be able to answer it with any degree of accuracy.

Disk-space planning is just one element of the broader discipline of sizing and capacity planning. Sizing (also called pre-configuration capacity planning) takes place before you design the system and attempts to estimate the resources the system will need, over a period of time, in order to meet the conditions of the Service Level Agreement (SLA), or similar specification. At this stage, no statistics exist and so you need to create them, by modelling the system that needs to be designed, which can be quite expensive, or else find other ways to estimate the required resources.

Capacity planning deals with working applications. It uses existing statistics regarding resource consumption to predict future requirements, in order that the system will continue to meet the requirements of the SLA.

Note: A full discussion of sizing and capacity planning is beyond the scope of this article. For further information, the Microsoft Press book, “Microsoft SQL Server 2000 Performance Tuning: Technical Reference”, contains several useful chapters on these topics.

When planning disk space requirements, many people start and end with a basic estimation of database size, and its growth over time. However, there are many other factors to consider that will affect your overall disk space requirements. We’ll discuss each in turn in the following sections.

Database Size and Growth

Many people adopt a fairly simplistic approach to database sizing. For example, for a new database application, they may try to estimate the future size of the database based on:

- The row length of each table.

- The number of business transactions that the database will need to store over a given period of time

This approach may work fairly well for databases with relatively small number of the tables. However, the more variable-length columns in the tables, the less precise will be the result of these calculations.

Of course, when estimating the number of business transactions that may need to be stored in a given period, and the disk space required to accommodate this, you need to give due consideration to the basic nature of the business. For example, a sales application that sells grocery products is likely to need more disk space than one that sells furniture, because a single grocery transaction contains more items than a furniture transaction. Furthermore, there may be seasonal trends to consider. For example, when database sizing for a superstore that sells office supplies, you will need to account, in your calculations, for an abnormally high number of transactions at the end of August, just before school year starts.

You will also need to plan for the amount of space required by indexes, as the indexes can occupy the same, or even more, space than data.

Keep in mind that indexes and data do not fully utilize the index (data) pages.

The amount of free space in data pages depends on the number of columns in a table, and the data type of each of these columns.

For the index pages, the amount of free space also depends on the fill factor and pad parameters. Therefore, how full the page is depends on structure of the table and any change in the structure of the table will affect the size of your database, sometimes significantly.

Some modelling tools are available that can facilitate these sizing calculations, based on rows and indexes length. See, for example: The Basics of Sizing a SQL Server Database By Brian Knight, 2008/08/01 .

Disk Speed

While a disk system may be of sufficient size to cope with predicted requirements, it may not be able to handle the anticipated number of I/Os per second, generated by the workload.

In this case, you may need to add some extra disks in order to separate different database files physically, so that each will reside on its own disk or logical drive.

Alternatively, you may want to change the RAID configuration to one that is more suitable for your particular application.

Disk Utilization

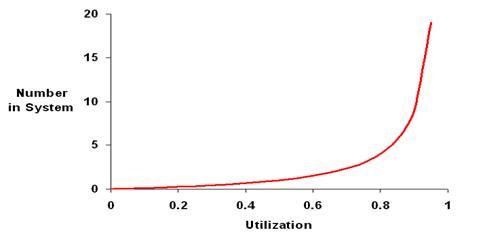

According to the utilization rule of queuing theory, when utilization of resources reaches a certain point (the knee of the curve on the utilization graph), the queue of processes waiting for the resources, and so the response time of the system, starts to grow exponentially.

For disk space, and for the number of I/O operations per second, the knee of the curve on the utilization graph occurs at 80-85% capacity. As such, your database should not occupy more than 85% of total disk space; and the number of I/Os per sec. should not regularly exceed 85% of its maximum. These factors must be considered in your planning calculations.

RAID Configuration

Storage planning also depends on the type of RAID configuration in use. Most RAID configurations require some disk redundancy in order to make the disk system fault-tolerant.

Sizing TempDB and the Transaction Log

You also need to reserve some space for TempDB and the transaction log – and both can vary in size over quite a wide range. Allowing inadequate space for TempDB is a trap into which many unwary DBAs fall, as we will discuss shortly.

Database Maintenance

Finally, one more area that should not be overlooked is database maintenance. Again, as we will see shortly, a number of database maintenance activities themselves require extra disk space.

TempDB and Consistency Checks

When estimating how much disk space you need for the database, it is important to consider the sizing of TempDB, and the space it will need to handle temp tables, dynamic SQL usage, merge joins and so on. It is also important to remember that performing consistency checks, by running the DBCC CHECKDB command, will also require space in TempDB. Failure to plan for this can lead to difficulties.

For example, one day I received a call from a customer who had a problem with a one Terabyte SQL Server database and needed help. The first thing I suggested was that they run a DBCC CHECKDB health check.

The DBCC CHECKDB command had been running for seven hours when it detected a corruption in the database. However, the error message was not about the corruption: the consistency check had failed due to a lack of space for TempDB!

Unfortunately, this situation is quite typical. On the one hand, most DBAs understand the importance of TempDB. They know that the database engine and the programmers use TempDB very intensively and they follow the recommendations of SQL Server Books Online and provide enough room for TempDB growth. In many cases, they also remember to place TempDB on a fast disk drive (RAID), separately from data files.

On the other hand, it is in a human nature to treat “temporary” things as less important, and this is may be why DBAs or operational staff often put applications, backups, temporary files and so on, on the same drive as TempDB. This reduces the space available for TempDB growth and increases the total number of I/O operations on the drive where TempDB resides.

Note: In light of the above, it seems to me that it would be a very good idea to rename “TempDB” to something like “Very Important Short-Term Storage (VIST or VistDB)”

You can estimate the space required in TempDB for performing a database consistency check, by executing DBCC CHECKDB using the WITH ESTIMATEONLY option:

|

1 |

DBCC CHECKDB ('database_name') WITH ESTIMATEONLY |

The result of that estimation, however, can be misleading, as the following example will illustrate. First, create a new database and allocate 50 GB of disk space for that database. Then, run the following statement:

|

1 |

DBCC CHECKDB ('database_name') WITH ESTIMATEONLY |

DBCC CHECKDB incorporates a few integrity checks, among them DBCC CHECKALLOC and DBCC CHECKTABLE, and the above provides an estimate of the space needed for each of these checks:

|

1 2 3 4 5 6 7 |

Estimated TEMPDB space needed for CHECKALLOC (KB) ------------------------------------------------- 760 Estimated TEMPDB space needed for CHECKTABLES (KB) -------------------------------------------------- 94 |

Add one more 50 GB data file to your newly created database, and then re-run the DBCC CHECKDB command:

|

1 |

DBCC CHECKDB ('database_name') WITH ESTIMATEONLY |

The result of your estimation this time will be:

|

1 2 3 4 5 6 7 |

Estimated TEMPDB space needed for CHECKALLOC (KB) ------------------------------------------------- 1518 Estimated TEMPDB space needed for CHECKTABLES (KB) -------------------------------------------------- 94 |

Based on these results, you might assume that the space required in TempDB for the database consistency check is about 0.8 MB for a 50 GB database and 1.6 MB for a 100 GB database, with the majority of space in each case being required to check the consistency of allocation structures, and very little space being required to check the consistency of system tables. However, these figures are misleading.

The problem is that we are working with an empty database. We allocated disk space but we didn’t create and populate any user tables. In this case, while the figures for CHECKALLOC should be roughly accurate, the figures for CHECKTABLES will be massively underestimated.

In reality, for a normal, populated 100 GB database, the amount of TempDB space needed for DBCC CHECKTABLES would be much higher than the space needed for DBCC CHECKALLOC. In fact, the difference in the values can be so big that the space needed for DBCC CHECKALLOC can be omitted.

In short, for databases without data, you cannot use “DBCC CHECKDB…WITH ESTIMATEONLY” to estimate the space required in TempDB in order to run DBCC CHECKDB. From my experience, however, I know that the TempDB space needed for DBCC CHECKDB is equal to about 1.5 – 2 % of the total database size. So, for a 100 GB database, you will need 1.5 – 2 GB for TempDB in order to complete the consistency check. Correspondingly, you will need approximately 15 – 20 GB for TempDB when you run the consistency check for 1 TB database.

Note: The numbers 1.5 – 2 % are correct for SQL Server 2005 only. For SQL Server 2000, these numbers are lower and equal to 1 – 1.5 %. The reason for such a difference is that SQL Server 2005 has more consistency checks, which also are more comprehensive. I have not yet had the opportunity to generate accurate figures for SQL 2008, though I imagine they may well be different again.

The problem is not usually the availability of extra space – after all, if you already have 1 TB for the user database, allocating an extra 15 – 20 GB should not be an issue – but in remembering to allocate this extra space before performing the check.

This issue is compounded by the length of time it takes to perform the check. A full consistency check for a 1 TB database may take 10-15 hours, depending on hardware, network and SAN characteristics. If your production database must be available 24×7, or very close, then you will not be able to run consistency checks very often. You will need to arrange with the customer to have regular maintenance windows to perform them and that time is not easy to get. When you finally get the time to run the consistency check, do you want DBCC CHECKDB to fail after 7-8 hours because of lack of space for TempDB?

Replication Maintenance and the Snapshot Folder

Database maintenance, and disk space planning in particular, can be a many-faceted task, depending on the environment. If, for example, you use replication in your system, then your disk space planning for a given server will need to take into consideration the space required for the subscription database; distribution database, the snapshot folder, additional space for transaction logs on Publisher and Subscriber databases, and so on.

Furthermore, the DBA will be tasked with ensuring that the replication process runs smoothly and to perform maintenance in light of any problems. It is easy to forget, when planning disk space requirements, that replication maintenance itself can require extra disk space.

We’ll consider a transactional replication example to illustrate this point, but first we need to review a few of the key components of transactional replication. It employs several components that keep track of the changes, made in the Publisher database, and help to transport these changes to the Subscriber(s). These components are:

- Transaction log – registers all data changes made in the Publisher database (regardless of recovery model)

- Log Reader Agent – checks transaction log for transactions that are marked “for replication” and transfers them to the Distribution database.

- Distribution Agent – moves transactions from Distribution database to subscription database.

In the context of transactional replication, you can think of the transaction log and distribution database as reservoirs i.e. accumulators of data that needs to be replicated to the subscribers. Using the same analogy, you can treat the Log Reader agent and Distribution agent as the pumps that move accumulated data from reservoir to reservoir and, ultimately, to the final subscriber destination.

Now, let’s assume that you use transactional replication in your production environment. You come into the office on a Monday morning to discover to your horror that the transaction log on the Publisher database has grown to 60 GB (the exact figure is irrelevant – the point is that the transaction log grew abnormally).

After short investigation, you find that neither the Log Reader Agent nor Distribution Agent is running. As the DBA your pressing concern is to get replication working so you need to understand what might have caused the problem in the first place i.e.

- Why did Log Reader and Distribution Agents stop running?

- Why did transaction log grow so large?

Your first port of call might be to check the space allocations for the various databases. If, for example, the subscription database does not have enough space to grow, the Distribution Agent will stop running. With this “pump” out of action, both reservoirs – the transaction log and distribution database – may overflow, meaning they will “eat” free space on disk drive completely. Similar scenarios will accompany the absence of space for the publisher or distribution databases.

If space allocation is not the problem, your next port of call should be SQL Server Agent. Physically, the Log Reader agent and Distribution agent are executables that, by default, run as the jobs under the supervision of SQL Server Agent. If SQL Server Agent fails then both the pumps will stop. Changes made in the Publisher database will still be registered in transaction log, but won’t be delivered further into distribution and subscription databases. If the volume of changes in the Publisher database is high, then the transaction log will start to grow enormously.

As it turns out in this example that is exactly what happened. The operational personnel rebooted the server over the weekend and didn’t check to make sure that SQL Server Agent restarted.

Now that you know why Log Reader Agent and Distribution Agent stopped and why transaction log grew, you need to find the way to resume the replication Process. Firstly, you may try to synchronize the Publisher and Subscriber by just starting Log Reader and Distribution agents. If this doesn’t work, the only way to resume the replication is to reinitialize the subscription, or to rebuild the replication completely.

The common way to initialize the subscription is to use a snapshot of the Publisher database, though initialization can also be done using a backup of Publisher database.

The snapshot re-initialization process involves two main steps:

- The Snapshot Agent generates a snapshot of the articles in the Publisher database and transfers it to the snapshot folder.

- The Distribution Agent transfers the snapshot to the Subscriber and applies the changes to the subscription database.

Step 1 produces numerous files, including schema, data, constraints and indexes files, which are all placed in the snapshot folder. That data may require a significant amount of additional disk space, depending on the number and size of the tables that have to be replicated. So, here we get to another example of why an essential maintenance task has space requirements that really need to be considered in disk space planning. Successfully restarting replication relies on there being sufficient disk space to accommodate the snapshot folder.

You can estimate the amount of disk space needed for the snapshot folder as follows. Let’s assume the tables that need to be replicated occupy 75 GB of disk space, with 50 GB allocated for data and 25 GB for the indexes.

Based on this, you can guess that about 50 GB of data will be BCPed out and placed in the Snapshot folder. Therefore, you will need an additional 50-55 GB of disk space in order to apply the initial snapshot. You will need that space only for the duration of the initialization process, when data in the snapshot folder and the fully populated subscription database will exist at the same time. In total, whole process can take a few hours. However, for those few hours you will need an extra disk space for the snapshot folder.

Summary

The best chance of success in resources estimation, and in the analysis of their consumption, can be achieved, when developers, DBAs and system analysts work together. However, even in that case, it is not an easy task and rarely or may be never can be done 100% accurately.

However, I hope that this article has made you aware of just how many factors are involved in performing estimations of disk space requirements, and that you will factor these in the next time you’re required to do this.

Load comments