With the proliferation of cloud computing, it’s now easier than ever to create small, targeted microservice architectures using a variety of services. If you’ve chosen Azure as your cloud provider then there are many services that can help you achieve low-friction, high-throughput and low-cost solutions. This post aims to describe these services along with the corresponding pros and cons.

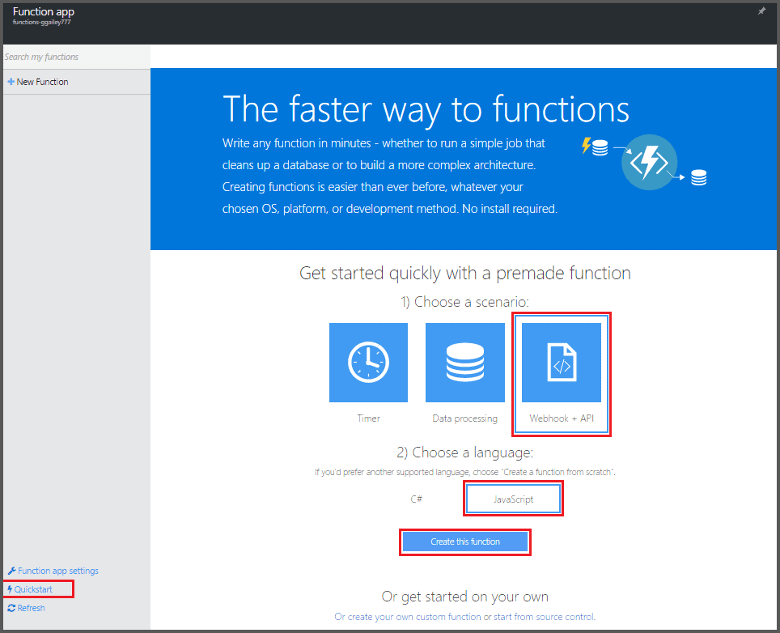

Azure Functions

‘Azure Functions’ is the newest service in the serverless architecture family. It is an event-driven Platform-as-a-Service offering that lets developers write “functions” in a number of languages, each performing a single task. The events can be raised by another service running on Azure (Blob storage, Service Bus), a 3rd party SaaS service (Dropbox, GitHub etc) or by a custom trigger (timer or HTTP trigger). Functions are great because you can write custom code, unlike some of the services we’ll examine below.
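To make the event-driven model concrete, here is a minimal sketch in plain Python. It does not use the Azure Functions SDK: the `on_event` decorator, the `raise_event` helper and the event names are all hypothetical stand-ins for the bindings that the platform wires up for you.

```python
from typing import Callable, Dict, List

# Hypothetical in-memory registry of trigger -> functions.
# In Azure Functions the platform maintains this binding; this is only an illustration.
_handlers: Dict[str, List[Callable[[dict], str]]] = {}


def on_event(event_type: str):
    """Register a function to run whenever an event of this type is raised."""
    def decorator(func: Callable[[dict], str]) -> Callable[[dict], str]:
        _handlers.setdefault(event_type, []).append(func)
        return func
    return decorator


@on_event("blob_created")
def make_thumbnail(event: dict) -> str:
    # Custom code: each function performs one small task.
    return f"thumbnail queued for {event['name']}"


@on_event("timer")
def nightly_cleanup(event: dict) -> str:
    return f"cleanup ran at {event['time']}"


def raise_event(event_type: str, payload: dict) -> List[str]:
    """Invoke every function bound to the event type and collect the results."""
    return [handler(payload) for handler in _handlers.get(event_type, [])]


if __name__ == "__main__":
    print(raise_event("blob_created", {"name": "photo.png"}))
```

The point of the sketch is the shape of the programming model: you only write the body of each function, and something else decides when it runs.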

Advantages:

- Functions support several different languages (C#, Node.js, PowerShell etc)

- There is excellent integration with Azure services

- Functions can be developed and tested locally

- They have great DevOps support from source control to deployment

- They scale out automatically to support complex and heavy workloads

- They come with an excellent web portal

- There are many new features currently under development

Disadvantages:

- The tooling is a bit immature and still in development

- The documentation is still in development

Verdict:

‘Azure Functions’ is an extremely versatile service that allows developers to quickly write and deploy code with minimal setup requirements. You should use this service when you need to write complex logic or provide integration with unsupported services.

LogicApps

LogicApps made their appearance around the same time that ‘Azure Functions’ did, but are a lot more mature in terms of the tooling and the DevOps story. LogicApps are the enterprise equivalent of IFTTT (If This Then That) and allow developers to create complex logic operations that respond to predefined events or triggers. For example, if a file is uploaded to OneDrive for Business, copy that file to a Storage Account and send an email. The difference between ‘Azure Functions’ and LogicApps is that LogicApps offer no way to write custom code. You can create complex workflows using the built-in designer or code editor, but you cannot deviate from the already defined set of actions, triggers and conditions.
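The OneDrive example above can be sketched as a workflow that is pure configuration over a fixed catalogue of actions. This is a plain Python illustration, not the LogicApps workflow definition format: the `ACTIONS` catalogue, the `WORKFLOW` dictionary and the trigger and action names are all assumptions made for the example.

```python
from typing import Callable, Dict, List

# Hypothetical fixed catalogue of predefined actions.
# A workflow may only reference these names; it cannot supply its own code.
ACTIONS: Dict[str, Callable[[dict], str]] = {
    "copy_to_storage": lambda event: f"copied {event['file']} to storage account",
    "send_email": lambda event: f"emailed notice about {event['file']}",
}

# The workflow itself is data: one trigger followed by an ordered list of actions.
WORKFLOW = {
    "trigger": "onedrive_file_uploaded",
    "actions": ["copy_to_storage", "send_email"],
}


def run_workflow(workflow: dict, event_type: str, event: dict) -> List[str]:
    """Run the workflow's actions in order if the event matches its trigger."""
    if event_type != workflow["trigger"]:
        return []
    results = []
    for name in workflow["actions"]:
        if name not in ACTIONS:
            # Anything outside the predefined catalogue is rejected.
            raise ValueError(f"unsupported action: {name}")
        results.append(ACTIONS[name](event))
    return results


if __name__ == "__main__":
    print(run_workflow(WORKFLOW, "onedrive_file_uploaded", {"file": "report.docx"}))
```

The `ValueError` branch is where the limitation bites: a step that is not in the catalogue simply cannot be expressed.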

Advantages:

- It is very easy to get started

- It has very good integration for Business to Business operations

- There is a small learning curve

- It’s a fully managed service

- It requires no custom code

- There is excellent integration with both Azure-based and 3rd party services

Disadvantages:

- It is a bit tricky to monitor LogicApps, and doing so requires access to the portal

- It doesn’t allow any custom code

Verdict:

LogicApps are one level up from ‘Azure Functions’. They have an excellent plug n’ play functionality, work well with many services and allow you to create complex workflows. Unfortunately, they are totally inflexible for implementing any process that deviates from the predefined set of rules. And this is the main value proposition for ‘Azure Functions’. They take over where LogicApps stop and can let you design custom integrations. For your workflows, start with LogicApps and move to ‘Azure Functions’ when you hit a roadblock.

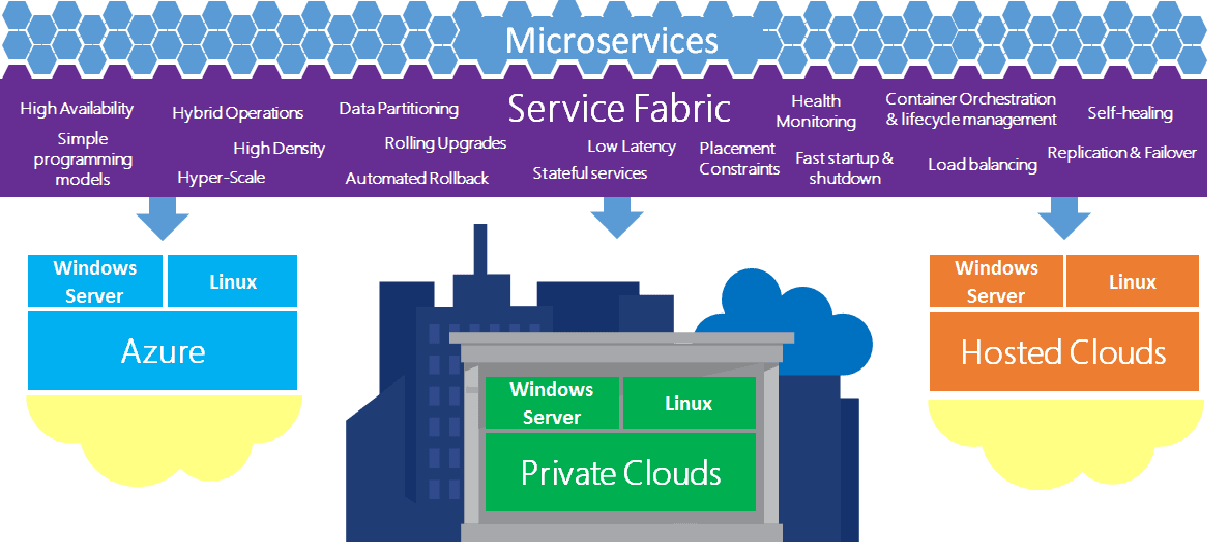

Azure Service Fabric

‘Azure Service Fabric’ is a distributed systems platform that makes it easy to package, deploy, and manage scalable and reliable microservices. Service Fabric consists of compute nodes which are managed by Azure. Unlike Virtual Machine Scale Sets (VMSS), Service Fabric removes the administrative needs of the underlying infrastructure. Service Fabric addresses the challenges in developing and managing cloud applications by allowing you to focus on implementing mission-critical workloads that are scalable, reliable, and manageable. You can think of it as middleware that supports both Windows and Linux workloads. An interesting twist is that you can run Service Fabric in any environment, either in the cloud or on premises, so it’s not exclusively a cloud-based solution.

Advantages:

- Massively scalable

- Easy to manage and self-healing

- Supports highly reliable stateless and stateful microservices

- DevOps end-to-end

- Supports multiple languages

- Supports local development using the Service Fabric SDK

Disadvantages:

- Deployment to Service Fabric is likely to require re-architecting the application to support a microservices architecture

- Cluster replication is only supported locally and not across multiple data centres

- Costly if not managed carefully

Verdict:

Azure Service Fabric is a battle-tested service. Many of Azure’s PaaS services run on top of the Service Fabric infrastructure, so it comes with guarantees in terms of performance and reliability. The cluster management features and DevOps story make it a great choice for running multiple applications that abide by the microservices architecture. Its greatest strength may also be one of its weaknesses because developers will need to ensure that their applications are designed to work in this new model. Monolithic applications will need to be broken up to make the solution more scalable and flexible.

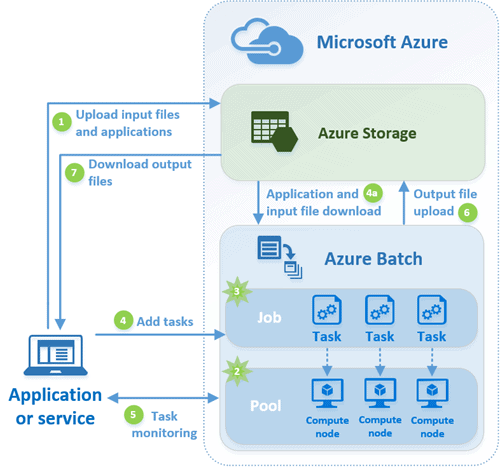

Azure Batch

This service is the hidden gem of HPC (high performance computing) within the Azure Compute service family. As the name implies, Azure Batch is designed to run large-scale and high-performance computing applications efficiently in the cloud. When you’re faced with large workloads, all you have to do is to use Azure Batch to define compute resources to execute your applications in parallel and at the desired scale. A good use-case for Azure Batch would be to perform financial risk modelling, climate data analysis or stress testing. What makes Batch so useful is the fact that you don’t need to manually manage the node cluster, virtual networks or scheduling because all this is handled by the service. You need to define a job, any associated data and the number of nodes you want to utilise. It makes no difference if you need to run on one, a hundred or even thousands of nodes. The service is designed to scale according to the workload needs.
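The job, data and node count model described above can be sketched locally. This is only an analogy: a thread pool stands in for the pool of compute nodes, and `run_job`, `simulate_scenario` and `node_count` are hypothetical names for illustration, not part of the Azure Batch API.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List, Sequence


def run_job(task: Callable[[int], int], inputs: Sequence[int], node_count: int) -> List[int]:
    """Run one task per input across `node_count` workers, preserving input order."""
    if node_count < 1:
        raise ValueError("node_count must be at least 1")
    # The worker pool plays the role of the nodes; scheduling is handled for us.
    with ThreadPoolExecutor(max_workers=node_count) as pool:
        return list(pool.map(task, inputs))


def simulate_scenario(size: int) -> int:
    # Stand-in for a real workload such as one risk-model scenario.
    return sum(i * i for i in range(size))


if __name__ == "__main__":
    # The same job definition runs unchanged on one worker or many.
    print(run_job(simulate_scenario, [1, 2, 3, 4], node_count=2))
```

Changing `node_count` alters how the work is spread out, not how the job is defined, which mirrors the way Batch separates the two.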

Advantages:

- Fully managed High Performance Compute

- Extremely scalable

- Pay per compute use instead of the infrastructure

- Supports both Windows and Linux

Disadvantages:

- Complex tooling for setting up tasks

- The portal experience is complex

- It seems to be a better fit for deploying workloads programmatically; better tooling support would be welcome

Verdict:

Azure Batch is great for running bursts of compute workloads, either scheduled or in response to increased demand. Whereas in the past you would have to manage everything from scheduling to VM maintenance, Batch allows you to run complex tasks in only a few steps. This means that you can migrate existing applications to Azure Batch with relatively small effort and you can take advantage of the elasticity and cost savings that come with Azure. On the other hand, it would be great if the tooling issues could be ironed out and the process to create and deploy Batch tasks was better documented.

Conclusion

Azure today offers a number of managed services that allow developers to create microservices that can run reliably and at scale. Whether you need a simple LogicApp to provide the “glue” between 2 services or a highly scalable, parallel job execution using Batch, you have the power and flexibility to do this both at scale and economically. Regardless of the disadvantages mentioned here, these services get updated constantly with more features and documentation added all the time to help you make the best of the cloud.
