Azure can create sticker shock for some companies as they venture into the cloud. As a database administrator, it is your job to fine-tune your environment to mitigate those costs the best you can.

Azure SQL Database Purchasing Models

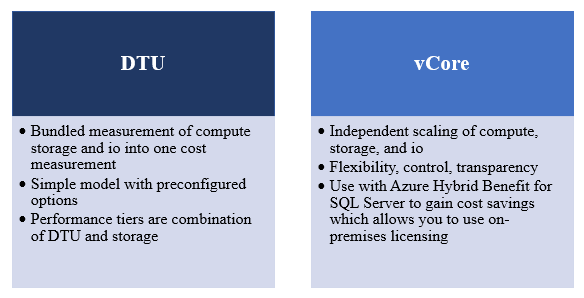

Microsoft gives you two purchasing models for your Azure SQL Databases (SQL DB), a DTU (Database Transaction Unit) and a vCore based model (logical CPU’s). Both options allow you to scale up or down based on your compute and storage requirements. Regardless of which model you choose, performance tuning can save you money when using SQL DB.

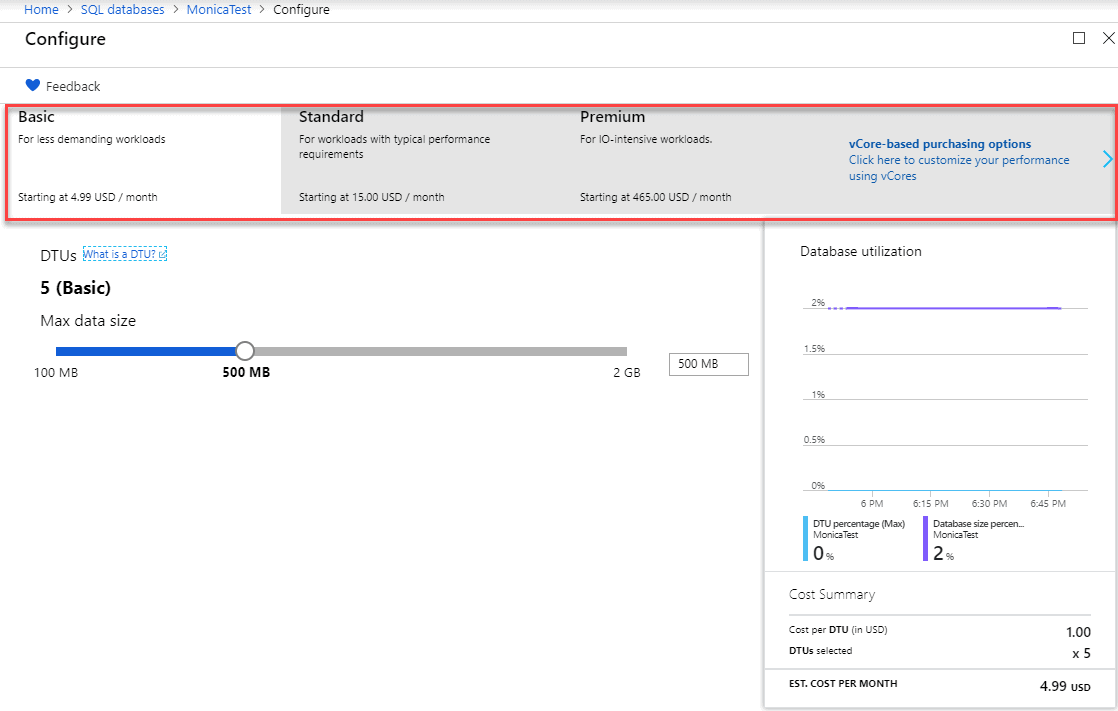

DTU (s) measure “a blended measure of CPU, memory, and data I/O and transaction log I/O” that Microsoft uses to help estimate which DTU based Service Tier you should be using. By reducing your I/O, memory or CPU consumption, you utilize less DTUs or cores and thus can step down service tiers. Stepping down service tiers helps to save money.

Stepping down tiers in the portal is just a matter of going into your SQL DB configuration, choosing a lower tier and clicking Apply.

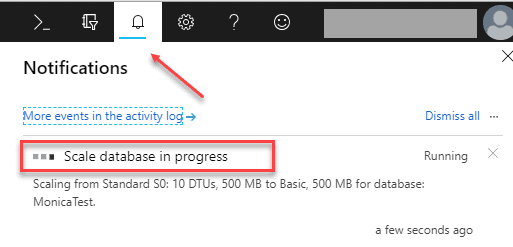

You will see in the in the notifications on the Right side that your scaling is in progress with no downtime.

Azure Automation Runbooks

It’s all about reducing the resources you consume. Just like On-Prem databases, Azure databases need performance tuning. However, unlike most On-Prem environments Azure makes it easy to scale down SQL DB processing resources without any downtime. On top of that, it allows you to save thousands of dollars a month while doing it.

For instance, consider a common scenario we have all seen. Most environments have some type of ETL process that loads data into a database used for reporting. In most of these cases, this can be a resource-intensive process requiring a very high DTU service tier to accomplish this potentially periodic data load. What if you could scale your environment to a higher DTU service tier to meet this load demand and then scale it down when not needed? Imagine how much money you could save the company. In a recent talk with a friend of mine, he was able to implement such a process so that his company’s monthly Azure SQLDB bill went from $55k a month to $21k. That’s an astronomical saving (note this a very large environment).

Let’s look at another scenario. Imagine if you worked for a company that has higher seasonal demands like retail or tax companies. Wouldn’t it be beneficial to be able to add more servers to your environment to handle the workload during your highest demand times? Azure lets you add servers, at will, as well as remove them. For instance, you could spin up additional servers to handle your four-month tax season and then turn them off the remaining nine months of the year. With on-premises hardware, you can’t just return the hardware during slow months to save money, unlike Azure where you can simply turn them off.

Using Azure Automation Runbooks makes the process of scaling your environment easy. As a database administrator, you can easily automate scaling your Azure SQL Database vertically according to any schedule you define even multiple times a day. If your environment has predictable peak times, you can simply move your workload to higher compacity levels during those hours and decrease your levels during off times. Here is a resource for scaling using a scheduled auto scale.

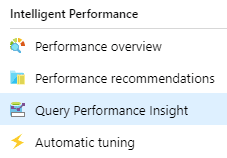

If scaling down based on workload doesn’t fit your environment and you are still looking for ways to save money, start index tuning. An easy place to start is by looking at your heaviest I/O consumption queries, and you can use the Azure SQL Database Query Performance Insights to help you identify these. Reducing the number of reads is the goal. The fewer reads you have, the less I/O and memory consumption you use and the smaller the DTU service tier you need. This is fine for monitoring each Azure SQL Database, but for insights on your entire estate, across cloud and on-premises, then a tool like SQL Monitor is needed.

Automated Tuning

If index tuning is not something you do well, Azure SQL Database includes an Automated Tuning option that you can enable, and it will create indexes (and potentially remove newly created, underutilized indexes) for you. Let’s dive into that further.

Managing indexes are a big part of a Database Administrators job whether it’s in Azure or on-premises. Indexes provide an essential way to performance tune queries, doing it right can be a time-consuming process. Microsoft has long given us DBA tools and suggestions to help tune databases, to fix queries with indexes, and many times they were of questionable merit. We have all seen the dreaded dta_index names in a database and rolled our eyes knowing that sometimes these indexes are not what is needed or create duplicates. While the Database Tuning Advisor, has gotten better over time, it is still not a tool more senior DBAs use. Microsoft made big steps in helping DBAs with performance with the introduction of Query Store in both Azure SQL Database and SQL Server 2016. Query Store collects execution and run-time performance information including plan regressions. Microsoft has taken an additional leap with the introduction of Automatic Tuning. Automatic Tuning is an intelligent performance tuning service which is the results of machine learning processes being applied directly to data generated by Query Store.

By continuously monitoring queries, Automatic Tuning can quickly and intelligently improve their performance. Since it is based on machine learning, it adapts to changing workloads and therefore is better at index recommendations than previously. You can enable it to Create Indexes, Drop Indexes and Force the Last Good Plan on the database level (which is the feature that is available in SQL Server 2017), so it’s not an all or nothing feature. I prefer not to have things automatically done, so given that you can set index creation or plan correction to allow you to manually apply recommendations using the portal is a great feature. According to Microsoft, there is a benefit to having it automatically making changes. They state: “The benefits of letting the system autonomously apply tuning recommendations for you is that in such case it automatically validates there exists a positive gain to the workload performance, or otherwise if a regression is detected it will automatically revert the tuning recommendation.” When manually applying suggestions the reversal mechanism is not available.

To Enable Automatic Tuning

Log in to the Azure Portal

Go to your SQL Database and click on it

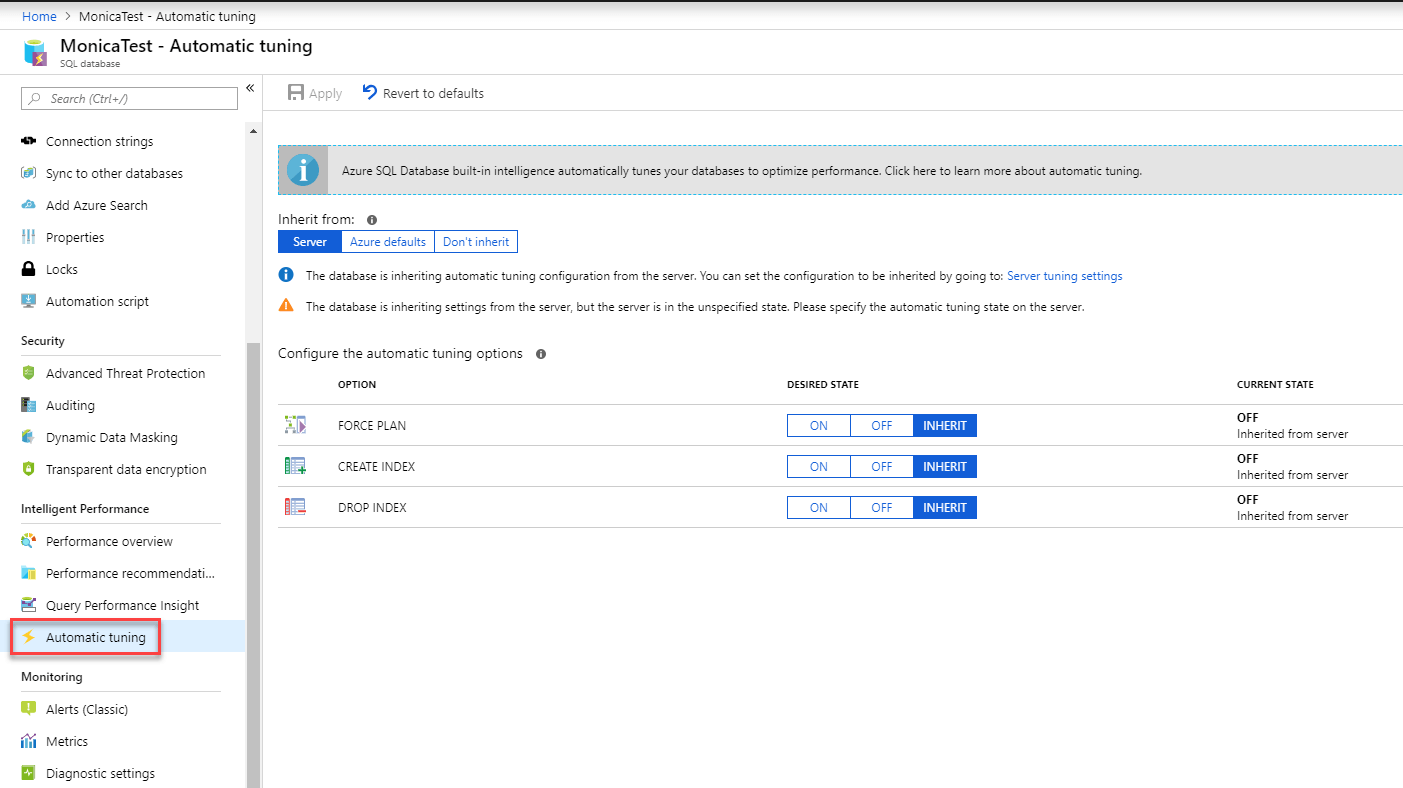

On the menu to the left Choose Automatic Tuning

Here you can toggle on and off each option separately. When I first started using it, I tended not to let it DROP indexes, now that I am more familiar with it, I realize it only drops those it created and knows based on AI knows if they are useful or not. The fact that Automatic Tuning was developed and tested over millions of different real-world workloads in Azure makes this a very promising feature for me. But like any good database administrator testing and seeing the results in your environment is key.

Automatic tuning options available in Azure SQL Database are:

|

Option |

Description |

|

CREATE INDEX |

Identifies indexes that may improve the performance of your workload, creates indexes, and automatically verifies that performance of queries has improved |

|

DROP INDEX |

Identifies redundant and duplicate indexes daily, except for unique indexes, and indexes that were not used for a long time (>90 days). |

|

FORCE LAST GOOD PLAN |

Identifies SQL queries using an execution plan that is slower than the previous good plan, and queries using the last known good plan instead of the regressed plan. |

If you choose not to use the GUI you can enable these using T-SQL as well.

ALTER DATABASE current SET AUTOMATIC_TUNING = AUTO | INHERIT | CUSTOM

ALTER DATABASE current SET AUTOMATIC_TUNING (FORCE_LAST_GOOD_PLAN = ON, CREATE_INDEX = DEFAULT, DROP_INDEX = OFF)

The force_last_good_plan flag will work in SQL Server 2017 as well.

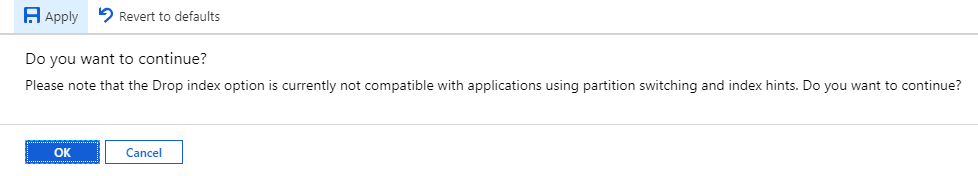

When you choose to enable DROP INDEX and APPLY it will remind you of the following.

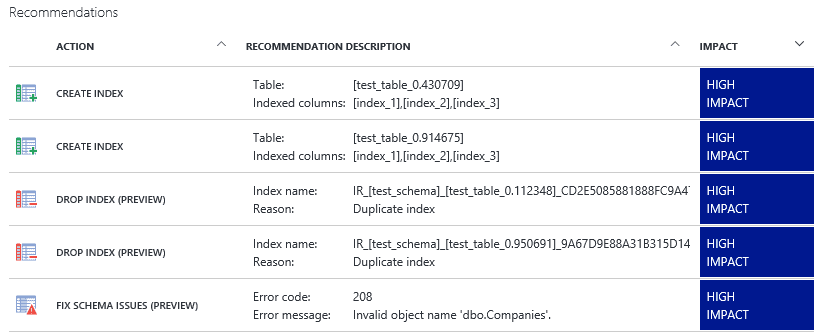

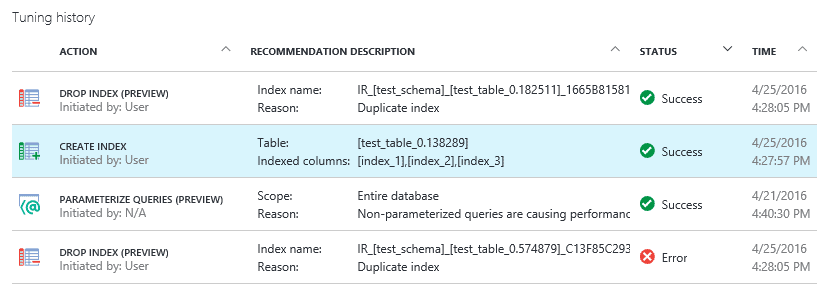

This image from MSDN shows what recommendations look like in Azure. It keeps a very nice history, so you can follow the changes over time on the Performance recommendations page. To manually run the recommendations, you can click on any one of them and then click View Script to have a script to run against your database.

Recommendations sometimes are not applied right away as Azure makes sure it does not interfere with the workload and may hold them. Again, Microsoft is making strides to better their processes which I applaud. You will see several “states” of recommendations shown in the table below from MSDN. This is a big win for me.

|

State |

Description |

|

Pending |

Apply recommendation command has been received and is scheduled for execution. |

|

Executing |

The recommendation is being applied. |

|

Verifying |

Recommendation was successfully applied, and the service is measuring the benefits. |

|

Success |

Recommendation was successfully applied, and benefits have been measured. |

|

Error |

An error occurred during the process of applying the recommendation. This can be a transient issue, or possibly a schema change to the table and the script is no longer valid. |

|

Reverting |

The recommendation was applied but has been deemed non-performant and is being automatically reverted. |

|

Reverted |

The recommendation was reverted. |

Conclusion

So far, I think Microsoft is on the right track with this. I look forward to seeing what else they come up with. Creating and managing your indexes is one of the simplest ways to reduce Azure related costs, and Microsoft has built-in the tools to help. You’ll be surprised how quickly you can make an impact on your bottom line with Index tuning.

These are only some ways you can save money on your monthly Azure bill. Sticker shock can be a real thing with companies venturing into the cloud. It is the database administrators’ job to constantly monitor works loads and find ways to reduce that cost. If the sticker shock becomes an issue remind them that Azure SQL DB saves in many ways.

Azure SQL DB reduces administrative and hardware costs as well. SQL DB has no hardware, operating systems or database software that you must manage. It provides built-in high availability and disaster recovery, database backups, and manages all your upgrades. Therefore, there is no need for redundant hardware, extensive backup storage, and no downtime windows. The cost savings can be substantial.

Load comments