JSON was initially designed for the informal transfer of data that has no schema. It has no concept of a table, or of an array of identical arrays. This means that it must tell you each key for each object, even if the original data object was a table. With the happy minimum of key-value pairs, the JSON document that is produced will produce tabular data that tells you the column to which the data object belongs, and it will do a reasonable job of implying a data type. Sadly, that just isn’t enough for us because JSON only recognises just four base data types; string, number, Boolean and null. In SQL Server, we’re used to a lot more than that. BSON and other extensions supplement this with functions that coerce the string into the correct datatype, but we don’t need to do that because all of the data for any one column will be under the same constraints. In fact, there is a lot of redundant data in a JSON document produced by SQL Server that represents a table or result. Not only that, but there isn’t enough of the right sort of metadata until we add a JSON Schema.

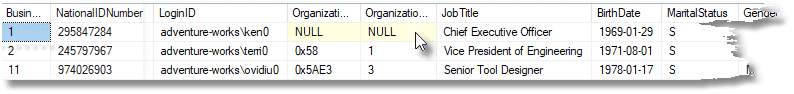

CSV provides the most economical way of transferring tabular data as an ASCII file, but SQL Server doesn’t always support it properly to the rfc4180 standard. However, JSON can transfer tabular data in a way that is almost as economical in space, and more reliably. Here, just to convince you that it is compact, are the first three records of Adventureworks humanResources.employee table in array-of-array JSON.

|

1 2 3 4 |

[[1,"295847284","adventure-works\\ken0",null,null,"Chief Executive Officer","1969-01-29","S","M","2009-01-14",true,99,69,true,"F01251E5-96A3-448D-981E-0F99D789110D","2014-06-30T00:00:00"], [2,"245797967","adventure-works\\terri0","\/1\/",1,"Vice President of Engineering","1971-08-01","S","F","2008-01-31",true,1,20,true,"45E8F437-670D-4409-93CB-F9424A40D6EE","2014-06-30T00:00:00"], [11,"974026903","adventure-works\\ovidiu0","\/1\/1\/5\/",3,"Senior Tool Designer","1978-01-17","S","M","2010-12-05",false,7,23,true,"F68C7C19-FAC1-438C-9BB7-AC33FCC341C3","2014-06-30T00:00:00"] ] |

It is valid JSON (RFC 4627). It isn’t the usual way of storing table data, which is much more verbose, being an array of JSON objects of key-value pairs. We are no longer bound to do this the conventional way because we can now transfer the metadata with the data. The JSON Schema tells you how it is stored.

JSON can be persuaded into this array-of-array format, and if we can read and write it in SQL Server, then we can use it. To prove this, we need to be able to save a database in this format, and to save the schema in this format.

In this article, I’ll demonstrate how to produce an array-in-array JSON document, and the schema to go with it. With this, you have sufficient information to make it easy to transfer such data.

JSON Arrays to Relational Table

Before we turn to the task of producing this sort of data from a table or expression we ought to show to turn the specimen json document that I’ve just shown you back into a relational table. We’ll start by doing the task manually, without a schema, as if it were CSV. You might think I’m being ironic when you first see the code that performs this action, but no. We can then go on to generate all the laborious stuff automatically:

Let’s shred our sample JSON. We have two alternatives. We can do it the JSON_Value way, referencing array elements within the row rather than key/value pairs.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 |

DECLARE @MyJSON NVARCHAR(MAX) ='[ [1,"295847284","adventure-works\\ken0",null,null,"Chief Executive Officer","1969-01-29","S","M","2009-01-14",true,99,69,true,"F01251E5-96A3-448D-981E-0F99D789110D","2014-06-30T00:00:00"], [2,"245797967","adventure-works\\terri0","\/1\/",1,"Vice President of Engineering","1971-08-01","S","F","2008-01-31",true,1,20,true,"45E8F437-670D-4409-93CB-F9424A40D6EE","2014-06-30T00:00:00"], [11,"974026903","adventure-works\\ovidiu0","\/1\/1\/5\/",3,"Senior Tool Designer","1978-01-17","S","M","2010-12-05",false,7,23,true,"F68C7C19-FAC1-438C-9BB7-AC33FCC341C3","2014-06-30T00:00:00"] ] ' SELECT Convert(int,Json_Value(value, 'strict $[0]')) as [BusinessEntityID], Convert(nvarchar(15),Json_Value(value, 'strict $[1]')) as [NationalIDNumber], Convert(nvarchar(256),Json_Value(value, 'strict $[2]')) as [LoginID], Convert(hierarchyid,Json_Value(value, 'strict $[3]')) as [OrganizationNode], Convert(smallint,Json_Value(value, 'strict $[4]')) as [OrganizationLevel], Convert(nvarchar(50),Json_Value(value, 'strict $[5]')) as [JobTitle], Convert(date,Json_Value(value, 'strict $[6]')) as [BirthDate], Convert(nchar(1),Json_Value(value, 'strict $[7]')) as [MaritalStatus], Convert(nchar(1),Json_Value(value, 'strict $[8]')) as [Gender], Convert(date,Json_Value(value, 'strict $[9]')) as [HireDate], Convert(bit,Json_Value(value, 'strict $[10]')) as [SalariedFlag], Convert(smallint,Json_Value(value, 'strict $[11]')) as [VacationHours], Convert(smallint,Json_Value(value, 'strict $[12]')) as [SickLeaveHours], Convert(bit,Json_Value(value, 'strict $[13]')) as [CurrentFlag], Convert(uniqueidentifier,Json_Value(value, 'strict $[14]')) as [rowguid], Convert(datetime,Json_Value(value, 'strict $[15]')) as [ModifiedDate] FROM OpenJson(@myJSON) AS lines; Go |

You can make sure that it is returning all the data in the correct format, even the pesky hierarchy ID, by doing a SELECT INTO. There is a big, big, problem here, though, that they don’t warn you about. JSON_Value has a maximum of 4000 for a string.

For data of any size, we will need to use the second alternative, OPENJSON. This has the rather neater Explicit Schema syntax. Note that, in our rendition of the explicit schema used by OpenJSON, we need to reference array elements rather than key/value pairs

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 |

SELECT BusinessEntityID, NationalIDNumber, LoginID, OrganizationLevel, JobTitle, BirthDate, MaritalStatus, Gender, HireDate, SalariedFlag, VacationHours, SickLeaveHours, CurrentFlag, rowguid, ModifiedDate FROM OpenJson(@myJSON) WITH ( BusinessEntityID INT 'strict $[0]', NationalIDNumber NVARCHAR(15) 'strict $[1]', LoginID NVARCHAR(256) 'strict $[2]', --[OrganizationNode] hierarchyid 'strict $[3]', OrganizationLevel SMALLINT 'strict $[4]', JobTitle NVARCHAR(50) 'strict $[5]', BirthDate DATE 'strict $[6]', MaritalStatus NCHAR(1) 'strict $[7]', Gender NCHAR(1) 'strict $[8]', HireDate DATE 'strict $[9]', SalariedFlag BIT 'strict $[10]', VacationHours SMALLINT 'strict $[11]', SickLeaveHours SMALLINT 'strict $[12]', CurrentFlag BIT 'strict $[13]', rowguid UNIQUEIDENTIFIER 'strict $[14]', ModifiedDate DATETIME 'strict $[15]' ); GO |

Unfortunately, in this ‘explicit schema’ format, they haven’t yet got around to supporting CLR types so you can’t create a HierarchyID from JSON this way. Instead, you need to specify the CLR type as being NVARCHAR and coerce it into its CLR type in the result, thus with the OrganizationNode in the HumanResources.Employee table ….

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 |

SELECT BusinessEntityID, NationalIDNumber, LoginID, Convert(HIERARCHYID,OrganizationNode) AS "OrganizationNode", JobTitle, BirthDate, MaritalStatus, Gender, HireDate, SalariedFlag, VacationHours, SickLeaveHours, CurrentFlag, rowguid, ModifiedDate FROM OpenJson(@myJSON) WITH ( BusinessEntityID INT 'strict $[0]', NationalIDNumber NVARCHAR(15) 'strict $[1]', LoginID NVARCHAR(256) 'strict $[2]', [OrganizationNode] nvarchar(30) 'strict $[3]', OrganizationLevel SMALLINT 'strict $[4]', JobTitle NVARCHAR(50) 'strict $[5]', BirthDate DATE 'strict $[6]', MaritalStatus NCHAR(1) 'strict $[7]', Gender NCHAR(1) 'strict $[8]', HireDate DATE 'strict $[9]', SalariedFlag BIT 'strict $[10]', VacationHours SMALLINT 'strict $[11]', SickLeaveHours SMALLINT 'strict $[12]', CurrentFlag BIT 'strict $[13]', rowguid UNIQUEIDENTIFIER 'strict $[14]', ModifiedDate DATETIME 'strict $[15]' ); |

This works fine but complicates the code. If you are inserting into a table, you can rely on implicit coercion of the datatype to convert the NVARCHAR into a hierarchyid or geography.

As well as getting this from the JSON Schema if it is available, we can get the explicit schema as well as the JSON_Value() column list. We can use either the schema or the sys.dm_exec_describe_first_result_set directly. The latter approach allows you to create a JSON Schema from any expression, which greatly extends the usefulness of this approach. if you already have the table that matches the JSON data, you merely specify a SELECT * from your table ( we used Humanresources.Employee) and the result contains your spec which you can then paste into the browser pane. This time, just so it is a bit more obvious what’s going on, I’ll do it without the aggregation of the lines. It is a bit more manual because you need to cut and paste the result, and don’t forget to nick out that last comma.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 |

/* the JSON_VALUE syntax */ DECLARE @TheExpression sysname='adventureworks2016.HumanResources.Employee' DECLARE @SelectStatement NVARCHAR(200)=(SELECT 'Select * from '+@TheExpression) SELECT 'convert('+System_type_name + ',Json_Value(value, ''strict $['+ Convert(VARCHAR(3),column_ordinal-1)+']'')) as ['+f.name+']' FROM sys.dm_exec_describe_first_result_set (@SelectStatement, NULL, 1) AS f ORDER BY column_ordinal go /* the WITH Explicit Schema syntax */ DECLARE @TheExpression sysname='adventureworks2016.HumanResources.Employee' DECLARE @SelectStatement NVARCHAR(200)=(SELECT 'Select * from '+@TheExpression) SELECT '['+f.name+']'+ ' '+System_type_name + ' ''strict $['+ Convert(VARCHAR(3),column_ordinal-1)+']'',' FROM sys.dm_exec_describe_first_result_set (@SelectStatement, NULL, 1) AS f ORDER BY column_ordinal Go |

That select statement can be derived like this …

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 |

DECLARE @TheExpression sysname='adventureworks2016.HumanResources.Employee' DECLARE @SelectStatement NVARCHAR(200)=(SELECT 'Select * from '+@TheExpression) Select String_Agg( CASE WHEN user_type_id in (128,129,130) THEN 'convert('+system_type_name+','+name+') as "'+[name]+'"' --hierarchy (128) geometry (130) and geography types (129) can be coerced. WHEN user_type_id in (35) THEN 'convert(varchar(max),'+name+') as "'+[name]+'"' WHEN user_type_id in (99) THEN 'convert(nvarchar(max),'+name+') as "'+[name]+'"' WHEN user_type_id in (34) THEN 'convert(varbinary(max),'+name+') as "'+[name]+'"' ELSE quotename([name]) END,', ') FROM sys.dm_exec_describe_first_result_set (@SelectStatement, NULL, 1) |

Relational table to JSON Array

You can store tables or results from SQL Server in this economical format, though the process of generating the data isn’t so pretty. FOR JSON doesn’t support this directly, which is sad. Somehow, I was hoping for FOR JSON RAW.

Just as with CSV, the array-within-array format is only valuable if both ends of the data transfer are aware of enough of the schema/metadata to transform the document into a table. We’ll go into the detail of how we do this with JSON Schema later. The first task is to squeeze the array-in-array format from the somewhat reluctant OpenJSON function.

To get the JSON in array-of-array format from a particular table, and we’ve chosen adventureworks2016.person.person, you do this in SQL Server 2017. I’ve used the String_Agg() aggregation function, but you can do it as easily, but more messily, via the XML trick if you are on SQL Server 2016. Here is a simplified version of the way that we’ll do it

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 |

DECLARE @TheData NVARCHAR(MAX)=( SELECT '['+ String_Agg(f.EachLine,',')+']' FROM (SELECT '['+String_Agg ( CASE WHEN shredded.type=1 THEN '"'+String_Escape(Coalesce(shredded.value,'null'),'json')+'"' ELSE Coalesce(shredded.value,'null') END, ',') +']' AS TheValue FROM OpenJson((SELECT * FROM adventureworks2016.person.person FOR JSON AUTO, INCLUDE_NULL_VALUES )) f CROSS apply OpenJson(f.value) shredded GROUP BY f.[Key])f(EachLine) ) |

Note that we need to specify ‘INCLUDE_NULL_VALUES’ in that OpenJSON expression. This is because we need all the columns to be converted in that array, even if they are null in some rows. If we don’t we get the array in the right order but with maybe one or more fields missing, but without being able to know which!

This is much faster than the old way we did the conversion to JSON, using XML.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 |

DECLARE @OurTable XML=(SELECT * FROM adventureworks2016.person.person FOR XML path, ROOT('root') ) DECLARE @Json nvarchar(MAX)=(SELECT '['--create a list (rows) of lists (tuples) +Stuff( --we want to snip out the leading comma (SELECT TheLine from --this is to glue each row into a string (SELECT ', ['+ --this is the start of the row, representing the row object in the JSON list --the local-name(.) is an eXPath function that gives you the name of the node Stuff((SELECT ',"'+ b.c.value('text()[1]','NVARCHAR(MAX)') +'"' -- 'text()[1]' gives you the text contained in the node from x.a.nodes('*') b(c) --get the row XML and split it into each node for xml path(''),TYPE).value('(./text())[1]','NVARCHAR(MAX)') ,1,1,'')+']'--remove the first comma from @OurTable.nodes('/root/*') x(a) --get every row ) JSON(theLine) --each row for xml path(''),TYPE).value('.','NVARCHAR(MAX)' ) ,1,1,'')--remove the first leading comma +' ] ') |

Whereas the XML version unfortunately shares a vice with the JSON version, because it also dislikes tables with CLR Datatypes and ‘errors out’.

|

1 2 |

Msg 13604, Level 16, State 1, Line 3 FOR JSON cannot serialize CLR objects. Cast CLR types explicitly into one of the supported types in FOR JSON queries. |

This is a shame. Where there are no CLR types, it is possible to save the entire contents of a database with a routine like this, which is very satisfying. Note we have a hard-wired directory path which we ought to avoid. You’ll need to alter that to a suitable server directory if you want to try this out.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 |

EXEC sp_msforeachtable ' print ''Creating JSON for ?'' DECLARE @TheData NVARCHAR(MAX)=( SELECT ''[''+ String_Agg(f.EachLine,'','')+'']'' FROM (SELECT ''[''+String_Agg ( CASE WHEN shredded.type=1 THEN ''"''+String_Escape(Coalesce(shredded.value,''null''),''json'')+''"'' ELSE Coalesce(shredded.value,''null'') END, '','') +'']'' AS TheValue FROM OpenJson((SELECT * FROM ? FOR JSON AUTO, INCLUDE_NULL_VALUES )) f CROSS apply OpenJson(f.value) shredded GROUP BY f.[Key])f(EachLine) ) CREATE TABLE ##myTemp (Bulkcol nvarchar(MAX)) INSERT INTO ##myTemp (Bulkcol) SELECT @TheData print ''Writing out ?'' EXECUTE xp_cmdshell ''bcp ##myTemp out C:\data\RawData\JsonData\adventureworks\?.JSON -c -C 65001 -T'' DROP TABLE ##myTemp' |

Instead of this, We’ll add supporting temporary procedure to do the difficult bits that I’ve already illustrated

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 61 62 63 64 65 66 67 68 69 70 71 72 73 74 75 76 77 78 79 80 81 82 83 84 85 86 87 88 89 90 91 92 93 94 95 96 |

CREATE OR ALTER PROCEDURE #ArrayInArrayJsonDataFromTable /** Summary: > This gets the JSON data from a table in Array Author: phil factor Date: 26/10/2018 Examples: > - use Adventureworks2016 DECLARE @Json NVARCHAR(MAX) EXECUTE #ArrayInArrayJsonDataFromTable @database='pubs', @Schema ='dbo', @table= 'authors', @JSONData=@json OUTPUT PRINT @Json - DECLARE @Json NVARCHAR(MAX) EXECUTE #ArrayInArrayJsonDataFromTable @TableSpec='bigpubs.[dbo].[oldTitles]',@JSONData=@json OUTPUT PRINT @Json Returns: > The JSON data **/ (@database sysname = NULL, @Schema sysname = NULL, @table sysname = NULL, @Tablespec sysname = NULL, @jsonData NVARCHAR(MAX) OUTPUT ) AS BEGIN DECLARE @Data NVARCHAR(MAX); IF Coalesce(@table, @Tablespec) IS NULL OR Coalesce(@Schema, @Tablespec) IS NULL RAISERROR('{"error":"must have the table details"}', 16, 1); IF @table IS NULL SELECT @table = ParseName(@Tablespec, 1); IF @Schema IS NULL SELECT @Schema = ParseName(@Tablespec, 2); IF @database IS NULL SELECT @database = Coalesce(ParseName(@Tablespec, 3),Db_Name()); IF @table IS NULL OR @Schema IS NULL OR @database IS NULL RAISERROR('{"error":"must have the table details"}', 16, 1); DECLARE @SourceCode NVARCHAR(255) = ( SELECT 'SELECT * FROM ' + QuoteName(@database) + '.' + QuoteName(@Schema) + '.' + QuoteName(@table) ); DECLARE @params NVARCHAR(MAX) =( SELECT String_Agg( CASE WHEN user_type_id IN (128, 129, 130) THEN'convert(nvarchar(100),' + name + ') as "' + name + '"' --hierarchyid (128) geometry (130) and geography types (129) can be coerced. WHEN user_type_id IN (35) THEN 'convert(varchar(max),' + name + ') as "' + name + '"' WHEN user_type_id IN (99) THEN 'convert(nvarchar(max),' + name + ') as "' + name + '"' WHEN user_type_id IN (34) THEN 'convert(varbinary(max),' + name + ') as "' + name + '"' ELSE QuoteName(name) END, ', ' ) FROM sys.dm_exec_describe_first_result_set(@SourceCode, NULL, 1) ); DECLARE @expression NVARCHAR(800) = ' USE ' + @database + ' SELECT @TheData=(SELECT ' + @params + ' FROM ' + QuoteName(@database) + '.' + QuoteName(@Schema) + '.' + QuoteName(@table) + ' FOR JSON auto, INCLUDE_NULL_VALUES)'; EXECUTE sp_executesql @expression, N'@TheData nvarchar(max) output', @TheData = @Data OUTPUT; SELECT @jsonData ='['+ String_Agg(f.EachLine,',')+']' FROM (SELECT '['+String_Agg ( CASE WHEN shredded.type=1 THEN '"'+String_Escape(Coalesce(shredded.value,'null'),'json')+'"' ELSE Coalesce(shredded.value,'null') END, ',') +']' AS TheValue FROM OpenJson(@data) f CROSS apply OpenJson(f.value) shredded GROUP BY f.[Key])f(EachLine) END; GO DECLARE @ourPath sysname = 'C:\data\RawData\JsonData\AdventureWorks\'; Declare @command NVARCHAR(4000)= ' print ''Creating JSON file for ?'' DECLARE @Json NVARCHAR(MAX) EXECUTE #ArrayInArrayJsonDataFromTable @TableSpec=''?'',@JSONData=@json OUTPUT CREATE TABLE ##myTemp (Bulkcol nvarchar(MAX)) INSERT INTO ##myTemp (Bulkcol) SELECT @JSON print ''Writing out ?'' EXECUTE xp_cmdshell ''bcp ##myTemp out '+@ourPath+'?.JSON -c -C 65001 -T'' DROP TABLE ##myTemp' EXECUTE sp_msforeachtable @command GO |

Now this isn’t fast: it is two minutes rather than eighteen seconds to dump out Adventureworks in native mode or twenty-one seconds in tab-delimited mode. However we want JSON, especially as we can validate it and distinguish between blank strings and nulls, and attach a schema to the table

Adding a schema.

The schema that accompanies this is reasonably simple to generate, Here, just to illustrate how we do it, is a batch that does it for the query ‘SELECT * FROM adventureworks2016.HumanResources.Employee‘.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 |

DECLARE @schema NVARCHAR(4000) SELECT @schema=SELECT 'https://mml.uk/jsonSchema/HREmployeeArray.json’ AS id,--just a unique reference to a real place 'http://json-schema.org/draft-04/schema#' AS [schema],--the minimum standard you want to use 'Array (rows) within an array (table) of adventureworks2016.HumanResources.Employee' AS description, 'array' AS type, 'array' AS [items.type], ( SELECT f.name, --the individual columns as an array of objects with standard and custom fields CASE WHEN f.is_nullable = 1 THEN Json_Query('["null","'+f.type+'"]') -- must be array! ELSE Json_Query('["'+f.type+'"]') END AS [type],--must be an array! f.SQLtype, f.is_nullable, Coalesce(EP.value,'') AS description FROM (--the basic columns we need. (the type is used more than once in the outer query) SELECT r.name, r.system_type_name AS sqltype, r.source_column, r.is_nullable, CASE WHEN r.system_type_id IN (58,52,56,58,59,60,62,106,108,122,127) THEN 'number' WHEN system_type_id =104 THEN 'boolean' ELSE 'string' END AS type, Object_Id(r.source_database + '.' + r.source_schema + '.' + r.source_table) AS table_id FROM sys.dm_exec_describe_first_result_set ('SELECT * FROM adventureworks2016.HumanResources.Employee', NULL, 1) AS r ) AS f LEFT OUTER JOIN sys.extended_properties AS EP -- to get the extended properties ON EP.major_id = f.table_id AND EP.minor_id = ColumnProperty(f.table_id, f.source_column, 'ColumnId') AND EP.name = 'MS_Description' AND EP.class = 1 FOR JSON PATH ) AS [items.items] FOR JSON PATH, WITHOUT_ARRAY_WRAPPER; |

This turns out a JSON Schema that, nicely formatted, looks like this

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 61 62 63 64 65 66 67 68 69 70 71 72 73 74 75 76 77 78 79 80 81 82 83 84 85 86 87 88 89 90 91 92 93 94 95 96 97 98 99 100 101 102 103 104 105 106 107 108 109 110 111 112 113 114 115 116 117 118 119 120 121 122 123 124 125 126 127 128 129 130 131 132 133 134 135 136 137 138 139 140 141 142 143 144 145 146 147 148 149 150 151 152 153 154 155 156 157 158 159 160 161 162 163 164 165 166 167 168 169 170 171 172 |

"id": "https://mml.uk/jsonSchema/HREmployeeArray.json", "schema": "http://json-schema.org/draft-04/schema#", "description": "Array (rows) within an array (table) of adventureworks2016.HumanResources.Employee", "type": "array", "items": { "type": "array", "items": [ { "name": "BusinessEntityID", "type": [ "number" ], "SQLtype": "int", "is_nullable": false, "column_ordinal": 1, "description": "Primary key for Employee records. Foreign key to BusinessEntity.BusinessEntityID." }, { "name": "NationalIDNumber", "type": [ "string" ], "SQLtype": "nvarchar(15)", "is_nullable": false, "column_ordinal": 2, "description": "Unique national identification number such as a social security number." }, { "name": "LoginID", "type": [ "string" ], "SQLtype": "nvarchar(256)", "is_nullable": false, "column_ordinal": 3, "description": "Network login." }, { "name": "OrganizationNode", "type": [ "null", "string" ], "SQLtype": "hierarchyid", "is_nullable": true, "column_ordinal": 4, "description": "Where the employee is located in corporate hierarchy." }, { "name": "OrganizationLevel", "type": [ "null", "number" ], "SQLtype": "smallint", "is_nullable": true, "column_ordinal": 5, "description": "The depth of the employee in the corporate hierarchy." }, { "name": "JobTitle", "type": [ "string" ], "SQLtype": "nvarchar(50)", "is_nullable": false, "column_ordinal": 6, "description": "Work title such as Buyer or Sales Representative." }, { "name": "BirthDate", "type": [ "string" ], "SQLtype": "date", "is_nullable": false, "column_ordinal": 7, "description": "Date of birth." }, { "name": "MaritalStatus", "type": [ "string" ], "SQLtype": "nchar(1)", "is_nullable": false, "column_ordinal": 8, "description": "M = Married, S = Single" }, { "name": "Gender", "type": [ "string" ], "SQLtype": "nchar(1)", "is_nullable": false, "column_ordinal": 9, "description": "M = Male, F = Female" }, { "name": "HireDate", "type": [ "string" ], "SQLtype": "date", "is_nullable": false, "column_ordinal": 10, "description": "Employee hired on this date." }, { "name": "SalariedFlag", "type": [ "boolean" ], "SQLtype": "bit", "is_nullable": false, "column_ordinal": 11, "description": "Job classification. 0 = Hourly, not exempt from collective bargaining. 1 = Salaried, exempt from collective bargaining." }, { "name": "VacationHours", "type": [ "number" ], "SQLtype": "smallint", "is_nullable": false, "column_ordinal": 12, "description": "Number of available vacation hours." }, { "name": "SickLeaveHours", "type": [ "number" ], "SQLtype": "smallint", "is_nullable": false, "column_ordinal": 13, "description": "Number of available sick leave hours." }, { "name": "CurrentFlag", "type": [ "boolean" ], "SQLtype": "bit", "is_nullable": false, "column_ordinal": 14, "description": "0 = Inactive, 1 = Active" }, { "name": "rowguid", "type": [ "string" ], "SQLtype": "uniqueidentifier", "is_nullable": false, "column_ordinal": 15, "description": "ROWGUIDCOL number uniquely identifying the record. Used to support a merge replication sample." }, { "name": "ModifiedDate", "type": [ "string" ], "SQLtype": "datetime", "is_nullable": false, "column_ordinal": 16, "description": "Date and time the record was last updated." } ] } } |

Now, if you send the JSON schema with the JSON, either within the same JSON document or separately, you can create the OpenJSON explicit schema from the values in the JSON Schema. Let’s do this manually, just to show the ‘man behind the curtain’.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 |

DECLARE @jsonSchema NVARCHAR(MAX) =' {"id": "http:\/\/mml.uk\/json\/schemas\/ahemployee.json", "schema": "http:\/\/json-schema.org\/draft-04\/schema#", "description": "Array (rows) within an array (table) of adventureworks2016.HumanResources.Employee", "type": "array", "items": {"type": "array", "items": [{"name": "BusinessEntityID", "type": ["number"],"SQLtype": "int", "is_nullable": false,"column_ordinal": 1,"description": "Primary key for Employee records. Foreign key to BusinessEntity.BusinessEntityID."},{"name": "NationalIDNumber", "type": ["string"],"SQLtype": "nvarchar(15)", "is_nullable": false,"column_ordinal": 2,"description": "Unique national identification number such as a social security number."},{"name": "LoginID", "type": ["string"],"SQLtype": "nvarchar(256)", "is_nullable": false,"column_ordinal": 3,"description": "Network login."},{"name": "OrganizationNode", "type": ["null", "string"],"SQLtype": "hierarchyid", "is_nullable": true,"column_ordinal": 4,"description": "Where the employee is located in corporate hierarchy."},{"name": "OrganizationLevel", "type": ["null", "number"],"SQLtype": "smallint", "is_nullable": true,"column_ordinal": 5,"description": "The depth of the employee in the corporate hierarchy."},{"name": "JobTitle", "type": ["string"],"SQLtype": "nvarchar(50)", "is_nullable": false,"column_ordinal": 6,"description": "Work title such as Buyer or Sales Representative."},{"name": "BirthDate", "type": ["string"],"SQLtype": "date", "is_nullable": false,"column_ordinal": 7,"description": "Date of birth."},{"name": "MaritalStatus", "type": ["string"],"SQLtype": "nchar(1)", "is_nullable": false,"column_ordinal": 8,"description": "M = Married, S = Single"},{"name": "Gender", "type": ["string"],"SQLtype": "nchar(1)", "is_nullable": false,"column_ordinal": 9,"description": "M = Male, F = Female"},{"name": "HireDate", "type": ["string"],"SQLtype": "date", "is_nullable": false,"column_ordinal": 10,"description": "Employee hired on this date."},{"name": "SalariedFlag", "type": ["boolean"],"SQLtype": "bit", "is_nullable": false,"column_ordinal": 11,"description": "Job classification. 0 = Hourly, not exempt from collective bargaining. 1 = Salaried, exempt from collective bargaining."},{"name": "VacationHours", "type": ["number"],"SQLtype": "smallint", "is_nullable": false,"column_ordinal": 12,"description": "Number of available vacation hours."},{"name": "SickLeaveHours", "type": ["number"],"SQLtype": "smallint", "is_nullable": false,"column_ordinal": 13,"description": "Number of available sick leave hours."},{"name": "CurrentFlag", "type": ["boolean"],"SQLtype": "bit", "is_nullable": false,"column_ordinal": 14,"description": "0 = Inactive, 1 = Active"},{"name": "rowguid", "type": ["string"],"SQLtype": "uniqueidentifier", "is_nullable": false,"column_ordinal": 15,"description": "ROWGUIDCOL number uniquely identifying the record. Used to support a merge replication sample."},{"name": "ModifiedDate", "type": ["string"],"SQLtype": "datetime", "is_nullable": false,"column_ordinal": 16,"description": "Date and time the record was last updated."}]}}' SELECT String_Agg( Json_Value( value, 'strict $.name')+' '+Json_Value(value,'strict $.SQLtype')+ ' $['+Convert(NvARCHAR(3),Json_Value(value,'strict $.column_ordinal')-1) + ']',', ') FROM OpenJson(@jsonSchema,'$.items.items') |

Now so far, we’ve Managed to create a schema for a single table. We need to automate this now to do any table. Let’s create this now and try it out

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 61 62 63 64 65 66 67 68 69 70 71 72 73 74 |

CREATE OR ALTER PROCEDURE #CreateJSONArrayInArraySchemaFromTable /** Summary: > This creates a JSON schema from a table that matches the JSON you will get from doing a classic FOR JSON select * statemenmt on the entire table Author: phil factor Date: 4/12/2018 Examples: > DECLARE @Json NVARCHAR(MAX) EXECUTE #CreateJSONArrayInArraySchemaFromTable @database='pubs', @Schema ='dbo', @table= 'authors',@JSONSchema=@json OUTPUT PRINT @Json SELECT @json='' EXECUTE #CreateJSONArrayInArraySchemaFromTable @TableSpec='pubs.dbo.authors',@JSONSchema=@json OUTPUT PRINT @Json Returns: > nothing **/ (@database sysname=null, @Schema sysname=NULL, @table sysname=null, @Tablespec sysname=NULL,@jsonSchema NVARCHAR(MAX) output) --WITH ENCRYPTION|SCHEMABINDING, ... AS DECLARE @required NVARCHAR(max), @NoColumns INT, @properties NVARCHAR(max); IF Coalesce(@table,@Tablespec) IS NULL OR Coalesce(@schema,@Tablespec) IS NULL RAISERROR ('{"error":"must have the table details"}',16,1) IF @table is NULL SELECT @table=ParseName(@Tablespec,1) IF @Schema is NULL SELECT @schema=ParseName(@Tablespec,2) IF @Database is NULL SELECT @Database=Coalesce(ParseName(@Tablespec,3),Db_Name()) IF @table IS NULL OR @schema IS NULL OR @database IS NULL RAISERROR ('{"error":"must have the table details"}',16,1) DECLARE @SourceCode NVARCHAR(255)= 'SELECT * FROM '+QuoteName(@database)+ '.'+ QuoteName(@Schema)+'.'+QuoteName(@table) SELECT @jsonschema= (SELECT 'https://mml.uk/jsonSchema/'+@table+'.json' AS id,--just a unique reference to a real place 'http://json-schema.org/draft-04/schema#' AS [schema],--the minimum standard you want to use 'Array (rows) within an array (table) of'+@Schema+'.'+@table AS description, 'array' AS type, 'array' AS [items.type], ( SELECT f.name, --the individual columns as an array of objects with standard and custom fields CASE WHEN f.is_nullable = 1 THEN Json_Query('["null","'+f.type+'"]') -- must be array! ELSE Json_Query('["'+f.type+'"]') END AS [type],--must be an array! f.SQLtype, f.is_nullable, Coalesce(EP.value,'') AS description FROM (--the basic columns we need. (the type is used more than once in the outer query) SELECT r.name, r.system_type_name AS sqltype, r.source_column, r.is_nullable, CASE WHEN r.system_type_id IN (58,52,56,58,59,60,62,106,108,122,127) THEN 'number' WHEN system_type_id =104 THEN 'boolean' ELSE 'string' END AS type, Object_Id(r.source_database + '.' + r.source_schema + '.' + r.source_table) AS table_id FROM sys.dm_exec_describe_first_result_set (@SourceCode, NULL, 1) AS r ) AS f LEFT OUTER JOIN sys.extended_properties AS EP -- to get the extended properties ON EP.major_id = f.table_id AND EP.minor_id = ColumnProperty(f.table_id, f.source_column, 'ColumnId') AND EP.name = 'MS_Description' AND EP.class = 1 FOR JSON PATH ) AS [items.items] FOR JSON PATH, WITHOUT_ARRAY_WRAPPER); IF(IsJson(@jsonschema)=0) RAISERROR ('invalid schema "%s"',16,1,@jsonSchema) IF @jsonschema IS NULL RAISERROR ('Null schema',16,1) GO |

We can now try this out by writing the schemas of all the tables in AdventureWorks.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 |

USE Adventureworks2016 DECLARE @ourPath sysname = 'C:\data\RawData\JsonSchema\AdventureWorks\'; Declare @command NVARCHAR(4000)= ' print ''Creating JSON file for ?'' DECLARE @Json NVARCHAR(MAX) EXECUTE #CreateJSONArrayInArraySchemaFromTable @TableSpec=''?'',@JSONSchema=@json OUTPUT CREATE TABLE ##myTemp (Bulkcol nvarchar(MAX)) INSERT INTO ##myTemp (Bulkcol) SELECT @JSON print ''Writing out ?'' EXECUTE xp_cmdshell ''bcp ##myTemp out '+@ourPath+'?.JSON -c -C 65001 -T'' DROP TABLE ##myTemp' EXECUTE sp_msforeachtable @command GO |

It takes 11 seconds to do them all on my machine. You aren’t limited to tables, you can do any SQL Query.

Conclusions

We seem to have used a lot of SQL to achieve our ends. However, we now have data that we can validate outside the database, share with JSON-friendly applications or import into JSON-savvy databases. We have a version of a JSON tabular document that is economical in storage.

The next stage is to use it to build a database. I’ve described elsewhere how to do it with the more conventional Object-within-array JSON document and schema but not in array-in-array JSON. That’s next.

SourceCode

The source to this article and various blogs on the topic of importing, validating and exporting both JSON Schema and data in SQL Server is on github here.

Load comments