Virtualization is one of the hottest trends in IT today. Virtualization allows administrators to reduce costs by making better use of underused server hardware. Often, virtualization has the added benefit of reducing server licensing costs as well. For all of its benefits, though, there are some downsides to virtualizing your servers. Capacity planning and scalability become much more important in virtualized environments, and yet measuring resource consumption becomes far more difficult. In this article series, I want to talk about server virtualization as it relates to Exchange Server.

Microsoft’s Support Policy

Before I even get started, I want to address the issue of whether or not Microsoft supports virtualizing Exchange Server. There seems to be a lot of confusion around the issue, but the official word is that Microsoft does support Exchange Server virtualization, with some heavy stipulations.

I don’t want to get into all of the stipulations, but I will tell you that Exchange Server 2003 is only supported if you use Microsoft’s Virtual Server as the virtualization platform. Microsoft does not support running Exchange Server 2003 in a Hyper-V environment.

I could never, with a clear conscience, tell you to deploy an unsupported configuration. I will admit, however, that I virtualized my production Exchange 2003 servers using Hyper-V before Microsoft announced that the configuration would not be supported. To this day, I am still using this configuration, and it has been performing flawlessly.

Exchange Server 2007 can be virtualized using any hypervisor-based virtualization platform that has been validated under Microsoft’s Windows Server Virtualization Validation Program (http://go.microsoft.com/fwlink/?LinkId=125375), including Hyper-V and VMware ESX. There are a number of stipulations that you need to be aware of, though. These stipulations are all outlined in Microsoft’s official support policy, which you can read at: http://technet.microsoft.com/en-us/library/cc794548.aspx

Server Roles

One of the biggest considerations that you must take into account when you are planning to virtualize Exchange Server 2007 is which server roles you want to virtualize. Microsoft’s official support policy states that they support the virtualization of all of Exchange Server’s roles, except for the Unified Messaging role. As such, you would think that figuring out which roles are appropriate for virtualization would be relatively simple. Even so, this is a fiercely debated topic, and everyone seems to have their own opinion about the right way to do things.

Since I can’t possibly tell you which roles you should virtualize without being ridiculed by the technical editors and receiving a flood of e-mail from readers, I am simply going to explain the advantages and the disadvantages of virtualizing each role, and let you make up your own mind as to whether or not virtualizing the various roles is appropriate for your organization.

The Mailbox Server Role

The mailbox server role is probably the role that receives the most attention in the virtualization debate. Opponents of virtualizing mailbox servers argue that mailbox servers make poor virtualization candidates because they are CPU and I/O intensive, and because the virtualization infrastructure adds to the server’s CPU overhead.

While it is true that mailbox servers are I/O-intensive, that may not be a deal breaker when it comes to virtualization. Many larger organizations get around the I/O issue by storing the virtual hard drives used by the virtualized mailbox server on a SAN. Smaller organizations may be able to get around the I/O issue by using SCSI pass-through disks to host the mailbox database and the transaction logs.

I have seen several different benchmark tests that show that while the abstraction layers used by the virtualization platform do place an increased load on the CPU, CPU utilization only goes up by about 5% (assuming that Hyper-V or VMWare ESX is being used).

The whole point of using virtualization is to make better use of underutilized hardware resources. Therefore, if your mailbox server is already running near capacity, then virtualizing it probably isn’t such a good idea. Even in those types of situations though, I have seen organizations implement CCR, and use a virtual machine to host the passive node, while the active node continues to run on a dedicated server.

The Hub Transport Role

The Hub Transport Server role is one of the most commonly virtualized Exchange 2007 roles. Even so, it is important to remember the critical nature of this server role. All messages pass through the hub transport server, and if the server crashes then mail flow stops.

When you are determining whether or not you want to virtualize a hub transport server, it is important to make sure that you have some sort of fault tolerance in place. You should also use performance monitoring and capacity planning to ensure that the transport pipeline is not going to become a bottleneck once you virtualize the hub transport server.

The Client Access Server Role

Whether or not the CAS server should be virtualized depends on a number of factors. For instance, many organizations choose to use CAS as an Exchange front-end that allows users to access their mailboxes through OWA. If this is how you are using your CAS server, then you need to consider the number of requests that the CAS server is servicing. Some organizations receive so much OWA traffic that they need multiple front-end servers just to deal with it all. If your organization receives that much traffic, then you are probably better off not virtualizing your CAS servers.

On the other hand, if you only use CAS because it is a required role, or if your users don’t generate an excessive number of OWA requests, then your CAS server might be an ideal candidate for virtualization. The only way to know for sure is to use the Performance Monitor to find out how many of your server’s resources are being consumed. Performance monitoring is important because other functions such as legacy protocol proxying (POP3 / IMAP), Outlook Anywhere (RPC over HTTP), and mobile device support can also place a heavy workload on a CAS server.

The Edge Transport Server Role

The edge transport server is one of the more controversial roles when it comes to virtualization. Many administrators are reluctant to virtualize their edge transport servers because they fear an escape attack. An escape attack is an attack in which a hacker manages to somehow break out of the confines of a virtual machine and take control of the entire server. To the best of my knowledge though, nobody has ever successfully performed an escape attack.

If you are concerned that someone might one day figure out how to perform an escape attack, but you still want to virtualize your edge transport server, then my advice would be to carefully consider which other virtual servers you host on the same physical server. The edge transport server is designed to sit in the DMZ, so if you are concerned about escape attacks, why not reserve the physical server for hosting only virtual machines that are intended for use in the DMZ? That way, in the unlikely event that an escape attack ever does occur, you don’t have to worry about the physical server containing any data from your internal network.

The Unified Messaging Server Role

As I stated earlier, the Unified Messaging role is the only Exchange 2007 role that Microsoft does not support in a virtualized environment. Even so, I have known of a couple of very small organizations that have virtualized their Unified Messaging servers, and it seems to work for them. Personally, I have to side with Microsoft on this one and say that just because you can virtualize a unified messaging server, doesn’t mean that you should. Unified Messaging tends to be very CPU intensive, and I think that is probably the reason why Microsoft doesn’t want you to virtualize it.

Resource Consumption

Capacity planning is an important part of any Exchange Server deployment, but it becomes even more important when you bring virtualization into the picture, because virtualizing implies that your Exchange Server is only going to be able to use a fraction of the server’s overall resources, and that the virtualized Exchange Server is going to have to compete with other virtual machines for a finite set of physical server resources. The problem with this is that it can be very tricky to figure out just how much of a server’s resources your virtual Exchange Server is actually using.
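To make the competition for physical resources a little more concrete, here is a rough capacity-planning sketch in Python. It assumes that you can estimate, for each virtual machine, how many virtual processors it has and how busy those virtual processors are expected to be. The VirtualMachine class, the sample utilization figures, and the 20 percent headroom default are all hypothetical, and the calculation deliberately ignores hypervisor overhead.

```python
from dataclasses import dataclass


@dataclass
class VirtualMachine:
    name: str
    virtual_processors: int
    # Estimated busy fraction (0.0 to 1.0) of each assigned virtual processor.
    expected_utilization: float


def core_demand(vms: list[VirtualMachine]) -> float:
    """Aggregate CPU demand expressed in physical-core equivalents.

    Ignores hypervisor overhead, so the real figure will be somewhat higher.
    """
    return sum(vm.virtual_processors * vm.expected_utilization for vm in vms)


def fits_on_host(vms: list[VirtualMachine], physical_cores: int, headroom: float = 0.2) -> bool:
    """True if the estimated demand leaves at least `headroom` of the host's cores free."""
    return core_demand(vms) <= physical_cores * (1.0 - headroom)


# Hypothetical estimates loosely modeled on a small lab host.
lab = [
    VirtualMachine("Domain controller", 1, 0.05),
    VirtualMachine("OCS 2007", 2, 0.30),
    VirtualMachine("Exchange 2007 mailbox", 2, 0.60),
]
print(round(core_demand(lab), 2))             # 1.85 core-equivalents
print(fits_on_host(lab, physical_cores=4))    # True: 1.85 <= 3.2
```

The numbers are only as good as the utilization estimates that go into them, which is exactly why the measurement problems described below matter.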

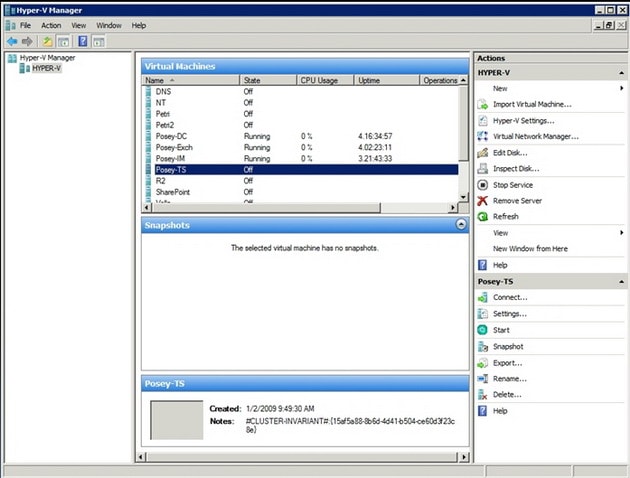

To show you what I am talking about, I want to show you three screen captures. First, take a look at the screen capture that is shown in Figure A. This screen capture shows a lab machine that is running three virtual servers. One of these virtual servers is a domain controller, another is running OCS 2007, and the third is running an Exchange 2007 mailbox server (this is just a sample configuration, and is by no means a recommendation).

Figure A

This lab machine is currently running three virtual servers.

If you look at the figure, you will notice that all three machines are running, and have been for a few days straight. You will also notice that the Hyper-V Manager reports each server as using 0% of the server’s CPU resources. Granted, all three of these machines are basically idle, but there is no way that an Exchange 2007 mailbox server is not consuming at least some CPU resources.

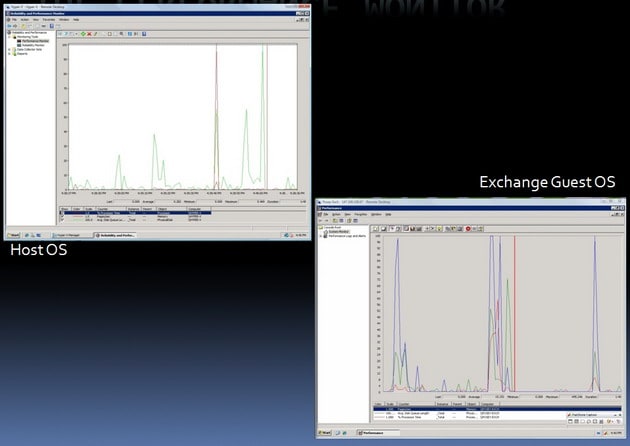

The second screen capture that I want to show you is in Figure B. This screen capture was taken from the same server, but this time I put a load on my Exchange 2007 mailbox server. While doing so, I opened up two instances of the Performance Monitor. One instance is running within the host operating system, and the other is running within the virtual Exchange server. Even though these two Performance Monitor instances were captured at exactly the same time, they paint a completely different picture of how the server’s resources are being used. I will explain why this is the case later on, but you will notice that the host operating system reports much lower resource usage than the guest operating system does, even though there are a couple of other guest operating systems that are also running on the host.

Figure B

The host operating system and the guest operating systems have completely different ideas about how resources are being used.

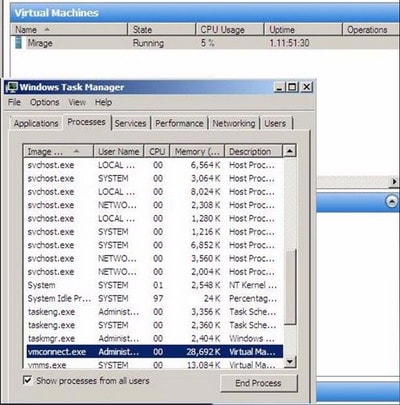

The last screen capture that I wanted to show you is the one shown in Figure C. What I wanted to show you in this screen capture is that even the Hyper-V Manager and the Windows Task Manager, which are both running within the host operating system, can’t agree on how hard the CPU is working. The Hyper-V Manager indicates that five percent of the CPU resources are being used, while the Windows Task Manager implies that three percent of the CPU resources are in use (97% of the CPU resources are free).

The Anatomy of a Virtual Machine

So why do these discrepancies exist? In order to answer that question, you need to understand a little bit about the anatomy of a virtual machine. Before I get started though, I need to point out that virtual machines are implemented differently depending on which virtualization product is being used. For the sake of this discussion, I am going to be talking about Microsoft’s Hyper-V.

As you probably know, Hyper-V (like many other virtualization products) is classified as a hypervisor. Hyper-V is what is known as a type 1 hypervisor. A type 1 hypervisor runs directly on the server’s hardware at the bare metal layer, beneath the operating systems, as opposed to a type 2 hypervisor, which runs on top of a host operating system.

Hyper-V isn’t to be confused with hosted (type 2) virtualization products such as Microsoft’s Virtual PC or Virtual Server. Such products typically pass all of their hardware requests through the host operating system, which sometimes results in poor overall performance.

In contrast, although a Hyper-V server still runs a host operating system (which must be a 64-bit version of Windows Server 2008), Hyper-V is only minimally dependent on it. The host operating system connects to each virtual machine through a worker process. This process is used for keeping track of the virtual machine’s heartbeat, taking snapshots of the virtual machine, emulating hardware, and similar tasks.

Now that I have explained some of the differences between a type 1 and a type 2 hypervisor, I want to talk about how the virtual machines function within Hyper-V. Hyper-V is designed to keep all of the virtual machines isolated from each other. It accomplishes this isolation through the use of partitions. A partition is a logical unit of isolation that is supported by the hypervisor.

Hyper-V uses two different types of partitions. The parent partition (which is sometimes called the root partition) is the partition in which the host operating system runs. The parent partition runs the virtualization stack, and it has direct access to the server’s hardware.

The other type of partition that is used by Hyper-V is a child partition. Each of the guest operating systems resides in a dedicated child partition. Child partitions do not have direct hardware access, but there is always at least one virtual processor and a dedicated memory area that is set aside for each child partition.

Initially, Hyper-V treats each of the server’s processor cores as a virtual processor. Therefore, a server with two quad-core processors would have eight virtual processors. It is important to keep in mind, though, that Hyper-V does not force a one-to-one mapping of virtual processors to CPU cores. You can allocate more virtual processors than you have CPU cores, although Microsoft recommends that you do not exceed a two-to-one ratio.
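A quick way to check a host against the two-to-one guideline is to add up the virtual processors allocated to every virtual machine and divide by the number of physical CPU cores. The Python sketch below does just that; the function names and the sample allocations are illustrative assumptions, not part of any Microsoft tool.

```python
def vp_to_core_ratio(vps_per_vm: list[int], physical_cores: int) -> float:
    """Total virtual processors allocated across all VMs divided by physical cores."""
    if physical_cores <= 0:
        raise ValueError("physical_cores must be positive")
    return sum(vps_per_vm) / physical_cores


def within_recommended_ratio(vps_per_vm: list[int], physical_cores: int,
                             max_ratio: float = 2.0) -> bool:
    """True if the allocation stays at or below the recommended ratio (2:1 by default)."""
    return vp_to_core_ratio(vps_per_vm, physical_cores) <= max_ratio


# Hypothetical host with two quad-core processors (8 cores).
print(vp_to_core_ratio([4, 4, 2, 2], 8))             # 1.5
print(within_recommended_ratio([4, 4, 4, 4, 2], 8))  # False (18 / 8 = 2.25)
```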

Hyper-V manages memory differently from the way that it would be managed in a non-virtualized environment. The hypervisor maps and manages the memory addresses for each child partition, translating the addresses that a guest operating system believes it is using into actual physical memory addresses on the server. In a non-virtualized environment, low-level memory mapping is handled primarily at the hardware level (although the Windows operating system does perform some higher-level memory mapping of its own).

Earlier I mentioned that hypervisor-based virtualization products tend to be more efficient than hosted virtualization products, which pass all hardware calls through the host operating system. A big part of this efficiency is related to the root partition’s ability to communicate directly with the server hardware. As you will recall, though, the guest operating systems reside in child partitions, which do not have the ability to talk directly to the server hardware. So what keeps the guest operating systems from suffering from poor performance?

There are several different mechanisms in place that help to improve the child partition’s efficiency, but one of the primary things that helps with guest operating system performance is something called enlightenment. If you have ever installed a guest operating system in Hyper-V, then you know that one of the first things that you normally do after the operating system has been installed is to install the integration services. Technically, the integration services are not a requirement, and it’s a good thing that they aren’t. Many non-Windows operating systems, as well as most of the older versions of Windows, don’t support the integration services.

The first thing that most administrators notice after installing a guest operating system is that they can’t access the network until the integration services have been installed. This is where enlightenment comes into play. After the integration services have been installed, the guest operating system is said to be enlightened. What this really means is that the guest operating system becomes aware that it is running in a virtualized environment, and as such is able to access something called the VMBus. This makes it possible for the guest operating system to access hardware such as a network adapter or SCSI drives without having to fall back on an emulation layer. Incidentally, it is possible to access the network from a non-enlightened partition; you just have to use an emulated network adapter. In fact, I recently virtualized a server that was running Windows NT 4.0, and it is able to access the network by using the partition’s emulation layer.

So let’s get back to my original question. Why does the host operating system disagree with the guest operating systems about the amount of resources that are being used? The answer lies in the way that Hyper-V uses partitioning. Each partition is a completely isolated set of logical resources, and the host operating system lacks the ability to look inside of individual partitions to see how resources are truly being consumed.

At first this would seem to be irrelevant. After all, CPU usage is CPU usage, right? Remember though, that child partitions use virtual processors instead of communicating directly with the physical processor. It is the hypervisor (not the host operating system) that is responsible for scheduling virtual processor threads on physical CPU cores.

Ever since the days of Windows NT, it has been possible to determine how much CPU time is being consumed by monitoring the \Processor(*)\% Processor Time counter in the Performance Monitor. When you bring Hyper-V into the equation though, this counter becomes extremely unreliable (from the standpoint of the system as a whole), as you have already seen.

If you monitor the % Processor Time counter from within a guest operating system, you are seeing how hard the virtual processors are working, but in a view that is relative to that virtual machine, not the server as a whole. If you watch this counter on the host operating system, the Performance Monitor is not aware of CPU cycles related to the hypervisor.

As you will recall, the screen captures that I showed you earlier generally reflected extremely low CPU utilization for the virtual machines. The fact that the host operating system isn’t aware of CPU cycles related to hypervisor activity certainly accounts for at least some of that, but there are a couple of other reasons why CPU utilization appears to be so low.

First, Hyper-V allows you to decide how many virtual processors you want to allocate to each virtual server. This can be a limiting factor in and of itself. For instance, if a server has four CPU cores, and you only allocate one virtual processor to a child partition, then that partition can never consume more than 25% of the server’s total CPU resources regardless of how the partition’s CPU usage is actually reported.
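The 25 percent figure generalizes into a simple conversion. Assuming that each virtual processor can use at most one physical core, and ignoring hypervisor overhead, a guest’s overall % Processor Time (which the _Total instance averages across the guest’s virtual processors) can be translated into an approximate share of the host’s total CPU capacity. The function below is a hypothetical helper written for illustration, not a Performance Monitor feature.

```python
def host_share_percent(guest_percent: float, virtual_processors: int,
                       physical_cores: int) -> float:
    """Approximate share of the host's total CPU capacity used by one VM.

    guest_percent: the guest's \\Processor(_Total)\\% Processor Time, i.e. the
    average across the guest's virtual processors.
    Assumes each virtual processor uses at most one physical core and ignores
    hypervisor overhead.
    """
    if not 0.0 <= guest_percent <= 100.0:
        raise ValueError("guest_percent must be between 0 and 100")
    if virtual_processors < 1 or physical_cores < 1:
        raise ValueError("need at least one virtual processor and one core")
    share = guest_percent * virtual_processors / physical_cores
    # A single VM can never use more than the whole host.
    return min(share, 100.0)


# One virtual processor on a four-core host: even 100% in the guest is 25% of the host.
print(host_share_percent(100.0, virtual_processors=1, physical_cores=4))  # 25.0
# A two-processor guest reporting 80% on an eight-core host.
print(host_share_percent(80.0, virtual_processors=2, physical_cores=8))   # 20.0
```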

Things can get a little bit strange when you start trying to allocate more virtual processors than the number of physical CPU cores that the server has. For instance, suppose that you have a server with four CPU cores, and you allocate eight virtual processors. In this type of situation, if all of the virtual processors are busy, the virtual machines will try to use double the amount of CPU time that is actually available. Since this is impossible, CPU time is allocated to each of the virtual processors in a round-robin fashion. When this occurs, CPU utilization is reported as being very low, because the workload is being spread across so many (virtual) processors.
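The round-robin behavior can be illustrated with a toy scheduler. This Python sketch assumes that every virtual processor is busy all the time and that, in each time slice, the physical cores are simply handed to the next virtual processors in line. Hyper-V’s real scheduler is considerably more sophisticated, so treat this only as an illustration of the arithmetic.

```python
from collections import Counter
from itertools import cycle


def round_robin_slices(virtual_processors: int, physical_cores: int,
                       time_slices: int) -> Counter:
    """Toy model: count how many time slices each virtual processor gets a core.

    Assumes every virtual processor is always runnable and that the cores are
    handed out to virtual processors in strict round-robin order.
    """
    runs: Counter = Counter()
    next_vp = cycle(range(virtual_processors))
    cores_per_slice = min(physical_cores, virtual_processors)
    for _ in range(time_slices):
        for _ in range(cores_per_slice):
            runs[next(next_vp)] += 1
    return runs


slices = 100
runs = round_robin_slices(virtual_processors=8, physical_cores=4, time_slices=slices)
for vp, count in sorted(runs.items()):
    print(f"virtual processor {vp}: ran in {count / slices:.0%} of time slices")
```

With eight busy virtual processors sharing four cores, each virtual processor gets a core in only half of the time slices, so each one is receiving at most half of a physical core.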

In reality, the virtual machine’s performance will be worse than it would have been had fewer virtual processors been allocated. Allocating fewer virtual processors tends to cause CPU utilization to be reported as higher, but performance ultimately improves, because there is a significant amount of overhead involved in distributing the workload across all of those virtual processors and then allocating CPU time to them.

Conclusion

As you can see, the values that are reported by the Performance Monitor and by other performance measuring mechanisms vary considerably depending on where the measurement was taken. In Part 2, I will show you why this is the case, and how you can more accurately determine what system resources a virtual Exchange Server is actually consuming.
