Artificial Intelligence (AI) has been in the limelight in recent years, with self-driving cars as its most famous application. Although experiments with AI began in the 1950s, recent advances in machine learning show that it is emerging as a disruptive technology. Companies in a variety of domains are looking for ways to use AI agents or bots to automate tasks and improve productivity. Software testing is no different. A wave of automation has already hit software testing in the form of test automation.

The automation of quality assurance and software testing has been around for over 15 years. Test automation has evolved from automating test execution to using model-based testing tools to generate test cases. This evolution was primarily triggered by a shift in focus from reducing testing timelines to increasing test coverage and the effectiveness of testing activities. However, the level of automation in software testing processes is still very low, for two main reasons:

- Difficulty developing scalable automation that keeps pace with applications that change with every release

- Challenges in architecting a reliable, reusable test environment with relevant test data

This is going to change with the adoption of AI, analytics, and machine learning in testing. This article will explain what AI is, dispel some widespread myths, and establish some ground realities regarding its current capabilities.

What is AI?

Artificial Intelligence is an application of individual technologies working in tandem that lets a computer perform actions usually reserved for humans. Common applications of AI in our daily lives include speech recognition and translation in voice assistants like Siri and Alexa, and autonomous vehicles, including self-driving cars as well as spacecraft. When you consider these applications, you will notice that each of them is a highly specialized function. The primary limitation of the technology right now is that it does not generalize: no AI implementation yet exists that can do all of the things above the way a human can.

Early in AI's development, scientists and researchers understood that they did not need to emulate the human mind completely to build autonomous learning or make computers think. Instead, they have worked on ways to model how we perceive inputs and act on them. This makes sense, because the hardware our brains run on is different from the hardware in our computers. It is also why we keep hearing analogous terms like neural nets, which are modeled on our understanding of how neurons in the brain work. However, researchers will tell you that this model is oversimplified and may not match reality at all. This shows that we are still far from developing the likes of Skynet (for the Terminator fans) or Ultron (from the Marvel universe), which should put to rest doomsayers' predictions that AI is an existential risk to humanity. For now, we are safe, unless we give an AI access to our nuclear codes.

What Makes AI Possible?

In the previous section, I mentioned individual technologies that work together to make AI possible. Now I'll explain what these technologies are. Since the 1950s, AI has been explored in various forms by fields like computational linguistics for natural language processing, control theory to find the best possible actions, and machine evolution or genetic algorithms to find solutions to problems. However, with the advent of the information age and the falling cost of computing power and memory, all these technologies have found common ground in machine learning.

Machine learning is essentially the way in which we make computers learn and perceive the world. There are three main ways computers learn:

- Supervised Learning

- Unsupervised Learning

- Reinforcement Learning

Supervised Learning

In supervised learning, a computer is provided with labeled data so that it can learn the features that distinguish each label. For example, to make the computer distinguish you from your partner, you need to provide a set of photos, each clearly marked as you or your partner. This labeled input is then fed to an algorithm that creates a model. The computer later uses this model to decide whether new photos show you or your partner. This is a simple case of classification. Another example is using insurance data to predict the range of losses a company could make on an insurance product, given a set of attributes of a person. Supervised learning is thus usually used for classification or regression problems.
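The photo example can be sketched in a few lines of plain Python. This is a minimal illustration, not how a production image classifier works: it assumes each photo has already been reduced to a two-number feature vector (the values and the labels "you" and "partner" are made up), and it uses a nearest-centroid classifier, one of the simplest supervised methods. Training averages the labeled examples for each label into a model; prediction assigns a new example to the closest average.

```python
from math import dist

def train_centroids(samples):
    """Learn one centroid (average feature vector) per label from labeled samples."""
    sums, counts = {}, {}
    for features, label in samples:
        if label not in sums:
            sums[label] = [0.0] * len(features)
            counts[label] = 0
        sums[label] = [s + f for s, f in zip(sums[label], features)]
        counts[label] += 1
    return {label: [s / counts[label] for s in total] for label, total in sums.items()}

def predict(model, features):
    """Classify a new sample by the label whose centroid is closest."""
    return min(model, key=lambda label: dist(model[label], features))

# Hypothetical labeled training data: (feature vector, label).
training = [
    ([0.9, 0.2], "you"),
    ([0.8, 0.3], "you"),
    ([0.2, 0.9], "partner"),
    ([0.3, 0.7], "partner"),
]

model = train_centroids(training)
print(predict(model, [0.85, 0.25]))  # prints "you"
```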

Unsupervised Learning

Unlike supervised learning, where labeled data is available, unsupervised learning tries to find patterns in the input on its own. This method of learning is used in many applications, such as clustering (grouping similar objects together and finding the points of separation between distinct ones), association (finding relations between objects), and anomaly detection (detecting unusual behavior in the normal operation of a system).
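Clustering, the first application listed above, can be illustrated with k-means, a common unsupervised algorithm. The sketch below is a minimal version under simple assumptions: the points are made-up 2-D data, the number of clusters k is chosen up front, and the first k points serve as starting centroids so that the result is deterministic. No labels are given; the algorithm discovers the two groups on its own.

```python
from math import dist

def kmeans(points, k, iterations=10):
    """Group unlabeled points into k clusters using Lloyd's algorithm."""
    # Deterministic start: the first k points are the initial centroids.
    centroids = [list(p) for p in points[:k]]
    clusters = []
    for _ in range(iterations):
        # Assignment step: attach every point to its nearest centroid.
        clusters = [[] for _ in range(k)]
        for p in points:
            nearest = min(range(k), key=lambda i: dist(centroids[i], p))
            clusters[nearest].append(p)
        # Update step: move each centroid to the mean of its members.
        for i, members in enumerate(clusters):
            if members:
                centroids[i] = [sum(axis) / len(members) for axis in zip(*members)]
    return centroids, clusters

# Made-up, unlabeled 2-D data with two visible groups.
points = [(1.0, 1.0), (9.0, 9.0), (1.5, 2.0), (8.0, 8.5), (0.5, 1.2), (9.5, 8.0)]
centroids, clusters = kmeans(points, k=2)
for centroid, members in zip(centroids, clusters):
    print([round(c, 2) for c in centroid], members)
```

Real implementations typically use smarter initialization and stop once assignments no longer change; the fixed iteration count just keeps the sketch short.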

Reinforcement Learning

Reinforcement learning is the method most closely tied to making AI agents possible. In this method of learning, the AI agent explores its environment and finds an optimal way to accomplish its goals. It works iteratively: it performs an action, receives feedback for that action, and evaluates the feedback to decide whether the action was positive or negative. Reinforcement learning can also use models created with supervised and unsupervised learning to evaluate or perform actions.
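The act, receive feedback, evaluate loop can be seen in tabular Q-learning, a classic reinforcement learning algorithm. The sketch below is a toy under stated assumptions: a made-up corridor of five states in which the agent starts at state 0 and receives a reward of 1 only on reaching state 4. The learning rate, discount factor, exploration rate, and random seed are arbitrary illustrative choices. After training, the greedy policy should move right, toward the goal, from every state.

```python
import random

N_STATES = 5           # corridor states 0..4
GOAL = N_STATES - 1    # reaching state 4 ends the episode with reward 1
MOVES = (-1, +1)       # action 0 = step left, action 1 = step right

def step(state, action):
    """Toy environment: move along the corridor, clamped at the left wall."""
    next_state = min(max(state + MOVES[action], 0), GOAL)
    done = next_state == GOAL
    return next_state, (1.0 if done else 0.0), done

def q_learning(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]  # q[state][action] value estimates
    for _ in range(episodes):
        state = 0
        for _ in range(100):  # cap the episode length
            # Act: explore at random (or on a tie), otherwise exploit the best estimate.
            if rng.random() < epsilon or q[state][0] == q[state][1]:
                action = rng.randrange(2)
            else:
                action = 0 if q[state][0] > q[state][1] else 1
            # Receive feedback from the environment.
            next_state, reward, done = step(state, action)
            # Evaluate: move the estimate toward reward + discounted future value.
            target = reward if done else reward + gamma * max(q[next_state])
            q[state][action] += alpha * (target - q[state][action])
            state = next_state
            if done:
                break
    return q

q = q_learning()
policy = ["right" if q[s][1] > q[s][0] else "left" for s in range(GOAL)]
print(policy)
```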

AI in Testing

Now that you know the capabilities of AI and have a better understanding of what goes into creating it, let's look at how AI is impacting software testing. AI in testing can refer to either of two things:

- AI-enabled testing

- Testing AI products and deliverables

AI will enable enterprises to transform testing into a fully self-generating, self-executing, and self-adapting activity. Although the use of AI in testing is still in its early phases in the industry, software testing tools have started adding some form of AI to their toolkits. Tools like Eggplant and TestComplete include AI features in their latest releases.

The main objectives of any QA or testing effort are to:

- Ensure end-user satisfaction

- Increase the quality of software by detecting software defects before go-live

- Reduce waste and contribute to business growth and outcomes

- Protect the corporate image and branding

When testing AI products and deliverables, a software tester can use traditional testing tools or AI-enabled testing tools. The primary objectives remain the same; however, the tester now needs a considerable understanding of how AI algorithms work in order to find new ways to break the application.

Currently, AI-enabled testing comes in three different flavors.

Predictive Analytics

Predictive analytics in software testing is the use of predictive techniques, such as object detection and identification, to perform steps in test execution. Prominent tools like Eggplant and TestComplete have introduced such features in their latest releases. You will see an example of this feature in detail later.

Robotic Automation

Robotic automation, or Robotic Process Automation (RPA), is a technique for automating repetitive processes that require no decision making. It is analogous to Excel macros applied to an entire process lifecycle rather than a single task. Components of this technique include screen scraping, macros, and workflow automation.

Cognitive Automation

Cognitive automation goes a level beyond RPA by introducing machine learning to automate phases of software testing, which gives the tool decision-making capabilities. Cognitive automation is still a work in progress, and you should expect to see more tools and products adopting this approach soon.

Now that you have gained an understanding of the capabilities of AI and its implications in testing, take a look at some examples of how testing tools are utilizing AI to empower testers.

An Example of AI in Testing

Automation tools gained prominence across the software industry as a way to reduce repetitive manual work, increase the efficiency and accuracy of test execution, and run regular checks as jobs scheduled around each release. With the arrival of AI, various tools on the market are adopting this technology; one of the most widely used is TestComplete. It can automate mobile, desktop, and web applications, and it supports the creation of smoke, functional, regression, integration, end-to-end, and unit test suites. You can record test cases or write them in one of the supported scripting languages: C#Script, C++Script, DelphiScript, JavaScript, JScript, Python, and VBScript. Recorded test cases can also be converted into scripts. Take a look at how the traditional steps in software testing are carried out in TestComplete.

Test Creation and Execution

TestComplete supports multiple approaches for creating and executing test cases. Record-and-playback lets you record step-by-step user interactions with an application and then play them back as many times as needed. Once a test case has been recorded, you can extend it as follows:

- Using statements supported by TestComplete for loops and conditions, e.g. while, if…else, and try…catch…finally

- Passing runtime parameters during the execution

- Creating variables and passing them to test scripts

- Performing different actions on the selected object, such as clicking a button, entering text, or opening and closing the application.

- Adding various property validation checkpoints such as reading and validating a label of any object, finding its location, checking whether it is enabled or disabled, etc.
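TestComplete keyword tests are built in its own editor, so the following is not TestComplete code. It is a plain-Python sketch of the ideas in the list above, assuming a hypothetical in-memory `FakeApp` class standing in for the application: a recorded sequence of steps replayed with a runtime parameter, a loop and an if…else, try…finally cleanup, and property checkpoints.

```python
class FakeApp:
    """Hypothetical stand-in for the application under test."""

    def __init__(self):
        self.is_open = False
        self.objects = {
            "nameBox": {"text": "", "enabled": True},
            "submitButton": {"text": "Submit", "enabled": True},
            "statusLabel": {"text": ""},
        }

    def open(self):
        self.is_open = True

    def close(self):
        self.is_open = False

    def enter_text(self, name, value):
        self.objects[name]["text"] = value

    def click(self, name):
        # Clicking Submit echoes the entered name into the status label.
        if name == "submitButton":
            entered = self.objects["nameBox"]["text"]
            self.objects["statusLabel"]["text"] = f"Hello, {entered}"

# A "recorded" test: steps captured once, with {user} filled in at run time.
RECORDED_STEPS = [
    ("enter_text", "nameBox", "{user}"),
    ("click", "submitButton", None),
]

def checkpoint(app, name, prop, expected):
    """Property checkpoint: does the object's property have the expected value?"""
    return app.objects[name][prop] == expected

def run_recorded_test(app, user):
    app.open()
    try:
        for action, target, value in RECORDED_STEPS:
            if action == "enter_text":
                app.enter_text(target, value.format(user=user))
            else:
                app.click(target)
        return [
            checkpoint(app, "submitButton", "enabled", True),
            checkpoint(app, "statusLabel", "text", f"Hello, {user}"),
        ]
    finally:
        app.close()  # runs even if a step raises an exception

# Iterate the same recording over several runtime parameter values.
for user in ["alice", "bob"]:
    results = run_recorded_test(FakeApp(), user)
    print(user, "PASS" if all(results) else "FAIL")
```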

Once the test cases are created and ready to be executed, the following options can be used to execute them:

- Running test cases individually using the Run Test option.

- Creating separate functions in a script file and choosing which function to run under the project suite gives the tester more flexibility.

- You can also rearrange the sequence in which the project's test cases are executed. Alternatively, you can add them to a specific folder and then decide which ones to execute.

- You can also call scripts written in any supported language from keyword tests, which provides more flexibility.

- You can also execute an entire project suite by selecting it and choosing Run Project.
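Conceptually, running a project suite means running an ordered, selectable list of test items. The sketch below models that idea in plain Python; it is not TestComplete's runner, and the item names and trivial check functions are hypothetical. One check fails on purpose so the result shows both outcomes.

```python
def check_login():
    assert "user".upper() == "USER"

def check_search():
    assert "tweets".startswith("tw")

def check_logout():
    assert 2 + 2 == 5  # deliberately failing check, to show a FAIL result

# A hypothetical "project suite": ordered (name, test function, enabled) items.
PROJECT_SUITE = [
    ("check_login", check_login, True),
    ("check_search", check_search, True),
    ("check_logout", check_logout, True),
]

def run_suite(suite, order=None):
    """Run enabled items in suite order, or only the named items in a custom order."""
    items = {name: (fn, enabled) for name, fn, enabled in suite}
    names = order if order is not None else [name for name, _, _ in suite]
    results = {}
    for name in names:
        fn, enabled = items[name]
        if not enabled:
            continue  # disabled items are skipped, like unchecked test items
        try:
            fn()
            results[name] = "PASS"
        except AssertionError:
            results[name] = "FAIL"
    return results

print(run_suite(PROJECT_SUITE))
# {'check_login': 'PASS', 'check_search': 'PASS', 'check_logout': 'FAIL'}
print(run_suite(PROJECT_SUITE, order=["check_search", "check_login"]))
```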

What’s the Role of AI?

When it comes to validating the properties of an object on the UI, TestComplete provides multiple ways to access them. For example, a button object has properties such as its enabled or disabled state, text, coordinates, Id, and class. This makes it easy to identify an object by these properties and confirm its expected behavior. However, content rendered as images or graphical charts, which are increasingly common with the proliferation of business intelligence tools and data-driven dashboards, is difficult to identify, validate, or act on automatically. TestComplete version 12.60 changed this by addressing the issue with an API-driven optical character recognition (OCR) service.
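To see why charts are hard for property-based identification, here is a plain-Python sketch that assumes a hypothetical snapshot of a UI object tree in which each object is a dictionary of properties. Buttons can be found and checked by their properties, but a chart drawn as pixels exposes no text property for the usernames on it, so a property search for that text finds nothing. This is the gap OCR fills. The tree and search function are illustrative, not TestComplete's object model.

```python
# Hypothetical snapshot of a UI object tree; each object is a dict of properties.
UI_TREE = {
    "class": "Window", "id": "main", "children": [
        {"class": "Button", "id": "runBtn", "text": "Run", "enabled": True,
         "x": 10, "y": 20, "children": []},
        {"class": "Button", "id": "stopBtn", "text": "Stop", "enabled": False,
         "x": 80, "y": 20, "children": []},
        # A chart rendered as an image: its labels are pixels, not properties.
        {"class": "Canvas", "id": "tweetChart", "children": []},
    ],
}

def find_object(node, props):
    """Depth-first search for the first object whose properties all match."""
    if all(node.get(key) == value for key, value in props.items()):
        return node
    for child in node.get("children", []):
        found = find_object(child, props)
        if found is not None:
            return found
    return None

stop_button = find_object(UI_TREE, {"class": "Button", "text": "Stop"})
print(stop_button["id"], stop_button["enabled"])   # prints "stopBtn False"
print(find_object(UI_TREE, {"text": "datakmart"}))  # prints "None": no text property
```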

There are certain prerequisites for using this option. First, enable the OCR plugin by installing the extension under File > Install Extension. Second, because the feature uses the ocr.api.dev.smartbear.com web service, your firewall and proxy settings must allow access to that service.

There are two main ways in which this feature can be utilized:

- Keyword tests: in keyword tests, you use two operations, OCR Action and OCR Checkpoint.

- Script tests: in script tests, you access the OCR object to invoke its methods and properties.

I’ll demonstrate the power of the new service with the help of two testing scenarios below.

Testing a Dashboard

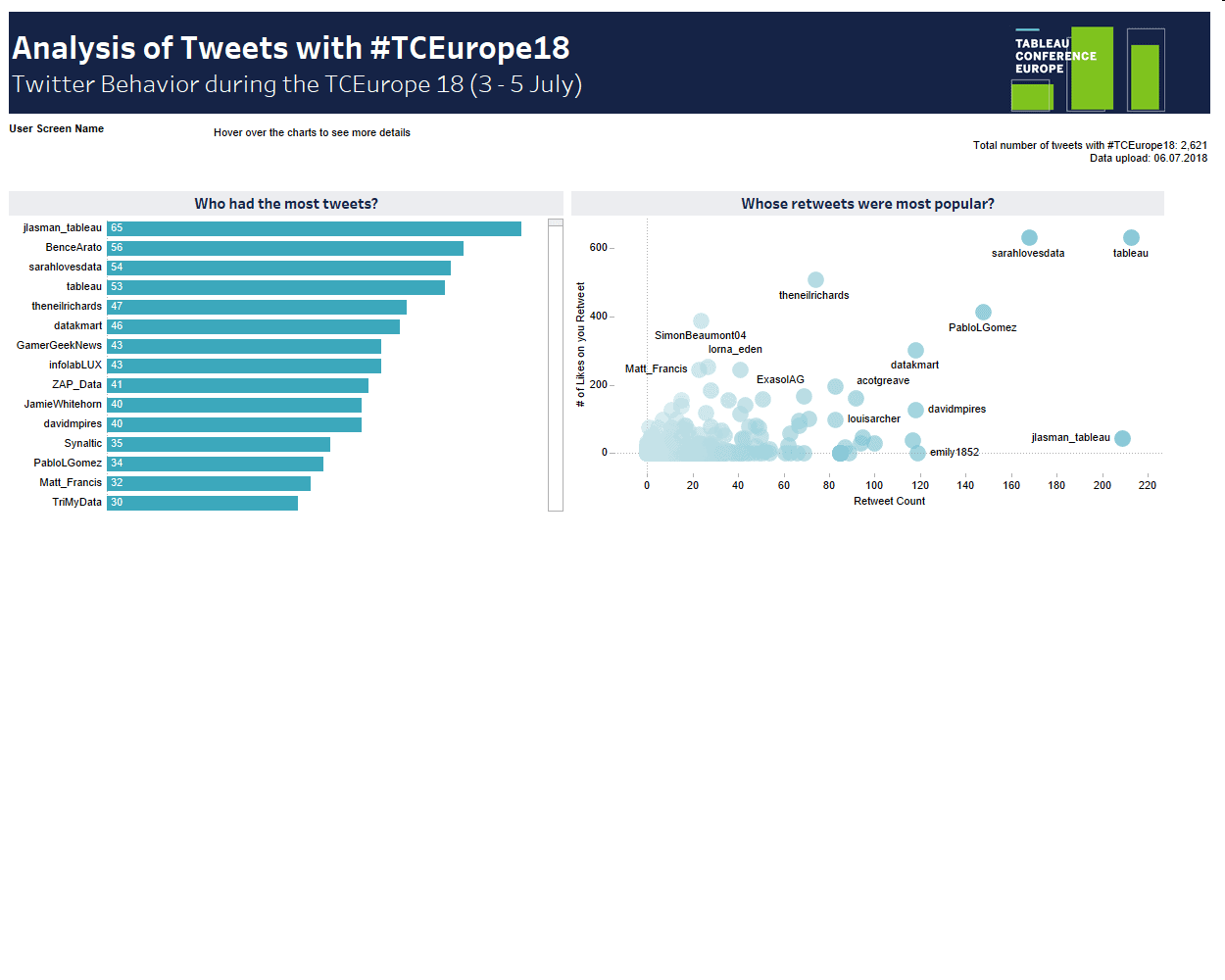

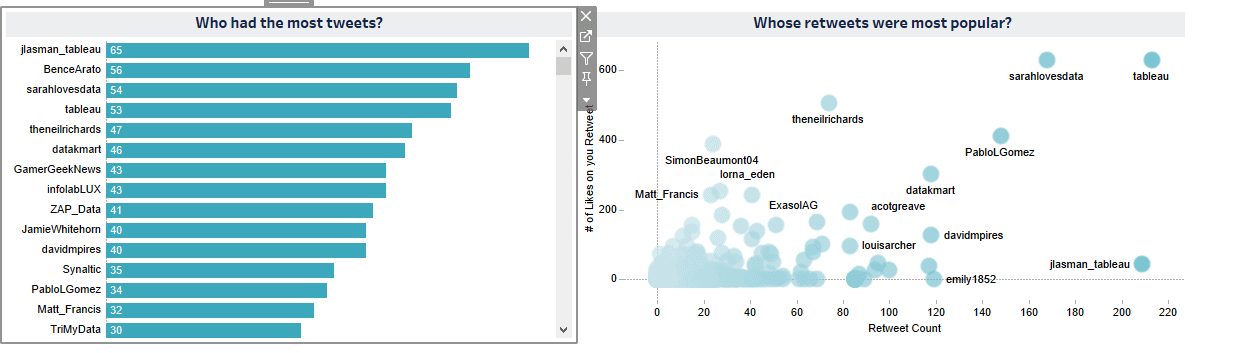

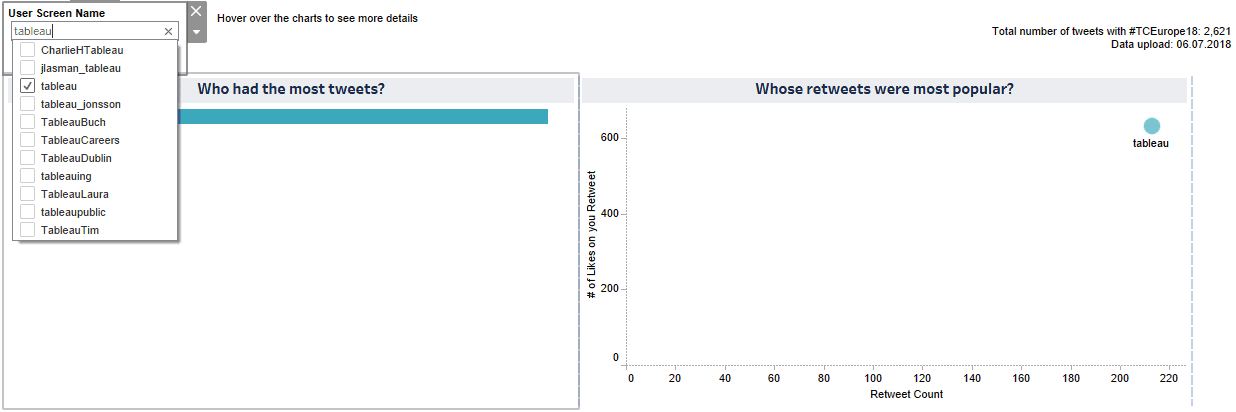

Application Description: A Tableau dashboard contains an analysis of tweets with the hashtag #TCEurope18. It contains three elements (Figure 1.1):

- Textbox named User Screen Name

- Horizontal bar chart “Who had the most tweets?”

- Scatter plot “Whose retweets were more popular?”

You can filter the data using the User Screen Name filter textbox. When you filter data from the textbox, the horizontal bar chart Who had the most tweets? and the scatter plot Whose retweets were most popular? should both update to reflect the filtered value.

Also, selecting a bar on the bar chart should filter the scatter plot to the same user.
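It helps to state the expected dashboard behavior as an explicit test oracle. The sketch below models it in Python, assuming hypothetical tweet records (the real dashboard data is not reproduced here) and assuming that selecting a bar applies the same user filter as the textbox. Because both charts are derived from the same filtered records, both should show exactly the selected user.

```python
from collections import Counter

# Hypothetical tweet records; the real dashboard data is not reproduced here.
TWEETS = [
    {"user": "datakmart", "retweets": 5},
    {"user": "datakmart", "retweets": 2},
    {"user": "tableau", "retweets": 40},
    {"user": "example_user", "retweets": 1},
]

def apply_user_filter(tweets, user_screen_name=None):
    """User Screen Name filter (assumed to also back a bar selection)."""
    if user_screen_name is None:
        return list(tweets)
    return [t for t in tweets if t["user"] == user_screen_name]

def bar_chart(tweets):
    """'Who had the most tweets?': tweet count per user."""
    return Counter(t["user"] for t in tweets)

def scatter_plot(tweets):
    """'Whose retweets were most popular?': one (user, retweets) point per tweet."""
    return [(t["user"], t["retweets"]) for t in tweets]

def users_shown(selection=None):
    """Users visible on each chart after the filter is applied."""
    filtered = apply_user_filter(TWEETS, selection)
    return set(bar_chart(filtered)), {user for user, _ in scatter_plot(filtered)}

bars, points = users_shown("datakmart")
print(bars == points == {"datakmart"})  # expected behavior: both charts show only datakmart
```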

Figure 1.1

Test Scenario #1

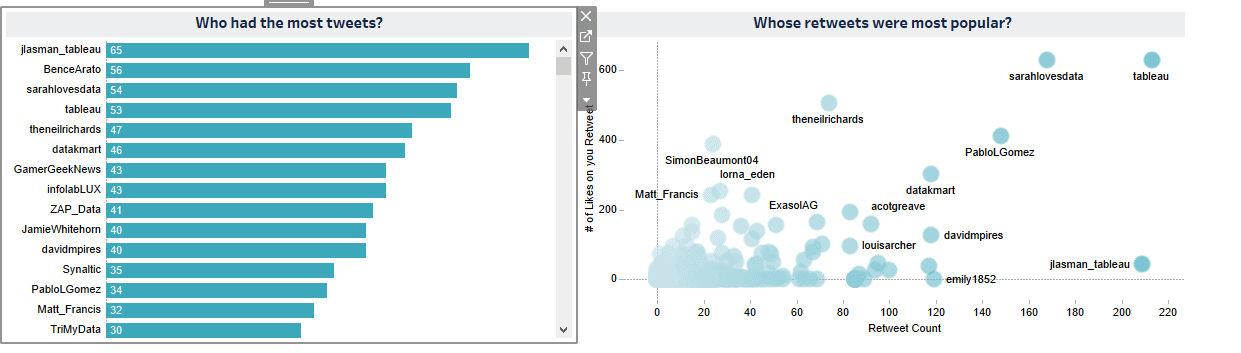

When a bar is selected in the Who had the most tweets? bar chart, the Whose retweets were most popular? scatter plot should show the plot for the same user (validated by username).

Initial stage: all the usernames and metrics are displayed on both charts.

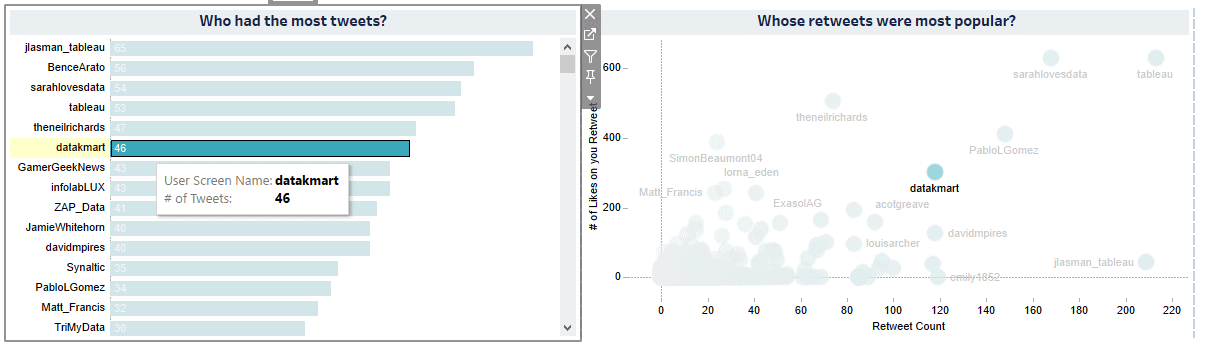

Record a step that clicks the bar chart to select the username datakmart and view that user's data:

The expected result is that both graphs display the username datakmart. Confirm this by reading the text from the graphs:

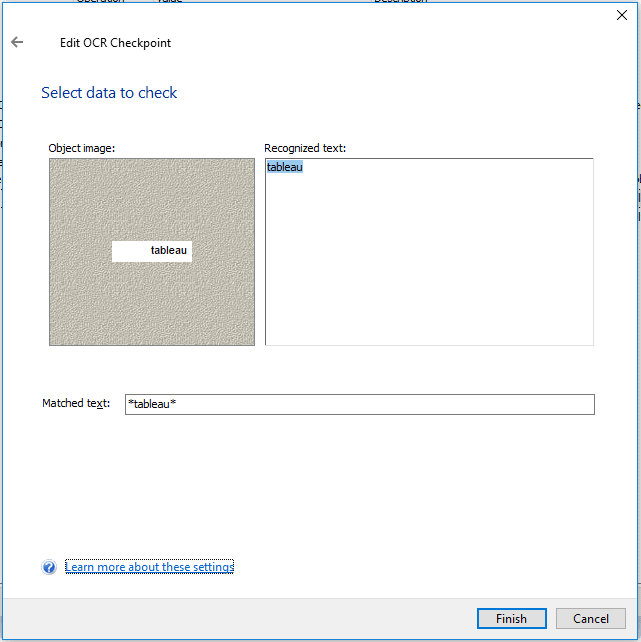

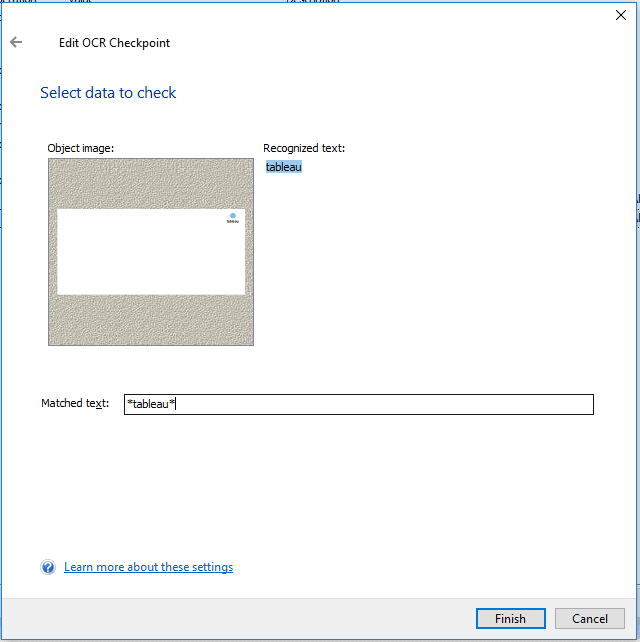

Now add a property checkpoint that reads the username from both graphs using the OCR option.

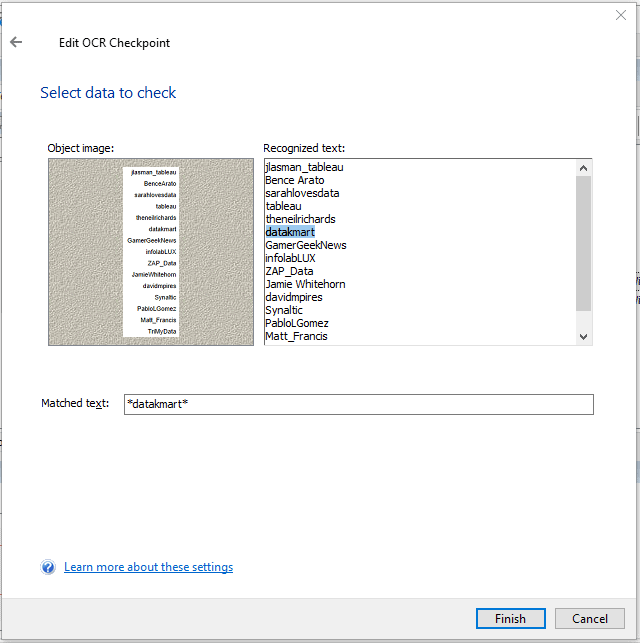

TestComplete reads the values from the graph and displays them in the OCR section, where you can select which value to use for validation. The following image shows values from the horizontal bar chart Who had the most tweets?:

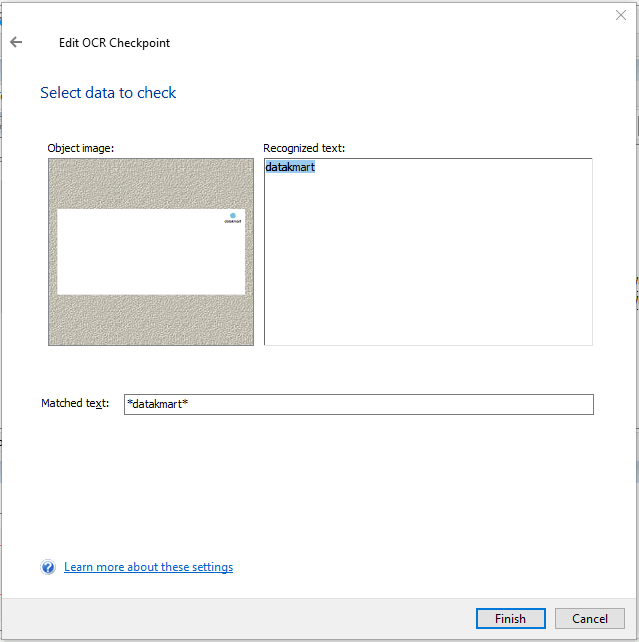

The following image shows values that TestComplete has captured from the Whose retweets were most popular? scatter plot graph:

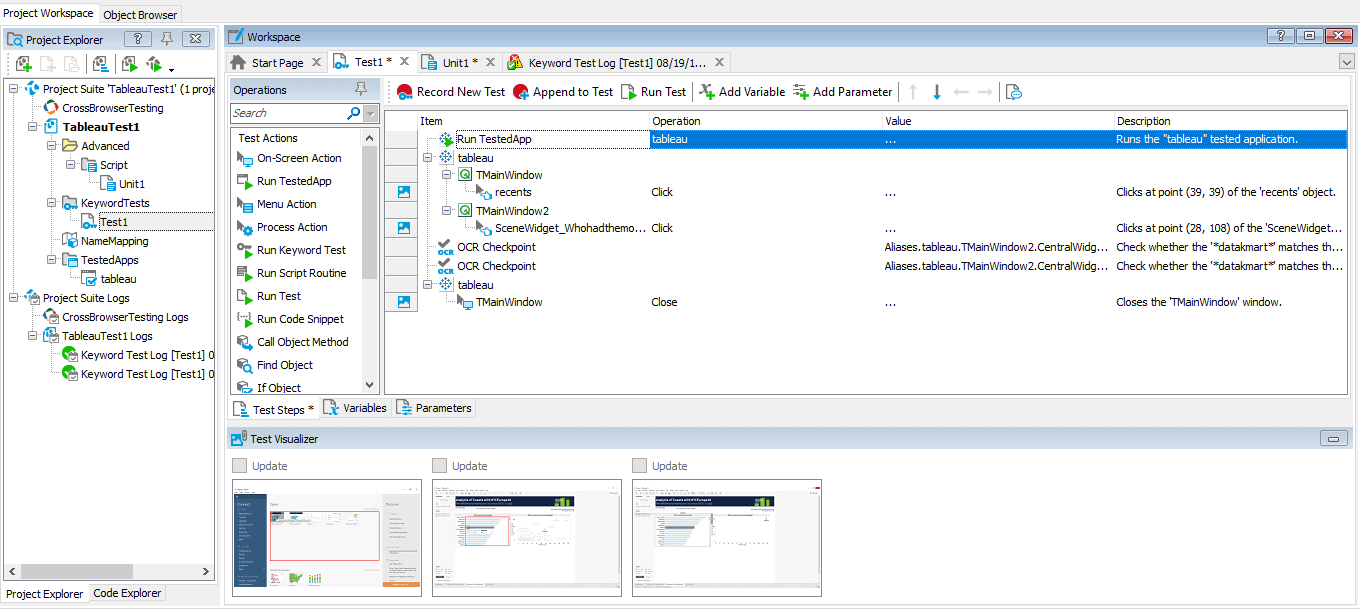

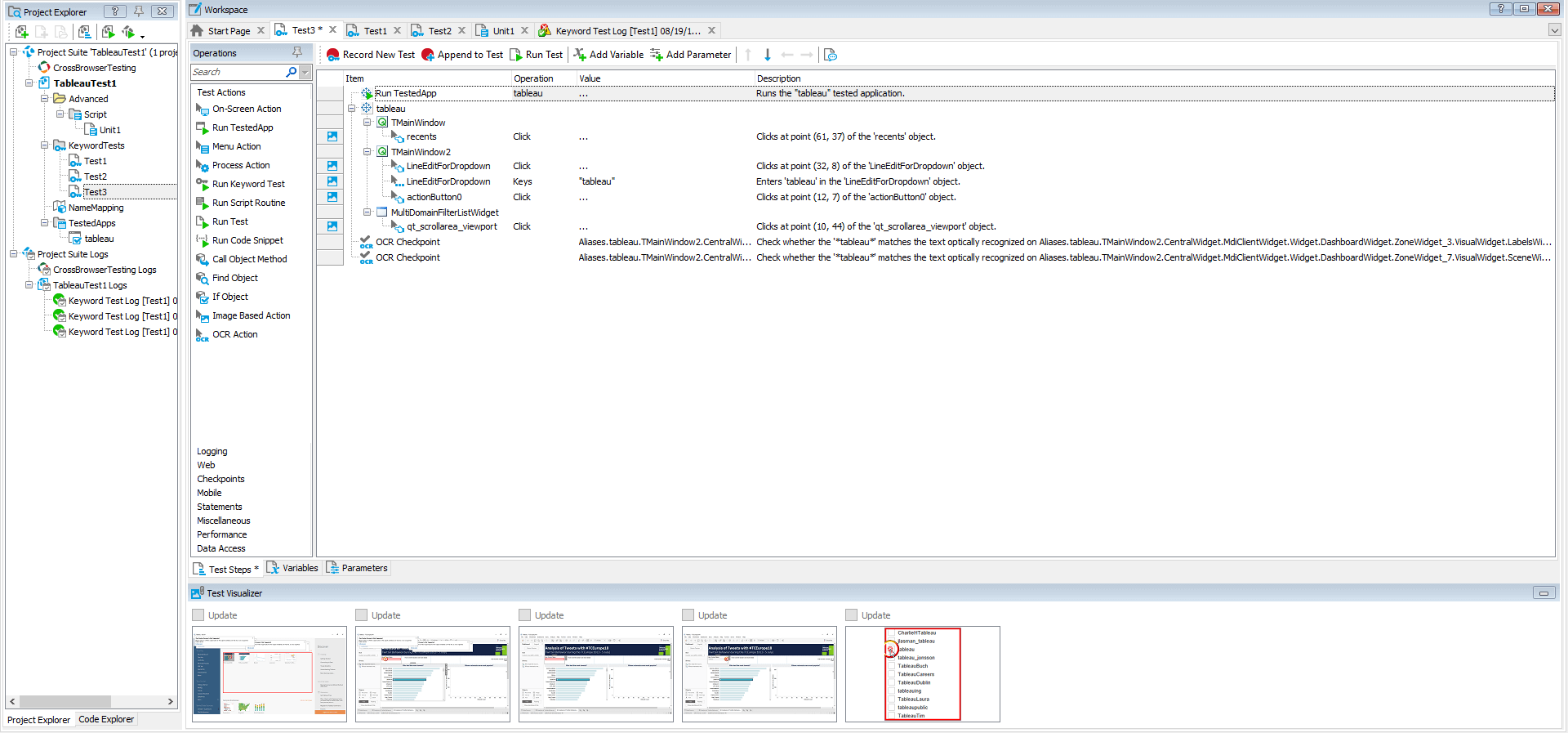

This is how your recorded keyword test will look. It contains two checkpoints named OCR Checkpoint:
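Conceptually, an OCR checkpoint compares recognized text against an expected value. The following plain-Python sketch shows that comparison for this scenario. The recognized strings are hypothetical stand-ins for OCR output, and the case-insensitive, whitespace-collapsing comparison is an assumption made to tolerate noisy OCR output, not a description of how TestComplete's checkpoint matches text.

```python
import re

def normalize(text):
    """Lower-case and collapse whitespace, since OCR output is often noisy."""
    return re.sub(r"\s+", " ", text).strip().lower()

def ocr_checkpoint(recognized_text, expected):
    """Pass if the expected value occurs anywhere in the recognized text."""
    return normalize(expected) in normalize(recognized_text)

# Hypothetical OCR output from each chart after the datakmart bar is selected.
recognized = {
    "Who had the most tweets?": "Who had the most\n tweets?  datakmart",
    "Whose retweets were most popular?": "Whose retweets were most popular?\nDatakmart",
}

results = {chart: ocr_checkpoint(text, "datakmart") for chart, text in recognized.items()}
print(all(results.values()))  # True only if both charts show the username
```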

Test Scenario #2

Entering a value in the User Screen Name textbox should filter the data in both charts for that input (username).

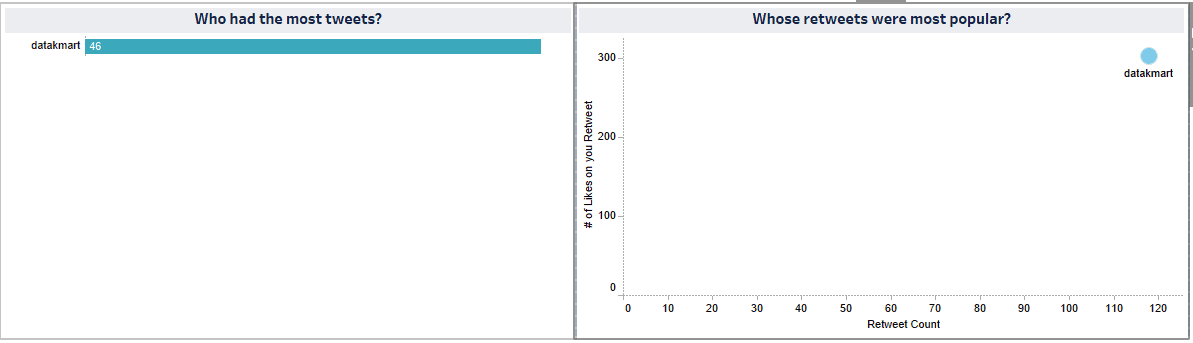

Initial stage: all the usernames and metrics are displayed on both charts (Figure 1.2).

Figure 1.2

Record steps that click the User Screen Name textbox, enter the text tableau, press Enter, and select the checkbox for the tableau option in the filter drop-down:

Now add property checkpoints to verify that both graphs display data for the same filtered text, i.e., tableau:

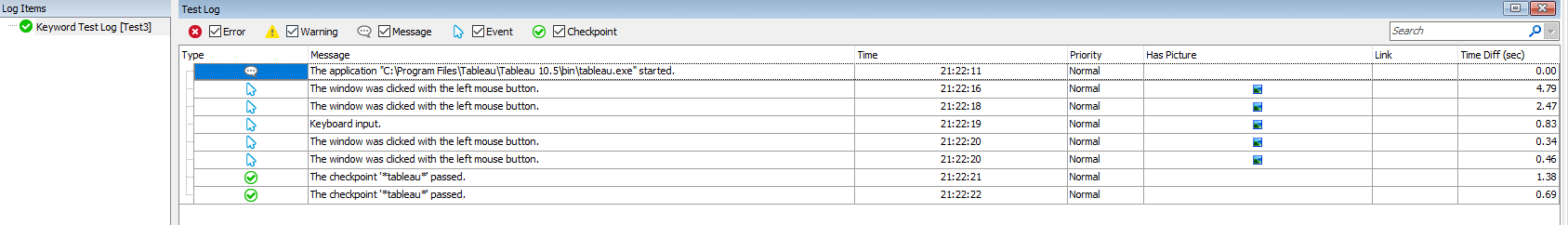

This is how your keyword test case will look:

Test execution result:

The AI capability demonstrated in the cases above is an example of the Hybrid Object Detection feature. Another application of this feature is testing output in PDF files. Here's an example of testing PDF output.

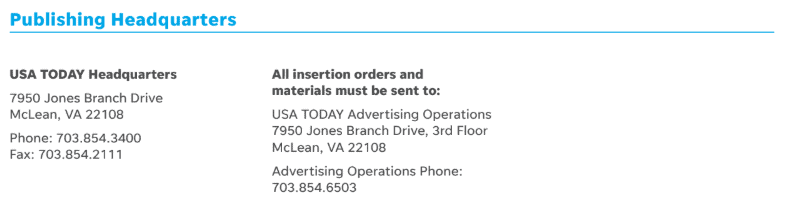

For this test, use a PDF containing addresses, like the one below, produced as the output of a process.

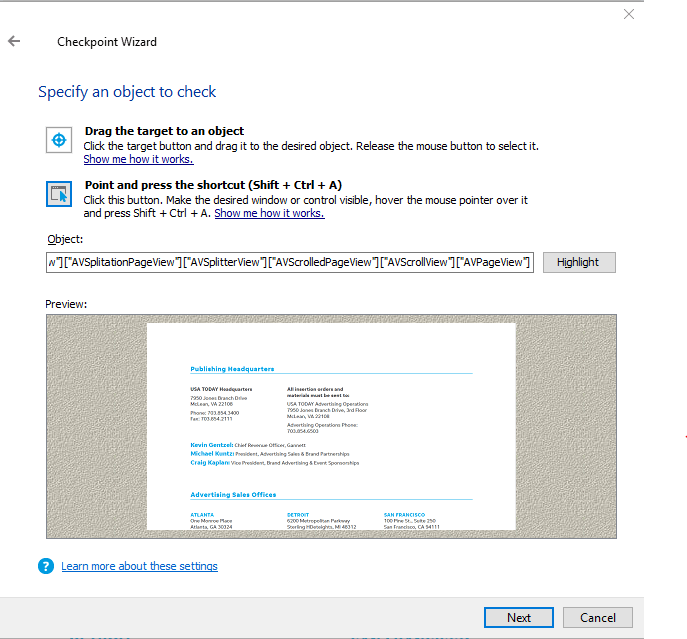

As before, start recording and select the PDF object.

This selects the object as above. Click Next.

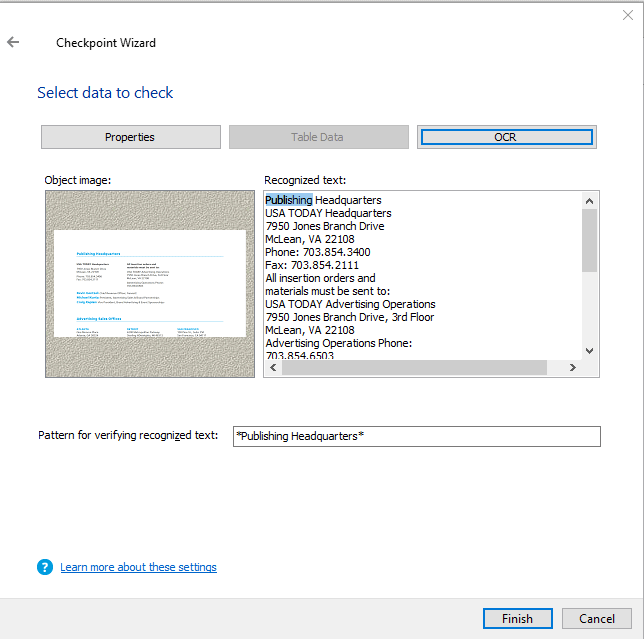

Select OCR, which parses the PDF and recognizes its text. The recognized text is displayed in the Recognized text field, as shown below.

Specify the text that must appear in the recognized text for the test to pass. You can also use patterns for a wildcard search.
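A wildcard search over recognized text can be sketched with Python's standard `fnmatch` module. This assumes the common convention in which `*` matches any run of characters and `?` matches a single character (note that `fnmatch` also treats `[...]` as a character class), and the recognized address text is hypothetical. Matching line by line is a design choice here, so that a pattern must match a whole address line.

```python
from fnmatch import fnmatchcase

# Hypothetical text recognized from the PDF: one address, one field per line.
RECOGNIZED_TEXT = """John Smith
42 Example Street
Springfield 12345"""

def text_found(recognized, pattern):
    """Pass if any recognized line matches the pattern (* = any run, ? = one char)."""
    return any(fnmatchcase(line.strip(), pattern) for line in recognized.splitlines())

print(text_found(RECOGNIZED_TEXT, "*Example Street"))    # True
print(text_found(RECOGNIZED_TEXT, "Springfield ?????"))  # True: exactly five characters
print(text_found(RECOGNIZED_TEXT, "*Main Street*"))      # False
```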

Now that you have seen the examples of Hybrid Object Detection, here’s an example of the Intelligent Recommendation system.

TestComplete uses the Intelligent Recommendation system to detect objects in the Name Mapping repository. You can switch on the feature to add objects to the Name Mapping repository automatically. It can also be used to search for and map objects dynamically. This comes in handy for maintaining tests for applications whose objects change position in the object hierarchy, which commonly happens as the application changes state during execution. The recommendation system helps map objects automatically across the object hierarchy based on their properties.
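The idea of mapping an object by its properties, even after it moves in the hierarchy, can be sketched as a similarity score over recorded properties. This is an illustration of the general technique, not TestComplete's actual recommendation algorithm: the property names, the candidate objects, and the 0.6 threshold are all hypothetical.

```python
def similarity(expected, candidate):
    """Fraction of the mapped properties that the candidate still matches."""
    matches = sum(1 for key, value in expected.items() if candidate.get(key) == value)
    return matches / len(expected)

def remap(expected, candidates, threshold=0.6):
    """Return the best-scoring candidate, or None if nothing is similar enough."""
    best = max(candidates, key=lambda c: similarity(expected, c), default=None)
    if best is None or similarity(expected, best) < threshold:
        return None
    return best

# Properties recorded when the object was first mapped (hypothetical).
mapped_submit = {"class": "Button", "text": "Submit", "id": "btnSubmit", "path": "Form/Panel1"}

# After a UI change, the button moved to another panel, so its path differs.
candidates = [
    {"class": "Button", "text": "Cancel", "id": "btnCancel", "path": "Form/Panel2"},
    {"class": "Button", "text": "Submit", "id": "btnSubmit", "path": "Form/Panel2"},
    {"class": "Label", "text": "Submit your form", "id": "lblHint", "path": "Form/Panel2"},
]

match = remap(mapped_submit, candidates)
print(match["id"] if match else "no match")  # prints "btnSubmit" (3 of 4 properties match)
```

The threshold trades off false matches against failing to find a moved object; a real system might also weight properties differently, for example treating a stable id as stronger evidence than display text.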

These are good examples of applying machine learning models to software testing. TestComplete also lets you use external packages for Python and other scripting languages, which can be leveraged to include other machine learning models for object detection, translation, audio-to-text, and more.

Conclusion

As test automation evolves from test execution and functional testing toward a lifecycle automation approach, robotic automation and cognitive automation are likely to become the norm in the market soon. Machine learning and AI are game changers, and testers should expand their skills to exploit the opportunities they bring.

References

- Public dashboard

- Artificial Intelligence: A Modern Approach, S. Russell and P. Norvig

- AI-Powered Visual Recognition

- World Quality Report 2018-19
