Find out more

With database deployments, not all script-based processes are equal. Some use changescripts in a free-and-easy way, and some, which are normally called ‘migrations,’ have more discipline around them. I’ll go into some of the extra meaning around ‘migrations’ below and point out some of the key myths attached to all script-based processes. I’ll also show some of the new benefits that have come with some really good tooling that is designed to assist the change script processes. These tools include Redgate ReadyRoll, of course, but there’s a whole class of tools in this area now which we want to bring to your attention if you don’t already know about them.

So first of all I want to disambiguate the term ‘migration’. When I talk about a migration, I not only mean a change script to a database, but also one which is made specifically to take a database from a version to a new version; one which gets your database up to a particular functional level. This is not just any random change that goes into a database. If the database is close-coupled with an application, then the change brings it to a version that corresponds to a particular version of the application. The Migration process is, of necessity, a thing that goes in a strict sequence within a managed process. What we want to distinguish it from is its poor cousin, the wild delinquent change script out there that is not necessarily going to be applied in any particular order. It is not likely to be used in a process or a defined sequence because we don’t know the version of the database before or after it is applied. We so often see people using change-script-based approaches without much control of this process. So often, there is no defined method within organisations to share and manage scripts that make changes to databases. This means ad-hoc changes at the end of your process, which undermines all the testing and validation that came before.

Let’s take an example. We’ve got application developer, a database developer, a development manager, and a DBA all working together to try to make database changes. A sequence of conversation might look something like this:

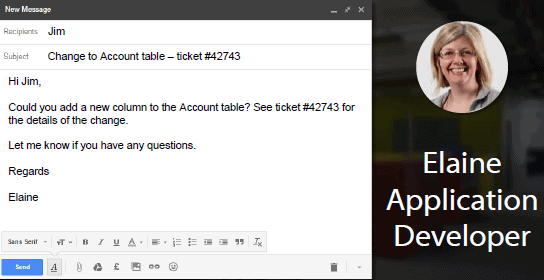

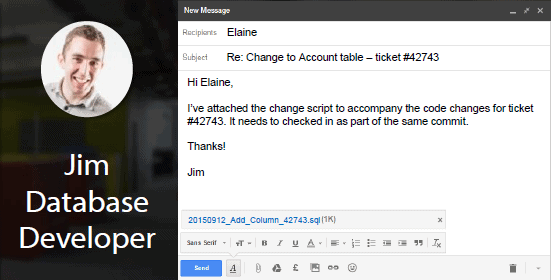

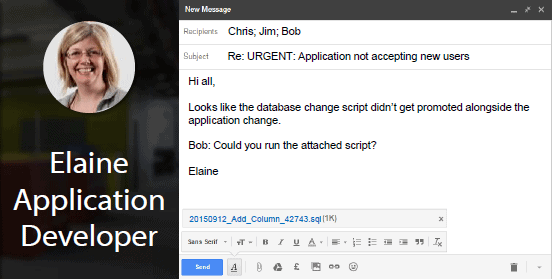

Application developer Elaine wants to get a change made, so she contacts the database developer Jim and says ‘I need these changes made, some details on the ticket, could you do this for me?’ Jim goes ahead and does it, probably on a shared database development environment, and then puts some script somewhere, maybe attaches them to the ticket, very often e-mails them around, and the e-mail somehow becomes the change management workflow of these systems. So Elaine says ‘Great, I’ll take care of it, got the release scripts in the release, no problem here’,

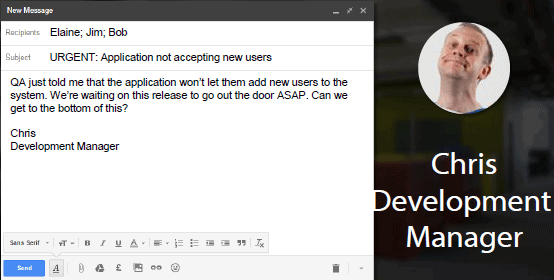

The email thread continues, until all goes quiet. Then, out of the blue, somebody says ‘Whoa! Something is really broken, nothing is working, what happened?’ It turns out, when Elaine looks into it, that one of the scripts didn’t get picked up, maybe somebody didn’t know there were two or didn’t necessarily know in what order to run them, all kinds of things go missing from the actual desired process to run these scripts. So she sighs and says ‘Okay, here’s the scripts’, sends the email off to Bob the DBA.

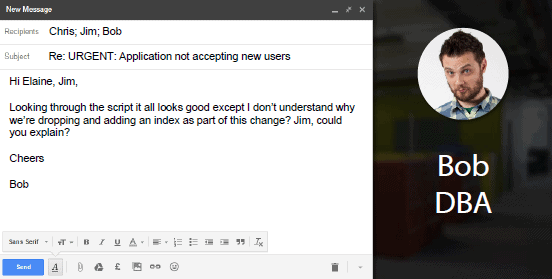

You’ll notice that all the collaboration happens via e-mail. Bob then says ‘Whoa! First time I’ve seen that script, I don’t think I like the look of that; It may be okay for your QA systems but that’s not going into my production system’.

Of course it’s QA that is on fire right now because they’re trying to get this through Test. But Bob is an awesome DBA. He offers to help, ‘Jim, can I show you how to make these changes the right way? So you’ll know for the future.’ But, y’know, the best time to educate someone on fire-safety is not when their pants are on fire. So Jim says, “No really, can you just run this? My whole team is blocked.” Bob, “Yeah, fine OK, it’s done in QA, but it’s not going into prod like that.”

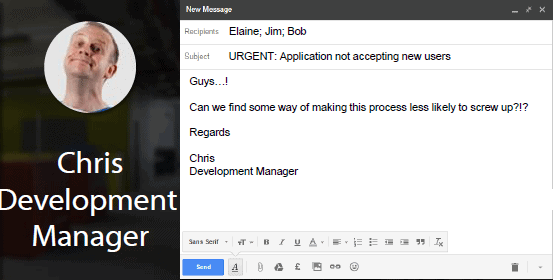

Finally, Chris the development manager looks at this whole thing unfolding before him and can see that, in fact, fundamentally this team didn’t do a bad job of working together to solve these problems; but he still looks at this process and thinks ‘This isn’t good, this isn’t right. What can we actually do to sort this process out?’

So as a good Agile team lead, he actually starts to do some ‘five whys’ on this:

- Why?

“Okay, so the problem was that we had a database that we couldn’t actually add our users to, the QA problem was an urgent problem we needed to fix, it should never have been that way.” - Why?

“Well the update script didn’t get run.” - Why?

“We messed up the manual steps. Somewhere in there we missed out on a change that we were supposed to make.” - Why?

“We’re not using our standard tools. An application development team that is actually working through the development cycle has things automated for releasing the application. The equivalent mistake doesn’t happen on the application side because Integration and QA/Test are both managed neatly through those environments and the application is deployed in an automated way.” - So why aren’t they using their standard tools for the database development?

“Search me. Maybe they just don’t know that there is an alternative.”

There is an alternative which keeps their basic process in place of designing the changes upfront, and then still sending them in an organised way down all the way into production.

So what’s with ‘comeback’ in the title of this article? Well, I mean a comeback of script-based deployment processes. Perhaps it is more like a revival, because plenty of database developers using other relational database systems are using script-based deployment processes, so they clearly have never gone away. But those of us in the SQL Server world have wonderful tools such as SQL Source Control which help us design the end-state of the database, then let tools worry about how to make the changes correctly. In addition, in this ‘comeback’ there’s now a new breed of tools for SQL Server – like ReadyRoll – which follow a migrations-based approach. There isn’t a right or wrong approach, but you now have a choice between methods depending on your requirements. In this article, I’d like to go into more detail on two circumstances that are a good fit for a migration-based approach:

Database Re-engineering

We have mature systems where the changes have data actions in them, and most of the changes actually affect the organisation of the data itself. Imagine something like a business acquisition or re-engineering where you are having to make schema changes to your base tables. It sometimes isn’t enough to know what the schema changes are going to be, you need to also specify in detail what’s going to happen to the existing data in the table. There is a limit to what a software tool can do to guess this. No machine can guess a business decision. Often, on mature systems, you need to make an explicit decision how the existing data changes on every change to a base table. Whenever that’s the case, the developer needs to specify up-front what is meant to go in the altered table structure after you’ve actually made your changes

Database design by evolution

In many Agile database developments, the data model is still actively ‘evolving’ after the first production release. Each release involves table splits and other design changes. This means much more change to base tables that have production data in them. We’ve seen developments where DBAs are getting slammed by the complications and modifications to the change scripts, and are trying to push more of that decision-making into the development teams to say ‘Okay, you guys need to actually design these changes yourself and we can still okay them, but you actually have to get on top of how these changes are getting made because we’re just taking on too much. We can’t put all that expertise in, we need to actually train you up or do what’s necessary so that you guys can do that at your end as well.’

In these cases we are seeing an increase in the need for manual script-based processes come back, and particularly managed ones. So we’re not talking about the wild, risk-taking, carefree scripts; we’re talking about the ones which are following a good, well-defined, clear process and participating in the system.

What’s Missing?

Why was it that Bob and Elaine’s team weren’t jumping on this and sorting out the confusion? We think that they are actually held back by a bunch of myths about migrations which suggest that this is intrinsically difficult. This is probably because the change script processes tend to be ad-hoc and pretty badly managed in businesses, and so where ad-hoc change script processes are used we see a lot of error-prone releases: we see scripts getting missed out, we see changes being made very late and therefore not actually being made and tested. The upgrade scripts themselves aren’t being tested through the test environments, so those releases become error prone. I think that the reputation of script-based processes are getting tarred a bit by that in consequence.

The other problem is that the collaboration is really hard to get right. So how do we share these files? How do we get them done in the same order? How can I take this whole set of changes and expect my developers to know what to run where, and what’s supposed to be in which state? Once they have done all that, how can they know what the contents of those files are? How can we flag up conflicts early because I don’t actually want my developers stepping on each other’s toes? Once again, the reputation of script-based methods often get tarred with the blame. It isn’t their fault, but rather the fact that the tools and workflows don’t support this sort of process. The new generation of migrations-based tools actually makes these things obsolete, makes these things completely untrue, and help to solve both of these problems.

The predicament you face, of course, is to find a tool that allows you to maintain version control while allowing you to take a migration-based approach to table changes.

There are many tools that can help with this predicament. RoundhousE, Redgate ReadyRoll, DBvcs, DbUp, Liquibase, and Flyway come to mind. Redgate ReadyRoll comes to my mind particularly easily because I’ve been closely involved with the product, but I can say without blushing that ReadyRoll is one of the most fully-featured out there, which is why Redgate wanted to offer it as part of the toolset. It takes an unashamedly ‘migration-based’ approach to deployment in that it deploys each change script in strict sequence to change a database from one version to another. If the number of scripts gets unmanageable, then it allows you to ‘rebase’ by consolidating a whole sequence of scripts into one logical change.

Redgate ReadyRoll:

- Helps you make and validate the changes.

- Lets you manage objects such as stored procedures conventionally as object-level scripts so you don’t need to deploy versions in sequence. This gives you an object-level history for easy debugging and makes it obvious if other developers are making conflicting changes.

- Allows you to use the Offline Schema model to ‘back-fill’ object-level table scripts to display the complete history of changes at the object level: you get the best of both worlds.

- Sits right in Visual Studio, so you are using exactly the version control patterns from your application.

- Ships with deployment pieces to slot into CI and release management.

We’ve seen this approach transform database development for application teams, turning it from a weak point into a huge strength. When you can count on the database to be fully in sync with the application at all times, think of what that enables. Your testing is always valid. Things are so well checked that deployments are trivial. Blissfully boring. A whole chunk of time you spend troubleshooting today just evaporates.

So if you dismissed script-based processes in the past, it’s definitely time to take another look.

Load comments