You don’t see it very often, but voice commands are no stranger to the world of video games. Games that could be played using your voice have existed since the late 90s with games like Hey You, Pikachu and Seaman being two notable examples from that time. Even now, with a little searching, you can easily find a game online that requires a microphone and your voice to play. What if you were told that you can make your own voice-controlled experience? As long as you have Unity and a microphone to test the project, you can!

In a moment you’ll be creating a project that will be controlled using nothing but your voice. You will, of course, need a mic to be able to run the project. A single cube will be created, and you will be able to command the cube to change colors, spin in a certain direction, make a sound, and print a message to Unity’s Debug Log. Accomplishing this will require Unity to look for certain phrases, which you will define. If what you say matches the phrase you define in code, then a user-defined function will be performed.

Setting Up

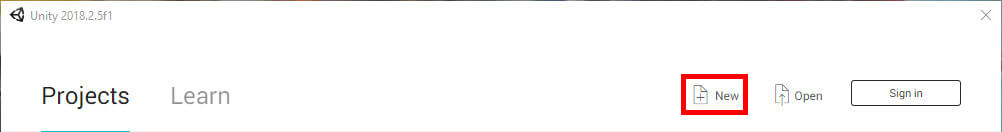

Upon starting Unity, you will need to create a new project.

Figure 1: Creating a new project.

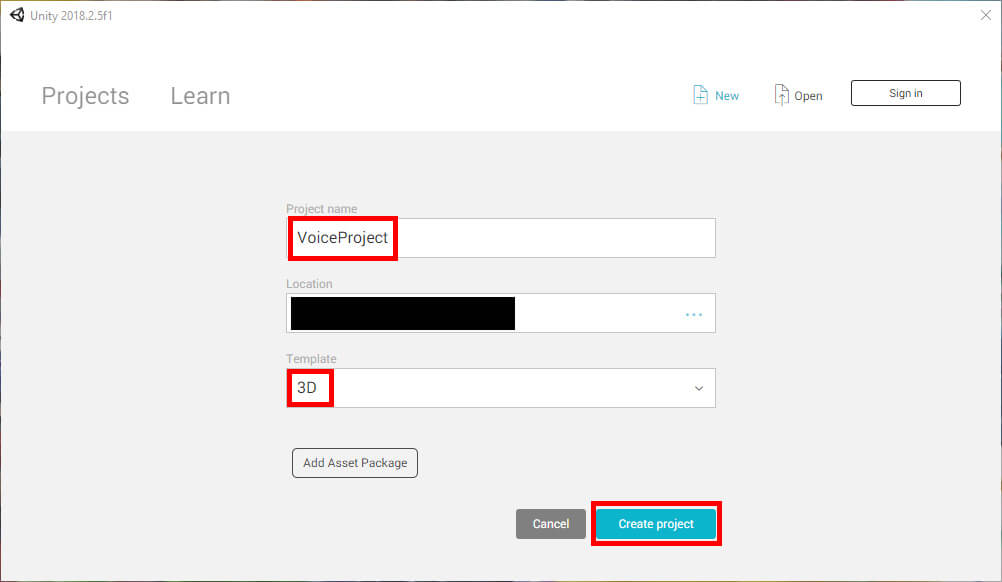

Give the project the name VoiceProject, then specify the project location. The example images show a 3D project, but you can apply the same concepts in a 2D project as well. Once everything is set up, click Create Project.

Figure 2: Naming the project.

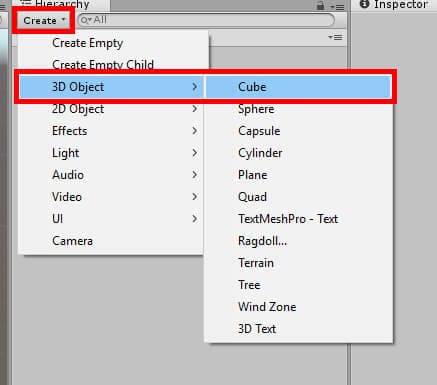

The first thing you’ll do is create the Cube object needed for the project. In the Hierarchy window, click Create->3D Object->Cube. For this project, you can leave the object with its default name, Cube.

Figure 3: Creating a cube object.

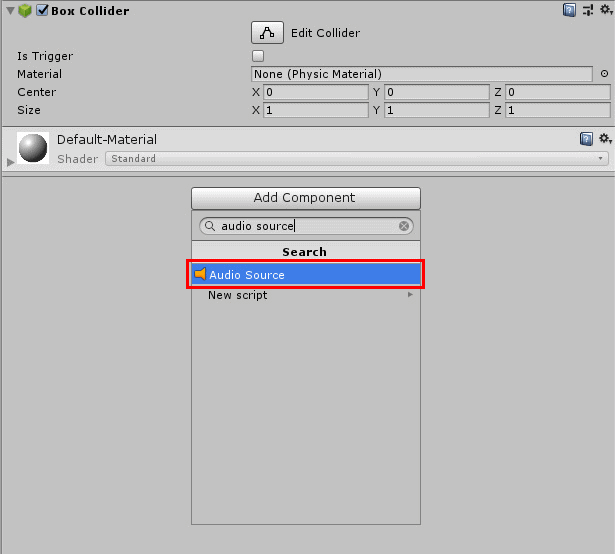

Once the object has been created, you’ll need to add an Audio Source component to it. In the Inspector window, click the Add Component button. Search for Audio Source then select the component at the top of the list.

Figure 4: Adding a new component.

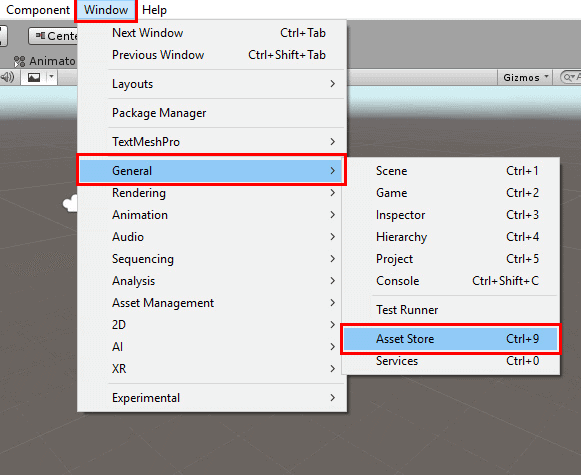

Of course, an Audio Source component would be rather useless without sounds. You can import sounds from your computer if you wish, but in this example, the Asset Store will be used to acquire some free sounds. At the top of the Unity window select Window->General->Asset Store.

Figure 5: Opening the Asset Store.

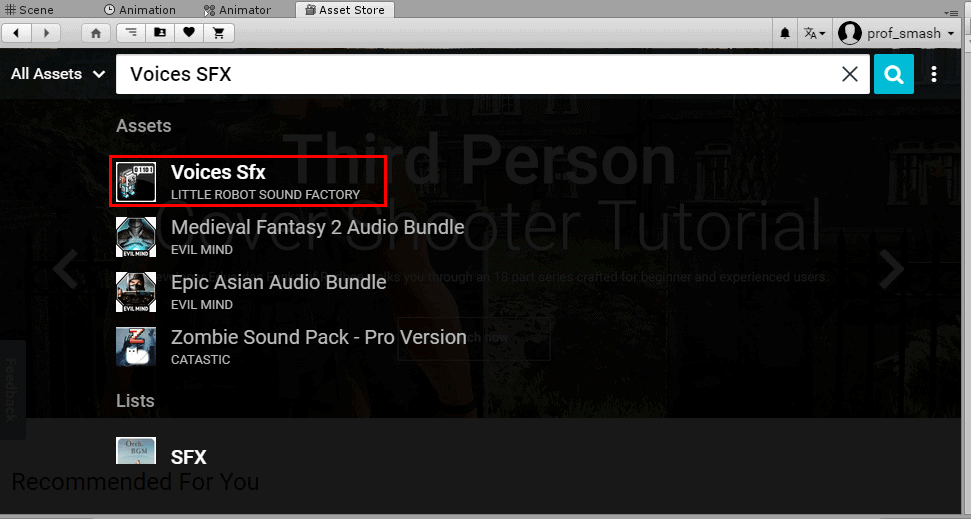

In the window that appears, search for Voices SFX and select the corresponding item by Little Robot Sound Factory in the drop-down menu that appears.

Figure 6: Selecting Voices SFX.

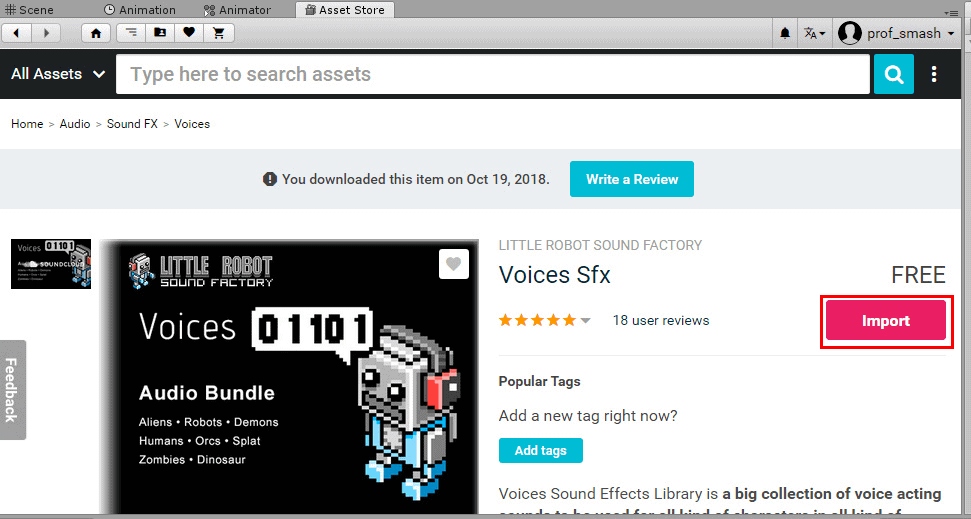

On the next screen, you’ll need to select the Download button to download the assets. After the download has been completed, you’ll need to press the same button to import the assets. The button should say Import after the download has completed.

Figure 7: Beginning the import process.

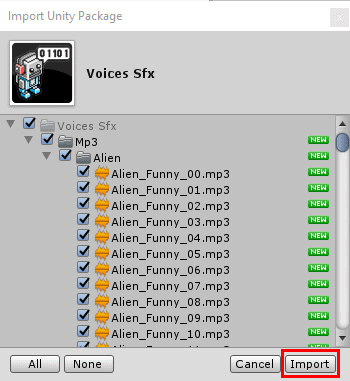

After pressing Import, the Import Unity Package window will appear. You can deselect any sounds you don’t want, but to keep things simple, this example imports all the sounds in the package.

Figure 8: Importing the assets.

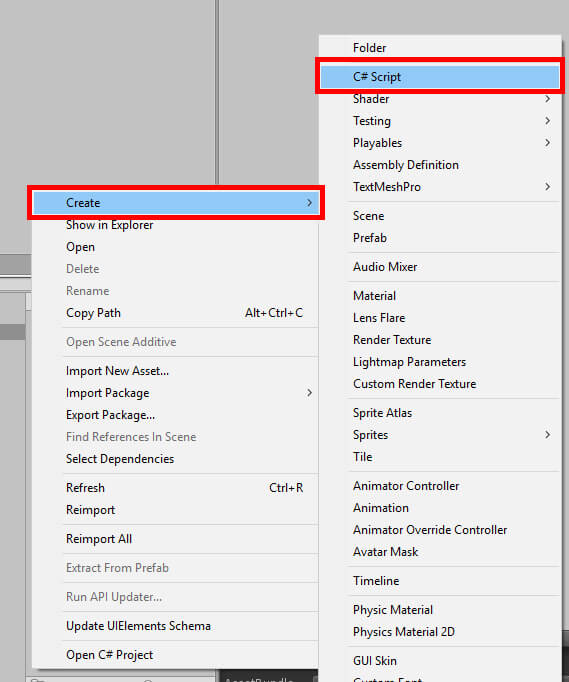

Once the sound assets have finished importing, you can either remove the Asset Store window you opened or simply switch over to the Scene window. Next, it will be time to make the script needed to implement voice commands. In the Assets window, right-click and choose Create->C# Script.

Figure 9: Creating a new C# script.

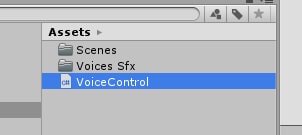

Name this script VoiceControl. When finished, the Assets window will look like the figure below.

Figure 10: The current Assets window.

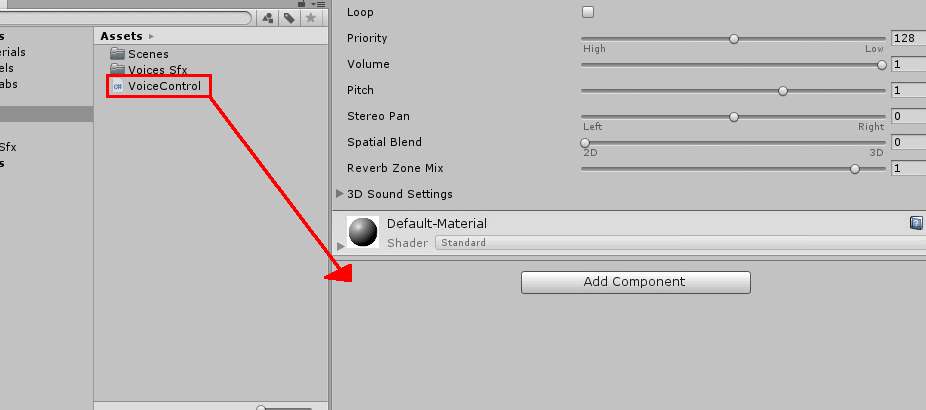

Finally, attach the VoiceControl script to the Cube object. Select Cube in the Hierarchy, then click and drag the VoiceControl script into the Inspector window underneath the Add Component button.

Figure 11: Adding the VoiceControl script component.

Now that the script is attached to the object, it’s time to make the code. Open the script in Visual Studio by double-clicking it in the Assets window.

The Code

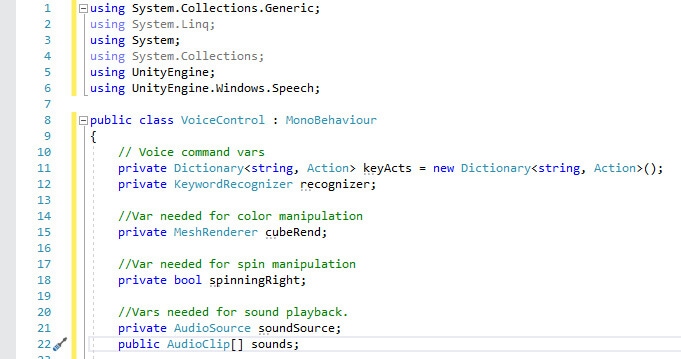

Before creating any voice commands, you will need the following using statements at the top of the script.

```csharp
using System.Collections.Generic;
using System.Linq;
using System;
using System.Collections;
using UnityEngine;
using UnityEngine.Windows.Speech;
```

The key statement is using UnityEngine.Windows.Speech. As you may have guessed, this is what allows Unity to listen for voice commands and perform actions in response. As the namespace suggests, it is Windows-specific, so the project needs to be run on a Windows machine. With that completed, declare the following variables inside the class.

```csharp
// Voice command vars
private Dictionary<string, Action> keyActs = new Dictionary<string, Action>();
private KeywordRecognizer recognizer;

// Var needed for color manipulation
private MeshRenderer cubeRend;

// Var needed for spin manipulation
private bool spinningRight;

// Vars needed for sound playback
private AudioSource soundSource;
public AudioClip[] sounds;
```

First, you define the Dictionary that stores the voice commands and the action each one performs. Next, you declare a KeywordRecognizer to, well, recognize your words. After that, a MeshRenderer variable is declared. It will hold the MeshRenderer component from the Cube object, which is what allows you to change the cube’s color. Then you have a boolean named spinningRight, which tells the program whether the Cube object should spin right (true) or left (false). Finally, you create a private AudioSource variable and a public array of AudioClip. soundSource will be used to play sounds after hearing certain voice commands, and sounds is simply the list of sounds that can be played. You will define what goes into the sounds array after entering the code. When you’ve entered all this, your script should look similar to the figure below.

Figure 12: Using statements and variable declaration.

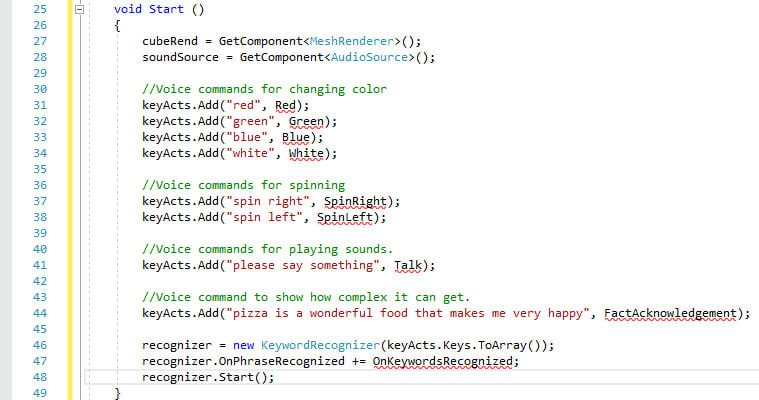

From here, you’ll move on to the Start function. The Update function will not be needed for this project, so you can either comment it out or delete it. In the Start function, input the following code:

```csharp
cubeRend = GetComponent<MeshRenderer>();
soundSource = GetComponent<AudioSource>();

//Voice commands for changing color
keyActs.Add("red", Red);
keyActs.Add("green", Green);
keyActs.Add("blue", Blue);
keyActs.Add("white", White);

//Voice commands for spinning
keyActs.Add("spin right", SpinRight);
keyActs.Add("spin left", SpinLeft);

//Voice commands for playing sound
keyActs.Add("please say something", Talk);

//Voice command to show how complex it can get.
keyActs.Add("pizza is a wonderful food that makes the world better", FactAcknowledgement);

recognizer = new KeywordRecognizer(keyActs.Keys.ToArray());
recognizer.OnPhraseRecognized += OnKeywordsRecognized;
recognizer.Start();
```

The Start function can be split into three parts. First, you begin by getting the Cube object’s MeshRenderer and AudioSource components using GetComponent. After that, you will define the various voice commands and corresponding functions that will be in your keyActs dictionary. You’ll be working on the functions in a moment. Notice how complex the voice commands can get. Near the end of your dictionary definitions, there’s an especially long voice command that is declared. As you’ll find out later, Unity will still be able to recognize this long series of words and perform the function that correlates to that command.

Next, recognizer gets its list of phrases to listen for from the keys, or voice commands, in the keyActs dictionary. Then OnKeywordsRecognized is subscribed to the recognizer’s OnPhraseRecognized event, so it is called whenever a phrase is recognized; that function will also be defined momentarily. Finally, the KeywordRecognizer is started and continues listening for as long as the program is running.
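The phrase-to-function mapping described above can be tried outside Unity. The following is a minimal sketch in plain C#, not the tutorial’s script: CommandTable, Log, Phrases, and Dispatch are hypothetical names invented for illustration, and the lambdas only record which handler ran. It also uses TryGetValue for the lookup, an assumption about how one might guard against a phrase that has no entry.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Hypothetical, Unity-free stand-in for the keyActs dictionary in VoiceControl.
public static class CommandTable
{
    // Records which handlers ran, standing in for visible effects on the cube.
    public static readonly List<string> Log = new List<string>();

    private static readonly Dictionary<string, Action> keyActs =
        new Dictionary<string, Action>
        {
            { "red", () => Log.Add("Red") },
            { "spin left", () => Log.Add("SpinLeft") },
        };

    // The phrase list a recognizer would be built from (keyActs.Keys.ToArray()).
    public static string[] Phrases()
    {
        return keyActs.Keys.ToArray();
    }

    // Looks up the handler for a recognized phrase and runs it.
    // Returns false instead of throwing when the phrase is unknown.
    public static bool Dispatch(string heard)
    {
        Action action;
        if (keyActs.TryGetValue(heard, out action))
        {
            action.Invoke();
            return true;
        }
        return false;
    }
}
```

Calling CommandTable.Dispatch("red") runs the matching handler, while an unlisted phrase simply returns false. Adding a command means adding one dictionary entry, and the phrase list follows automatically.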

When finished, the Start function should appear similar to this:

Figure 13: The completed Start function.

Now would be a good time to declare the different functions this script will need. OnKeywordsRecognized will be a good place to begin. Add the following function:

```csharp
void OnKeywordsRecognized(PhraseRecognizedEventArgs args)
{
    Debug.Log("Command: " + args.text);
    keyActs[args.text].Invoke();
}
```

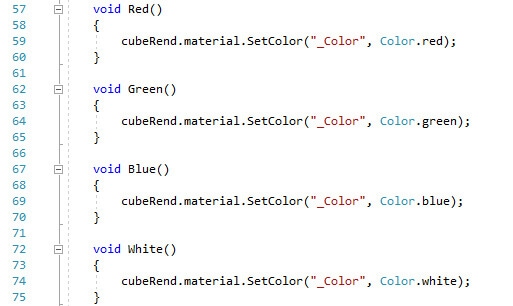

First, Unity’s console log shows which command was recognized whenever the user says any of the voice commands you’ve defined. Then the function mapped to that phrase in keyActs is looked up and called using Invoke. After this, enter the code that will change the cube’s color.

```csharp
void Red()
{
    cubeRend.material.SetColor("_Color", Color.red);
}

void Green()
{
    cubeRend.material.SetColor("_Color", Color.green);
}

void Blue()
{
    cubeRend.material.SetColor("_Color", Color.blue);
}

void White()
{
    cubeRend.material.SetColor("_Color", Color.white);
}
```

All of these functions perform the same basic task: they change the color of the cube object, and only the target color differs. In each function, you first get cubeRend’s material and call SetColor. You then specify that you want to change the main color of the material ("_Color"), followed by the color in question. With that completed, your color changing functions and keyword recognizer function will look like Figure 14.
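Because the four color functions differ only in the color they set, they could also be generated from a table. The sketch below shows the idea in plain C# with no Unity dependency. Rgb is a hypothetical stand-in for UnityEngine.Color, and ColorCommands, Current, and Build are illustrative names that do not appear in the tutorial’s script.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical, Unity-free sketch: Rgb stands in for UnityEngine.Color.
public struct Rgb
{
    public readonly float R, G, B;
    public Rgb(float r, float g, float b) { R = r; G = g; B = b; }
}

public static class ColorCommands
{
    // Stands in for the cube material's current color.
    public static Rgb Current = new Rgb(1f, 1f, 1f);

    // One table replaces the four near-identical functions.
    private static readonly Dictionary<string, Rgb> colors =
        new Dictionary<string, Rgb>
        {
            { "red", new Rgb(1f, 0f, 0f) },
            { "green", new Rgb(0f, 1f, 0f) },
            { "blue", new Rgb(0f, 0f, 1f) },
            { "white", new Rgb(1f, 1f, 1f) },
        };

    // Builds a phrase-to-action dictionary with one closure per color.
    public static Dictionary<string, Action> Build()
    {
        var keyActs = new Dictionary<string, Action>();
        foreach (KeyValuePair<string, Rgb> entry in colors)
        {
            Rgb target = entry.Value; // copy captured by the closure below
            keyActs.Add(entry.Key, () => Current = target);
        }
        return keyActs;
    }
}
```

In the Unity script, the closure body would presumably call cubeRend.material.SetColor instead of assigning to Current. Separate named functions, as the tutorial uses, remain easier to read for a first project.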

Figure 14: Color changing functions.

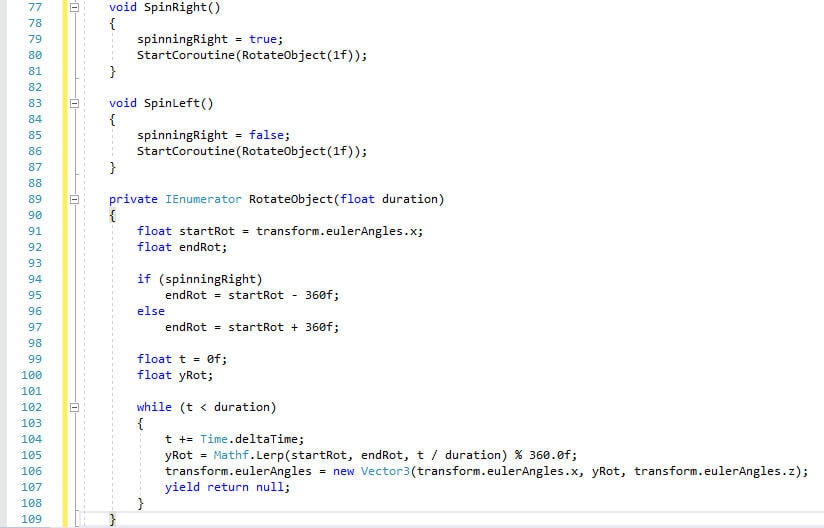

Now you’ll work on the functions that spin the cube either left or right.

```csharp
void SpinRight()
{
    spinningRight = true;
    StartCoroutine(RotateObject(1f));
}

void SpinLeft()
{
    spinningRight = false;
    StartCoroutine(RotateObject(1f));
}
```

These functions get a little more complex. First, you set spinningRight to true or false, depending on which direction the Cube object will spin. Then a Coroutine is started that rotates the object for one second. A Coroutine is similar to a function, but it can pause execution, return control to Unity, and then continue where it left off on the next frame. That makes Coroutines very useful for actions that play out over time, such as spinning an object around. Coroutines are declared with a return type of IEnumerator and must contain a yield return statement somewhere in the body. The Coroutine you’ll need is as follows:

```csharp
private IEnumerator RotateObject(float duration)
{
    // Start from the current y rotation, since y is the axis being animated.
    float startRot = transform.eulerAngles.y;
    float endRot;
    if (spinningRight)
        endRot = startRot - 360f;
    else
        endRot = startRot + 360f;

    float t = 0f;
    float yRot;
    while (t < duration)
    {
        t += Time.deltaTime;
        // Interpolate toward endRot, wrapping the result to stay within one turn.
        yRot = Mathf.Lerp(startRot, endRot, t / duration) % 360.0f;
        transform.eulerAngles = new Vector3(transform.eulerAngles.x, yRot, transform.eulerAngles.z);
        // Pause here and resume on the next frame.
        yield return null;
    }
}
```

As mentioned earlier, the Coroutine is given a parameter that controls how long to spin the object for. In this case, it’s a float named duration. You then declare a few variables to be used within the Coroutine. endRot is set to one full turn (360 degrees) below or above the starting rotation, depending on whether spinningRight is true or false. The while loop runs for as long as t is less than duration. During this time, the object is spun by assigning a new Vector3 to the object’s transform.eulerAngles, or rotation, once per frame. Mathf.Lerp interpolates between the starting and ending rotation based on how much of the duration has elapsed, which keeps the rotation smooth instead of jumping straight to the final angle.
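To see what the loop produces frame by frame, here is a minimal sketch of the same interpolation in plain C#, with no Unity dependency. SpinSketch, its Lerp helper, and the fixed deltaTime parameter are assumptions made for illustration. The helper clamps t to the range 0 to 1, as Mathf.Lerp does, and the iterator returns IEnumerable<float> rather than Unity’s IEnumerator so that it can be consumed with foreach.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical, Unity-free sketch of the interpolation inside RotateObject.
public static class SpinSketch
{
    // Linear interpolation with t clamped to [0, 1].
    public static float Lerp(float a, float b, float t)
    {
        t = Math.Max(0f, Math.Min(1f, t));
        return a + (b - a) * t;
    }

    // Yields the y angle for each simulated frame of a fixed length.
    public static IEnumerable<float> Rotate(
        float startRot, bool spinningRight, float duration, float deltaTime)
    {
        float endRot = spinningRight ? startRot - 360f : startRot + 360f;
        float t = 0f;
        while (t < duration)
        {
            t += deltaTime;
            // Each yield corresponds to one frame in the Unity Coroutine.
            yield return Lerp(startRot, endRot, t / duration) % 360f;
        }
    }
}
```

With a start angle of 0, a left spin, a duration of one second, and quarter-second frames, the sequence is 90, 180, 270, and finally 0, since a full turn wraps back to the starting angle. In Unity the frame length varies, so the number of steps is not fixed.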

The finished functions and Coroutine should look like the figure below.

Figure 15: Spinning functions and Coroutine.

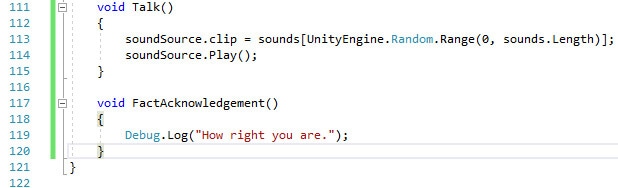

Now you will make the program respond to your request to speak! Unfortunately, this program won’t allow you to have a complete conversation with the computer, but it could certainly be used as a starting point. Beneath the Coroutine, you will enter the following code.

```csharp
void Talk()
{
    soundSource.clip = sounds[UnityEngine.Random.Range(0, sounds.Length)];
    soundSource.Play();
}
```

After hearing the command “please say something,” the program responds by playing a random sound. You pick a random clip from the sounds array, assign it to soundSource, and then immediately play it. Finally, create one last function to work with the last voice command you created.

```csharp
void FactAcknowledgement()
{
    Debug.Log("How right you are.");
}
```

There’s not much going on in this function. After registering the voice command, Unity simply prints a message to the Debug Log agreeing with your statement. With those two functions out of the way, the script is now complete. Save your work and return to the Unity editor to finish the project.
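One edge case is worth keeping in mind: Talk indexes into sounds directly, so saying “please say something” while the array is still empty would fail with an out-of-range index. The sketch below shows one way to guard a random pick, written in plain C# with no Unity dependency. ClipPicker and TryPick are hypothetical names, and System.Random stands in for UnityEngine.Random.

```csharp
using System;

// Hypothetical, Unity-free sketch of a guarded random pick for Talk.
public static class ClipPicker
{
    private static readonly Random rng = new Random();

    // Picks a random element; returns false when there is nothing to pick,
    // which is the case where sounds[...] would otherwise throw.
    public static bool TryPick<T>(T[] items, out T picked)
    {
        if (items == null || items.Length == 0)
        {
            picked = default(T);
            return false;
        }
        // The upper bound is exclusive, as with UnityEngine.Random.Range(int, int).
        picked = items[rng.Next(0, items.Length)];
        return true;
    }
}
```

In the Unity script, the equivalent guard would presumably be a length check at the top of Talk, perhaps logging a warning when no sounds have been assigned.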

Figure 16: The Talk and FactAcknowledgement functions.

Completing the Project

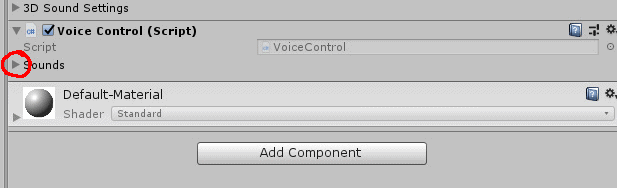

One task must be completed before you can test out your project. You need to assign some sound effects to the sounds array. To do this, select the Cube, go to the Inspector window and into the VoiceControl script component. Then, click the arrow next to the sounds array.

Figure 17: Opening the sounds array.

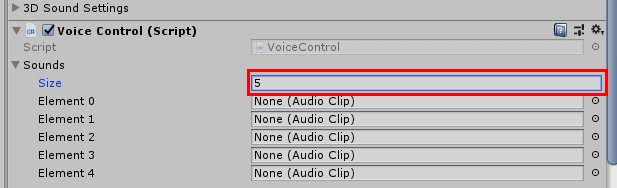

A field named Size appears when you click this arrow. Decide on how many individual sound effects you’d like to use. The example figure below sets the value of Size to five.

Figure 18: Defining the number of elements.

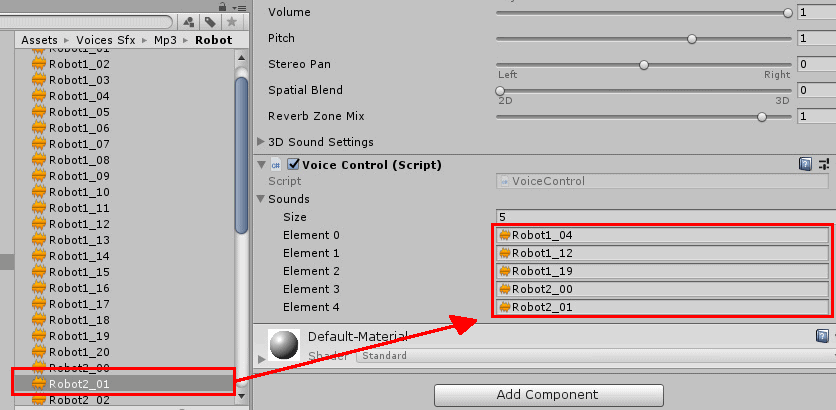

The moment you give Size a number, a list of empty elements appears. At this point, you need to drag some sound assets into these fields to populate the array. Remember those sound effects you downloaded early on? They’re going to be put to use here. In the Assets window, navigate to Voices SFX->Mp3. From there you must select one of the folders that contain the sounds you want. Any of the sound effects will do, but this example will use the sounds in the Robot folder.

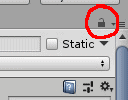

At this point, you’ll need to select the Cube object from the Hierarchy and lock the inspector so you can more easily set the sounds to be used in your sounds array. With the Cube object selected, click the lock icon in the top right of the Inspector window.

Figure 19: Locking the Inspector.

All you need to do now is click and drag whatever sounds you wish to use into the empty fields in the sounds array.

Figure 20: Setting sound effects for sounds array.

After selecting your sounds of choice, the project will be complete! Give the project a test run by pressing the play button at the top of the Unity window. While playing, say any of the voice commands and watch your object change and react based on those commands. Don’t forget to have your microphone ready! Below is a list of the voice commands you’ve made:

- “Red”

- “Blue”

- “Green”

- “White”

- “Spin Left”

- “Spin Right”

- “Please say something”

- “Pizza is a wonderful food that makes the world better”

Figure 21: The project in action.

Conclusion

Voice commands don’t have to be relegated to mere gimmicks. Hey You, Pikachu and Seaman, mentioned at the beginning, are just two examples of games played with the voice. Though such games aren’t very common, there are plenty of other cases of voice commands in video games, and you can certainly take that functionality outside of gaming as well. Most smartphones have voice input functionality that lets you dictate text messages or check the weather. Here, you made an object change its color, spin around, play a sound, and print a message to the console using Debug.Log, all with your voice. No hands required!

At the end of the day, the microphone can be used as another input device, just like a mouse or keyboard, if you know how to utilize it. Perhaps it can be used for accessibility, or to assist in multi-tasking. Voice commands are often treated as a novelty, but with the right idea and the skills to implement it, the power of the voice can be much better realized in games and other applications. Perhaps you have that idea. You can make it a reality!
